| Network Fluctuation |

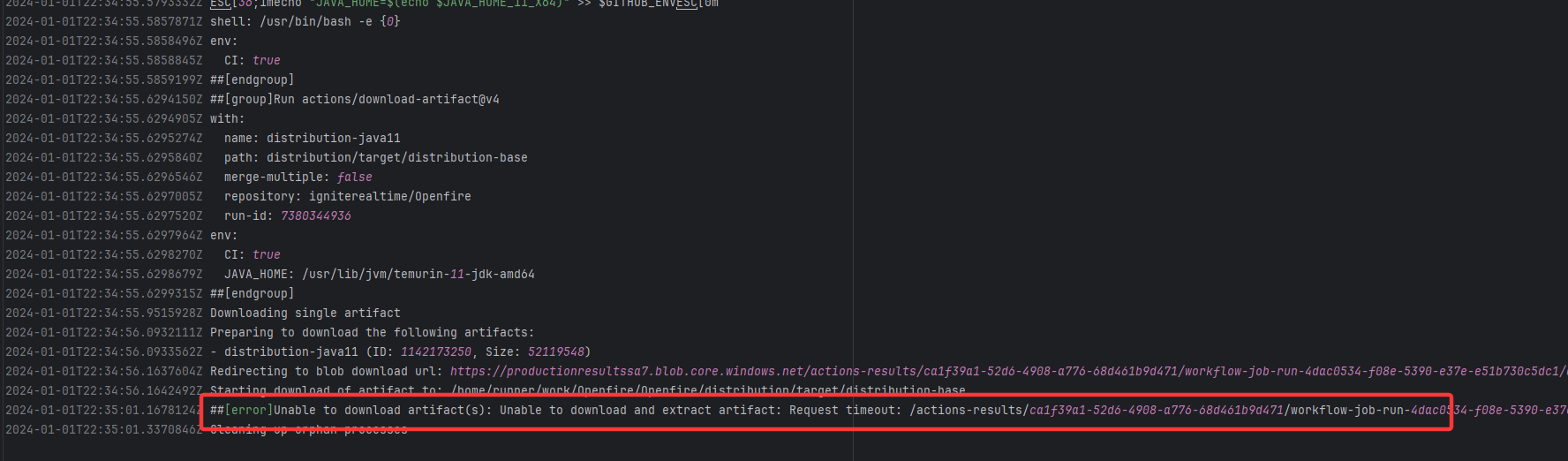

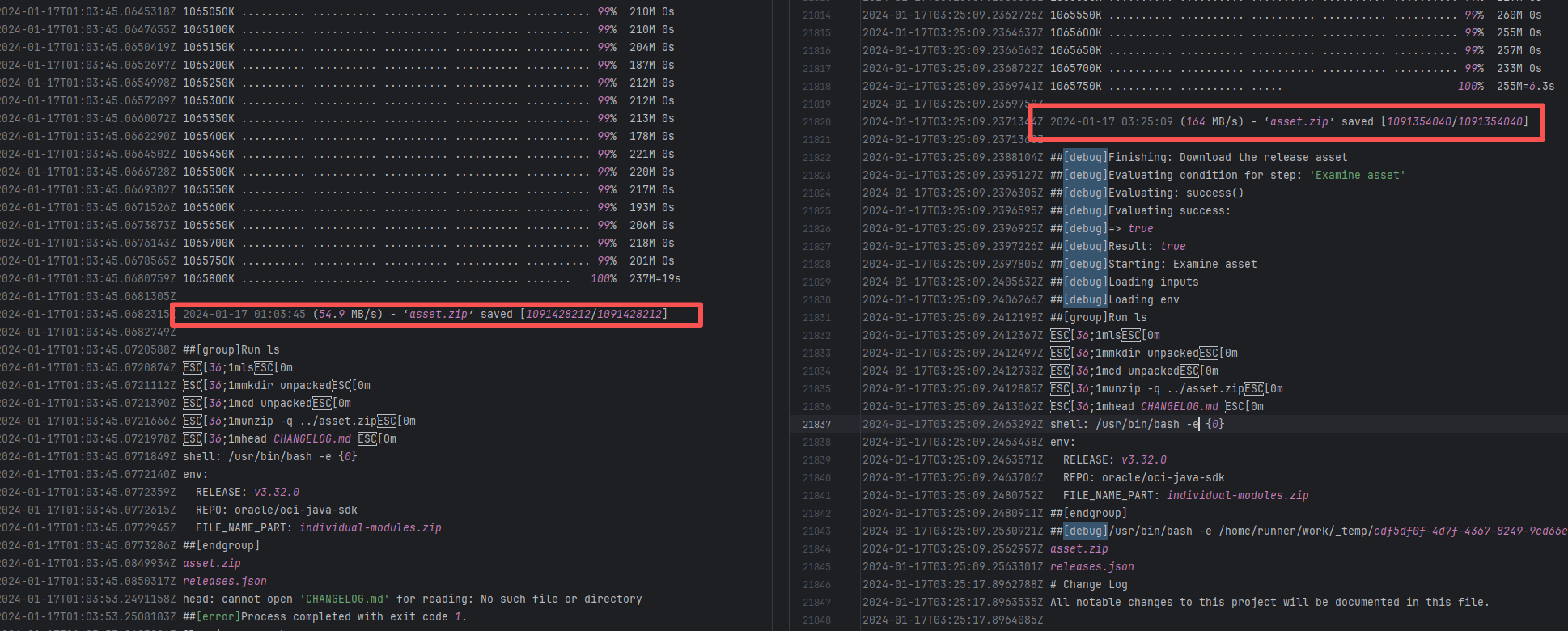

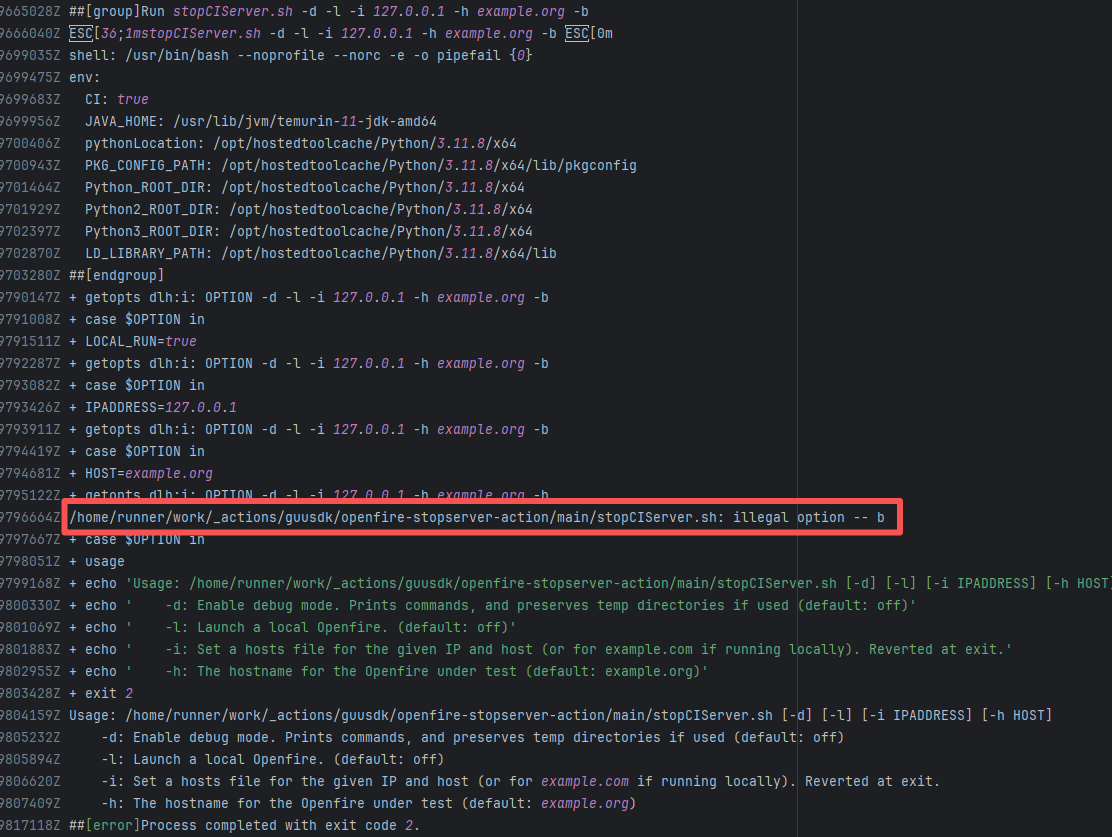

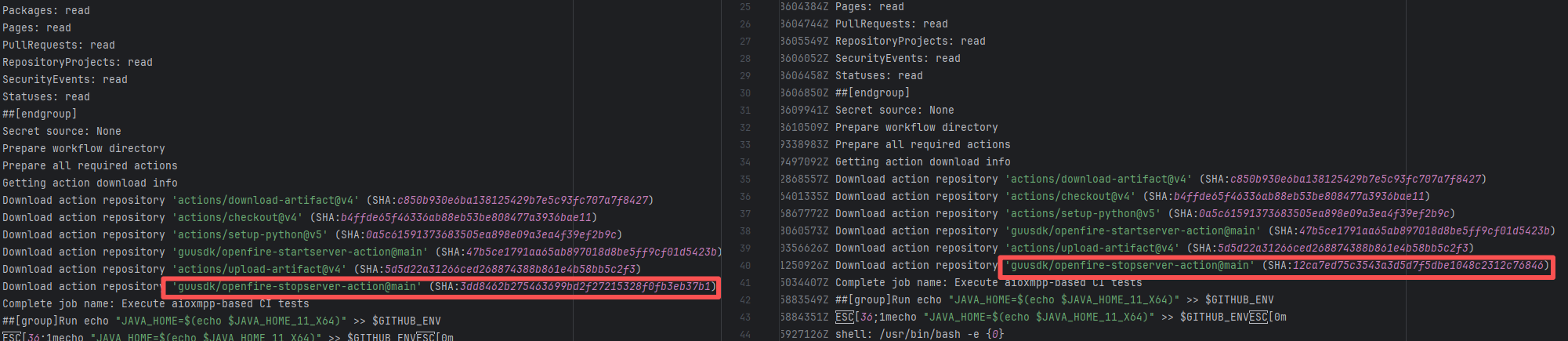

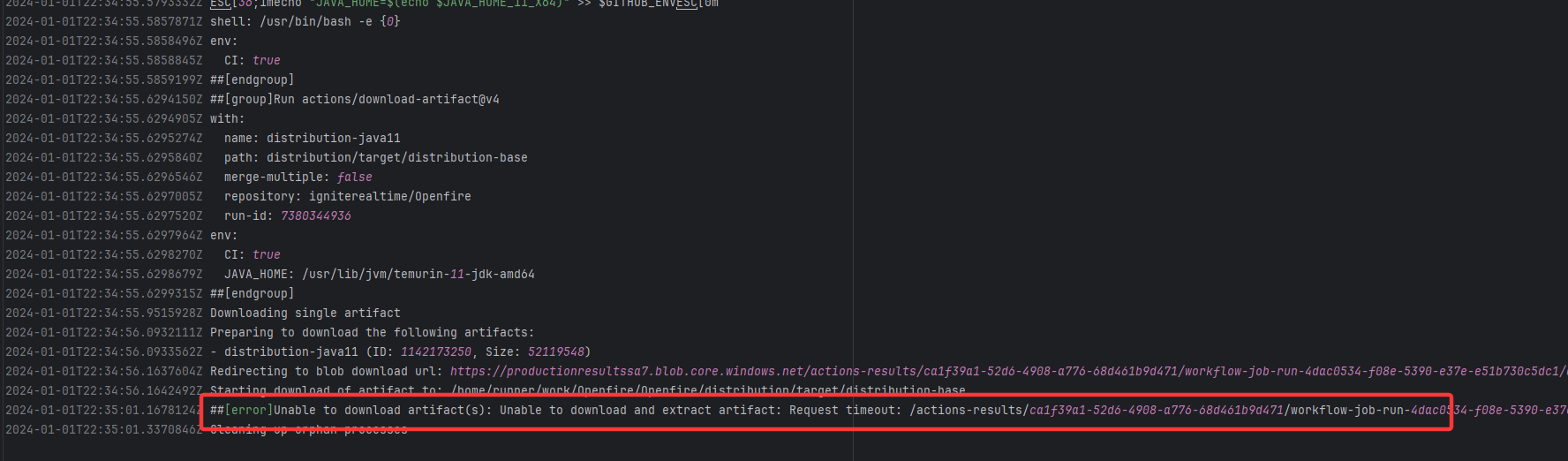

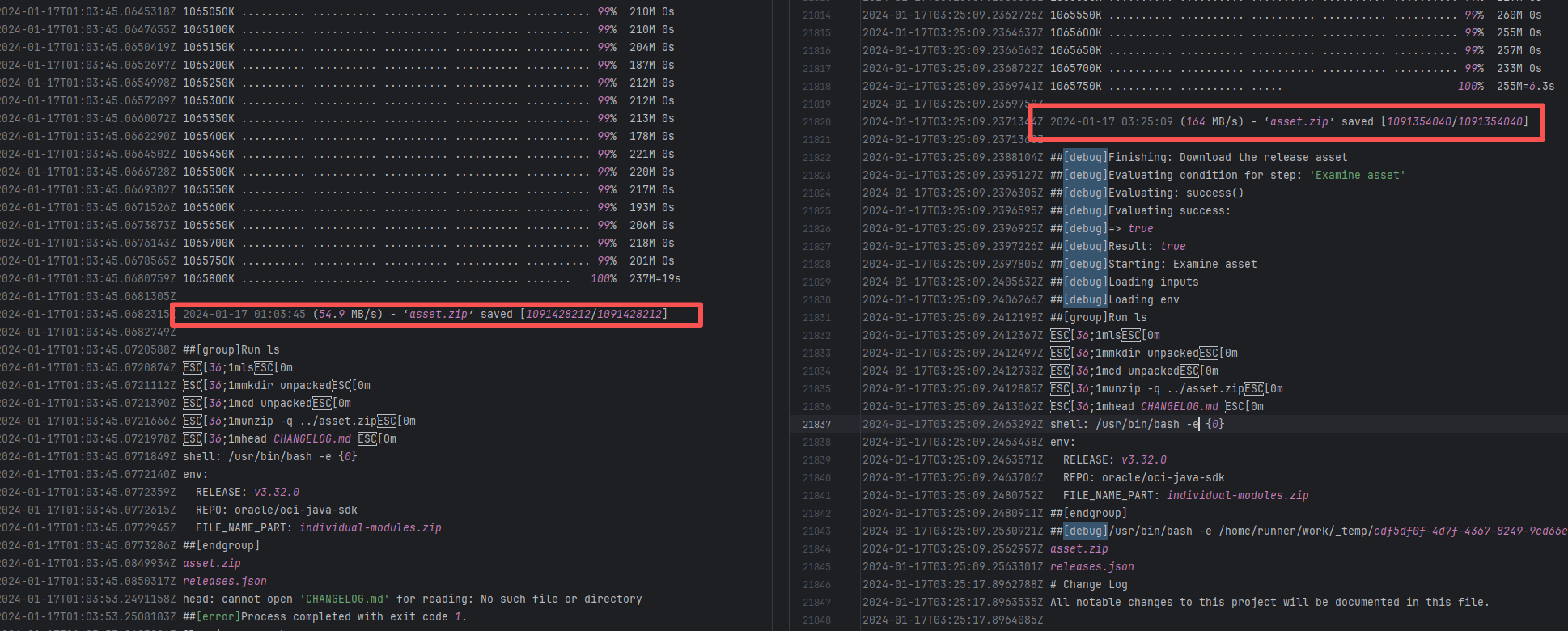

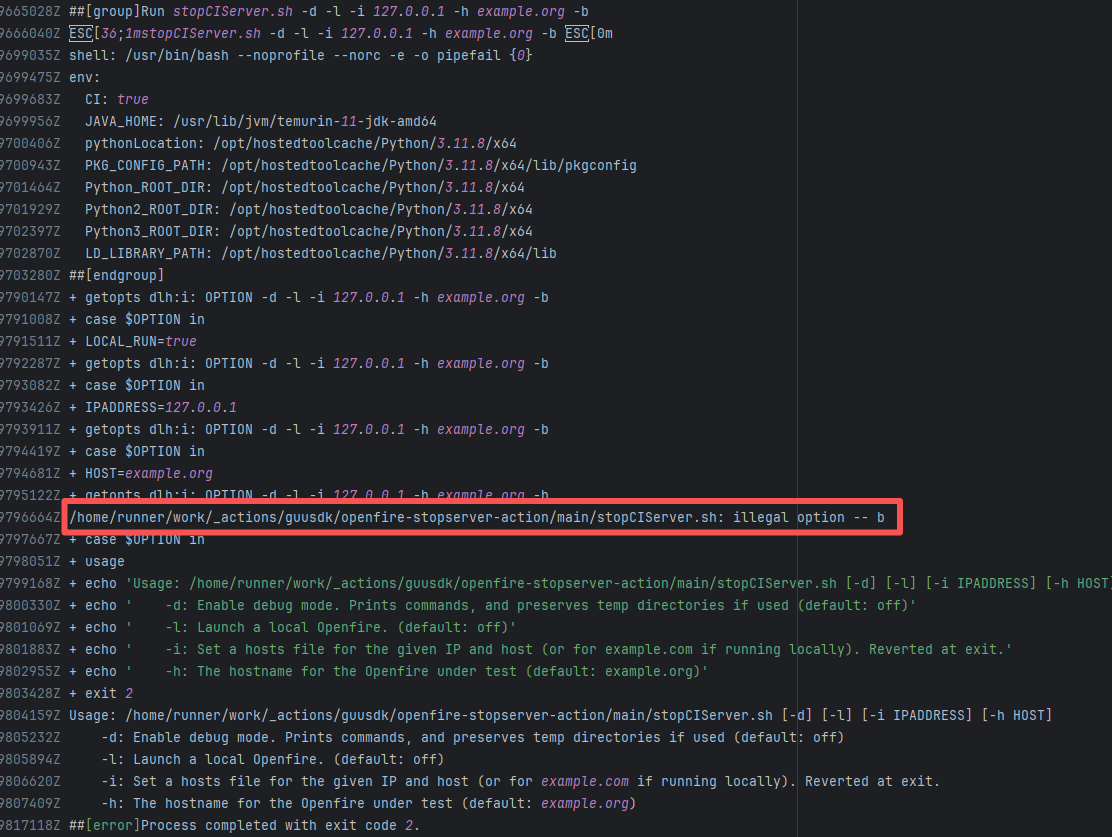

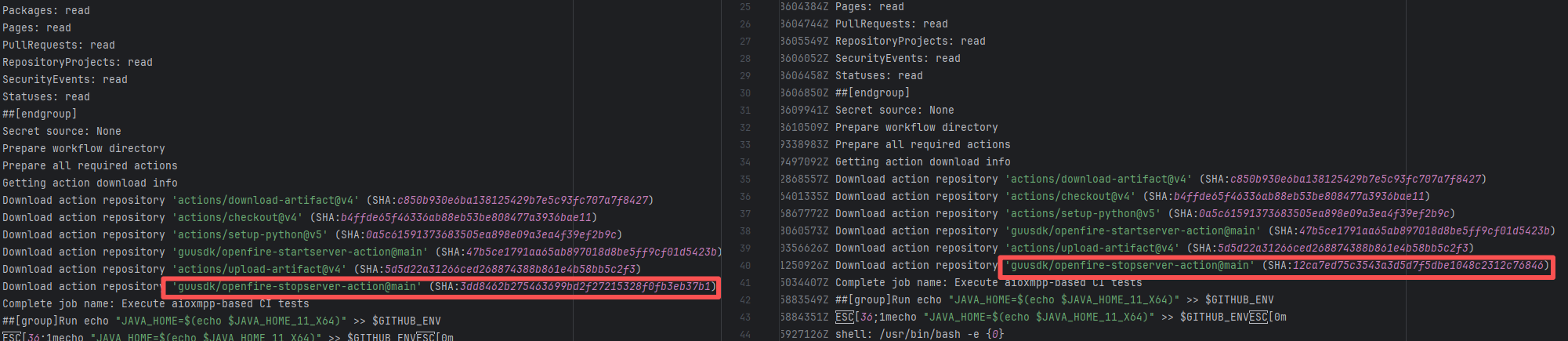

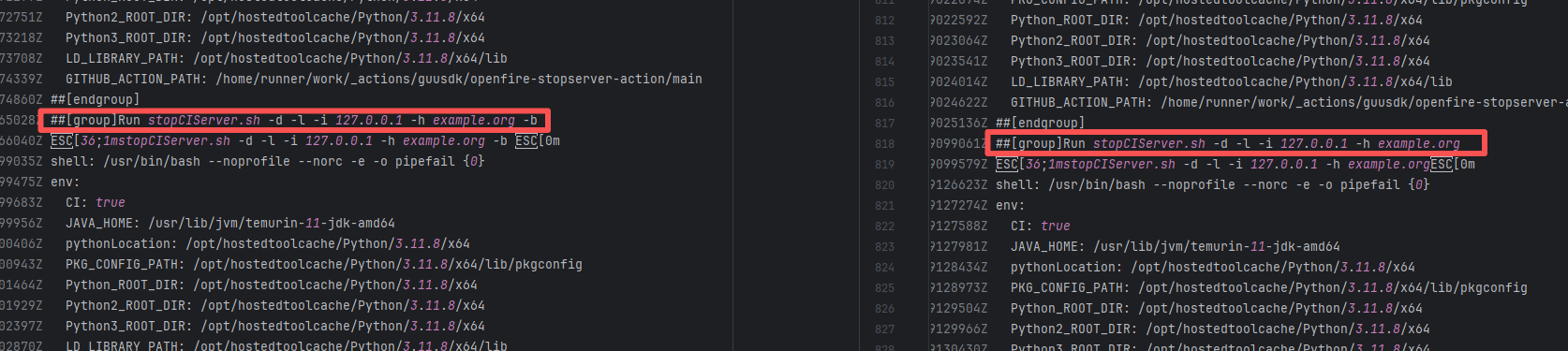

igniterealtime/Openfire |

20077703205 |

Request Timeout |

Error: The error indicates that the workflow failed due to a timeout while downloading the Artifact.

Root Cause: The download operation was successfully executed after a rerun. |

Short-term Fix: Rerun the job, which will automatically succeed after network conditions recover.

Long-term Defense:

- Continuously monitor the frequency of this error;

- Introduce an automatic retry mechanism (e.g., using a retry plugin) in the Workflow for steps susceptible to network fluctuations;

- If using a self-hosted Runner, troubleshoot the stability of the local firewall, proxy, or network egress. |

|

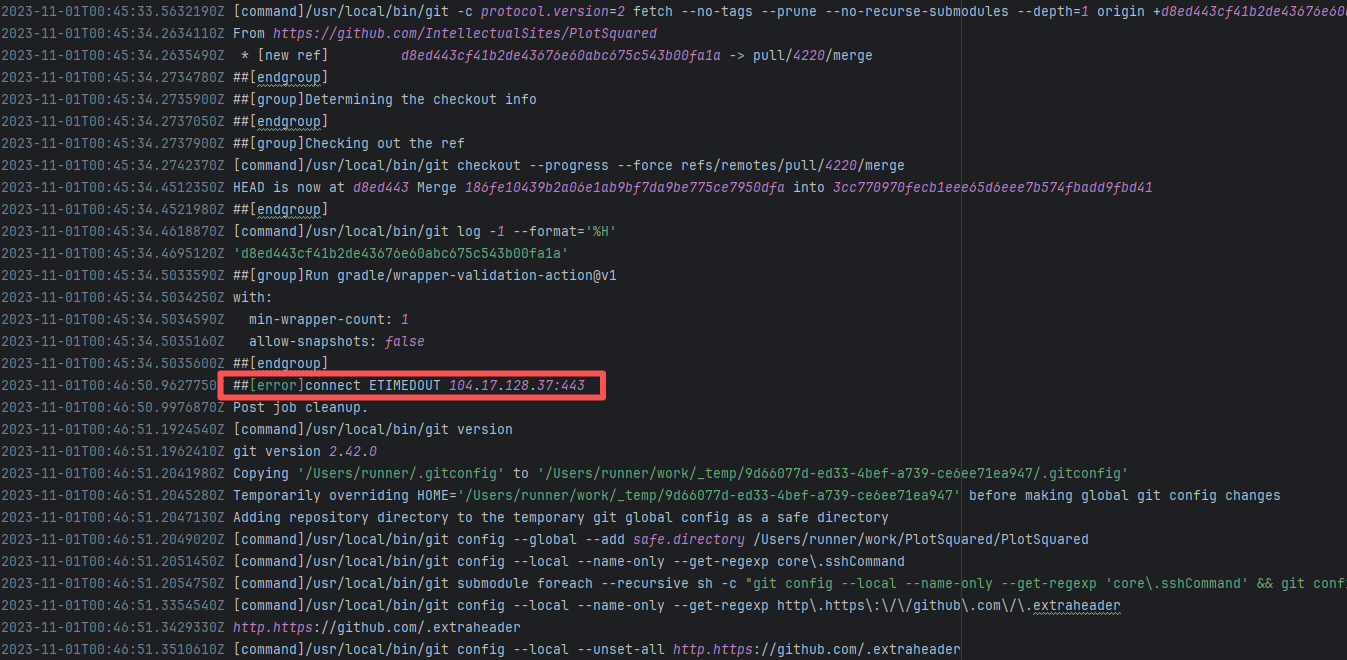

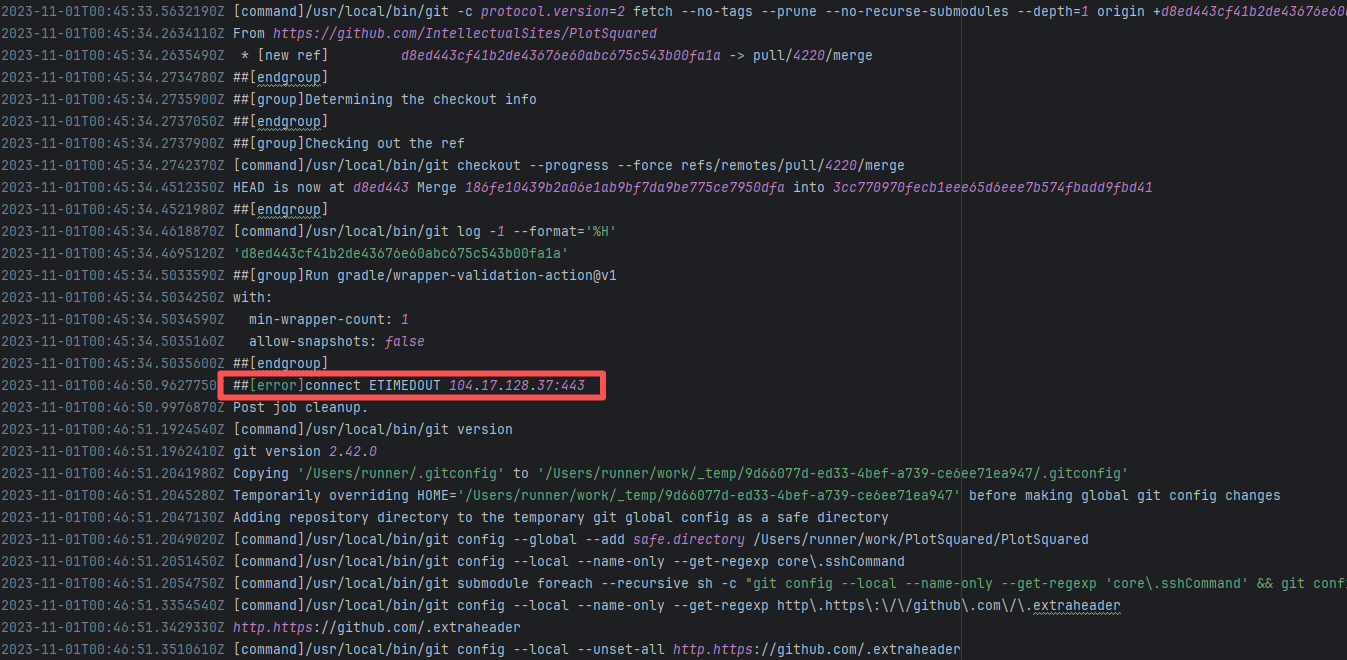

| IntellectualSites/PlotSquared |

18246935468 |

Request Timeout |

Error: connect ETIMEDOUT 104.17.128.37:443 indicates that the execution machine attempted to connect to Git

Root Cause: The download operation was successfully executed after a rerun. |

Short-term Fix: Rerun the job, which will automatically succeed after network conditions recover.

Long-term Defense:

- Continuously monitor the frequency of this error;

- Introduce an automatic retry mechanism (e.g., using a retry plugin) in the Workflow for steps susceptible to network fluctuations;

- If using a self-hosted Runner, troubleshoot the stability of the local firewall, proxy, or network egress. |

|

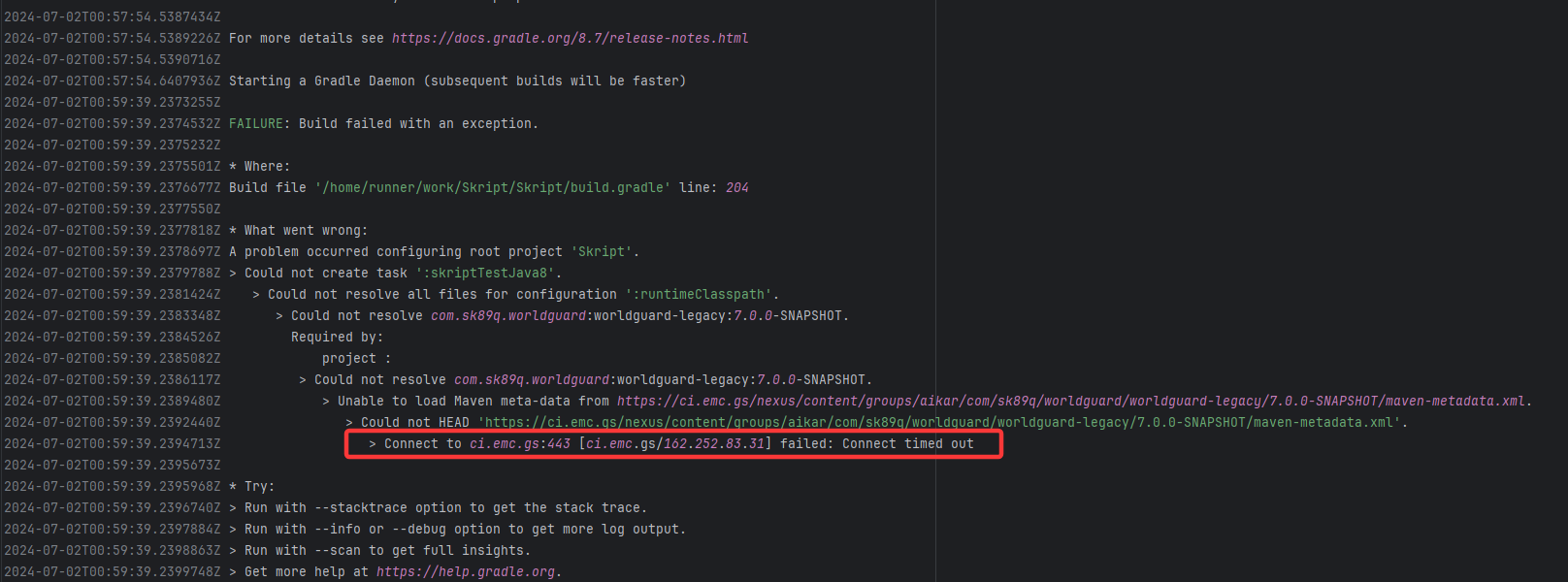

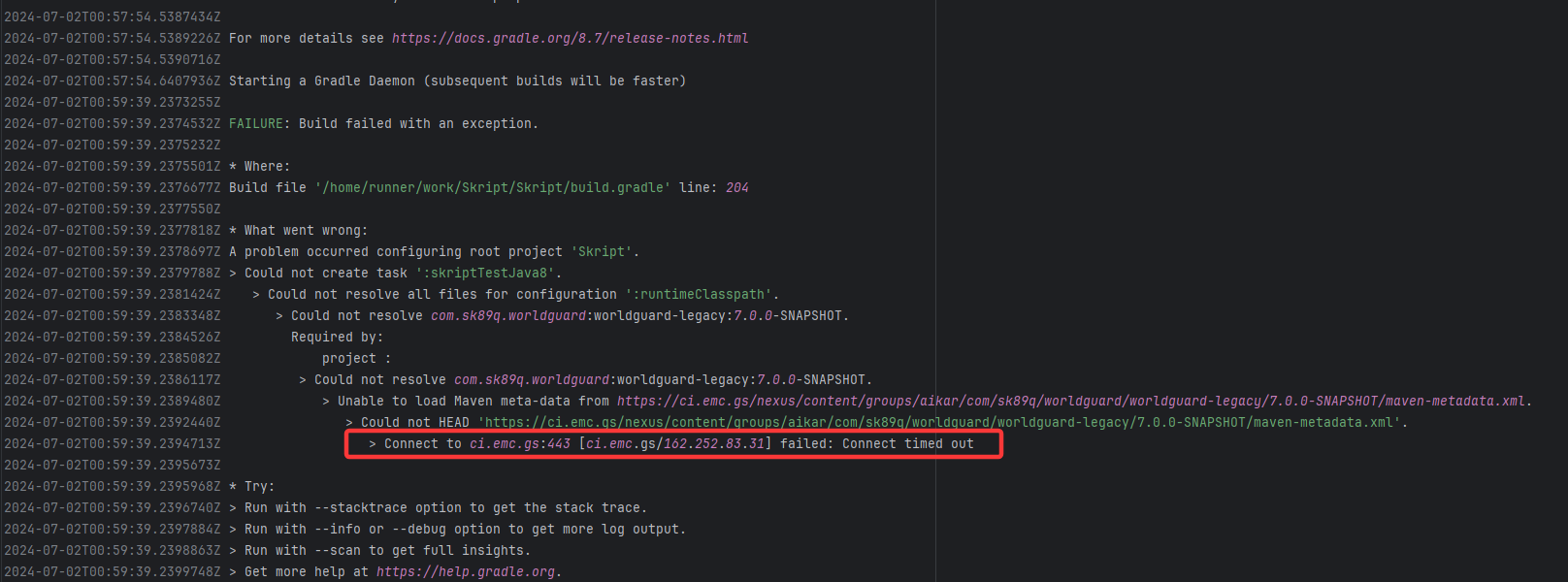

| SkriptLang/Skript |

26918113411 |

Request Timeout |

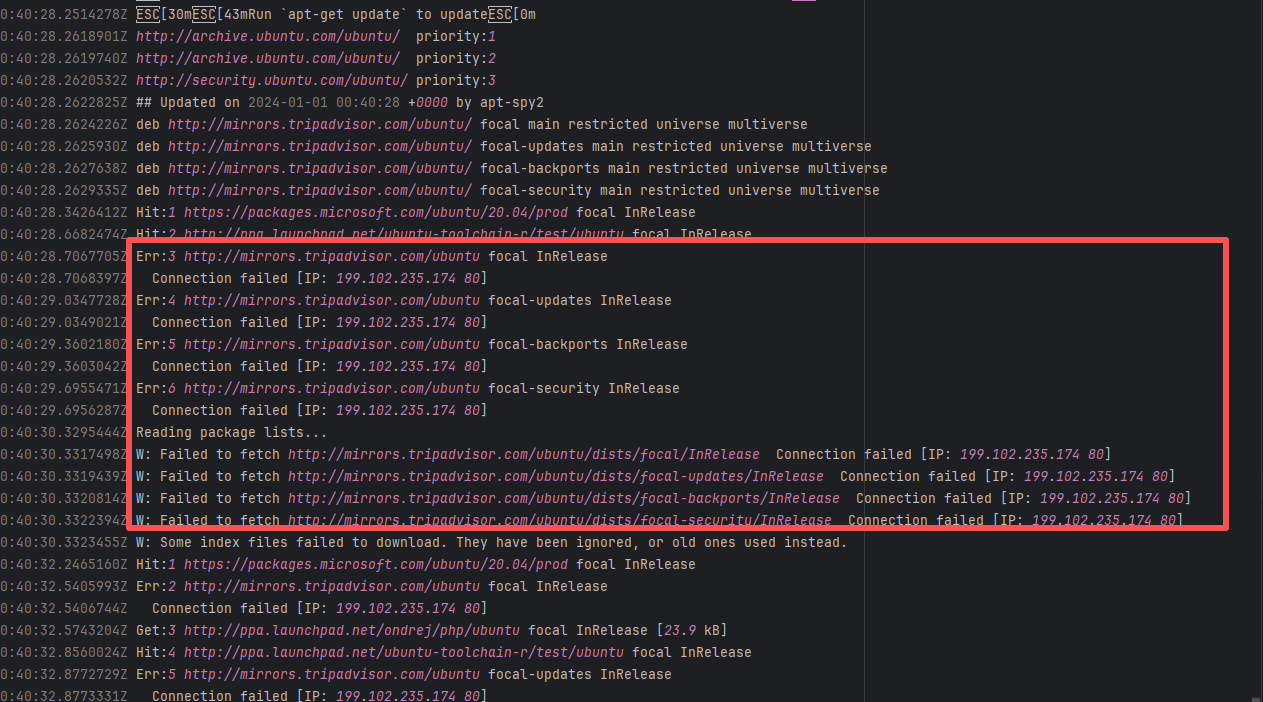

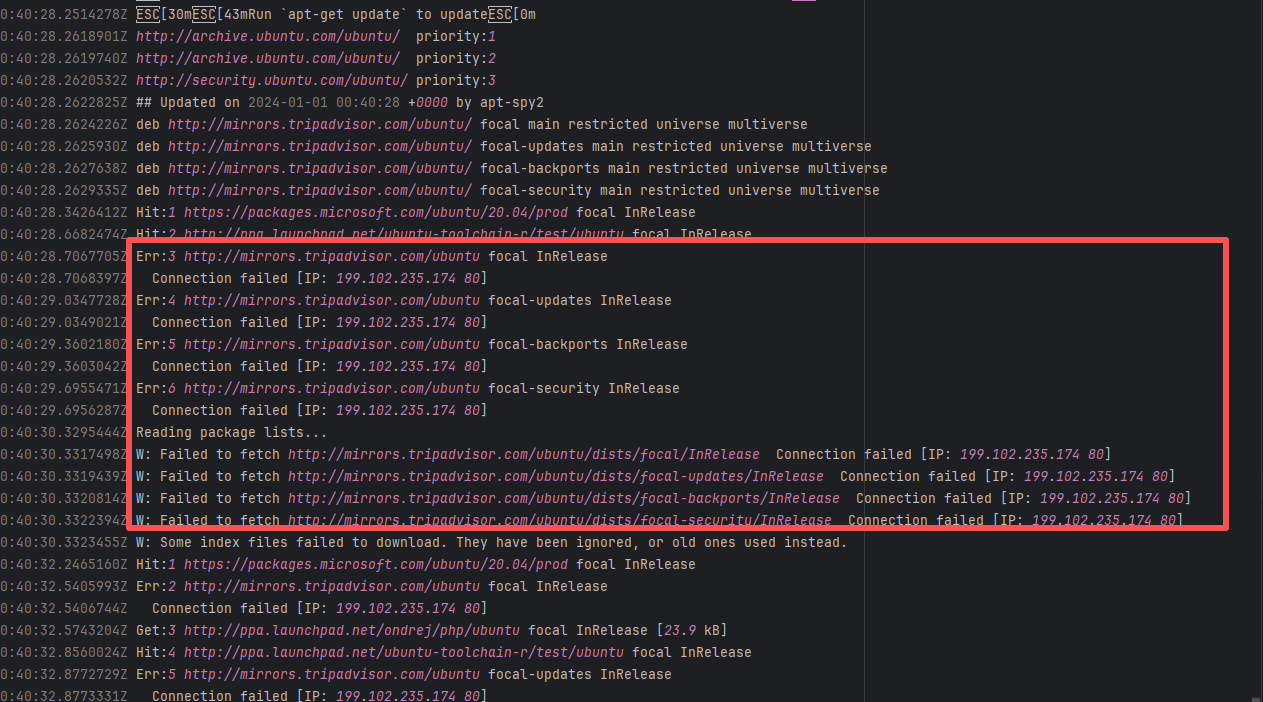

Error: Connect timed out indicates that the build tool (Gradle) attempted to fetch from an external Maven rep

Root Cause: The download operation was successfully executed after a rerun. |

Short-term Fix: Rerun the job, which will automatically succeed after network conditions recover.

Long-term Defense:

- Continuously monitor the frequency of this error;

- Introduce an automatic retry mechanism (e.g., using a retry plugin) in the Workflow for steps susceptible to network fluctuations;

- If using a self-hosted Runner, troubleshoot the stability of the local firewall, proxy, or network egress. |

|

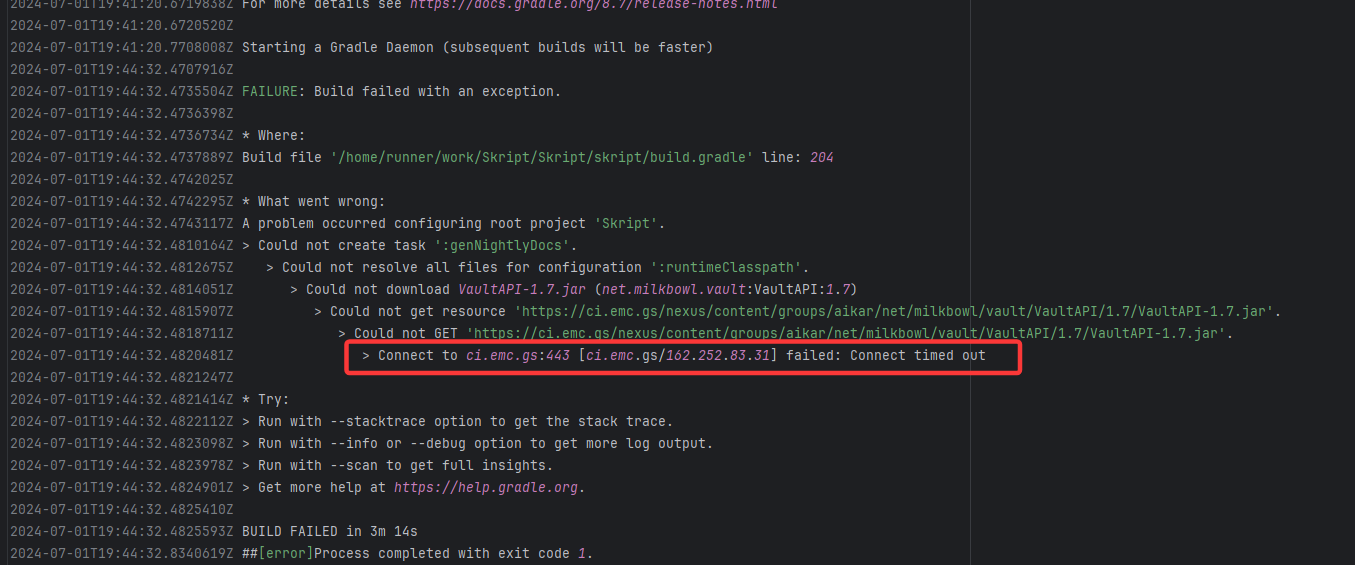

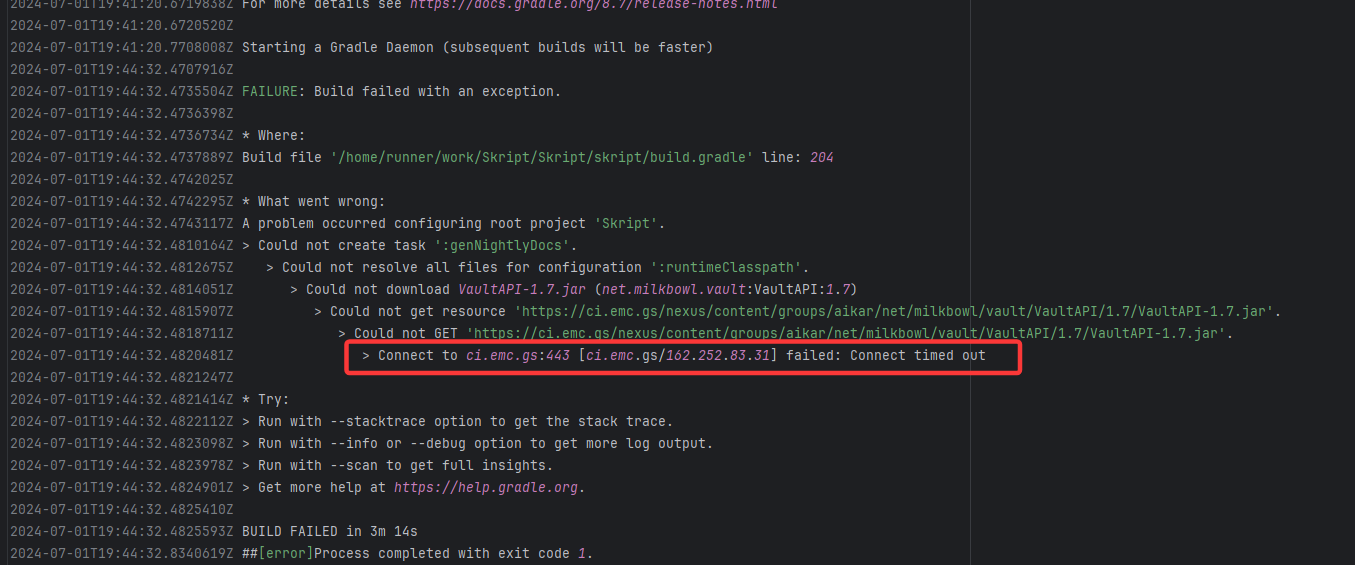

| SkriptLang/Skript |

26908758150 |

Request Timeout |

Error: Connect timed out indicates that the build tool (Gradle) attempted to fetch from an external Maven rep

Root Cause: The download operation was successfully executed after a rerun. |

Short-term Fix: Rerun the job, which will automatically succeed after network conditions recover.

Long-term Defense:

- Continuously monitor the frequency of this error;

- Introduce an automatic retry mechanism (e.g., using a retry plugin) in the Workflow for steps susceptible to network fluctuations;

- If using a self-hosted Runner, troubleshoot the stability of the local firewall, proxy, or network egress. |

|

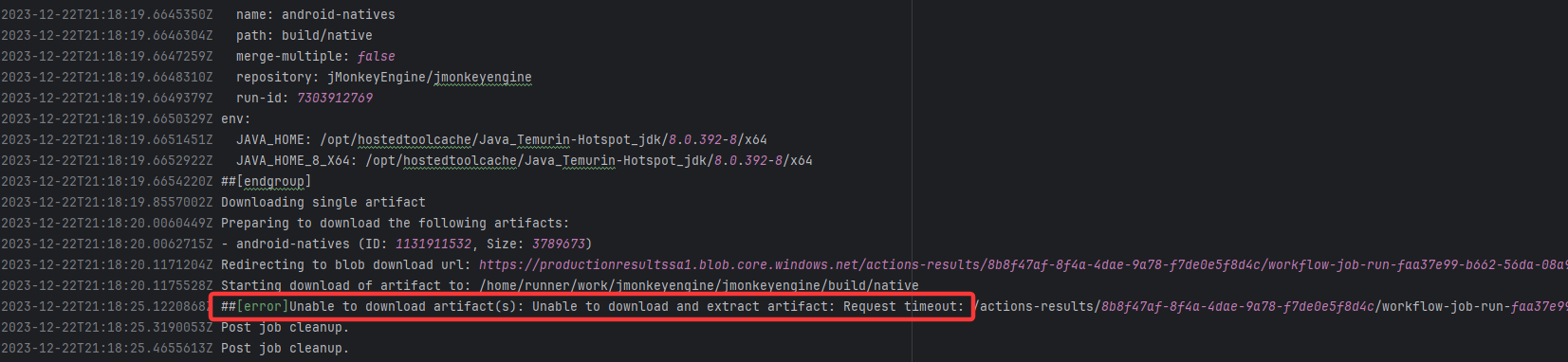

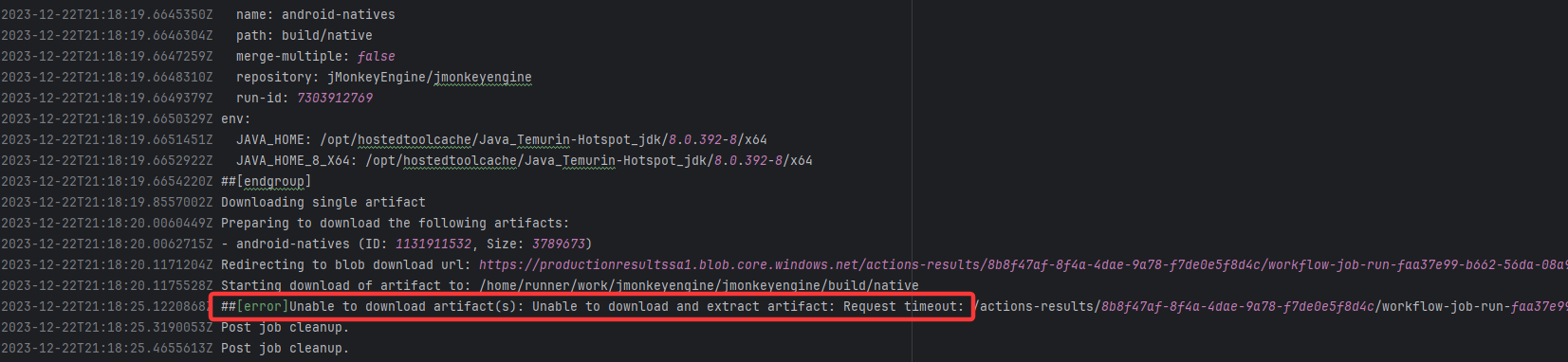

| jMonkeyEngine/jmonkeyengine |

19905287914 |

Request Timeout |

Error: The error indicates that the workflow failed due to a timeout while downloading the Artifact.

Root Cause: The download operation was successfully executed after a rerun. |

Short-term Fix: Rerun the job, which will automatically succeed after network conditions recover.

Long-term Defense:

- Continuously monitor the frequency of this error;

- Introduce an automatic retry mechanism (e.g., using a retry plugin) in the Workflow for steps susceptible to network fluctuations;

- If using a self-hosted Runner, troubleshoot the stability of the local firewall, proxy, or network egress. |

|

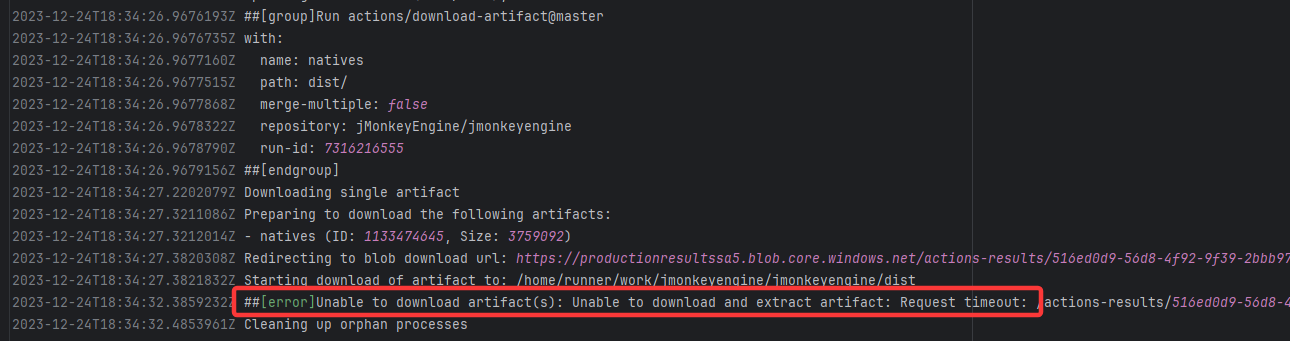

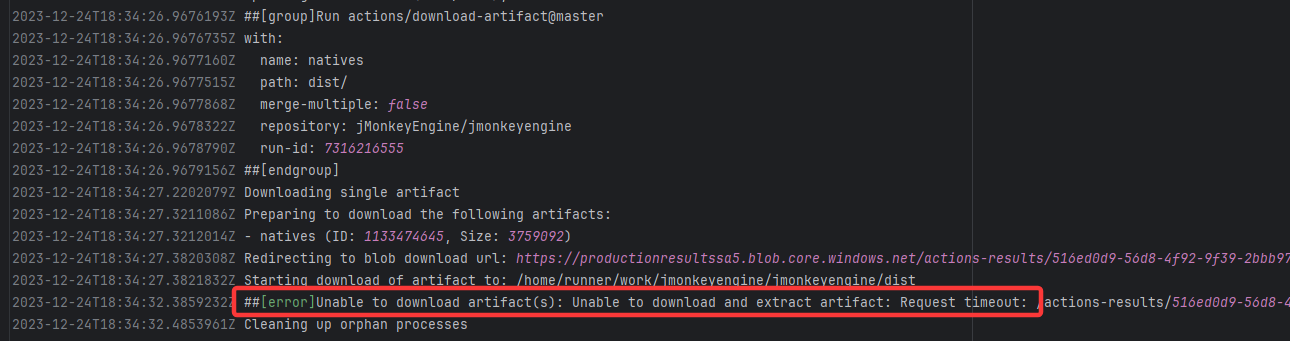

| jMonkeyEngine/jmonkeyengine |

19930700187 |

Request Timeout |

Error: The error indicates that the workflow failed due to a timeout while downloading the Artifact.

Root Cause: The download operation was successfully executed after a rerun. |

Short-term Fix: Rerun the job, which will automatically succeed after network conditions recover.

Long-term Defense:

- Continuously monitor the frequency of this error;

- Introduce an automatic retry mechanism (e.g., using a retry plugin) in the Workflow for steps susceptible to network fluctuations;

- If using a self-hosted Runner, troubleshoot the stability of the local firewall, proxy, or network egress. |

|

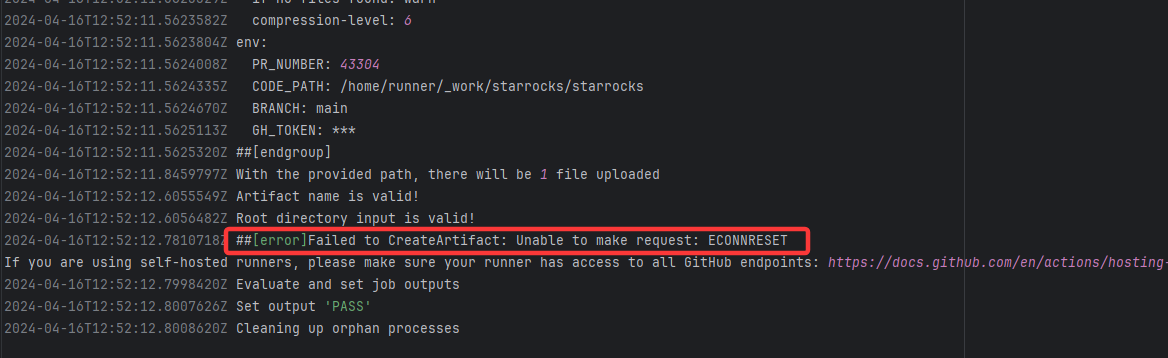

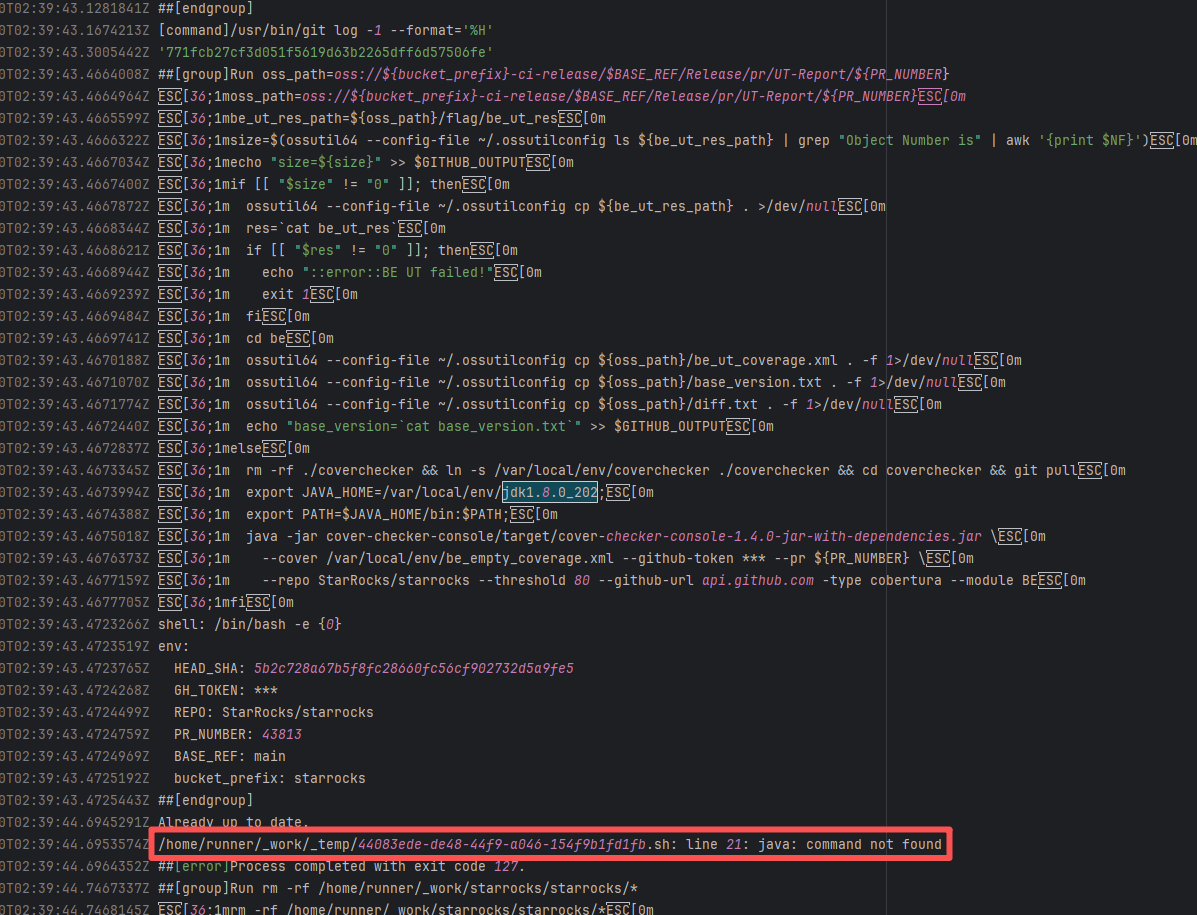

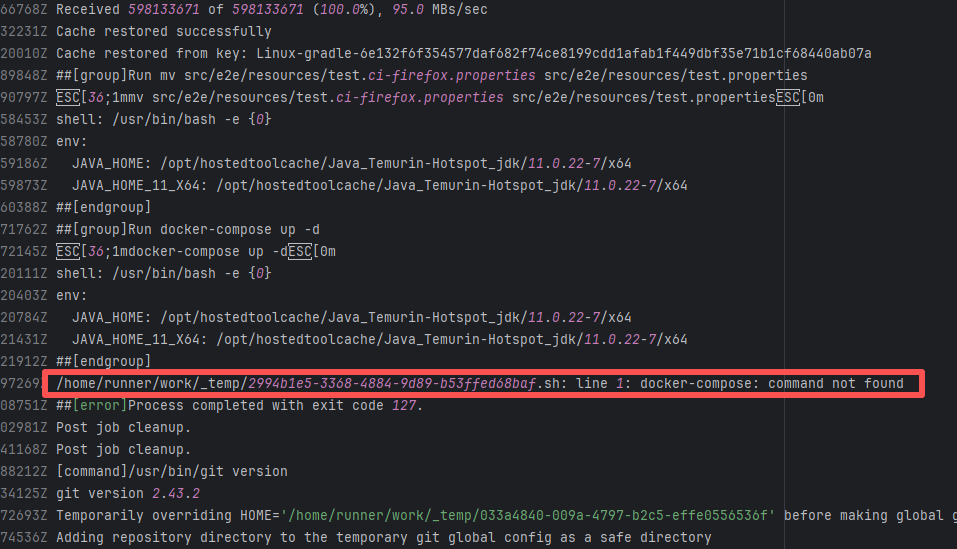

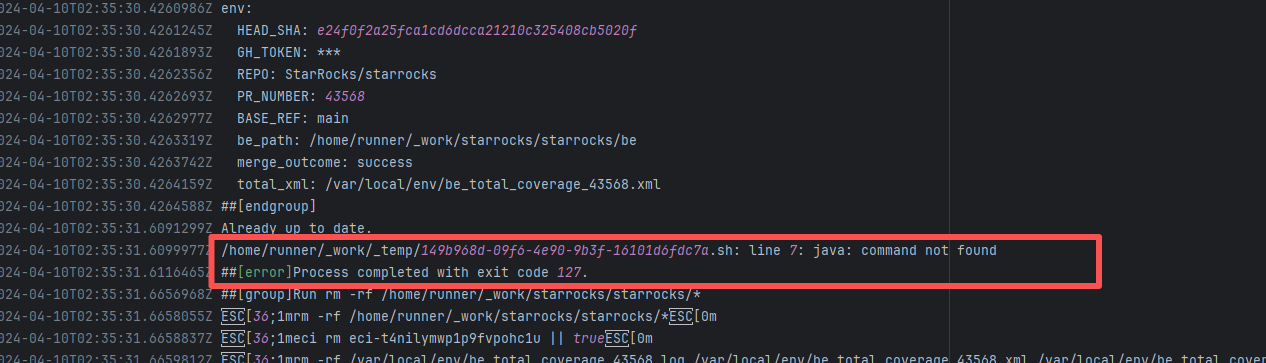

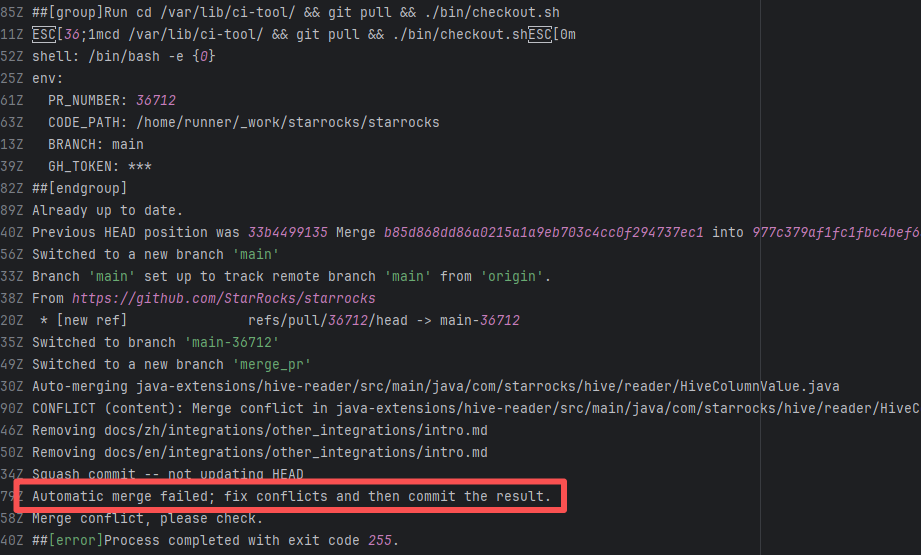

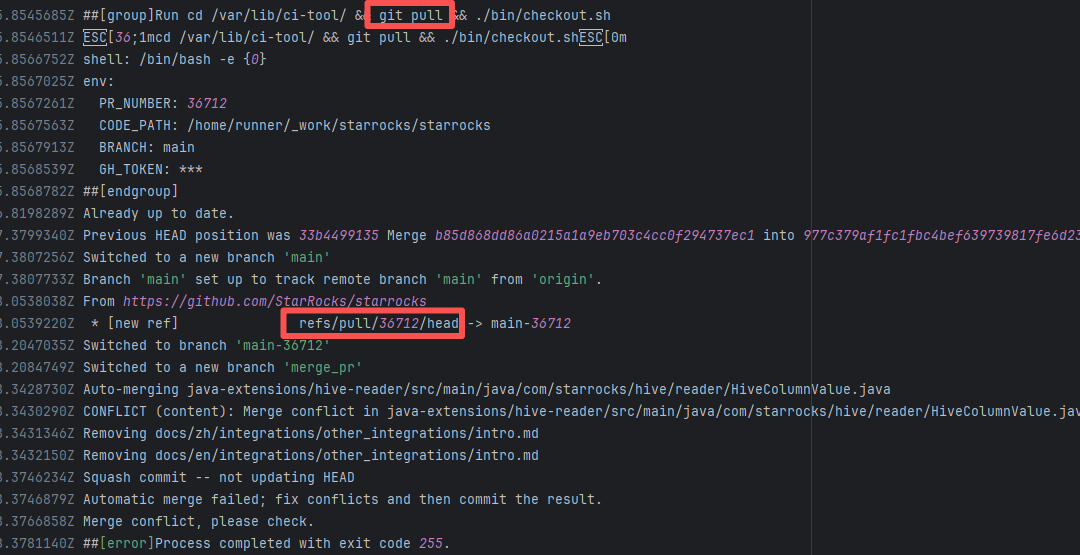

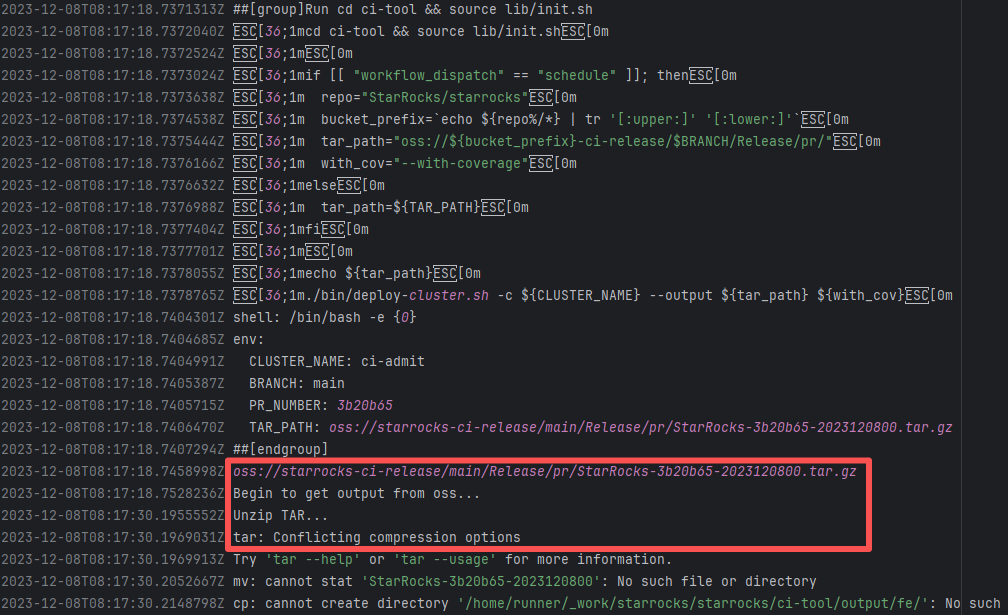

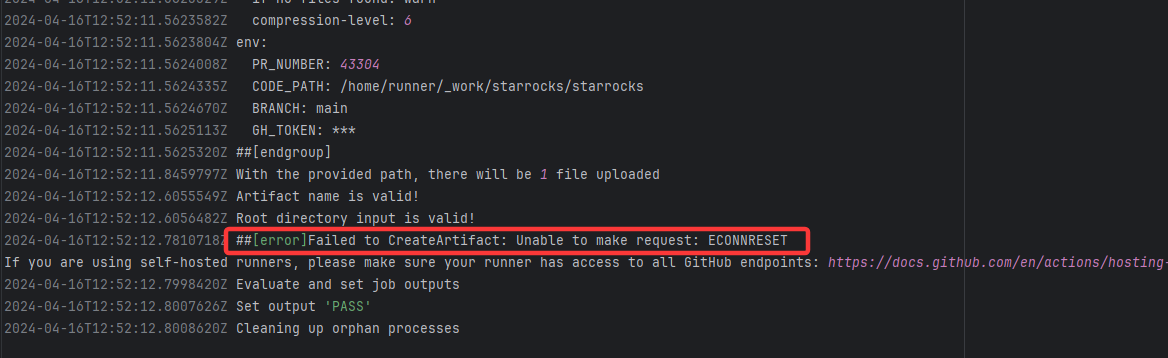

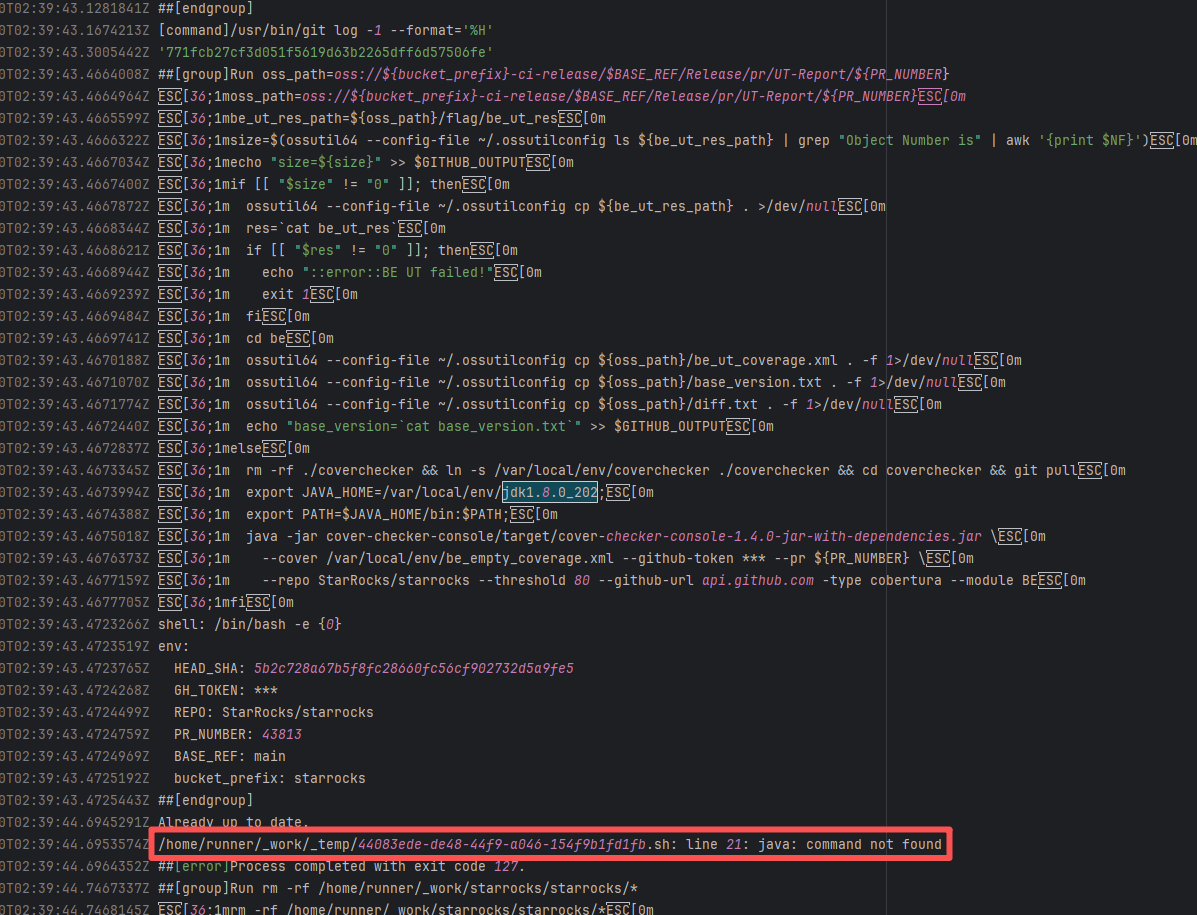

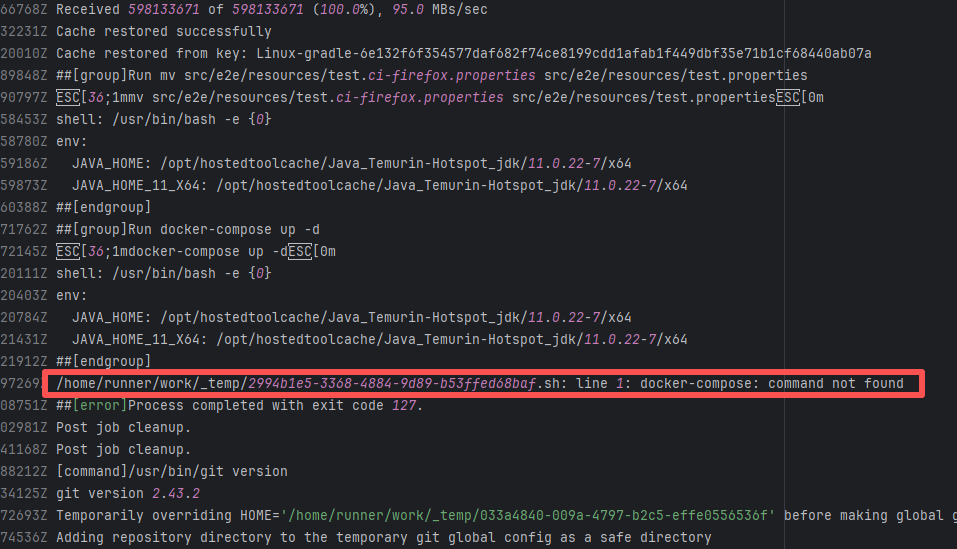

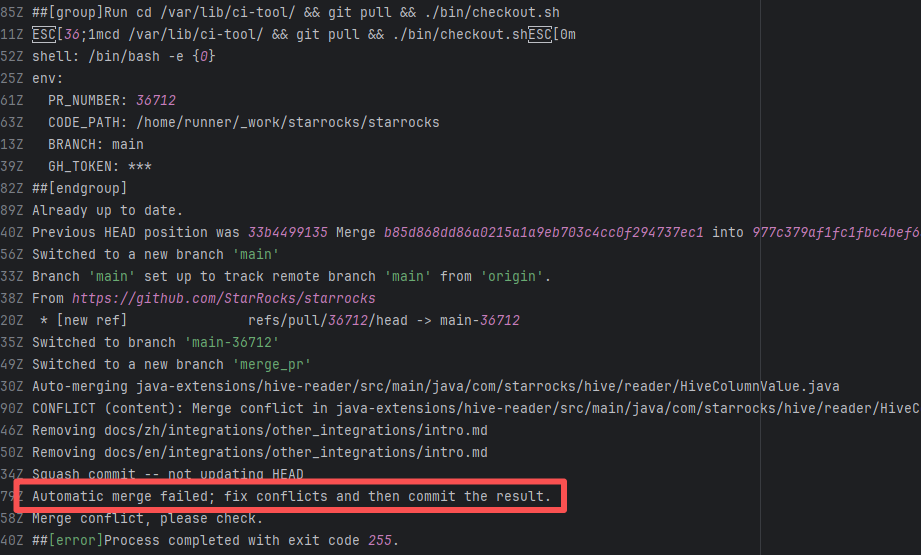

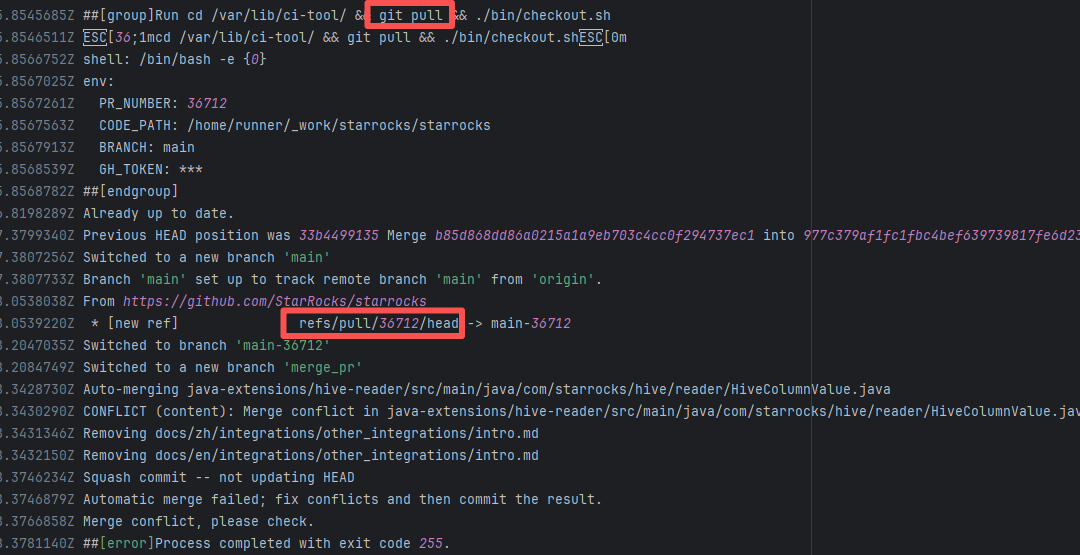

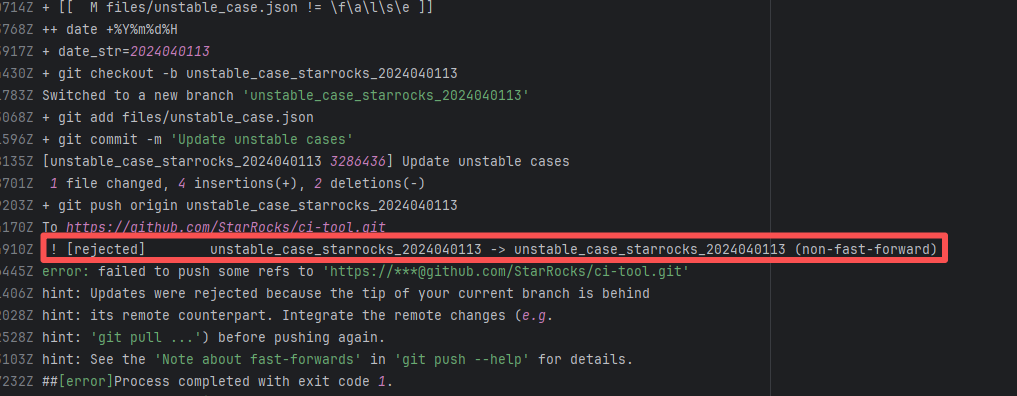

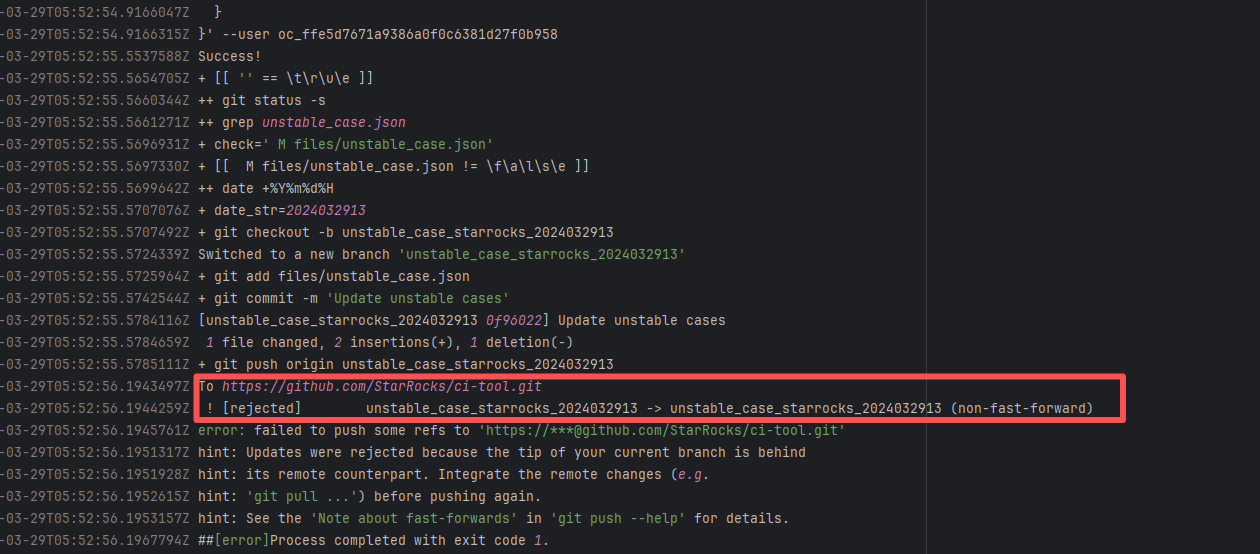

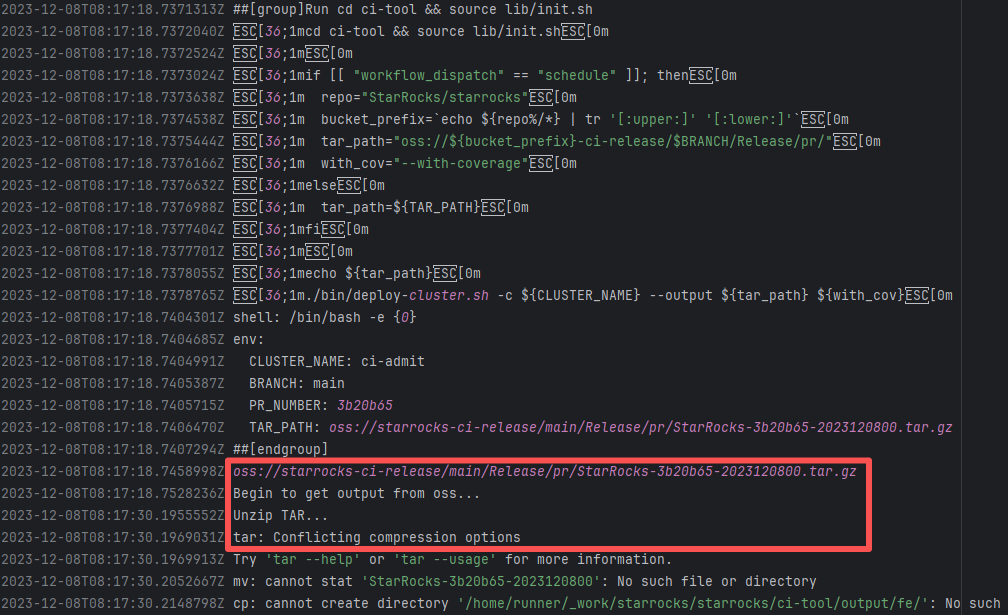

| StarRocks/starrocks |

23877440817 |

Connection Reset |

Error: ECONNRESET indicates that the Action attempted to upload the build artifact to Git

Root Cause: The upload operation was successfully executed after a rerun. |

Short-term Fix: Rerun the job, which will automatically succeed after network conditions recover.

Long-term Defense:

- Continuously monitor the frequency of this error;

- Introduce an automatic retry mechanism (e.g., using a retry plugin) in the Workflow for steps susceptible to network fluctuations;

- If using a self-hosted Runner, troubleshoot the stability of the local firewall, proxy, or network egress. |

|

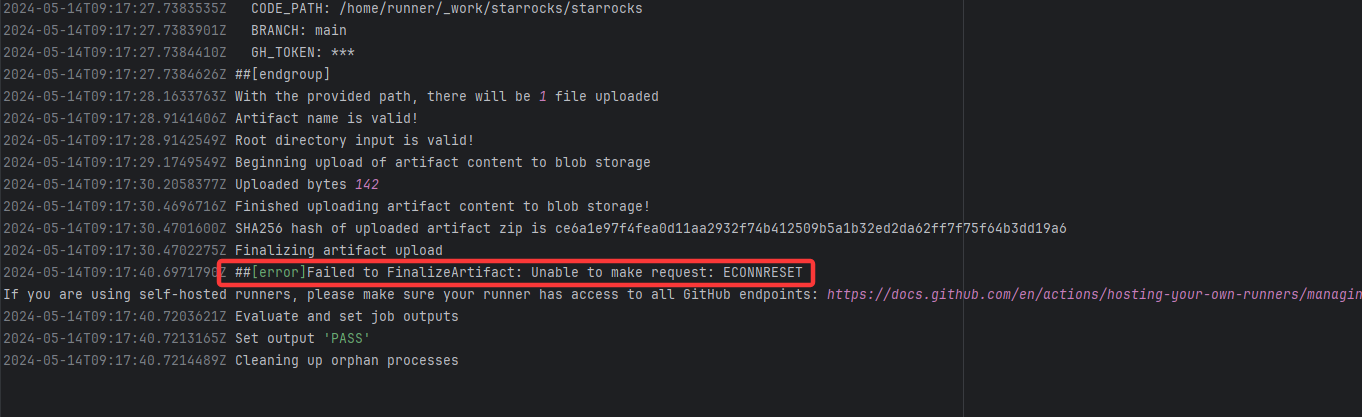

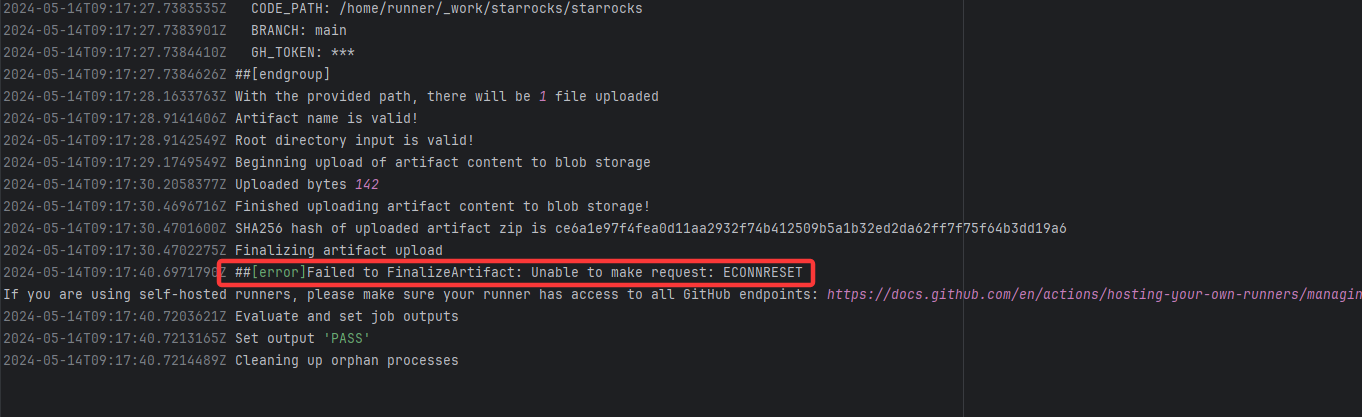

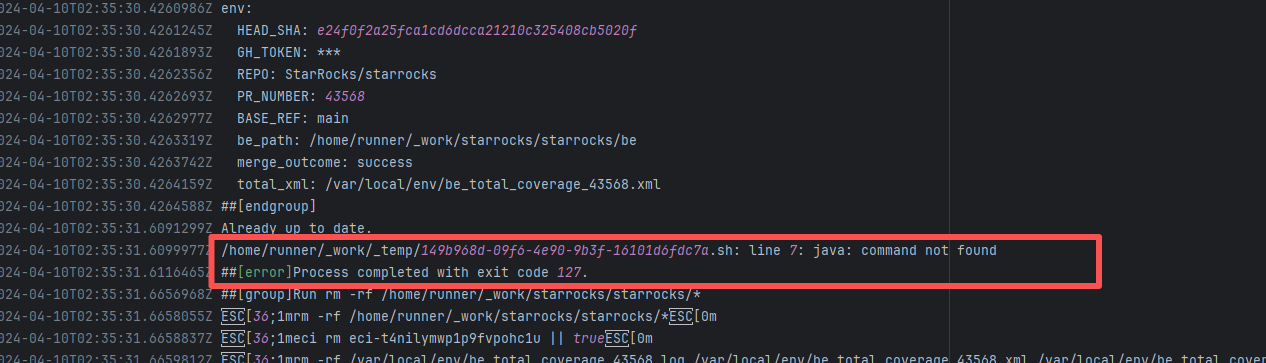

| StarRocks/starrocks |

24940507896 |

Connection Reset |

Error: ECONNRESET indicates that the Action attempted to upload the build artifact to Git

Root Cause: The upload operation was successfully executed after a rerun. |

Short-term Fix: Rerun the job, which will automatically succeed after network conditions recover.

Long-term Defense:

- Continuously monitor the frequency of this error;

- Introduce an automatic retry mechanism (e.g., using a retry plugin) in the Workflow for steps susceptible to network fluctuations;

- If using a self-hosted Runner, troubleshoot the stability of the local firewall, proxy, or network egress. |

|

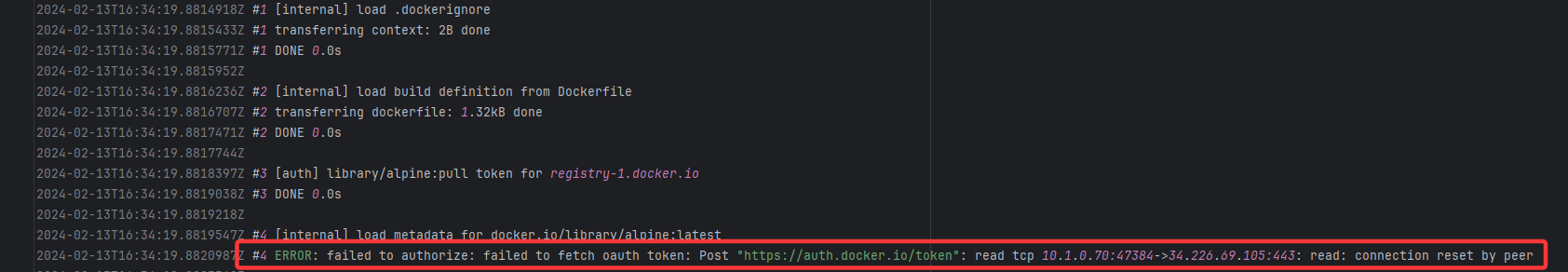

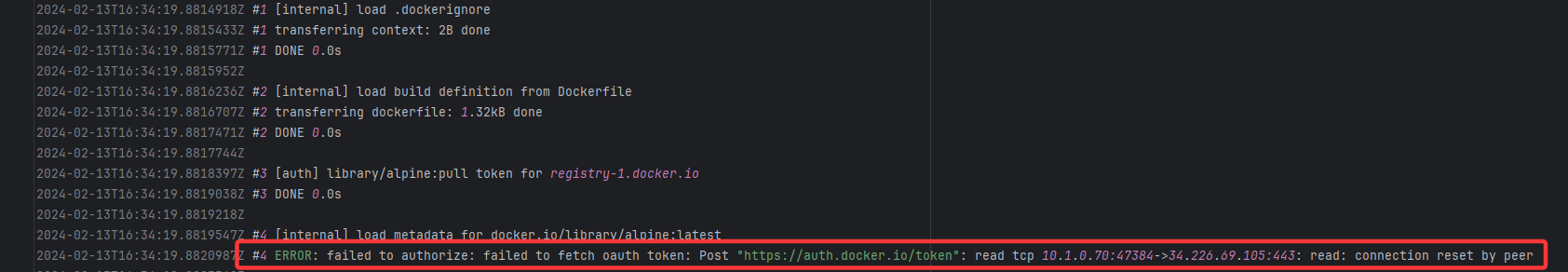

| apache/tinkerpop |

21529798021 |

Connection Reset |

Error: connection reset by peer indicates that Docker attempted to connect to Docker Hub auth

Root Cause: The authentication operation was successfully executed after a rerun. |

Short-term Fix: Rerun the job, which will automatically succeed after network conditions recover.

Long-term Defense:

- Continuously monitor the frequency of this error;

- Introduce an automatic retry mechanism (e.g., using a retry plugin) in the Workflow for steps susceptible to network fluctuations;

- If using a self-hosted Runner, troubleshoot the stability of the local firewall, proxy, or network egress. |

|

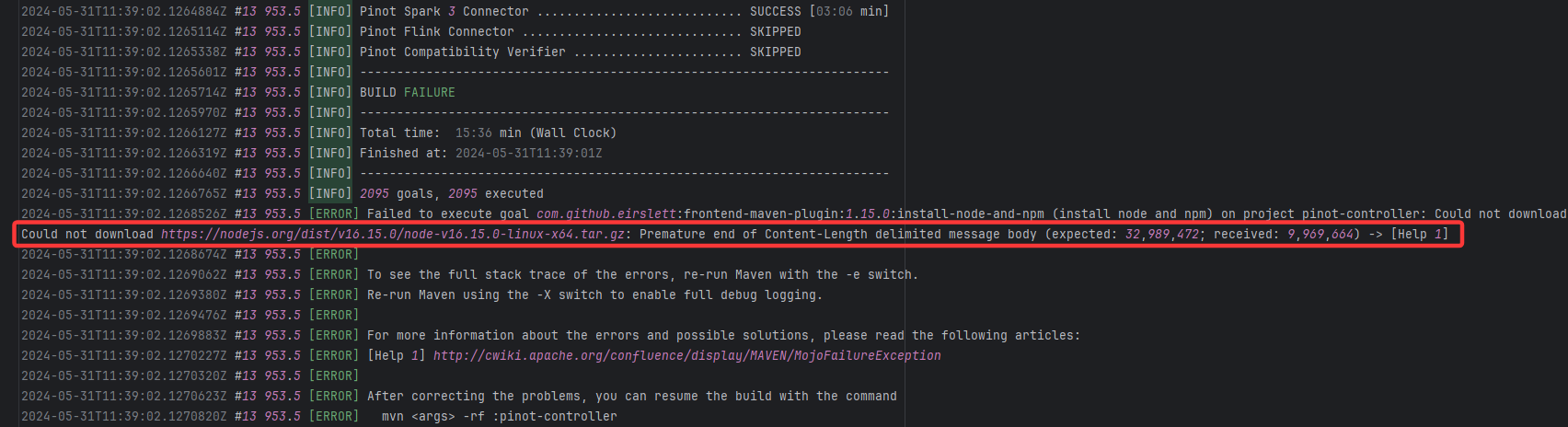

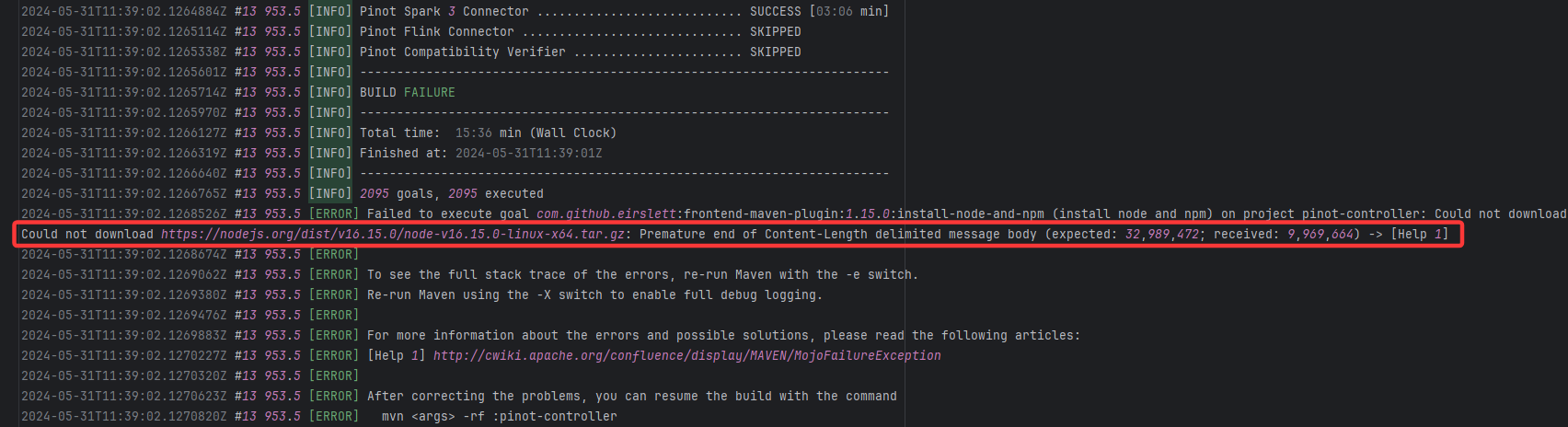

| apache/pinot |

25647531041 |

Resource Download Interruption |

Error: Premature end of Content-Length indicates that while downloading the Node.js installation package, the net

Root Cause: The download operation was successfully executed after a rerun. |

Short-term Fix: Rerun the job, which will automatically succeed after network conditions recover.

Long-term Defense:

- Continuously monitor the frequency of this error;

- Introduce an automatic retry mechanism (e.g., using a retry plugin) in the Workflow for steps susceptible to network fluctuations;

- If using a self-hosted Runner, troubleshoot the stability of the local firewall, proxy, or network egress. |

|

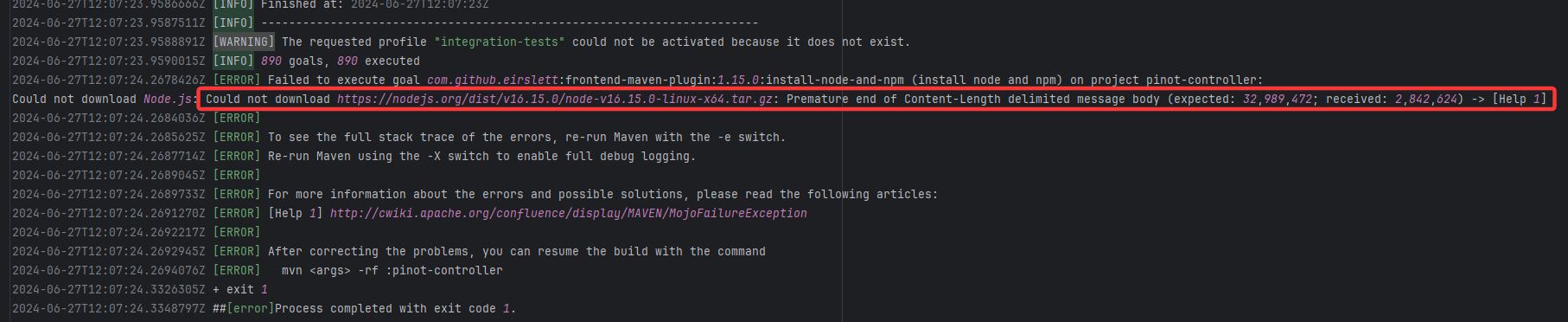

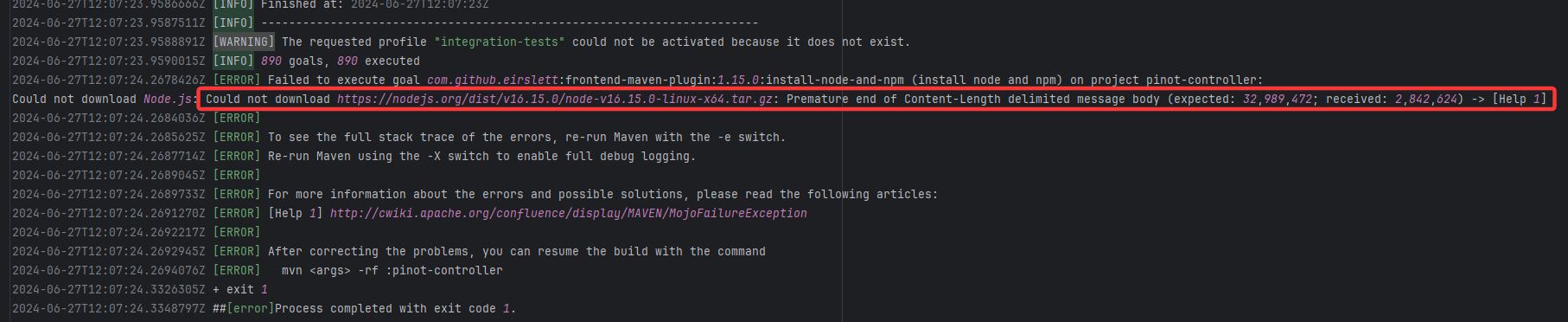

| apache/pinot |

26757406909 |

Resource Download Interruption |

Error: Premature end of Content-Length indicates that while downloading the Node.js installation package, the net

Root Cause: The download operation was successfully executed after a rerun. |

Short-term Fix: Rerun the job, which will automatically succeed after network conditions recover.

Long-term Defense:

- Continuously monitor the frequency of this error;

- Introduce an automatic retry mechanism (e.g., using a retry plugin) in the Workflow for steps susceptible to network fluctuations;

- If using a self-hosted Runner, troubleshoot the stability of the local firewall, proxy, or network egress. |

|

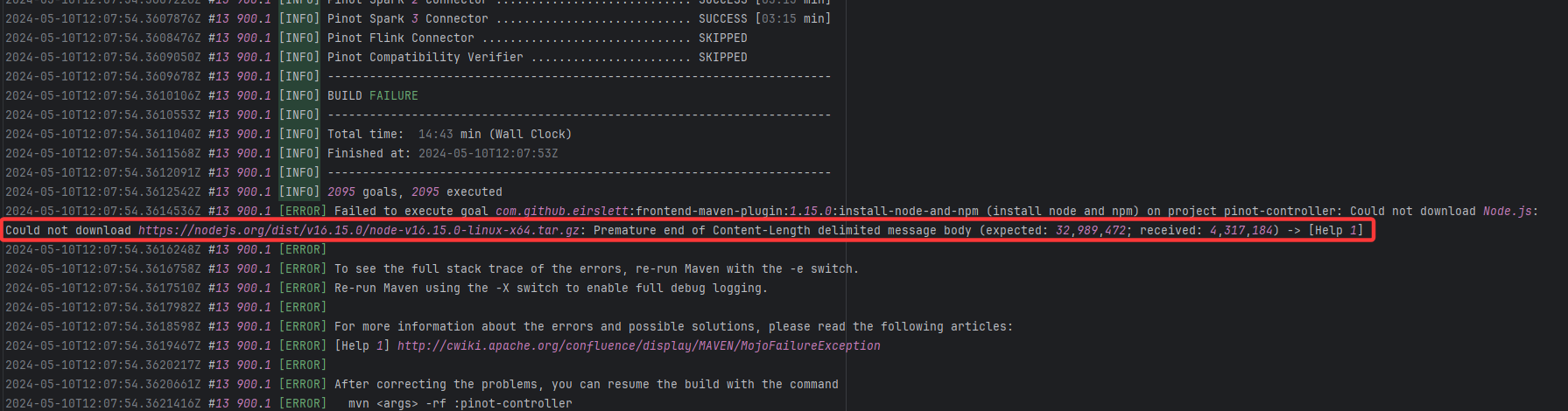

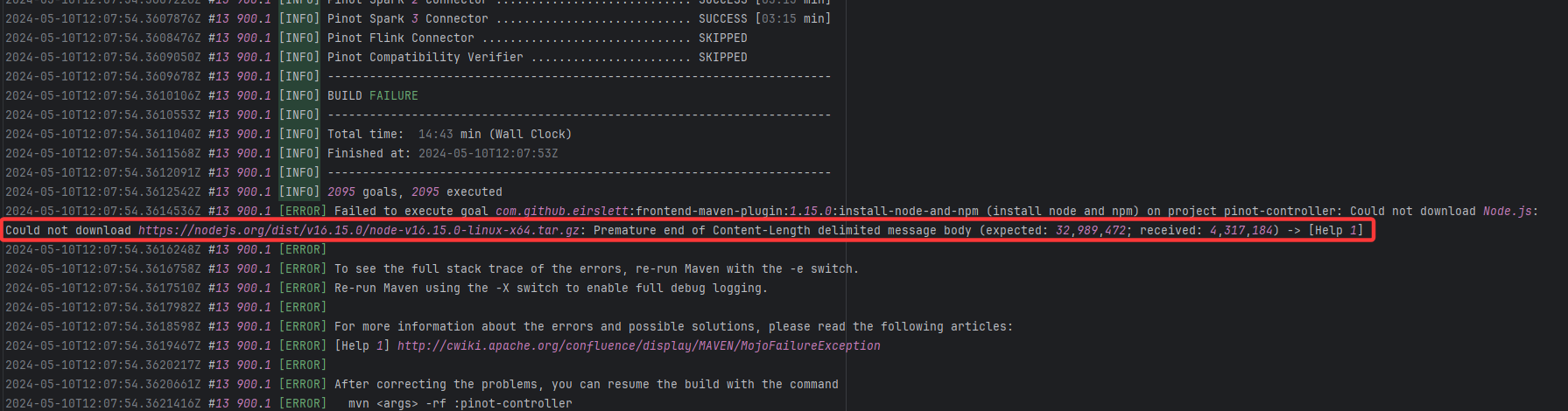

| apache/pinot |

24818704176 |

Resource Download Interruption |

Error: Premature end of Content-Length indicates that while downloading the Node.js installation package, the net

Root Cause: The download operation was successfully executed after a rerun. |

Short-term Fix: Rerun the job, which will automatically succeed after network conditions recover.

Long-term Defense:

- Continuously monitor the frequency of this error;

- Introduce an automatic retry mechanism (e.g., using a retry plugin) in the Workflow for steps susceptible to network fluctuations;

- If using a self-hosted Runner, troubleshoot the stability of the local firewall, proxy, or network egress. |

|

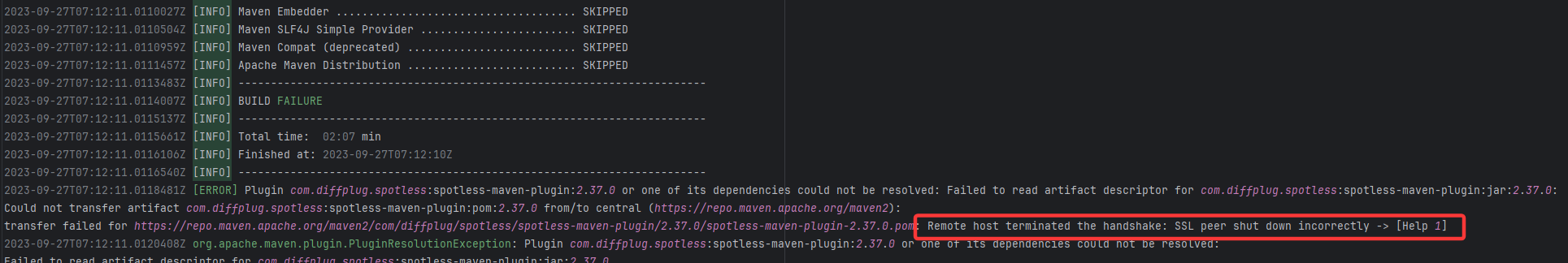

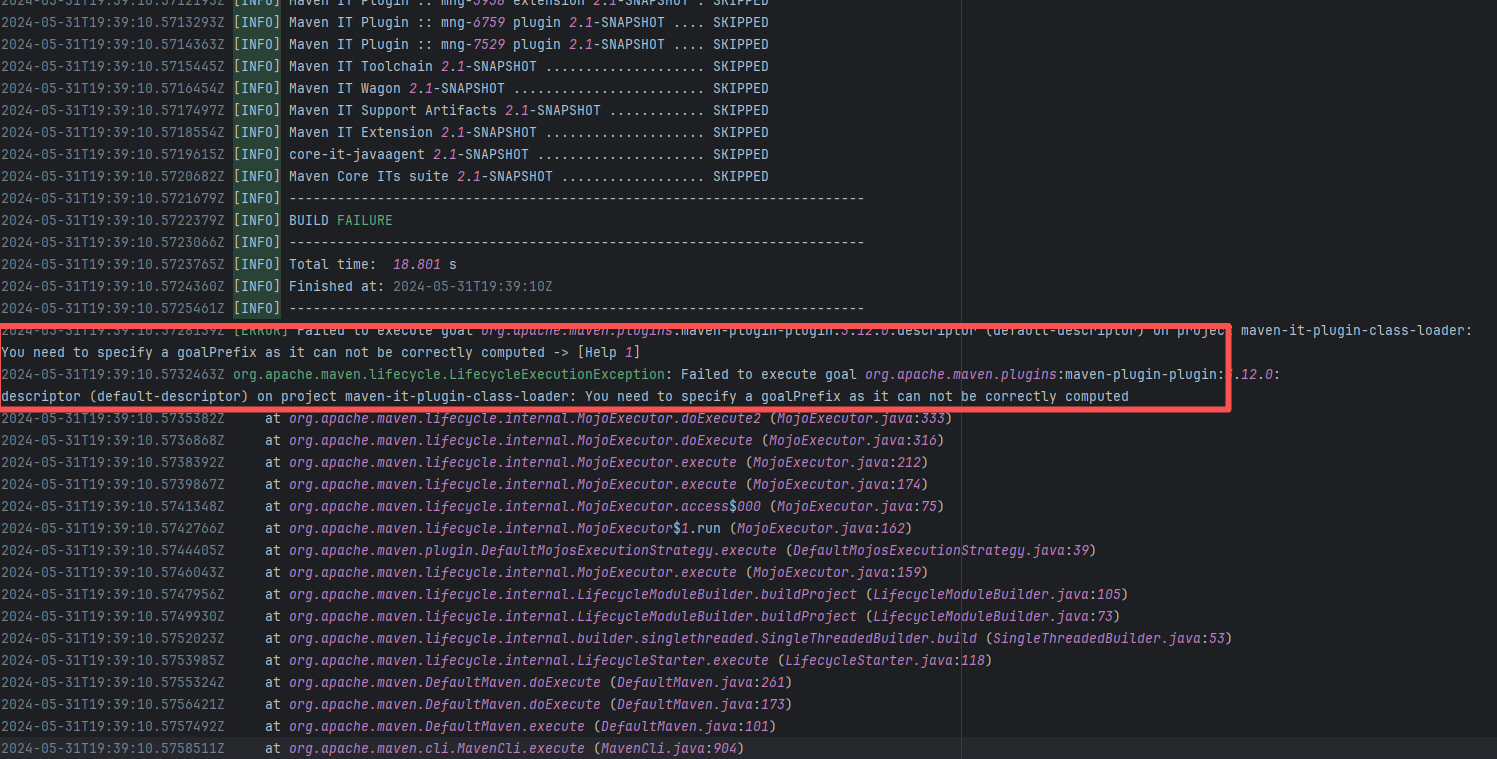

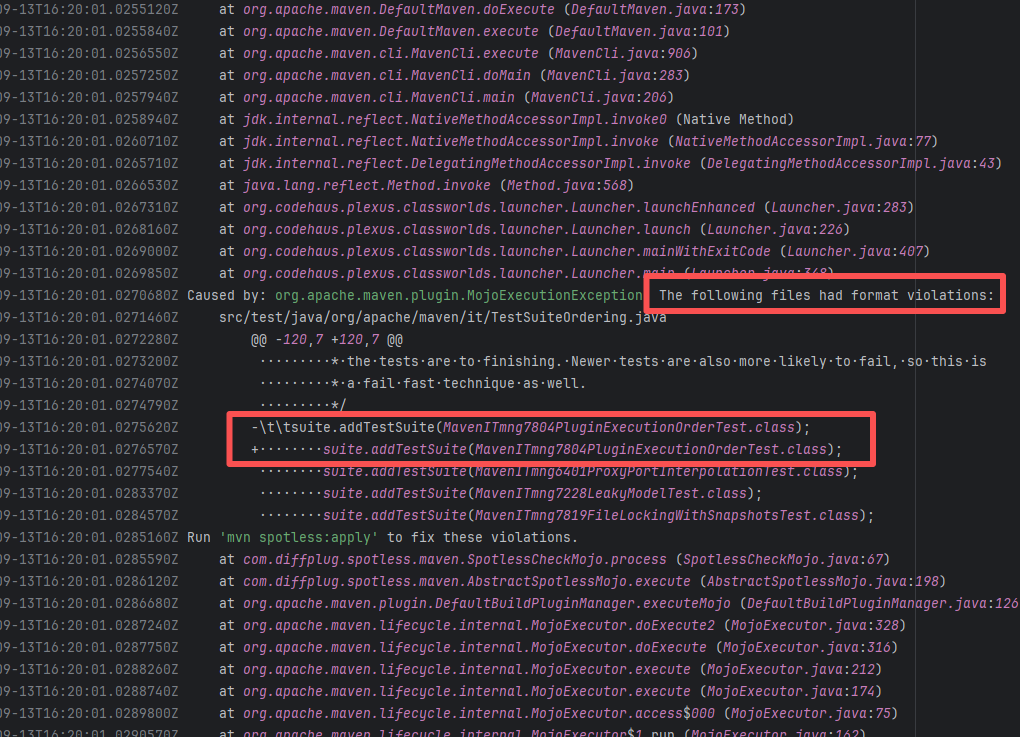

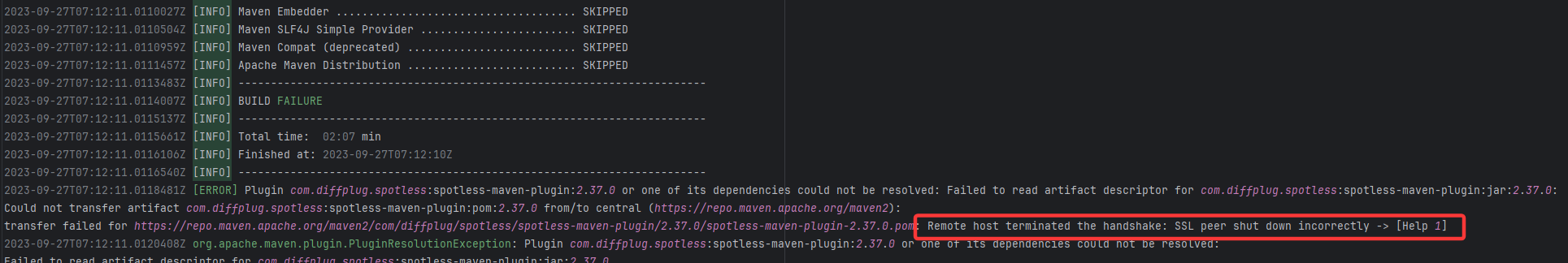

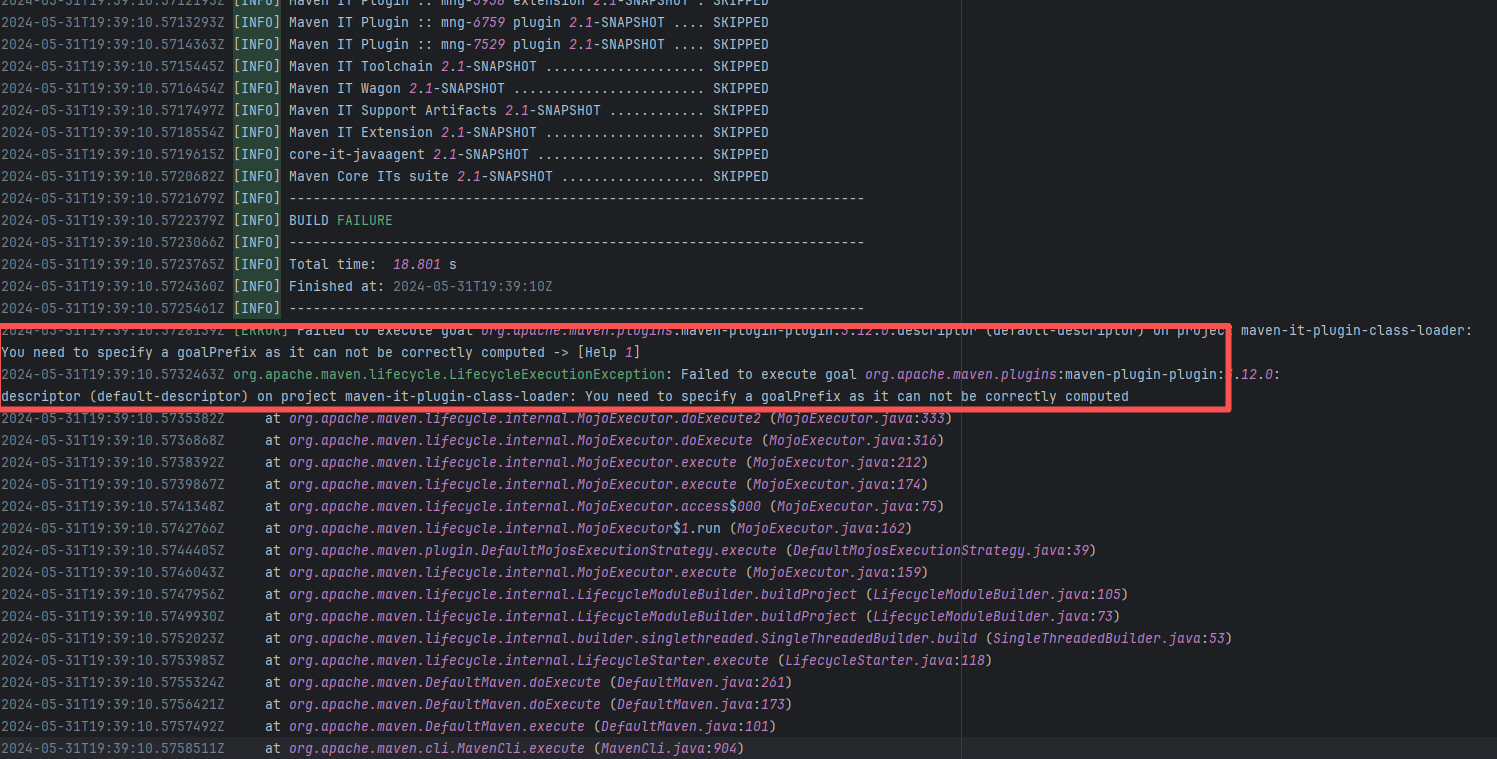

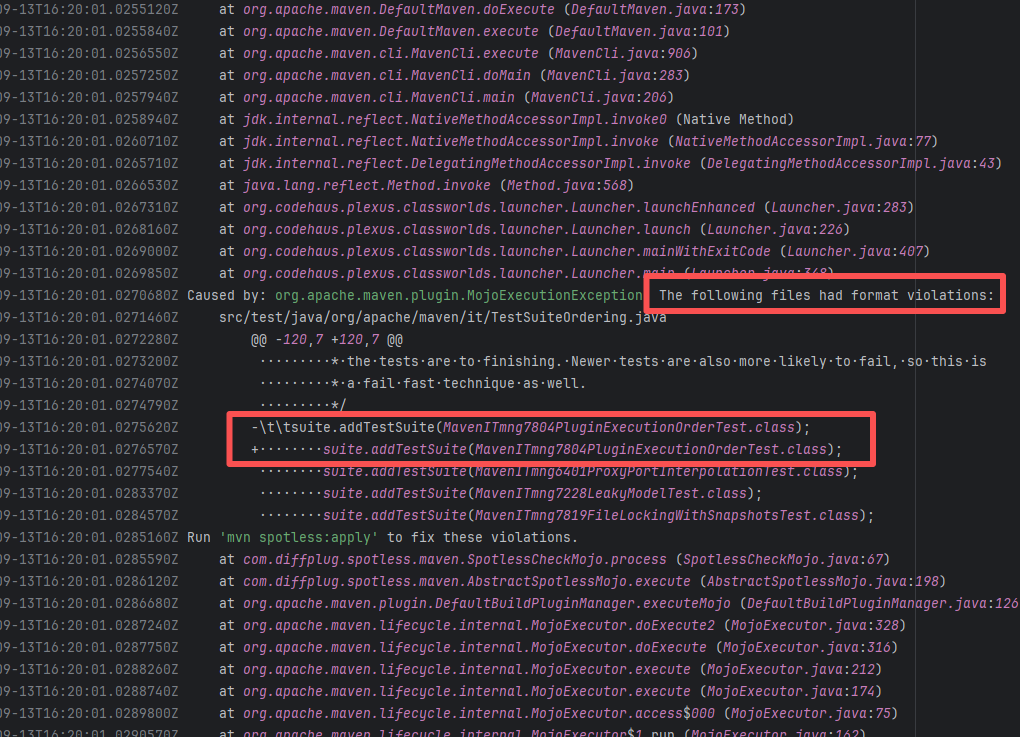

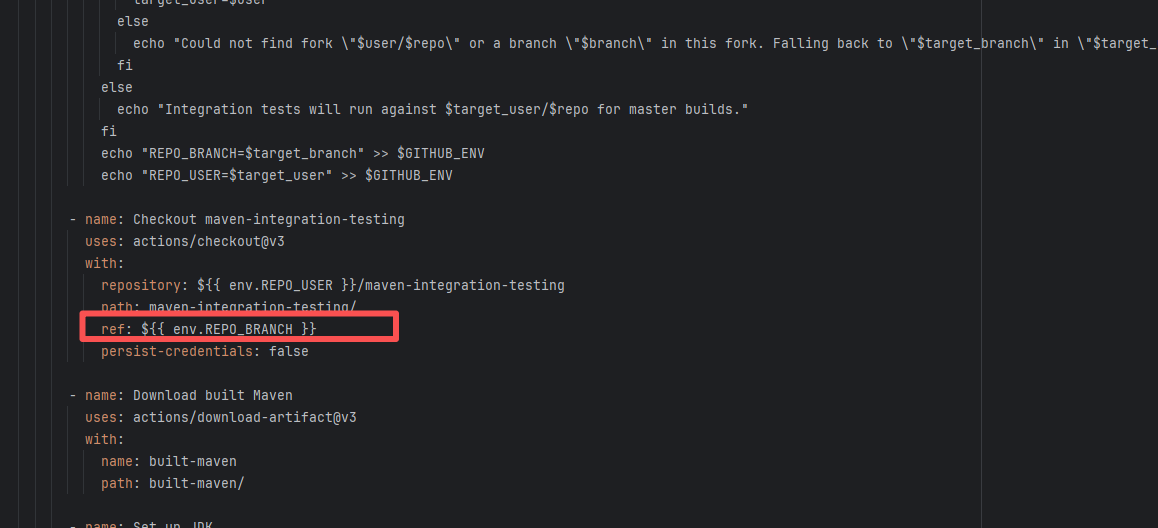

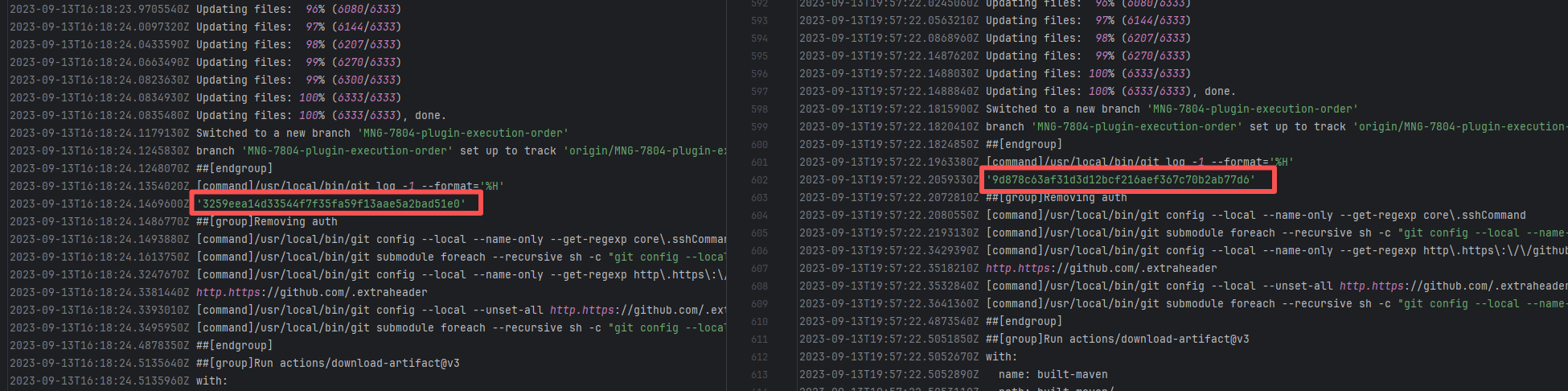

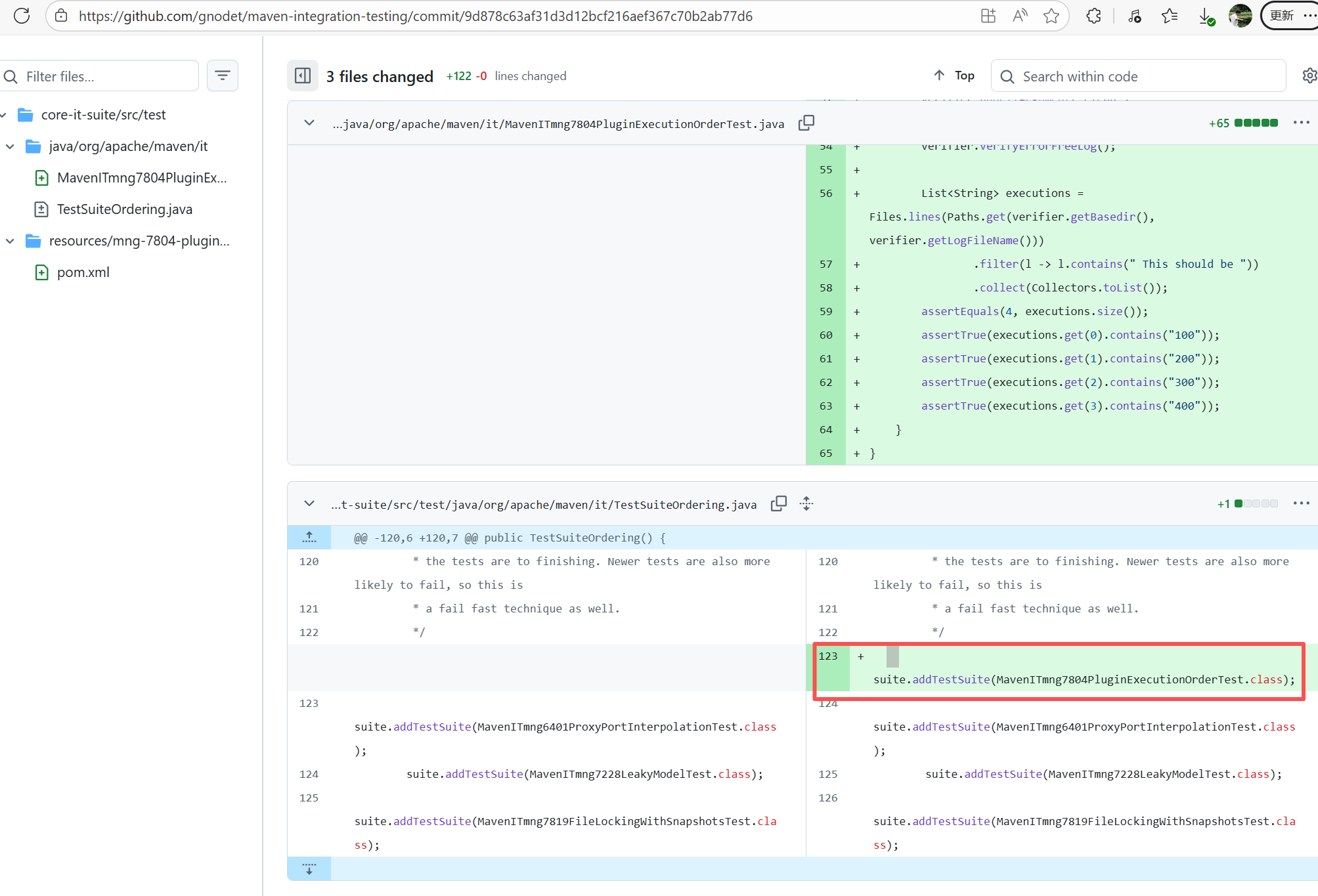

| apache/maven |

17168458533 |

TLS Handshake Failure |

Error: Remote host terminated the handshake indicates that while downloading from the Maven central repository,

Root Cause: The download operation was successfully executed after a rerun. |

Short-term Fix: Rerun the job, which will automatically succeed after network conditions recover.

Long-term Defense:

- Continuously monitor the frequency of this error;

- Introduce an automatic retry mechanism (e.g., using a retry plugin) in the Workflow for steps susceptible to network fluctuations;

- If using a self-hosted Runner, troubleshoot the stability of the local firewall, proxy, or network egress. |

|

| apache/maven |

17000228151 |

TLS Handshake Failure |

Error: Remote host terminated the handshake indicates that while downloading from the Maven central repository,

Root Cause: The download operation was successfully executed after a rerun. |

Short-term Fix: Rerun the job, which will automatically succeed after network conditions recover.

Long-term Defense:

- Continuously monitor the frequency of this error;

- Introduce an automatic retry mechanism (e.g., using a retry plugin) in the Workflow for steps susceptible to network fluctuations;

- If using a self-hosted Runner, troubleshoot the stability of the local firewall, proxy, or network egress. |

|

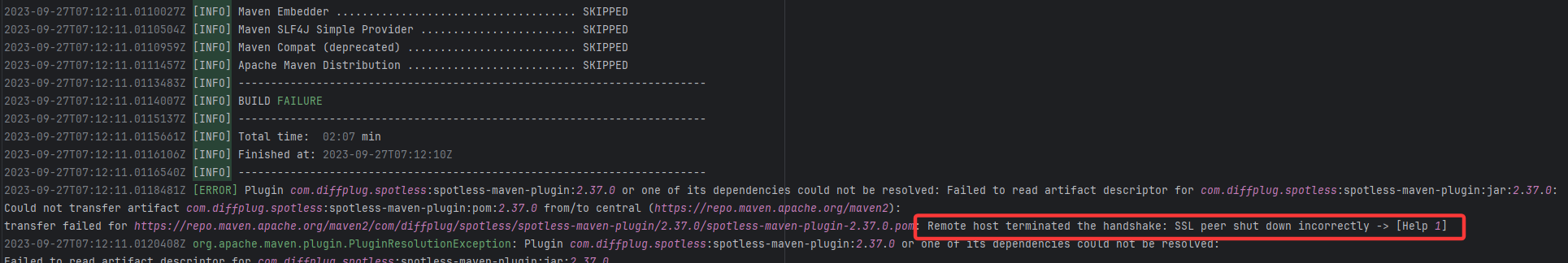

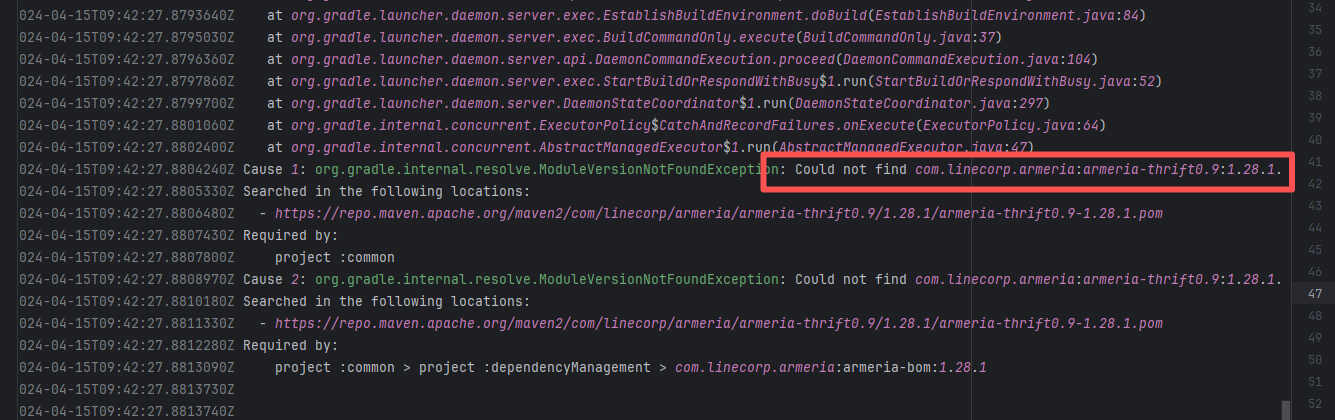

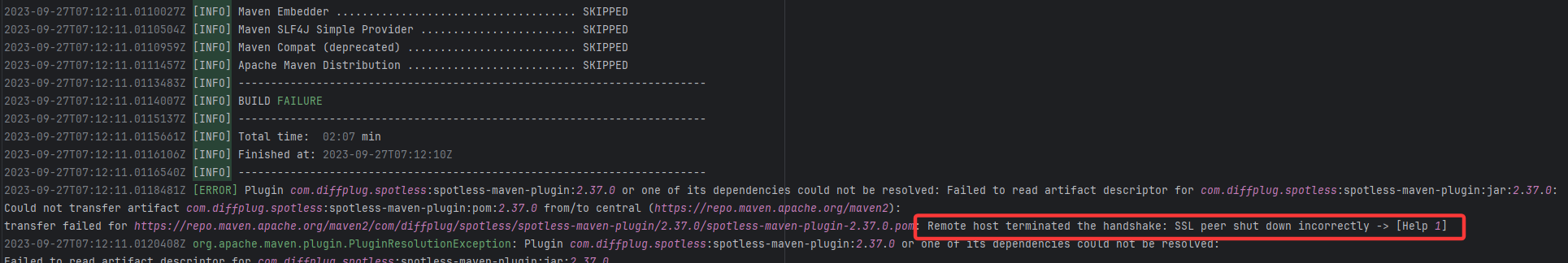

| apache/iotdb |

24938834029 |

Connection Refuse |

Error: Connection refused indicates that the build machine attempted to connect to the Apache mirror repository (repo

Root Cause: The download operation was successfully executed after a rerun. |

Short-term Fix: Rerun the job, which will automatically succeed after network conditions recover.

Long-term Defense:

- Continuously monitor the frequency of this error;

- Introduce an automatic retry mechanism (e.g., using a retry plugin) in the Workflow for steps susceptible to network fluctuations;

- If using a self-hosted Runner, troubleshoot the stability of the local firewall, proxy, or network egress. |

|

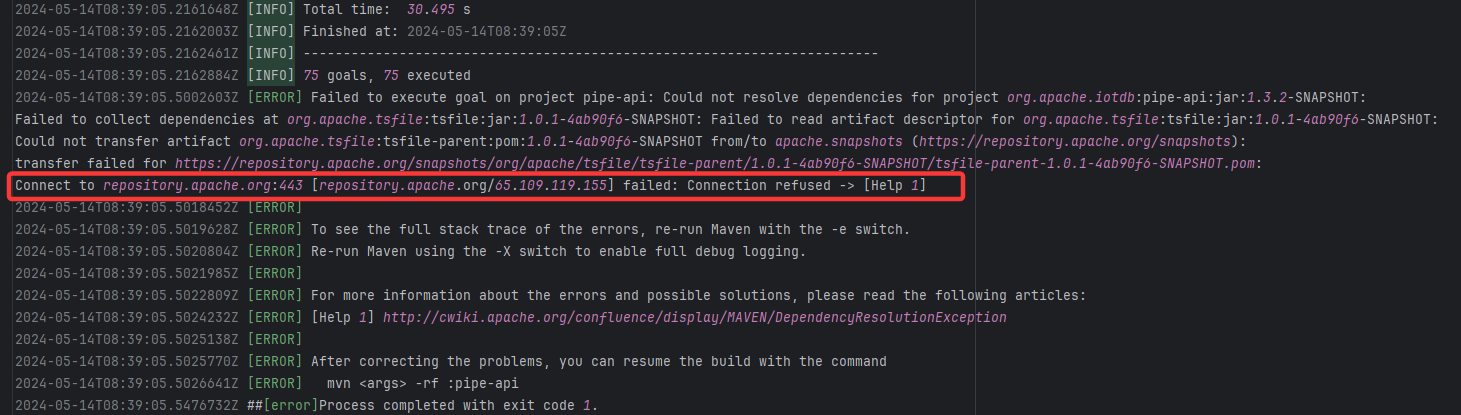

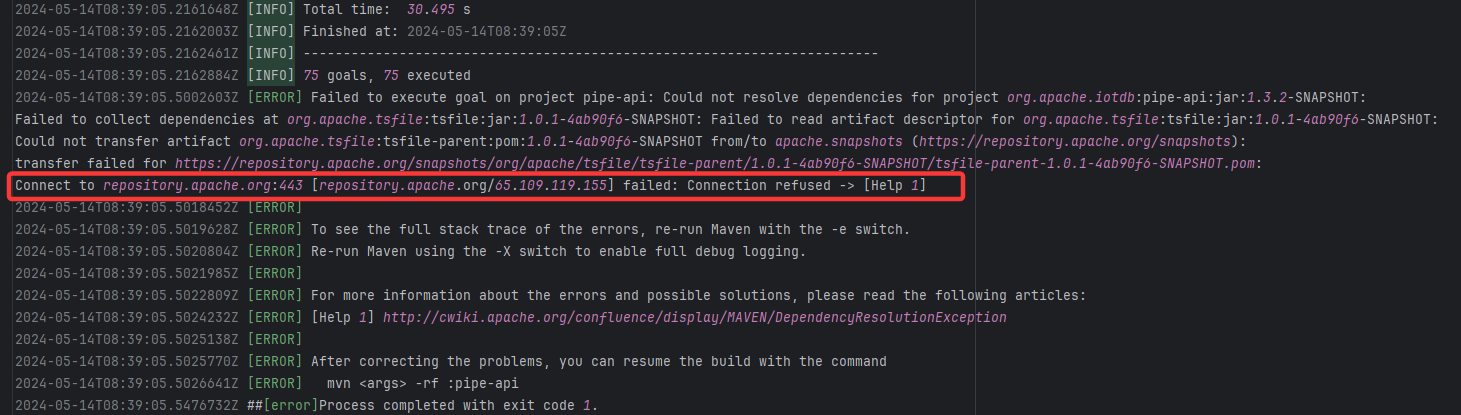

| Dependency Resolution Issue |

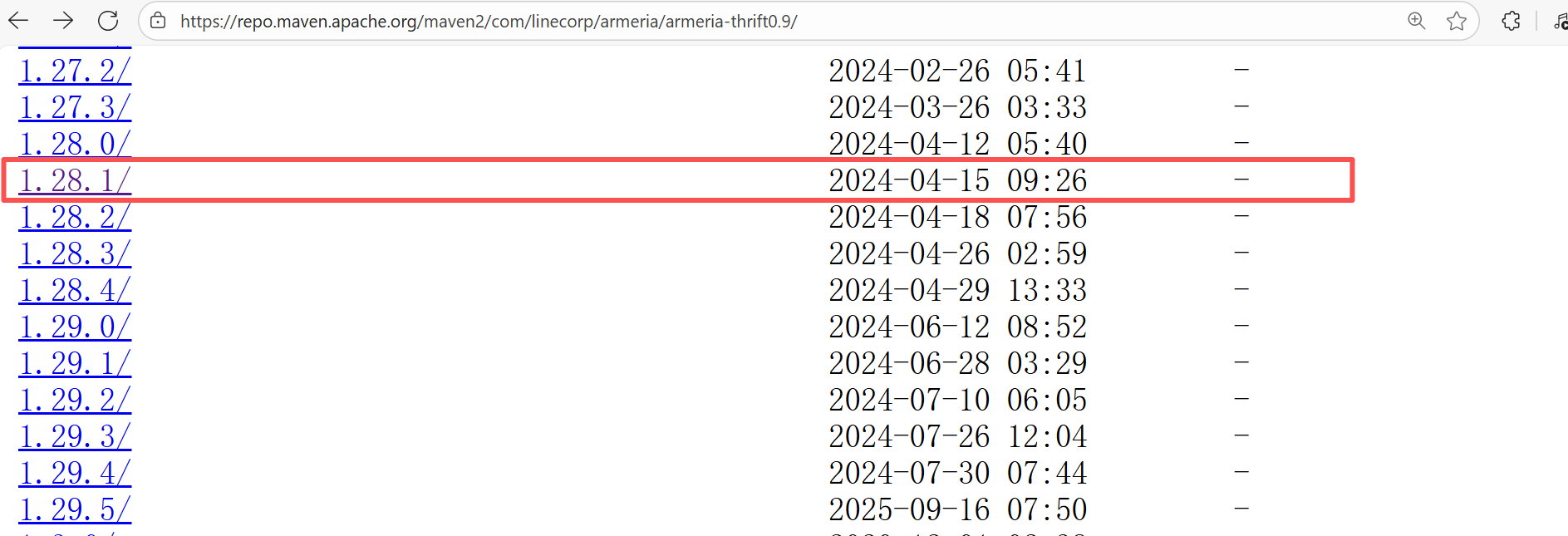

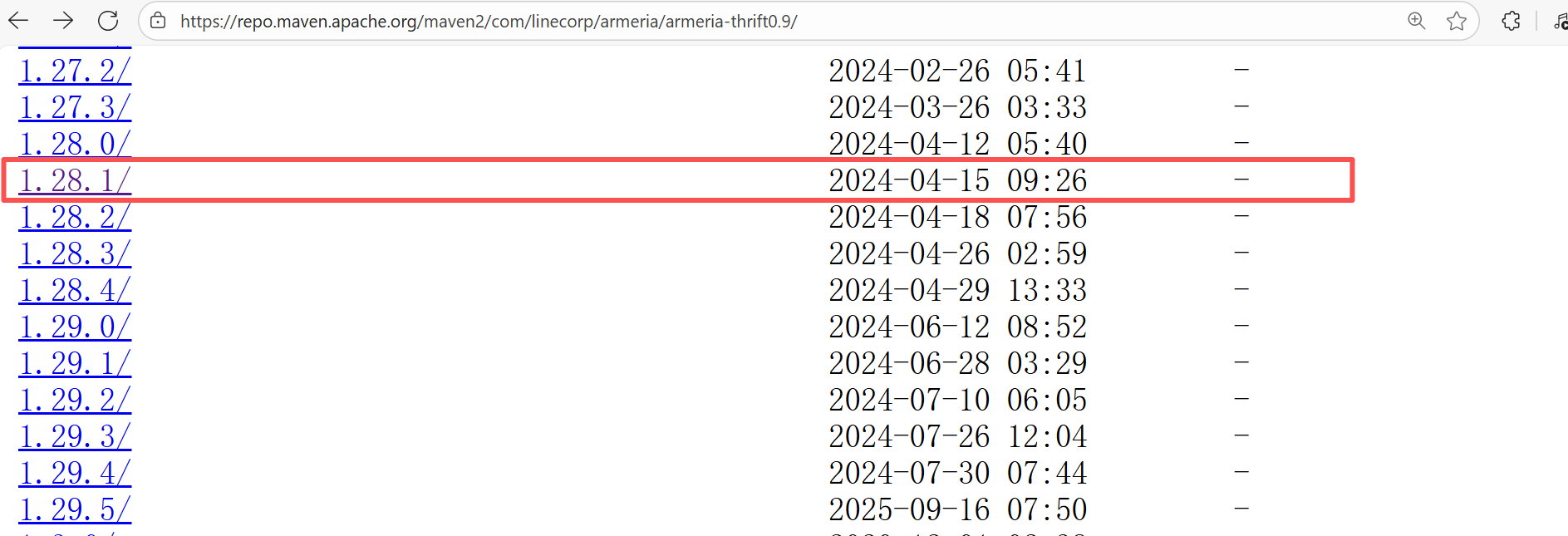

line/centraldogma |

23819340068 |

Missing Dependency |

Error: The error indicates incorrect dependency coordinates, and the dependency does not exist in the specified Maven repository.

Root Cause: Checking the repository dependencies revealed that it was uploaded (4-15 9:26) before the job execution (4-15 9:40). Therefore, the error is due to repository synchronization delay. The dependency was just uploaded to the official repository, but the mirror had not yet synchronized, leading to the dependency not being found. Succeeded after waiting for a period of time and rerunning. |

Wait for a while, and rerun after the mirror repository synchronization is complete. |

|

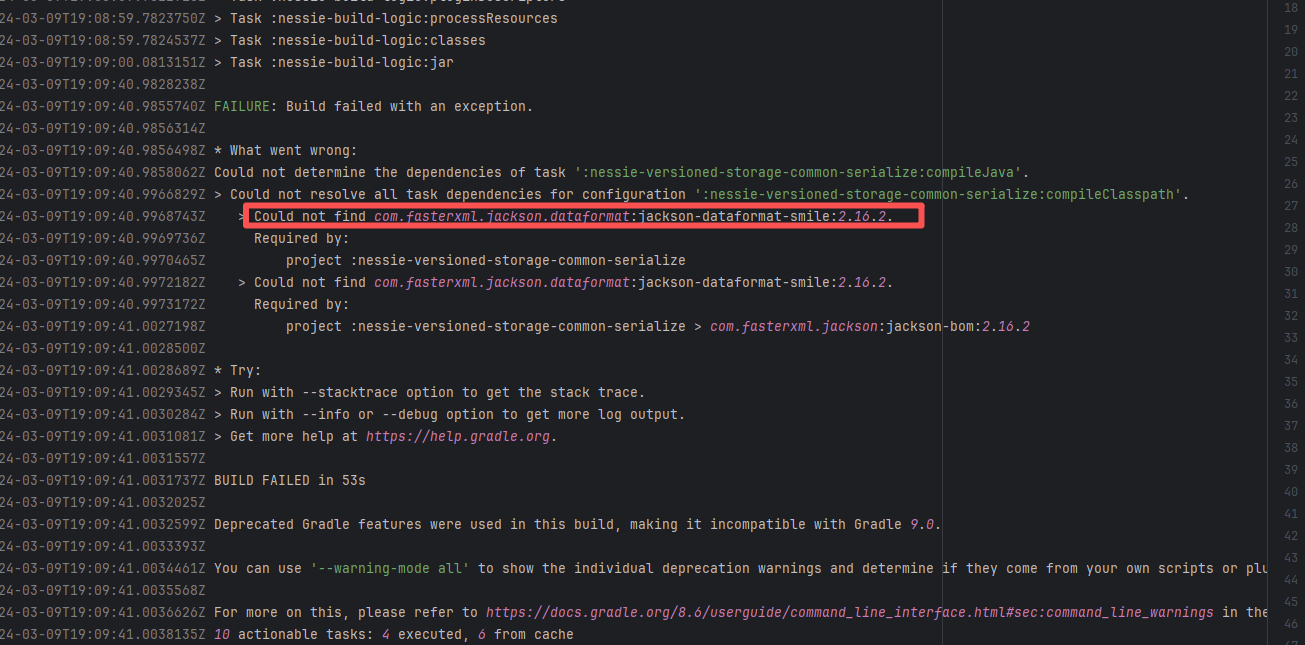

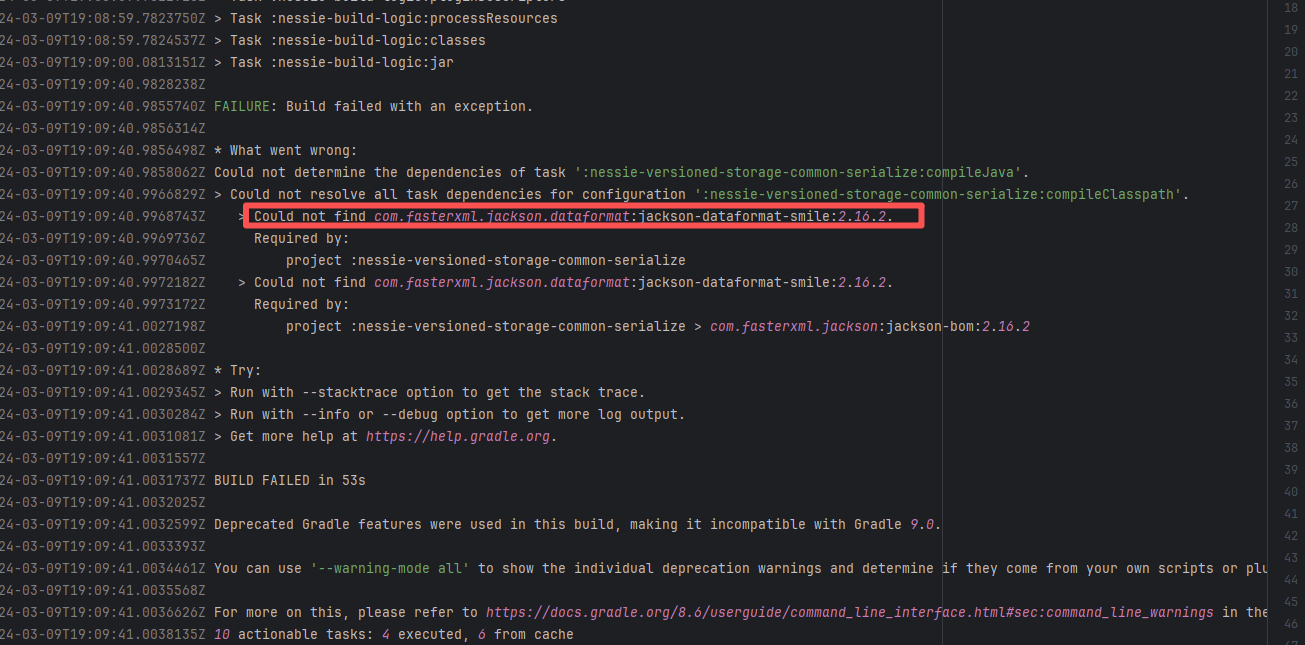

| projectnessie/nessie |

22471407186 |

Network Issue |

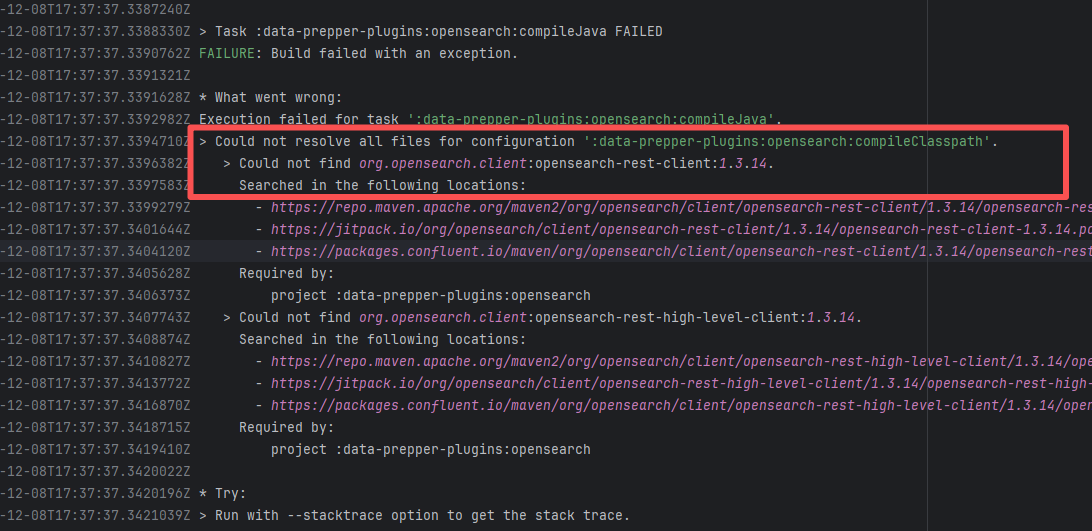

Error: An error occurred while fetching dependencies from the Maven repository.

Root Cause: Succeeded after a rerun, which indicates that the error is a transient error caused by network fluctuations. |

Short-term Fix: Rerun the job; it will automatically succeed after network conditions recover.

Long-term Defense:

1. Configure domestic mirror sources to replace official sources;

2. Add a retry mechanism (e.g., retry plugin);

3. Use CDN to accelerate dependency downloads. |

|

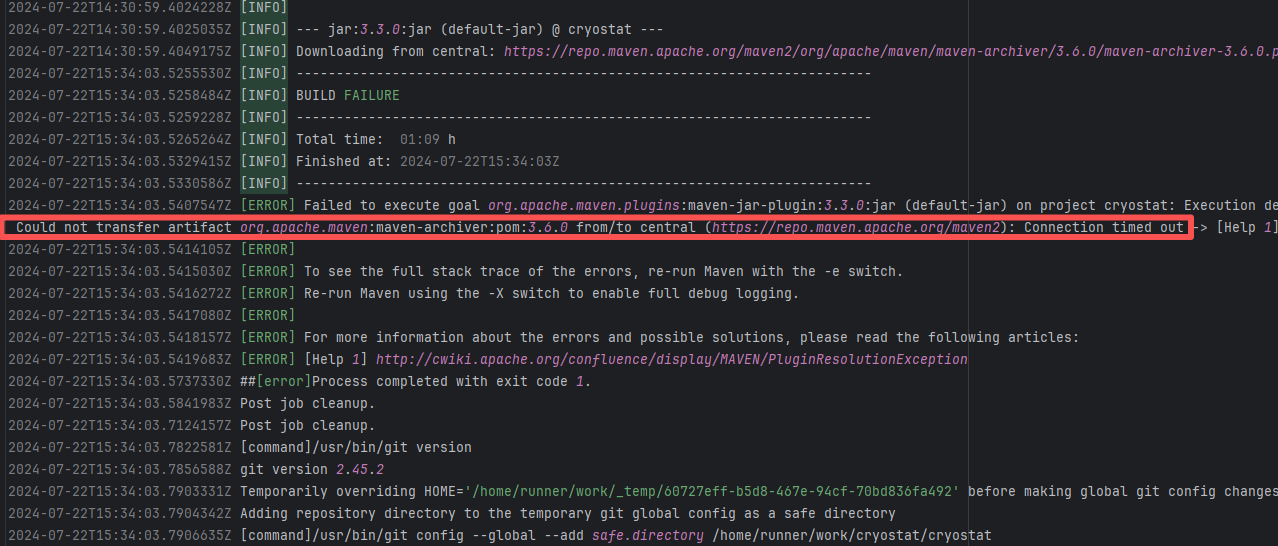

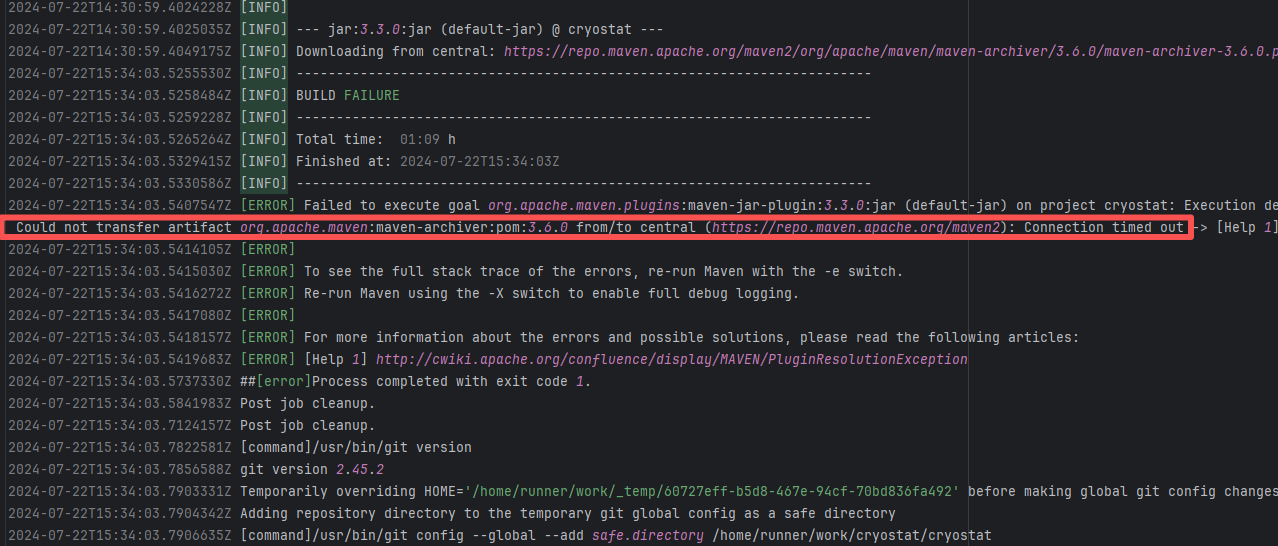

| cryostatio/cryostat |

27753664930 |

Network Issue |

Error: An error occurred while fetching dependencies from the Maven repository, resulting in a Connection timed out error.

Root Cause: Succeeded after a rerun. This indicates that the error was not a service provider server error, but a transient error caused by network fluctuations. |

Short-term Fix: Rerun the job; it will automatically succeed after network conditions recover.

Long-term Defense:

1. Configure domestic mirror sources to replace official sources;

2. Add a retry mechanism (e.g., retry plugin);

3. Use CDN to accelerate dependency downloads. |

|

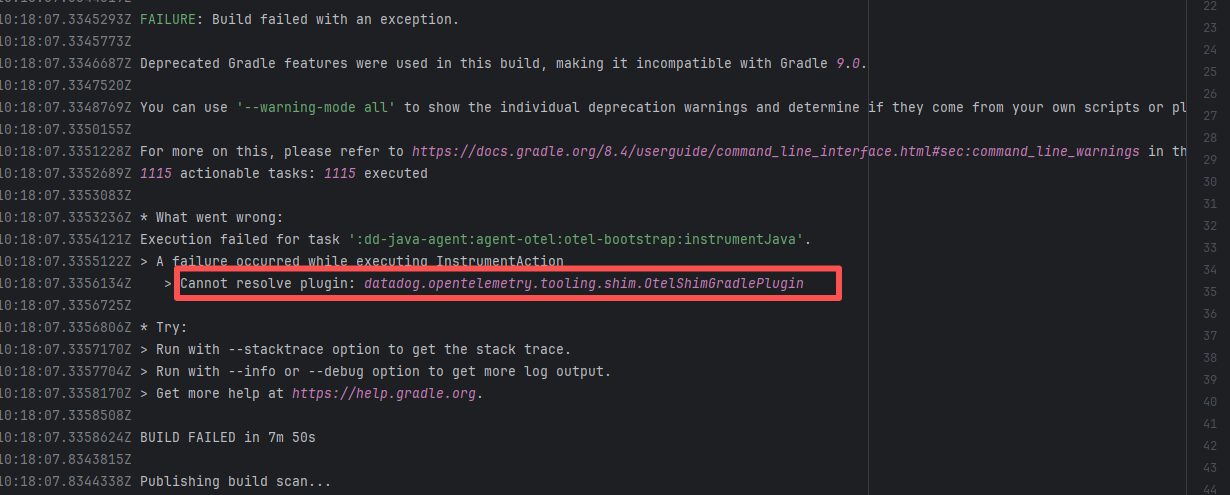

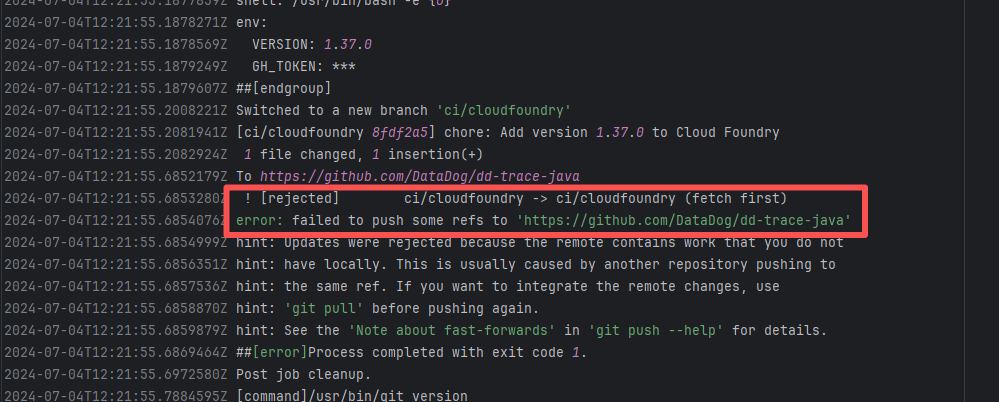

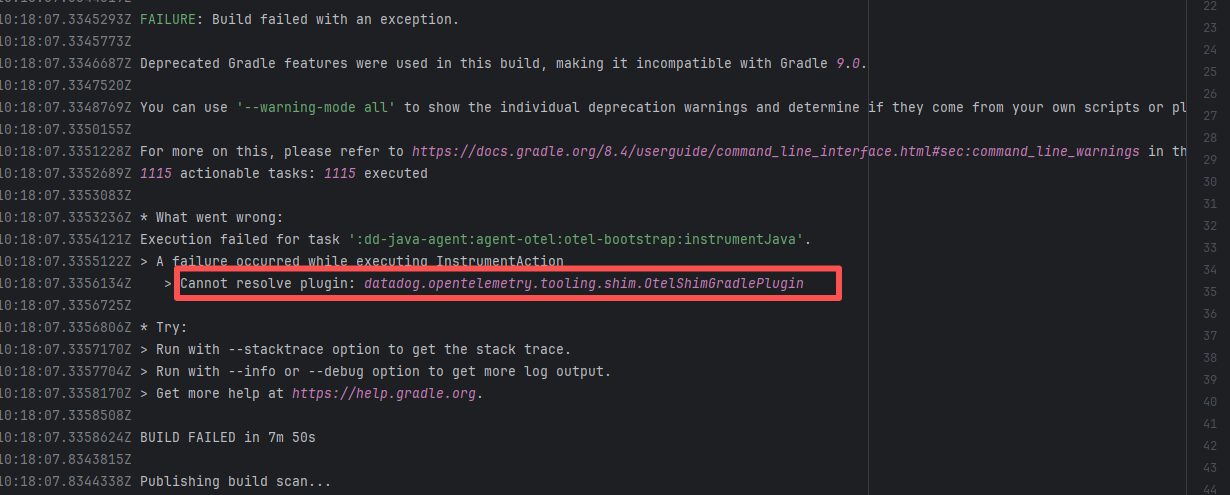

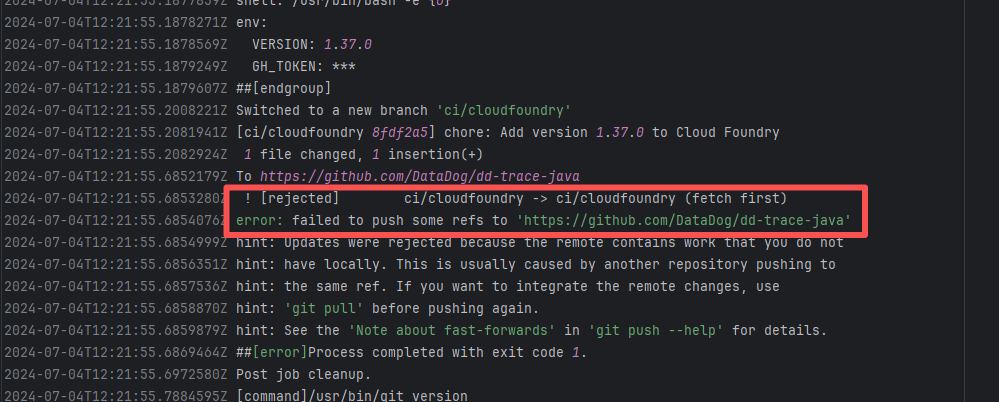

| datadog/dd-trace-java |

27448113573 |

Network Issue |

Error: An error occurred while fetching Plugin dependencies from the Maven repository.

Root Cause: Succeeded after a rerun, which indicates that the error is a transient error caused by network fluctuations. |

Short-term Fix: Rerun the job; it will automatically succeed after network conditions recover.

Long-term Defense:

1. Configure domestic mirror sources to replace official sources;

2. Add a retry mechanism (e.g., retry plugin);

3. Use CDN to accelerate dependency downloads. |

|

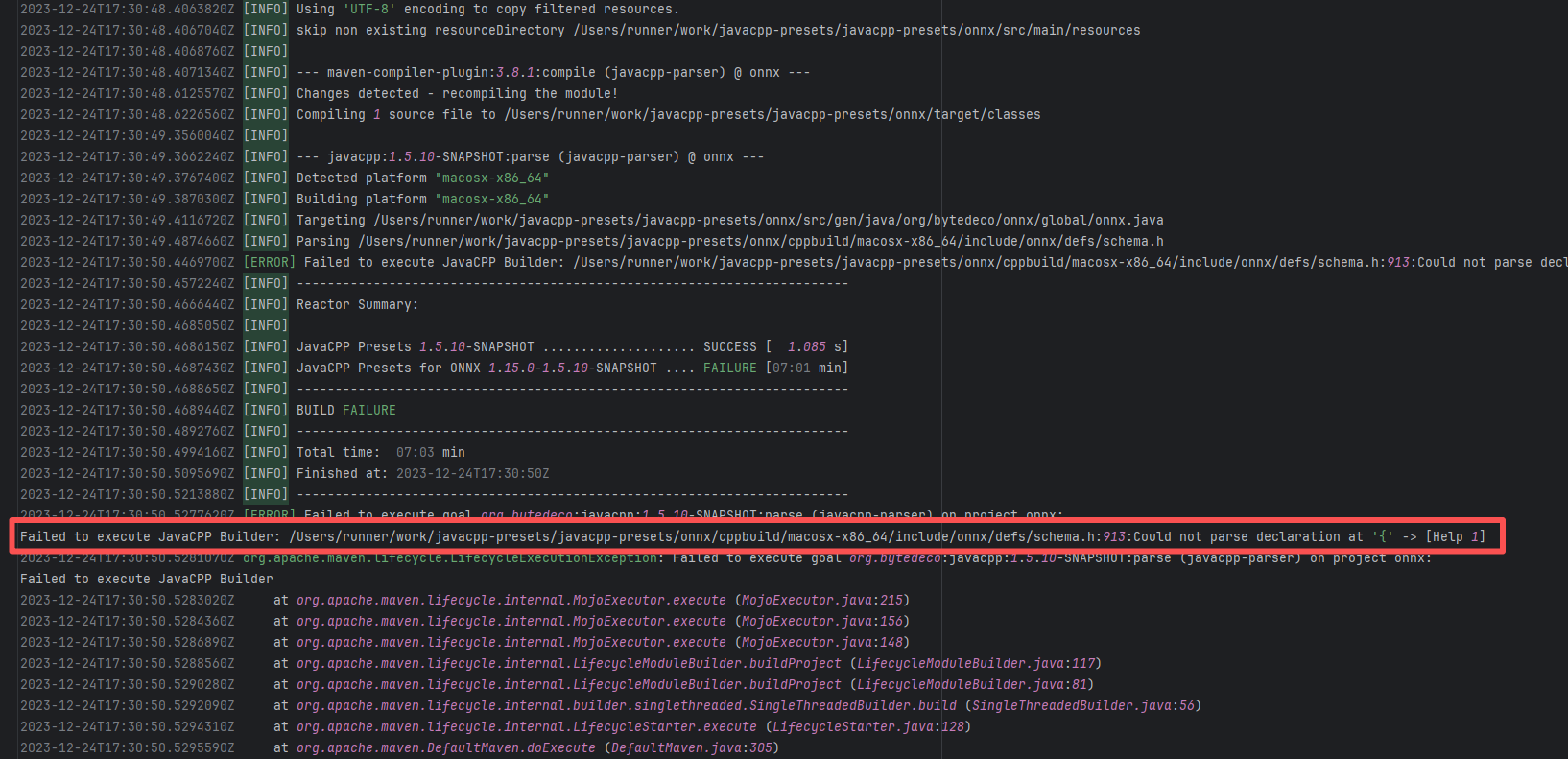

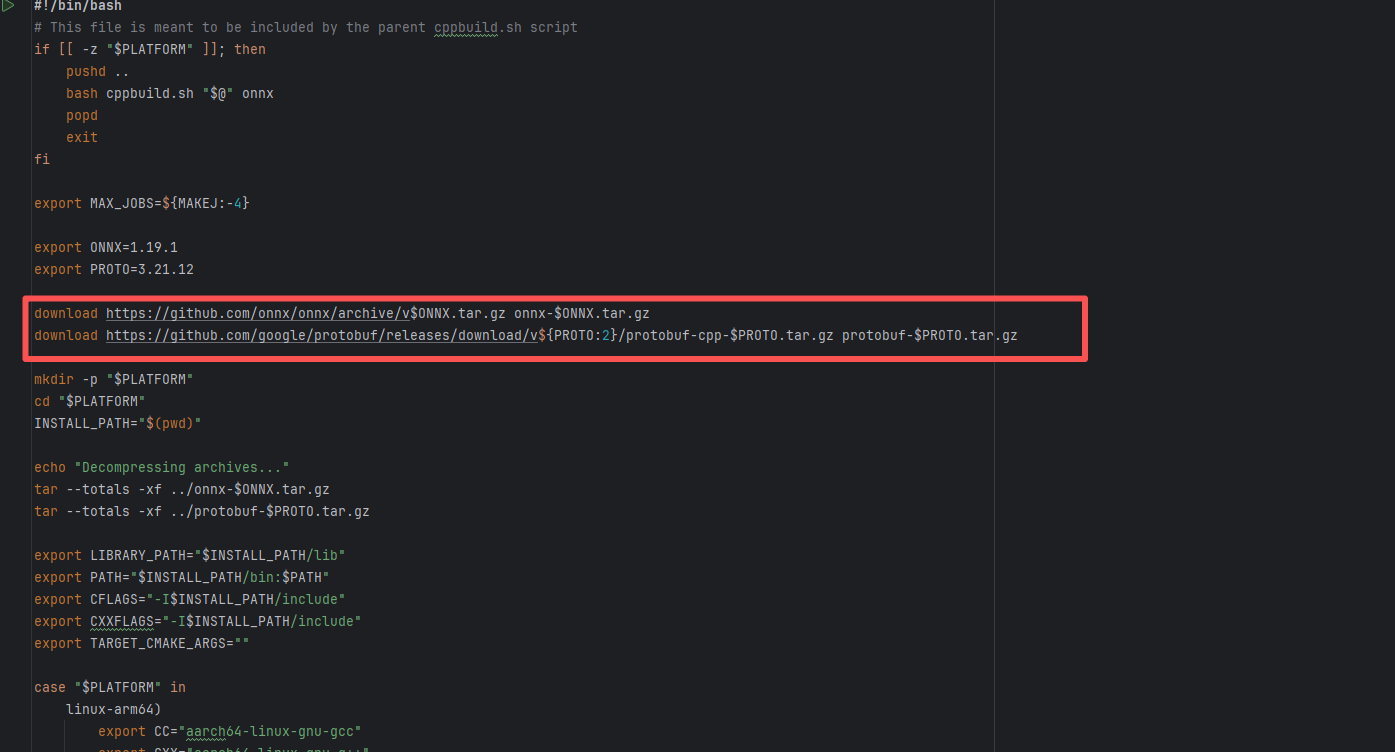

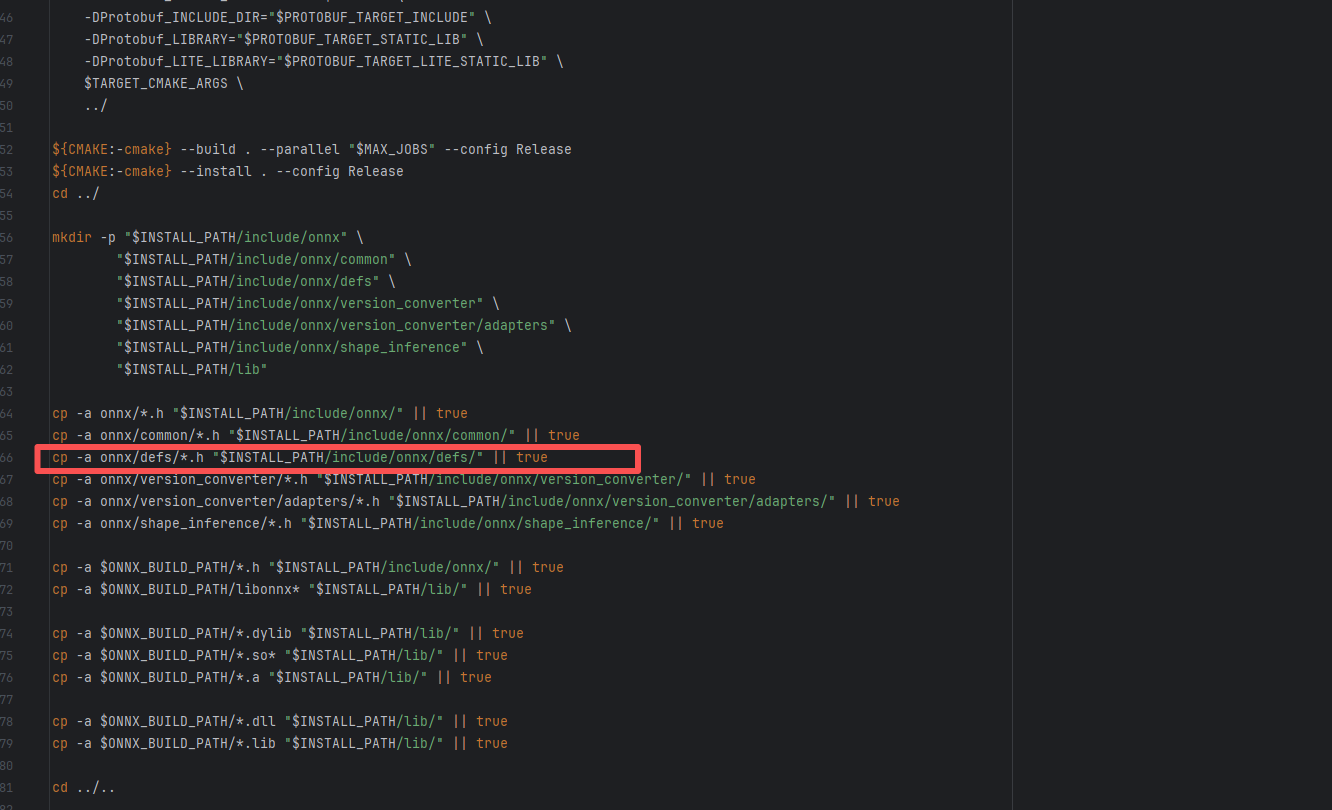

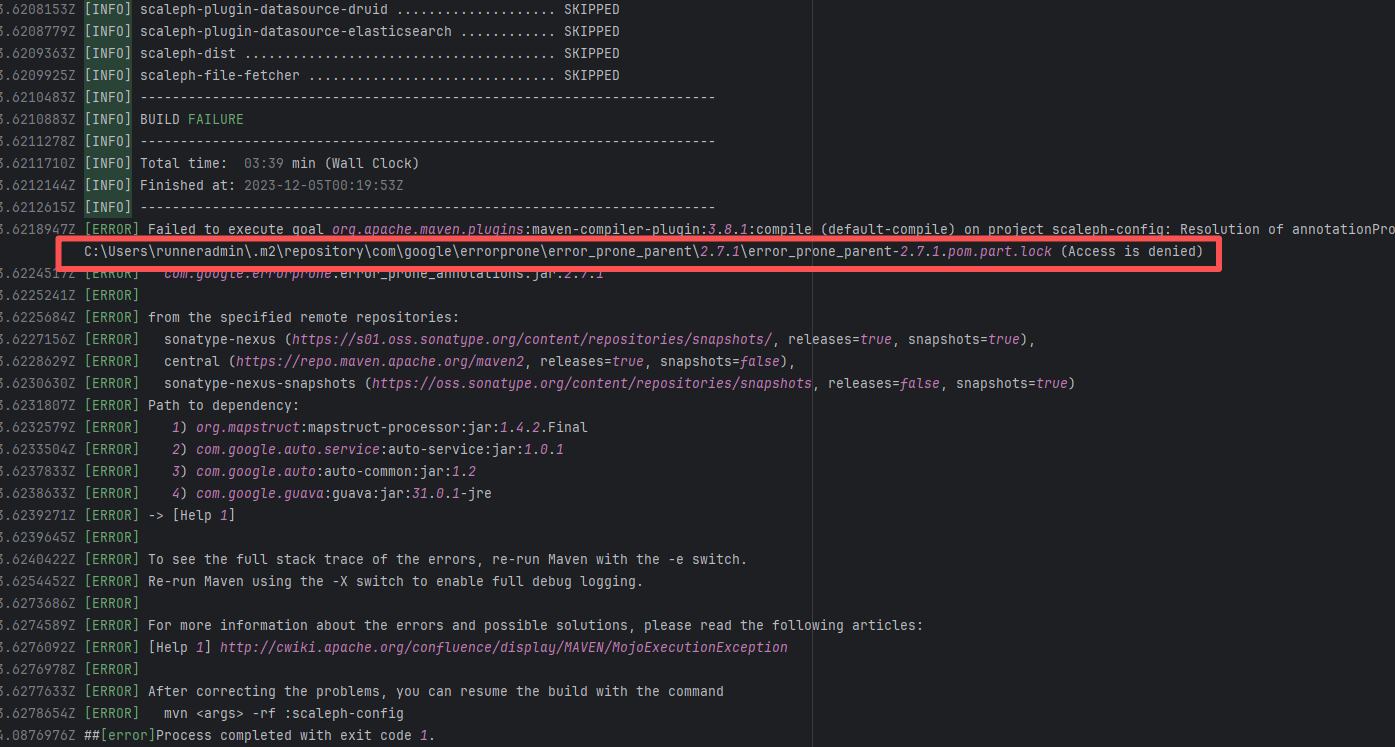

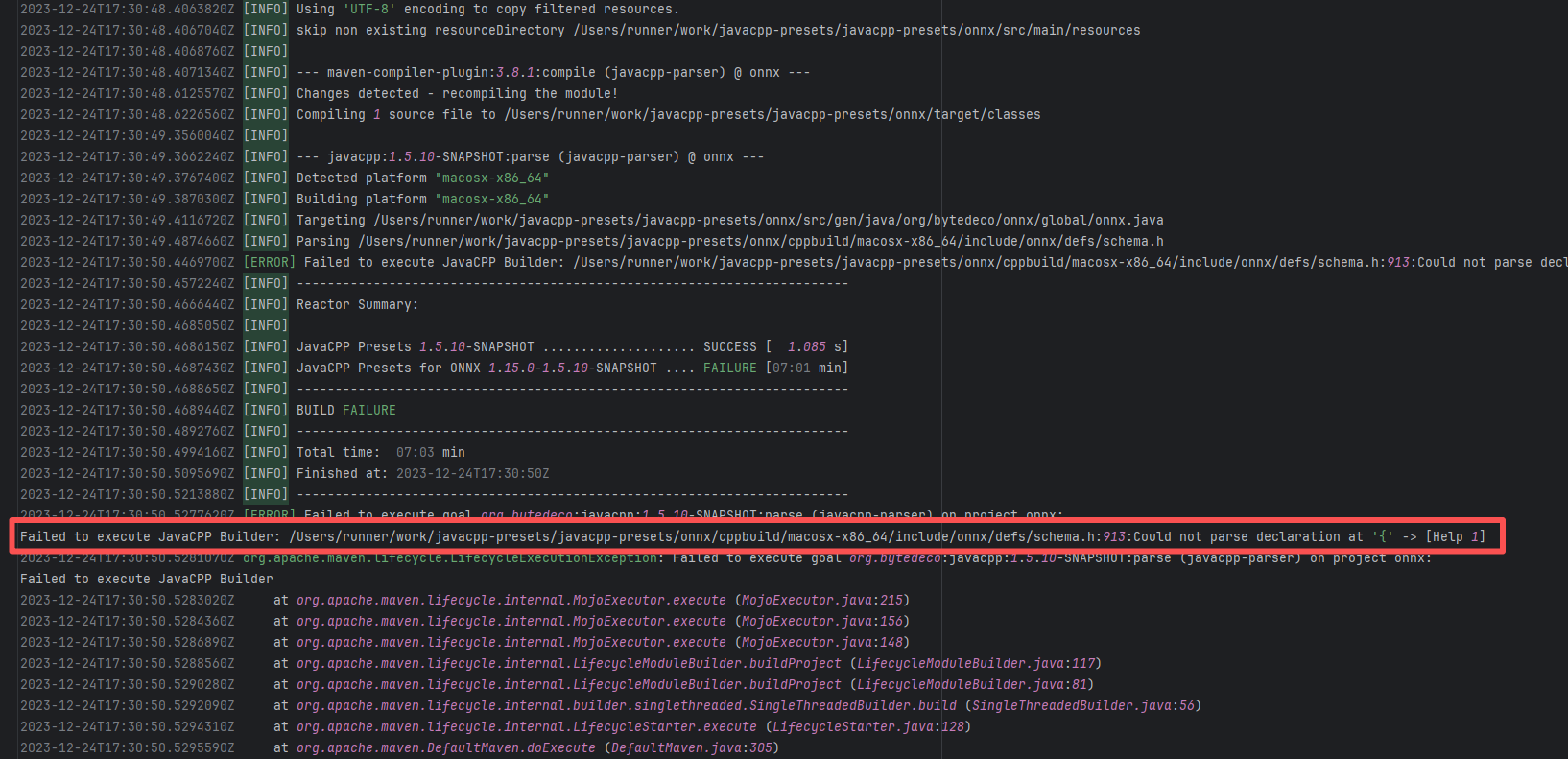

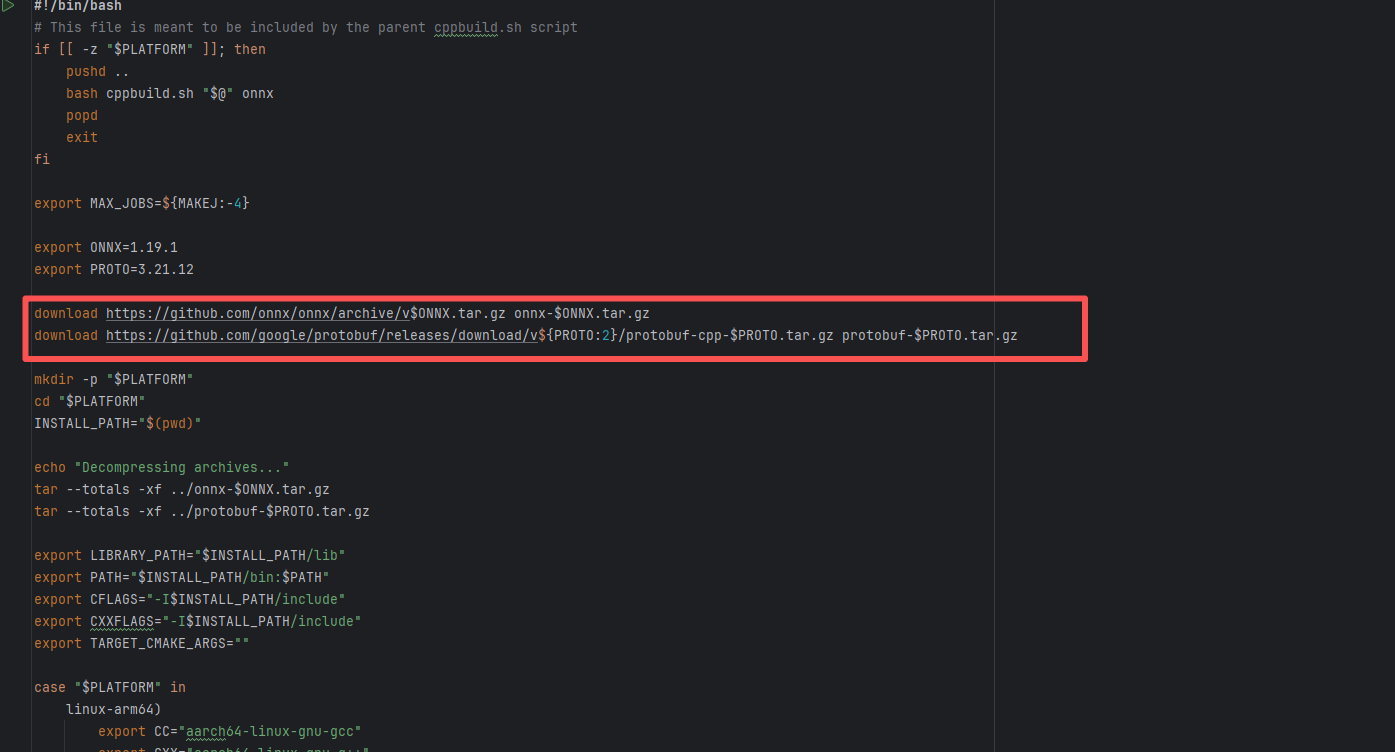

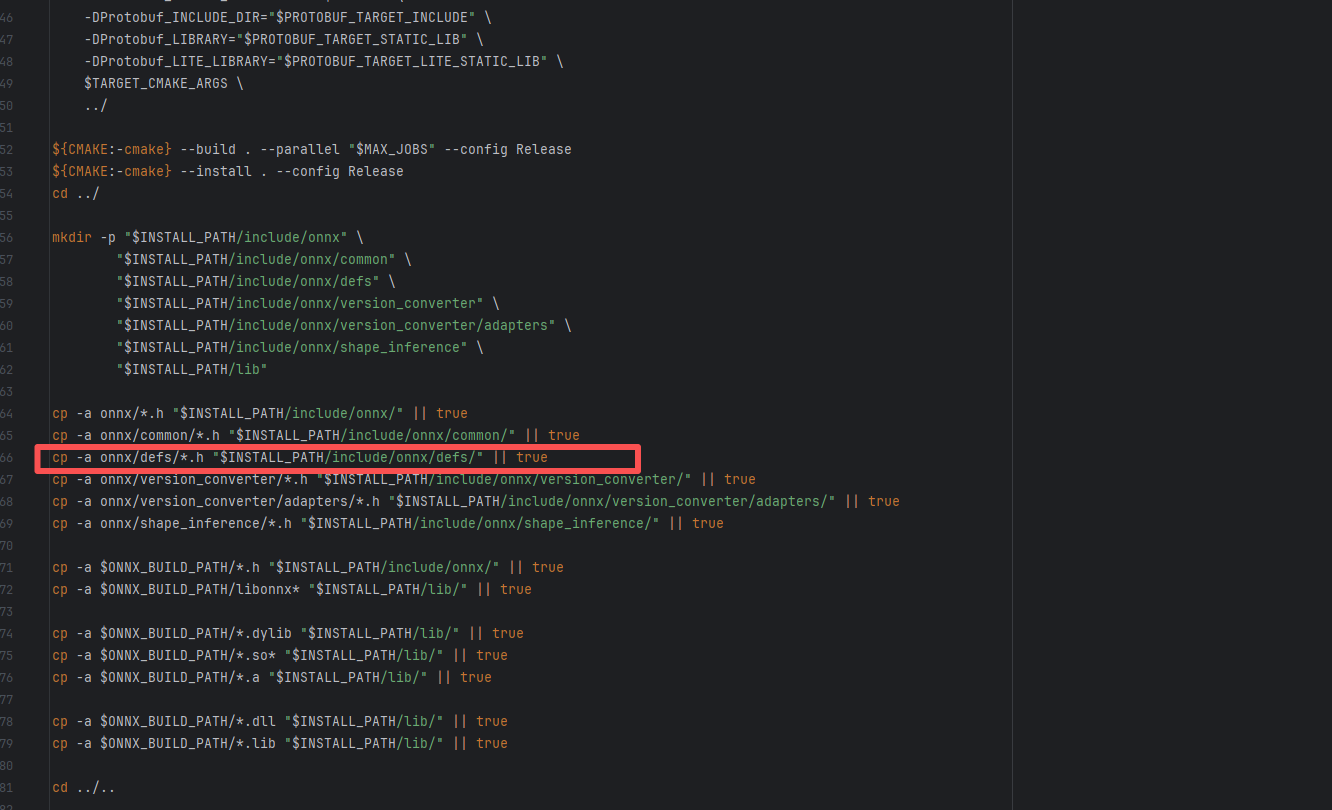

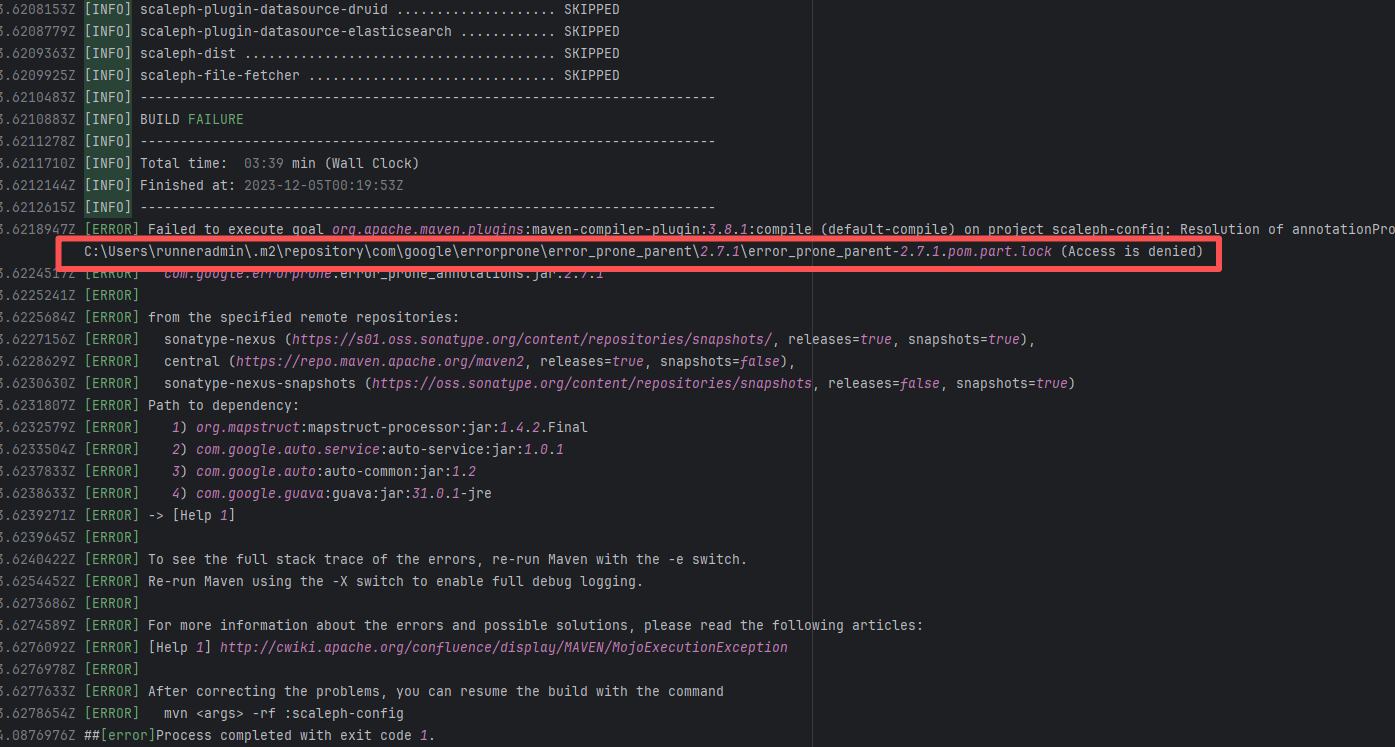

| bytedeco/javacpp-presets |

20063025513 |

Network Issue |

Error: An error occurred while fetching dependencies from the Maven repository; connecting to the IP failed.

Root Cause: Succeeded after a rerun, which indicates that the error is a transient error caused by network fluctuations. |

Short-term Fix: Rerun the job; it will automatically succeed after network conditions recover.

Long-term Defense:

1. Configure domestic mirror sources to replace official sources;

2. Add a retry mechanism (e.g., retry plugin);

3. Use CDN to accelerate dependency downloads. |

|

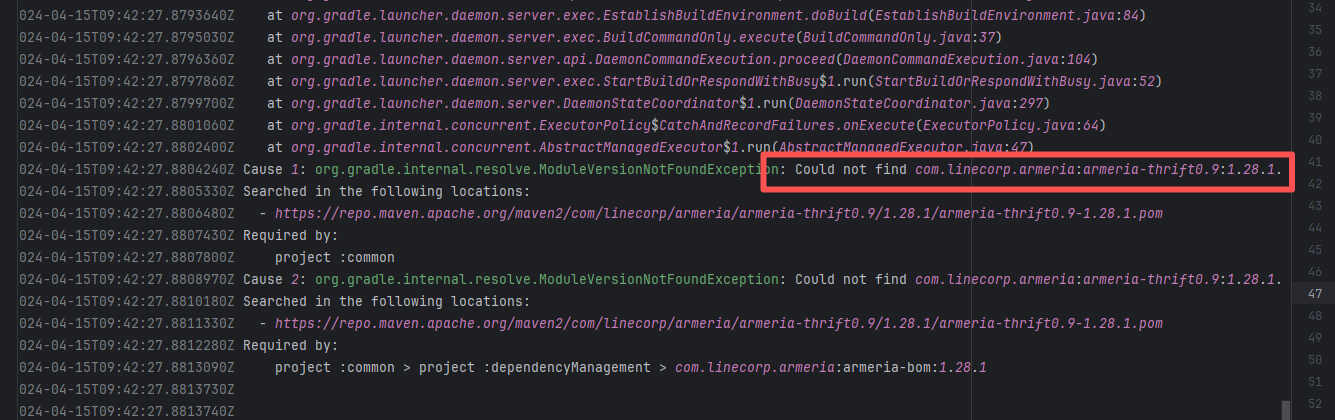

| junit-team/junit5 |

19042736168 |

Missing Dependency |

Error: The error indicates incorrect dependency coordinates, and the dependency does not exist in the specified Maven repository.

Root Cause: Checking the repository dependencies revealed that it was uploaded (4-15 9:26) before the job execution (4-15 9:40). Therefore, the error is due to repository synchronization delay. The dependency was just uploaded to the official repository, but the mirror had not yet synchronized, leading to the dependency not being found. Succeeded after waiting for a period of time and rerunning. |

Wait for a while, and rerun after the mirror repository synchronization is complete. |

|

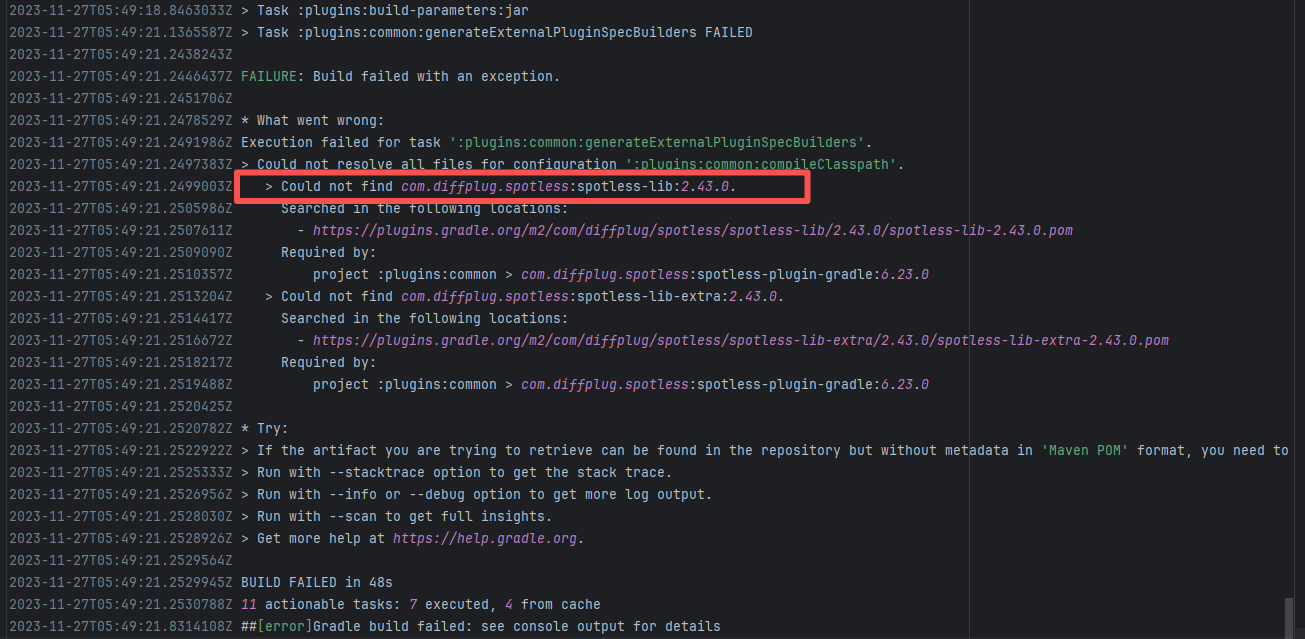

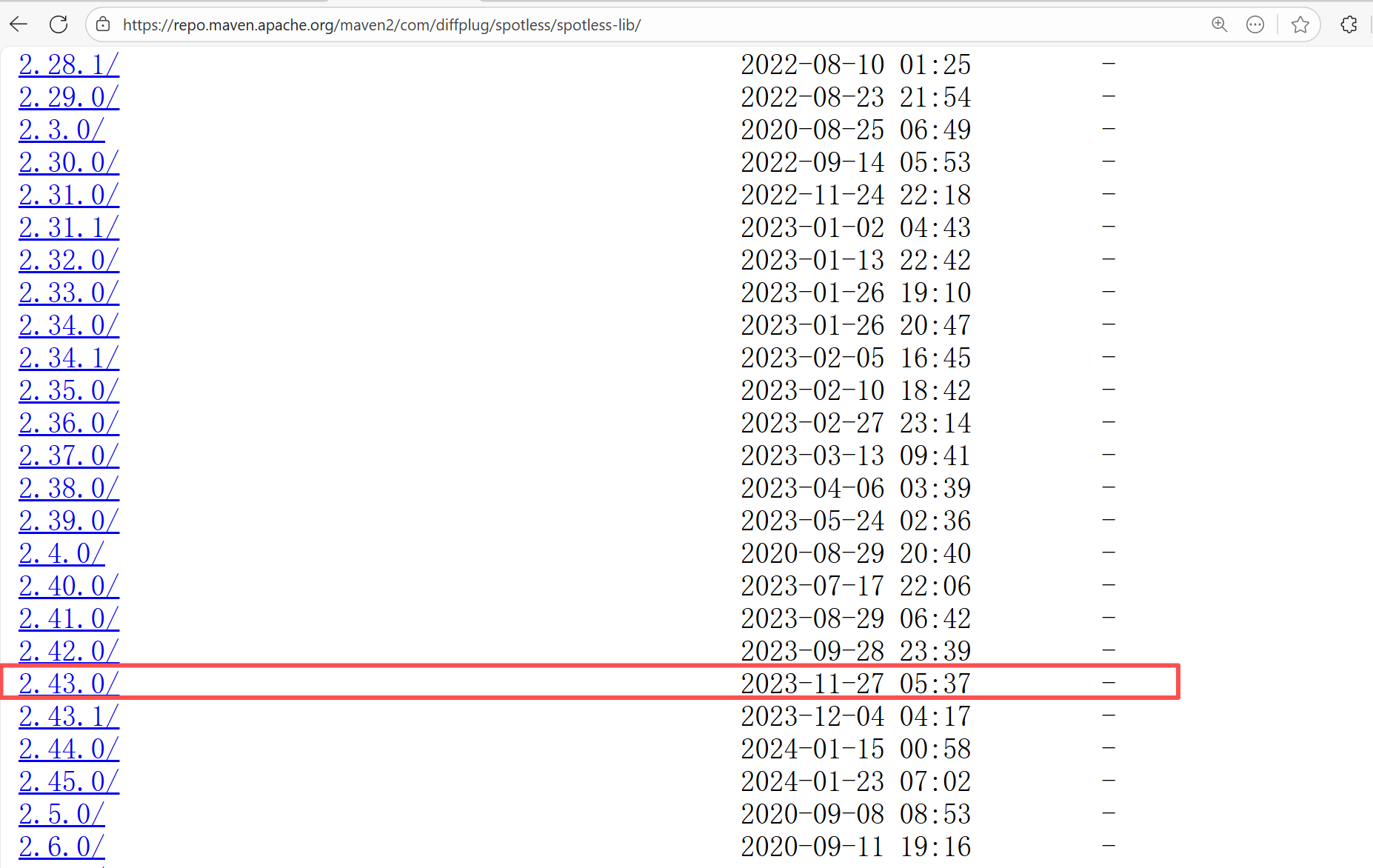

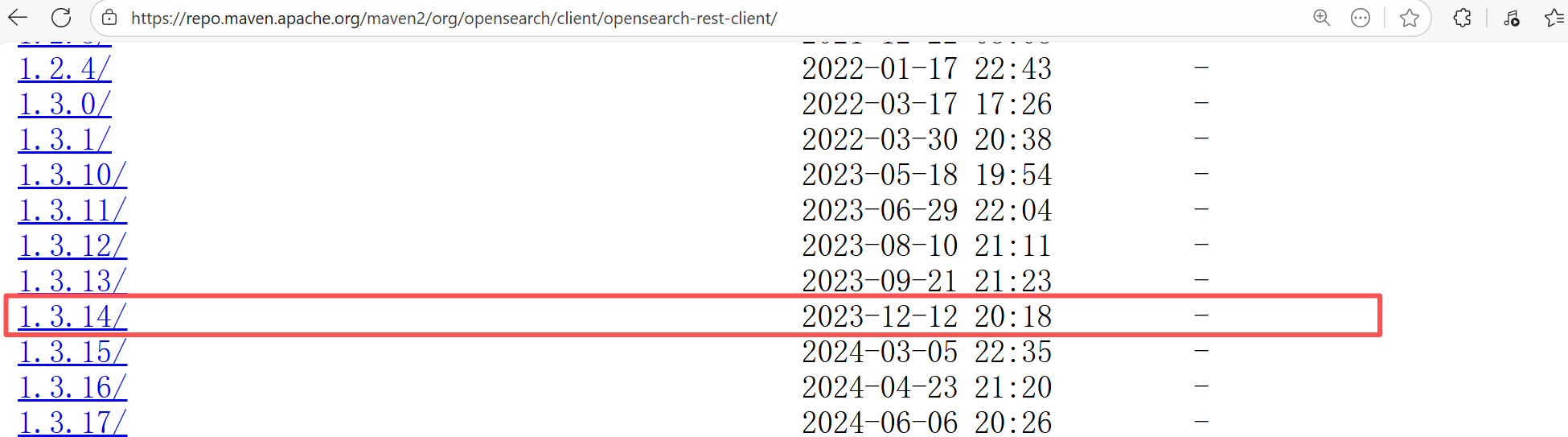

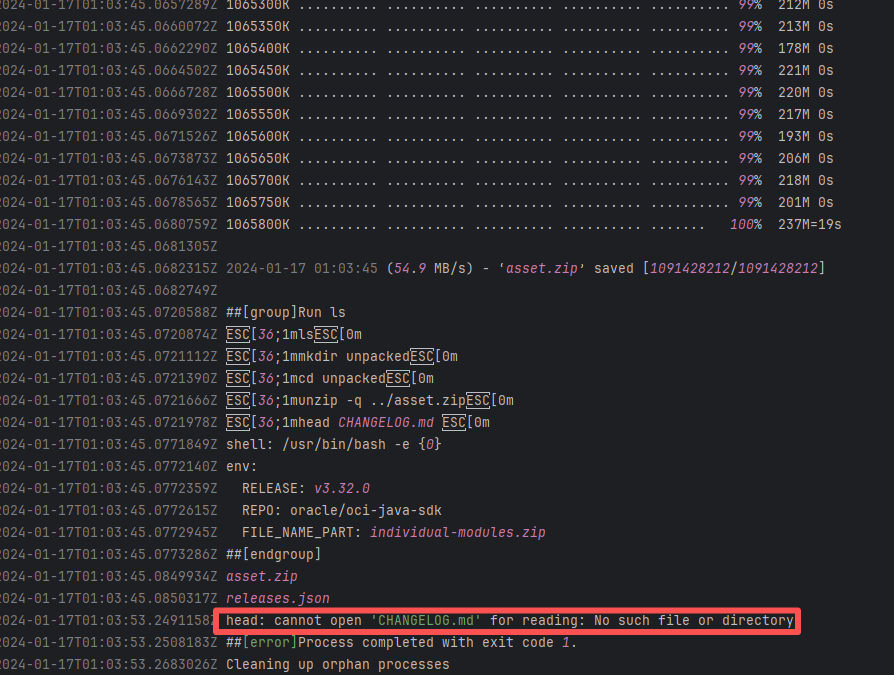

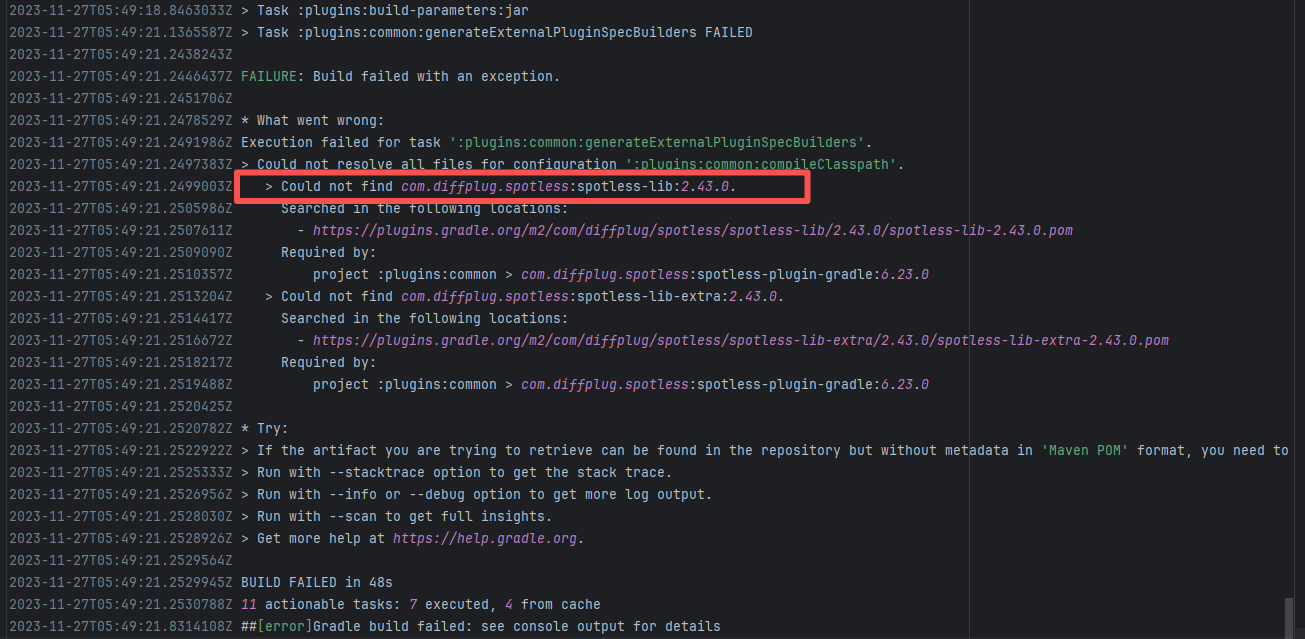

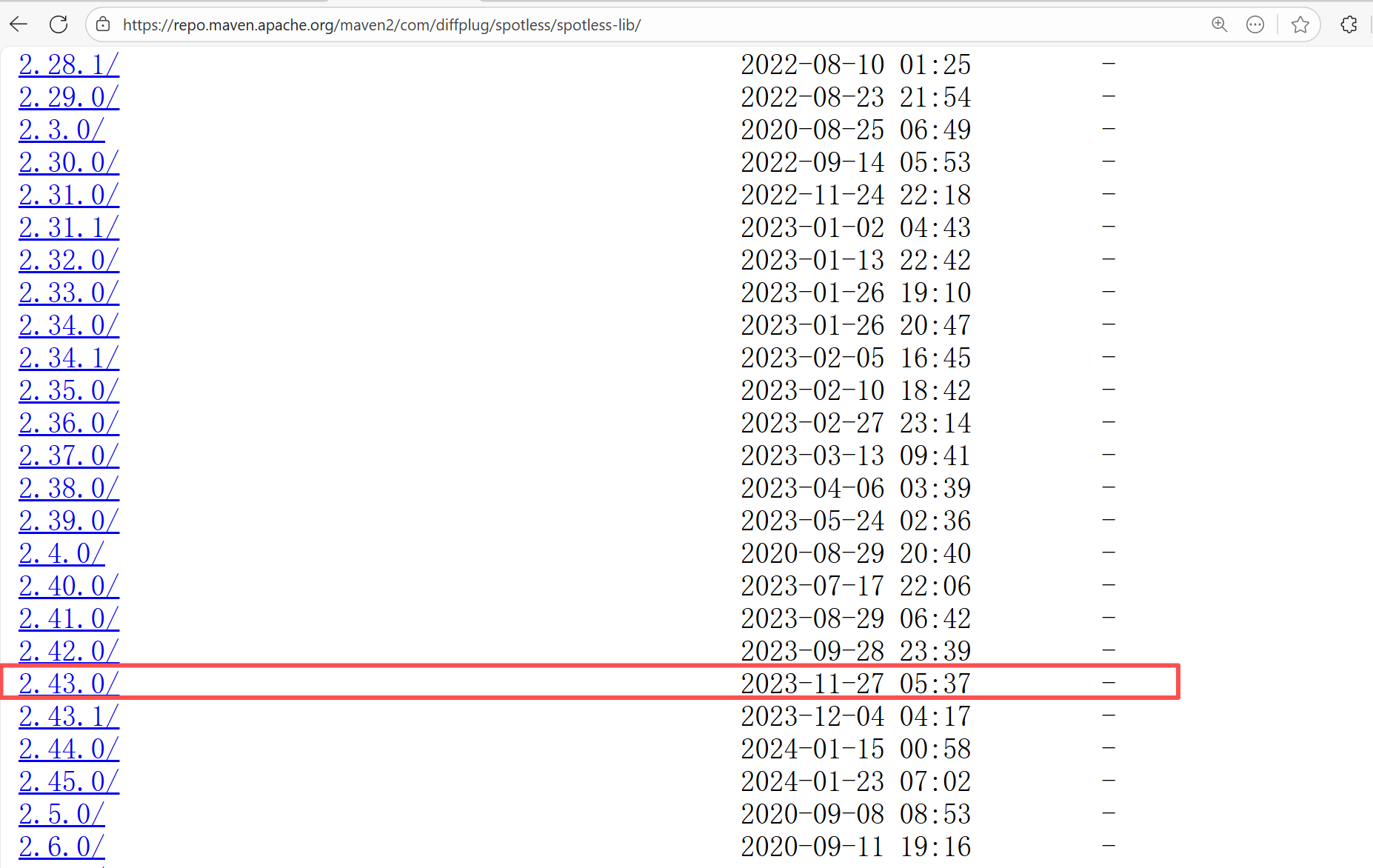

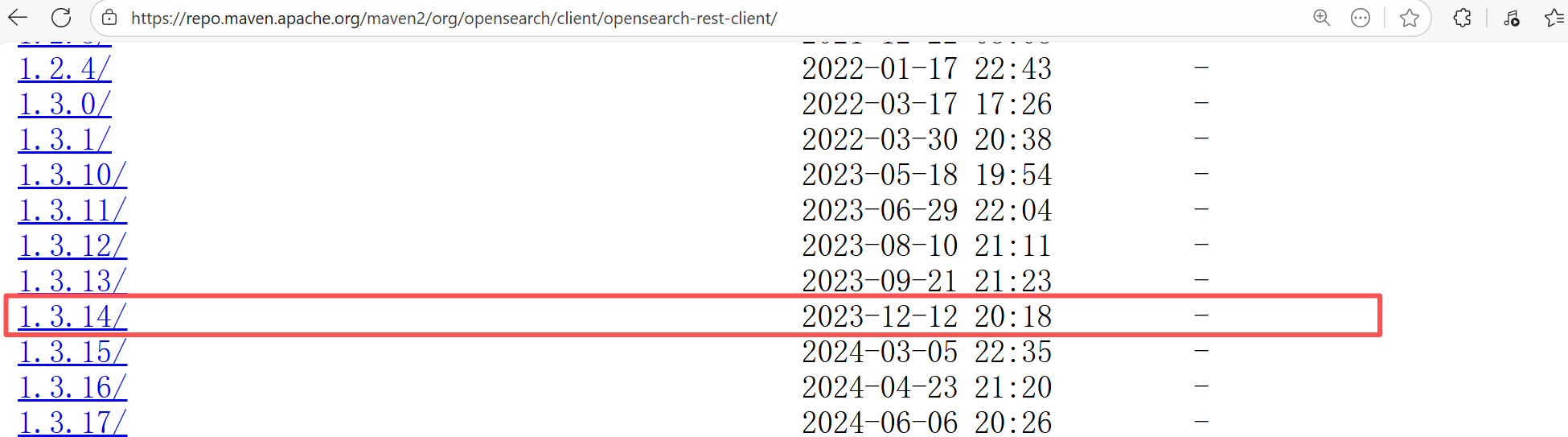

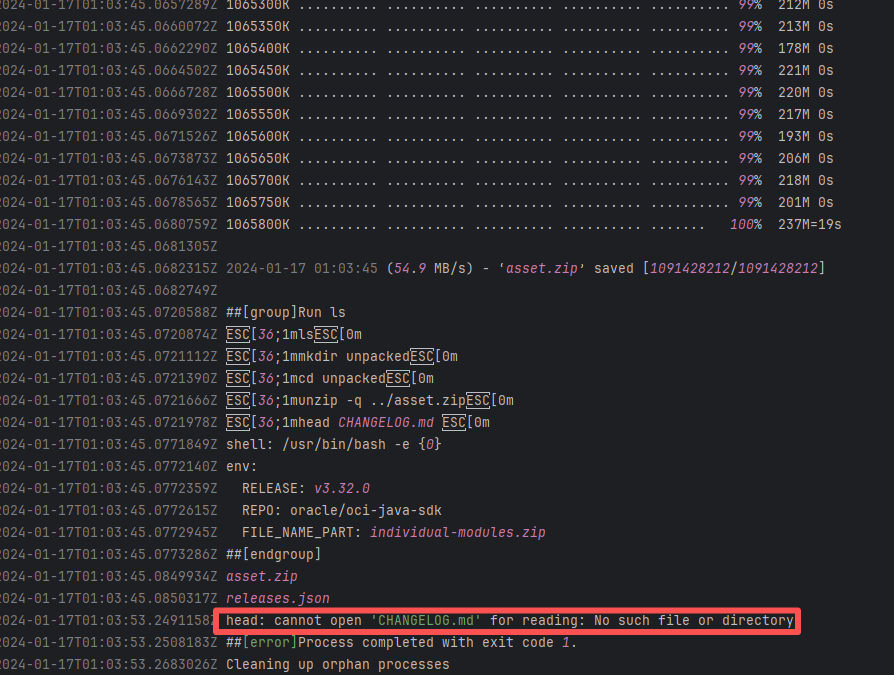

| opensearch-project/data-prepper |

19458129958 |

Missing Dependency |

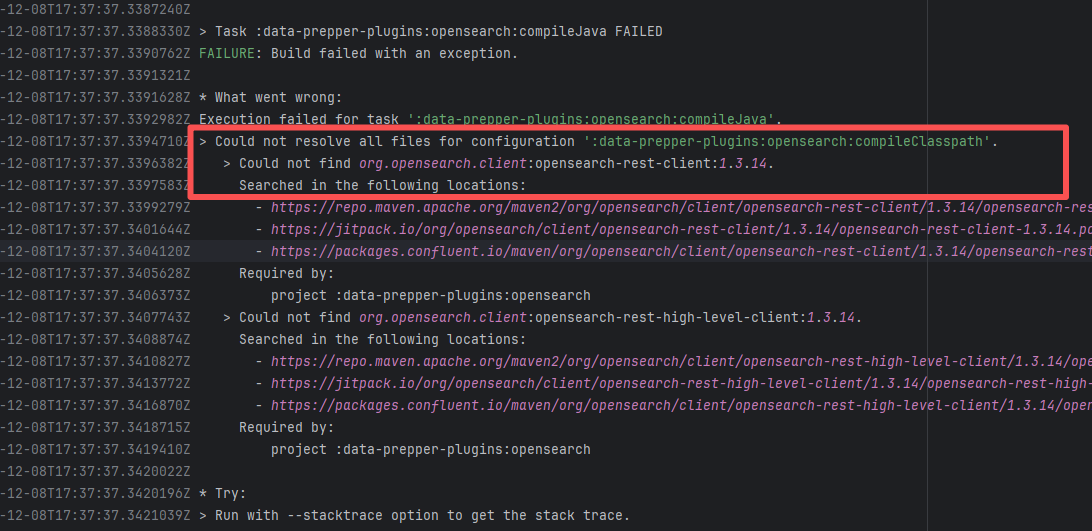

Error: The error indicates incorrect dependency coordinates, and the dependency does not exist in the specified Maven repository.

Root Cause: Checking the repository dependencies revealed that it had not been uploaded before the job execution (12-8). The dependency was uploaded on 12-12, and the dependency downloaded successfully after a rerun on 12-19. |

- Upload the correct dependency files to the maven repository

- Use dependency coordinates that already exist in the maven repository |

|

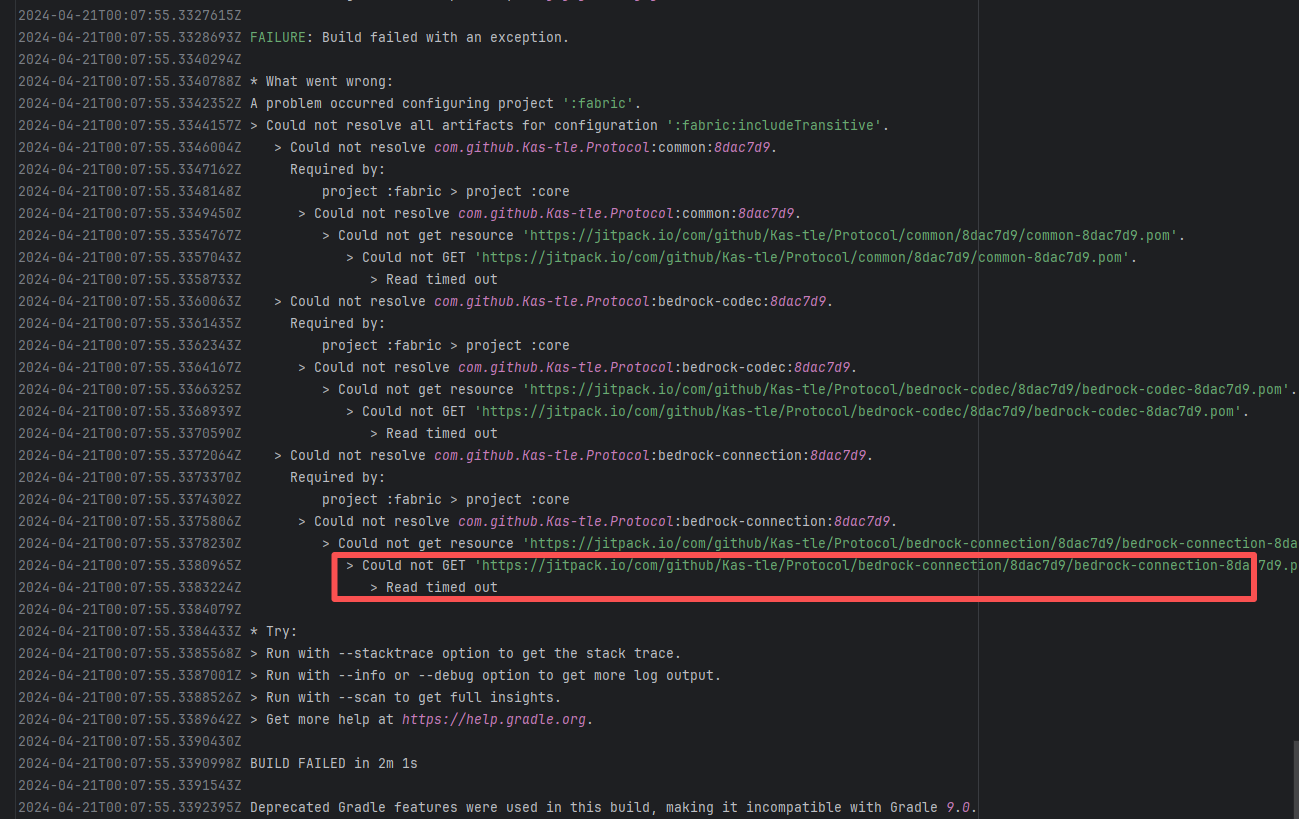

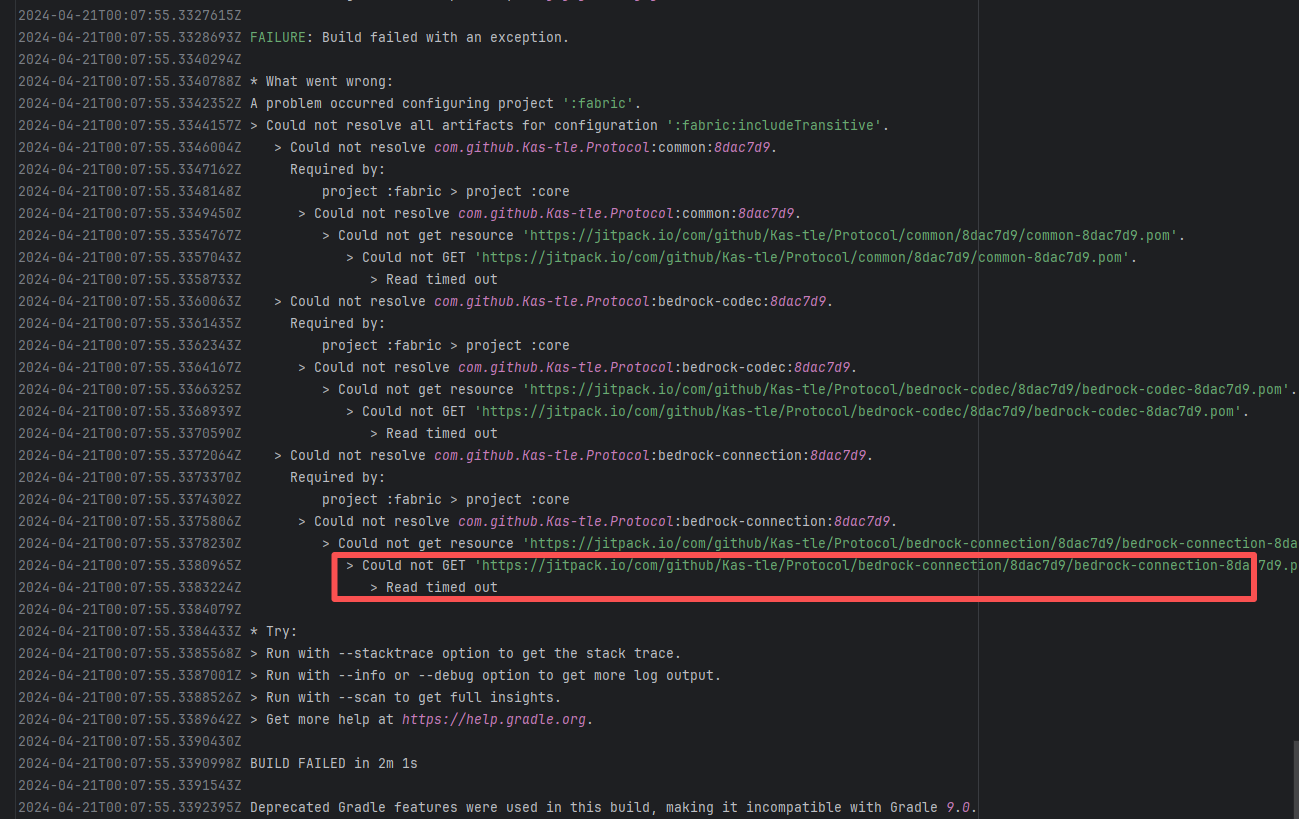

| geysermc/geyser |

24063124241 |

Network Issue |

Error: A timeout error occurred while fetching resources.

Root Cause: Transient connection timeout caused by network fluctuations, not a service provider server error. |

Short-term Fix: Rerun the job; it will automatically succeed after network conditions recover.

Long-term Defense:

1. Configure domestic mirror sources to replace official sources;

2. Add a retry mechanism (e.g., retry plugin);

3. Use CDN to accelerate dependency downloads. |

|

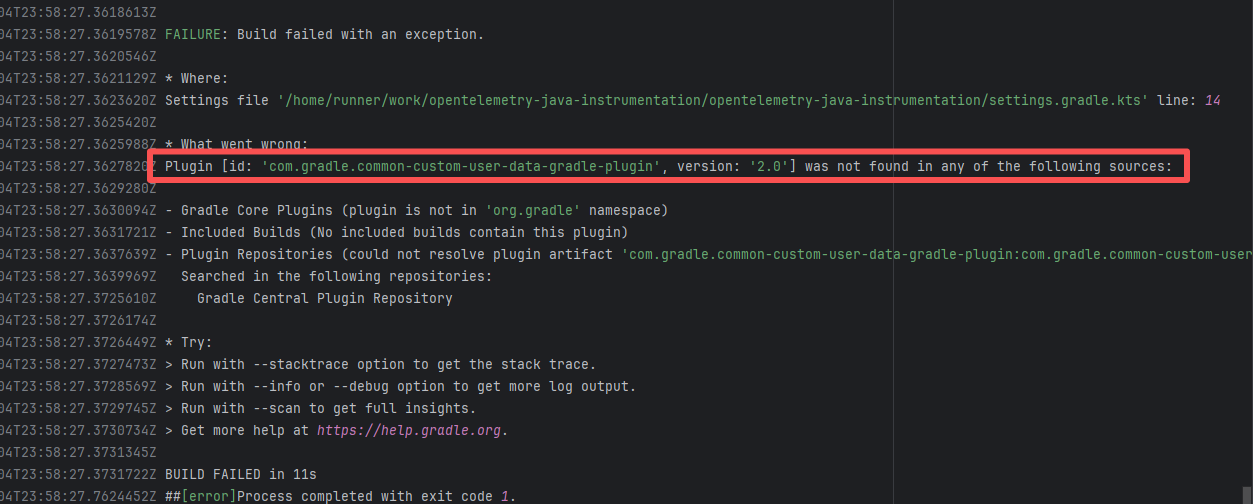

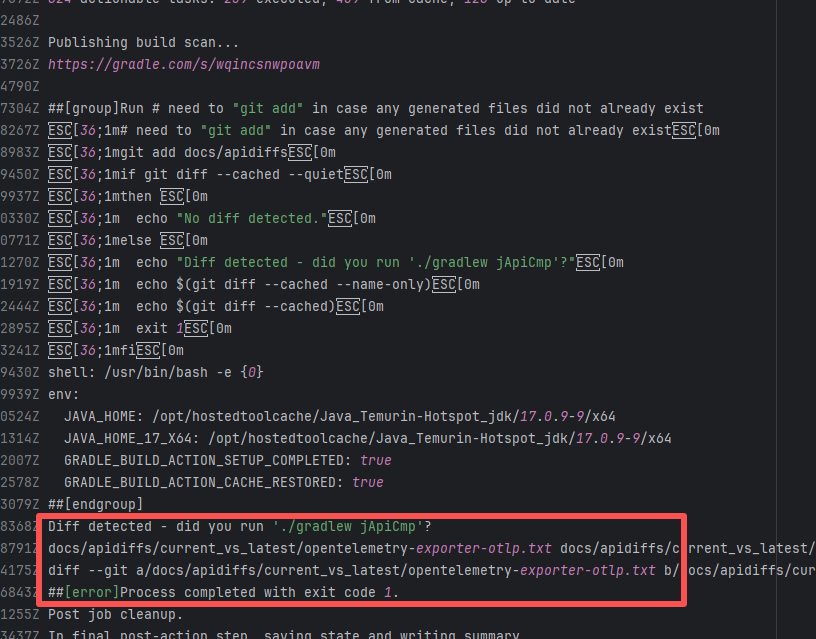

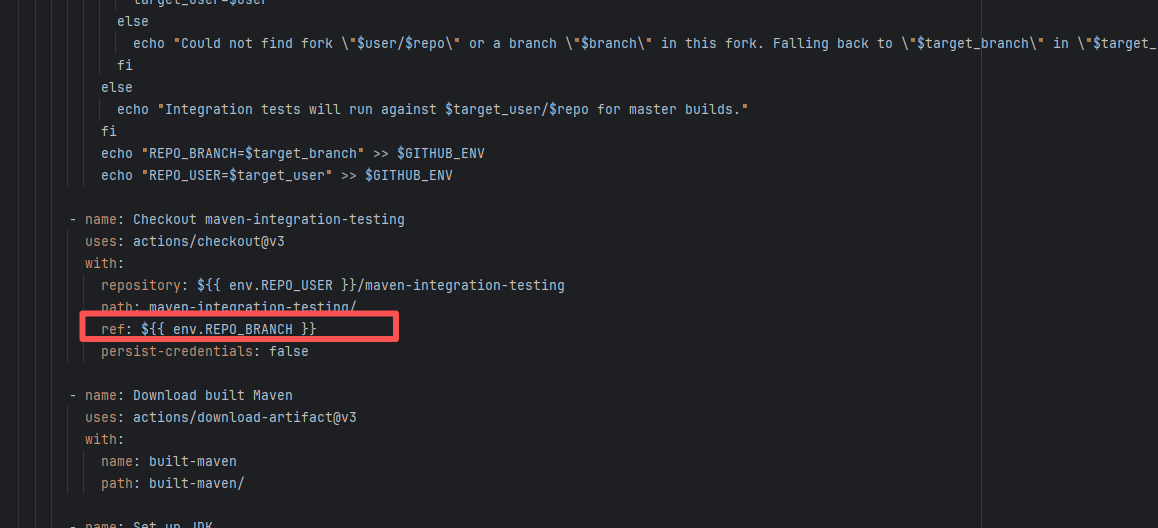

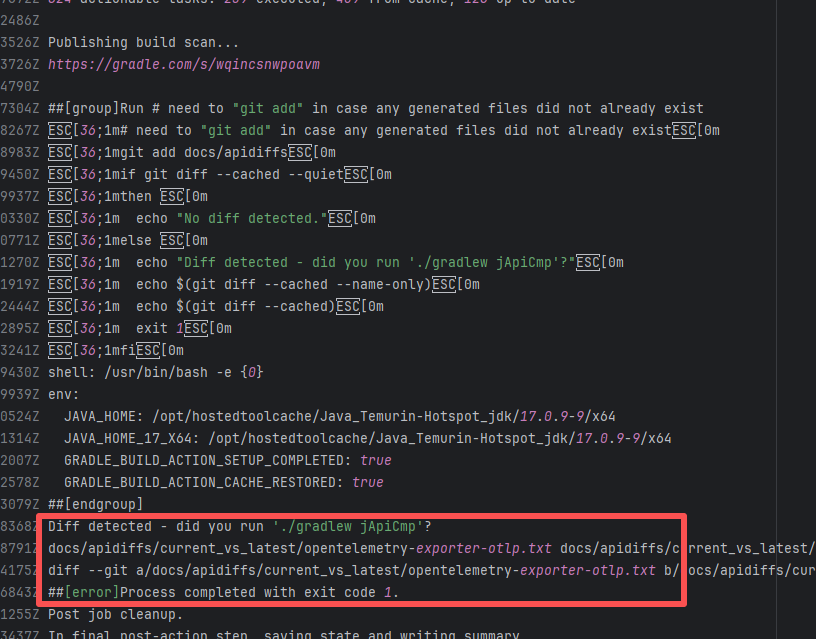

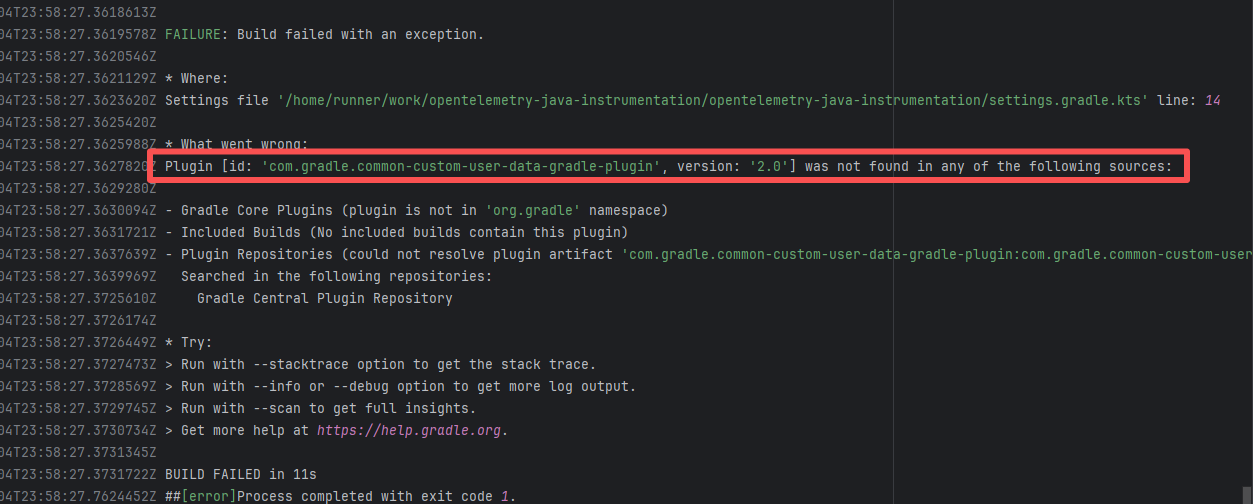

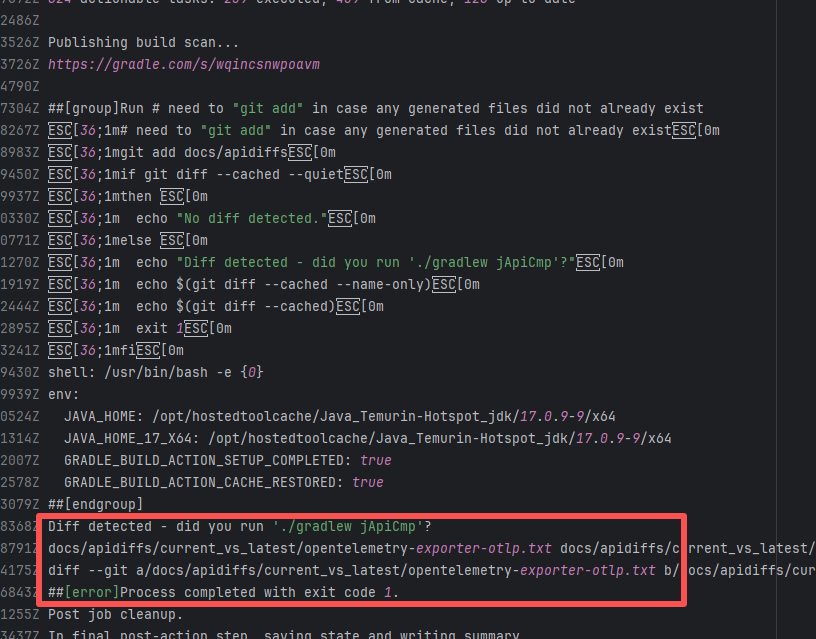

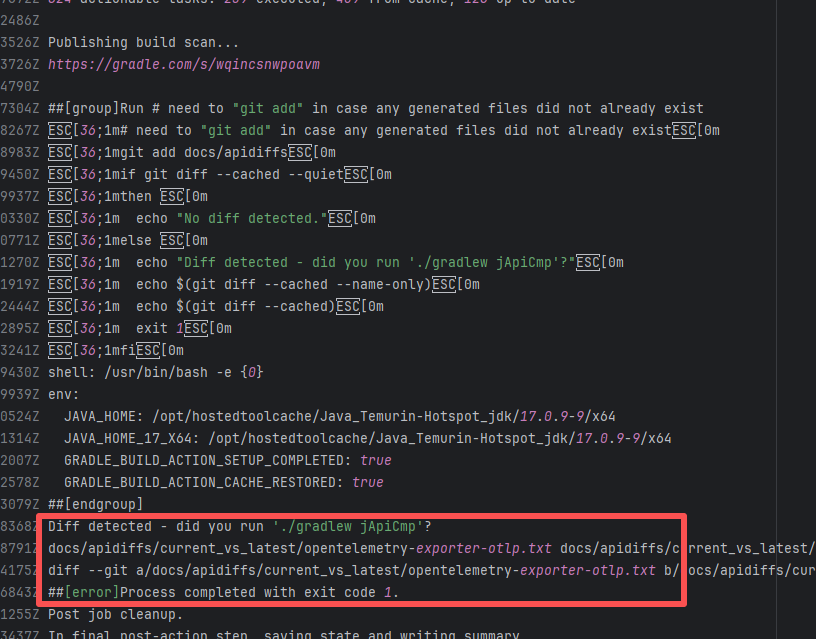

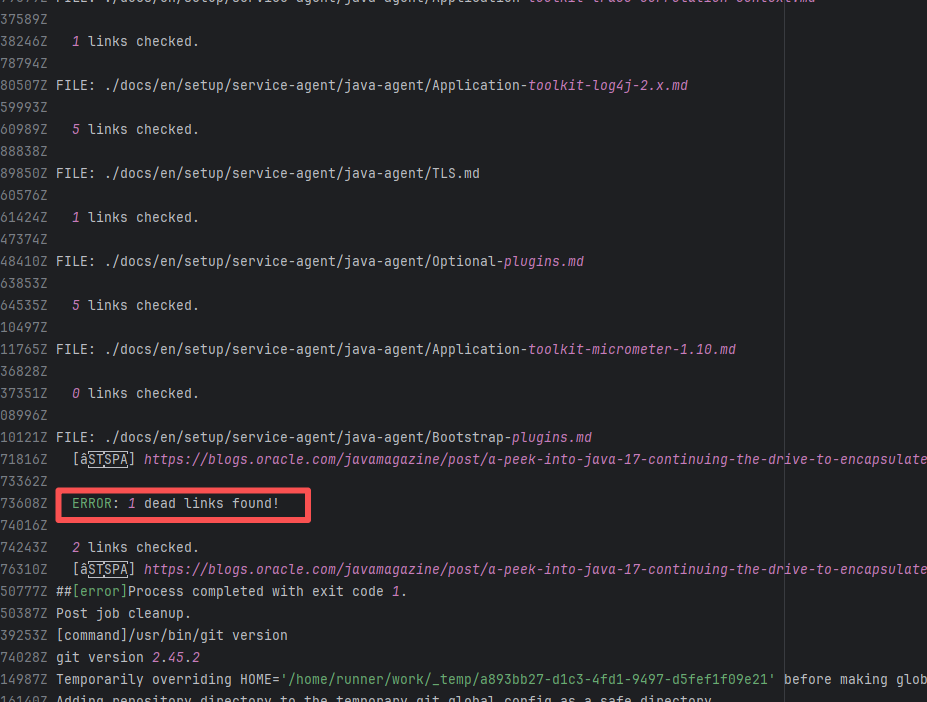

| open-telemetry/opentelemetry-java-instrumentation |

23466795502 |

Network Issue |

Error: The plugin com.gradle.common-custom-user-data-gradle-plugin:2.0 referenced on line 14 of settings.gradle.kts was not found, because the plugin could not be resolved in the plugin repository.

Root Cause: Succeeded after a rerun, which indicates that the error is a transient error caused by network fluctuations. |

Short-term Fix: Rerun the job; it will automatically succeed after network conditions recover.

Long-term Defense:

1. Configure domestic mirror sources to replace official sources;

2. Add a retry mechanism (e.g., retry plugin);

3. Use CDN to accelerate dependency downloads. |

|

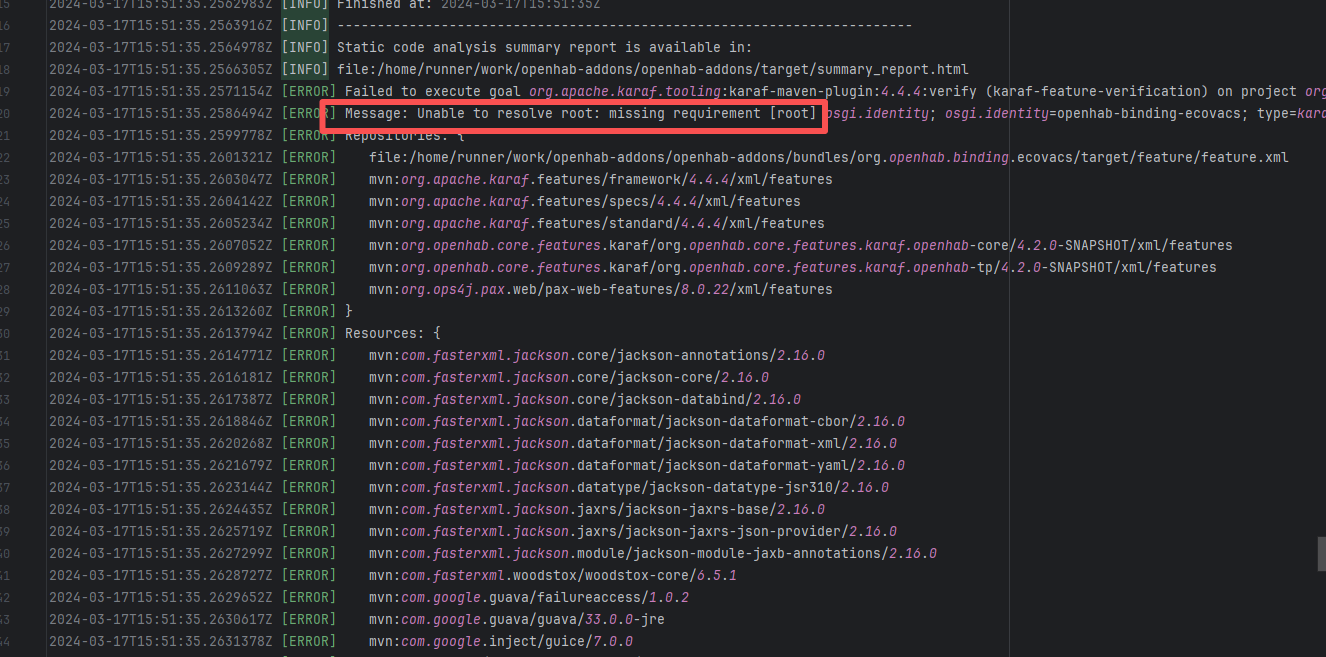

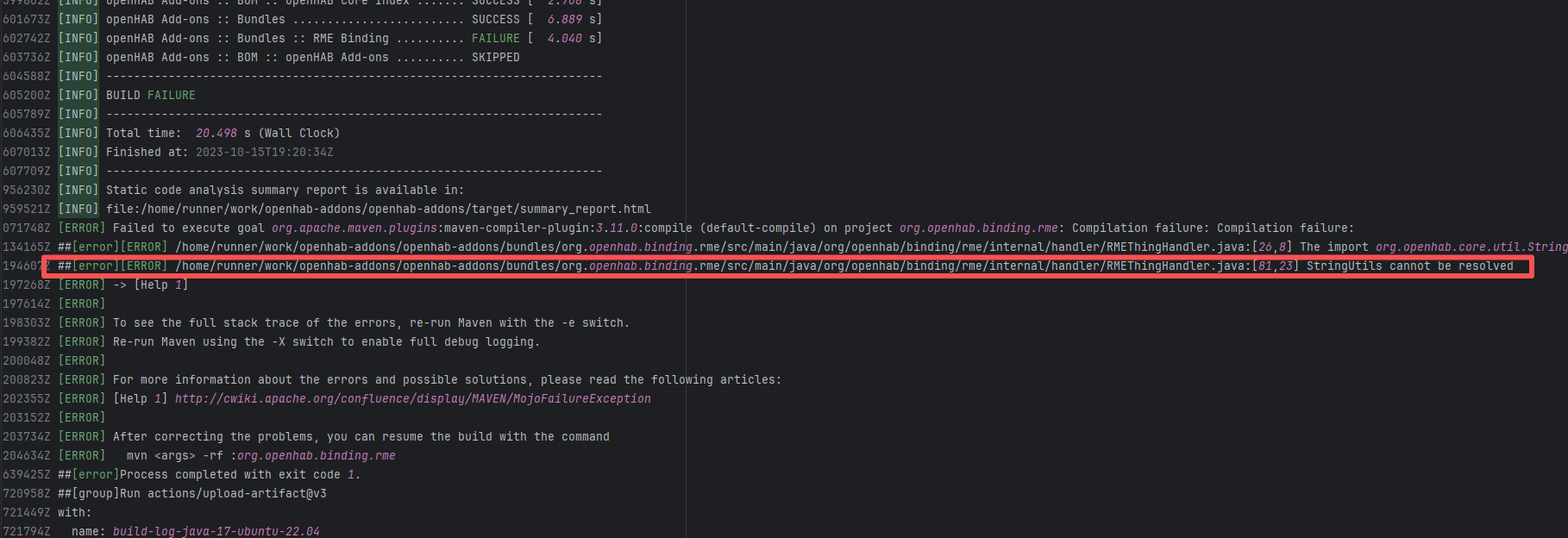

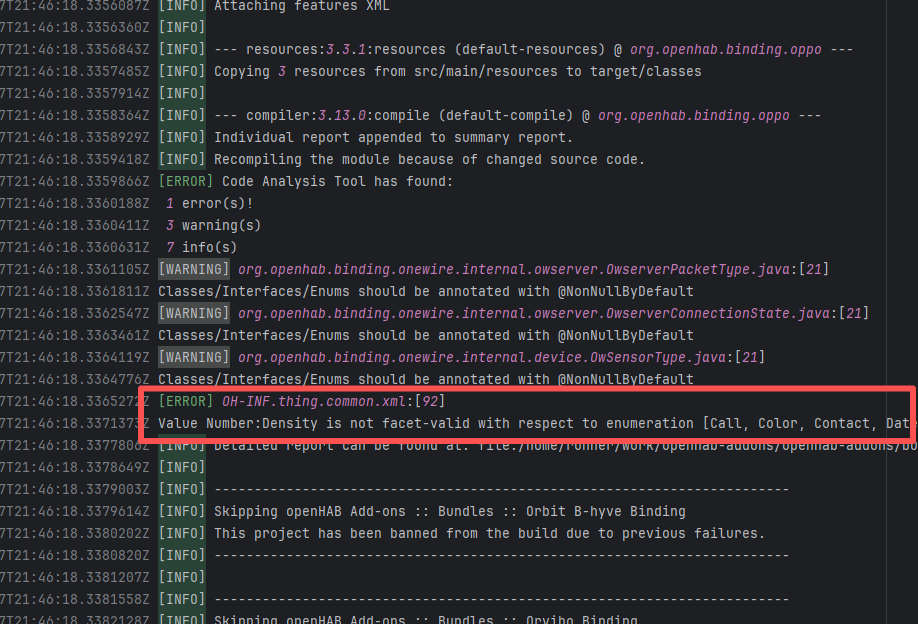

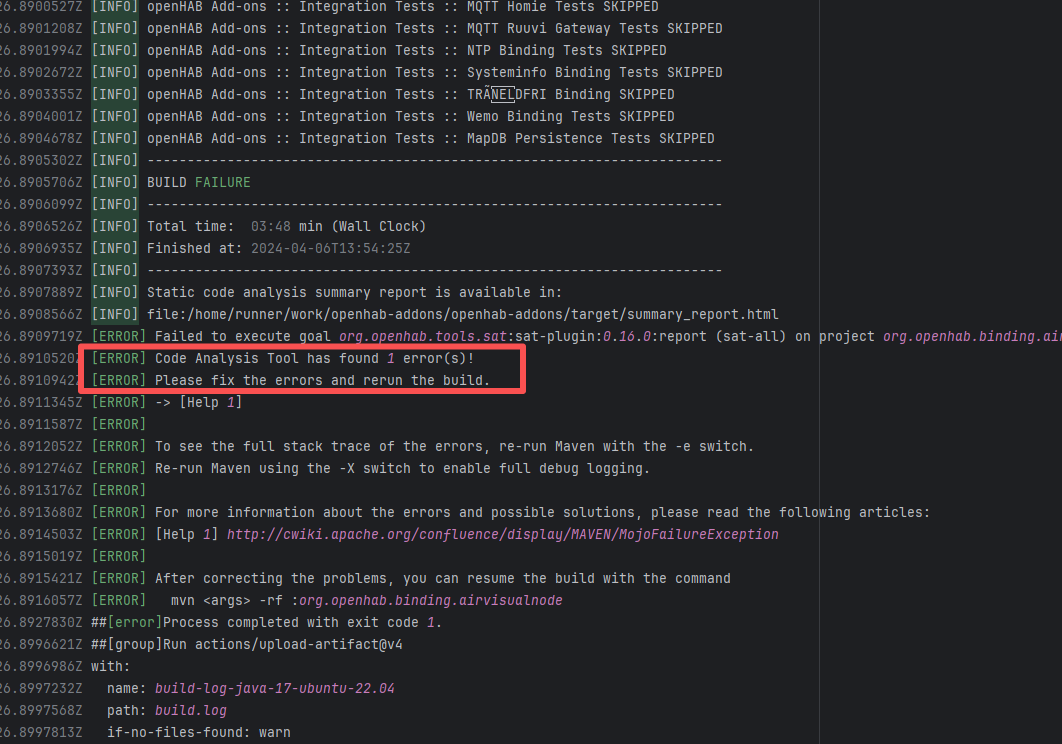

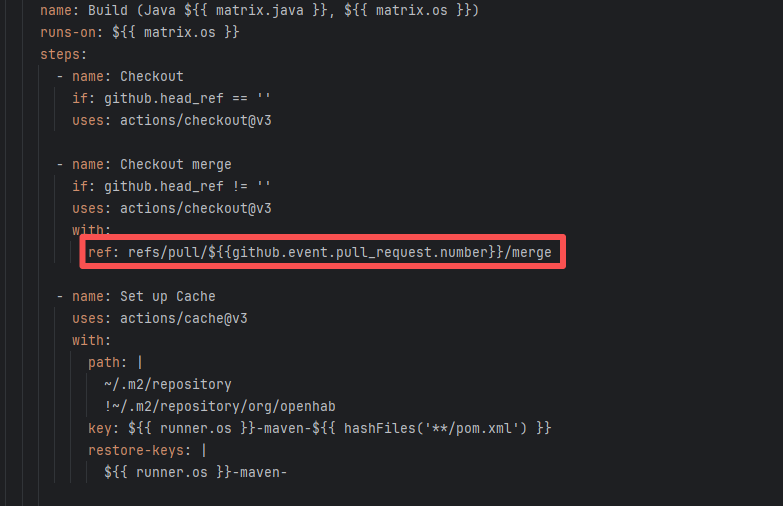

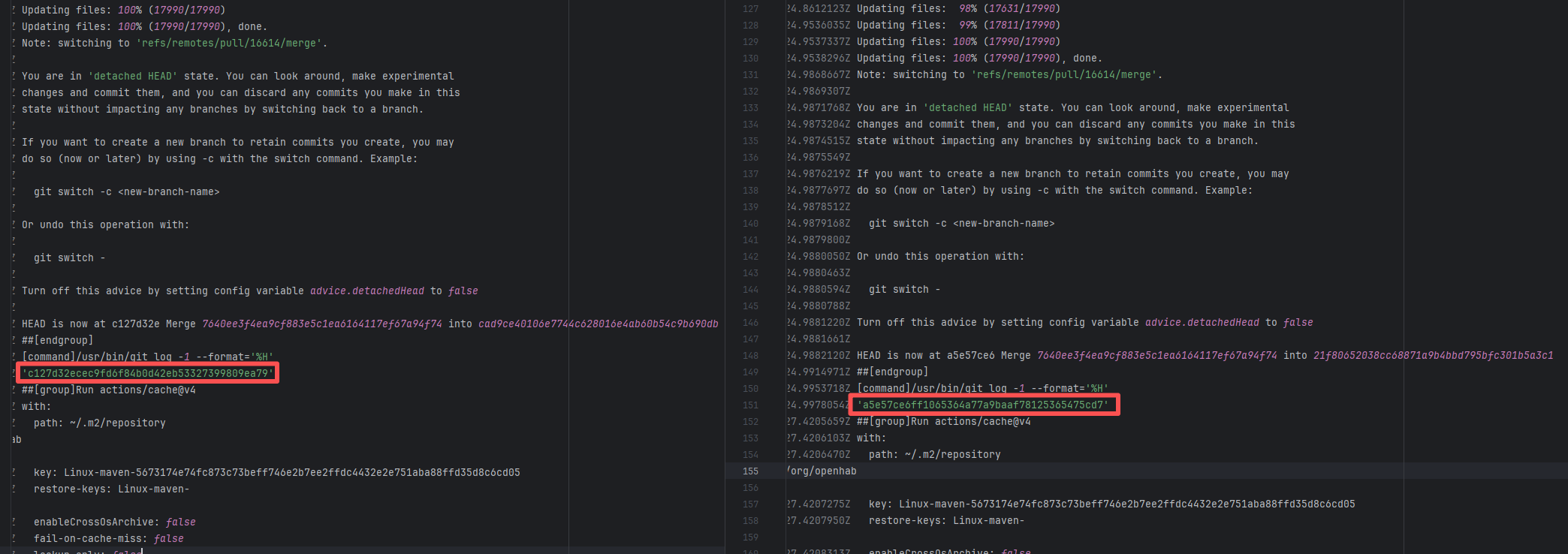

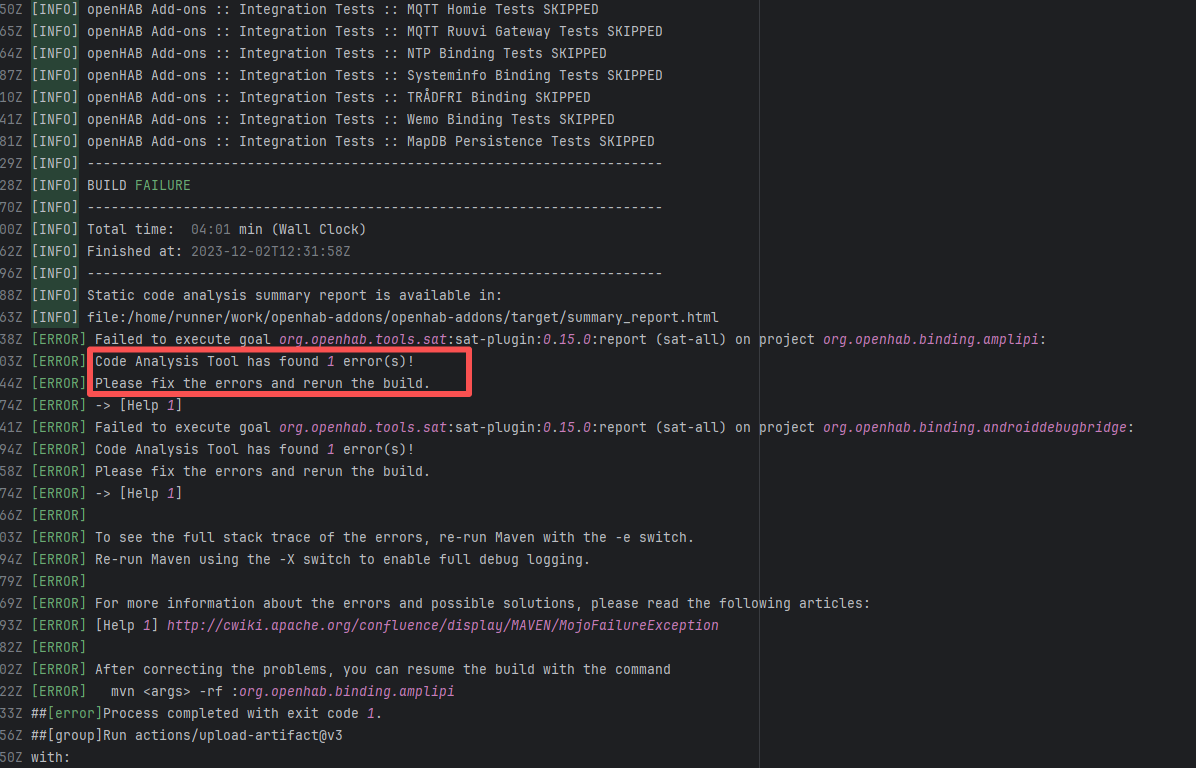

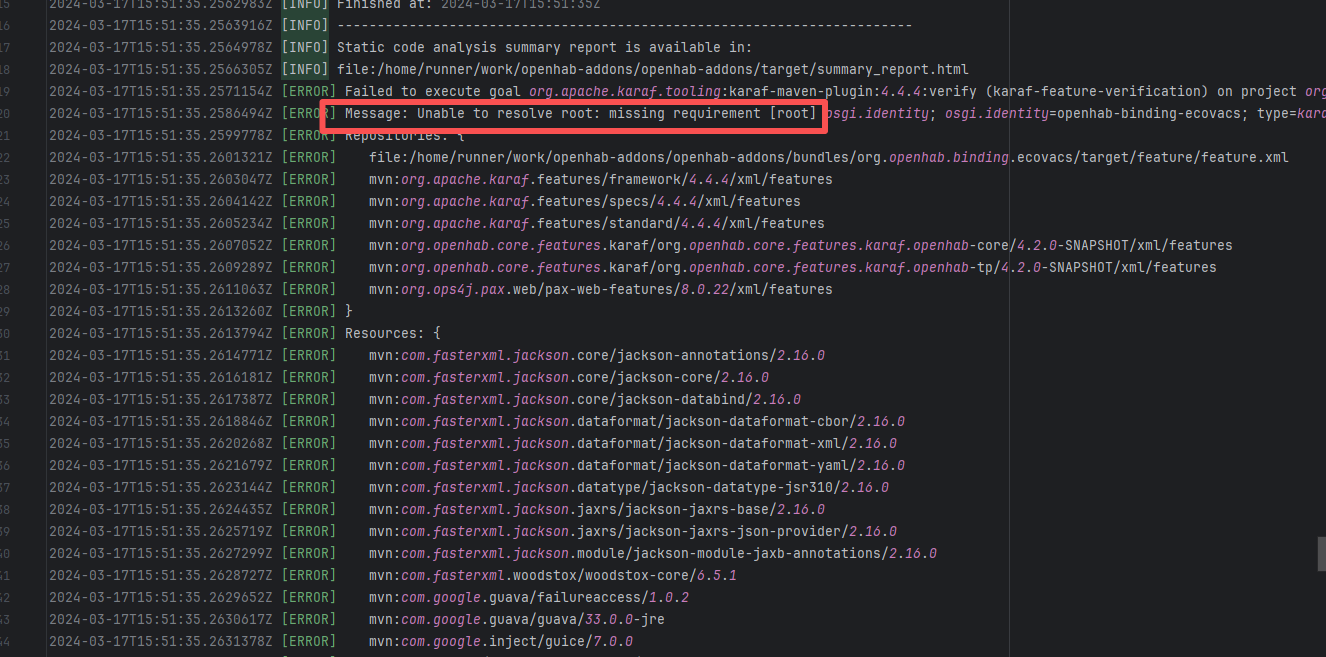

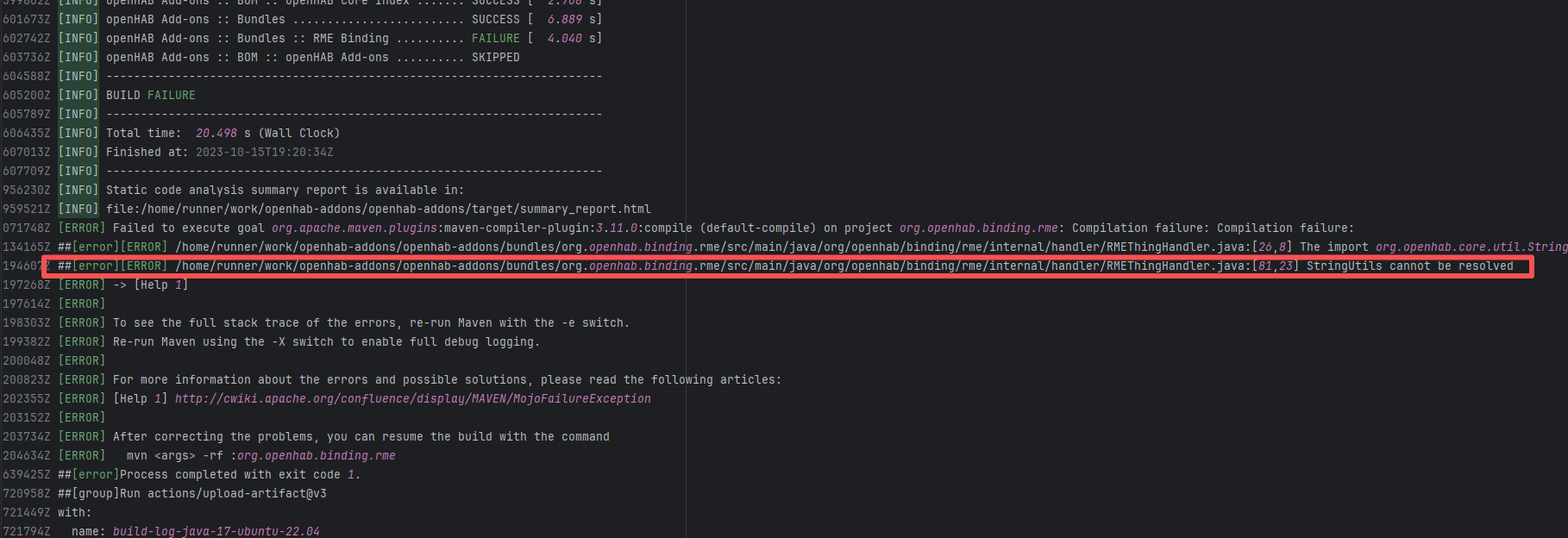

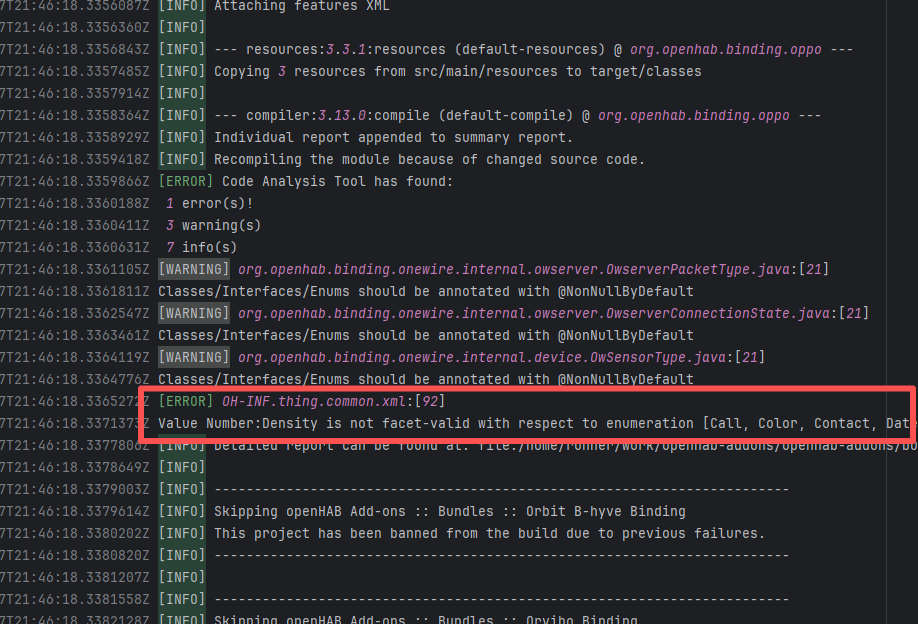

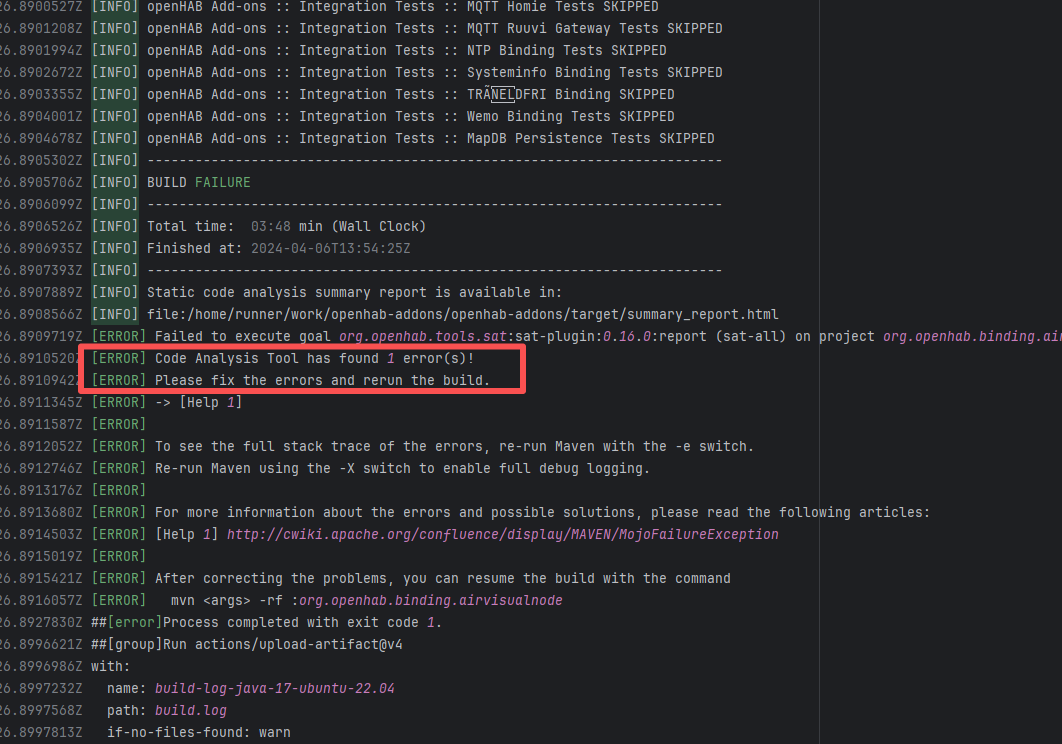

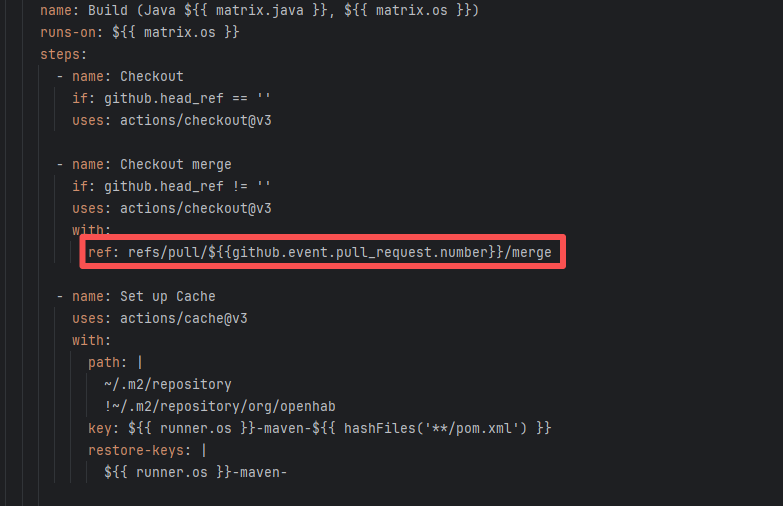

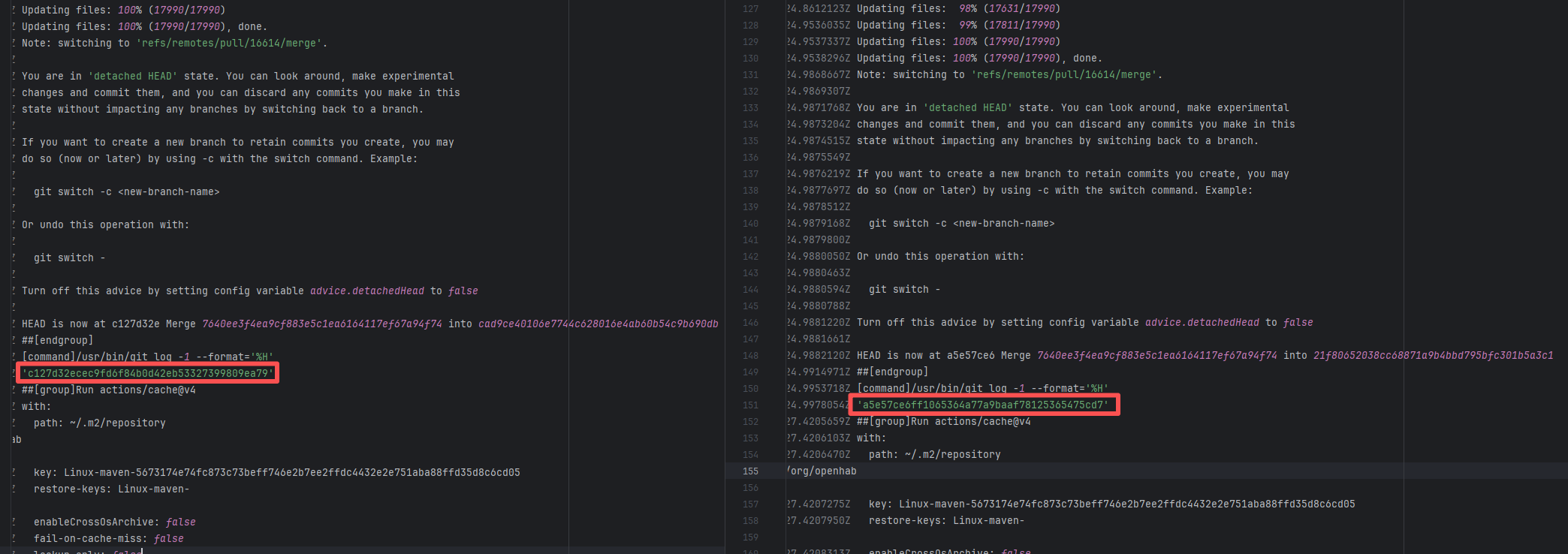

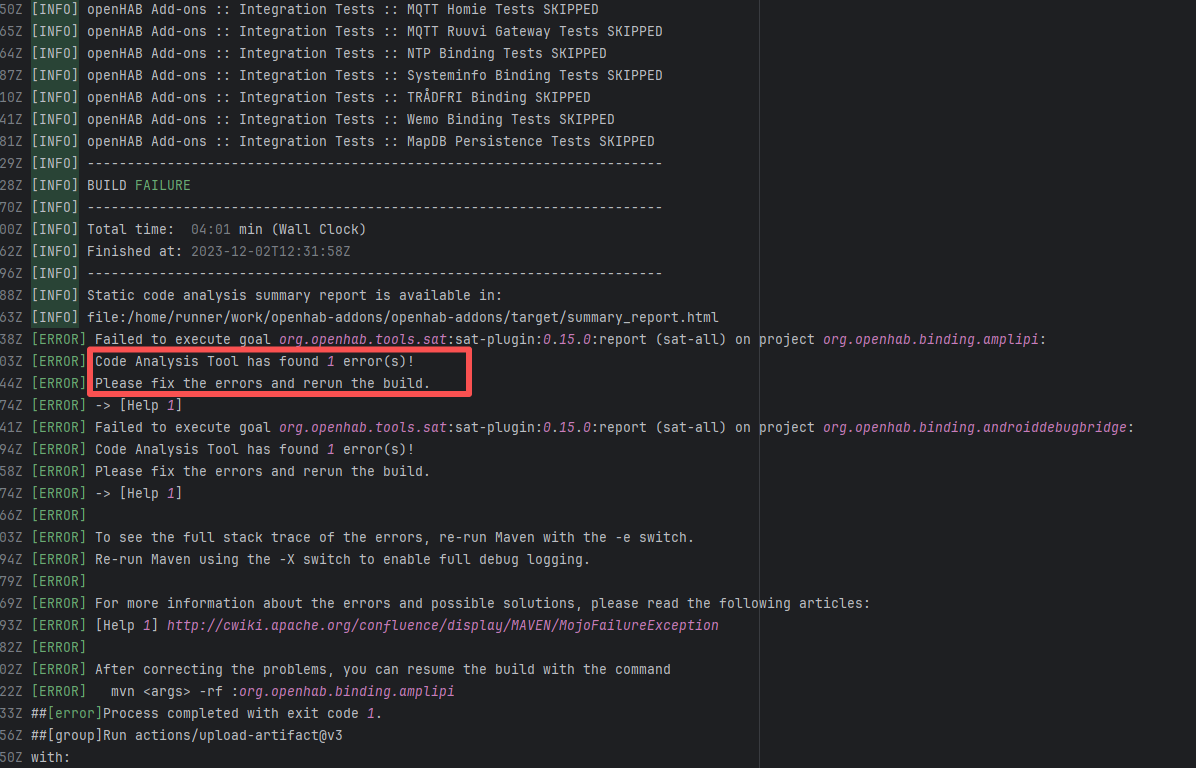

| openhab/openhab-addons |

22756221782 |

Network Issue |

Error: The error occurred when building the OpenHAB binding org.openhab.binding.ecovacs using the Karaf Maven plugin. The issue occurred during feature resolution, specifically due to missing dependencies or the inability to resolve dependencies, causing the build to fail.

Root Cause: Succeeded after a rerun, which indicates that the error is a transient error caused by network fluctuations. |

Short-term Fix: Rerun the job; it will automatically succeed after network conditions recover.

Long-term Defense:

1. Configure domestic mirror sources to replace official sources;

2. Add a retry mechanism (e.g., retry plugin);

3. Use CDN to accelerate dependency downloads. |

|

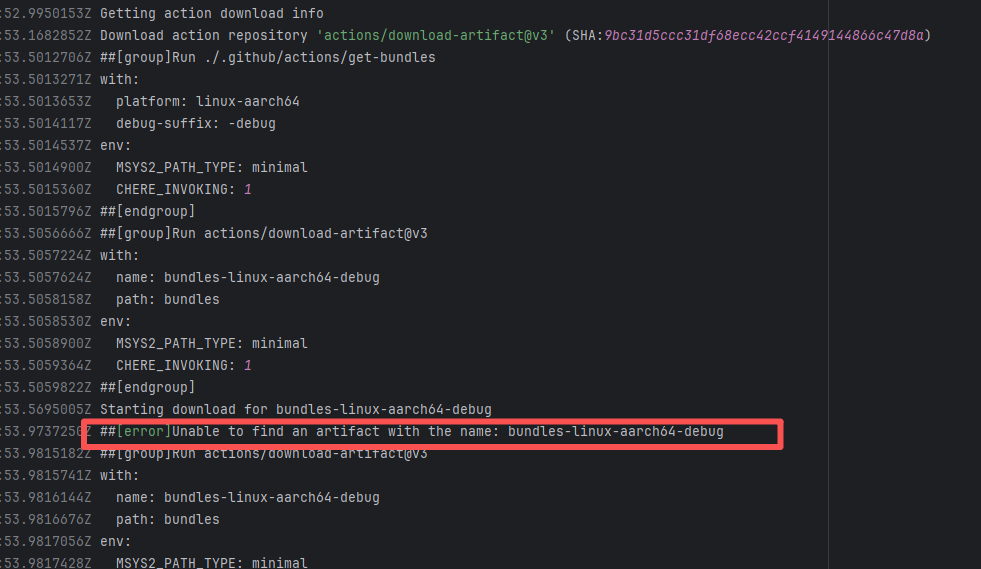

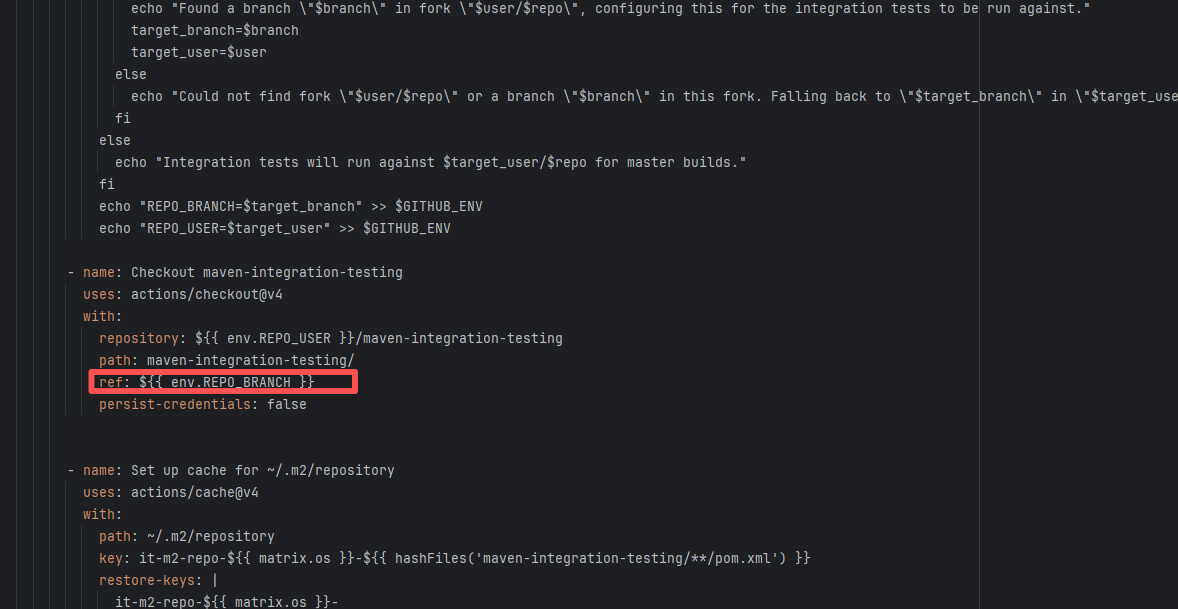

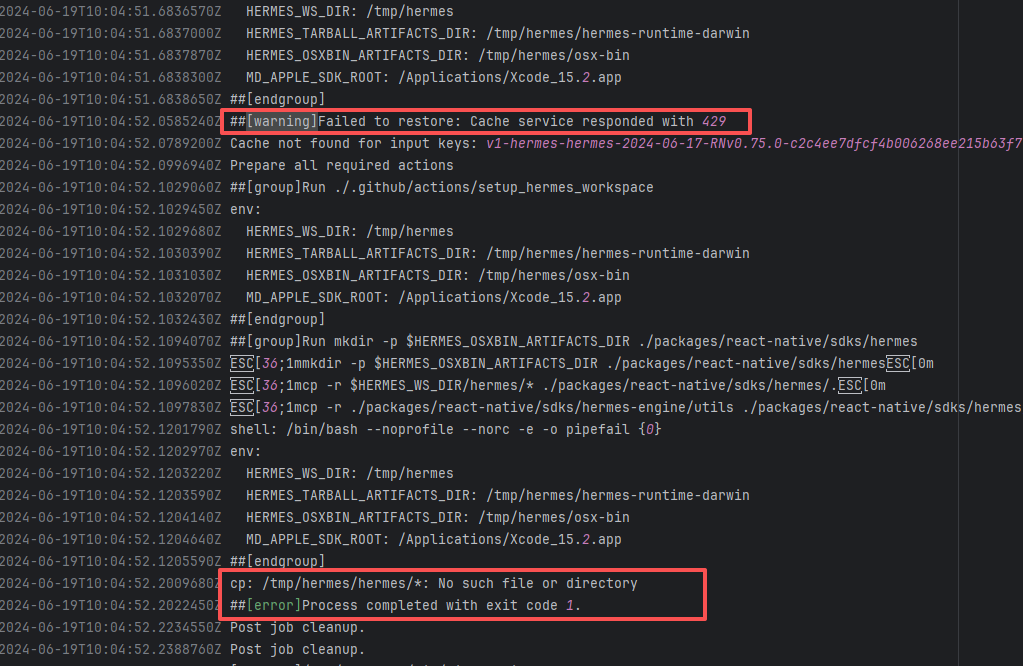

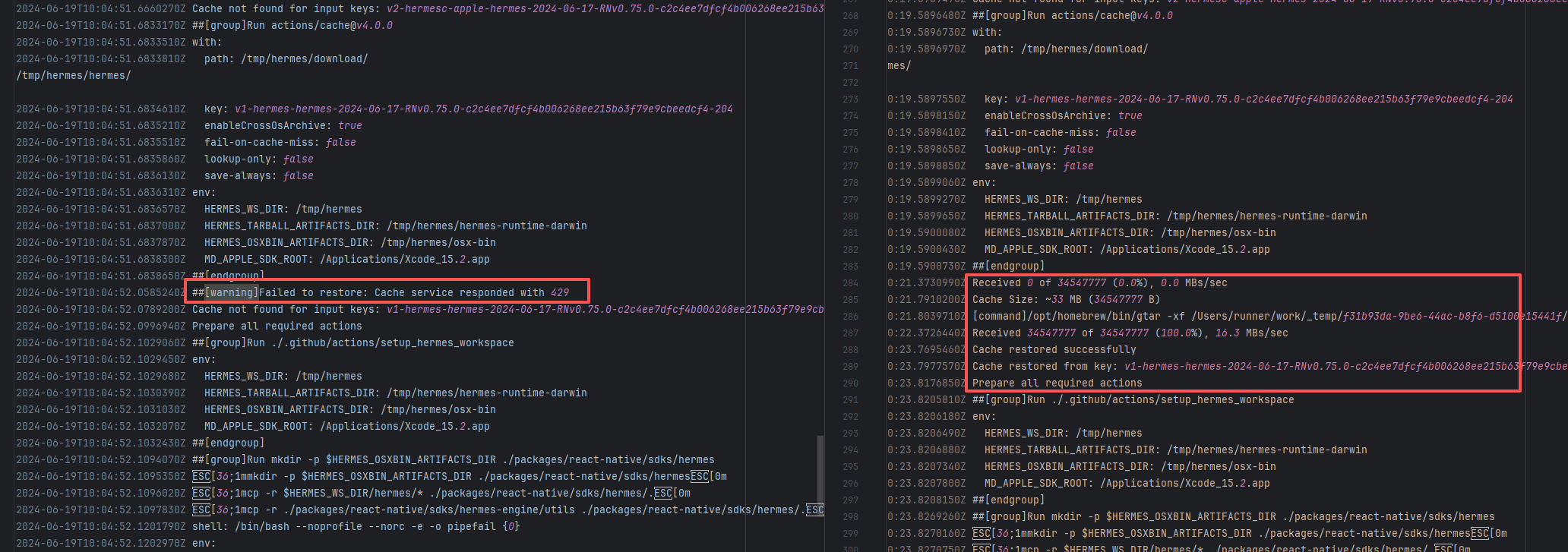

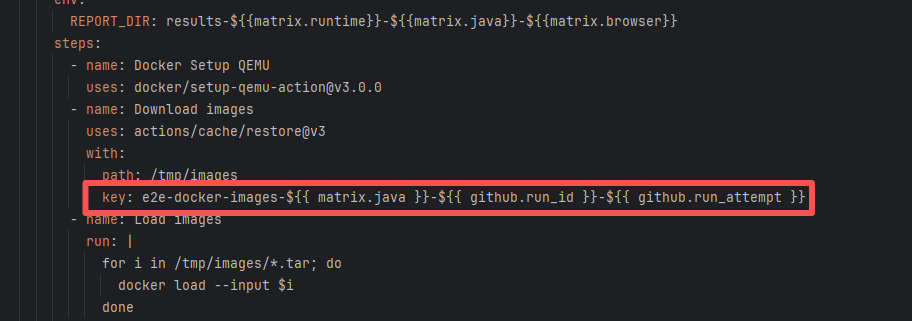

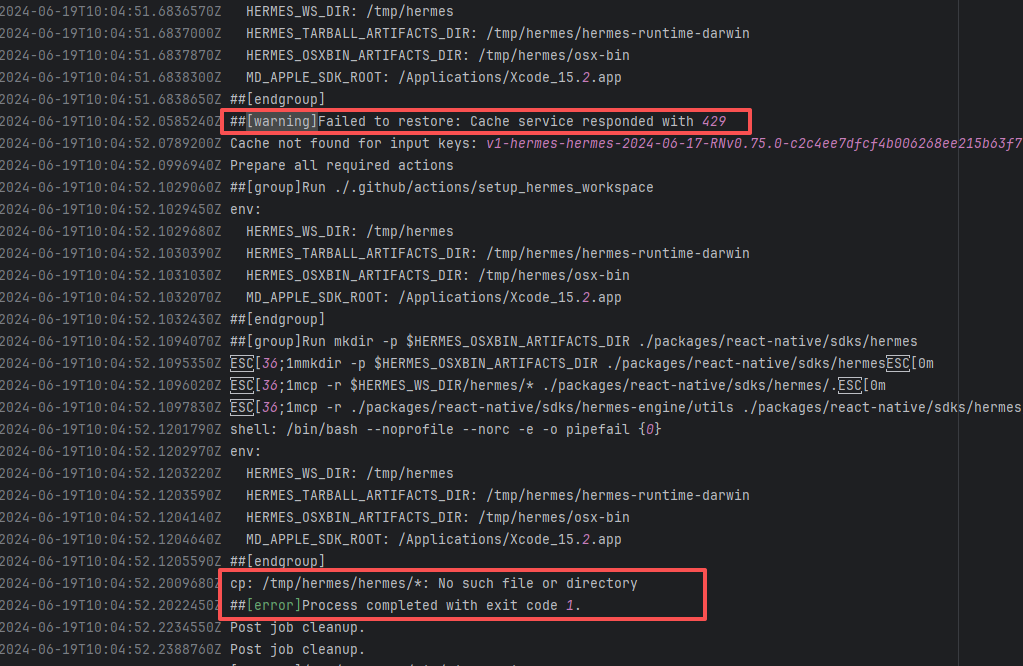

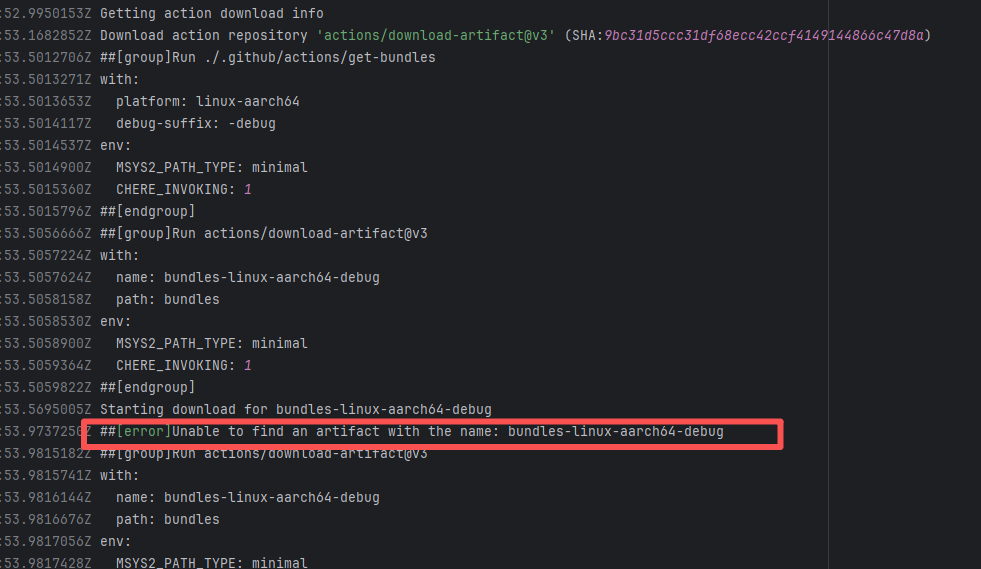

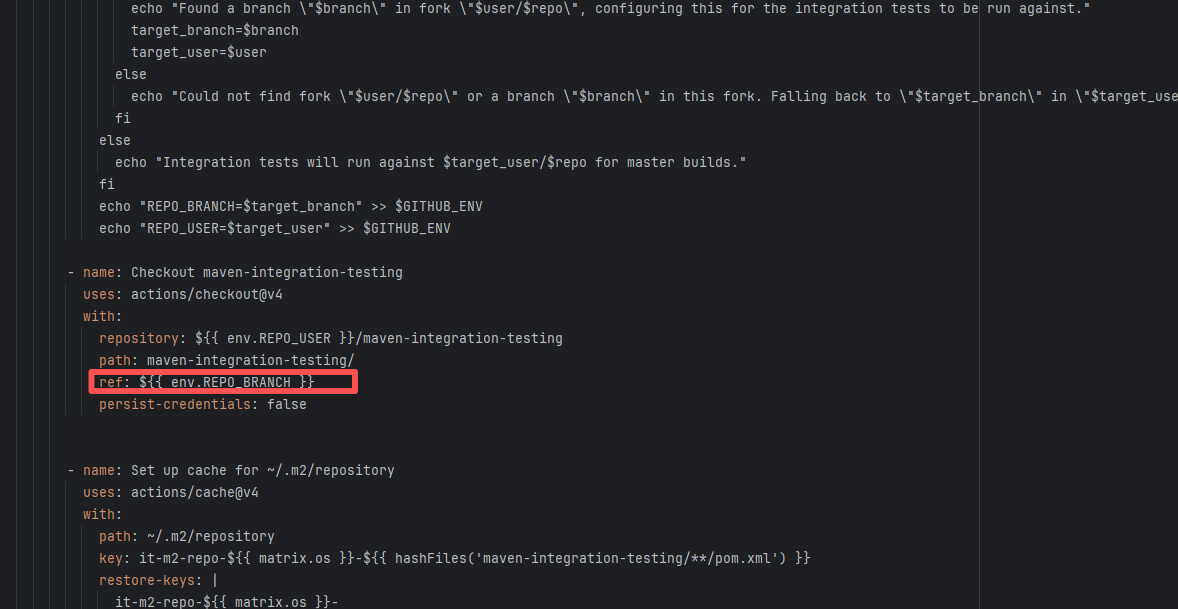

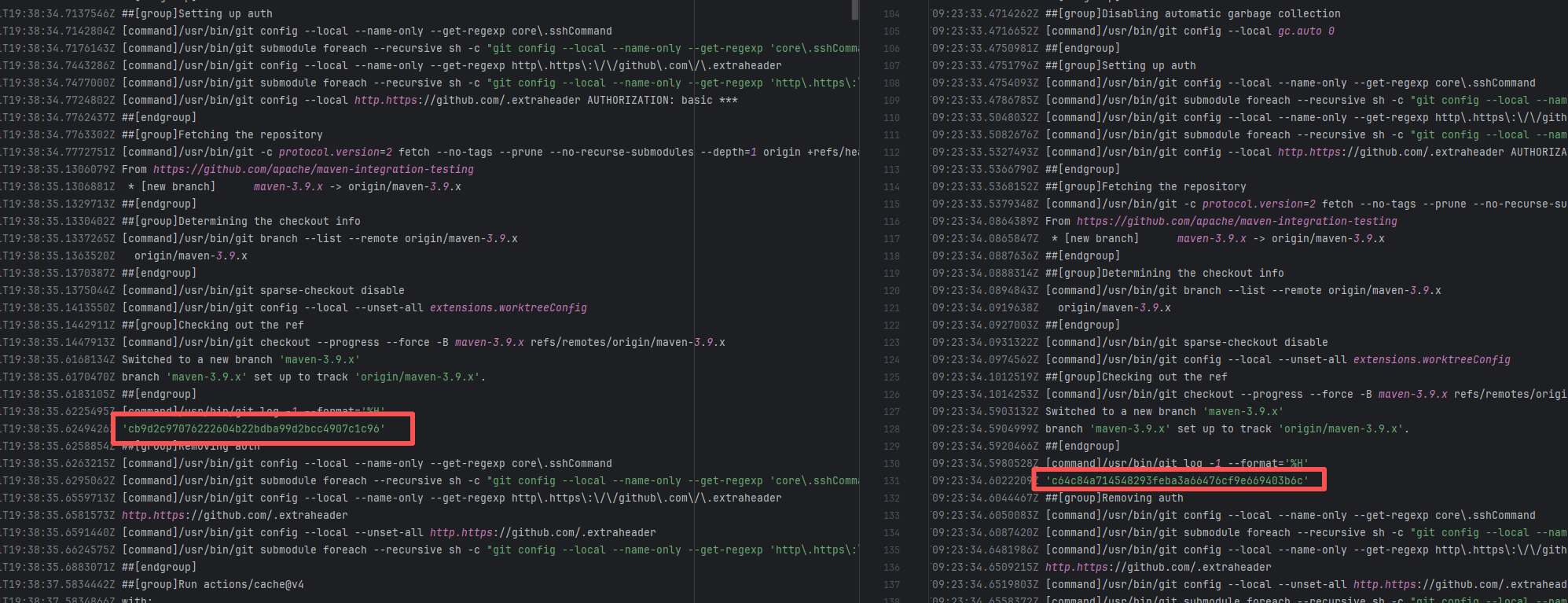

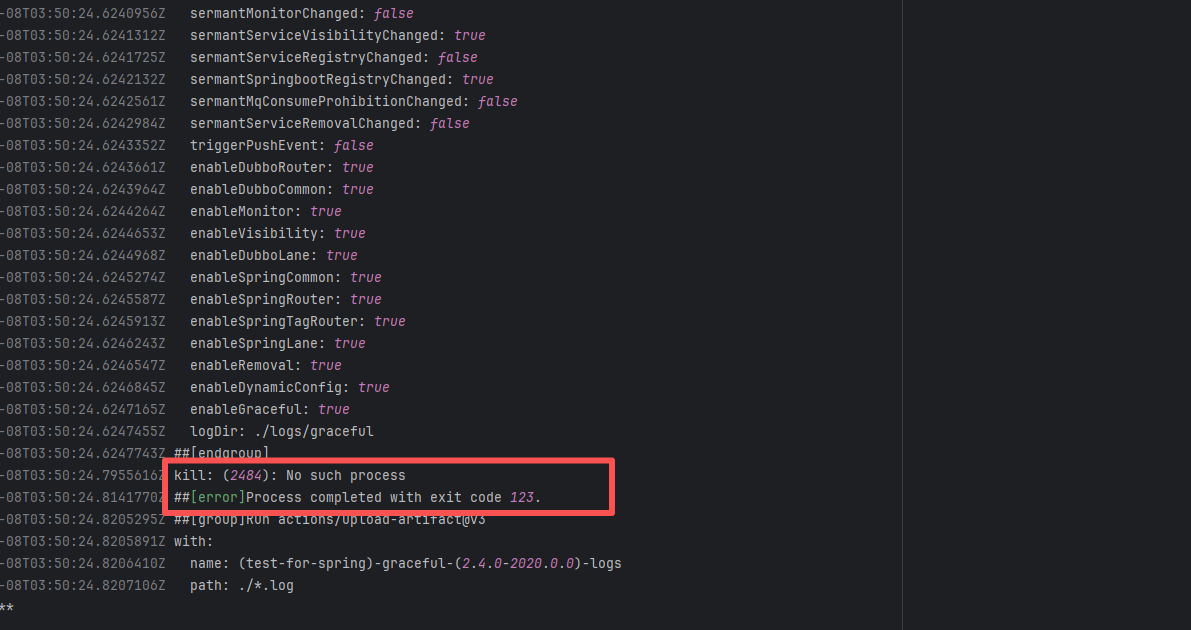

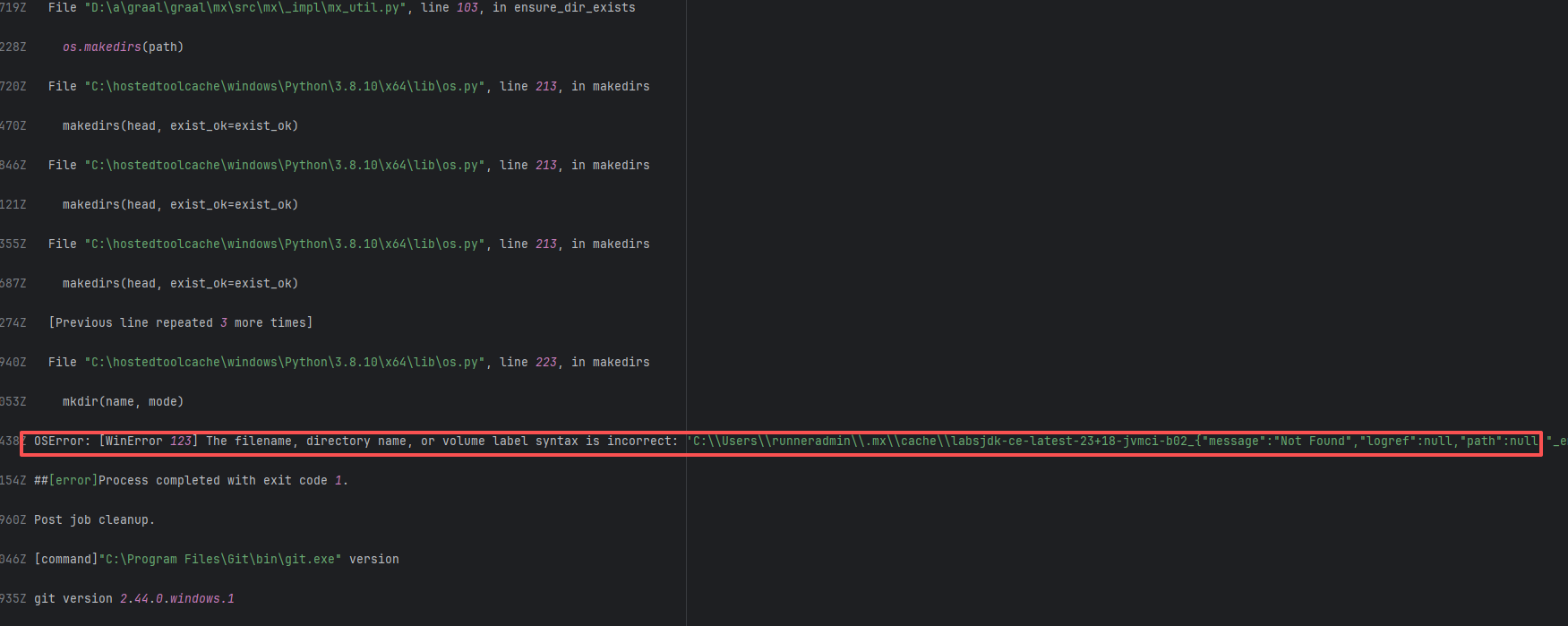

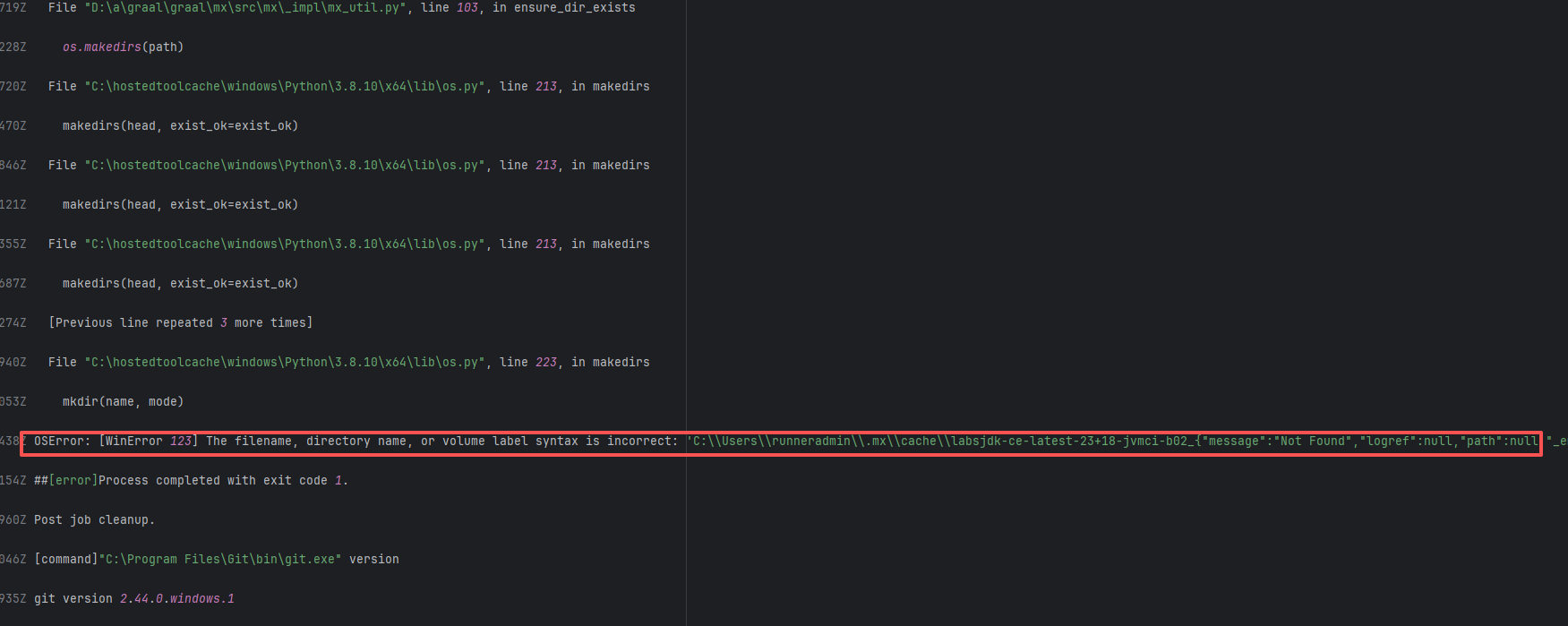

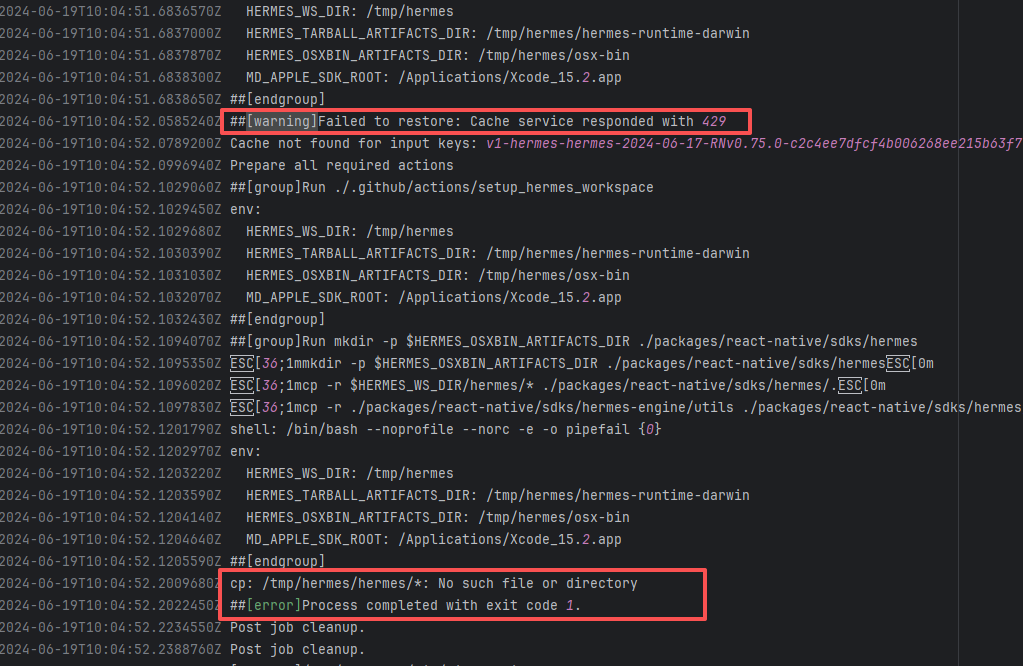

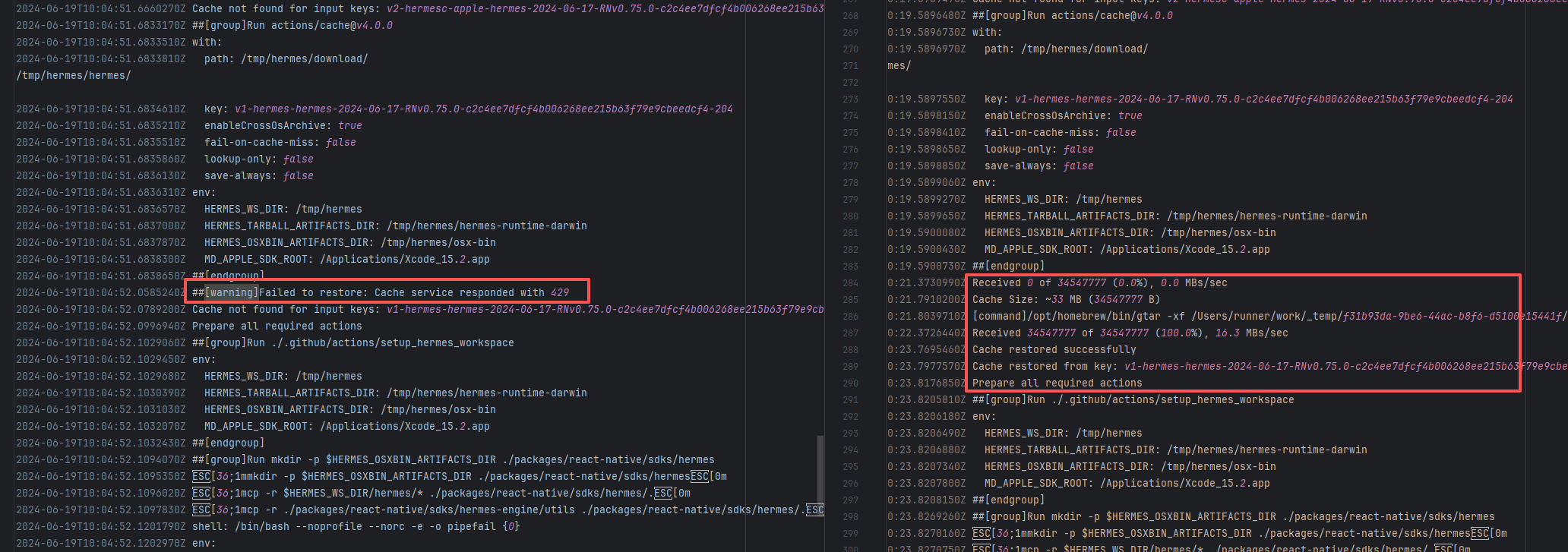

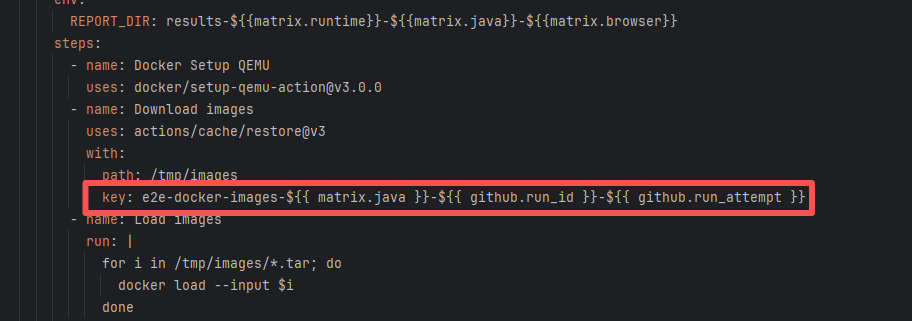

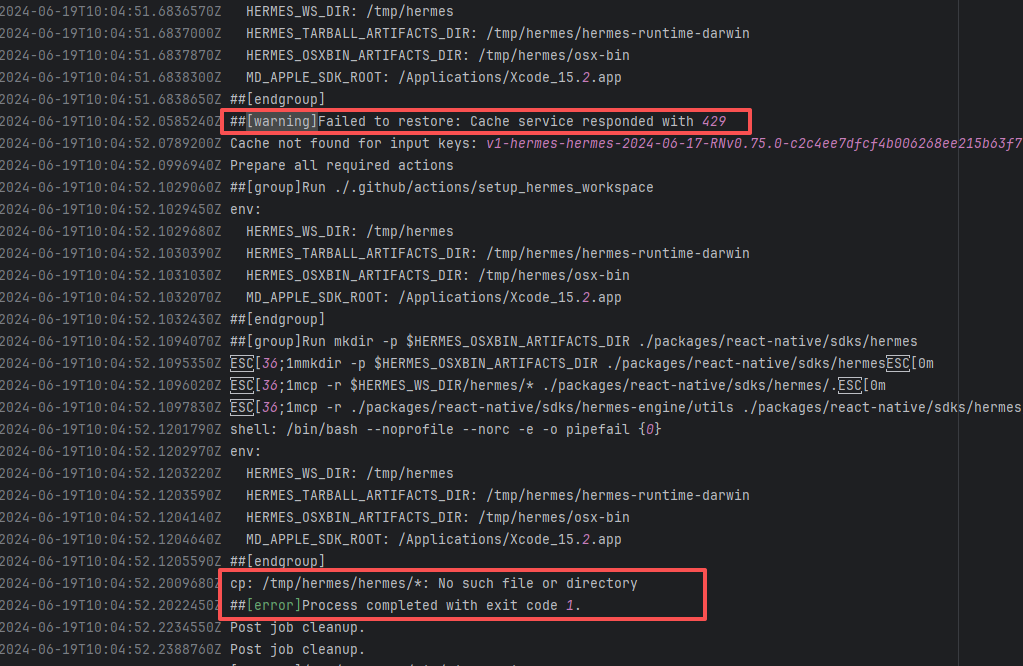

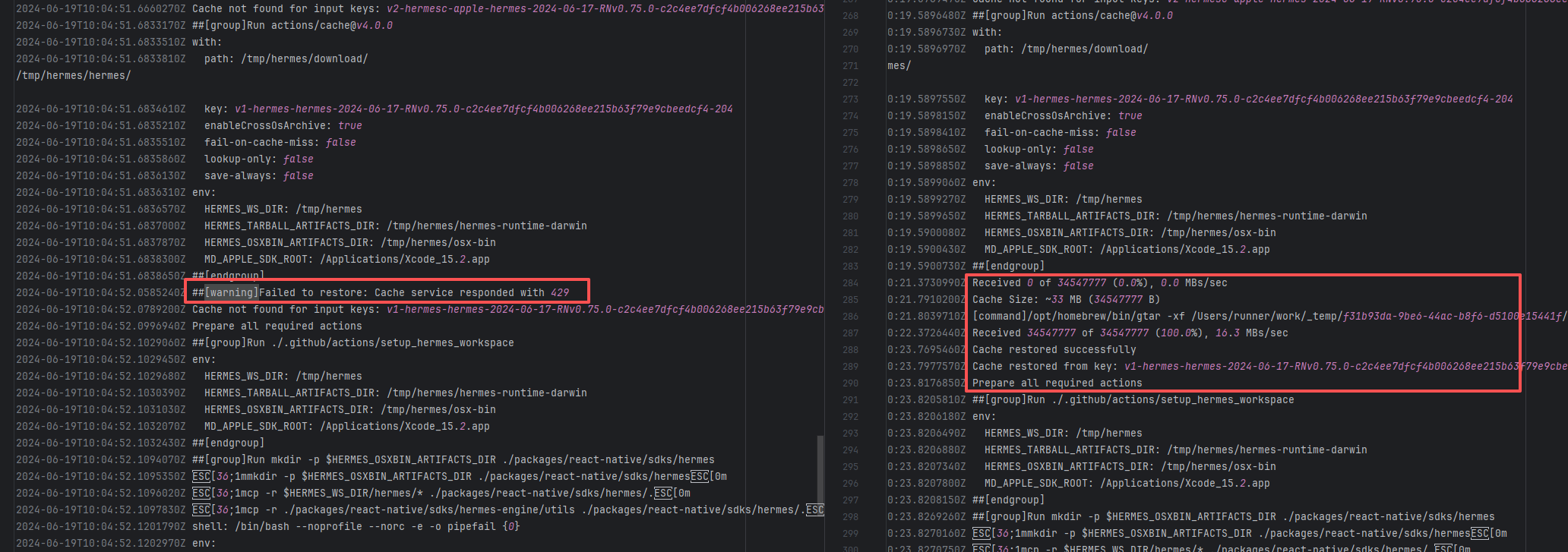

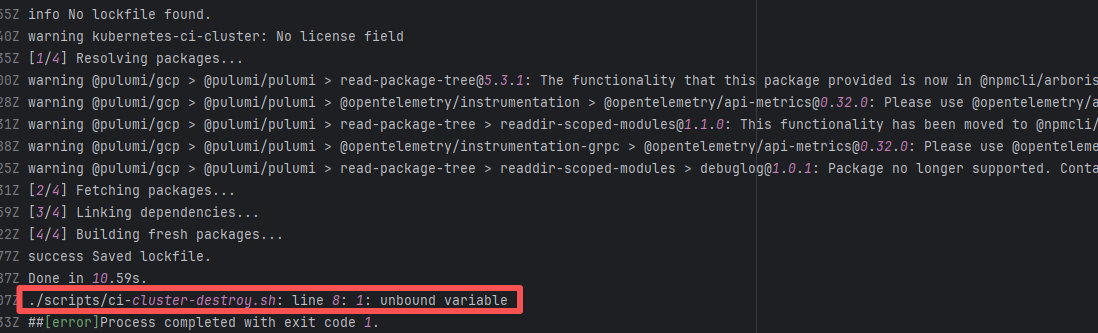

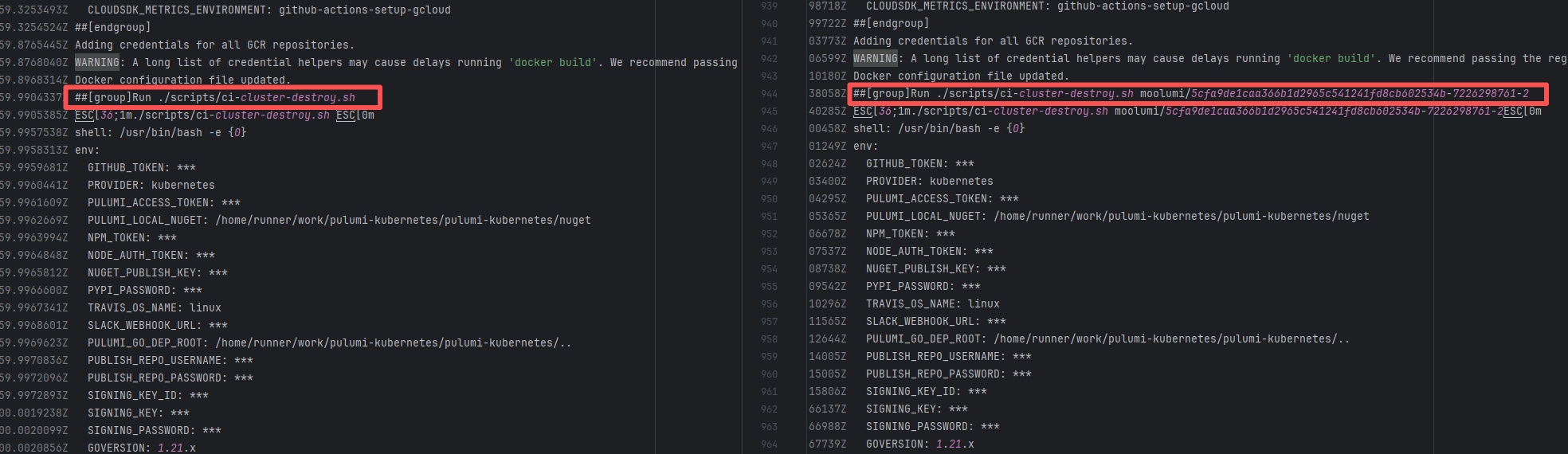

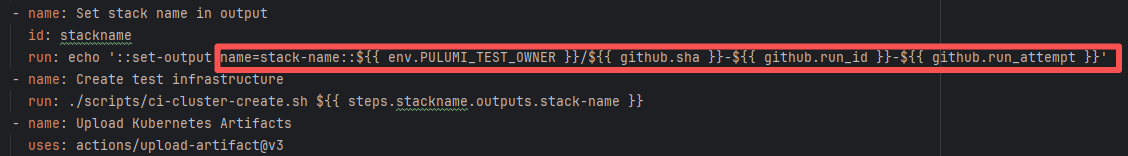

| dragonwell-project/dragonwell11 |

22821050143 |

Network Issue |

Error: The error message is "Unable to find an artifact with the name: bundles-linux-aarch64-debug", indicating that the download step could not find an artifact named bundles-linux-aarch64-debug, thereby causing the build to fail.

Root Cause: Succeeded after a rerun, which indicates that the error is a transient error caused by network fluctuations. |

Short-term Fix: Rerun the job; it will automatically succeed after network conditions recover.

Long-term Defense:

1. Configure domestic mirror sources to replace official sources;

2. Add a retry mechanism (e.g., retry plugin);

3. Use CDN to accelerate dependency downloads. |

|

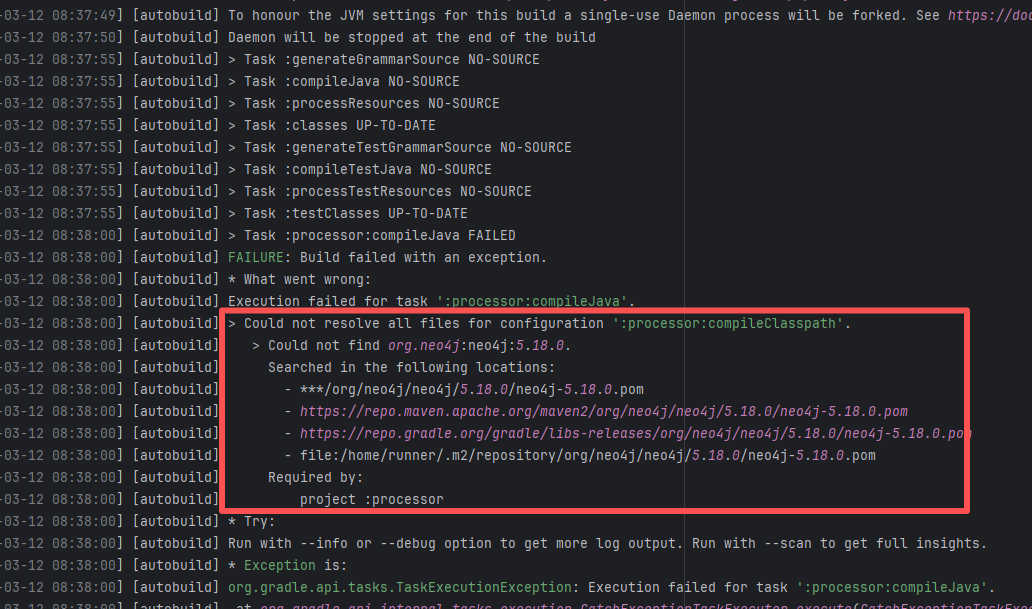

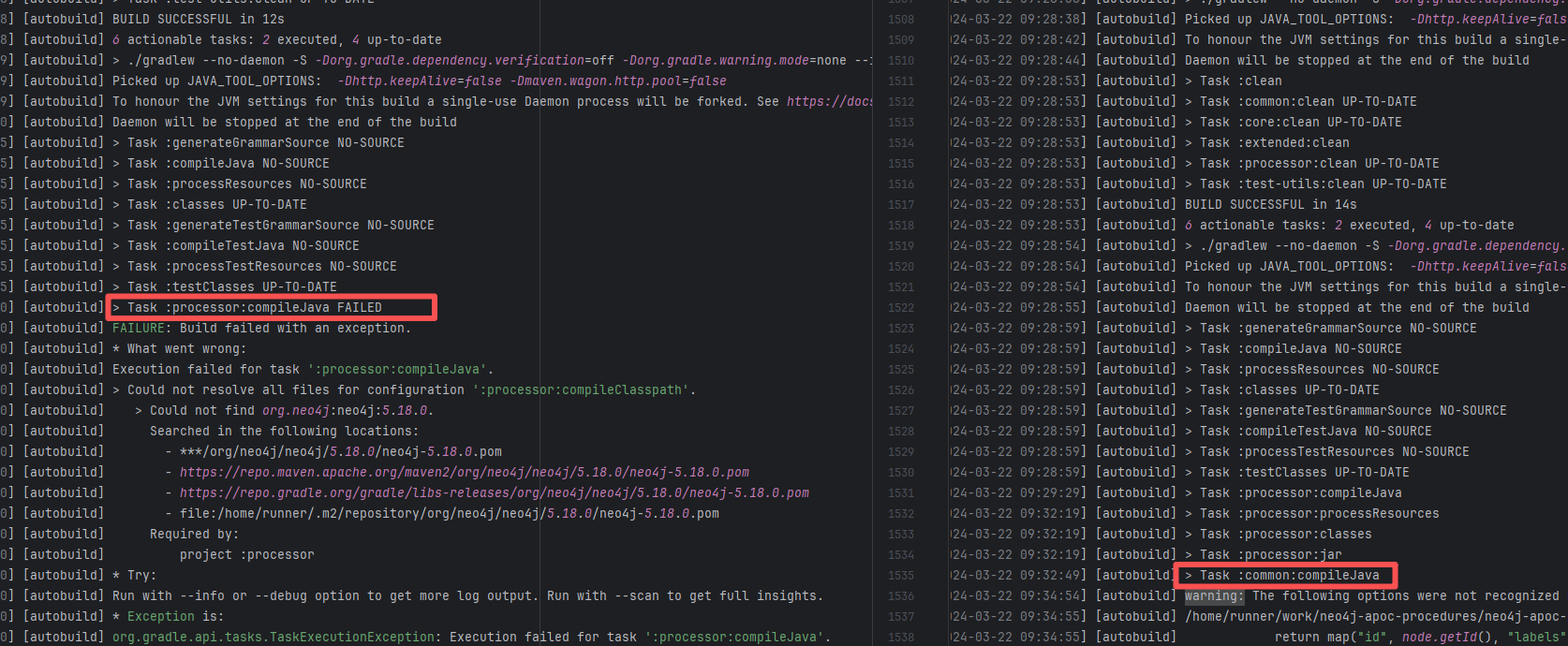

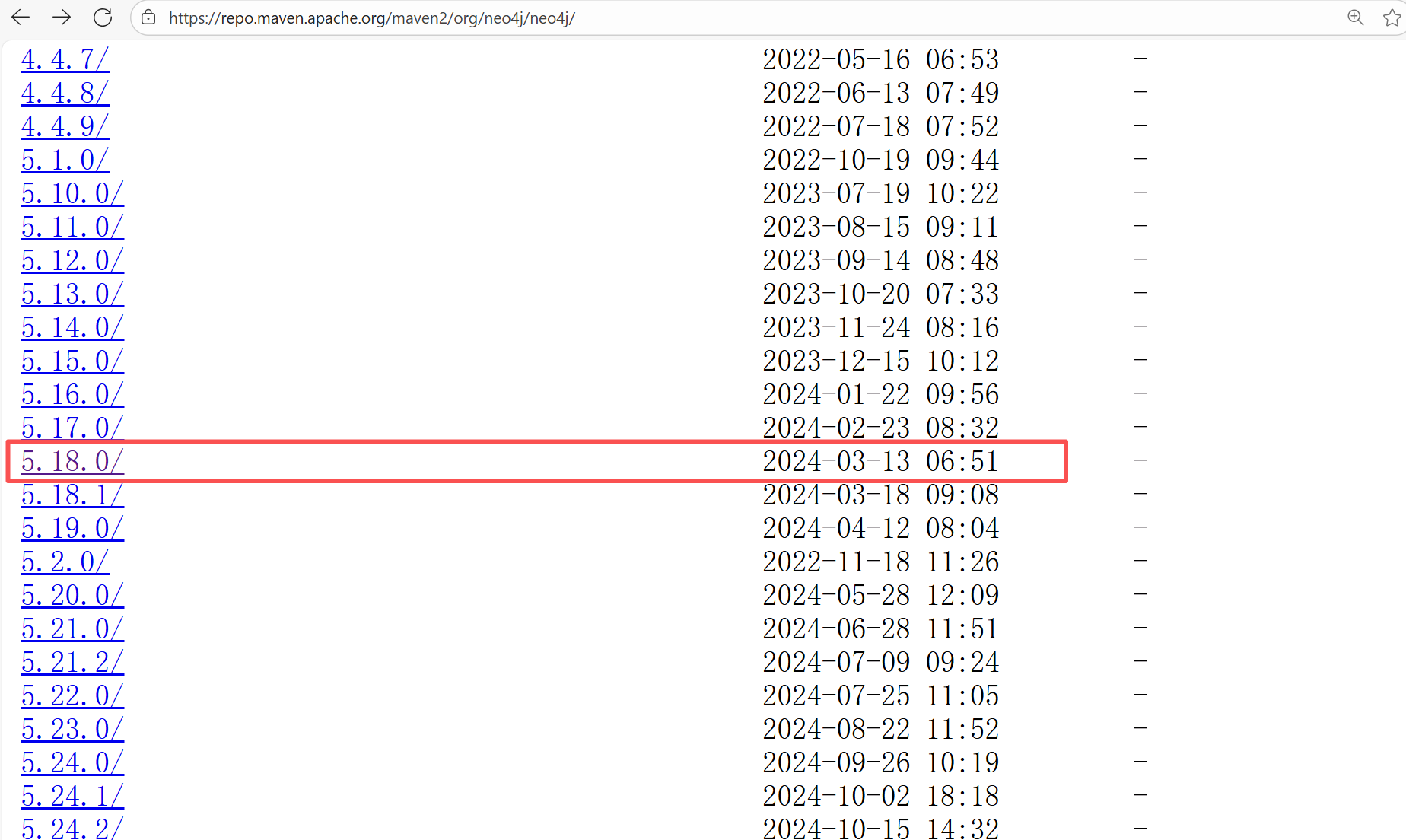

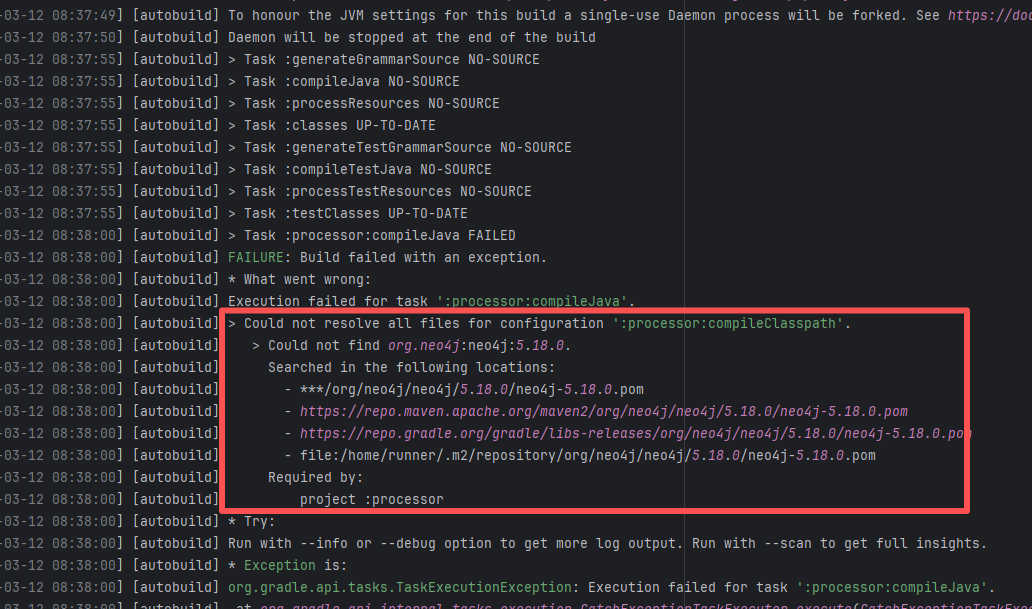

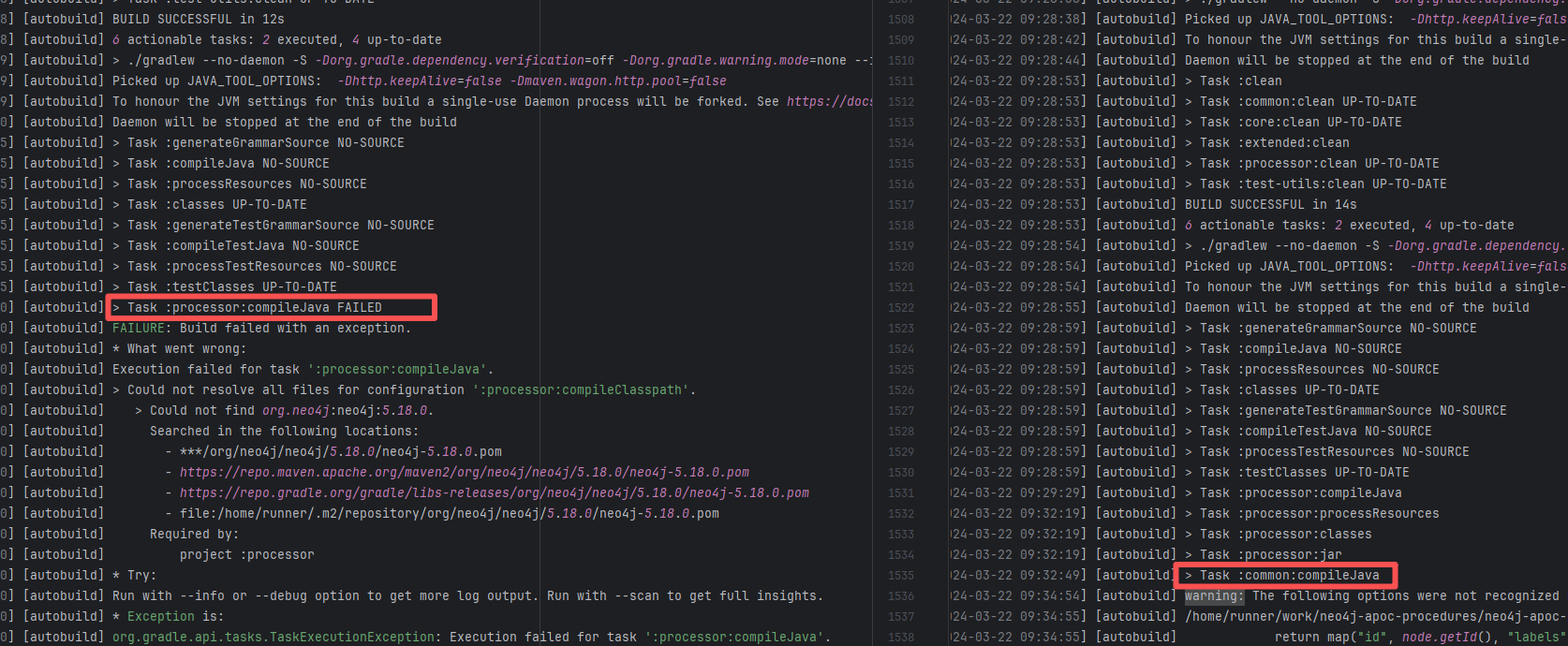

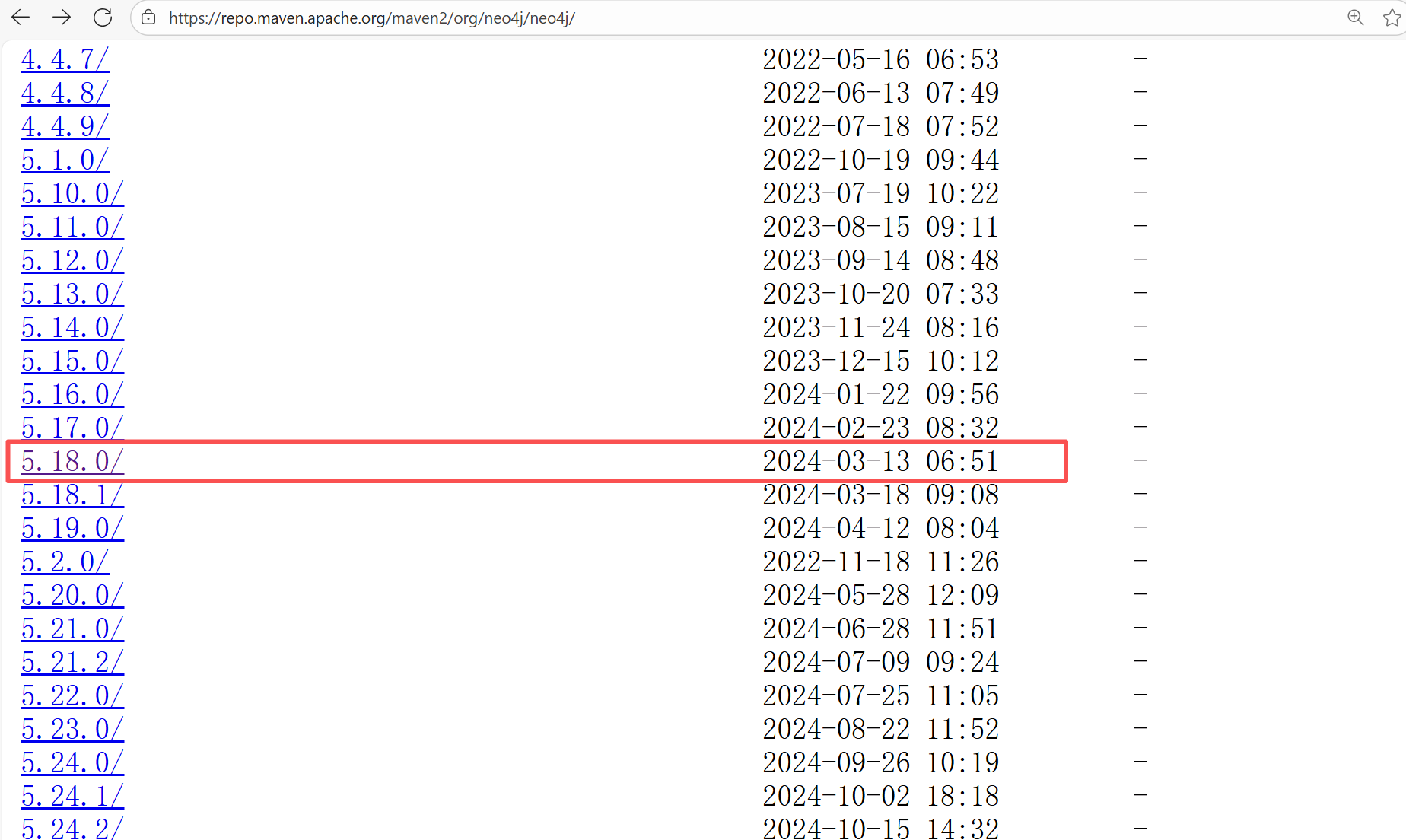

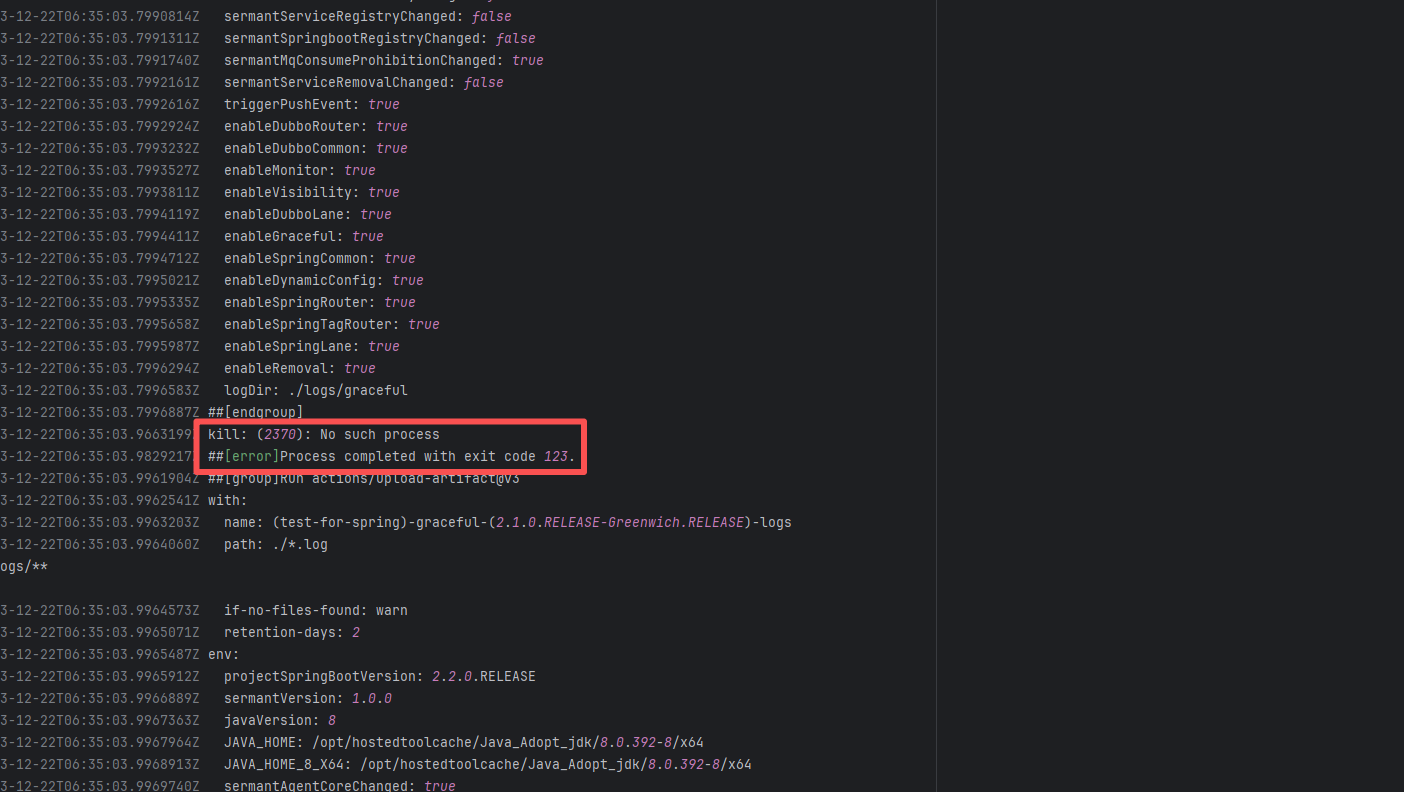

| neo4j-contrib/neo4j-apoc-procedures |

21758477796 |

Missing Dependency |

Error: The error message indicates that the build failed because the dependency org.neo4j:neo4j:5.18.0 could not be resolved. The build system tried multiple repository addresses but did not find the dependency file for this version.

Root Cause: Checking the repository dependencies revealed that it had not been uploaded before the job execution (3-12). The dependency was uploaded on 3-13, and the dependency downloaded successfully after a rerun on 3-22. |

- Upload the correct dependency files to the maven repository

- Use dependency coordinates that already exist in the maven repository |

|

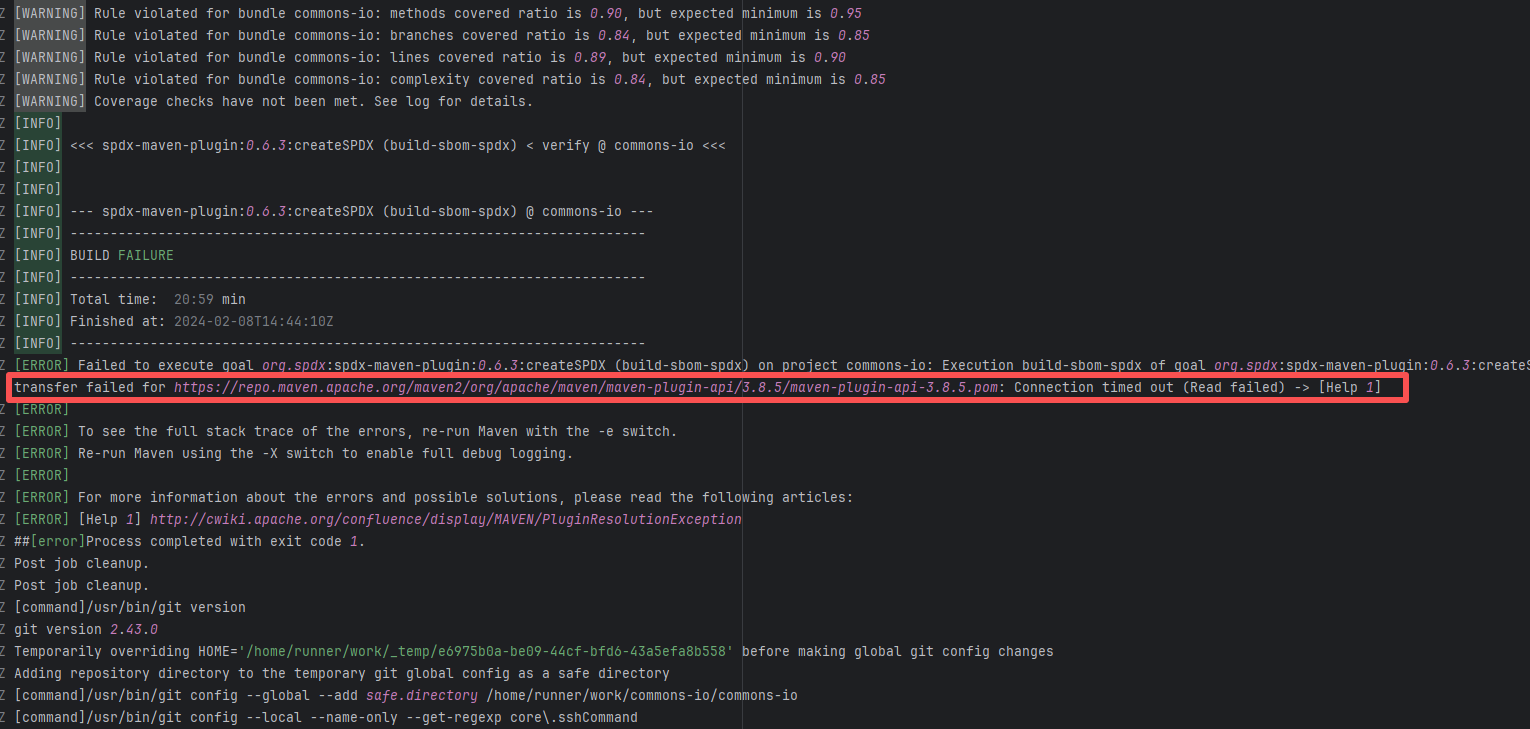

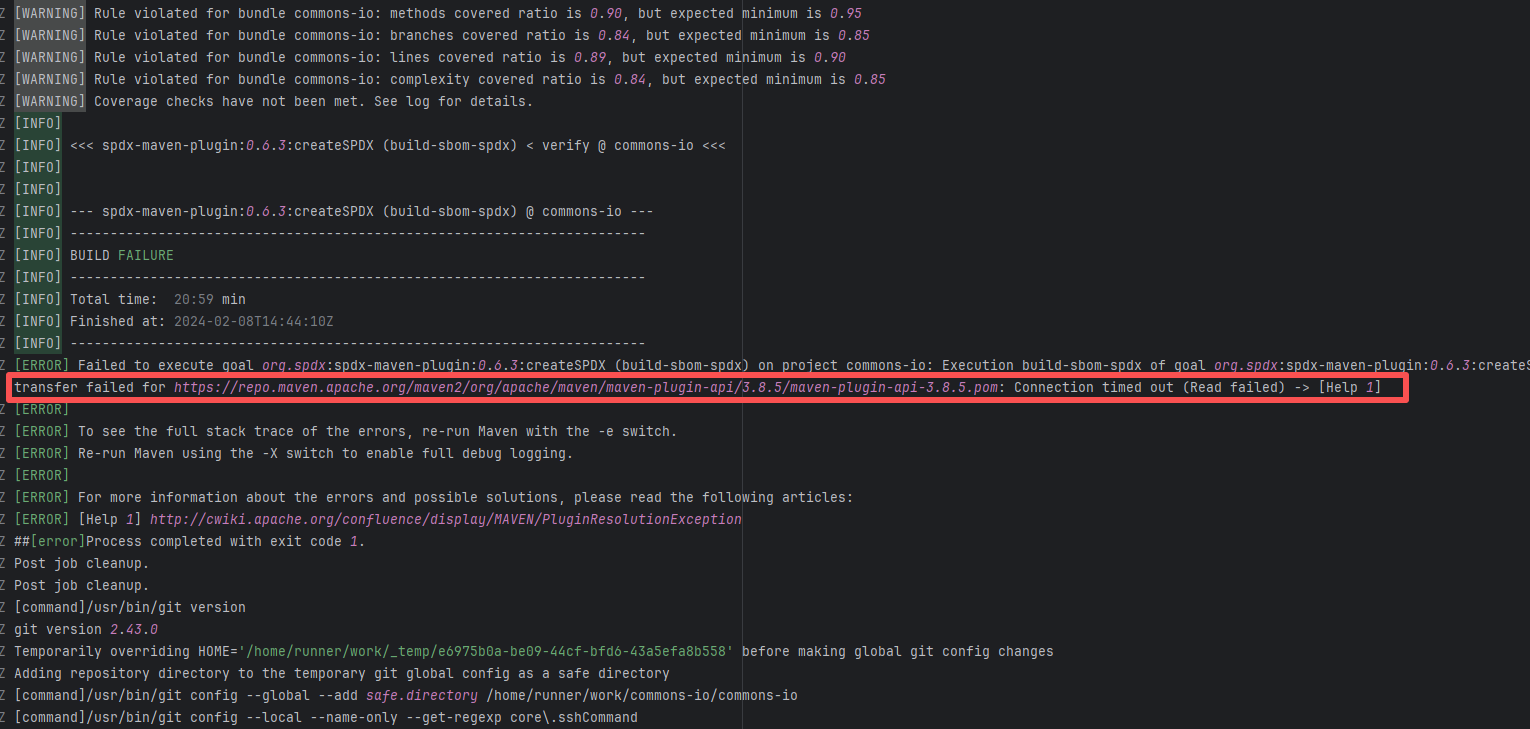

| apache/commons-io |

21366553306 |

Network Issue |

Error: A timeout error occurred while fetching resources.

Root Cause: Transient connection timeout caused by network fluctuations, not a service provider server error. |

Short-term Fix: Rerun the job; it will automatically succeed after network conditions recover.

Long-term Defense:

1. Configure domestic mirror sources to replace official sources;

2. Add a retry mechanism (e.g., retry plugin);

3. Use CDN to accelerate dependency downloads. |

|

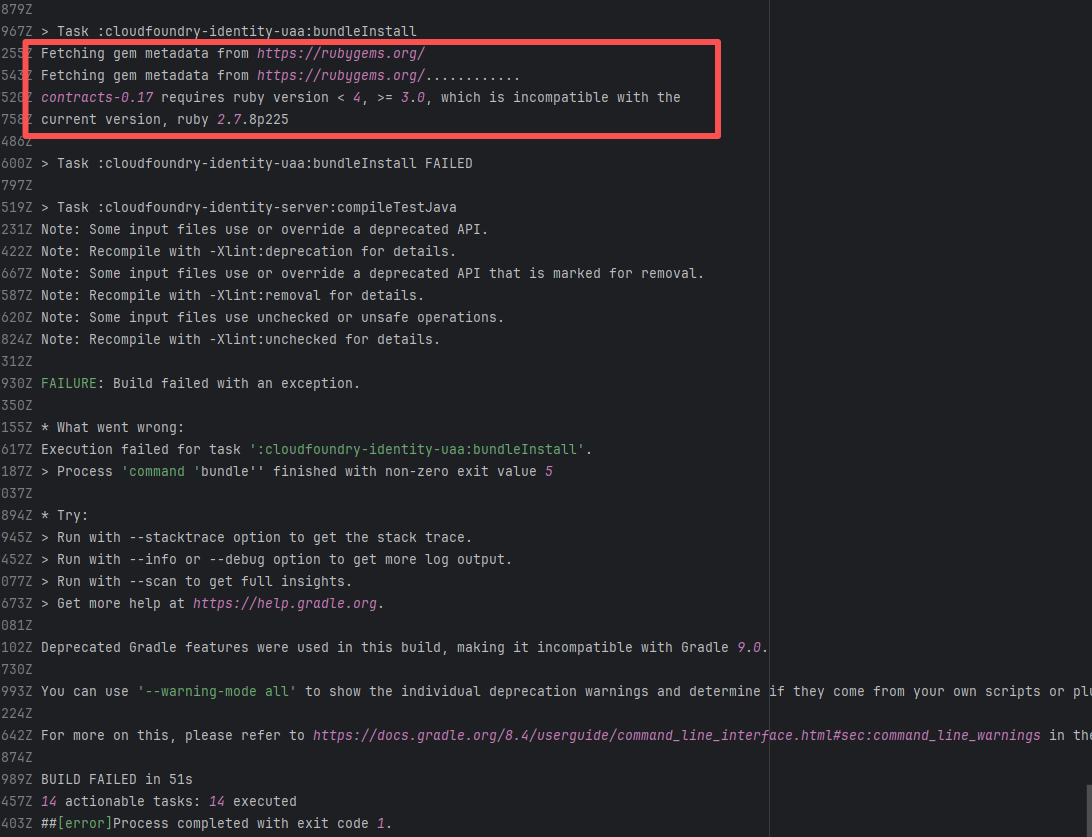

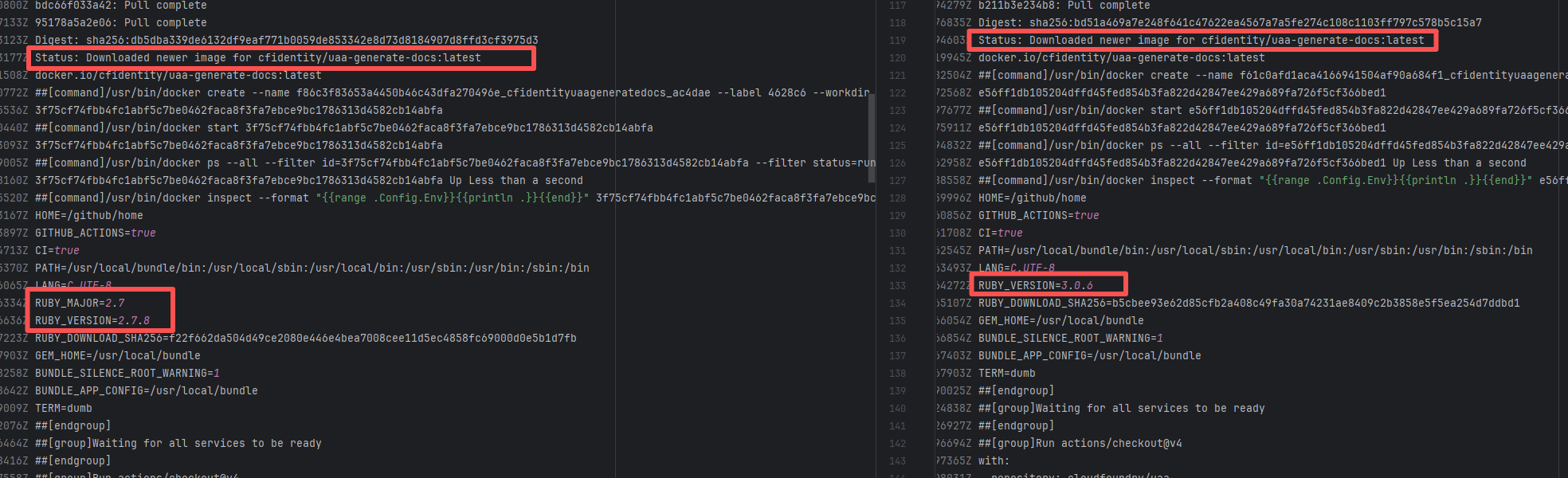

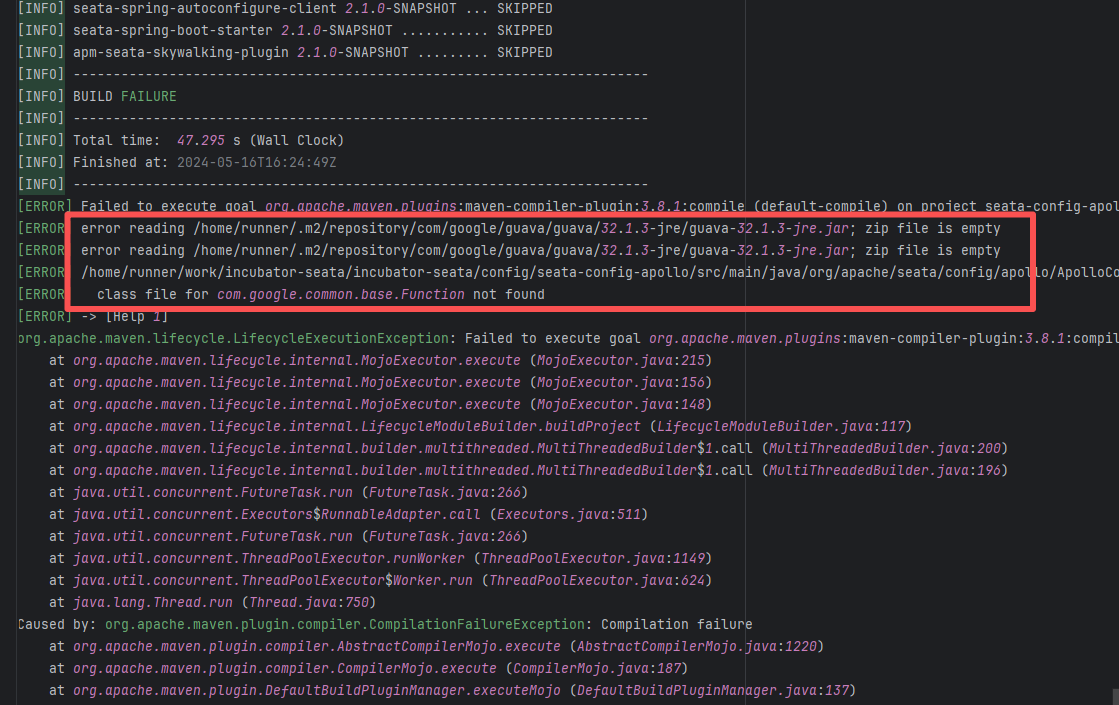

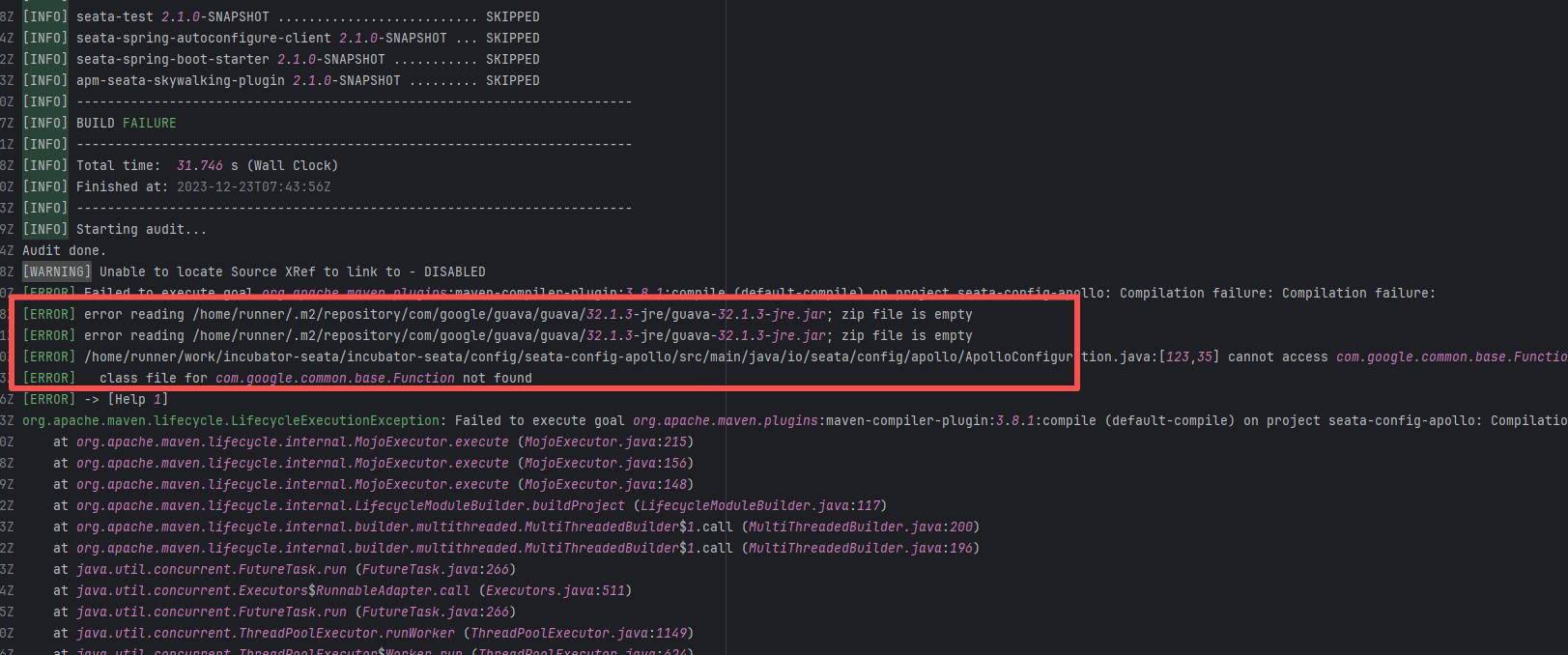

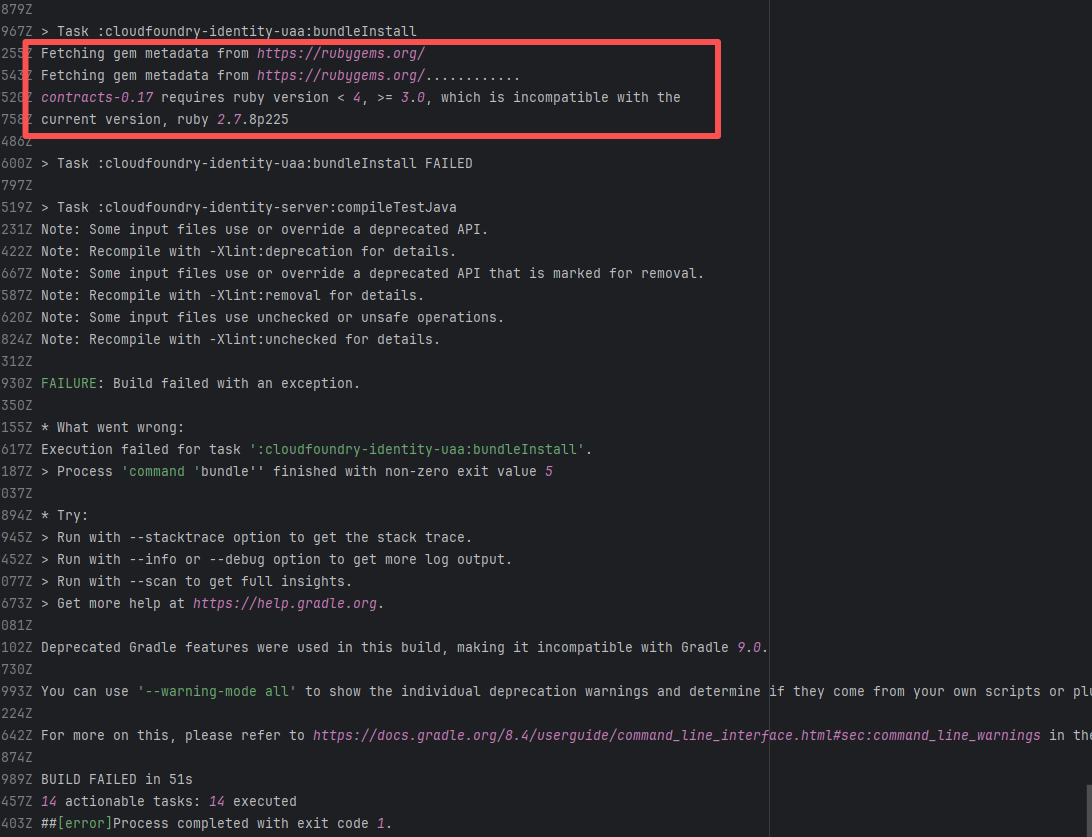

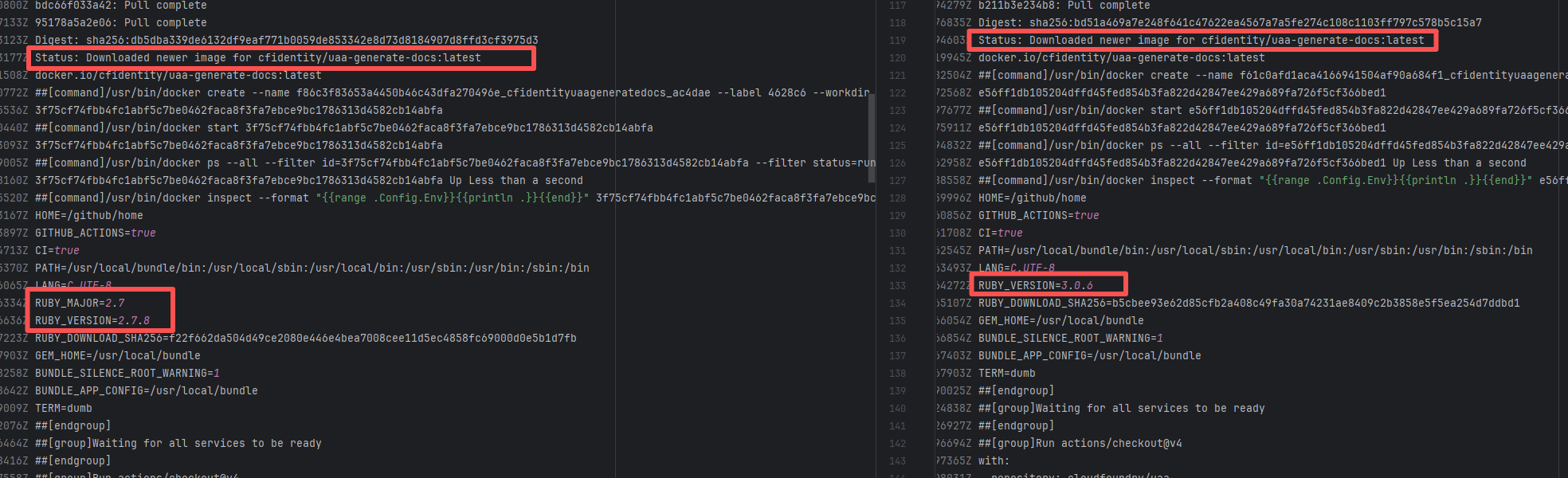

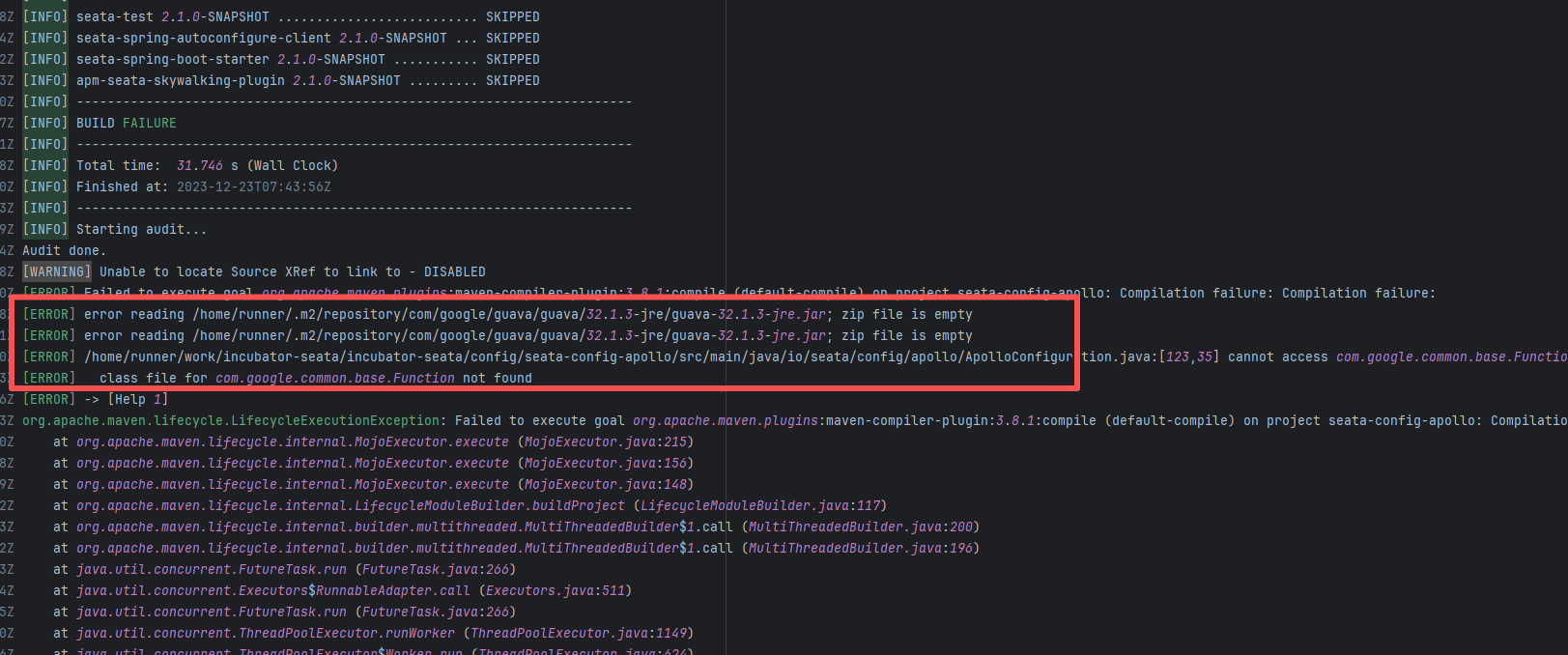

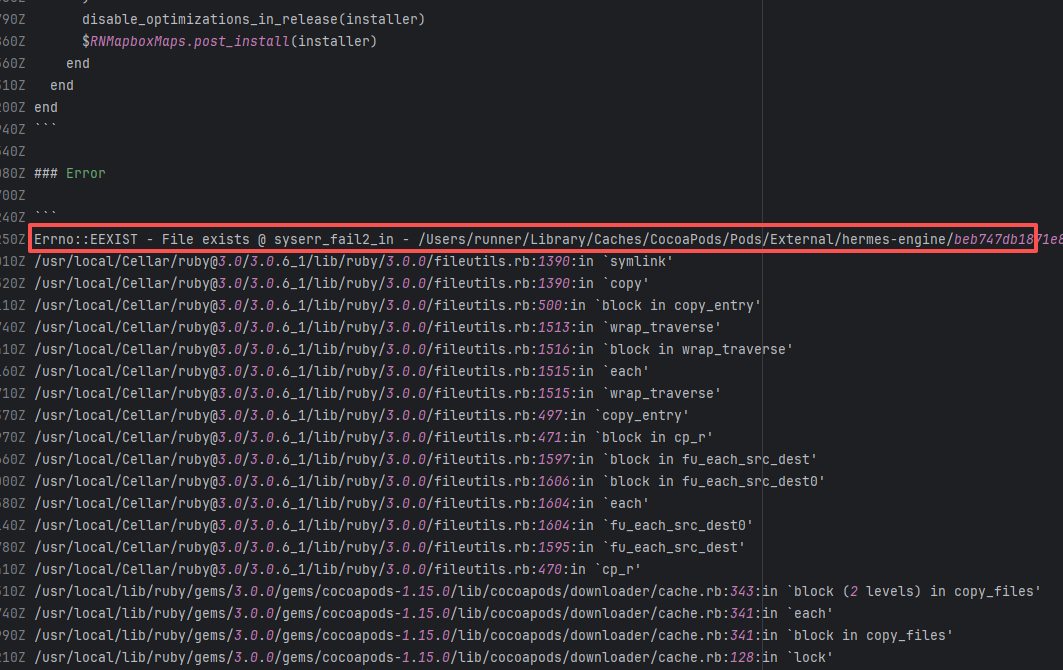

| cloudfoundry/uaa |

18110996326 |

Missing Dependency |

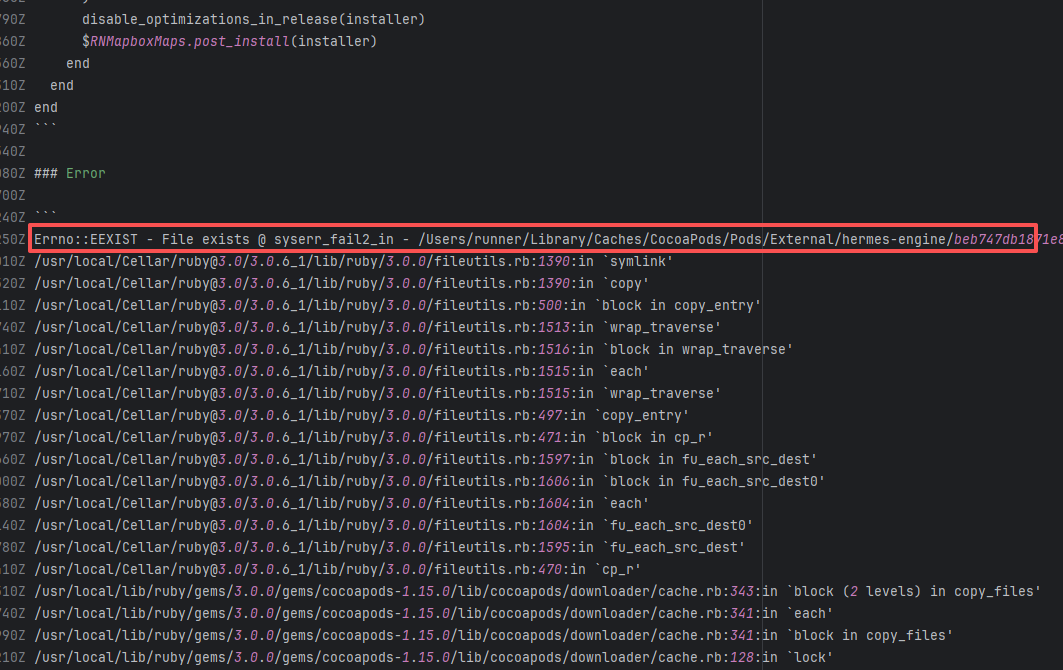

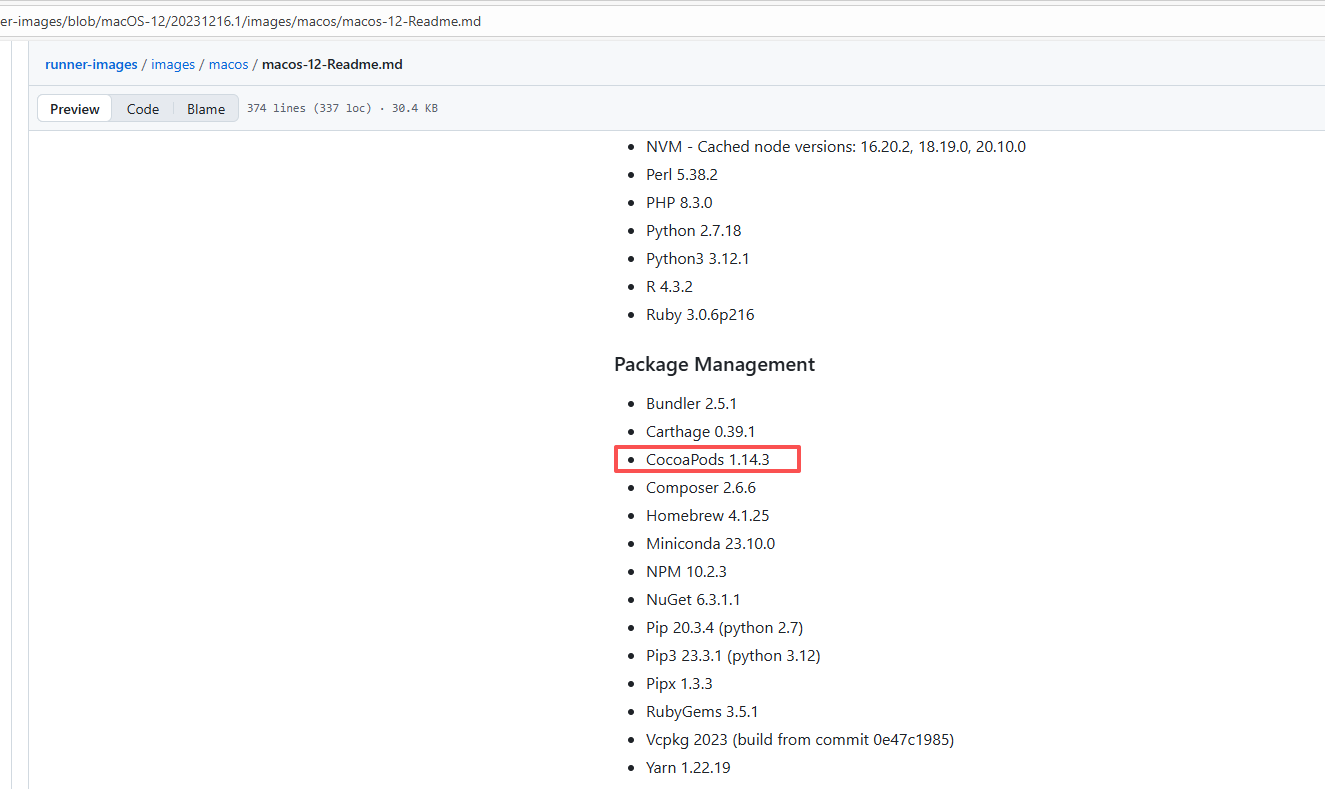

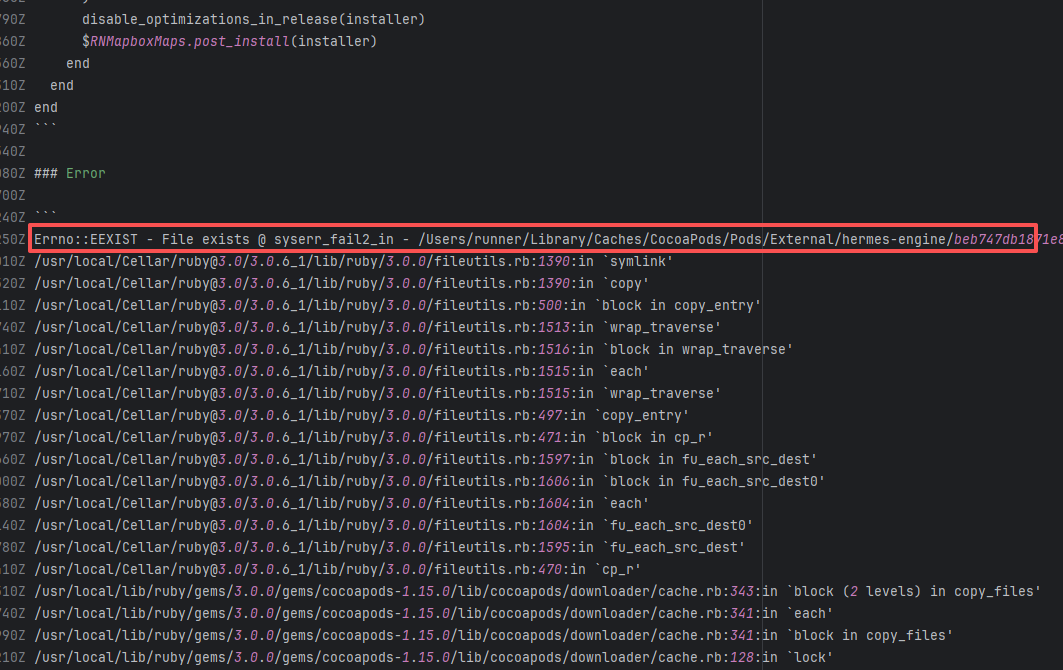

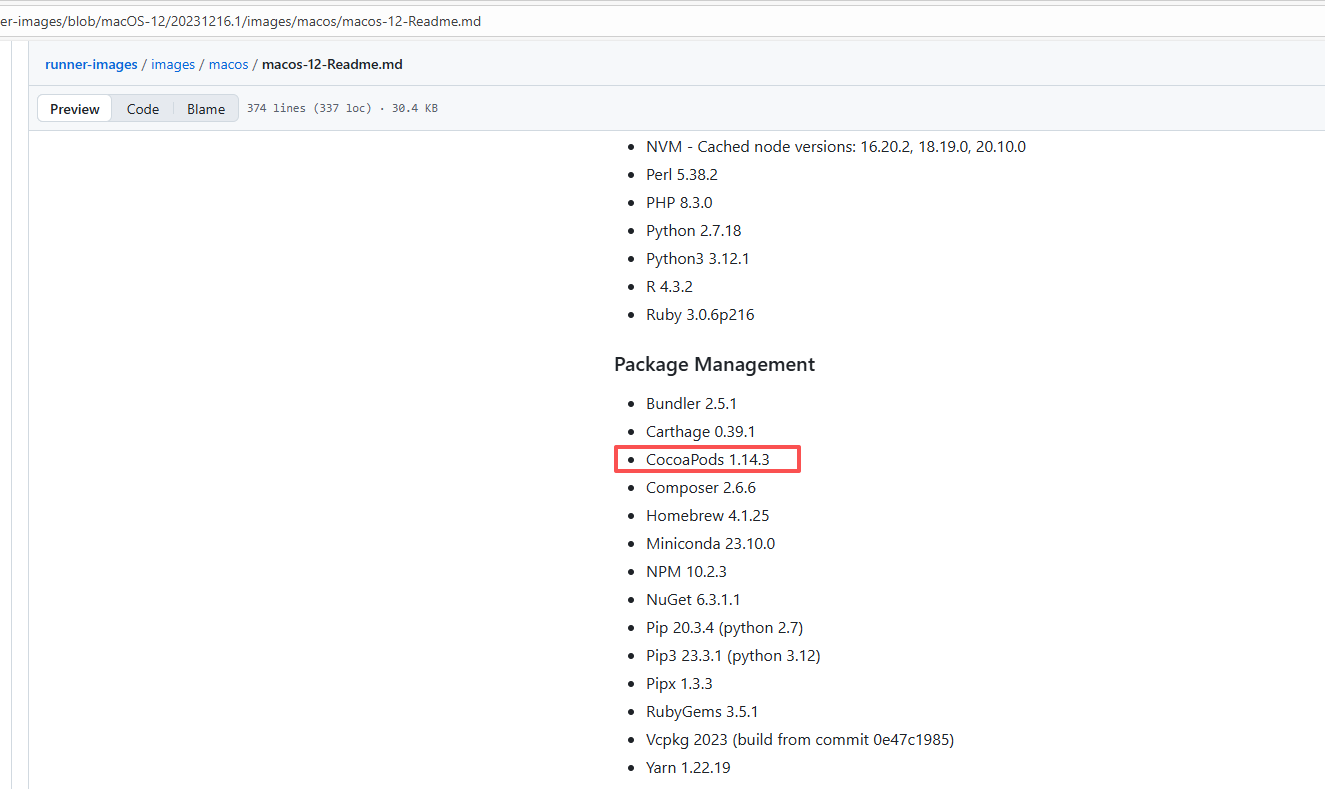

Error: The error log shows that during the execution of the bundleInstall task, the Ruby version being used (2.7.8) was incompatible with the required Ruby version. contracts-0.17 requires a Ruby version between 3.0 and 4.0, but the current version is 2.7.8, so the dependencies could not be installed normally.

Root Cause: Comparing the successful and failed logs revealed that during the build process, the docker image installed, cfidentity/uaa-generate-docs, is always the latest version. After the failure, the developer modified the dependency version in the image, upgrading RUBY_VERSION from 2.7.8 to 3.0.6. The ruby version met the dependency requirements after rerun, thus the build succeeded. |

- Modify the Dockerfile configuration to change the tool version to a suitable version |

|

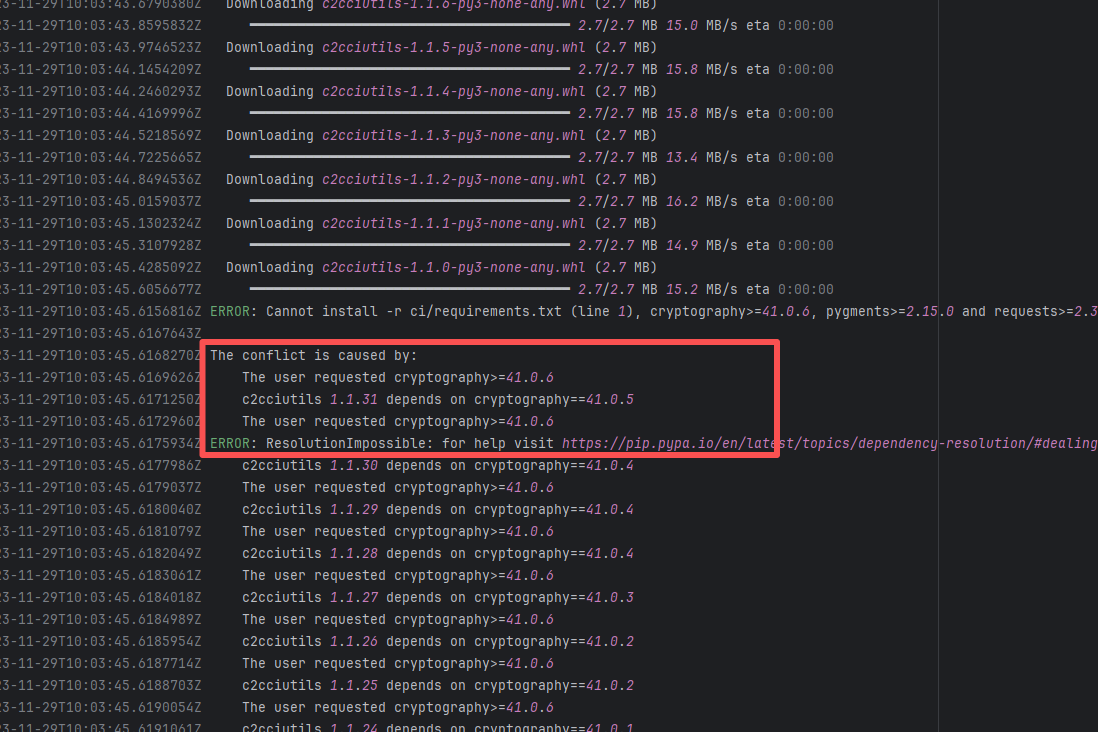

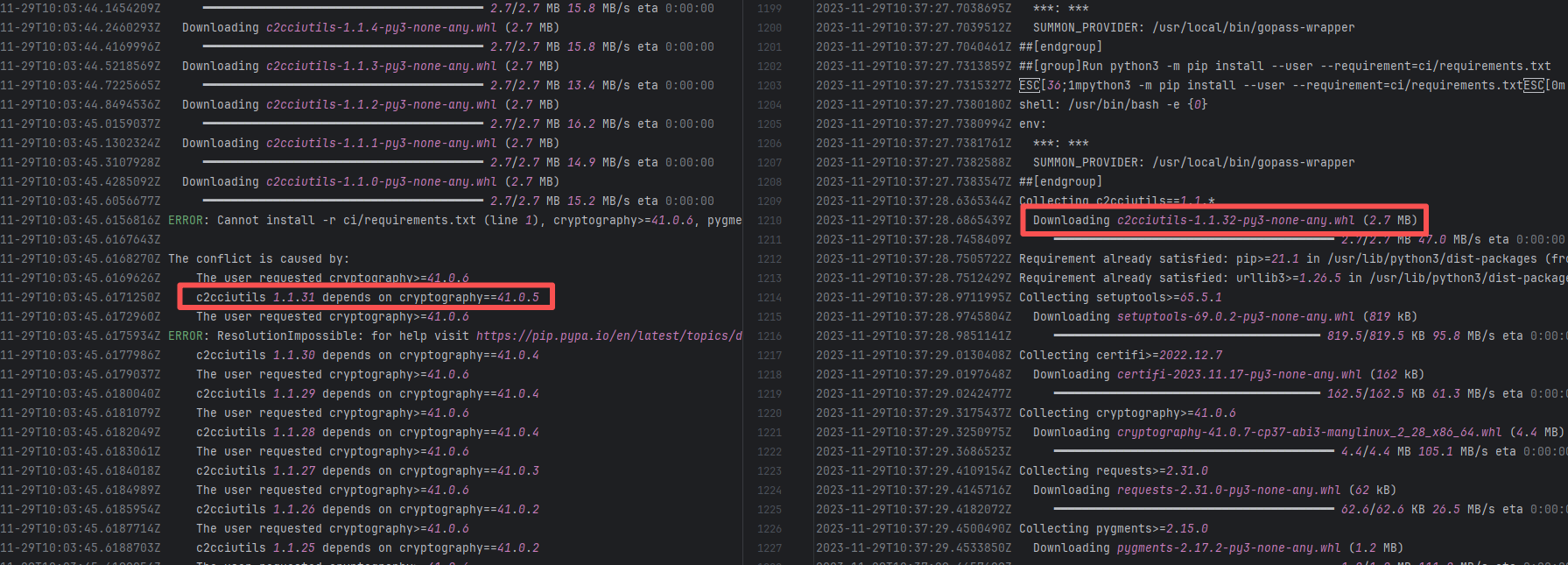

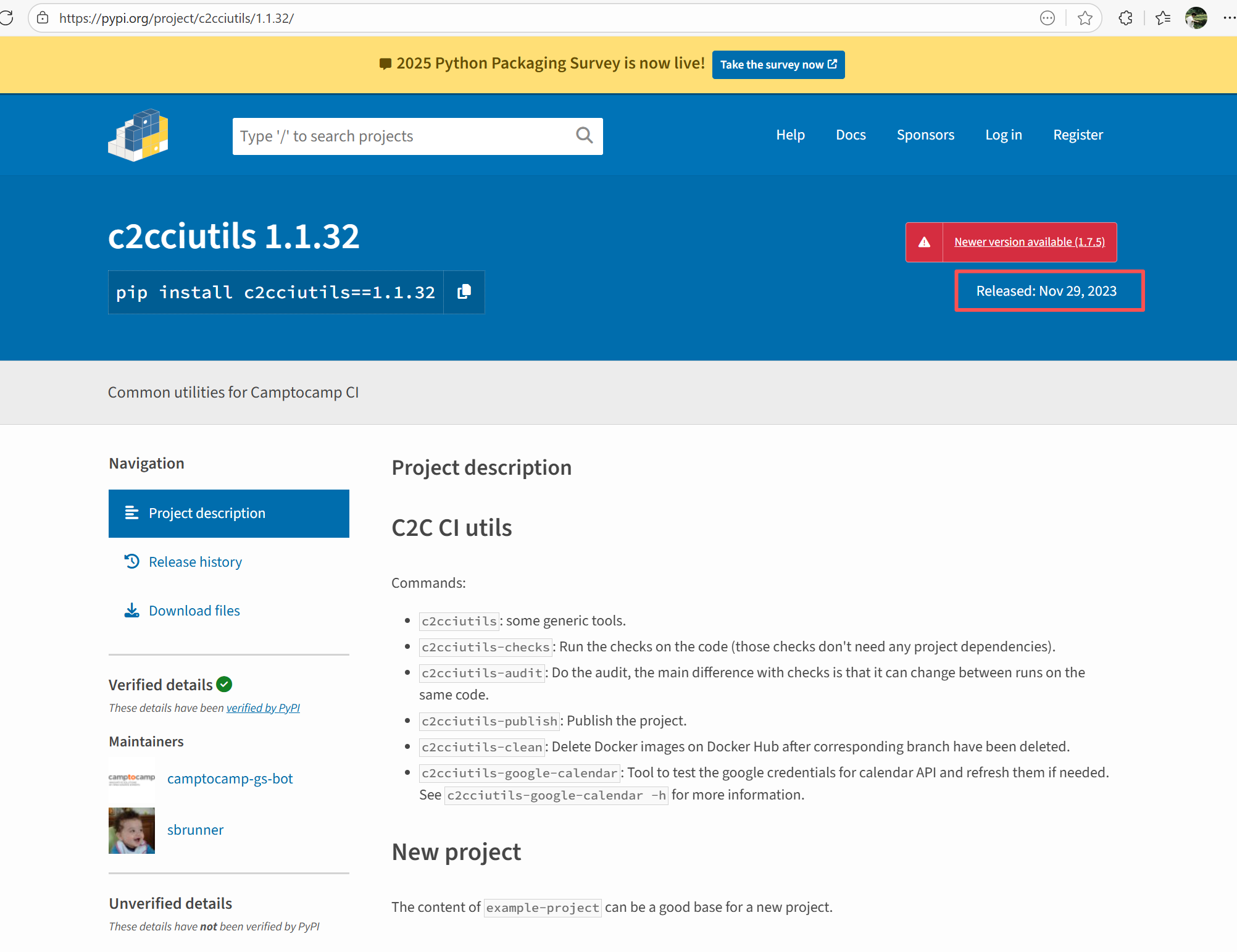

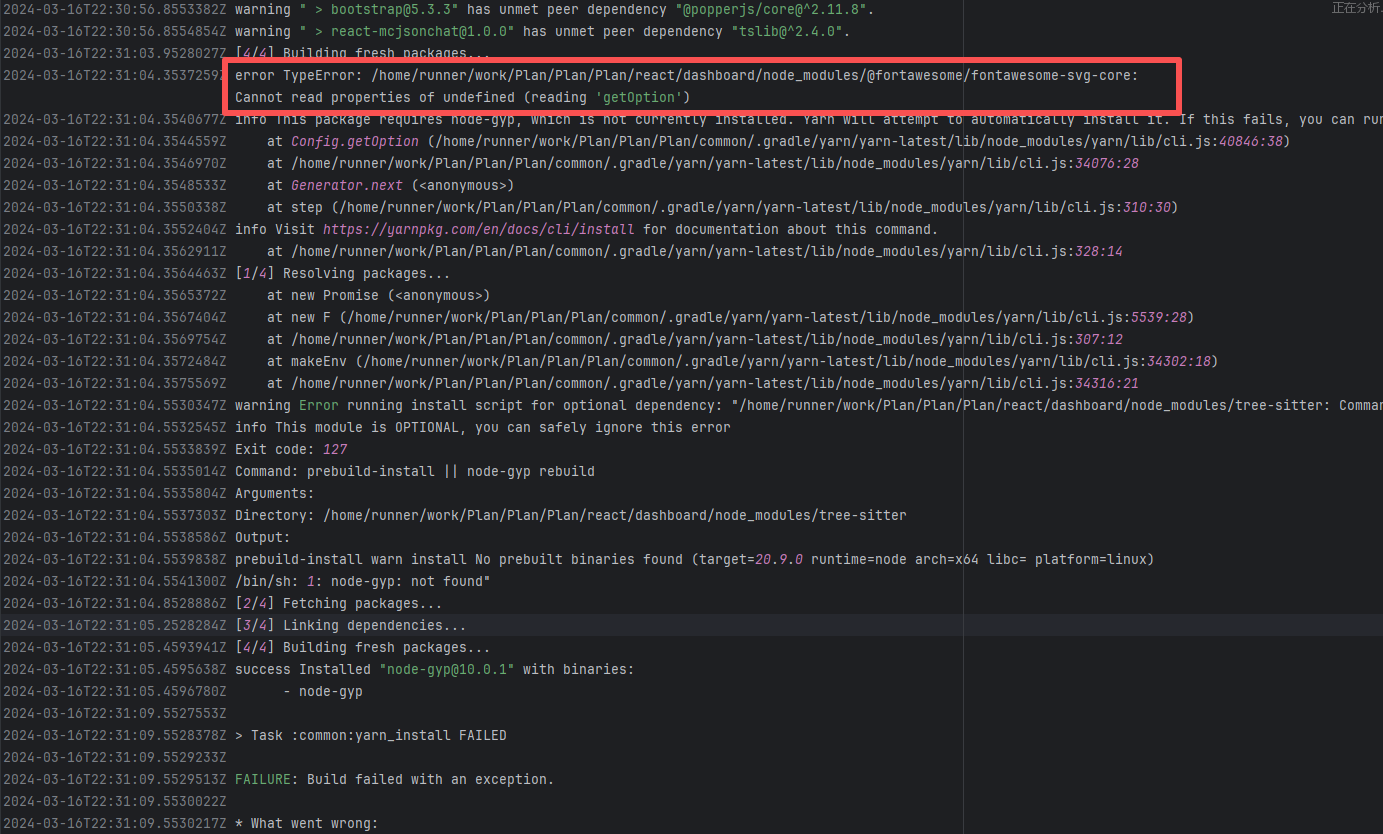

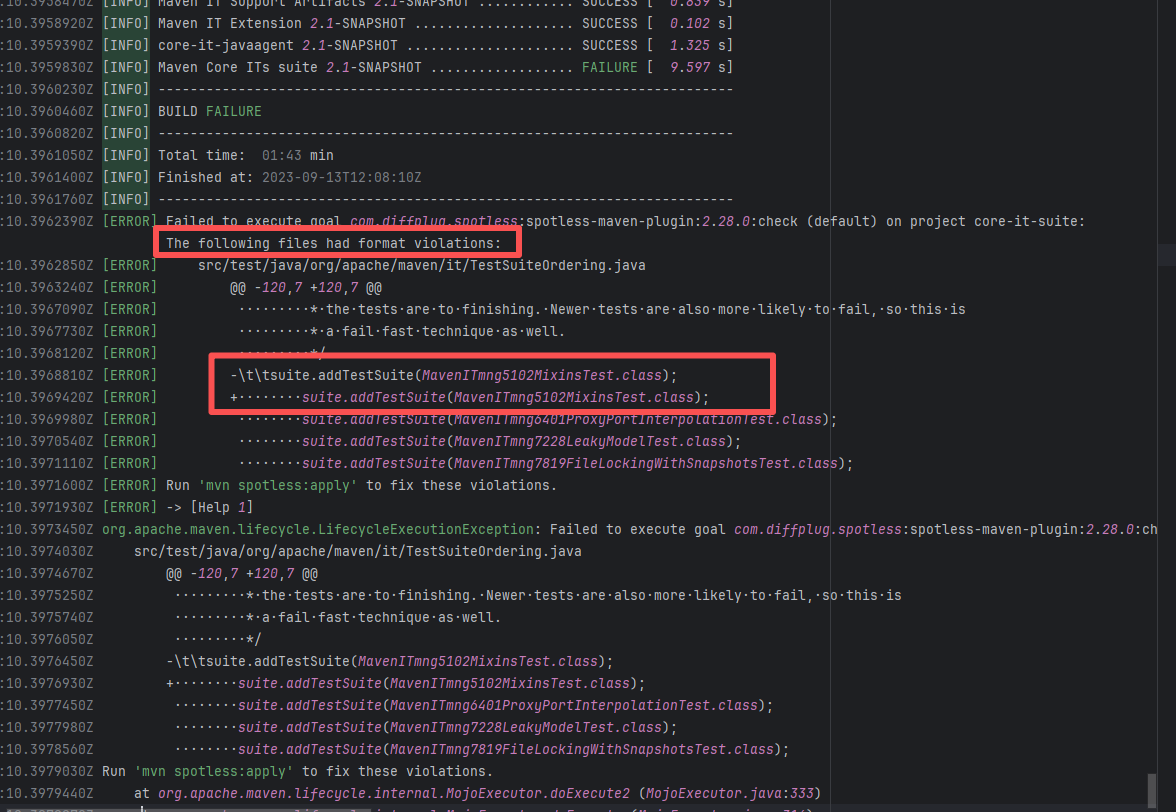

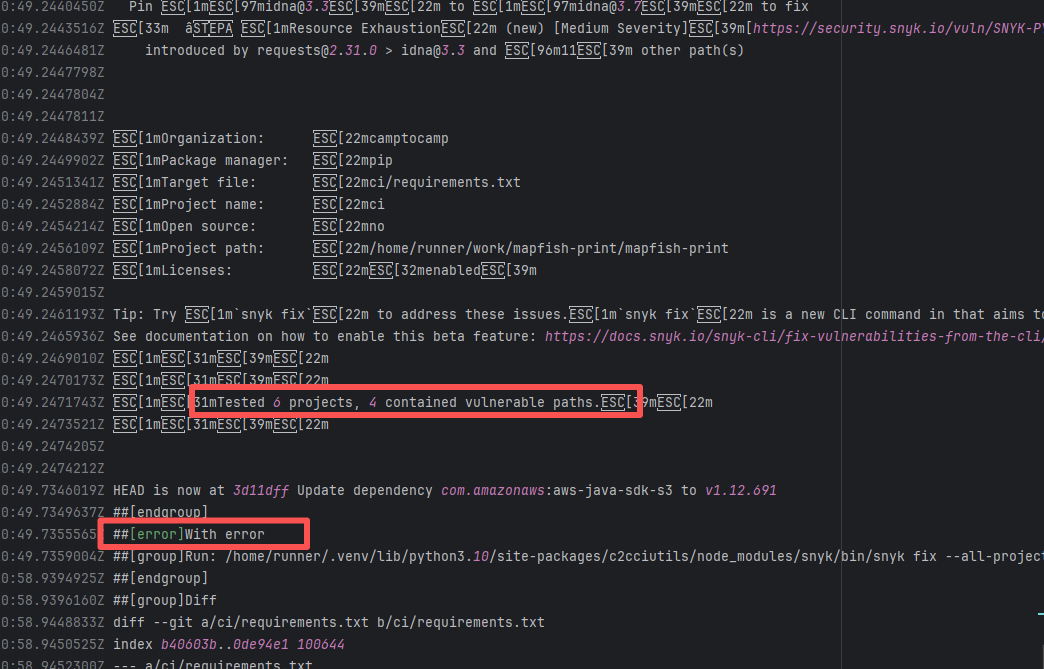

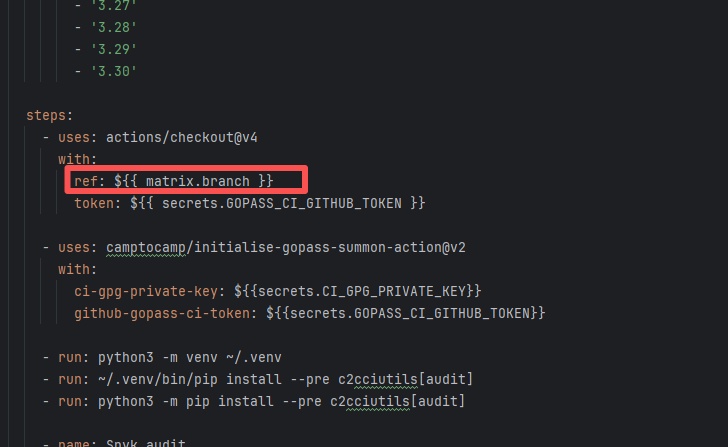

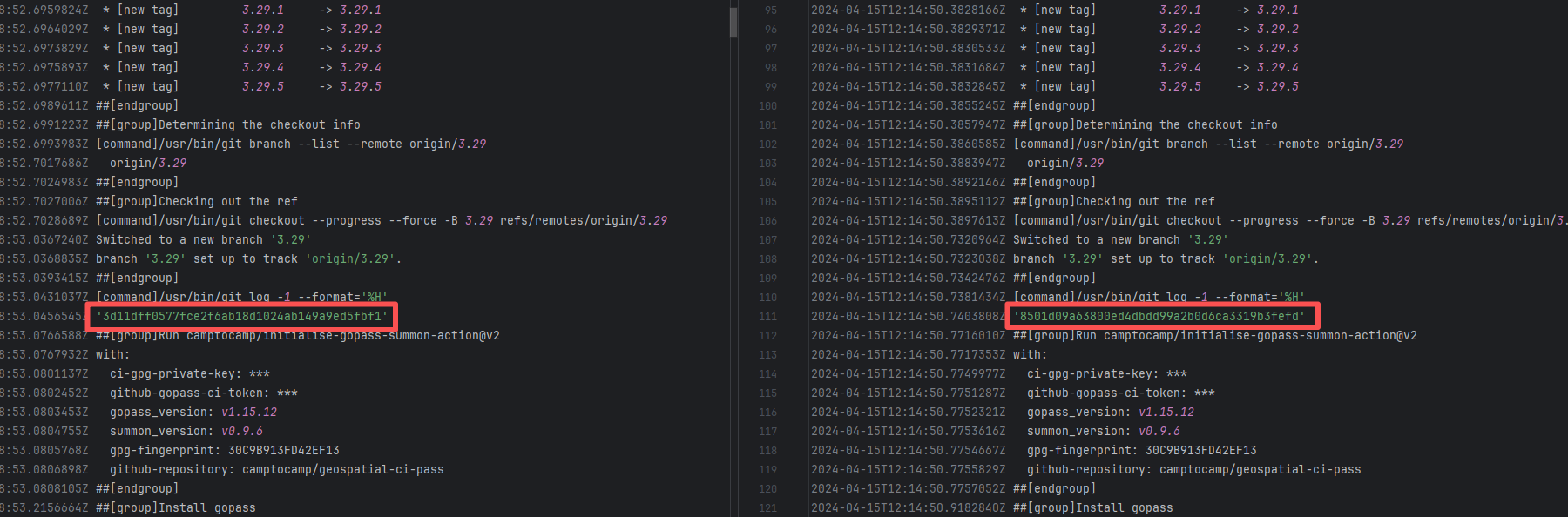

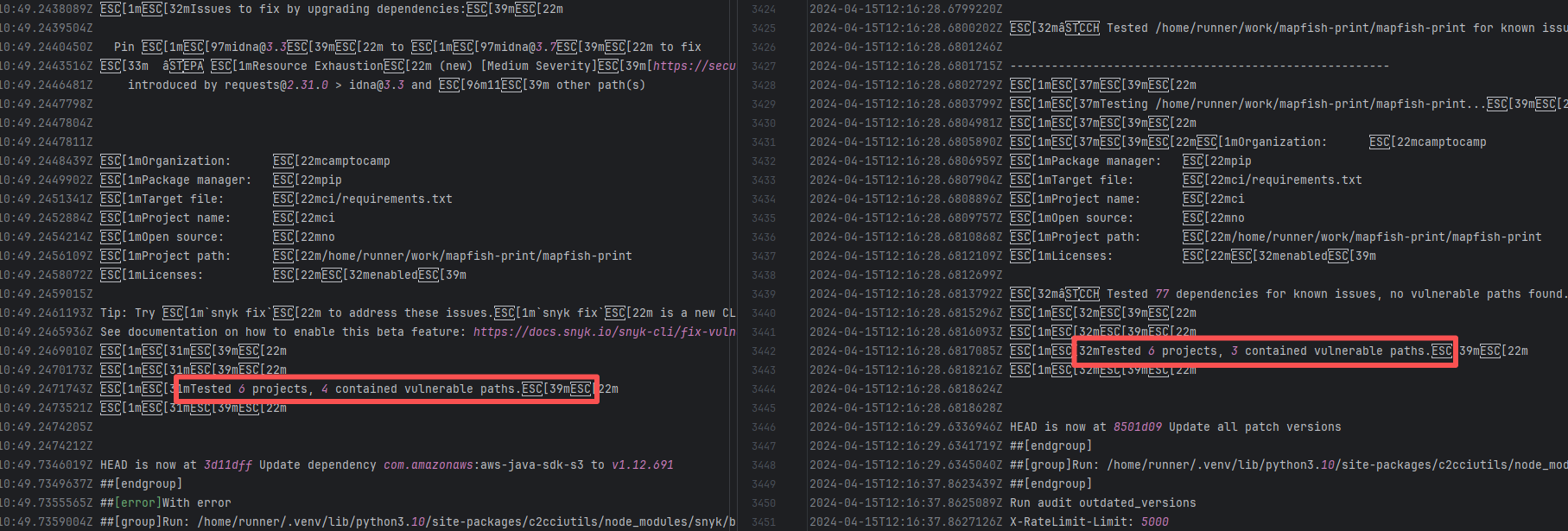

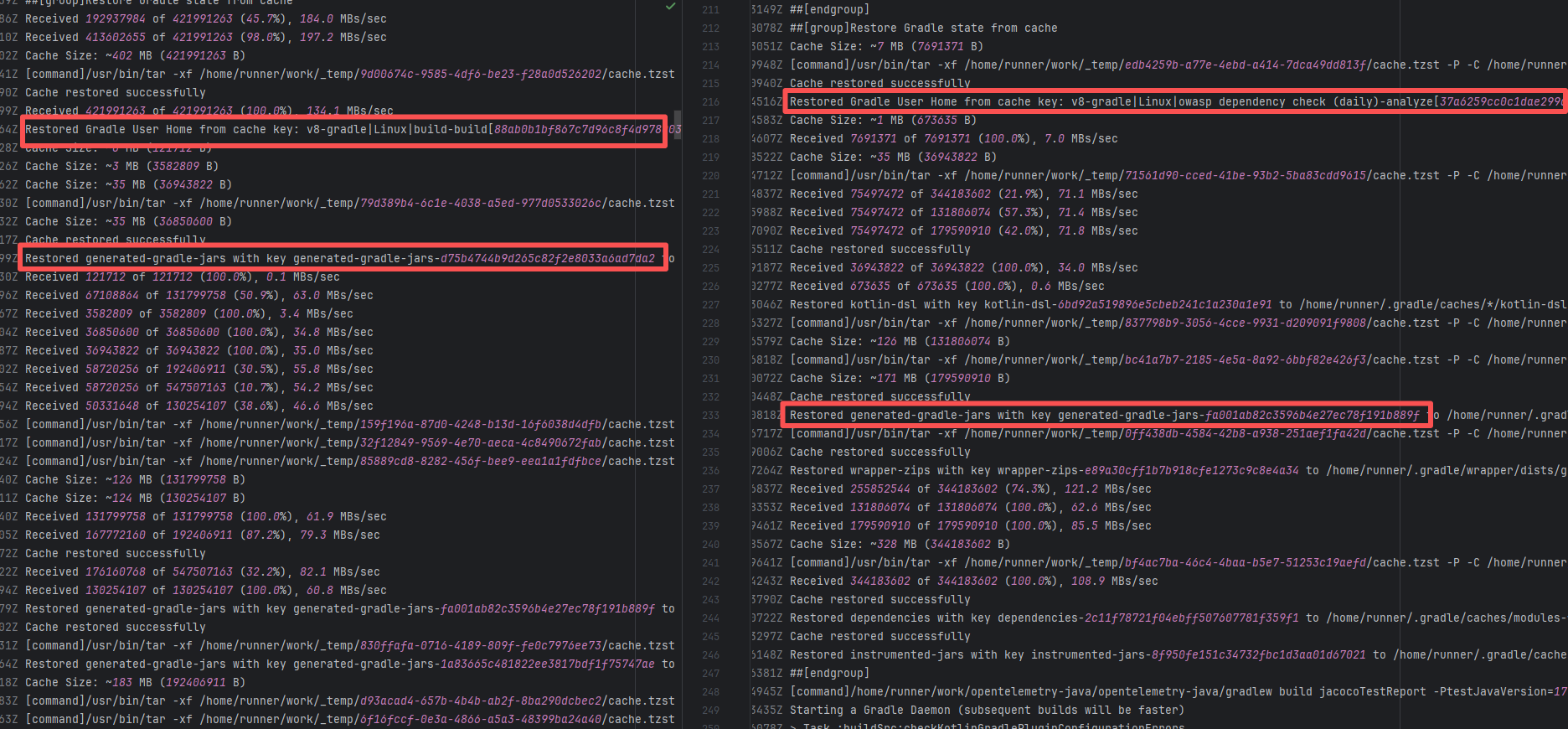

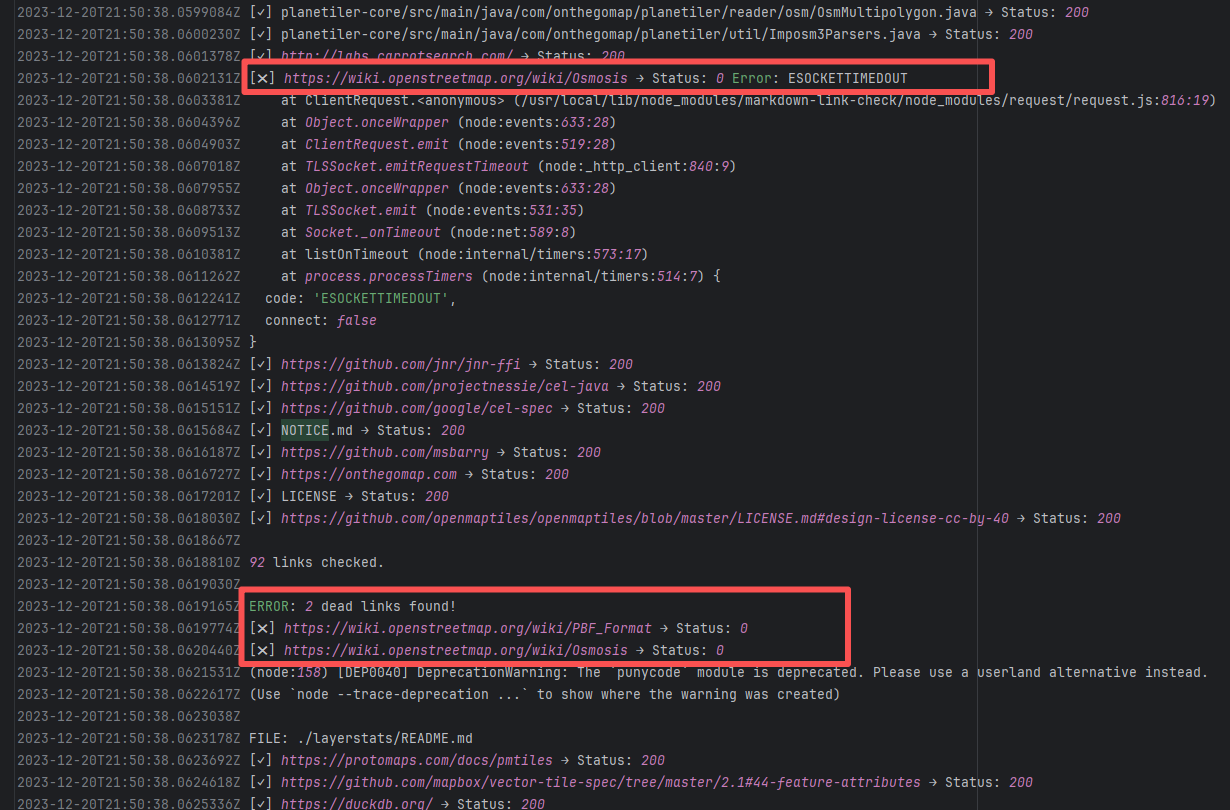

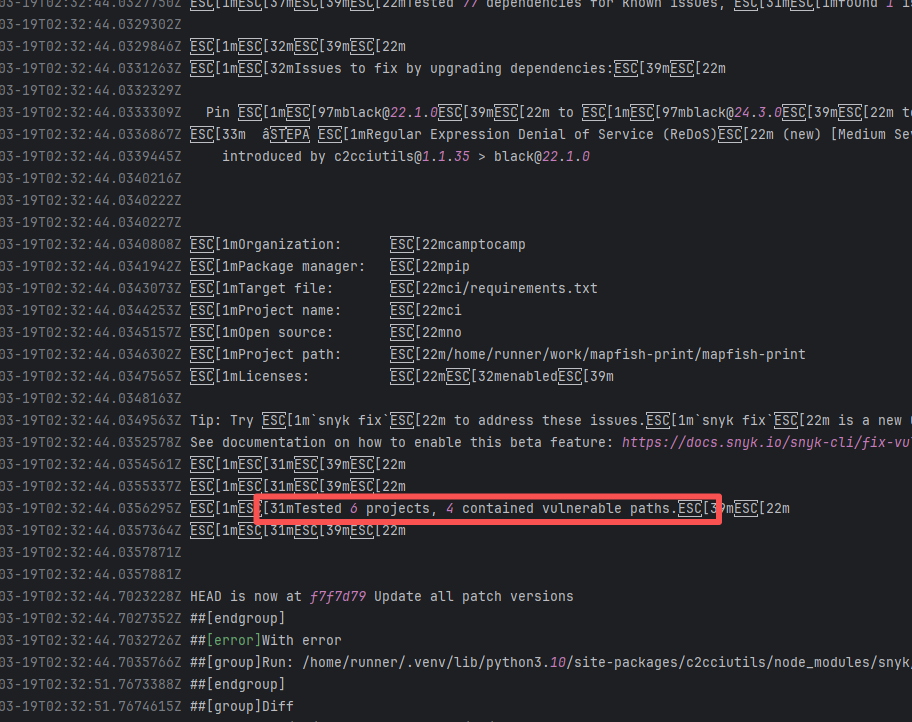

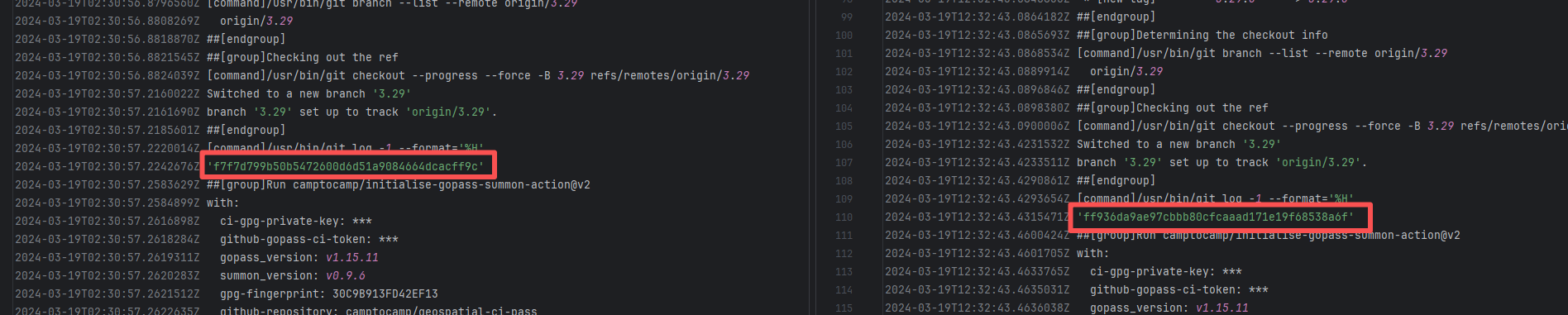

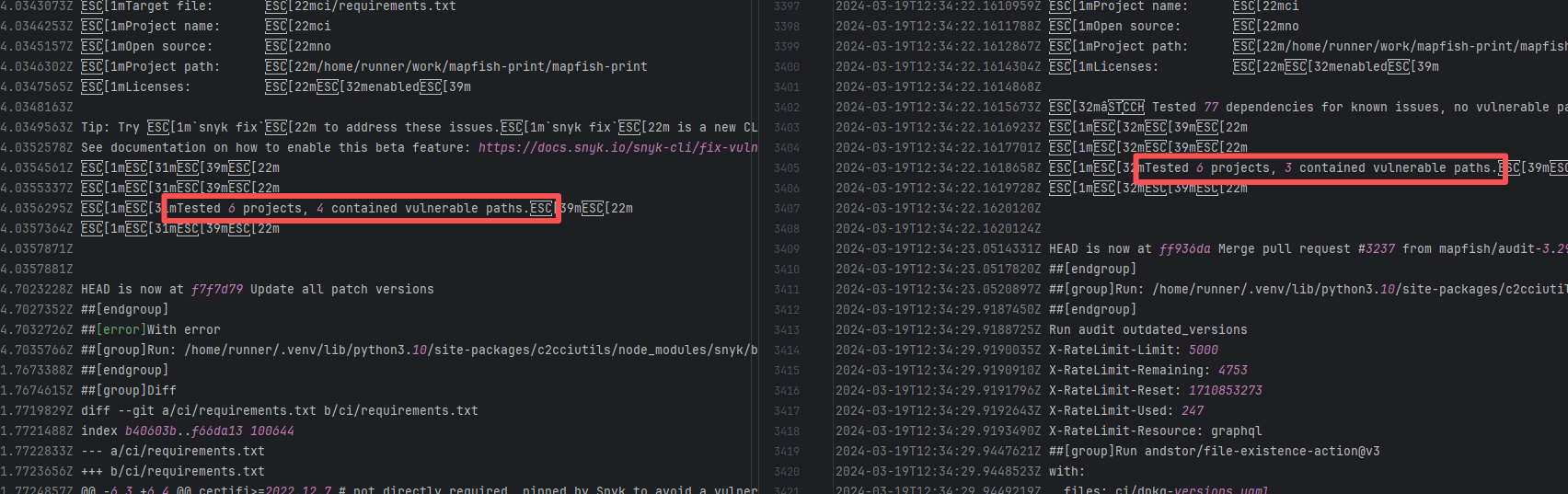

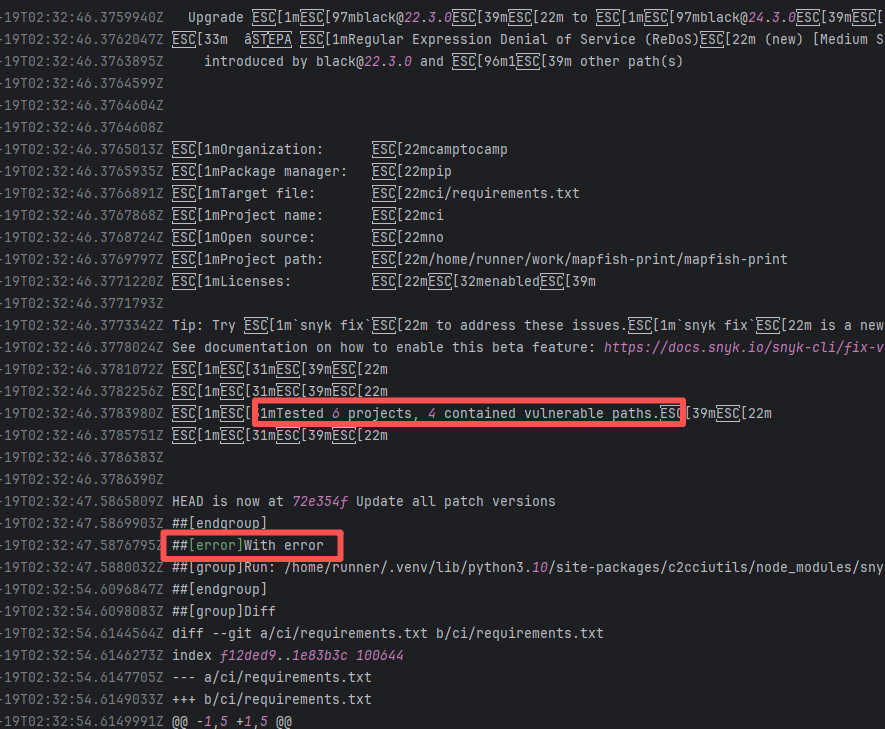

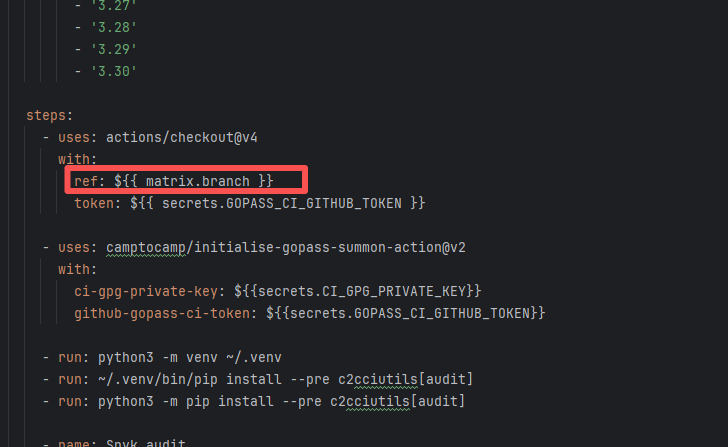

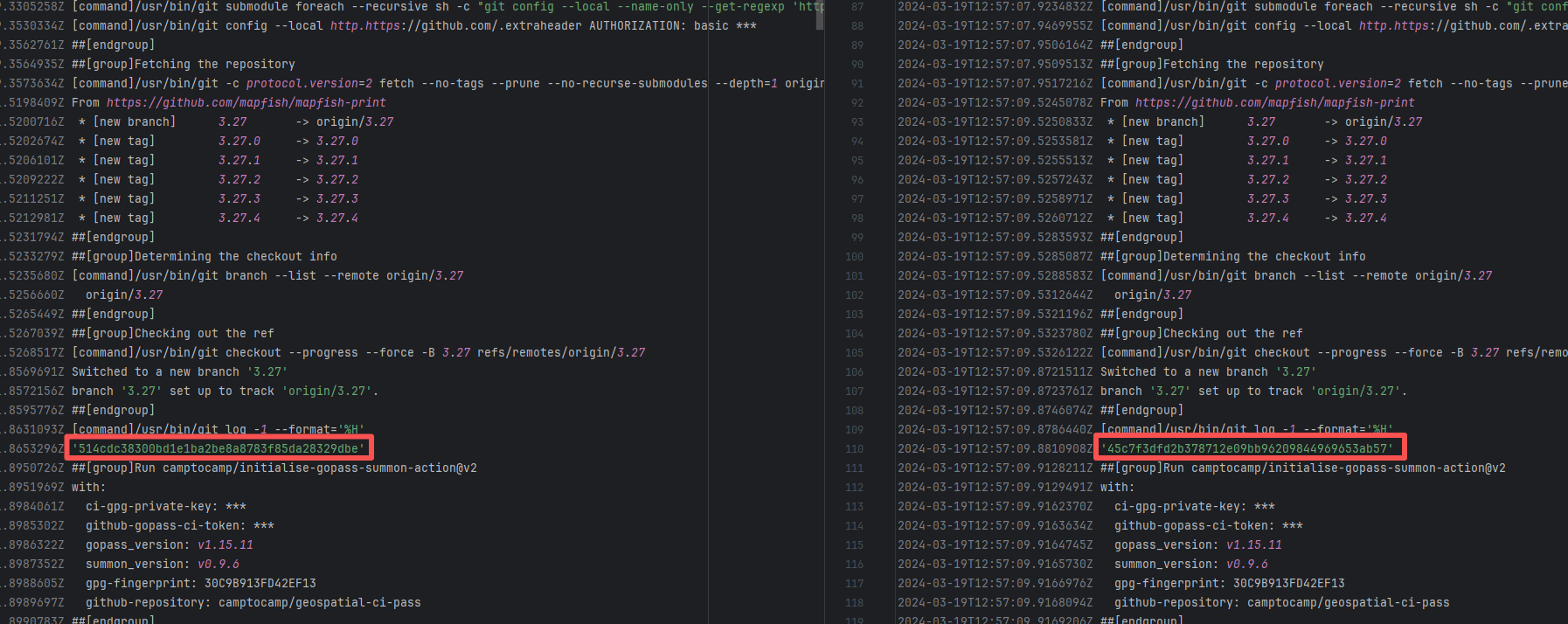

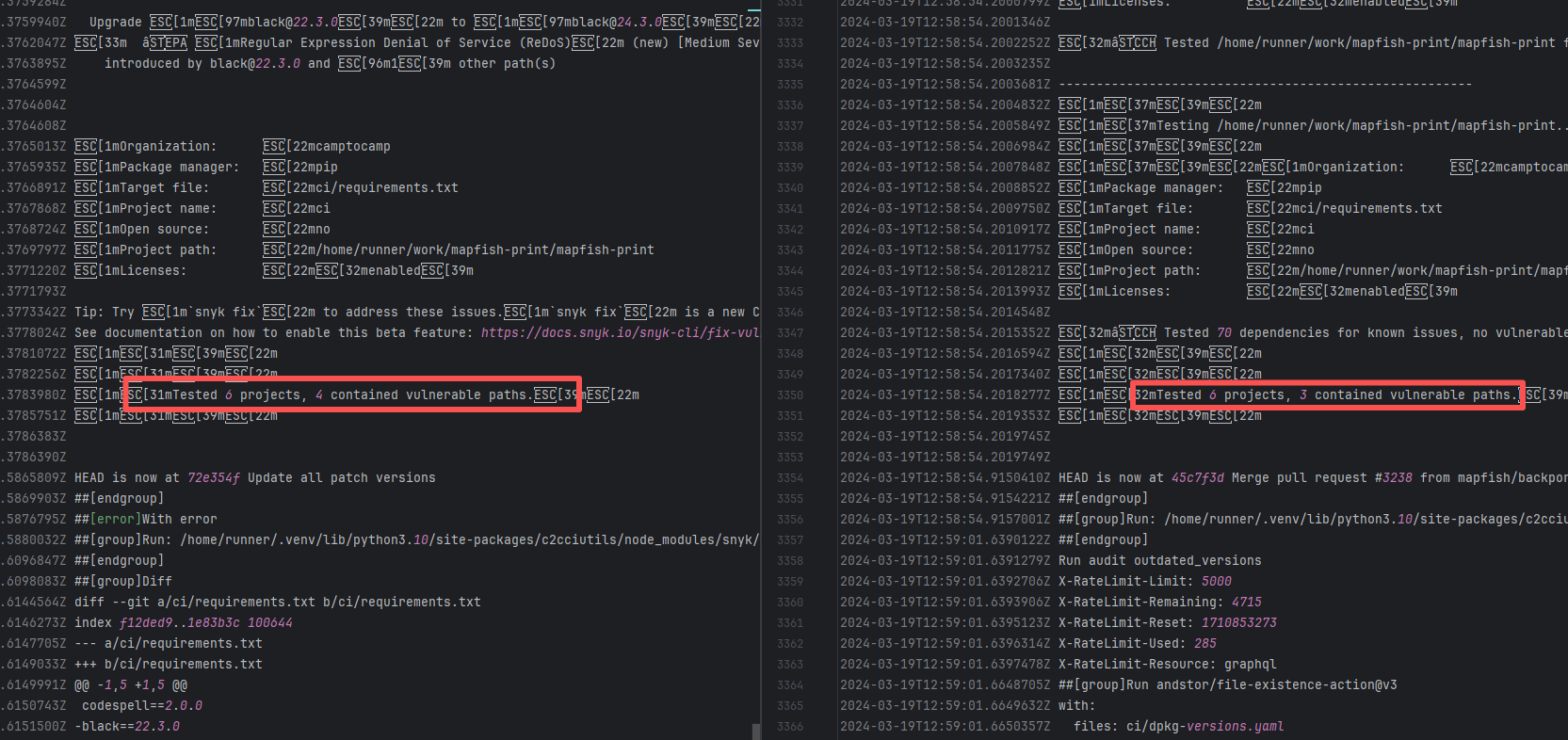

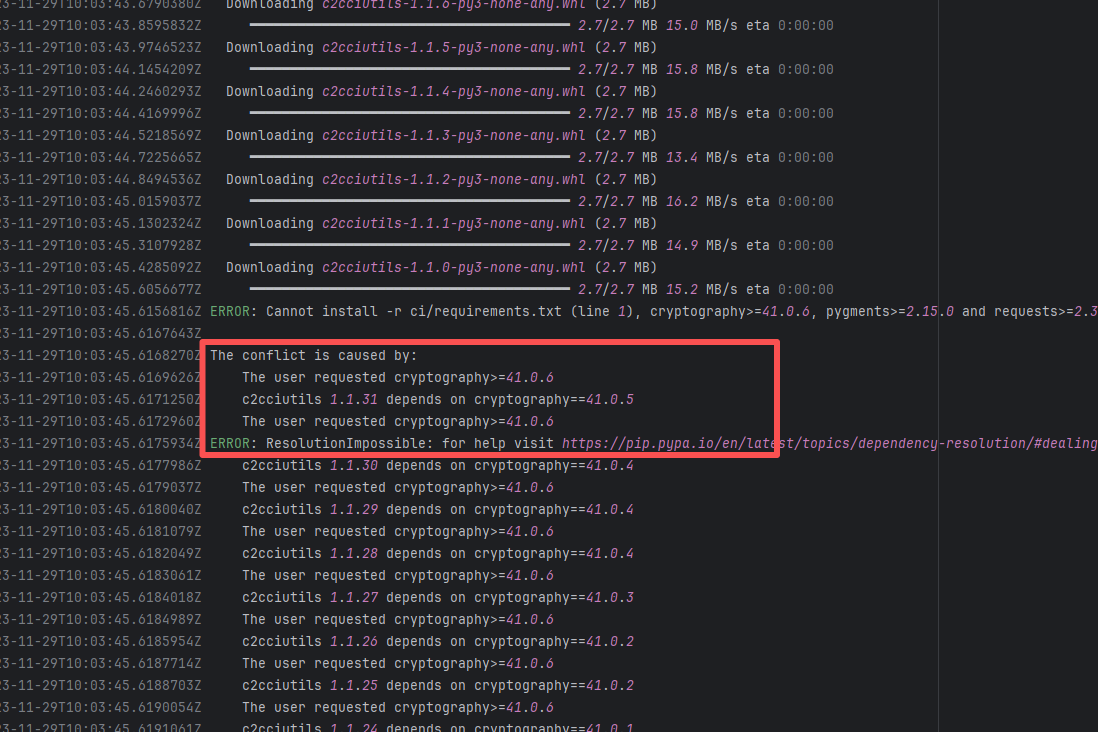

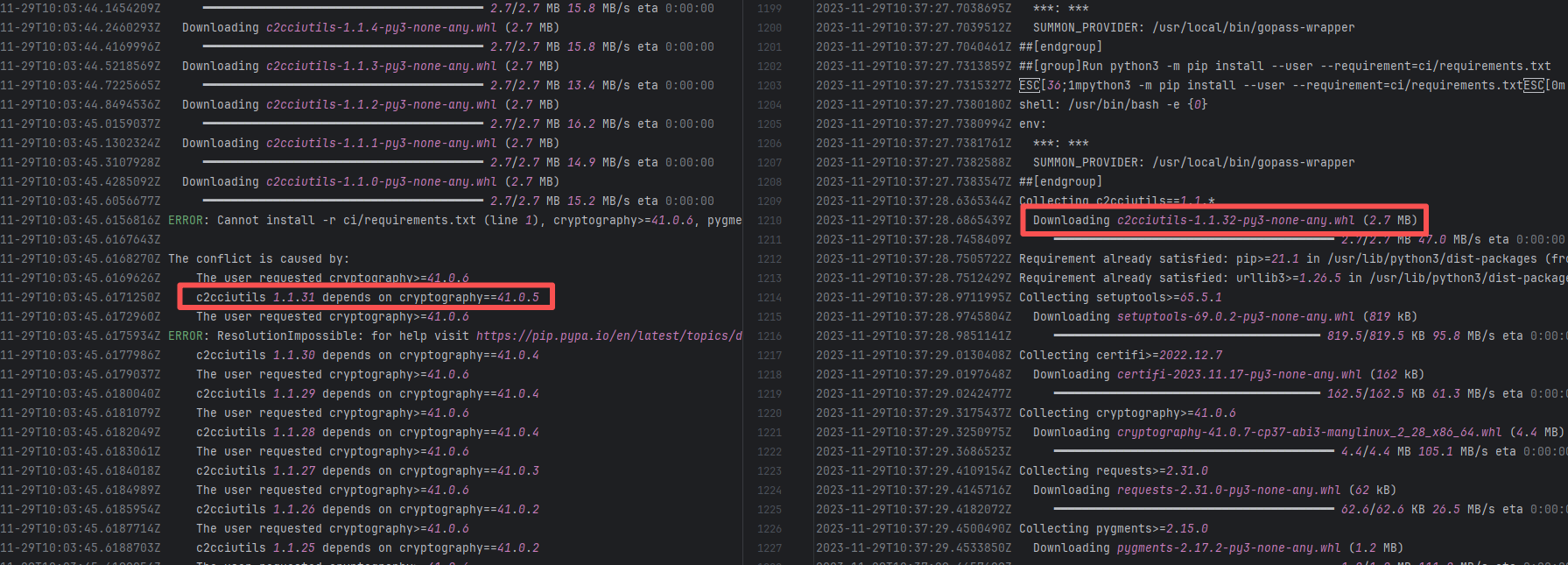

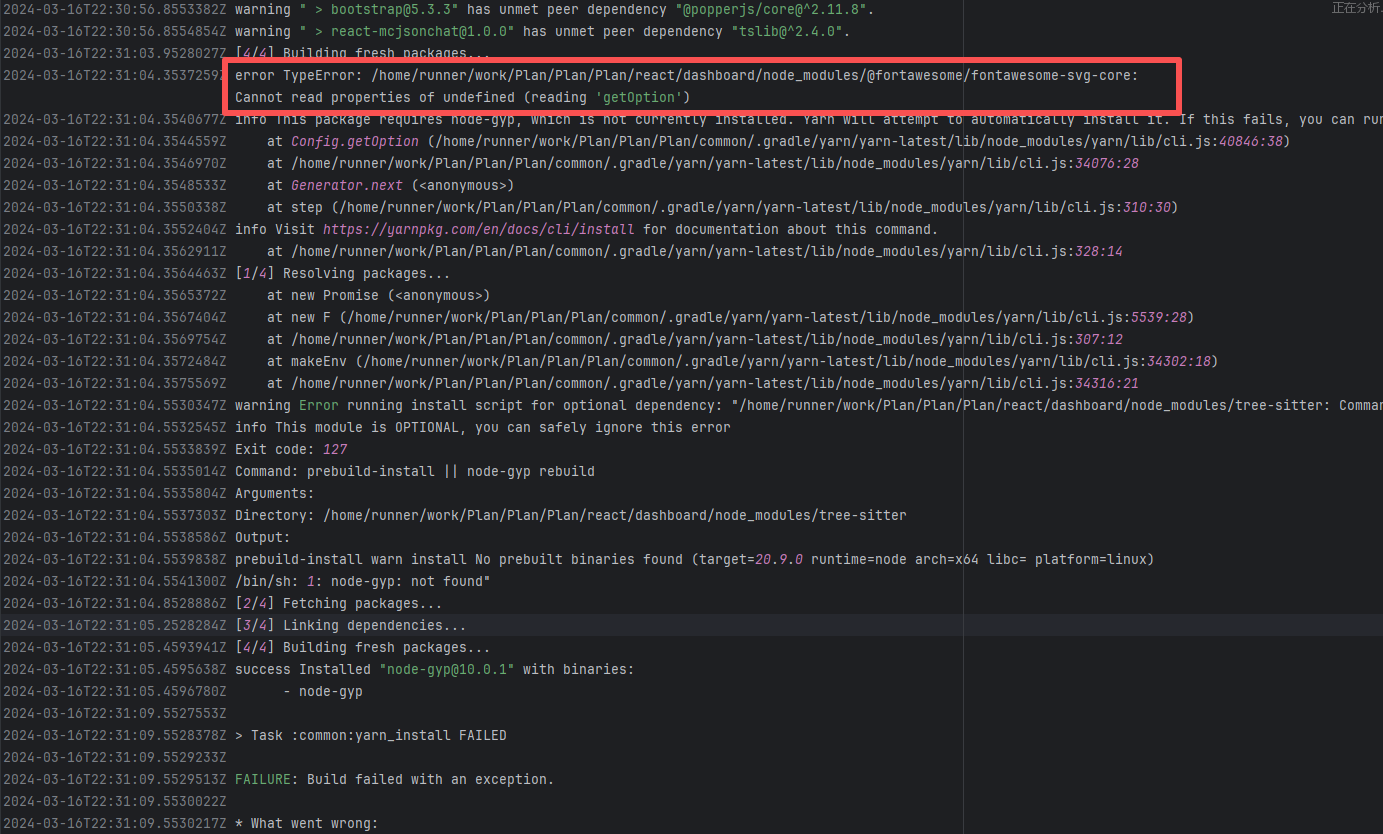

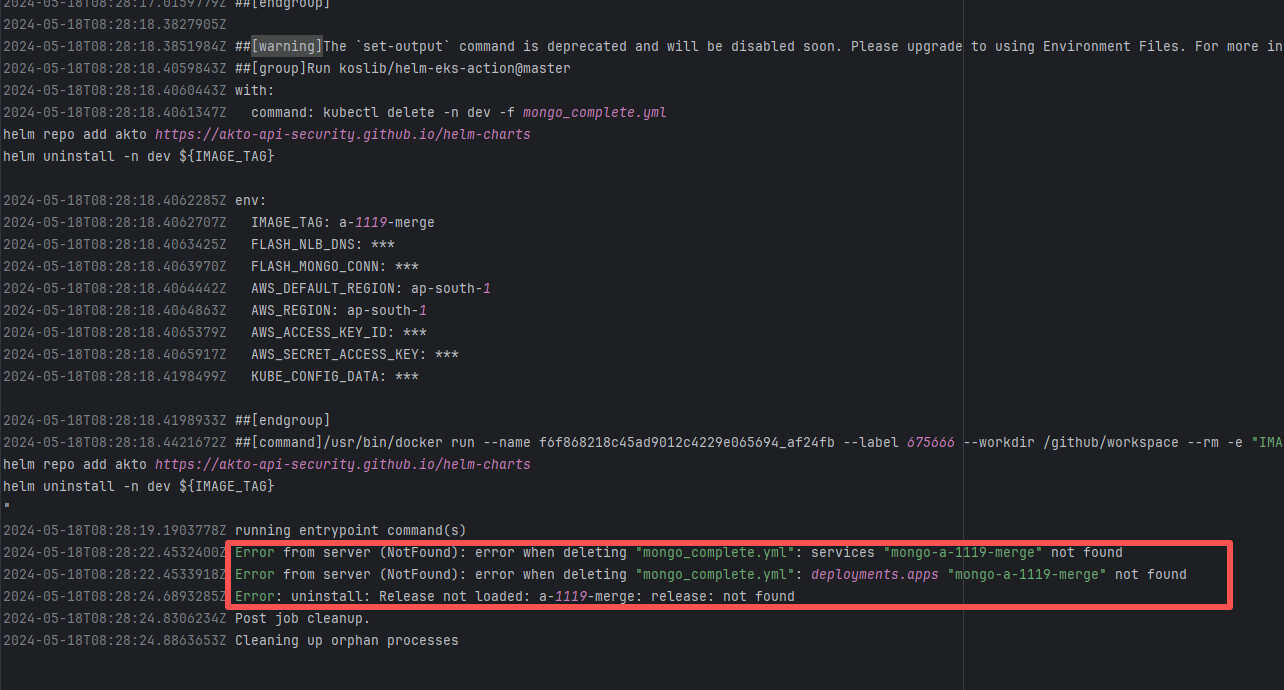

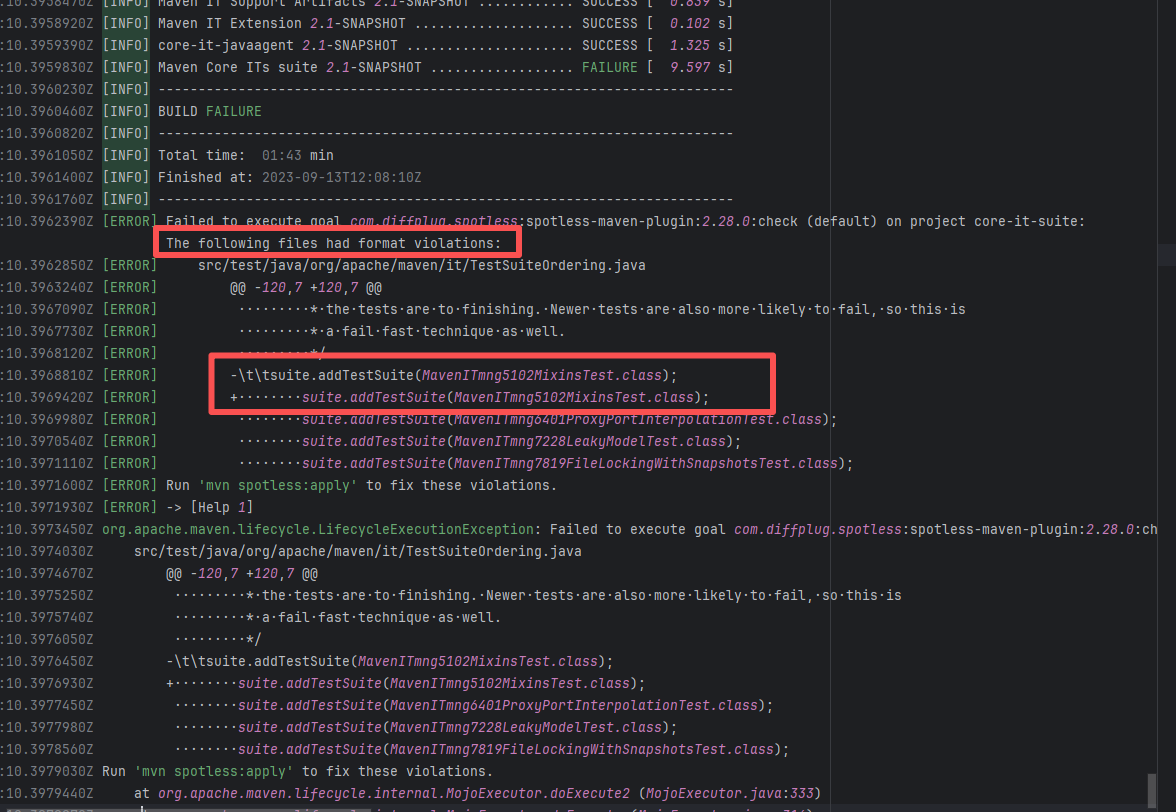

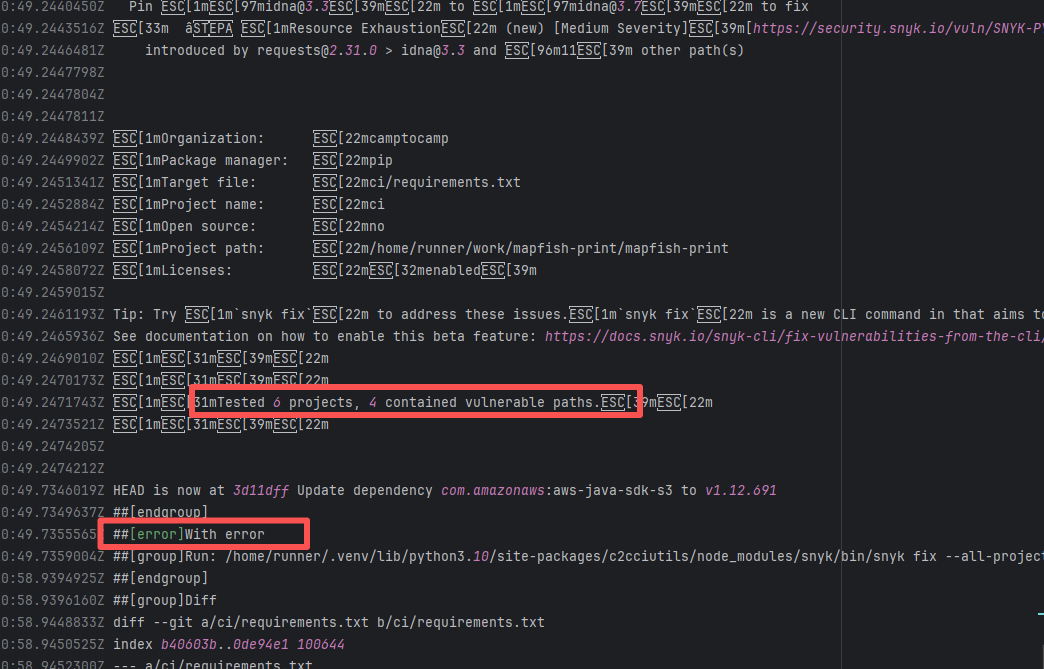

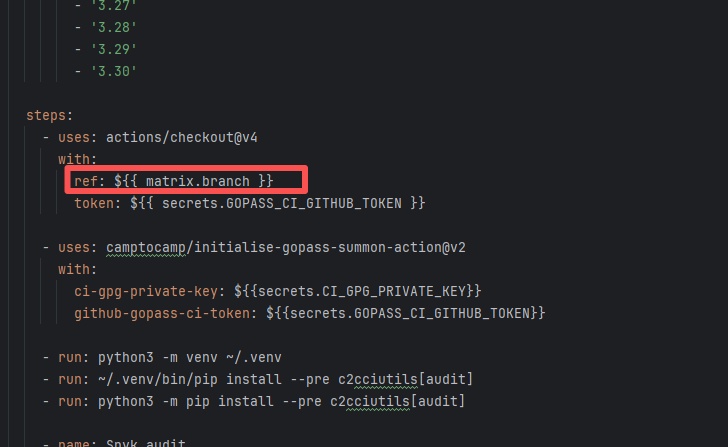

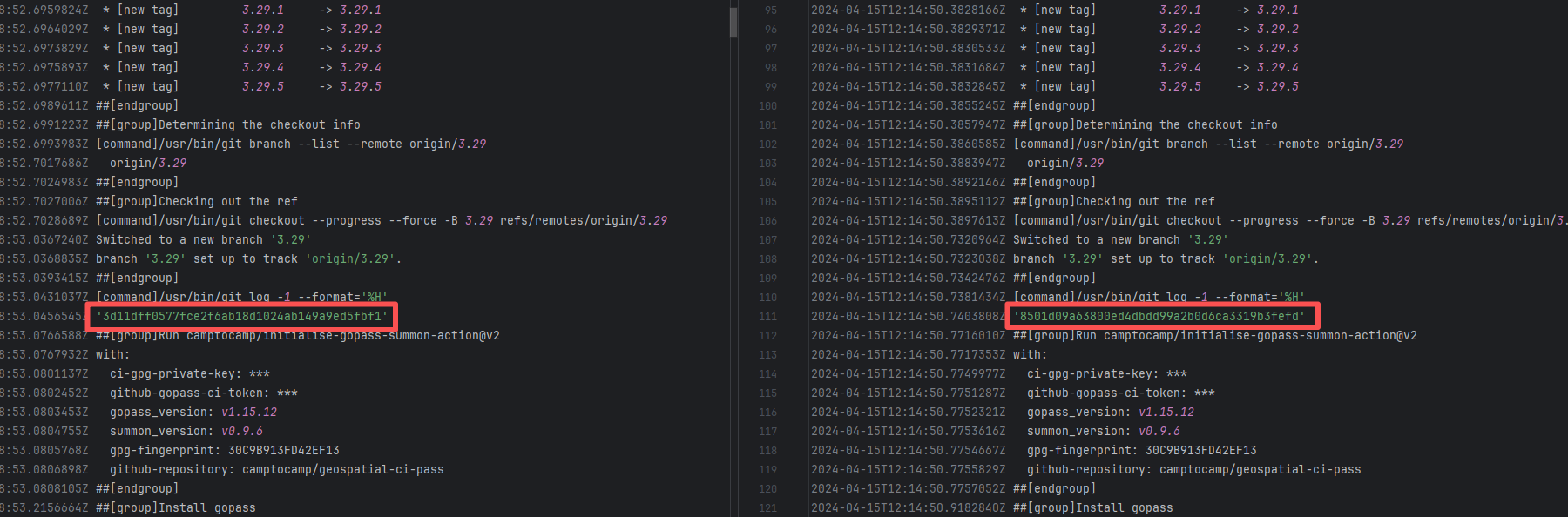

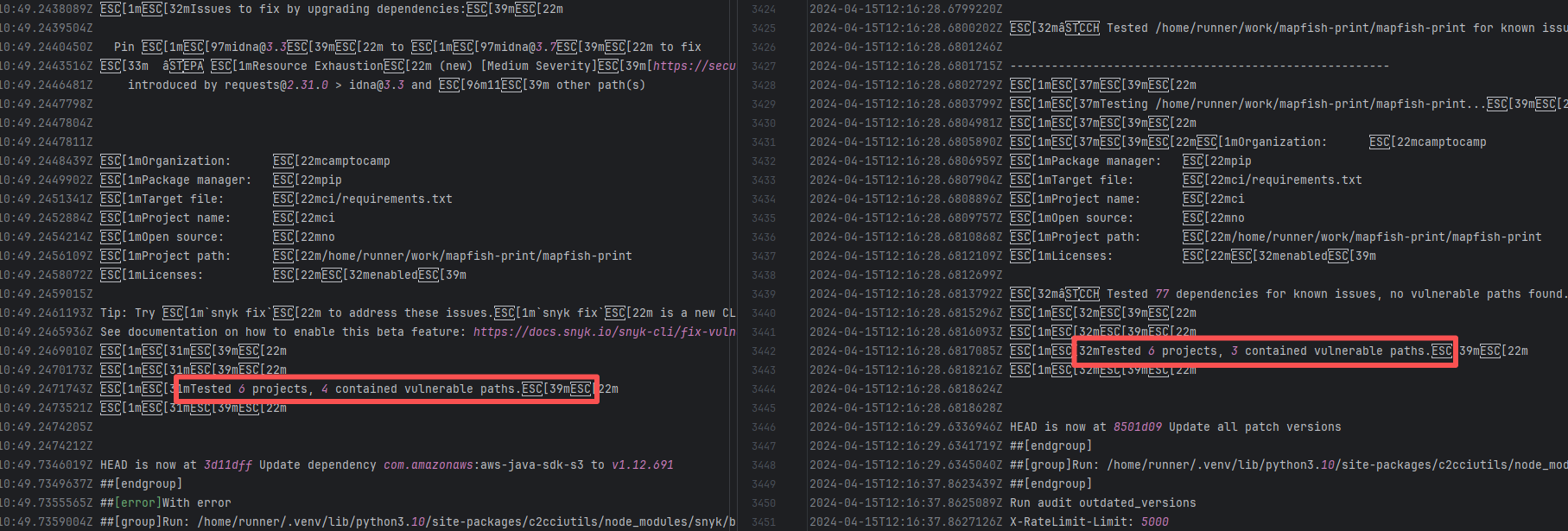

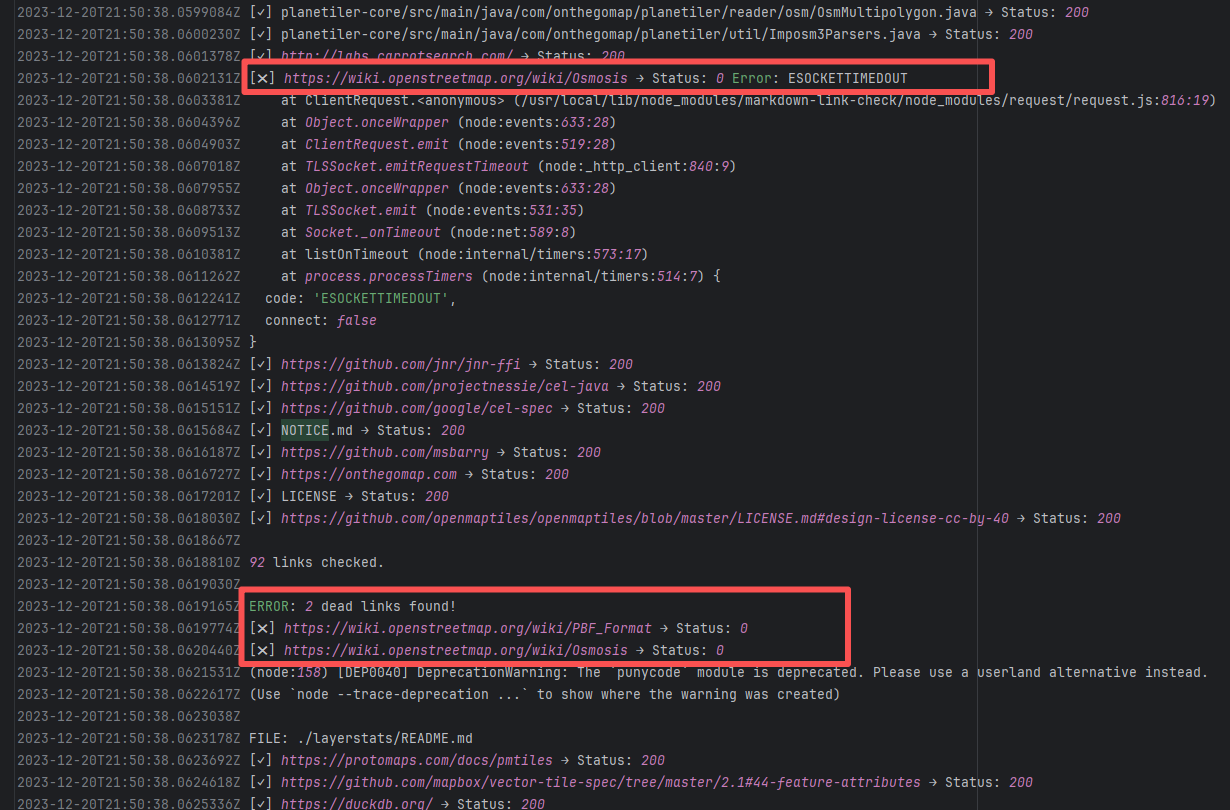

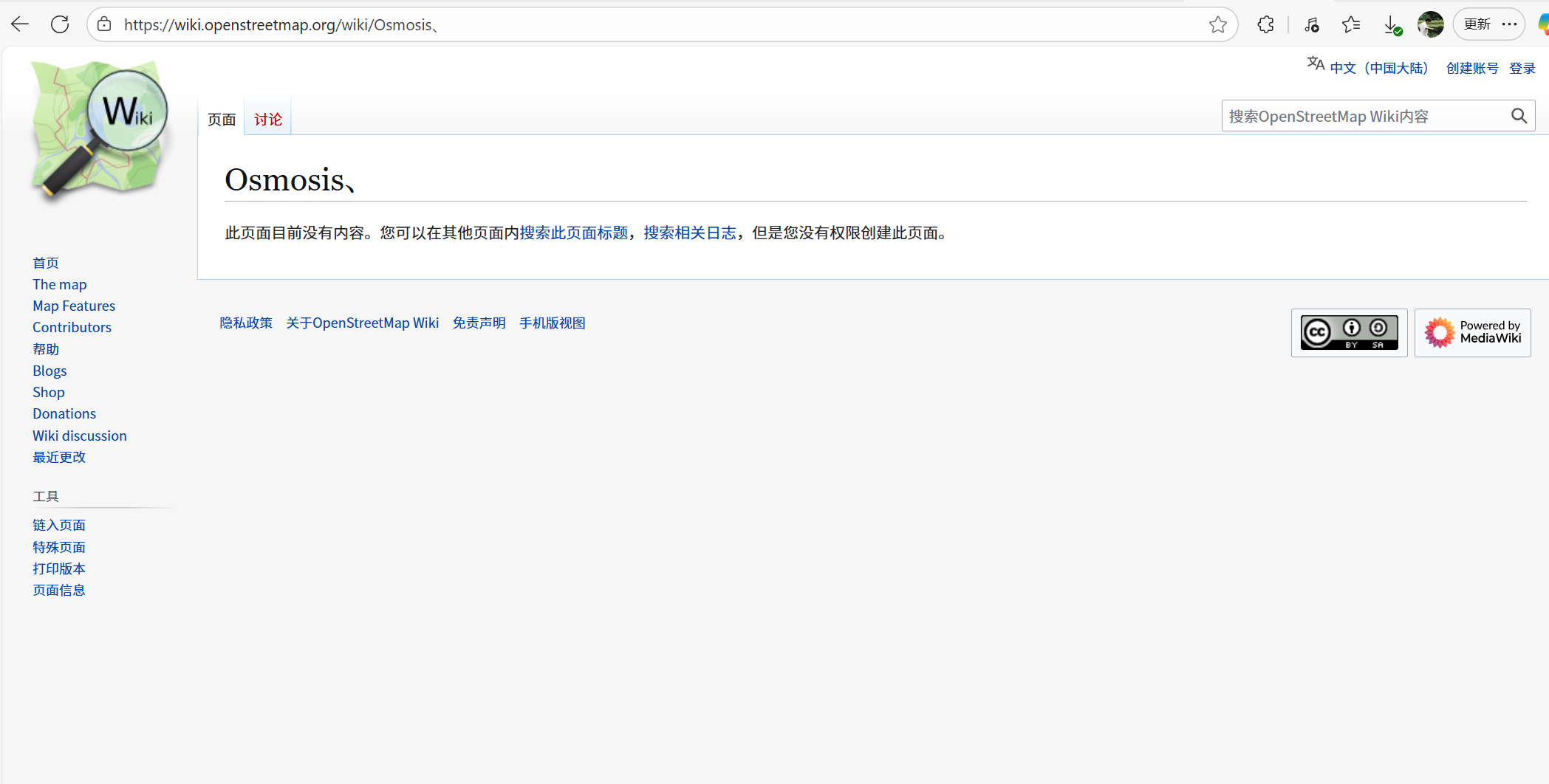

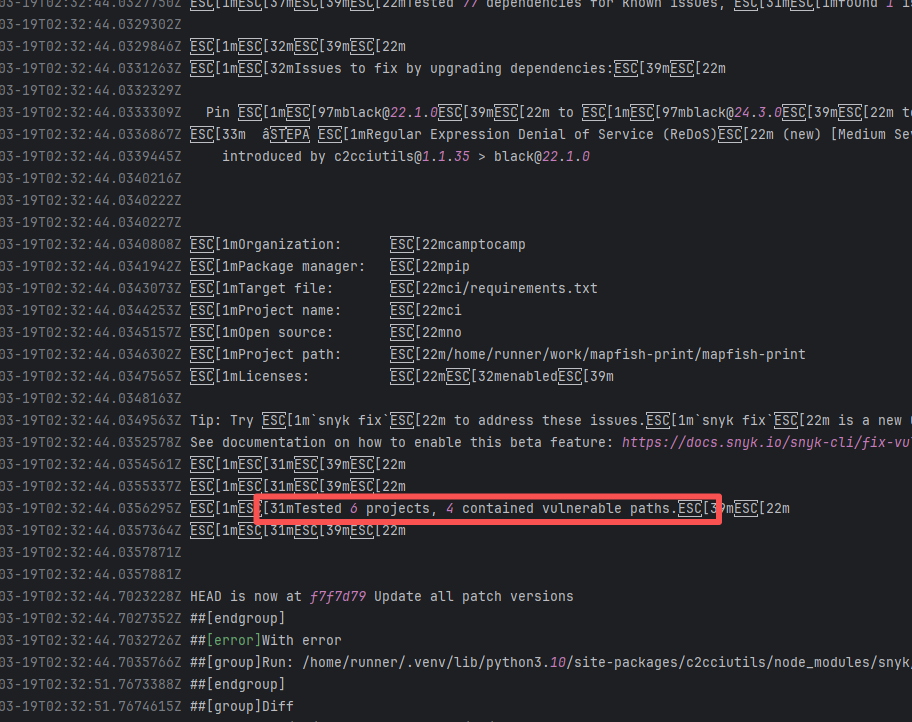

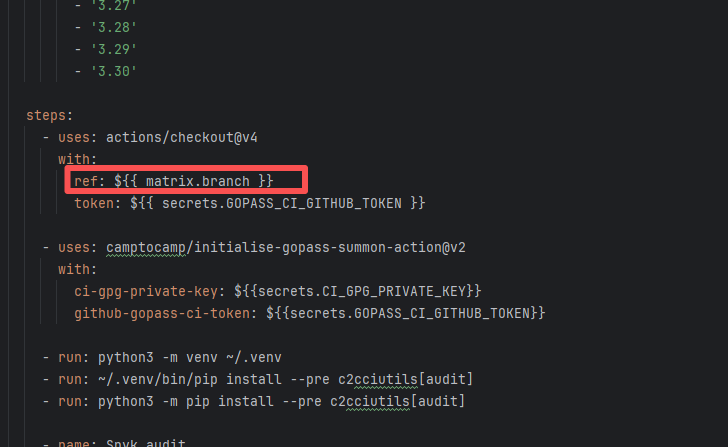

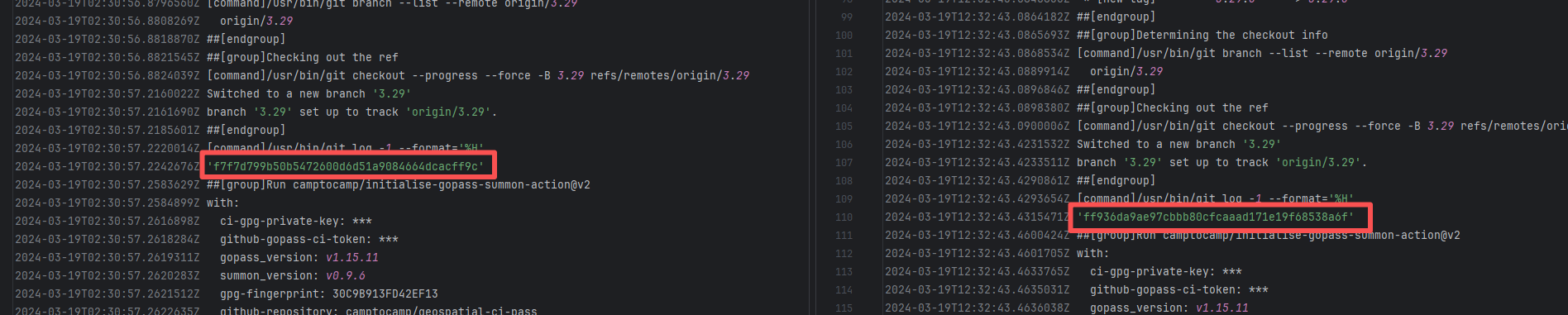

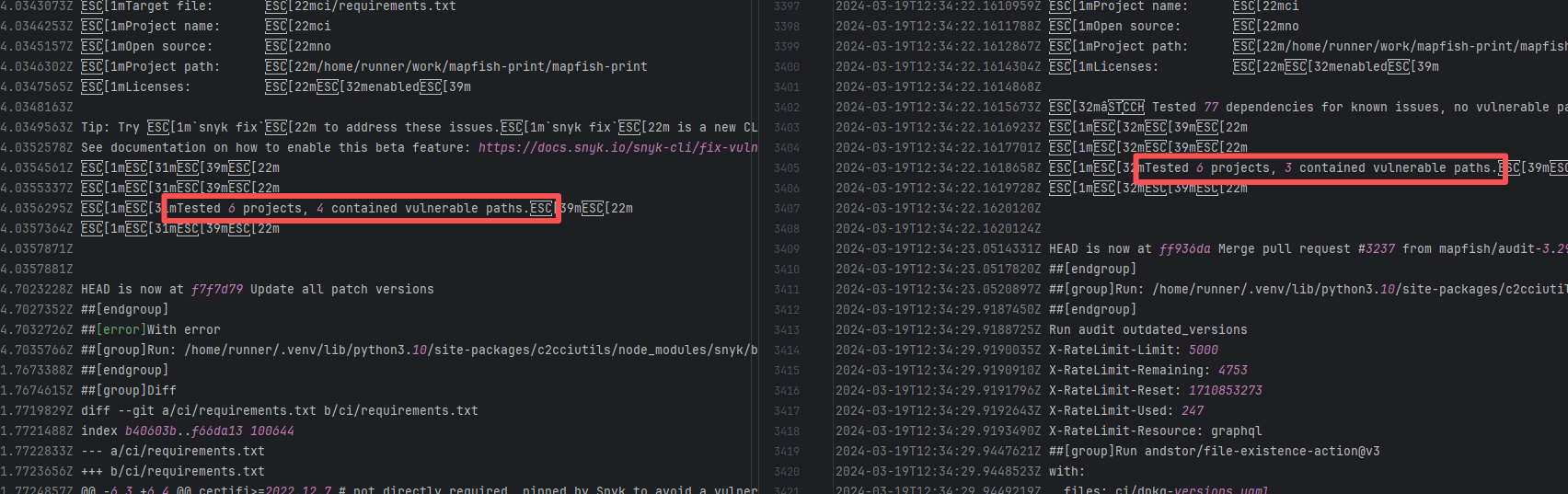

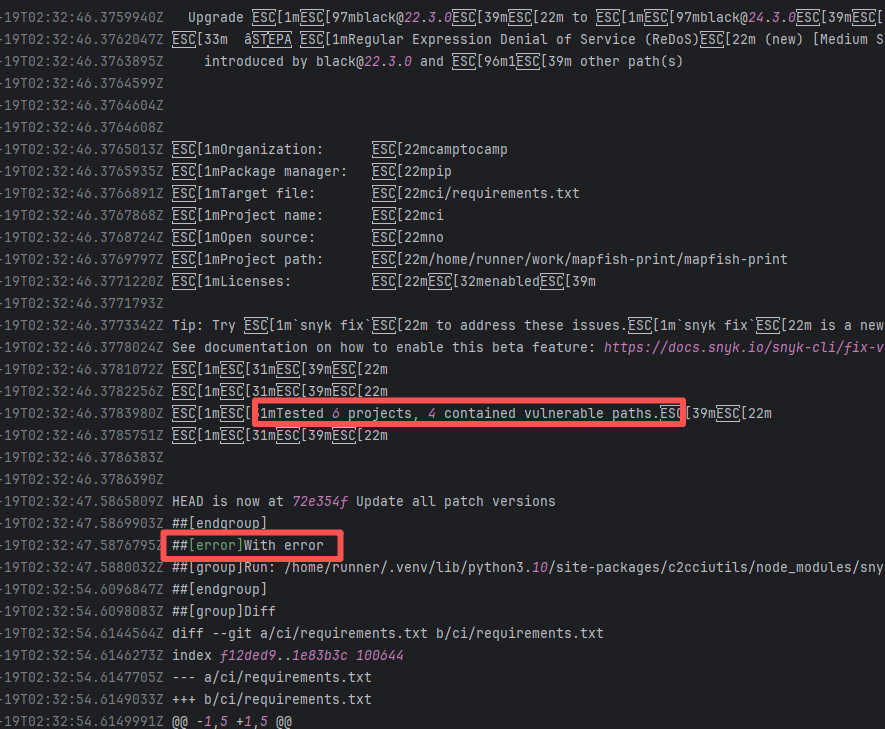

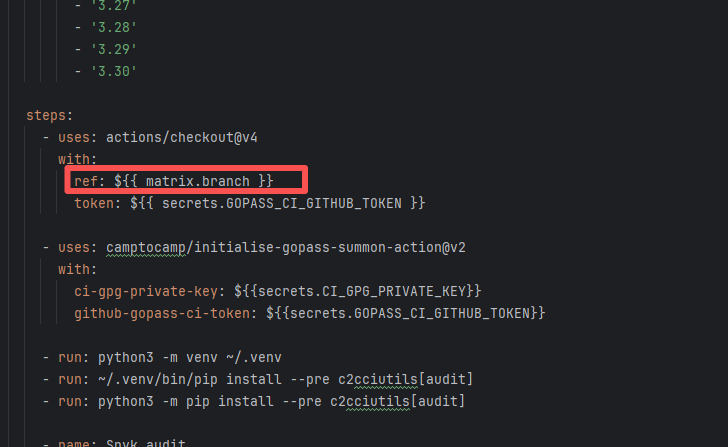

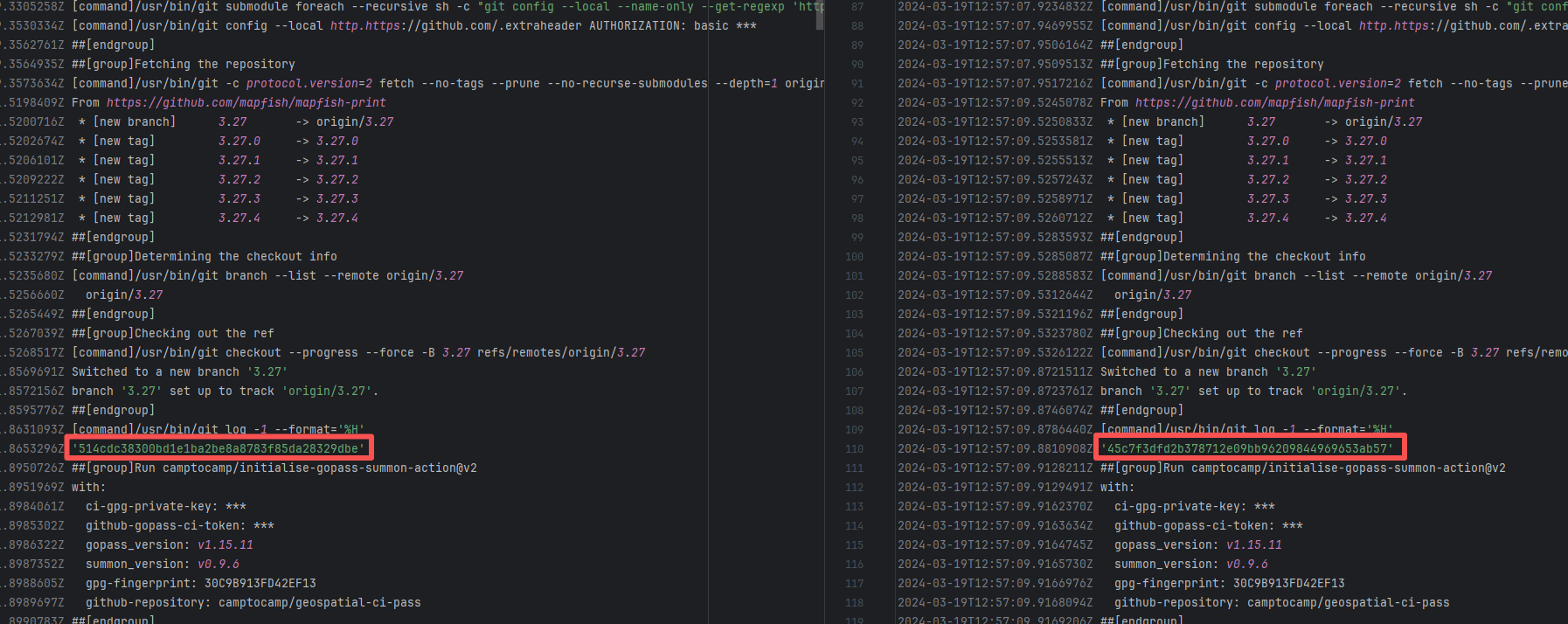

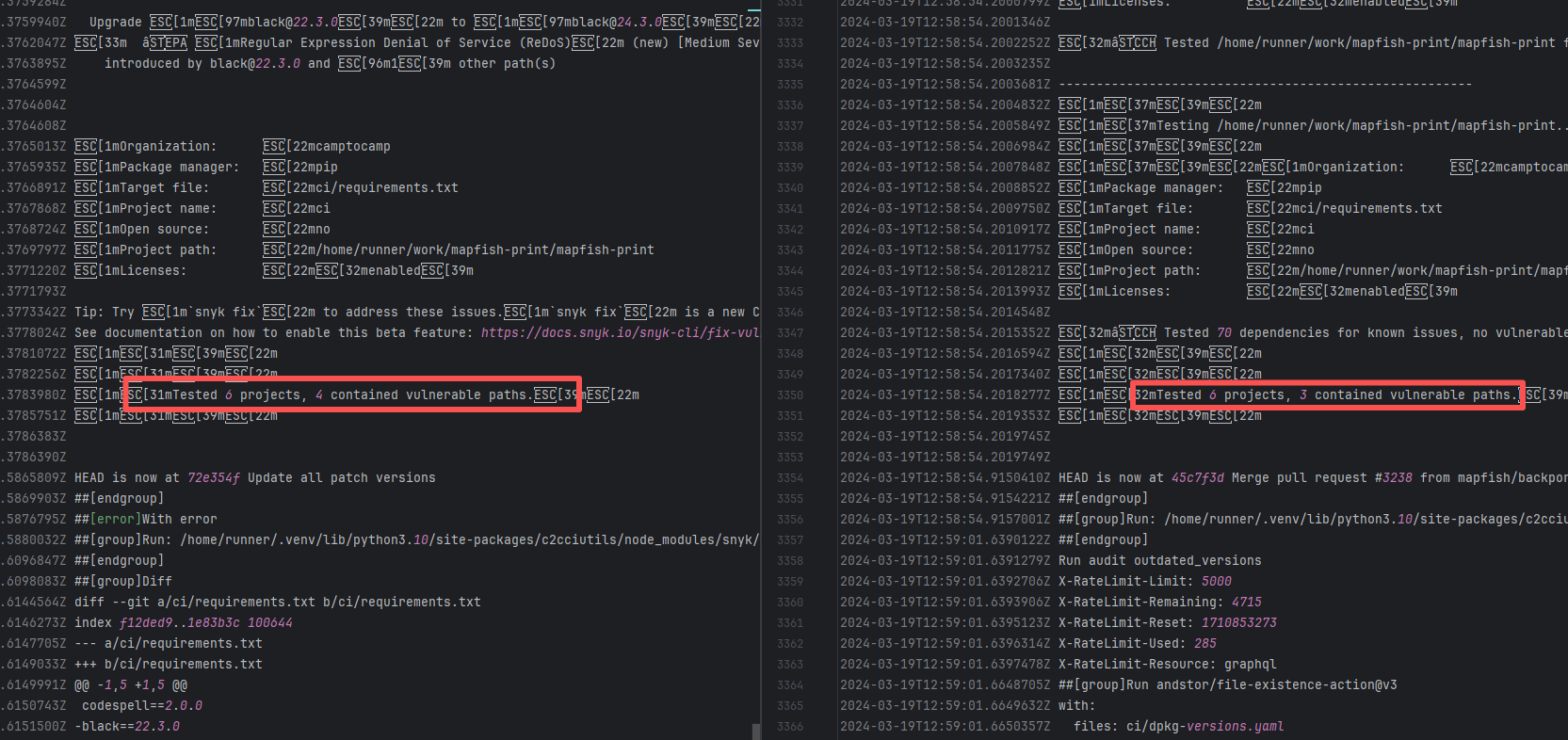

| mapfish/mapfish-print |

19131451089 |

Missing Dependency |

Error: The error log indicates conflicts between the version of the c2cciutils package and its dependencies, particularly concerning version incompatibilities with the cryptography package. The specific issue is: the installation of cryptography>=41.0.6 is requested in requirements.txt, but different versions of the c2cciutils package (such as 1.1.31, 1.1.30, 1.1.29, etc.) require the cryptography version to be 41.0.5 or lower, leading to version conflicts.

Root Cause: Checking the upload log of https://pypi.org/project, when the failed build occurred, the latest available version of c2cciutils was 1.1.31. However, after the failure, version 1.1.32 was uploaded. Version 1.1.32 is based on cryptography version 41.0.6, which satisfies the requirements in the requirement file. Therefore, the rerun succeeded. |

- Upload the correct dependency files to the dependency repository

- Modify the dependency version to use a compatible combination of dependencies |

|

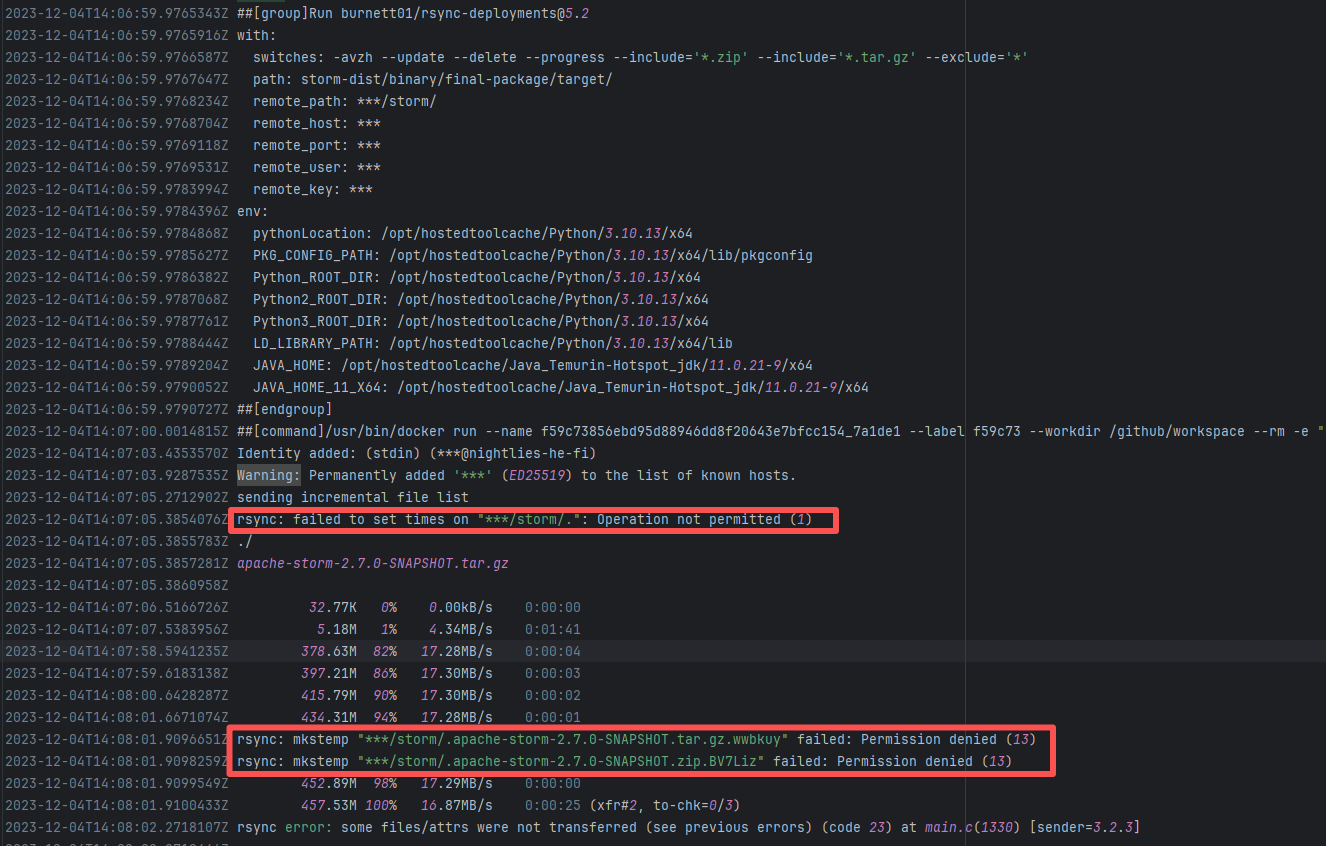

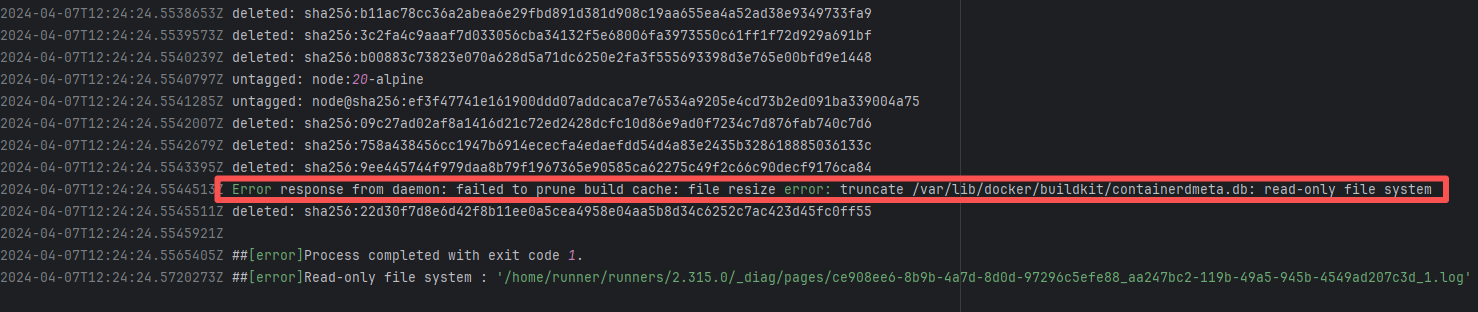

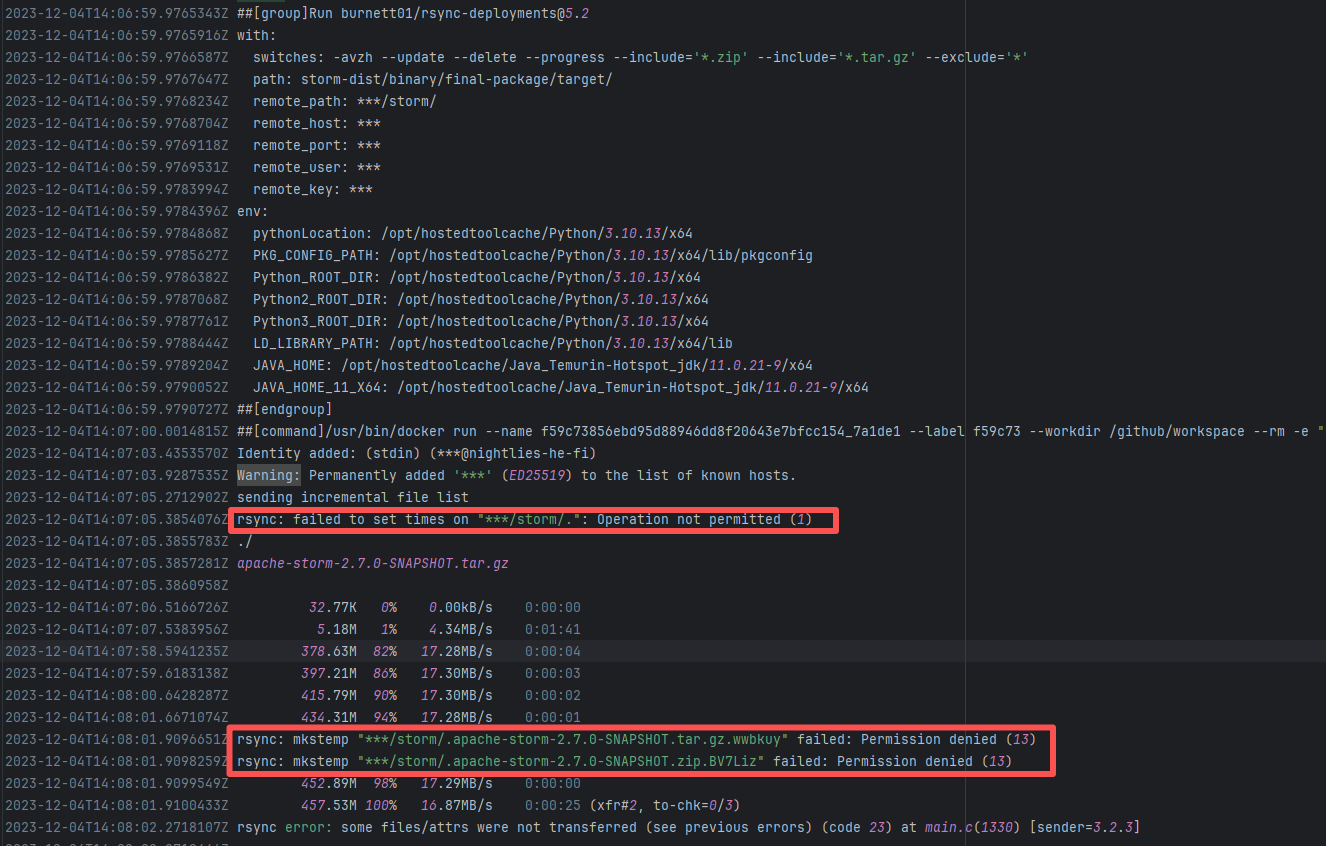

| External Environment Inconsistency |

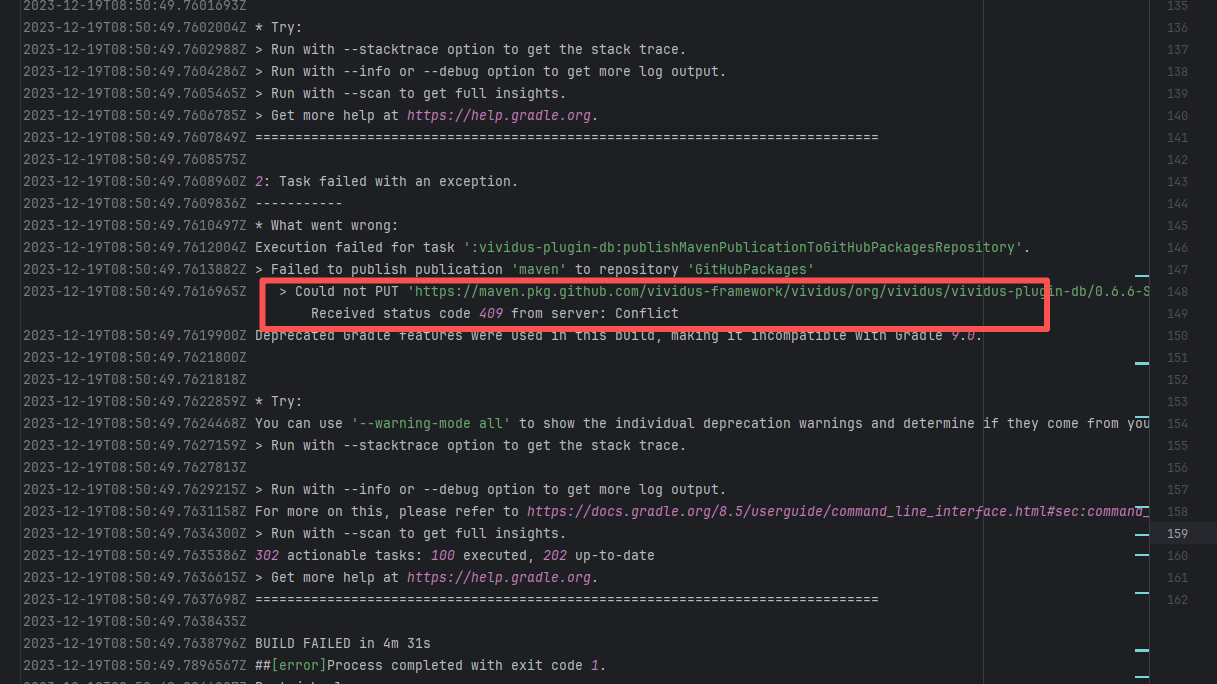

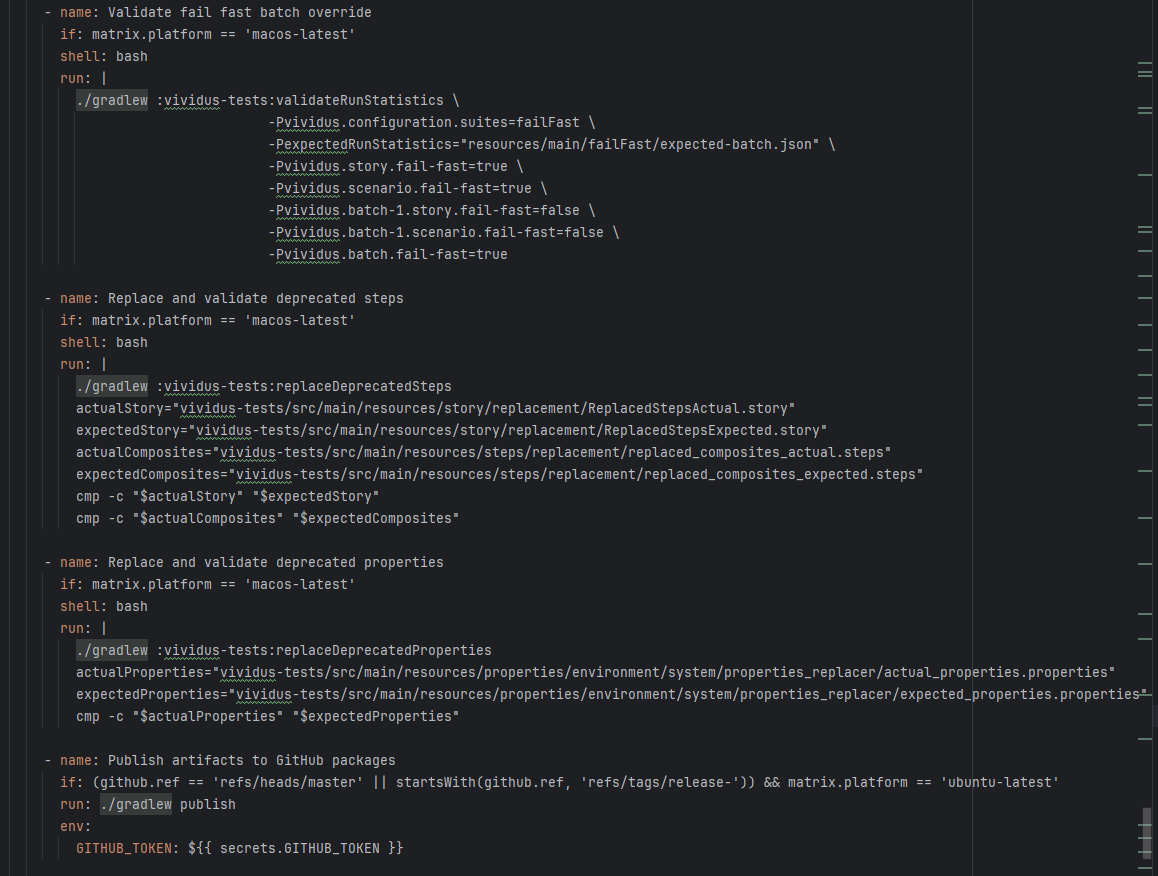

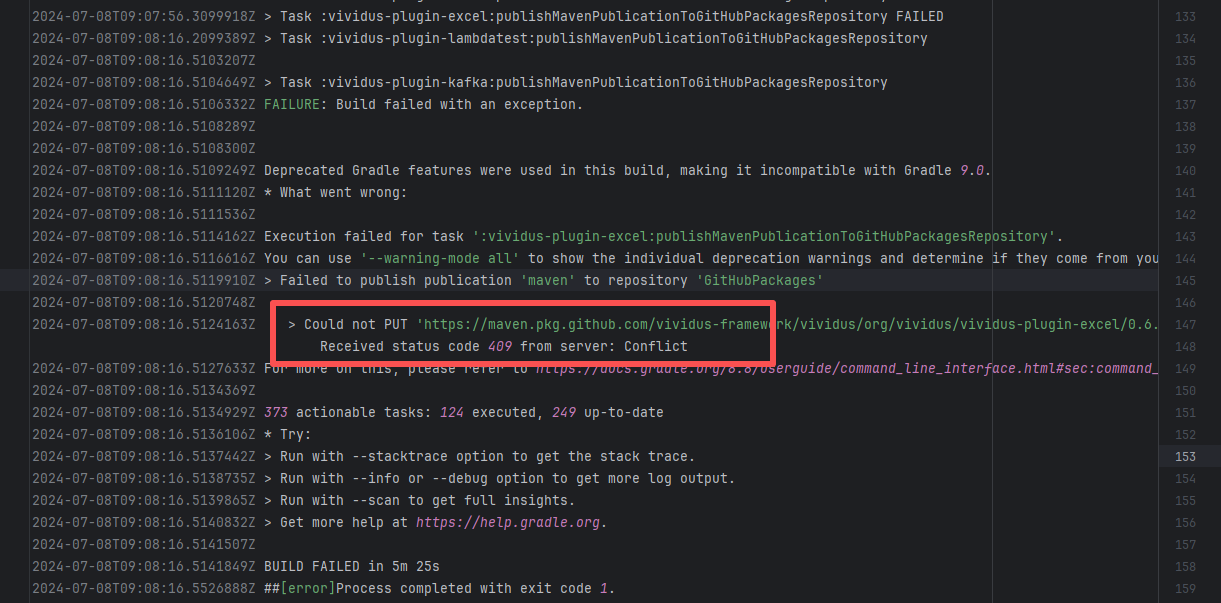

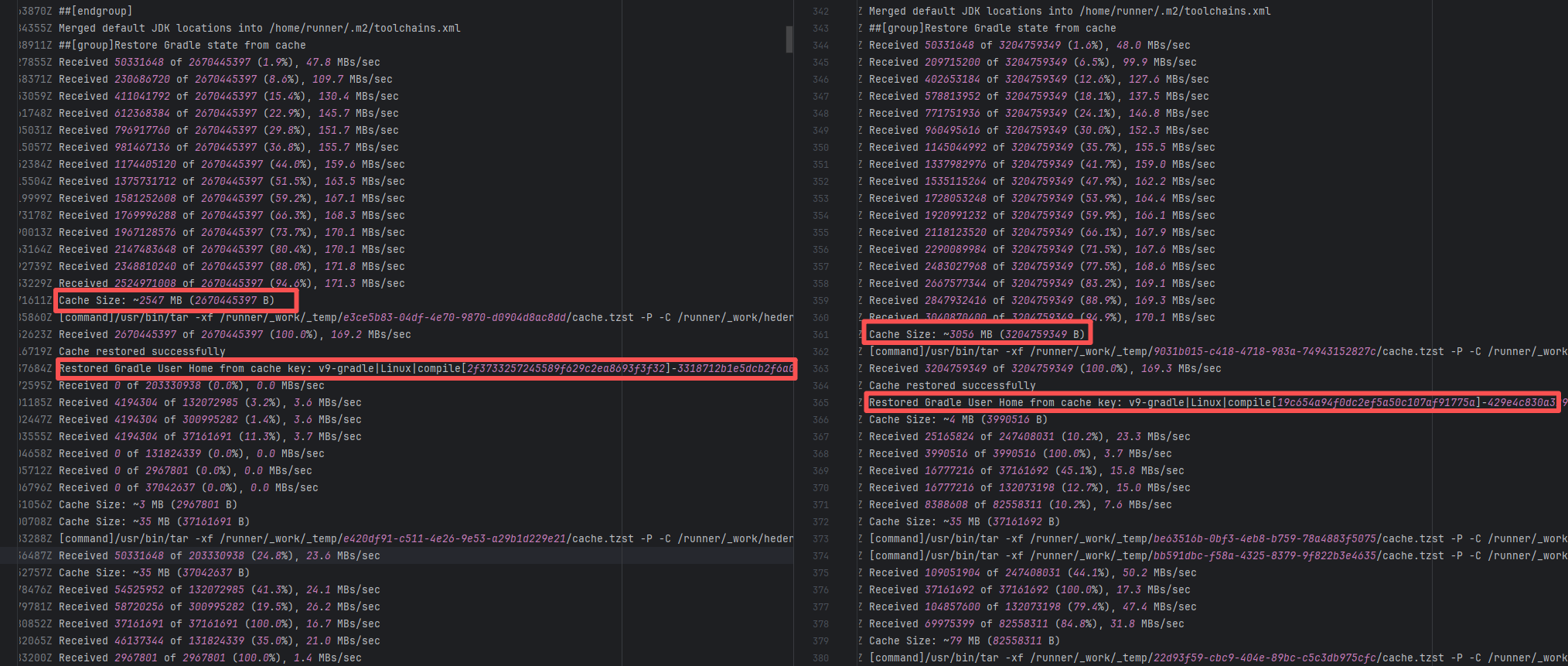

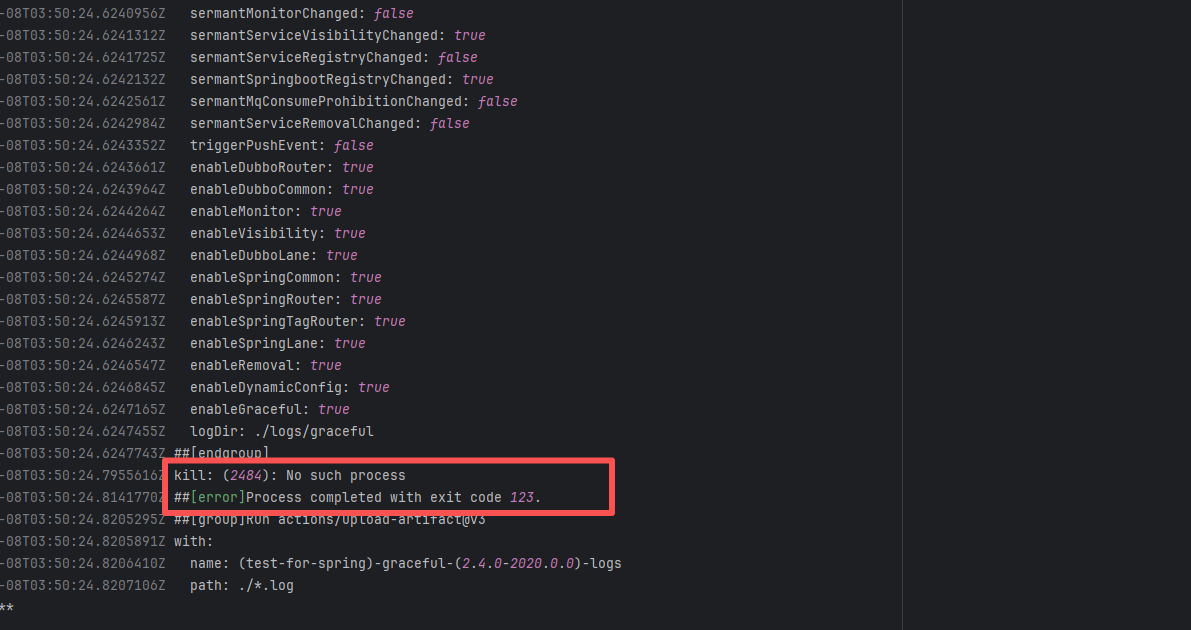

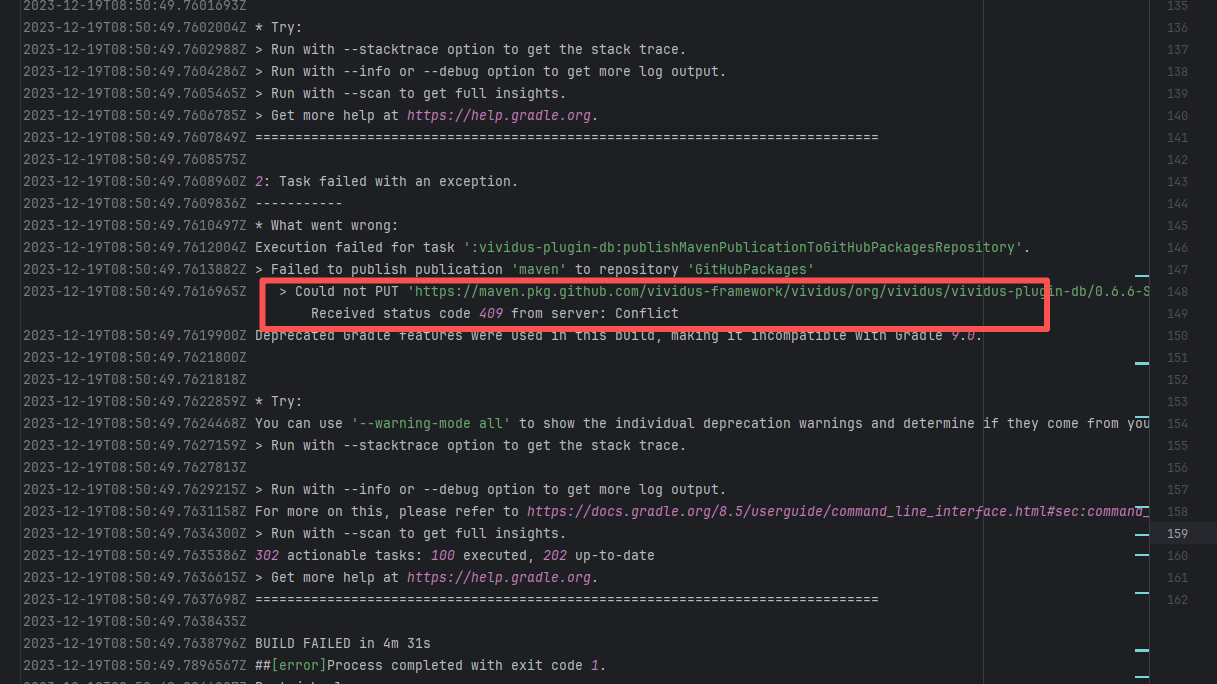

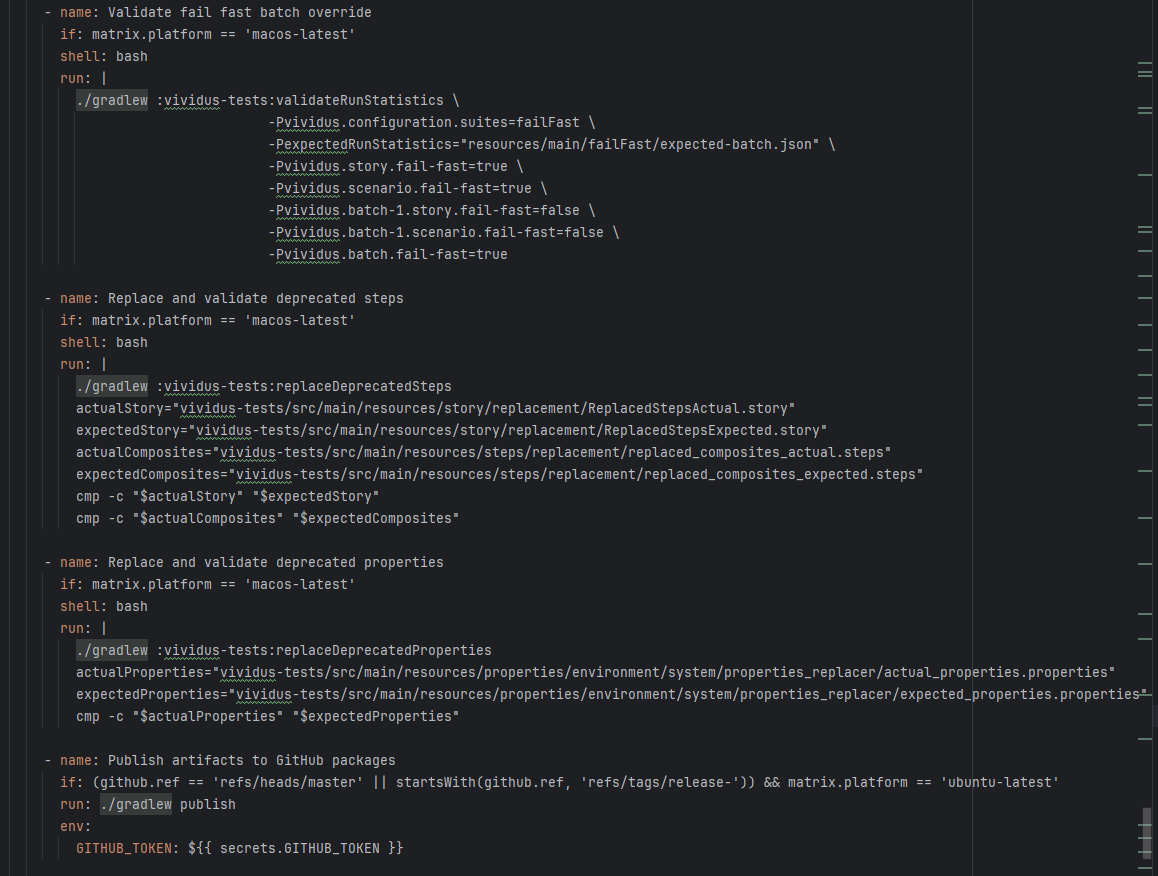

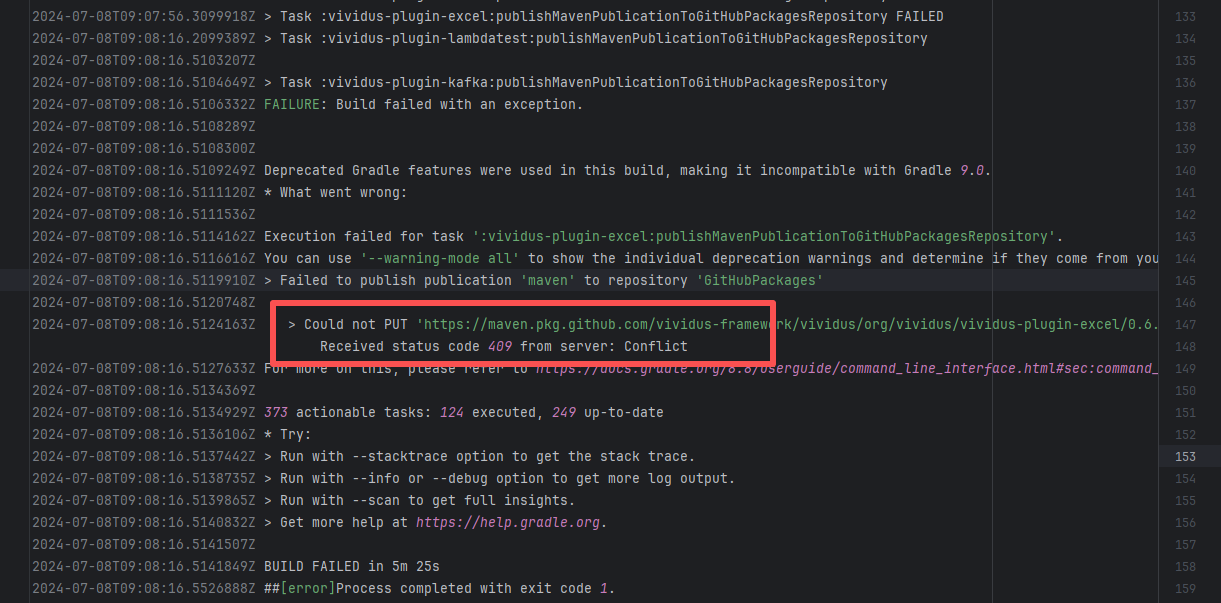

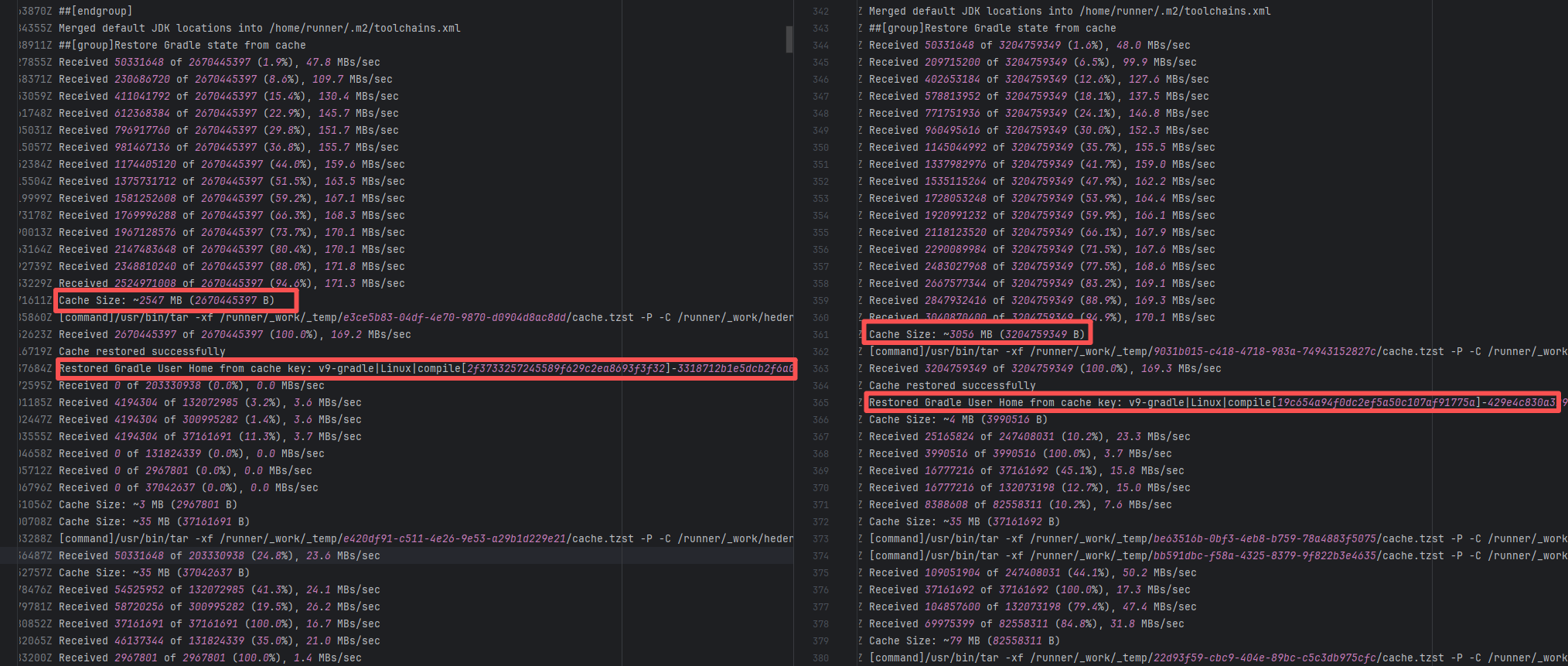

vividus-framework/vividus |

19775576809 |

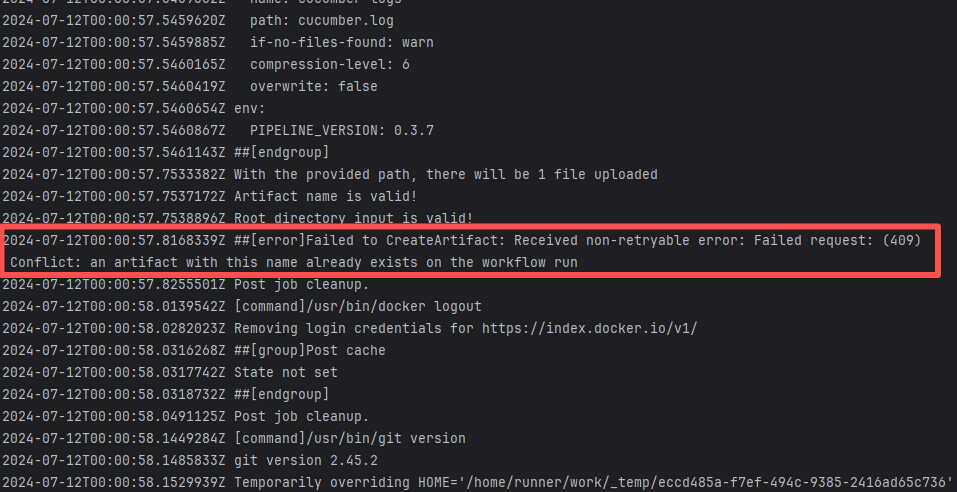

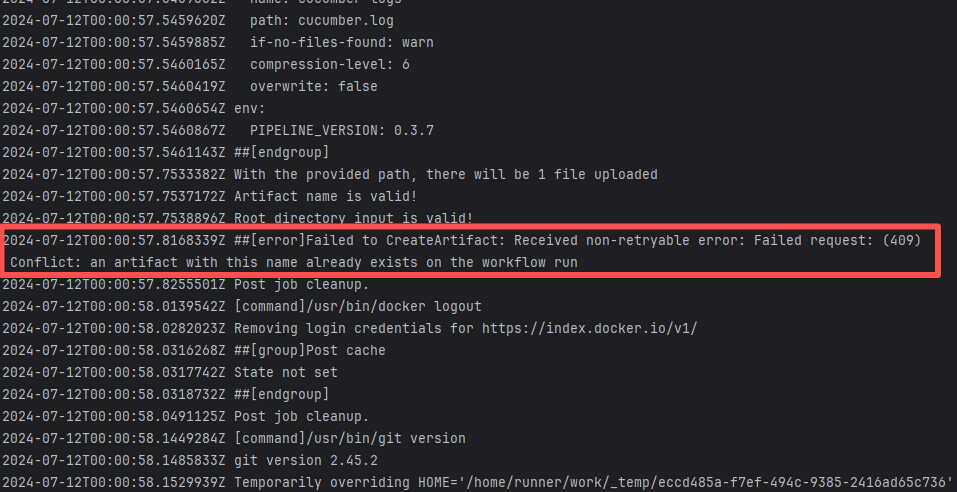

Artifact Conflict |

Error: The error log indicates a failure when publishing a Maven artifact to the GitHub Packages repository. The core reason is receiving a 409 Conflict response, indicating that the artifact to be uploaded conflicts with an artifact that already exists in the repository.

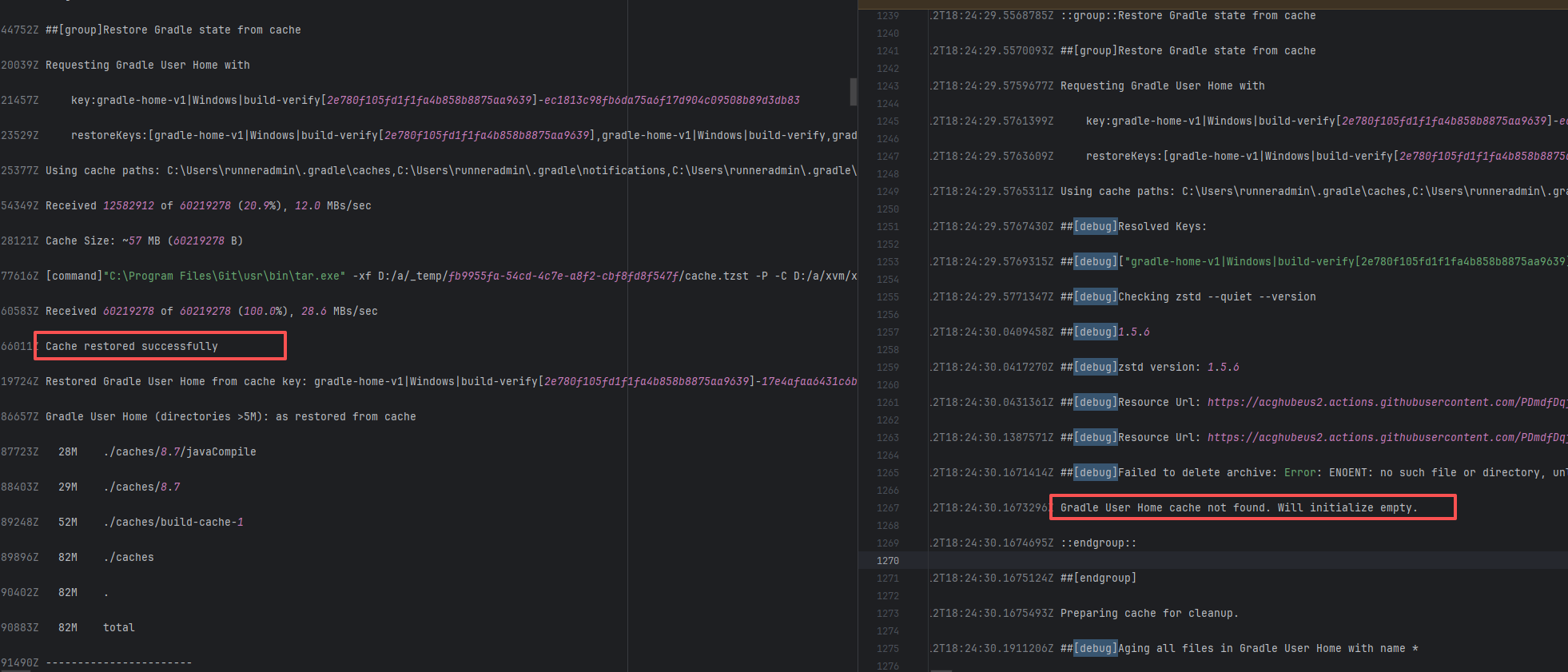

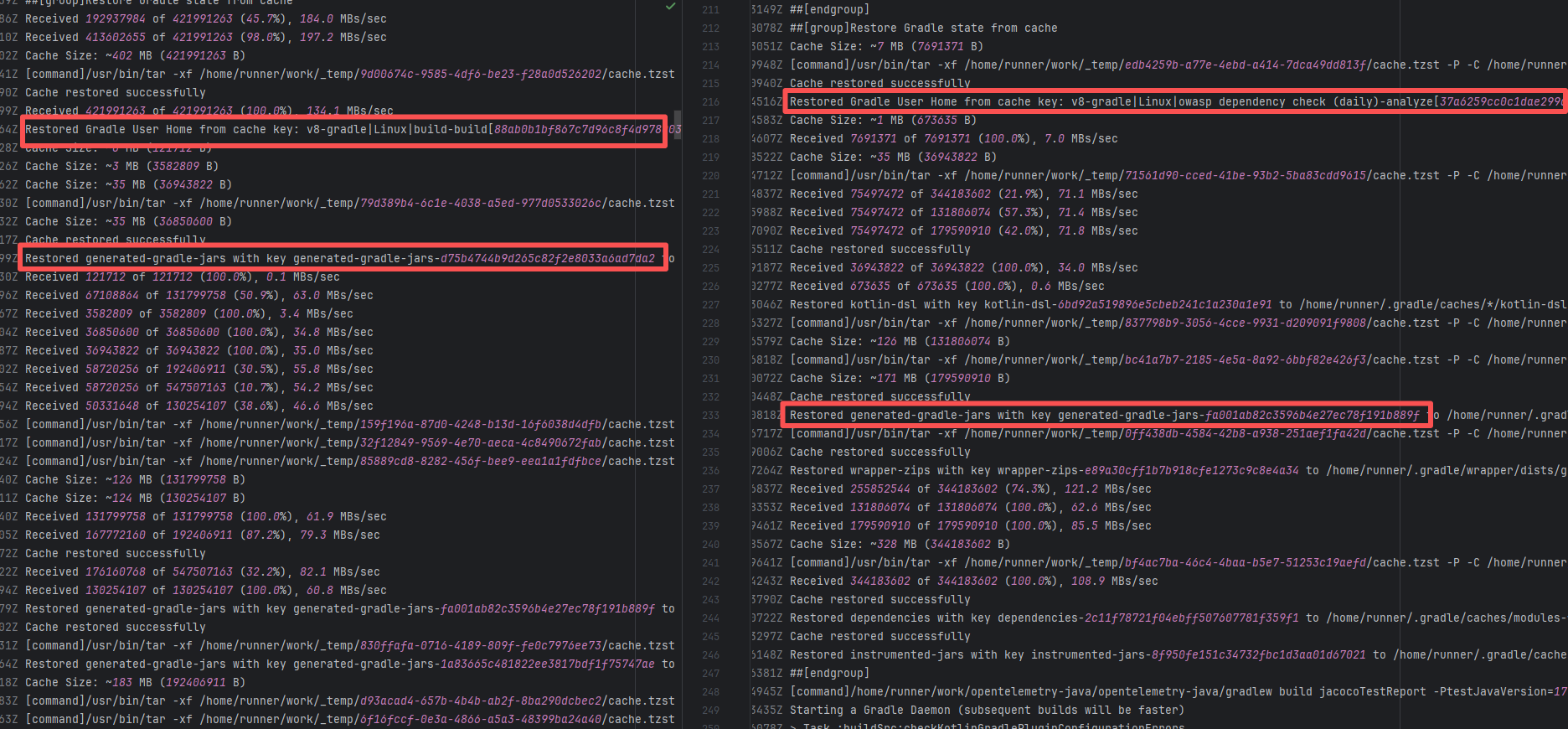

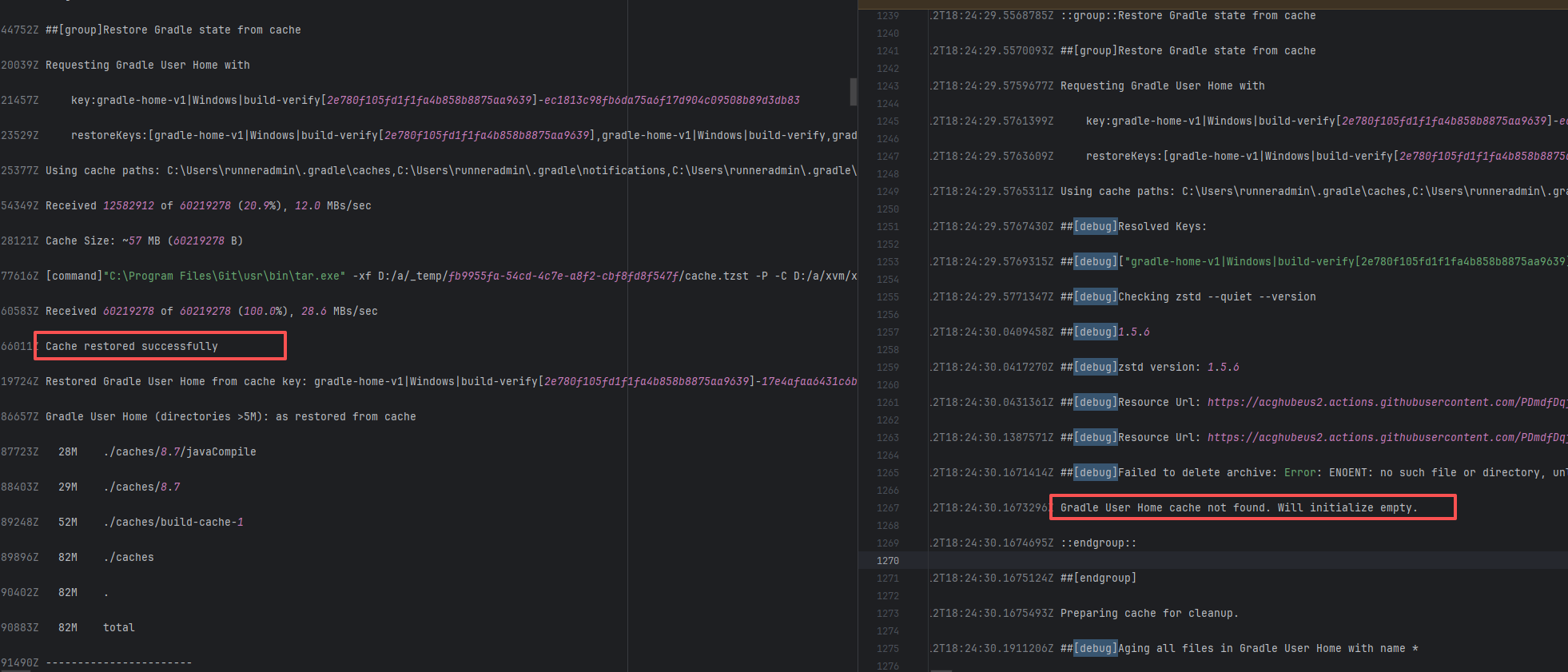

Root Cause: The script specifies that the snapshot version of the build product should be named with a timestamp, so conflicts should not occur during the upload process. However, because caching was enabled during the Gradle build process and the clean command was not executed, the old snapshot artifact and compilation results in the build/ directory were used and directly reused for upload, leading to a GitHub Packages 409 conflict. Rerun succeeded after clearing the previous local cache results. |

Rerun after deleting previous build results in the cache |

|

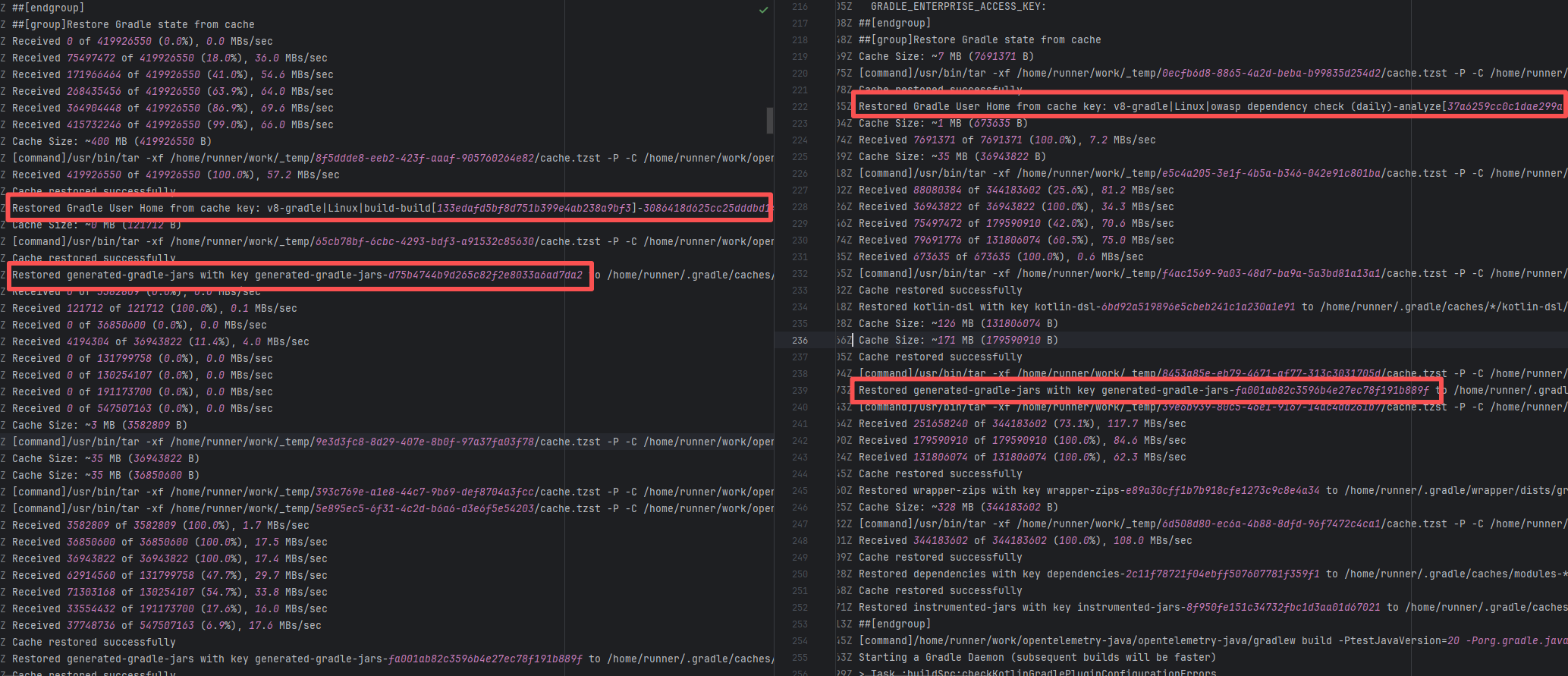

| vividus-framework/vividus |

27150758033 |

Artifact Conflict |

Error: The error log indicates a failure when publishing a Maven artifact to the GitHub Packages repository. The core reason is receiving a 409 Conflict response, indicating that the artifact to be uploaded conflicts with an artifact that already exists in the repository.

Root Cause: The script specifies that the snapshot version of the build product should be named with a timestamp, so conflicts should not occur during the upload process. However, because caching was enabled during the Gradle build process and the clean command was not executed, the old snapshot artifact and compilation results in the build/ directory were used and directly reused for upload, leading to a GitHub Packages 409 conflict. Rerun succeeded after clearing the previous local cache results. |

Rerun after deleting previous build results in the cache |

|

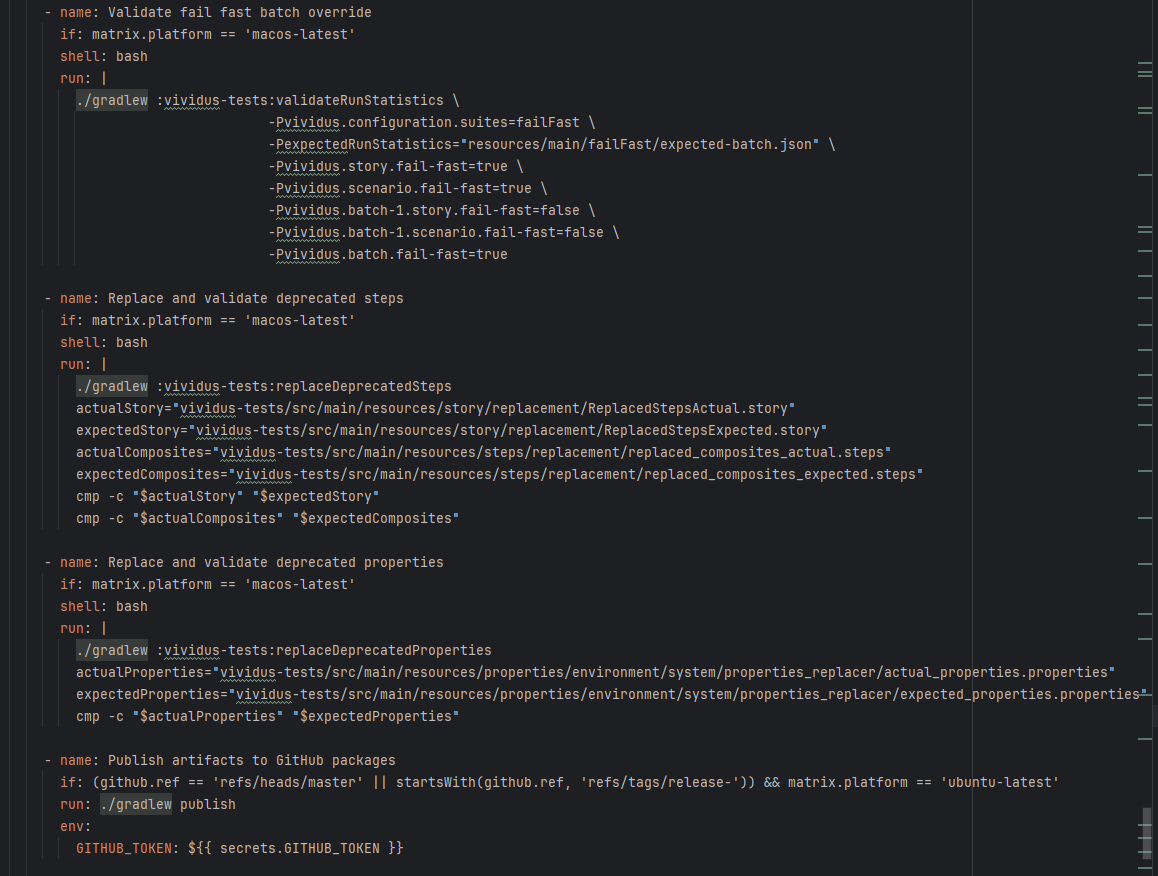

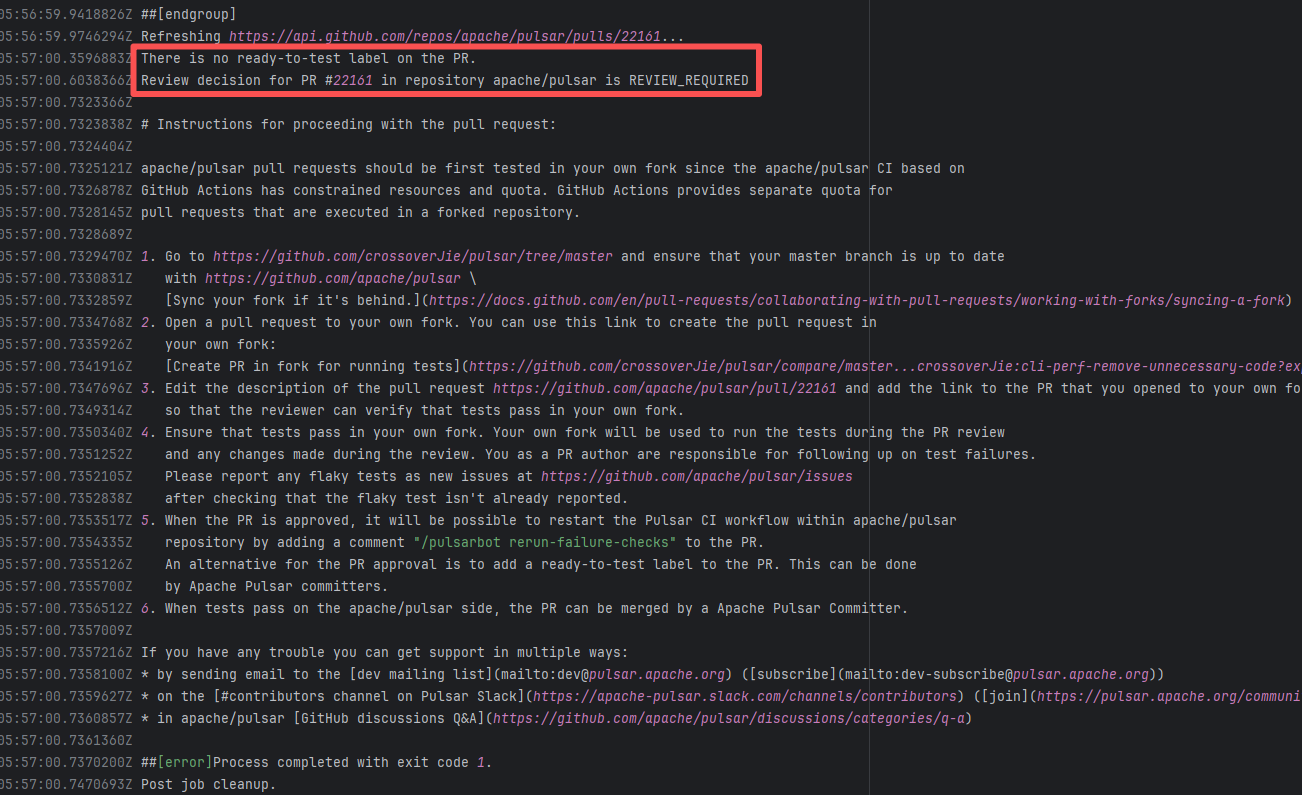

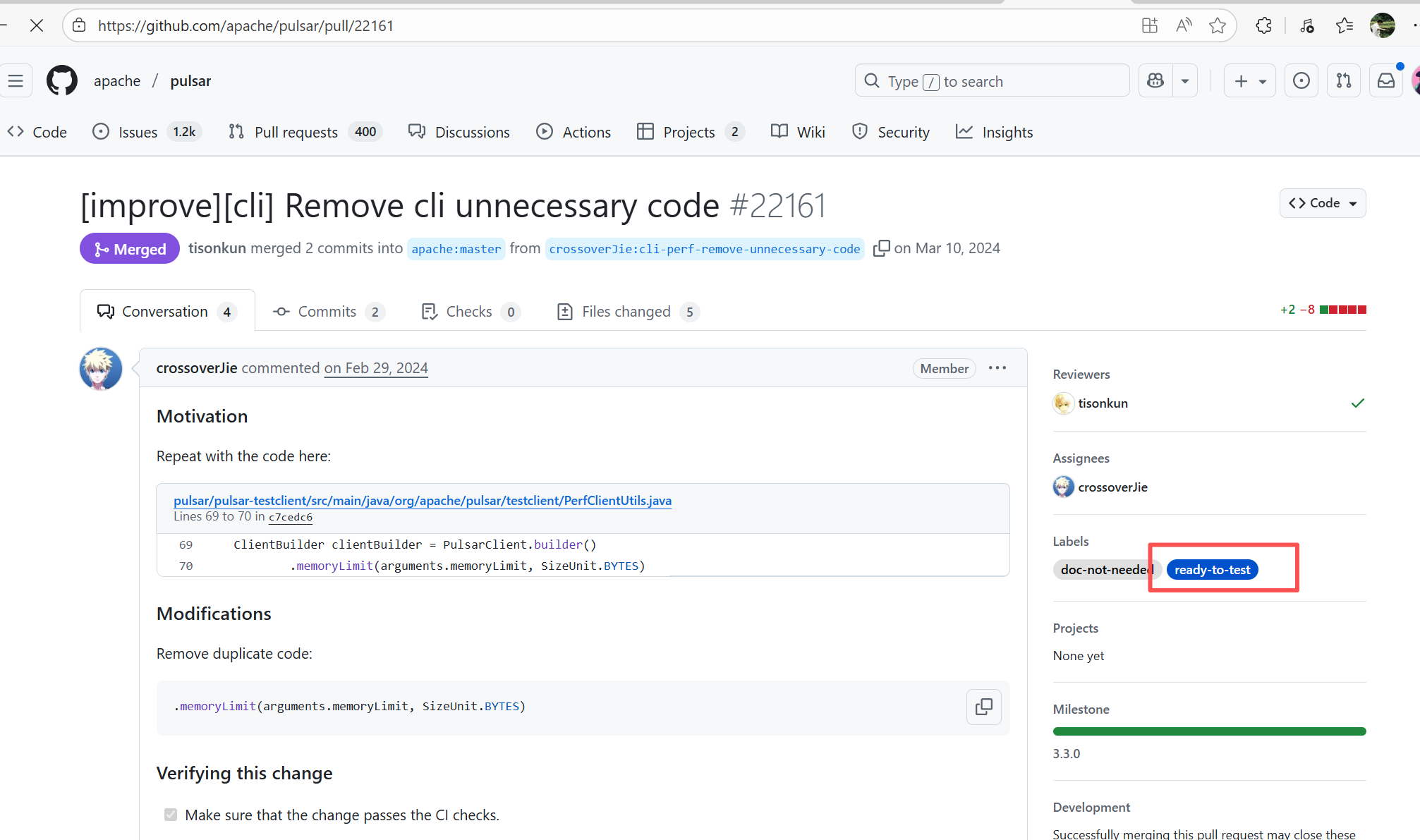

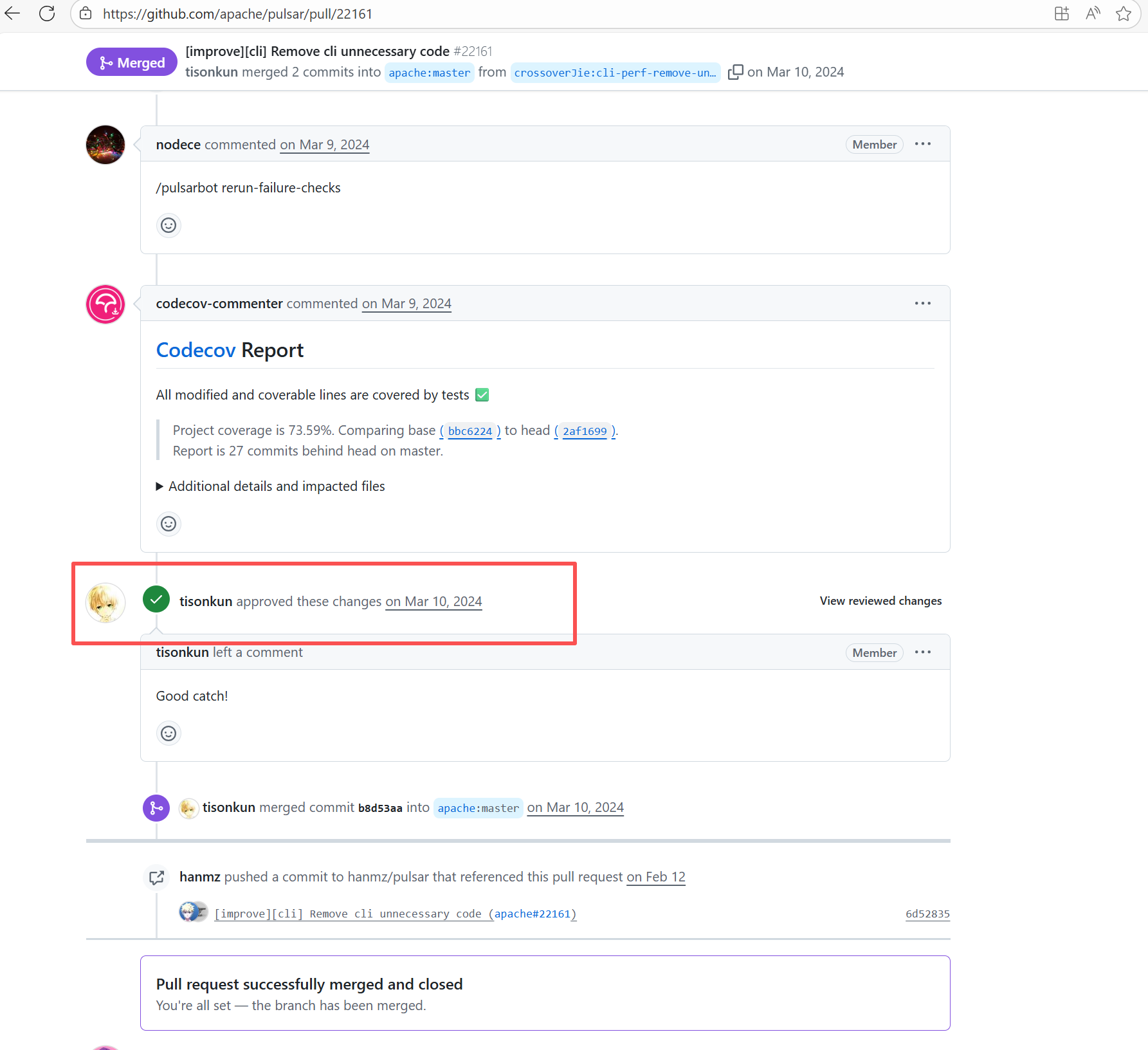

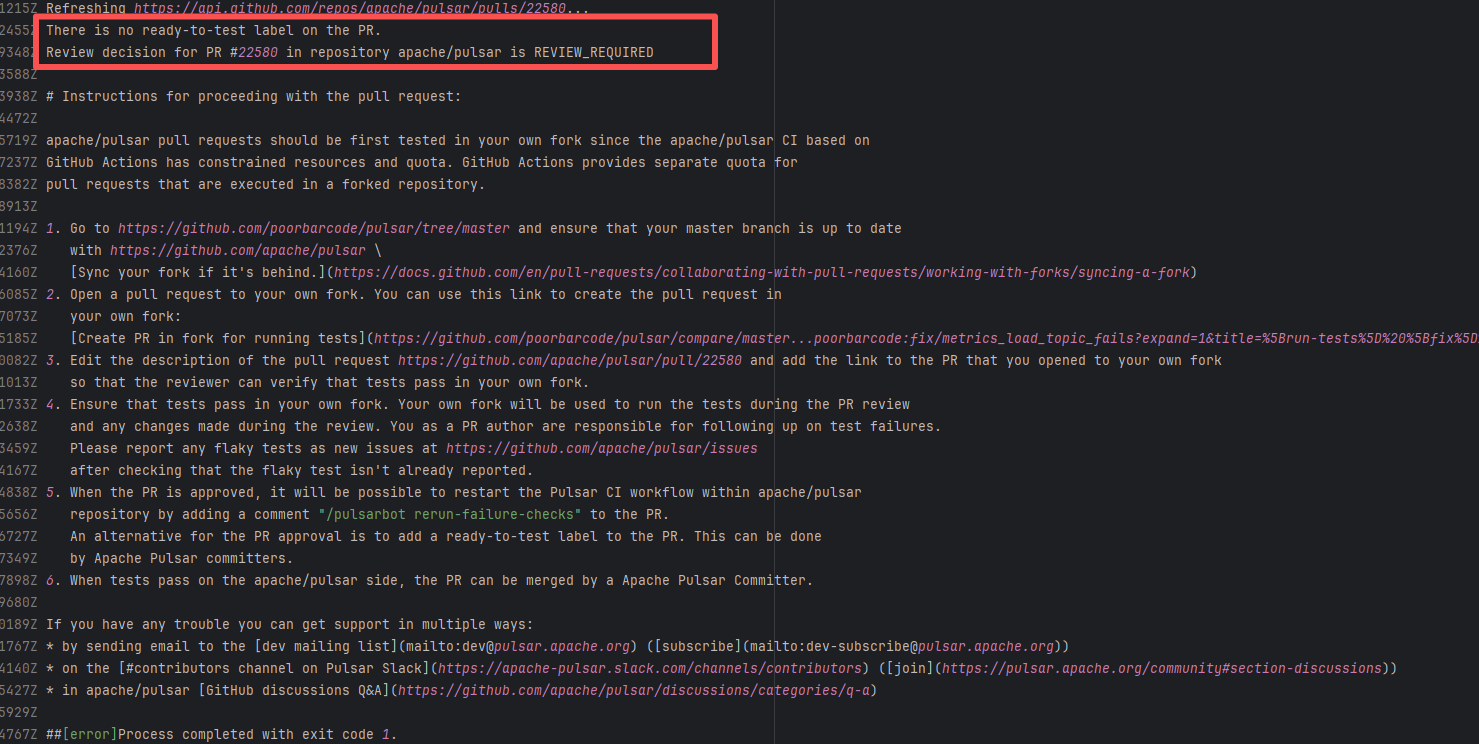

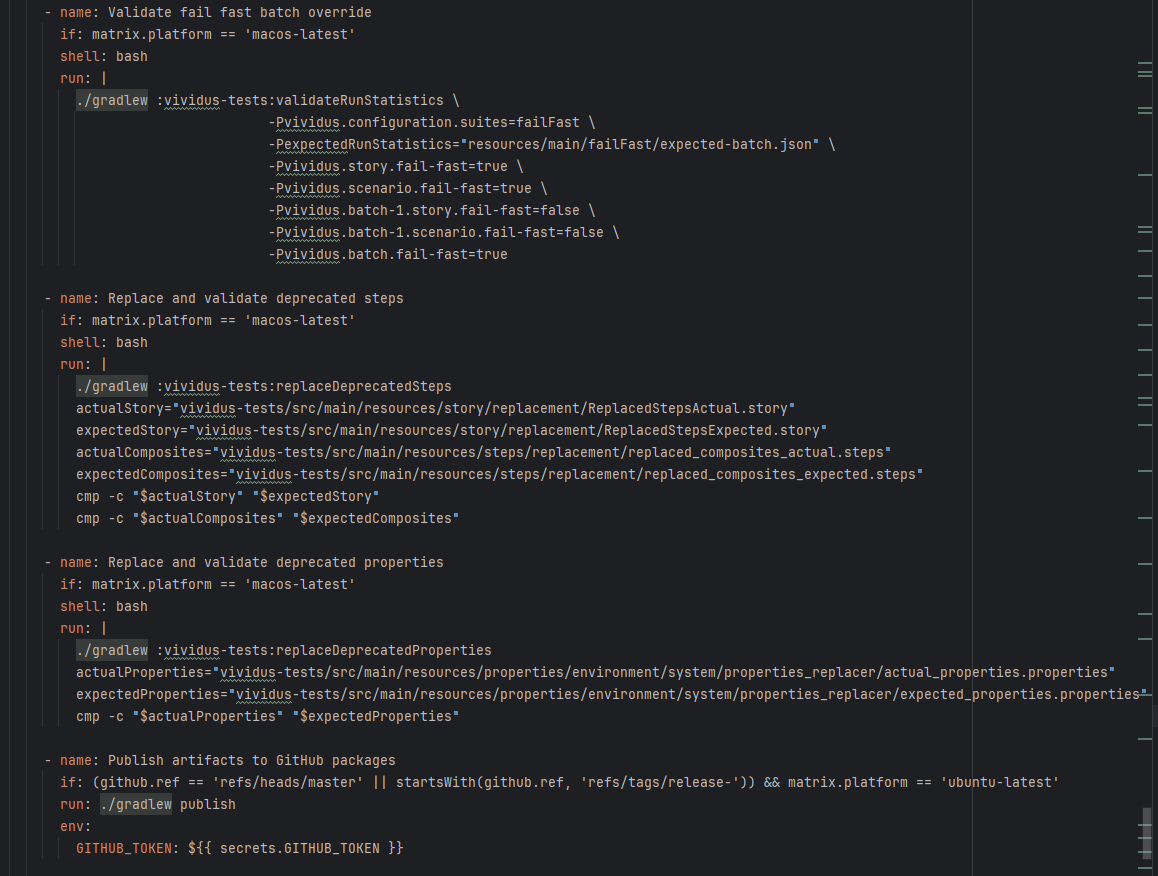

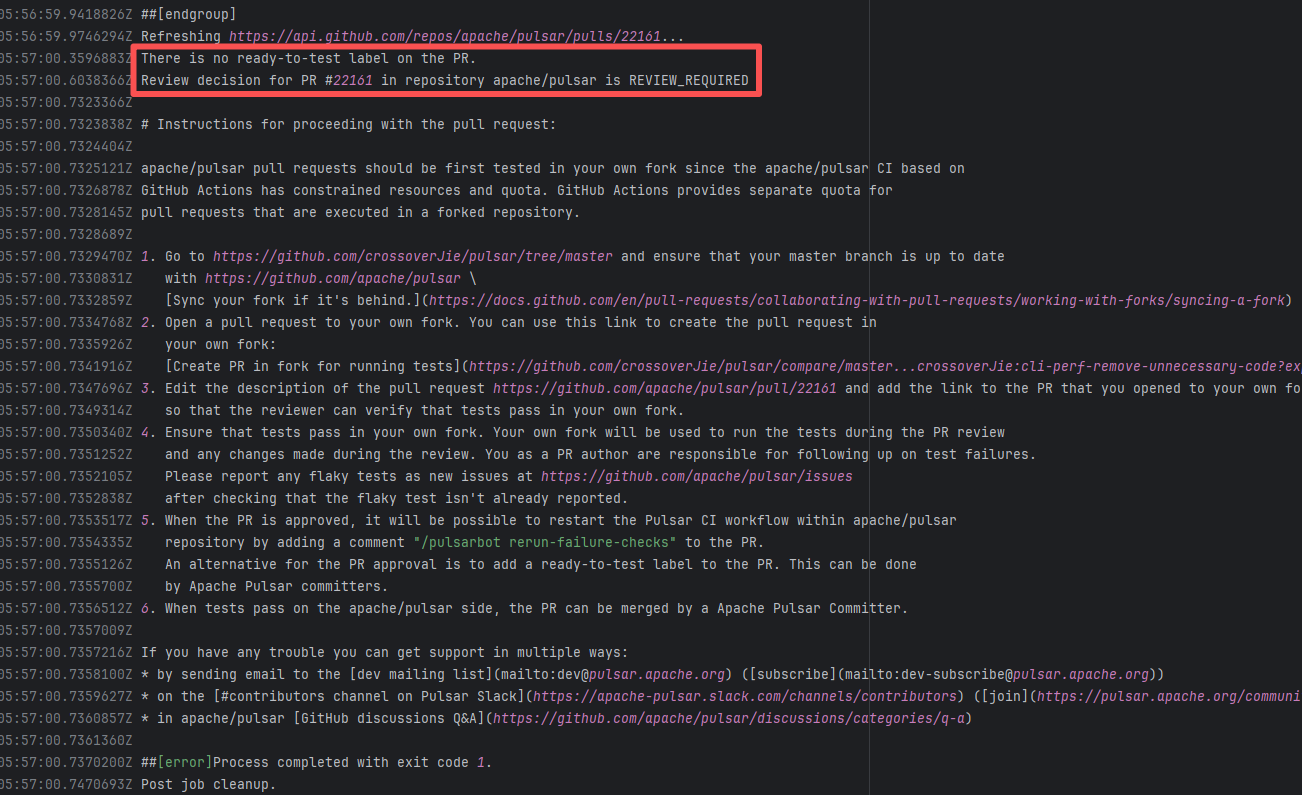

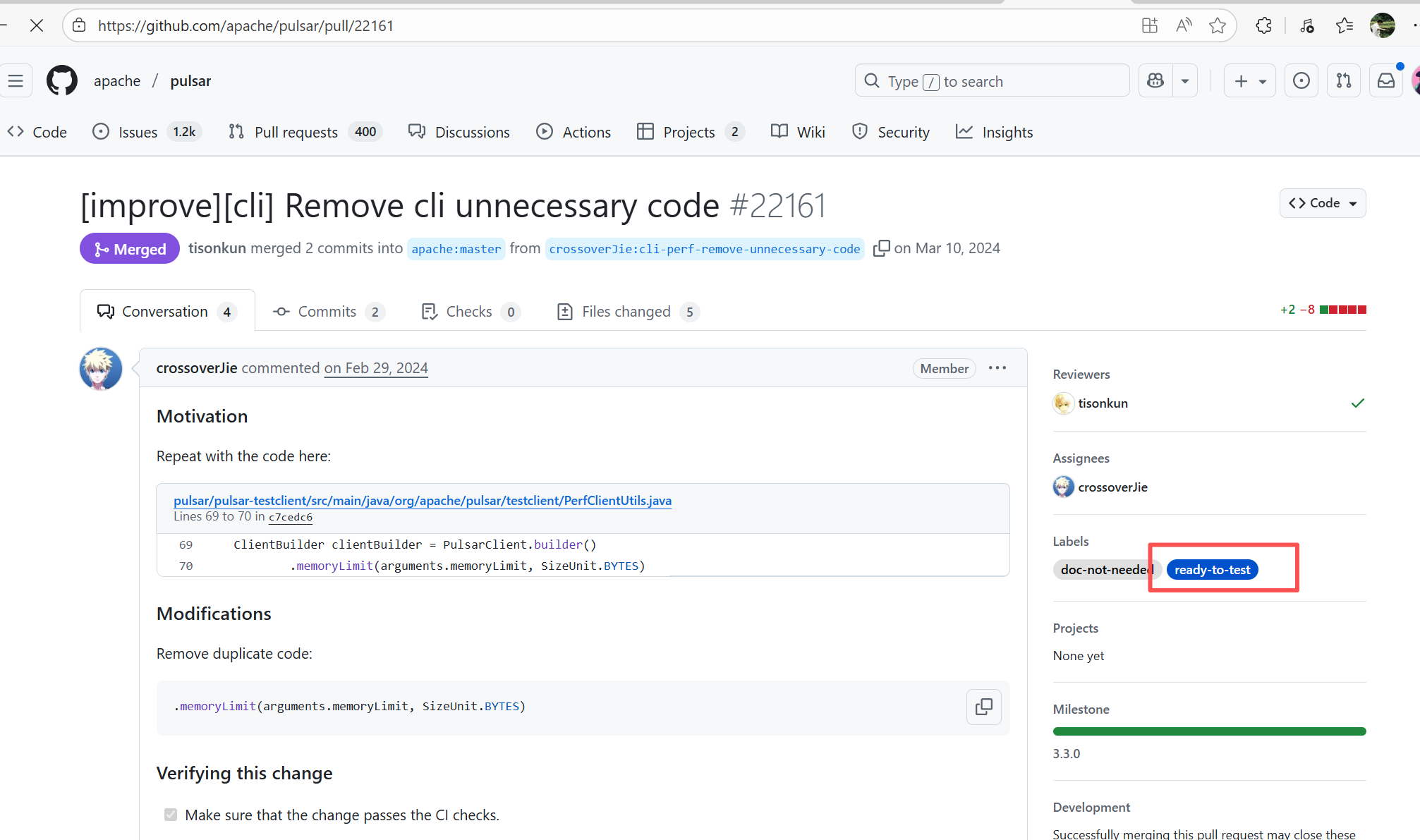

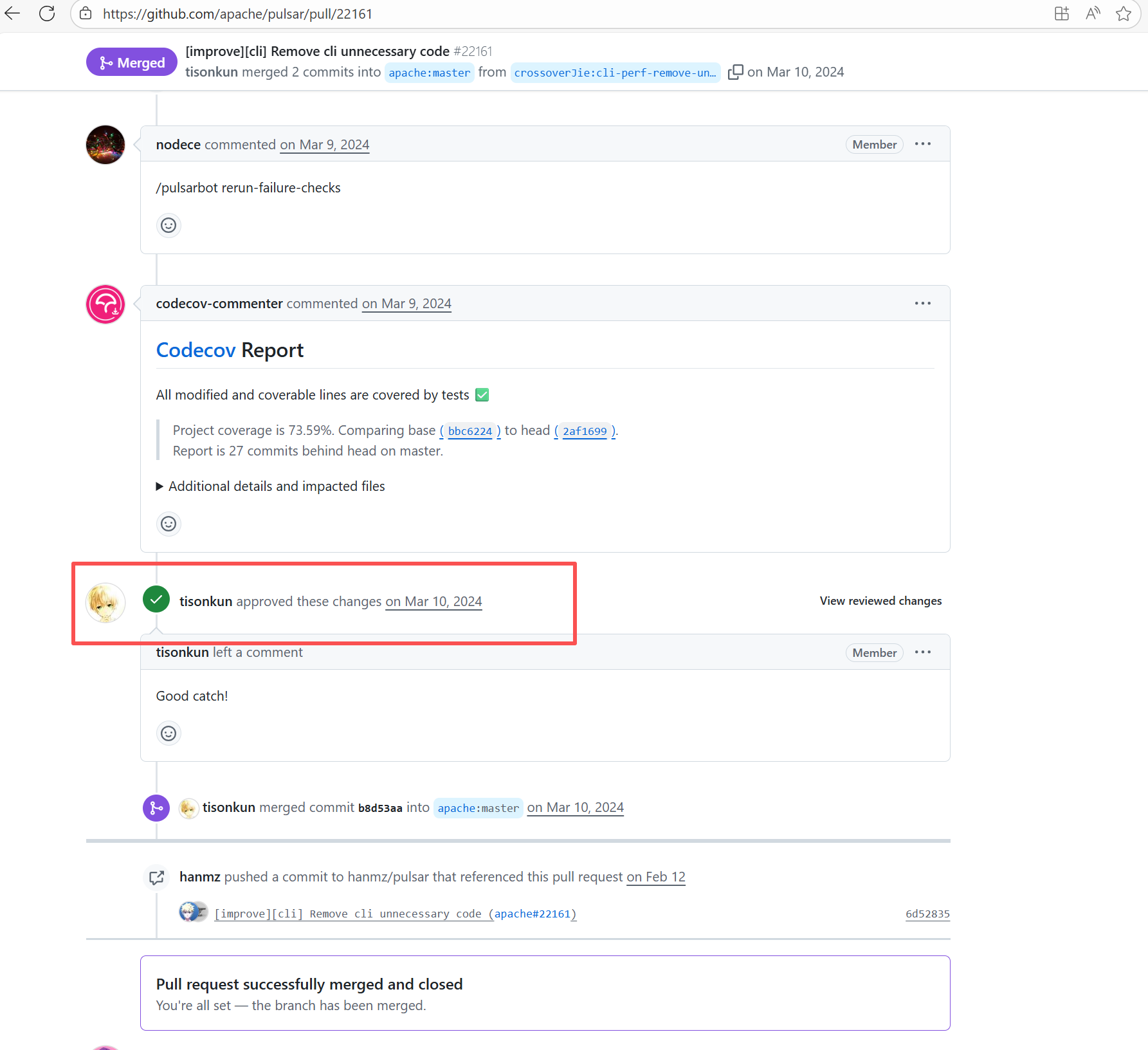

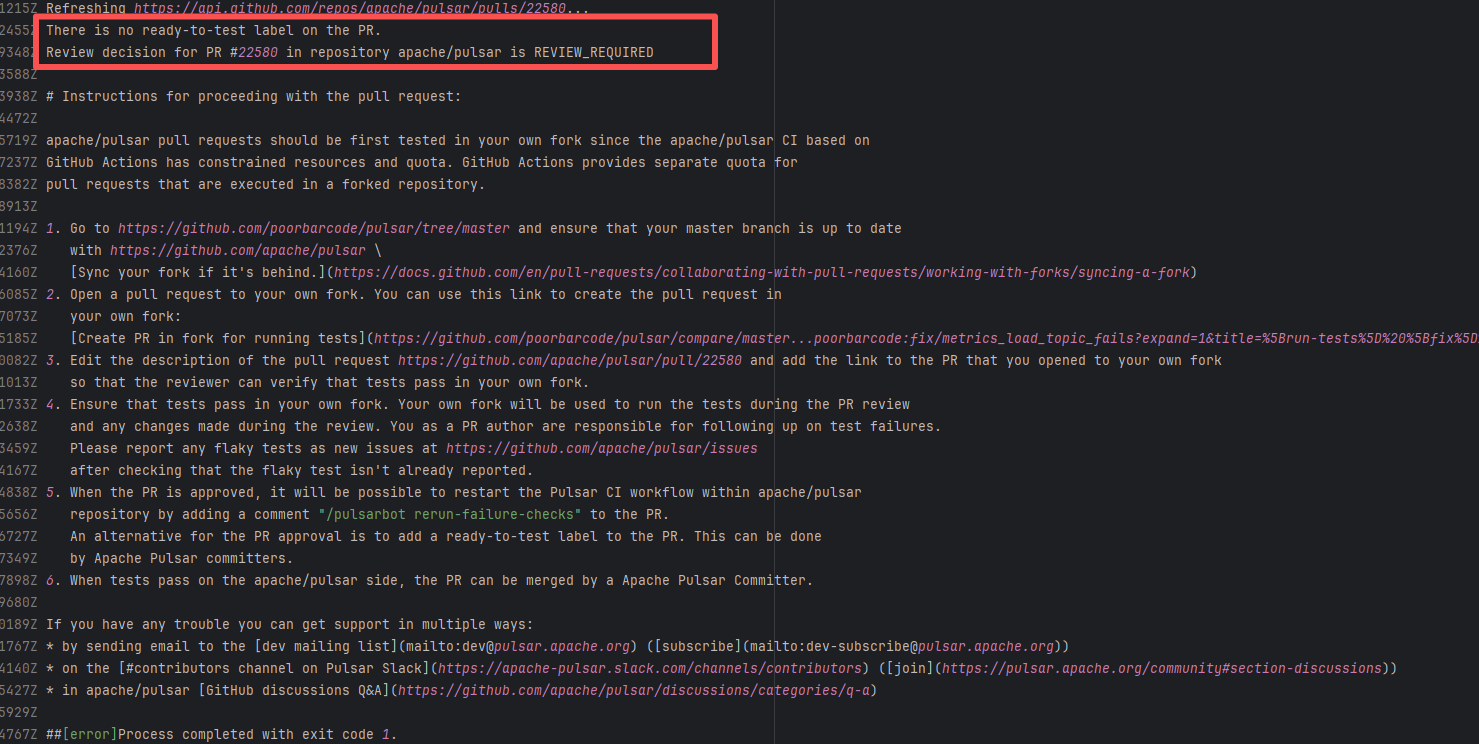

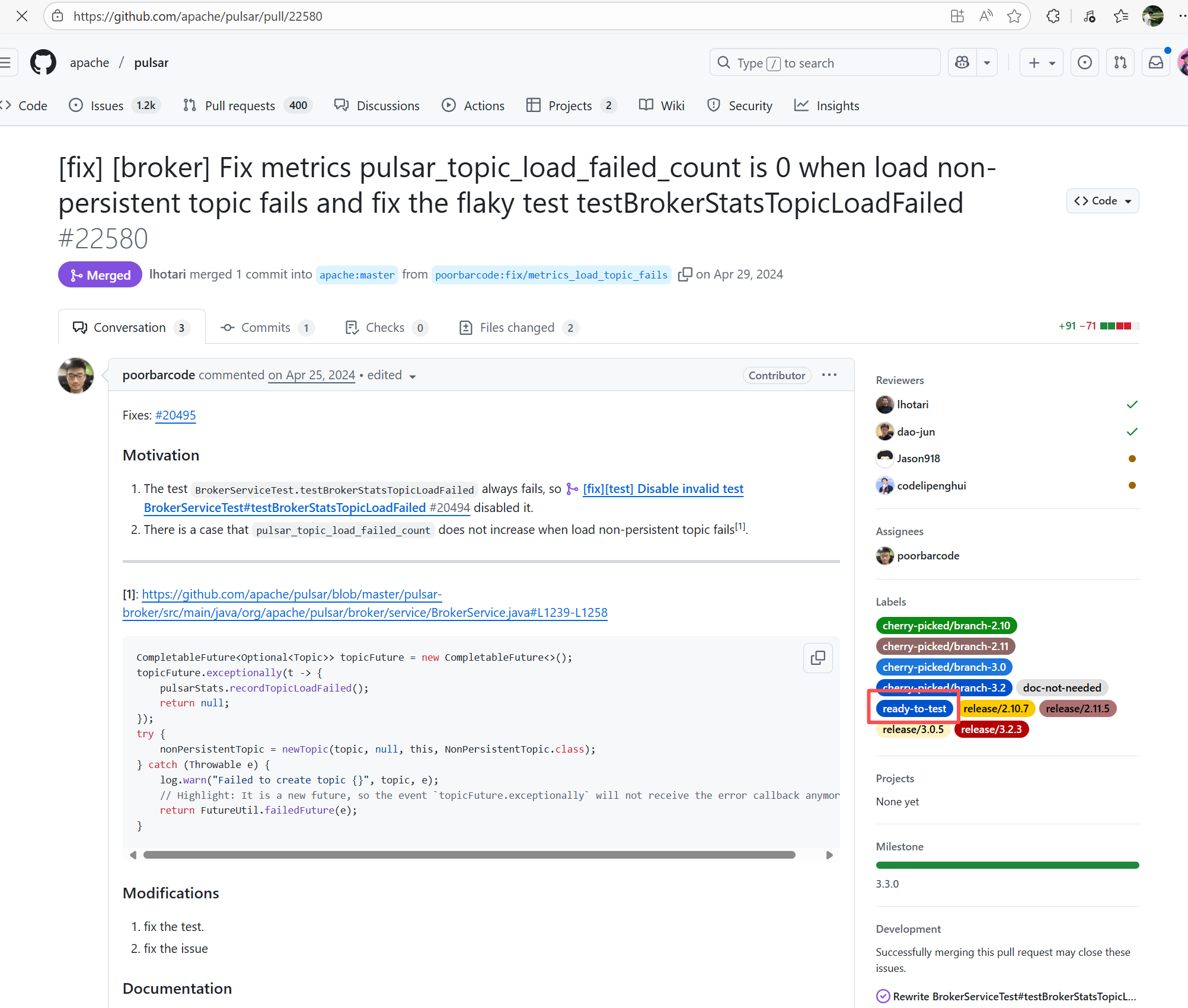

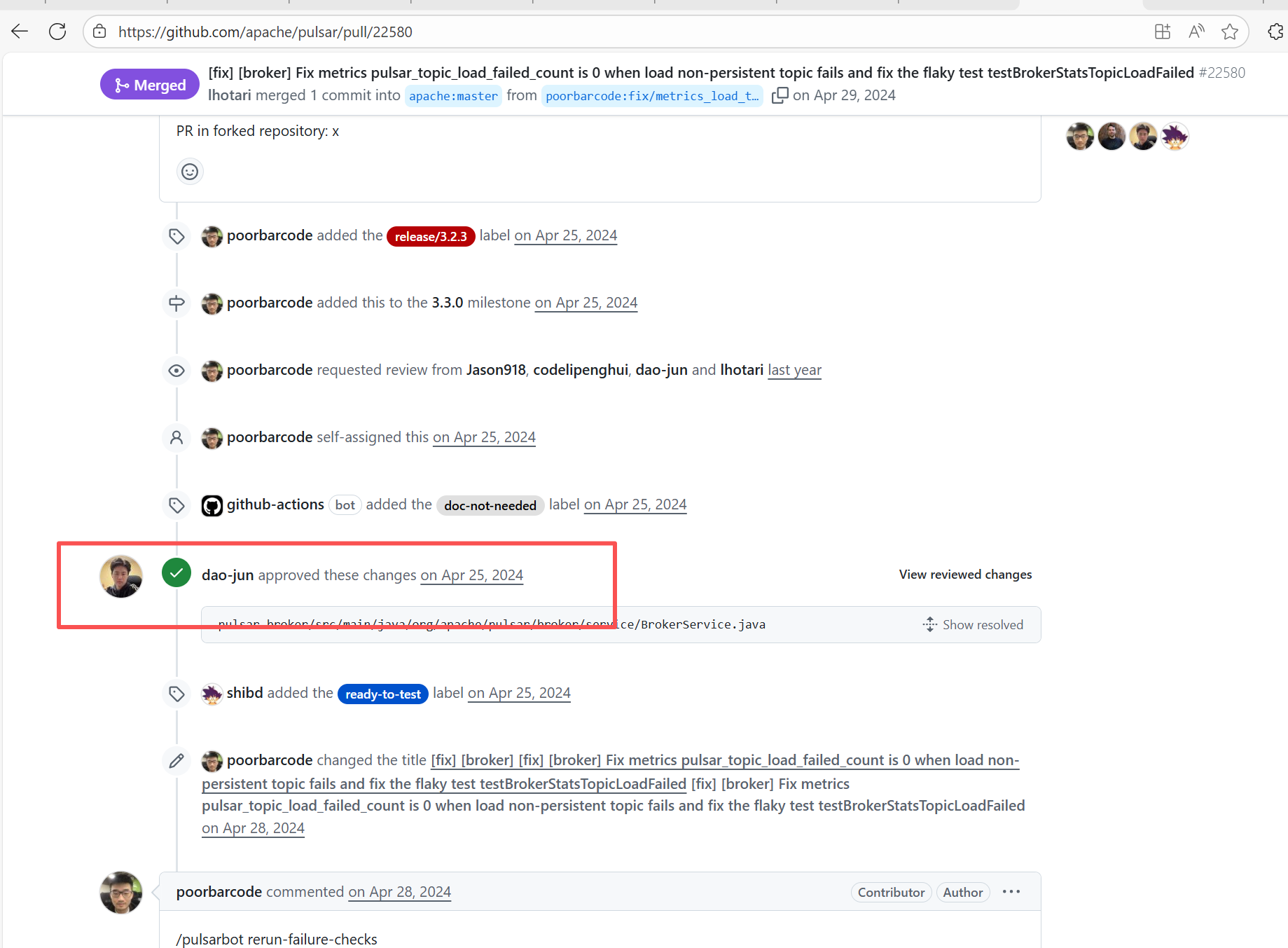

| apache/pulsar |

25103043188 |

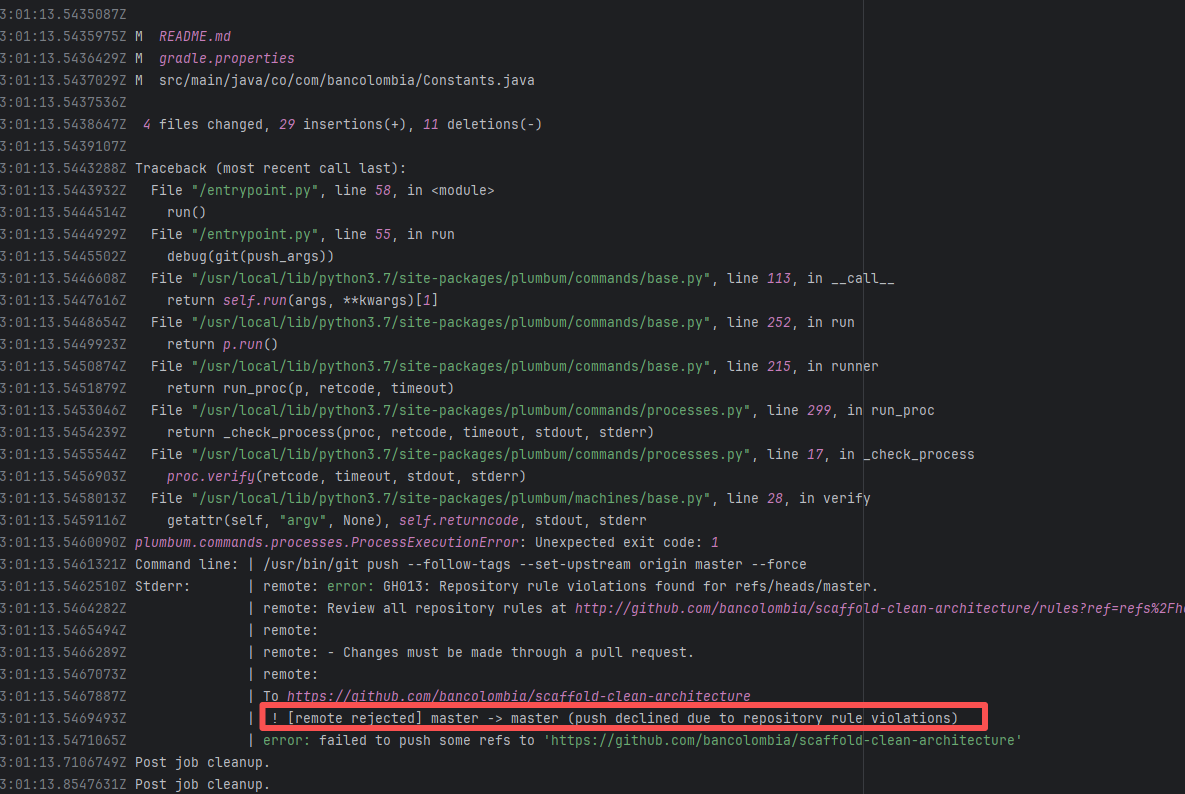

Workflow Policy Violation |

Error: Operations on the PR #22858 in the Apache Pulsar repository are currently restricted. The core reason is that the PR is missing the "ready-to-test" label and is in the "REVIEW_REQUIRED" state, so subsequent operations are prohibited.

Root Cause: CI based on GitHub Actions in the main repository has resource and quota limits. The official mandatory requirement is: all PRs must first complete test verification in the contributor's personal Fork repository. Only after confirming there are no problems can they be submitted to the main repository, to avoid occupying the limited CI quota of the main repository.

After a PR is submitted, it is necessary to contact the repository administrator to add the "ready-to-test" label to the PR. After the label is added, the main repository CI will allow the test process to execute; the repository administrator will review the code, modify the code according to the review comments (if any), and push it to the corresponding branch of the Fork repository (the PR will automatically synchronize the modifications). After the review is passed, the PR state will change from "REVIEW_REQUIRED" to "APPROVED", and then the subsequent merging process can be entered. |

Contact the relevant personnel to conduct testing and review according to the main repository requirements, and rerun after completion |

|

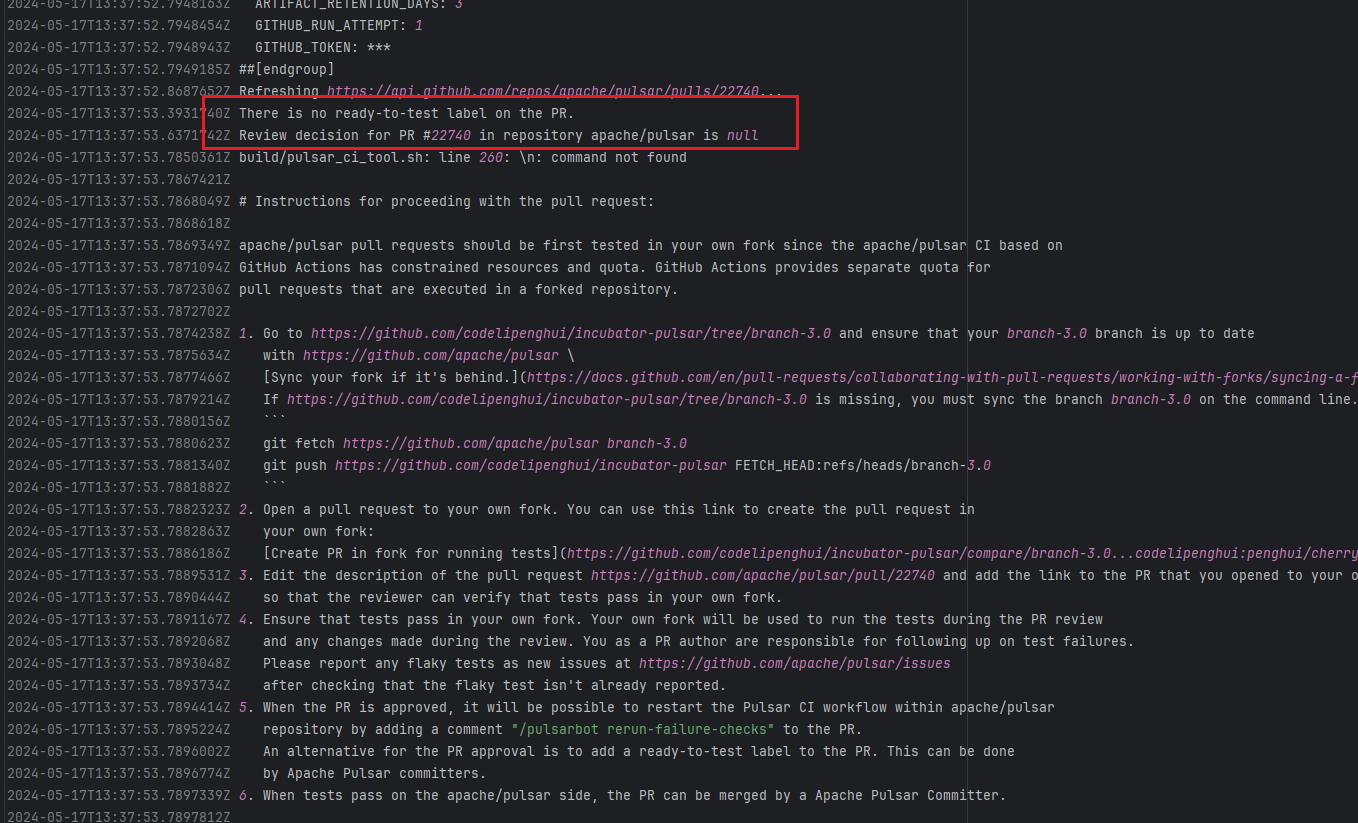

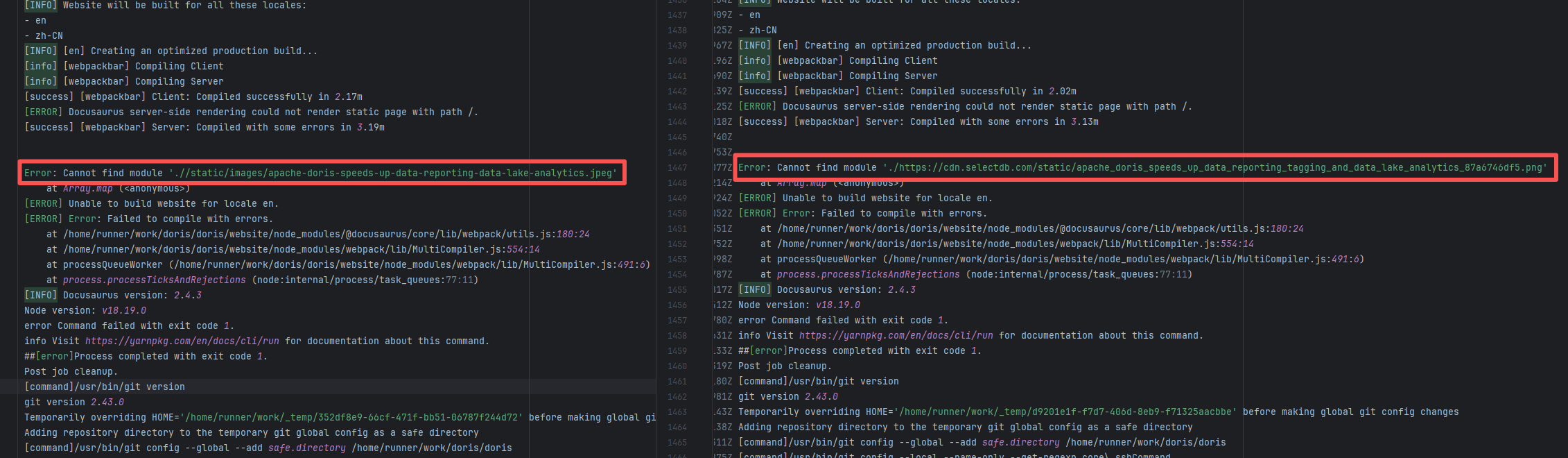

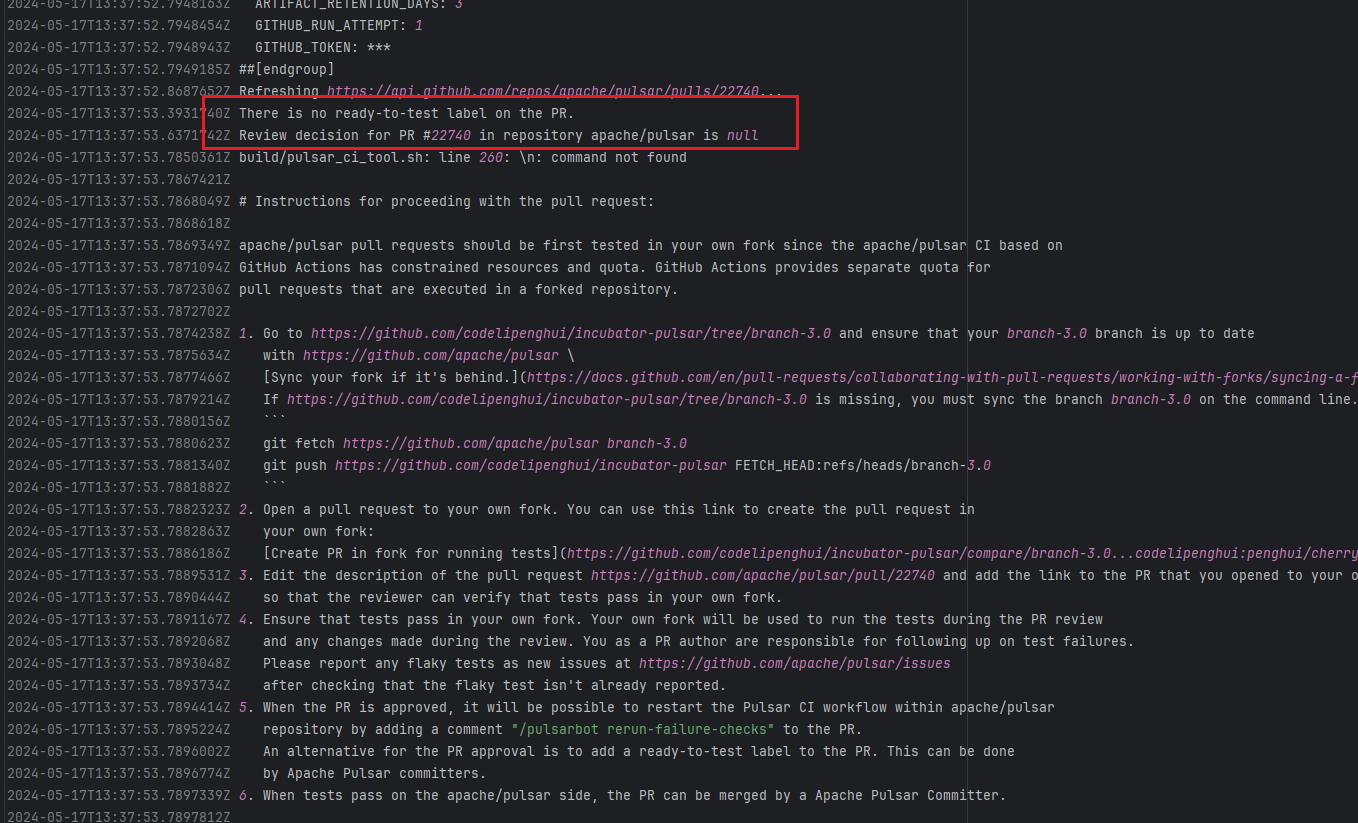

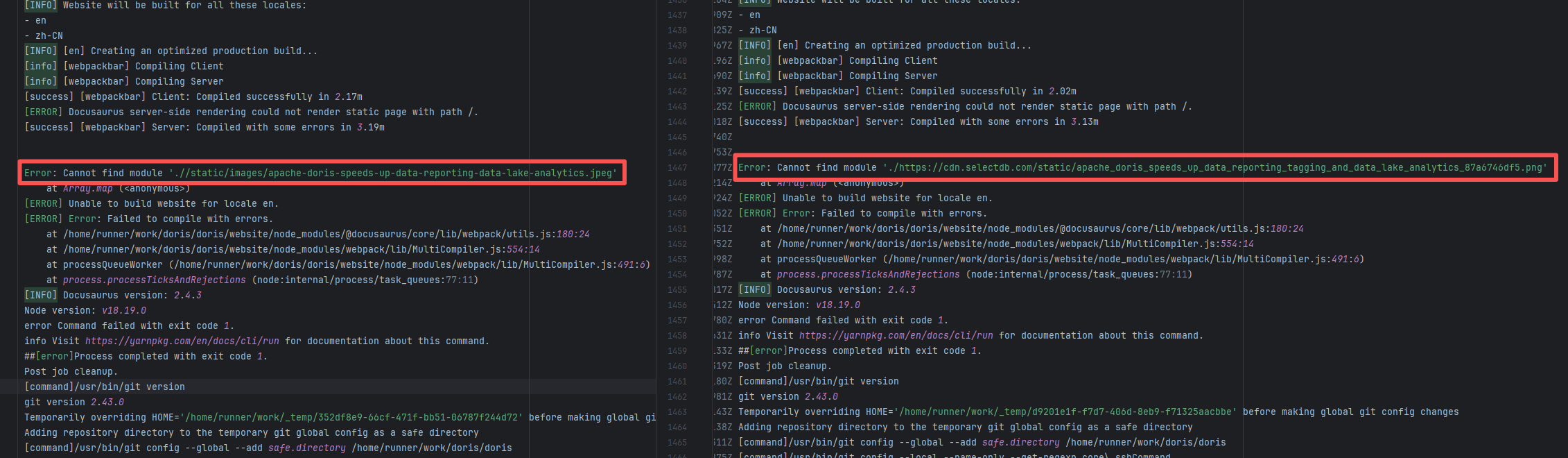

| apache/doris |

20018755235 |

Upstream Repository Issue |

Error: Docusaurus failed when building the static website. The core reason is that the image module could not be found, leading to a server-side rendering failure. A compilation error occurred.

Root Cause: Analysis of the workflow revealed that the code for building the website was cloned from another repository. For the first failure, the file path was ./https://cdn.selectdb.com/static/apache_doris_speeds_up_data_reporting_tagging_and_data_lake_analytics_87a6746df5.png. The second time (local), it was .//static/images/apache-doris-speeds-up-data-reporting-data-lake-analytics.jpeg. Both were path concatenation errors. After correcting the path the third time, the static website was successfully built. |

Rerun successfully after modifying the static resource path to the correct path in the other repository |

|

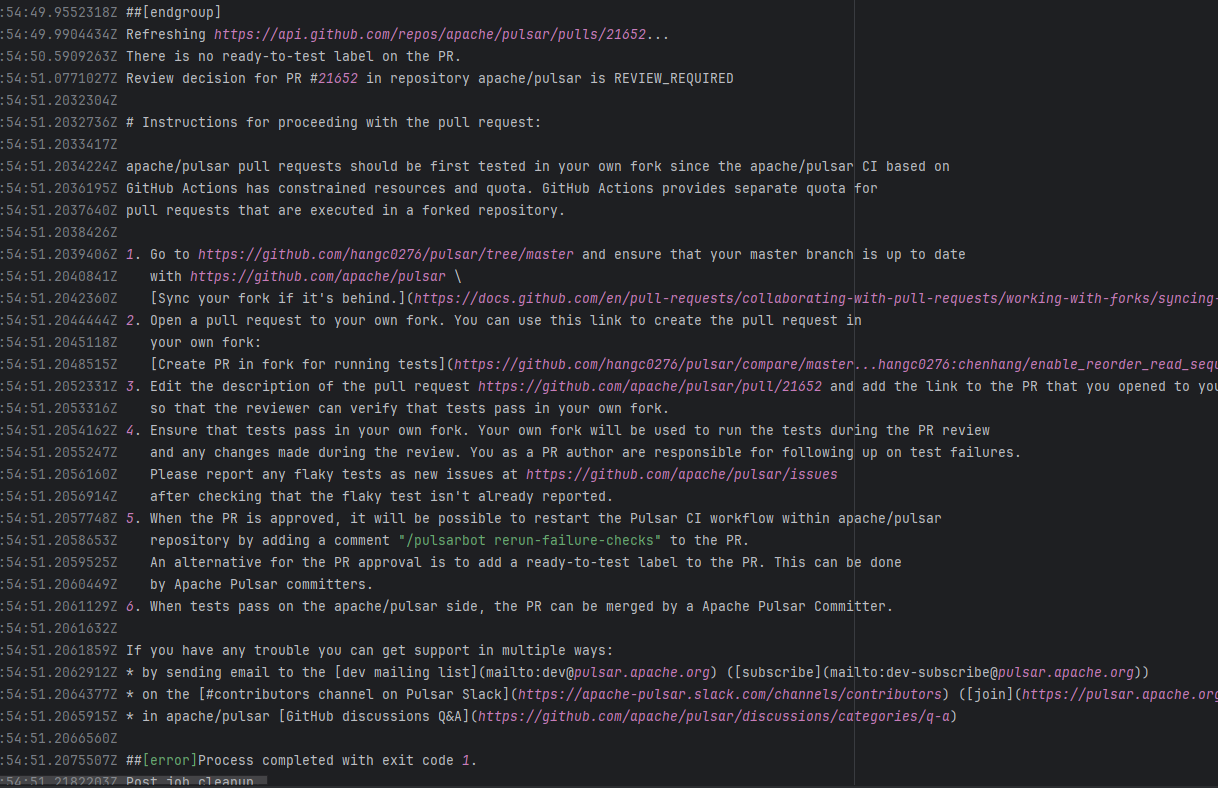

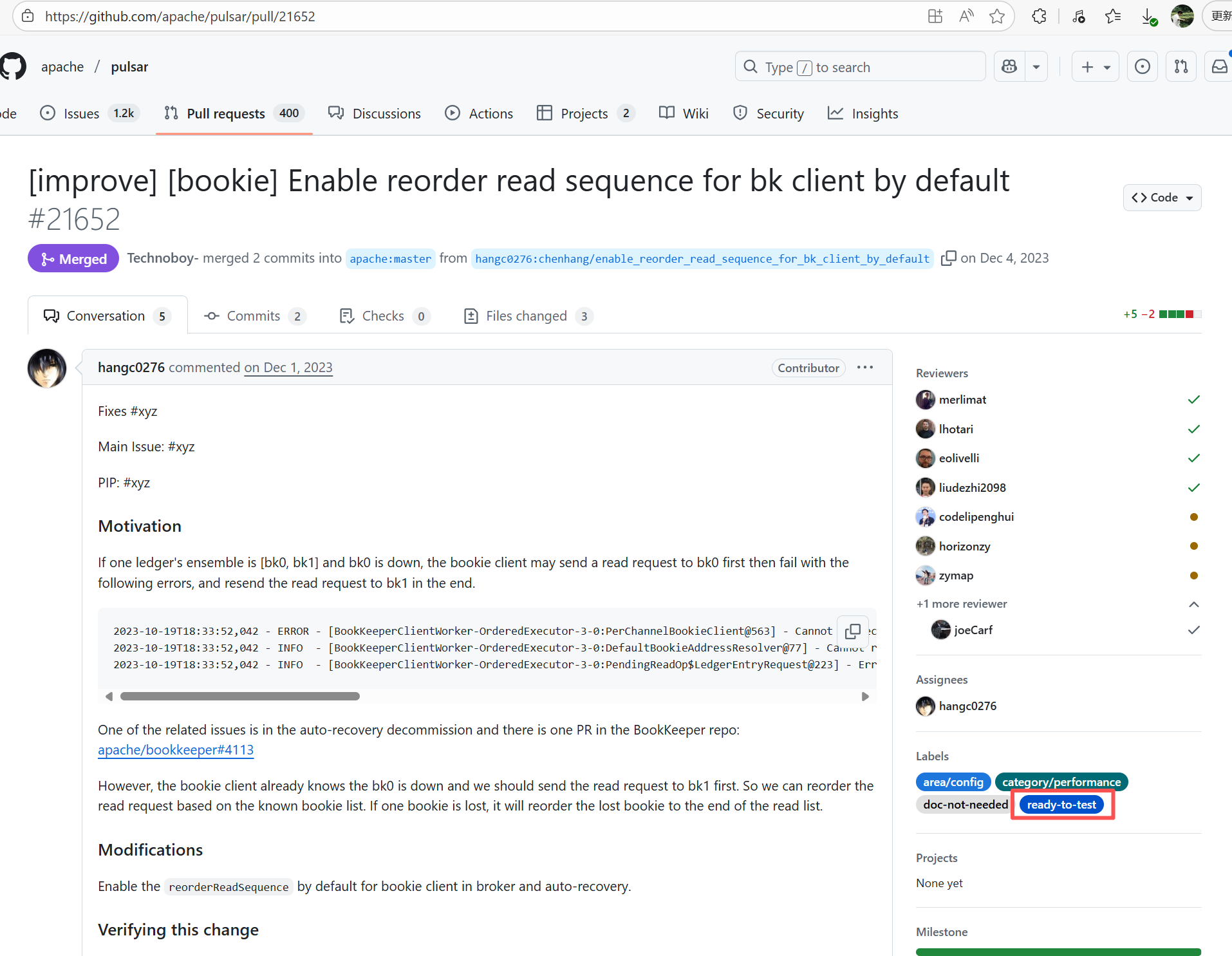

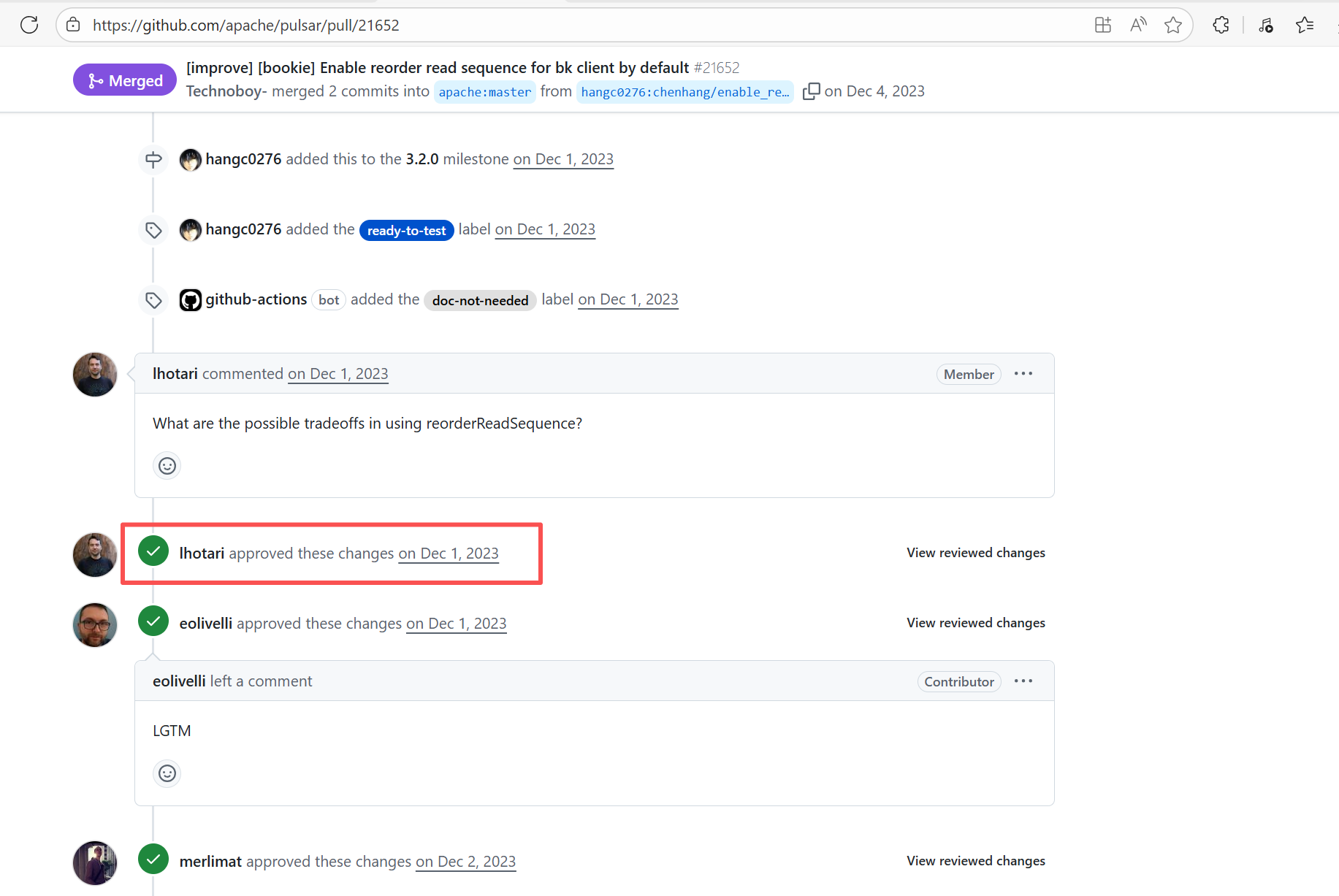

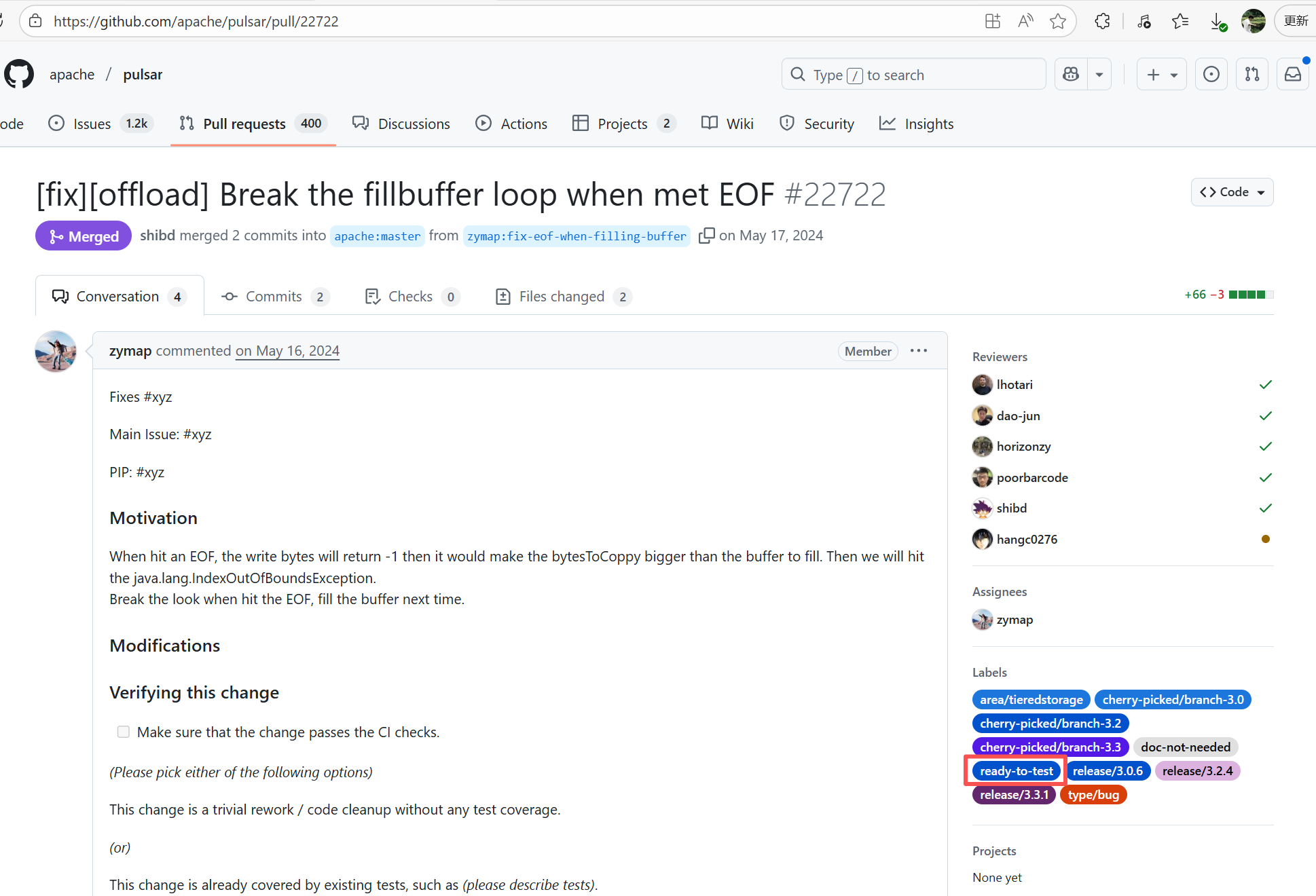

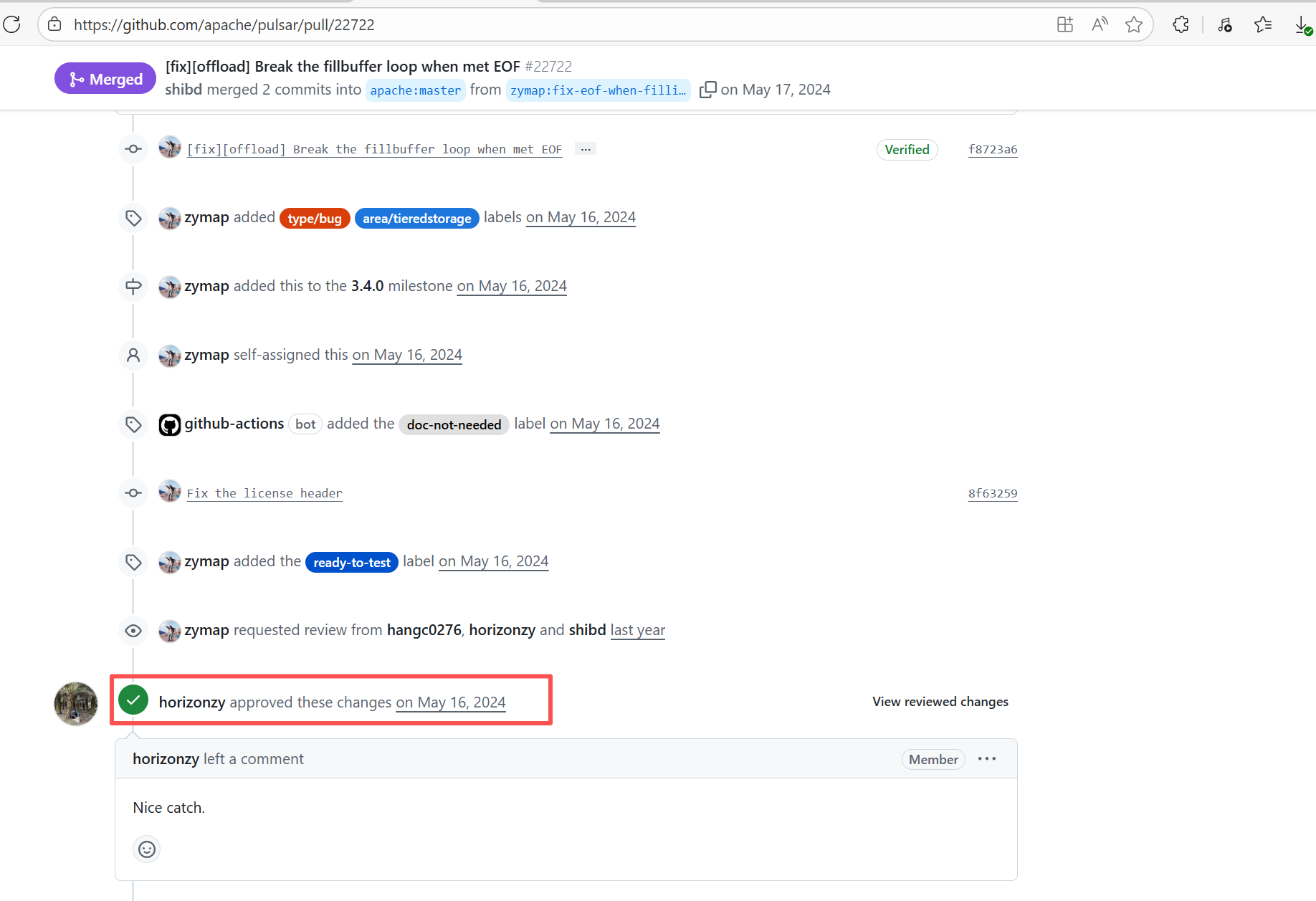

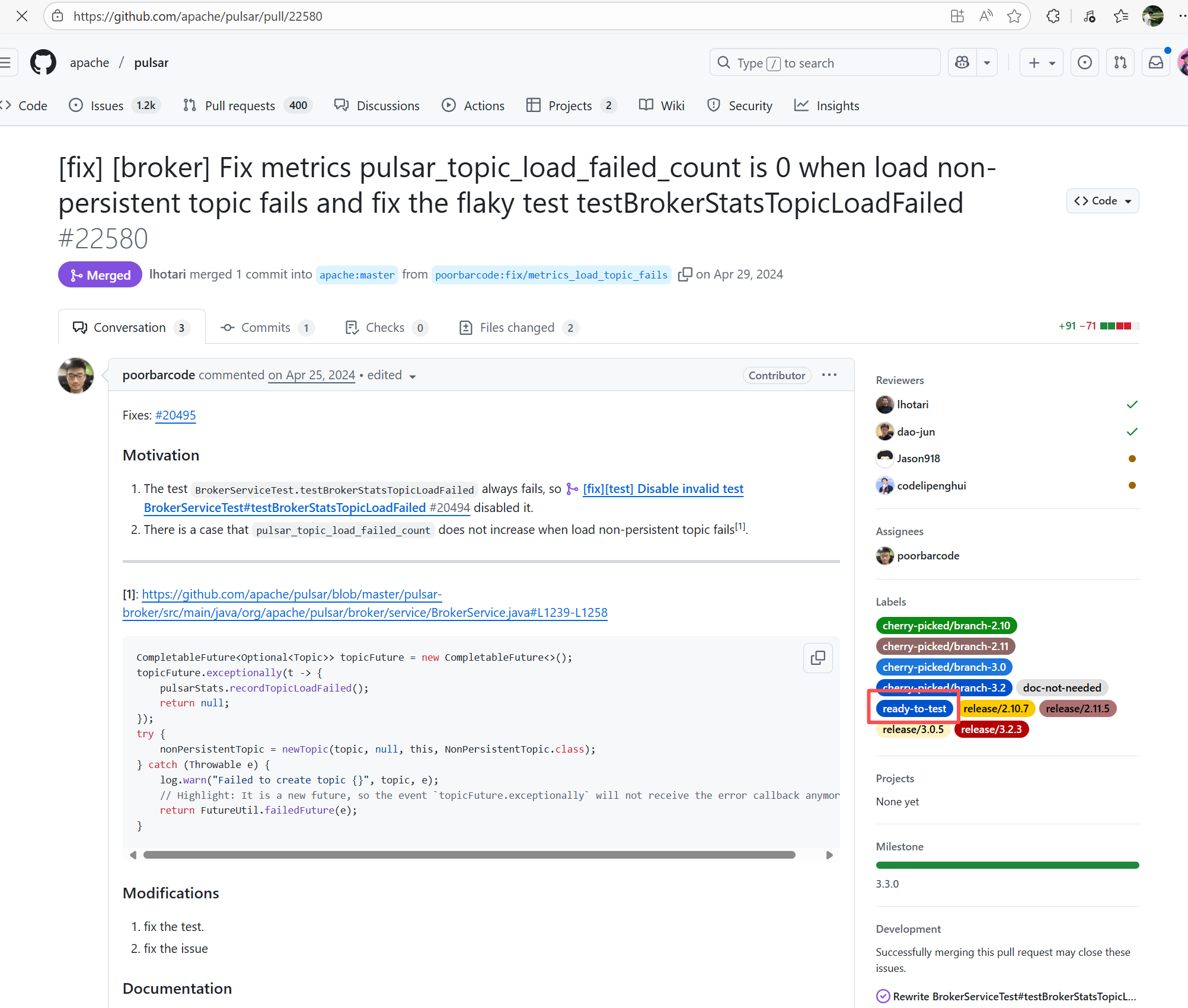

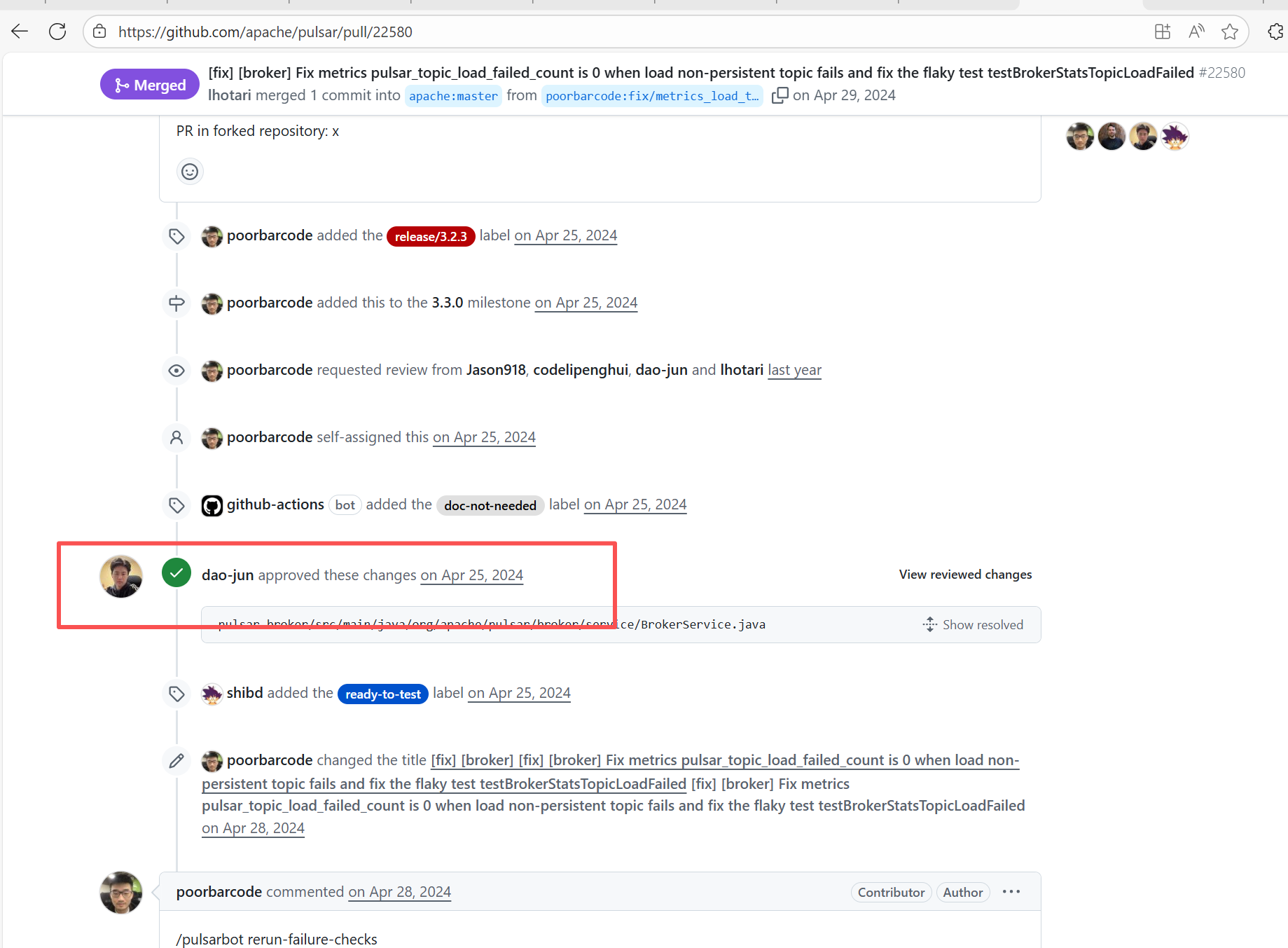

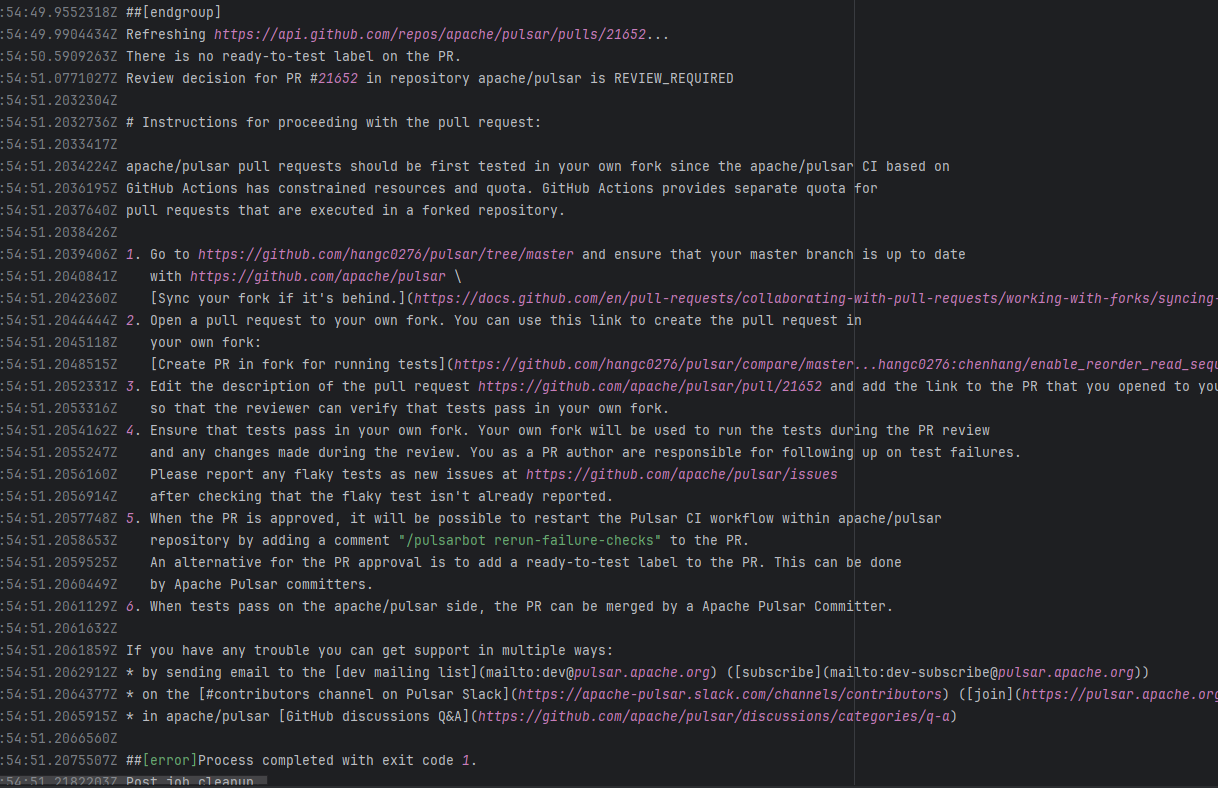

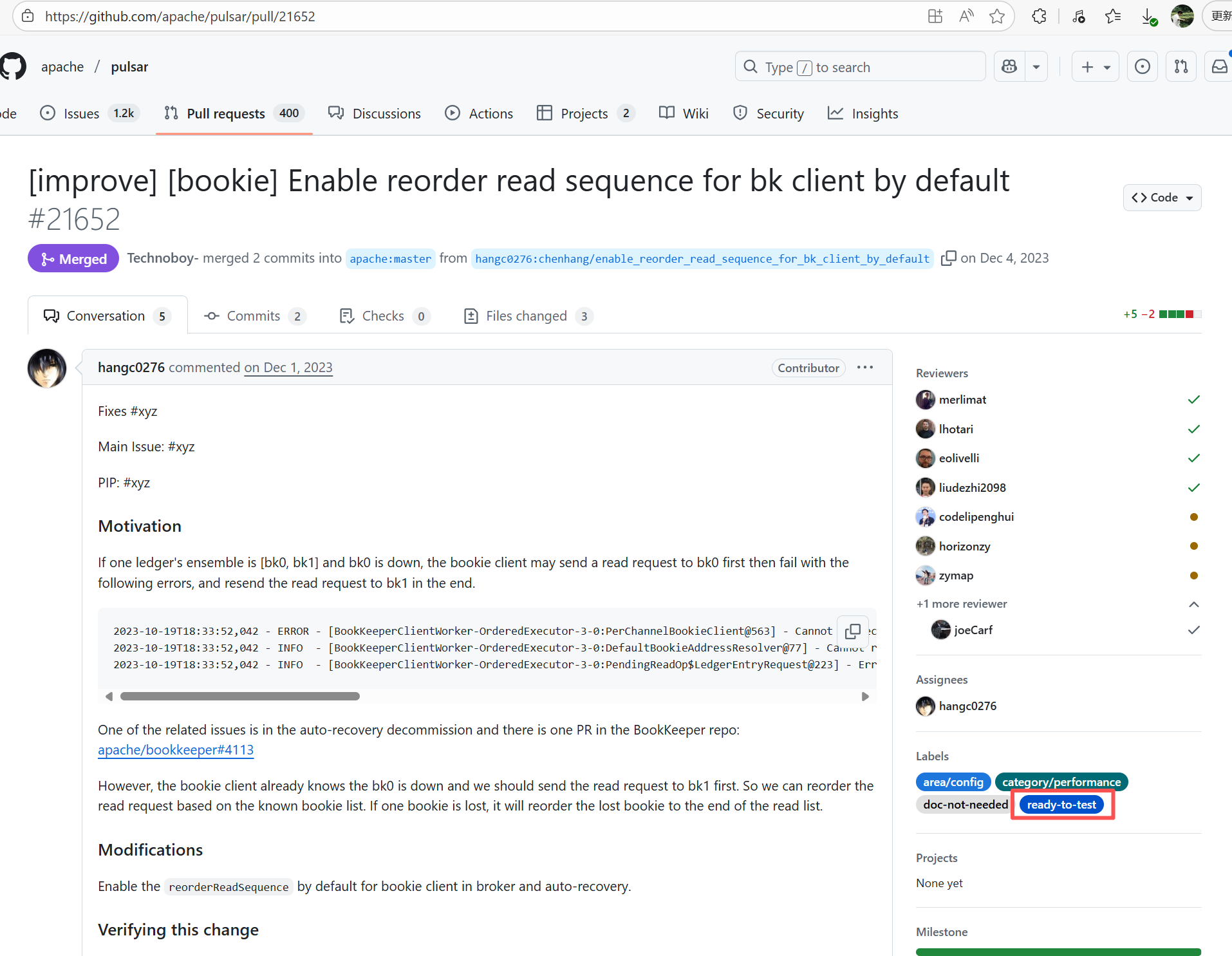

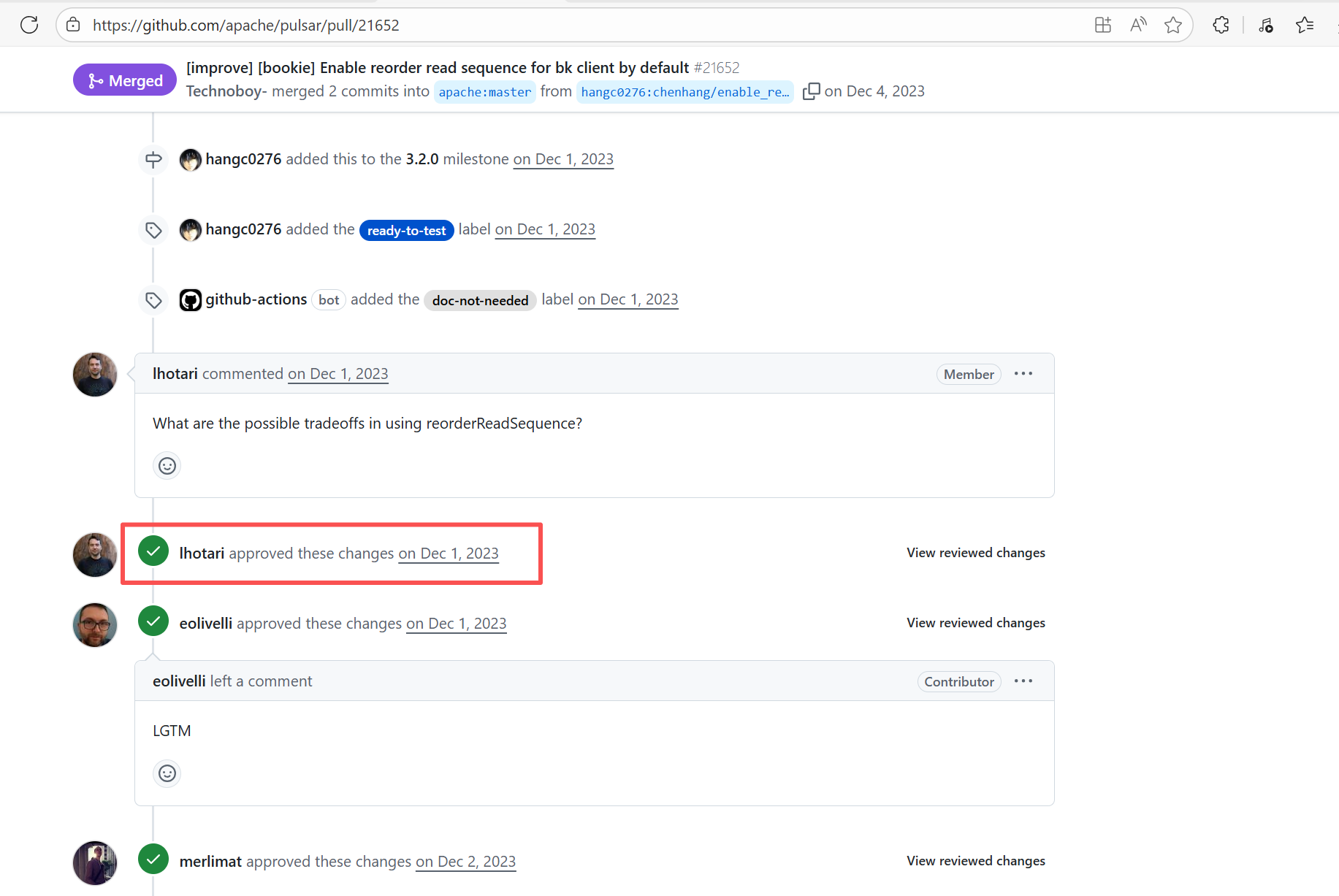

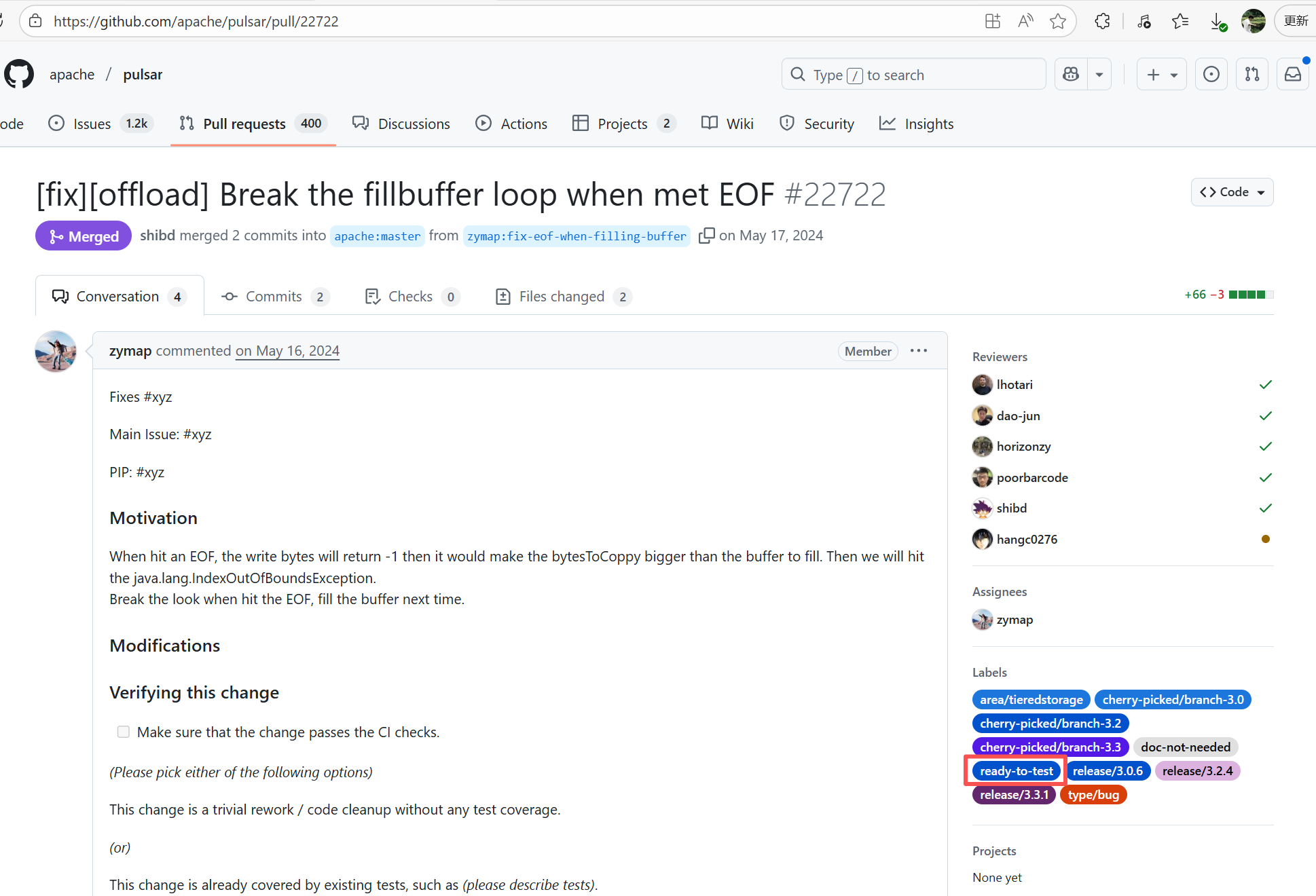

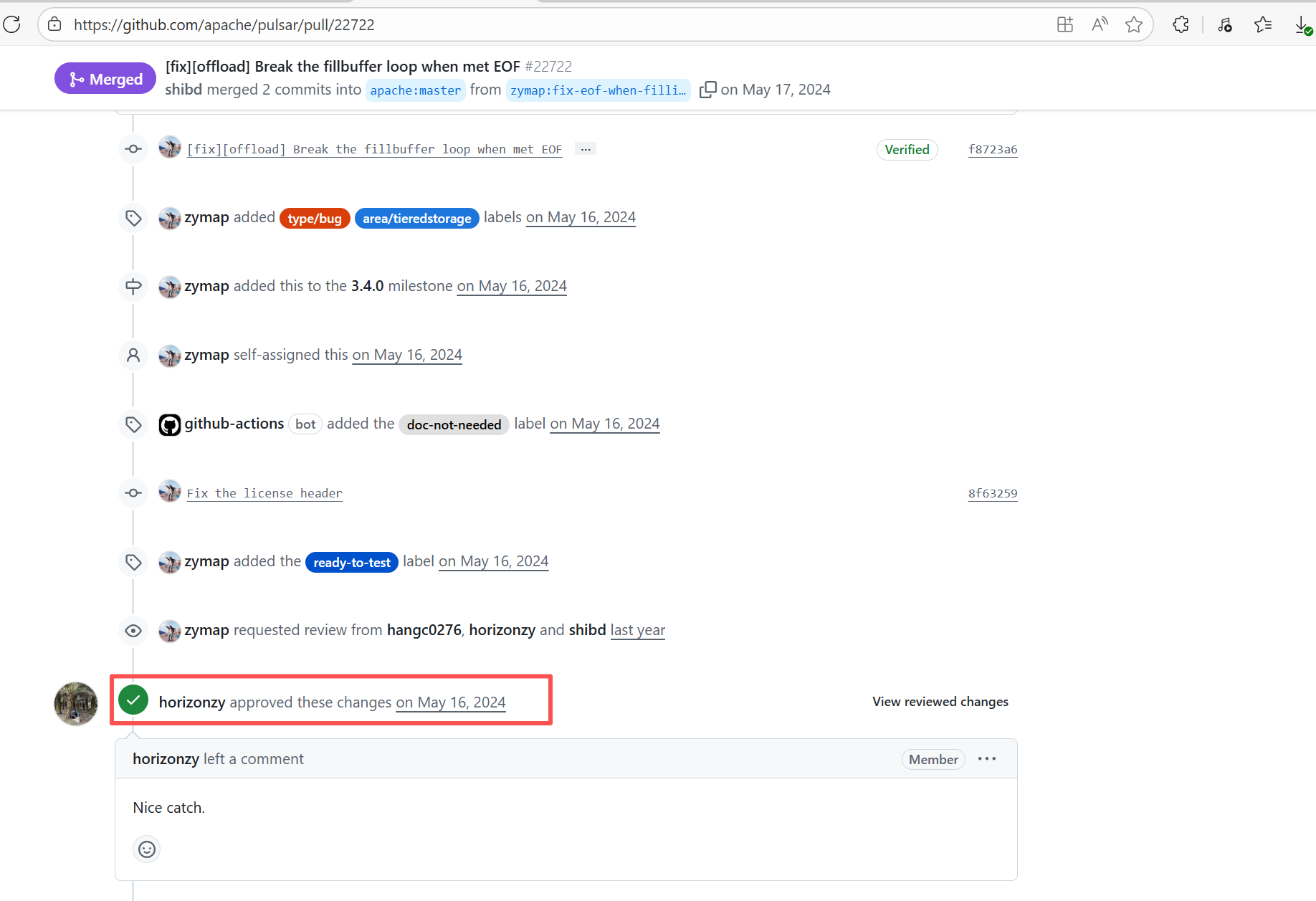

| apache/pulsar |

19209414833 |

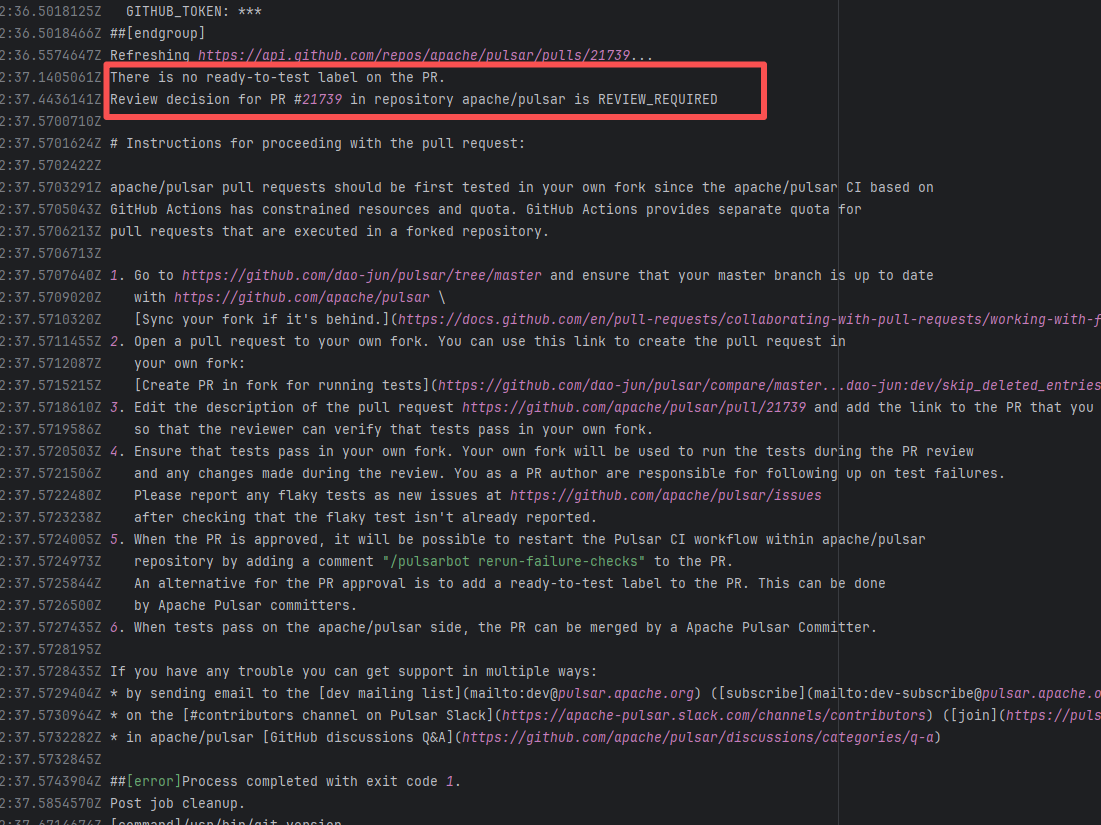

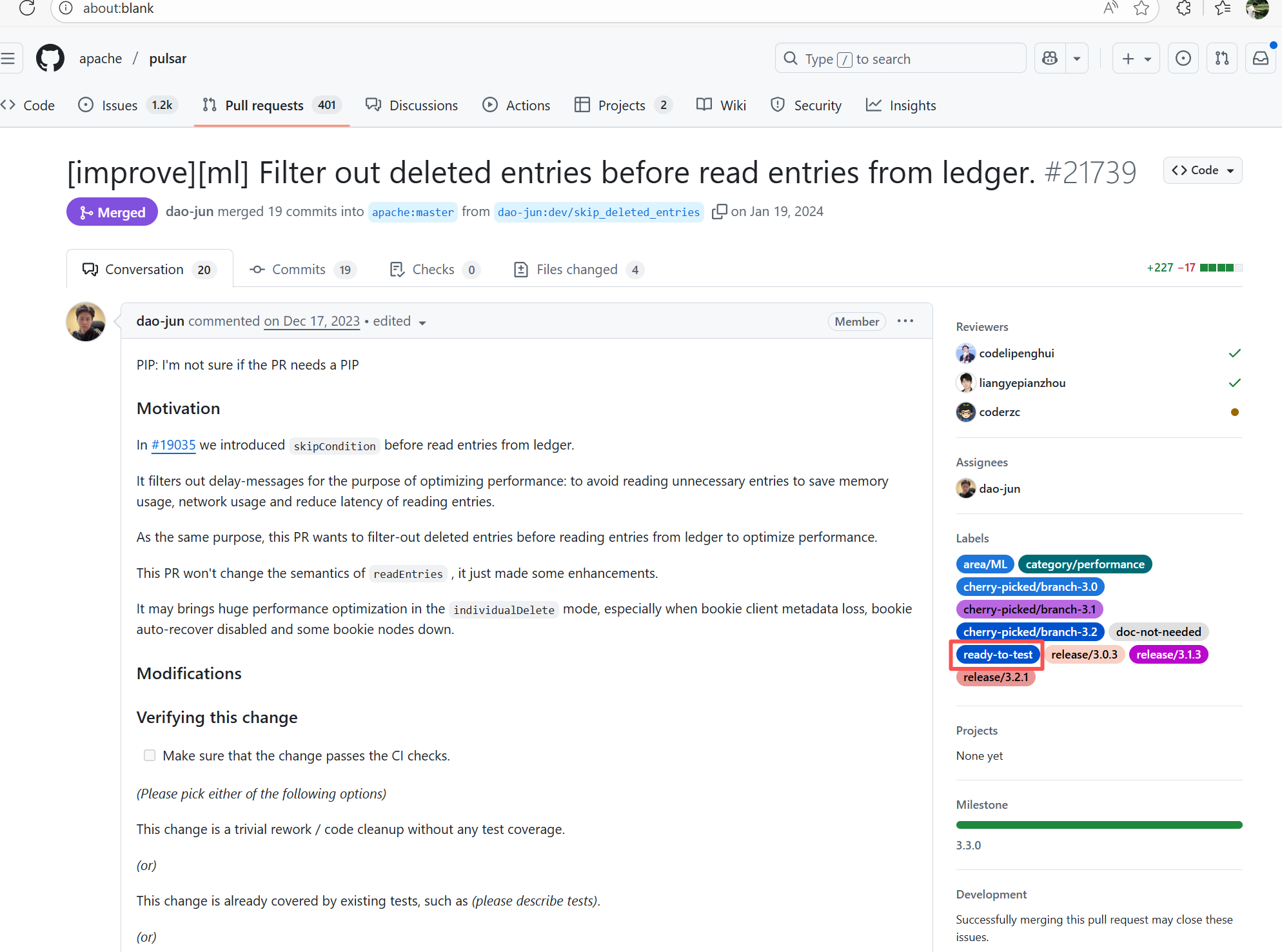

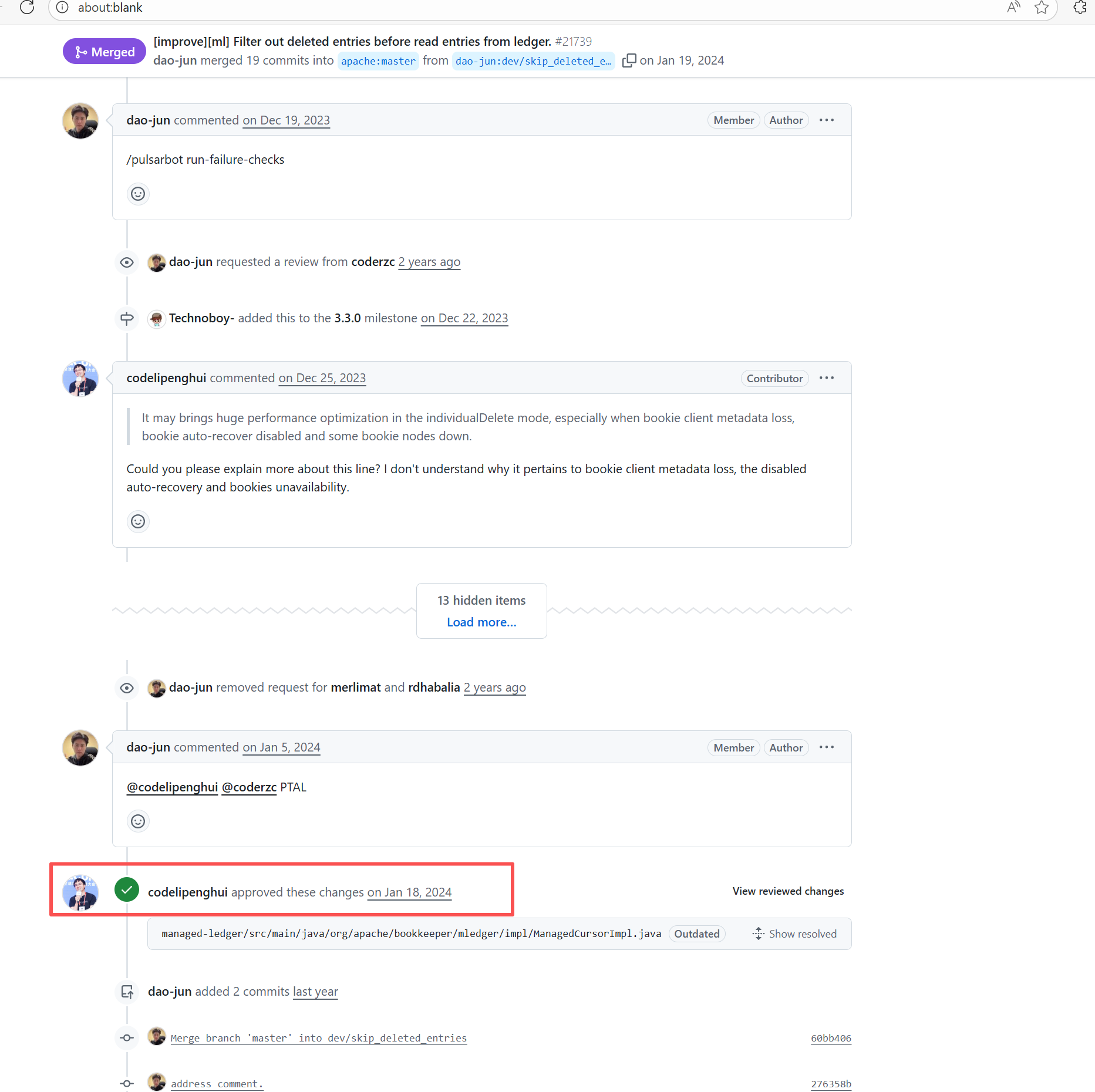

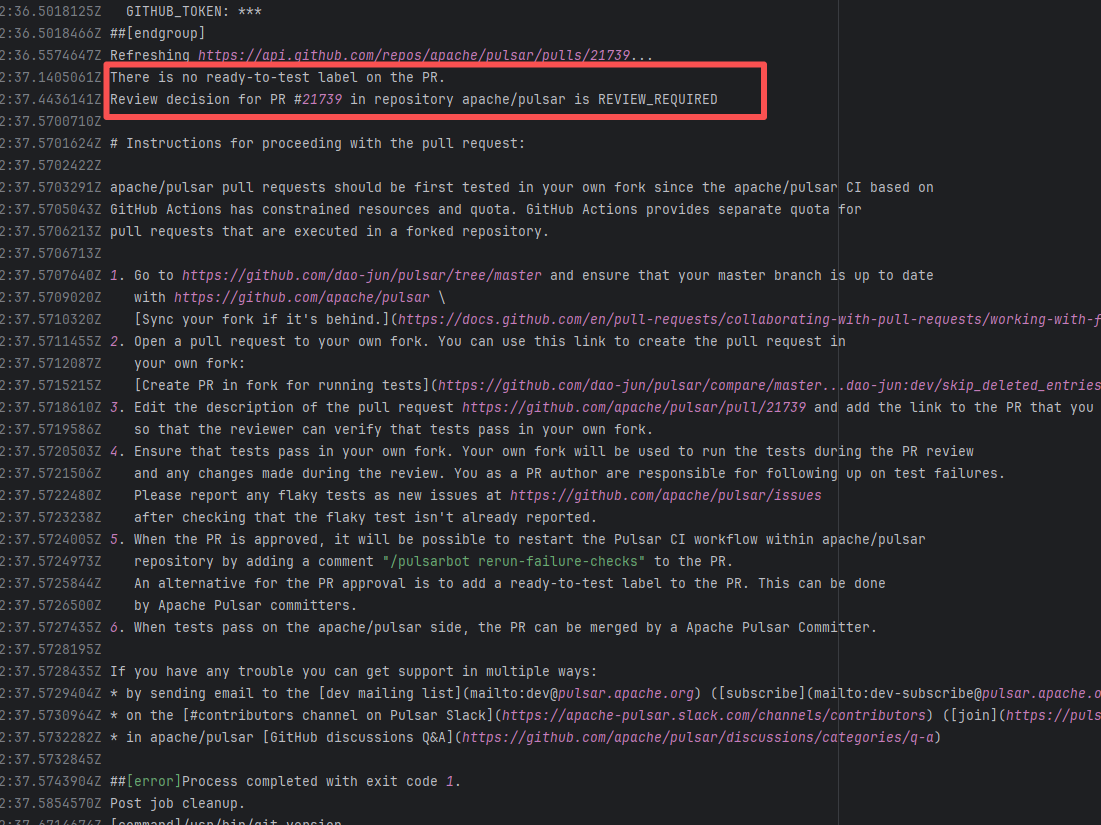

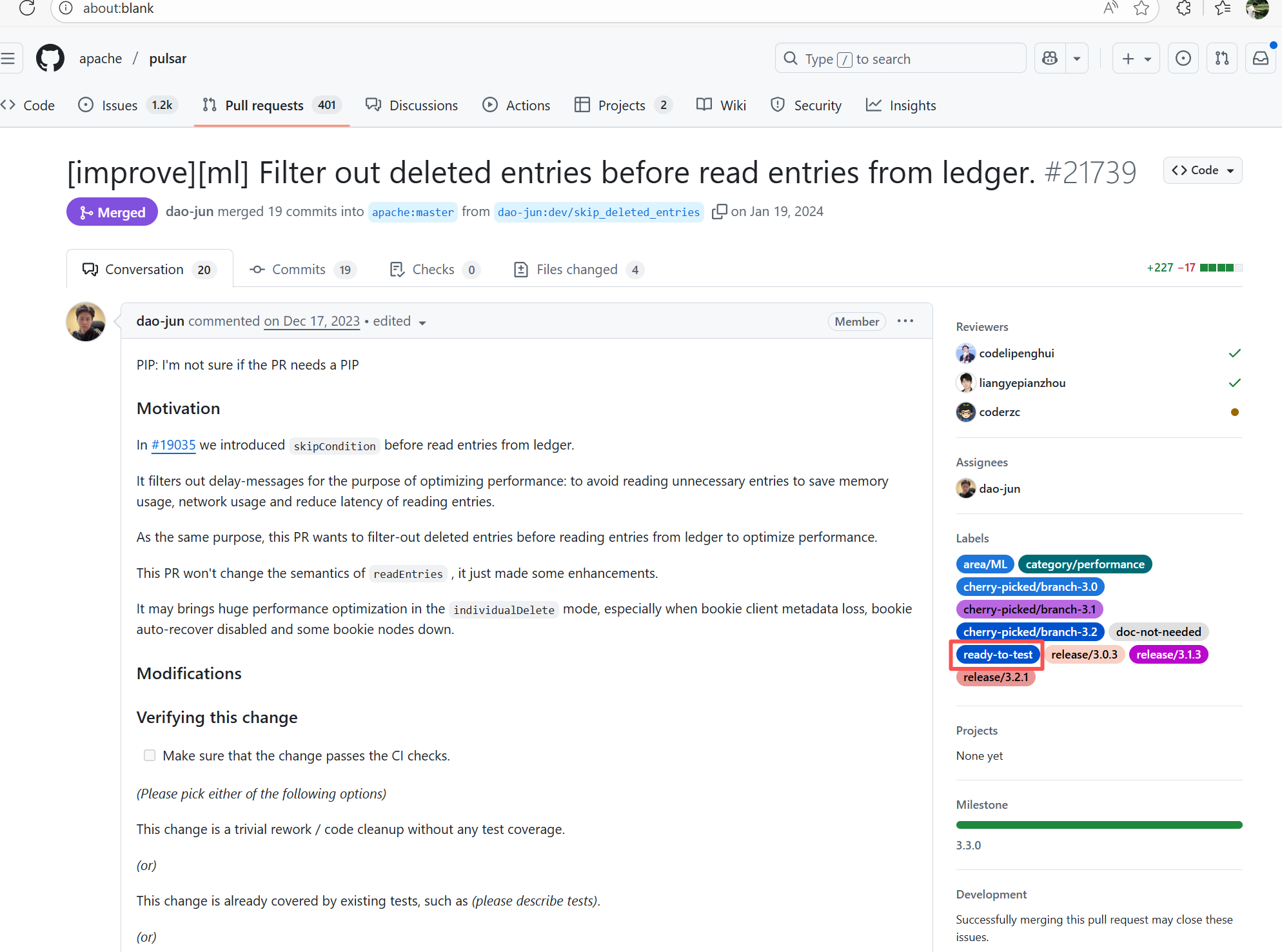

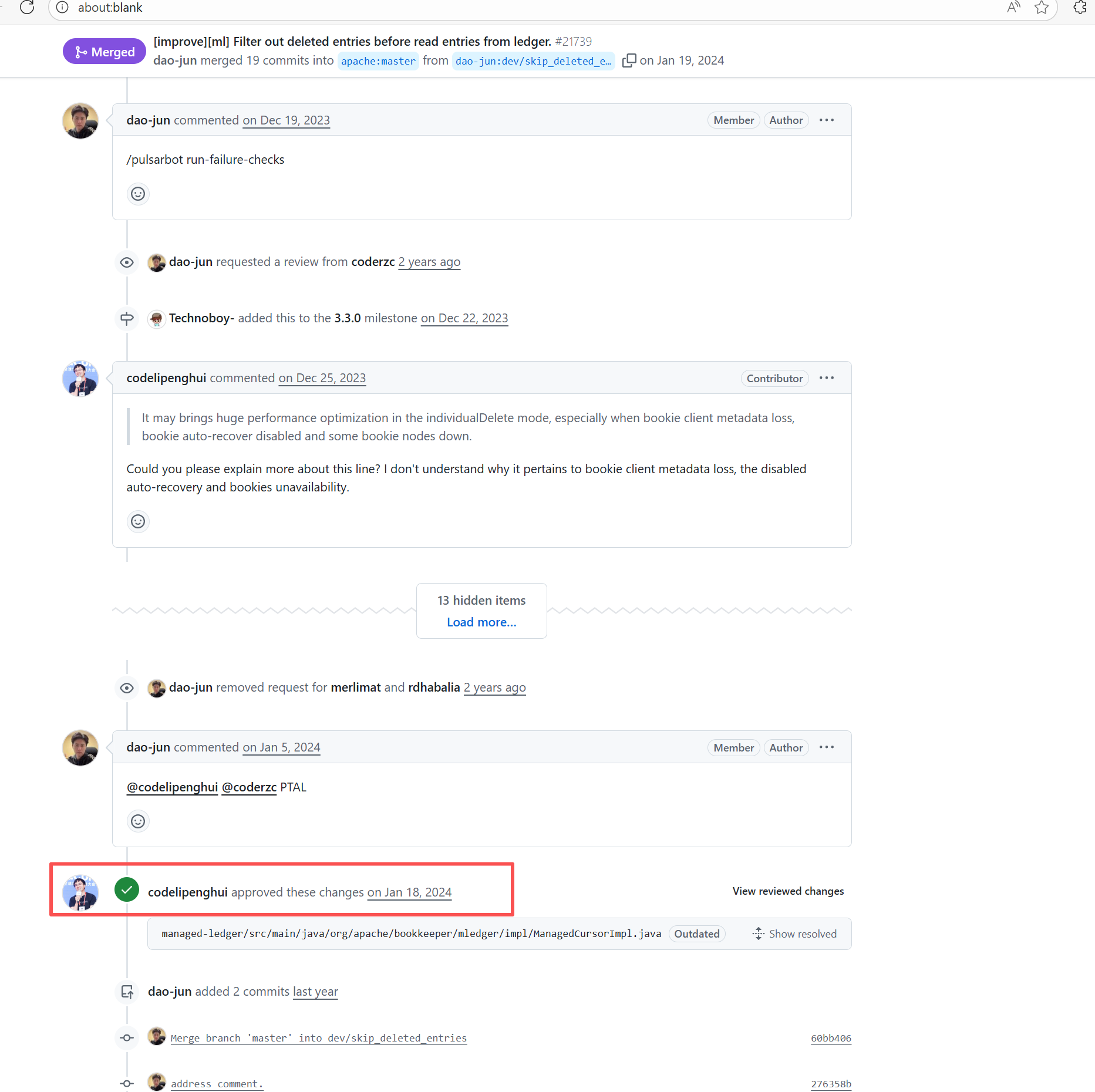

Workflow Policy Violation |

Error: Operations on the PR #21652 in the Apache Pulsar repository are currently restricted. The core reason is that the PR is missing the "ready-to-test" label and is in the "REVIEW_REQUIRED" state, so subsequent operations are prohibited.

Root Cause: CI based on GitHub Actions in the main repository has resource and quota limits. The official mandatory requirement is: all PRs must first complete test verification in the contributor's personal Fork repository. Only after confirming there are no problems can they be submitted to the main repository, to avoid occupying the limited CI quota of the main repository.

After a PR is submitted, it is necessary to contact the repository administrator to add the "ready-to-test" label to the PR. After the label is added, the main repository CI will allow the test process to execute; the repository administrator will review the code, modify the code according to the review comments (if any), and push it to the corresponding branch of the Fork repository (the PR will automatically synchronize the modifications). After the review is passed, the PR state will change from "REVIEW_REQUIRED" to "APPROVED", and then the subsequent merging process can be entered. |

Contact the relevant personnel to conduct testing and review according to the main repository requirements, and rerun after completion |

|

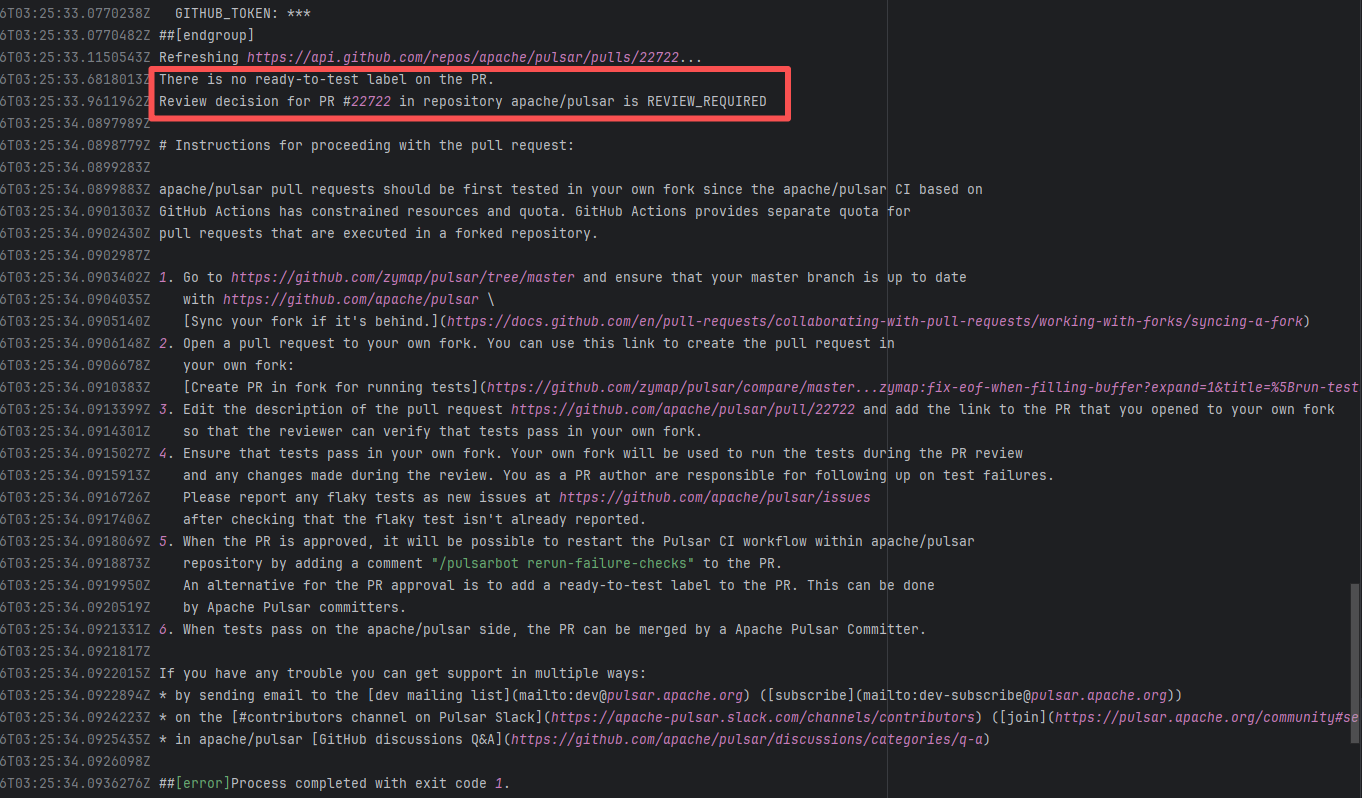

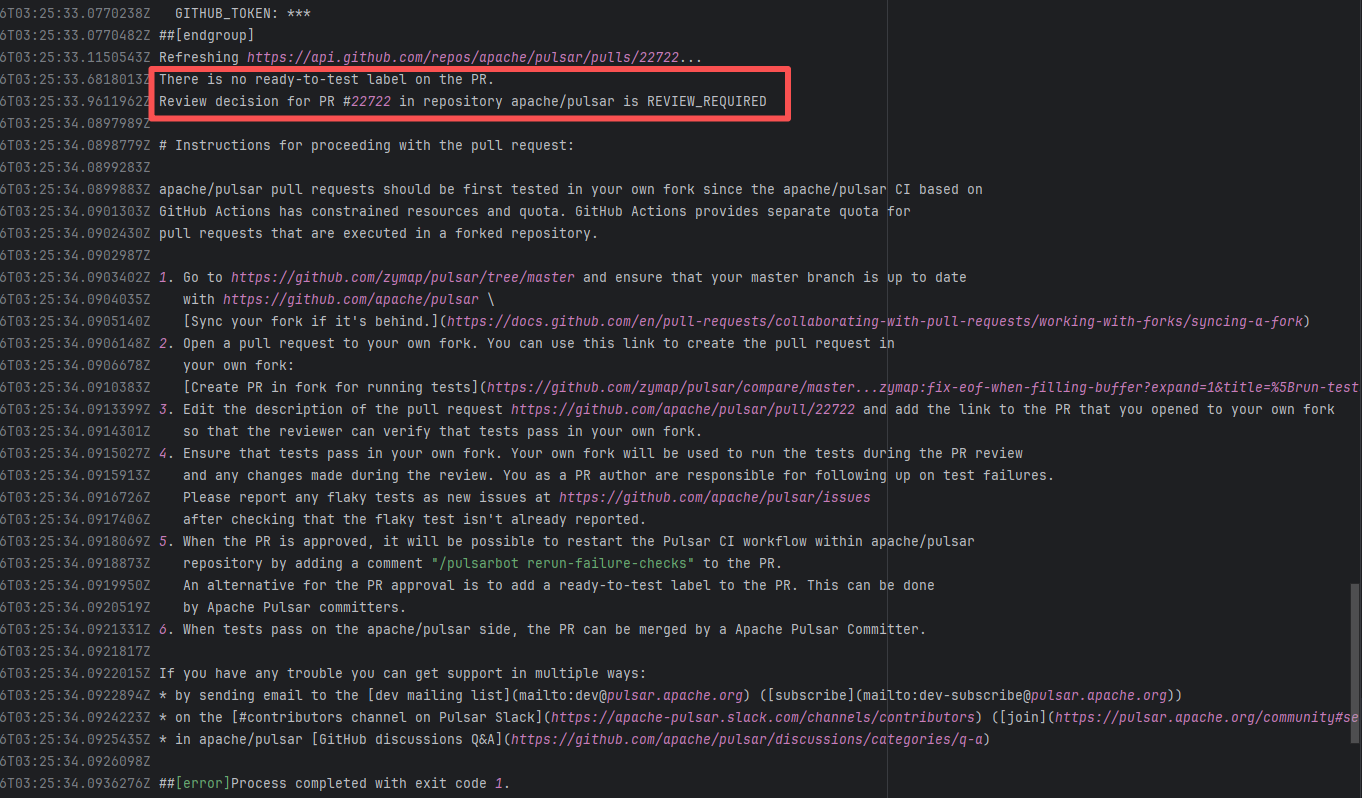

| apache/pulsar |

25032490889 |

Workflow Policy Violation |

Error: Operations on the PR #22722 in the Apache Pulsar repository are currently restricted. The core reason is that the PR is missing the "ready-to-test" label and is in the "REVIEW_REQUIRED" state, so subsequent operations are prohibited.

Root Cause: CI based on GitHub Actions in the main repository has resource and quota limits. The official mandatory requirement is: all PRs must first complete test verification in the contributor's personal Fork repository. Only after confirming there are no problems can they be submitted to the main repository, to avoid occupying the limited CI quota of the main repository.

After a PR is submitted, it is necessary to contact the repository administrator to add the "ready-to-test" label to the PR. After the label is added, the main repository CI will allow the test process to execute; the repository administrator will review the code, modify the code according to the review comments (if any), and push it to the corresponding branch of the Fork repository (the PR will automatically synchronize the modifications). After the review is passed, the PR state will change from "REVIEW_REQUIRED" to "APPROVED", and then the subsequent merging process can be entered. |

Contact the relevant personnel to conduct testing and review according to the main repository requirements, and rerun after completion |

|

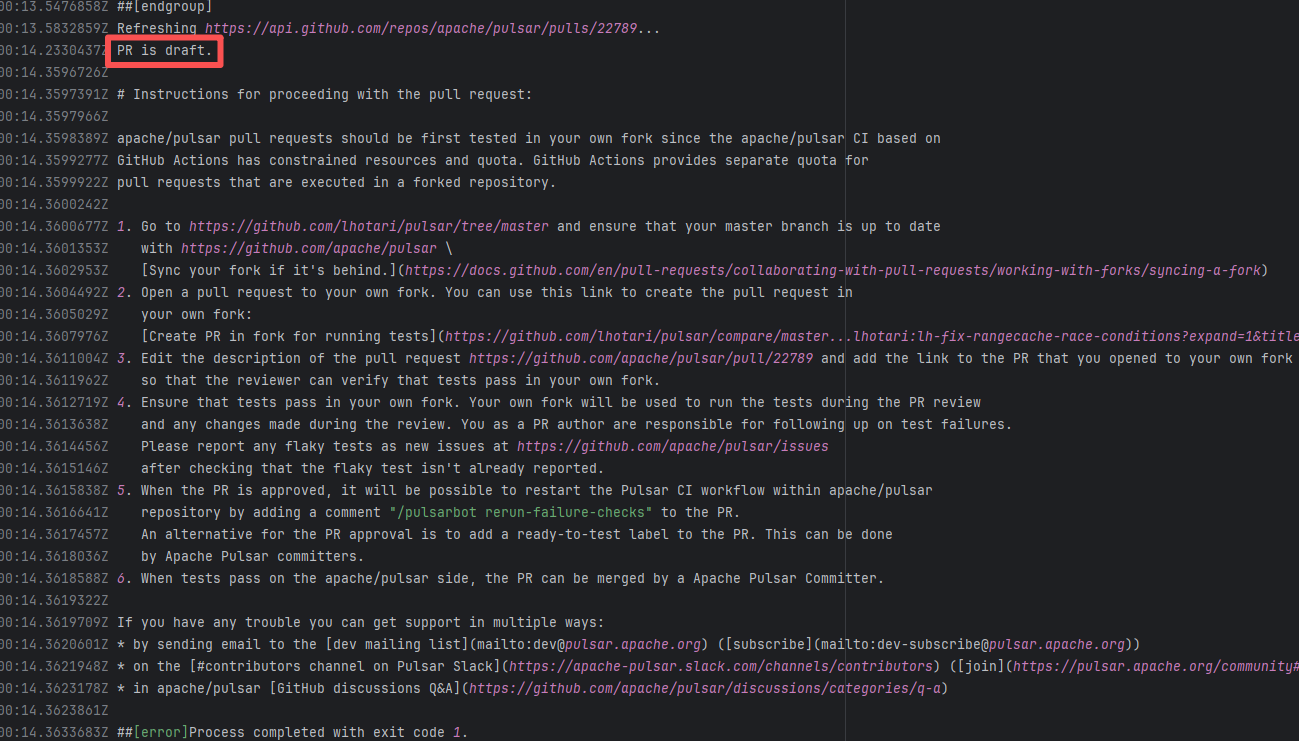

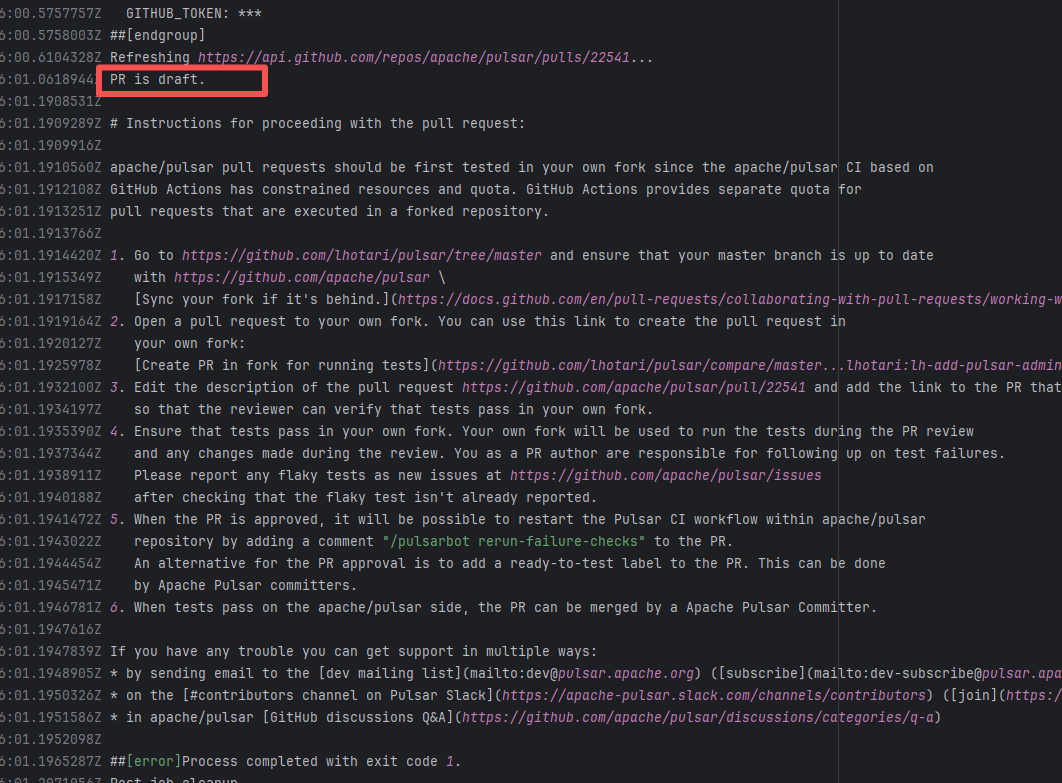

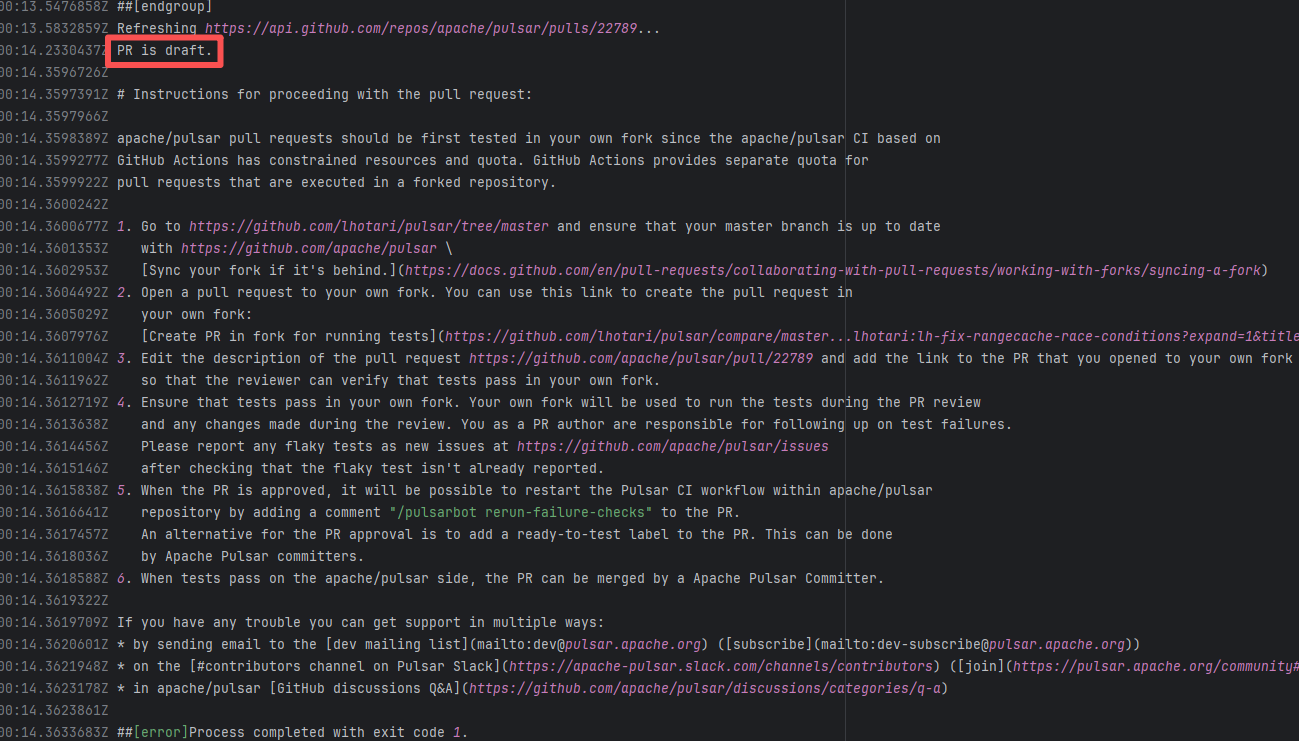

| apache/pulsar |

25623936811 |

Workflow Policy Violation |

Error: "Draft PRs" in GitHub are usually used to mark "work in progress". The Apache Pulsar project places additional restrictions on the use of main repository CI resources for such PRs, mandating verification in the Fork repository first to avoid wasting resources.

Root Cause: Rerun succeeded after converting the PR from "Draft state" to "Formal PR" once it was ready. |

Convert the PR from "Draft state" to "Formal PR" once it is ready, and rerun successfully. |

|

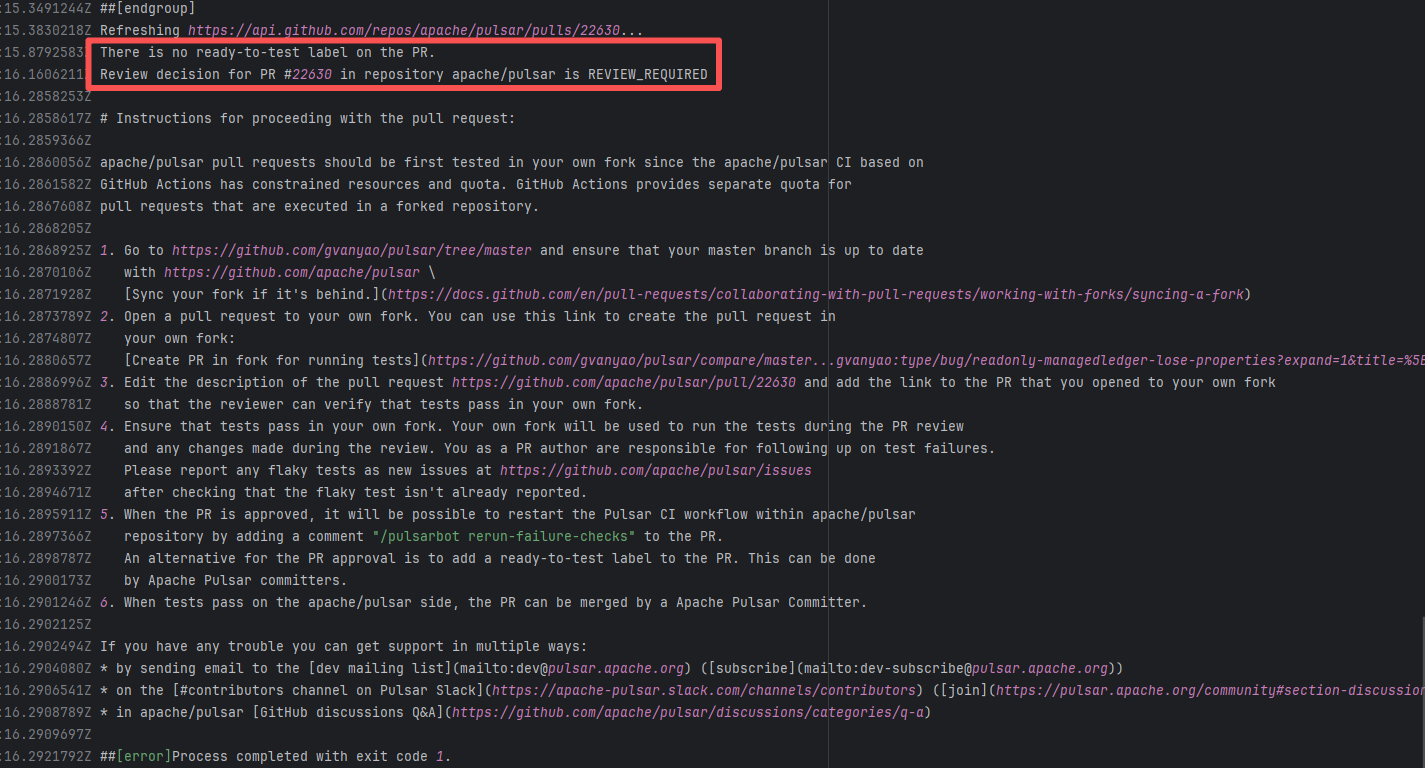

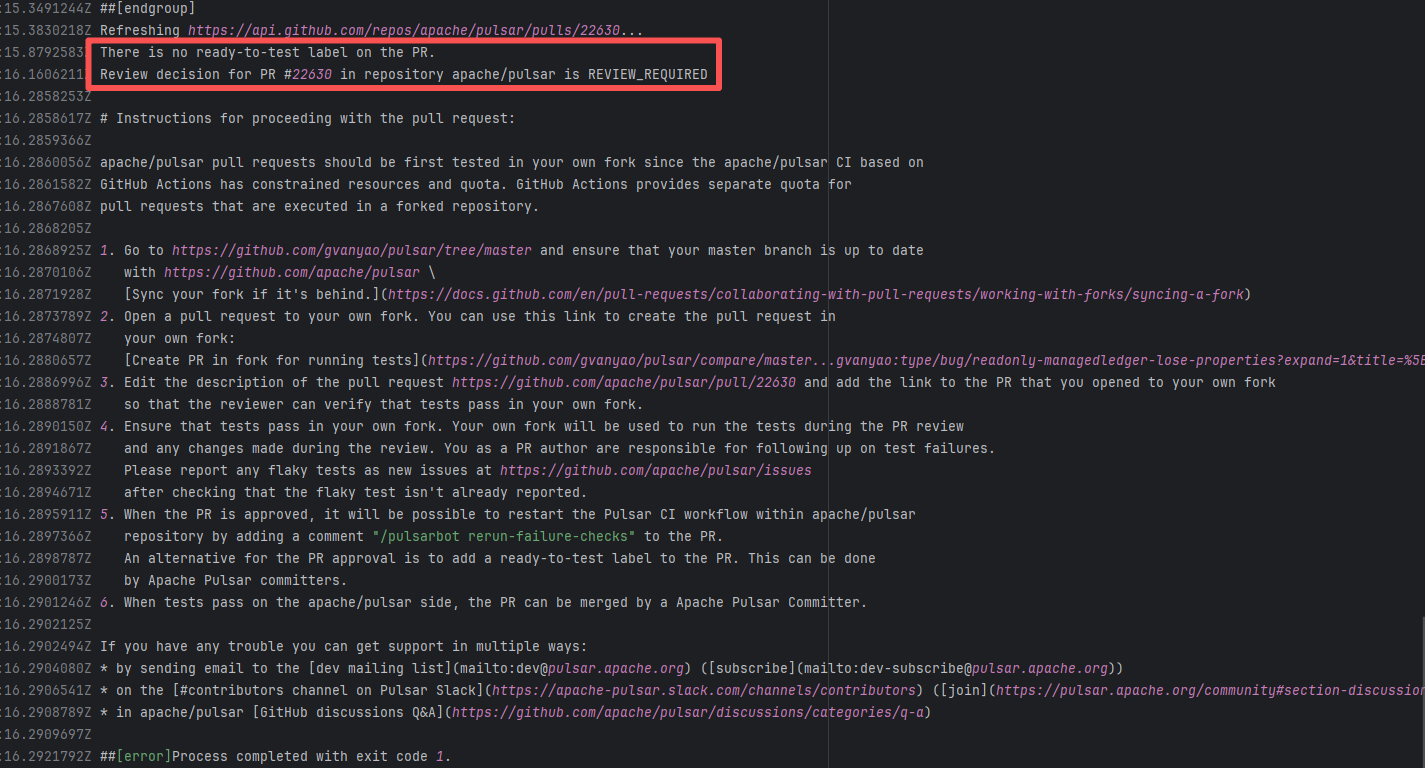

| apache/pulsar |

24546846695 |

Workflow Policy Violation |

Error: Operations on the PR #22630 in the Apache Pulsar repository are currently restricted. The core reason is that the PR is missing the "ready-to-test" label and is in the "REVIEW_REQUIRED" state, so subsequent operations are prohibited.

Root Cause: CI based on GitHub Actions in the main repository has resource and quota limits. The official mandatory requirement is: all PRs must first complete test verification in the contributor's personal Fork repository. Only after confirming there are no problems can they be submitted to the main repository, to avoid occupying the limited CI quota of the main repository.

After a PR is submitted, it is necessary to contact the repository administrator to add the "ready-to-test" label to the PR. After the label is added, the main repository CI will allow the test process to execute; the repository administrator will review the code, modify the code according to the review comments (if any), and push it to the corresponding branch of the Fork repository (the PR will automatically synchronize the modifications). After the review is passed, the PR state will change from "REVIEW_REQUIRED" to "APPROVED", and then the subsequent merging process can be entered. |

Contact the relevant personnel to conduct testing and review according to the main repository requirements, and rerun after completion |

|

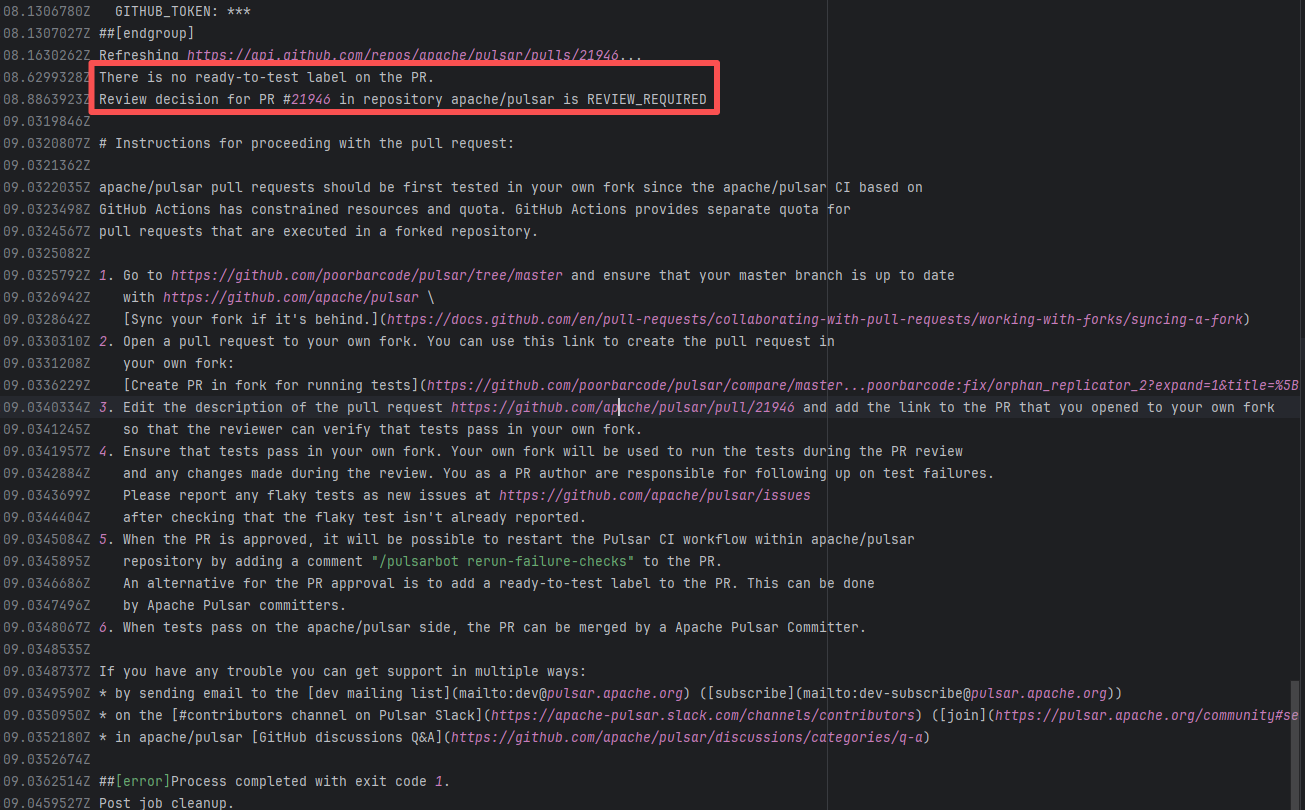

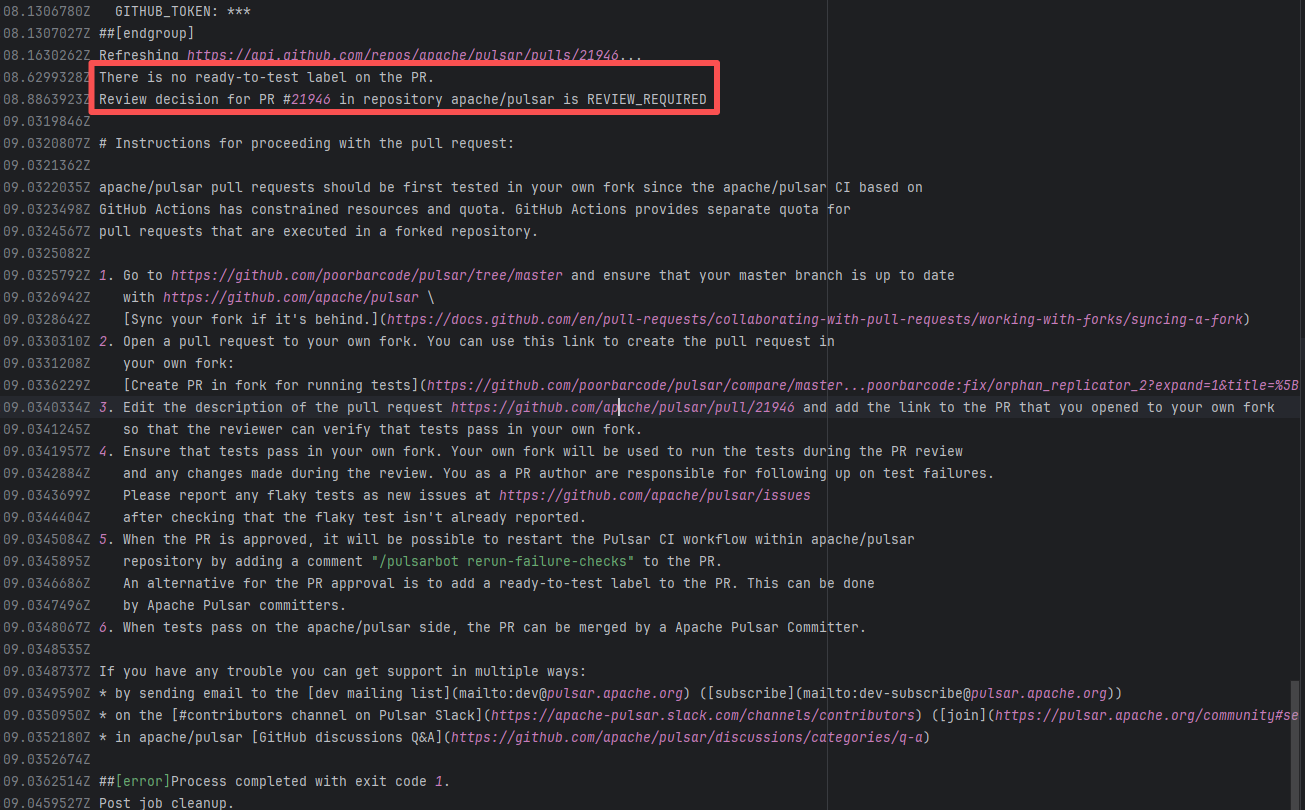

| apache/pulsar |

21093406893 |

Workflow Policy Violation |

Error: Operations on the PR #21946 in the Apache Pulsar repository are currently restricted. The core reason is that the PR is missing the "ready-to-test" label and is in the "REVIEW_REQUIRED" state, so subsequent operations are prohibited.

Root Cause: CI based on GitHub Actions in the main repository has resource and quota limits. The official mandatory requirement is: all PRs must first complete test verification in the contributor's personal Fork repository. Only after confirming there are no problems can they be submitted to the main repository, to avoid occupying the limited CI quota of the main repository.

After a PR is submitted, it is necessary to contact the repository administrator to add the "ready-to-test" label to the PR. After the label is added, the main repository CI will allow the test process to execute; the repository administrator will review the code, modify the code according to the review comments (if any), and push it to the corresponding branch of the Fork repository (the PR will automatically synchronize the modifications). After the review is passed, the PR state will change from "REVIEW_REQUIRED" to "APPROVED", and then the subsequent merging process can be entered. |

Contact the relevant personnel to conduct testing and review according to the main repository requirements, and rerun after completion |

|

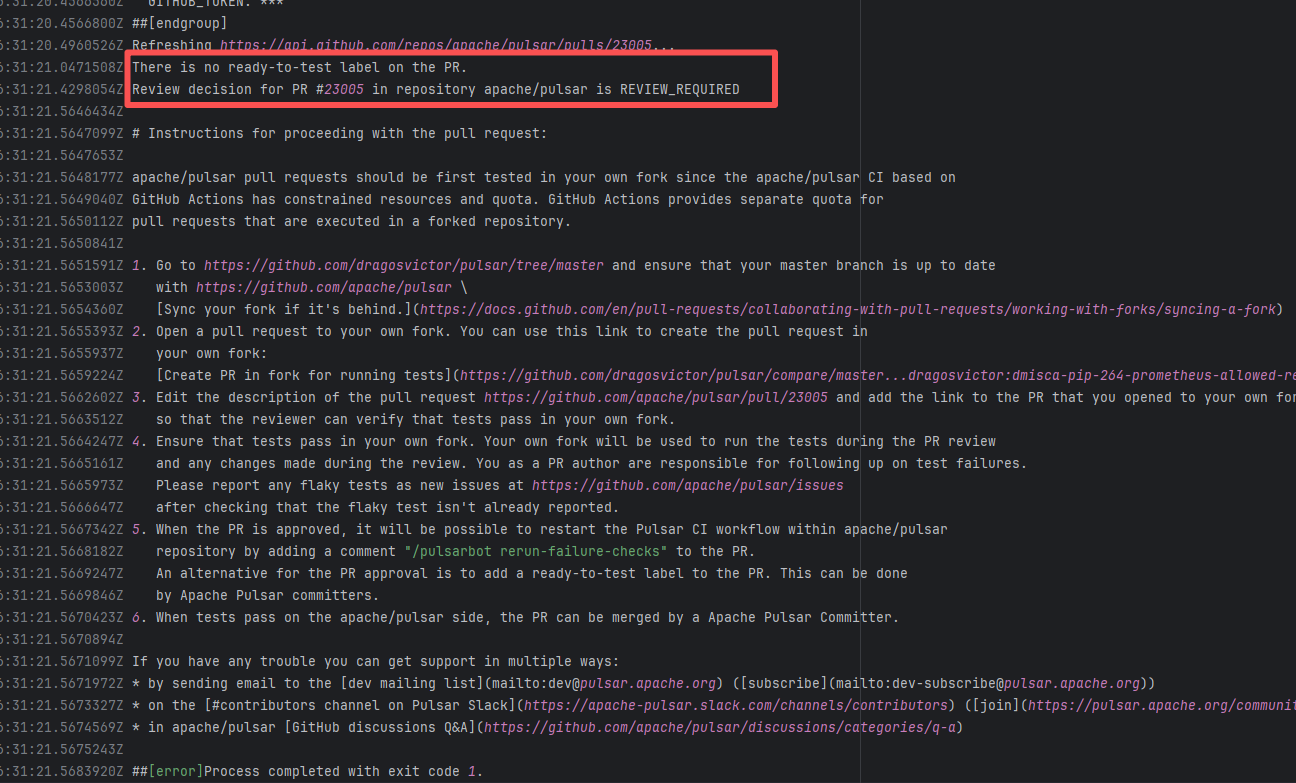

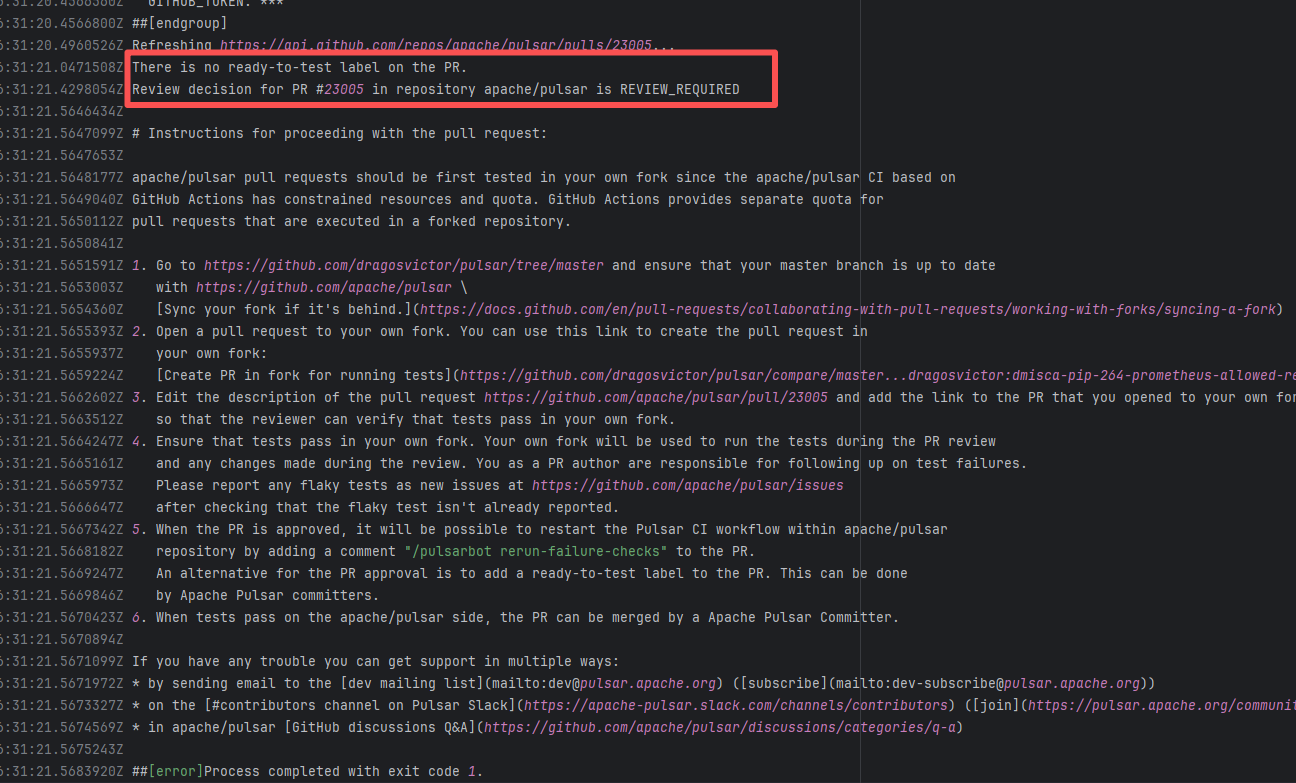

| apache/pulsar |

27071308443 |

Workflow Policy Violation |

Error: Operations on the PR #23005 in the Apache Pulsar repository are currently restricted. The core reason is that the PR is missing the "ready-to-test" label and is in the "REVIEW_REQUIRED" state, so subsequent operations are prohibited.

Root Cause: CI based on GitHub Actions in the main repository has resource and quota limits. The official mandatory requirement is: all PRs must first complete test verification in the contributor's personal Fork repository. Only after confirming there are no problems can they be submitted to the main repository, to avoid occupying the limited CI quota of the main repository.

After a PR is submitted, it is necessary to contact the repository administrator to add the "ready-to-test" label to the PR. After the label is added, the main repository CI will allow the test process to execute; the repository administrator will review the code, modify the code according to the review comments (if any), and push it to the corresponding branch of the Fork repository (the PR will automatically synchronize the modifications). After the review is passed, the PR state will change from "REVIEW_REQUIRED" to "APPROVED", and then the subsequent merging process can be entered. |

Contact the relevant personnel to conduct testing and review according to the main repository requirements, and rerun after completion |

|

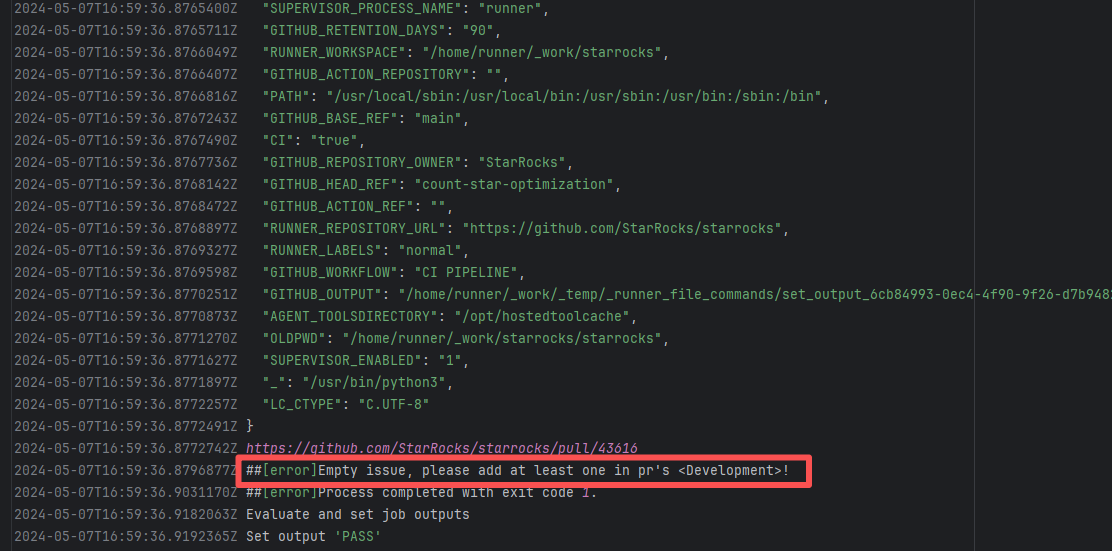

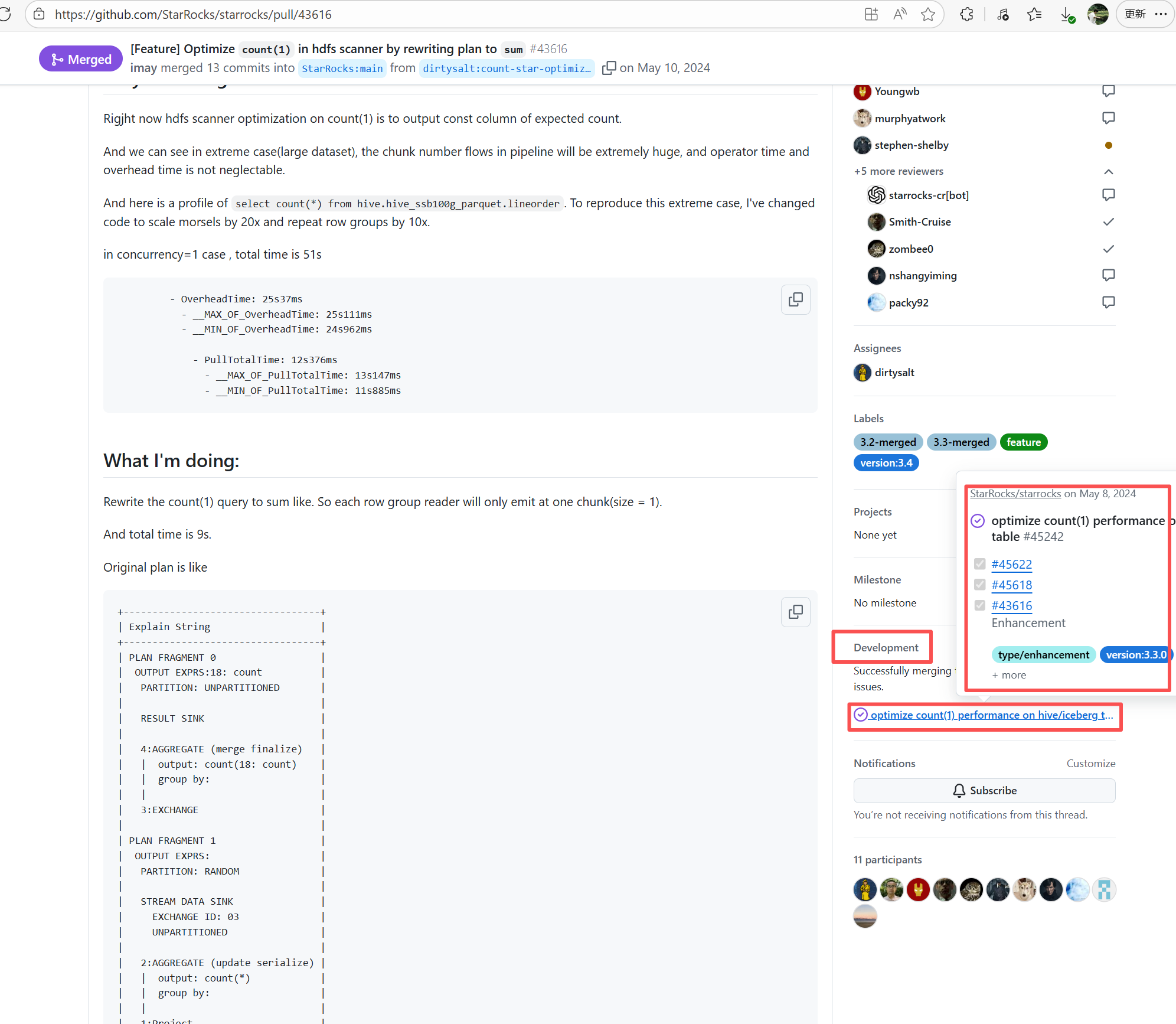

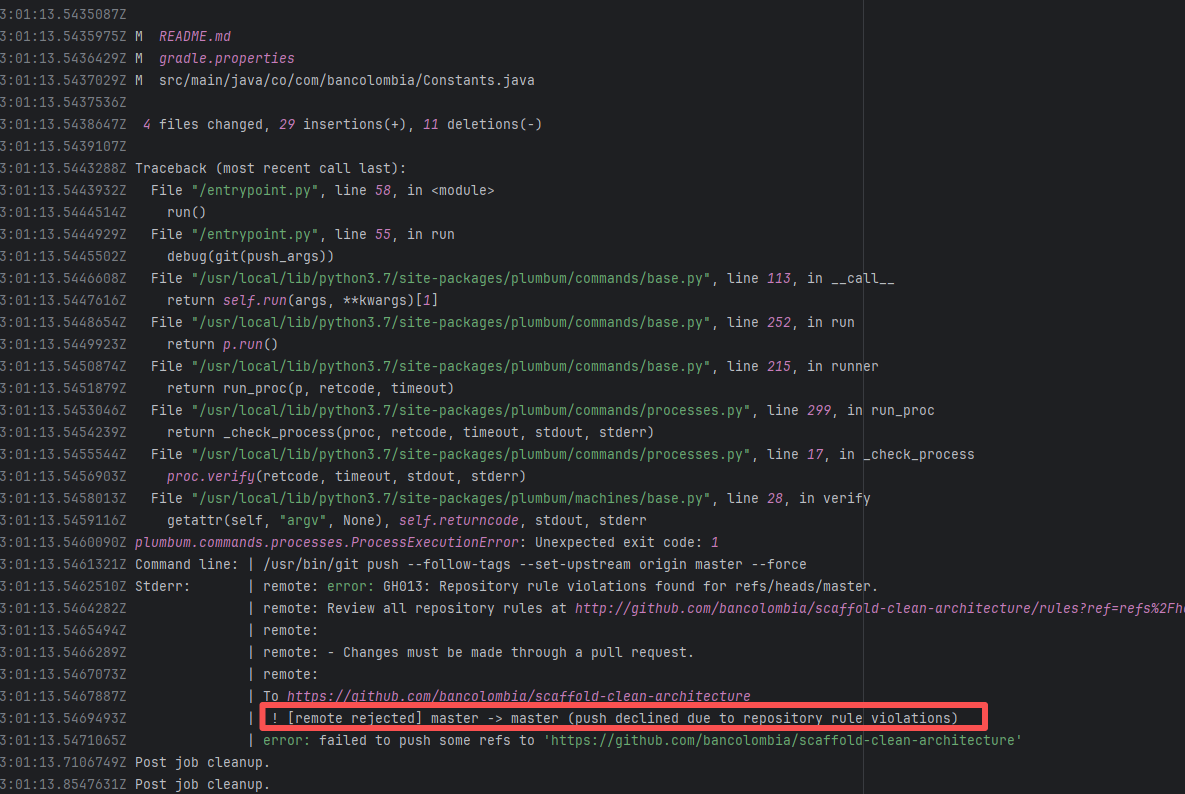

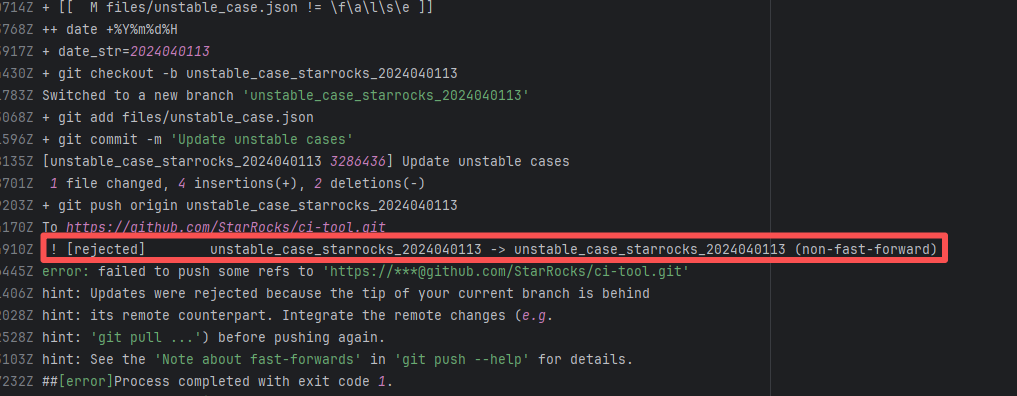

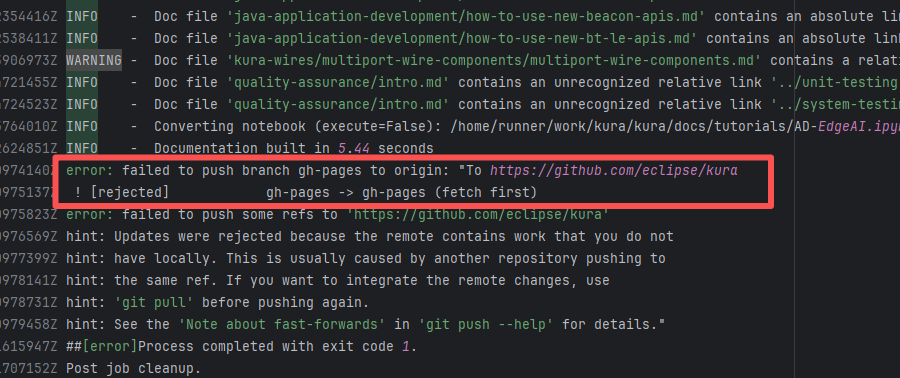

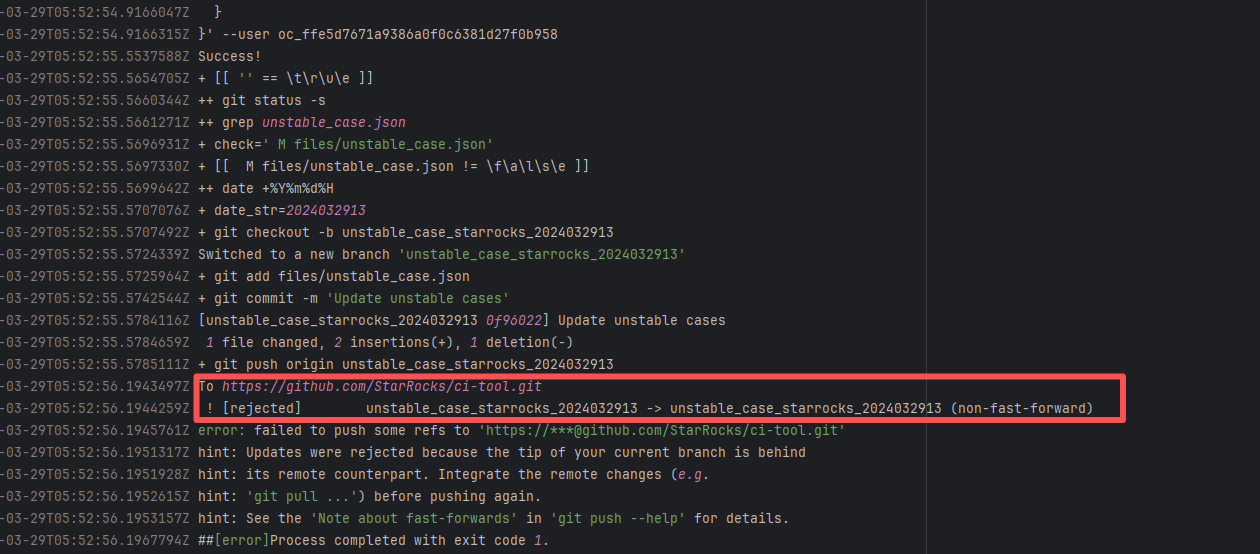

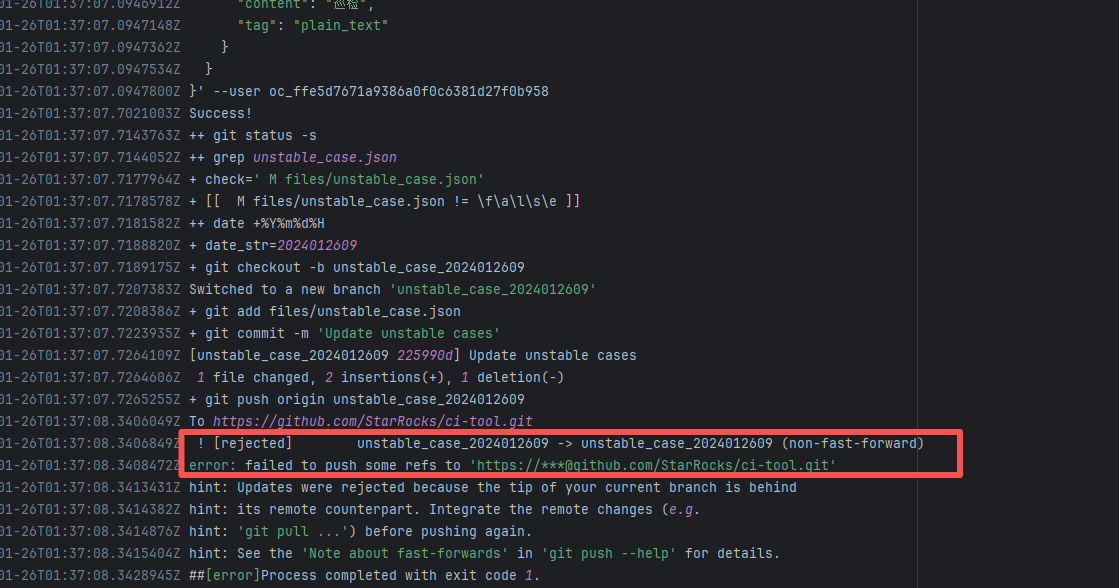

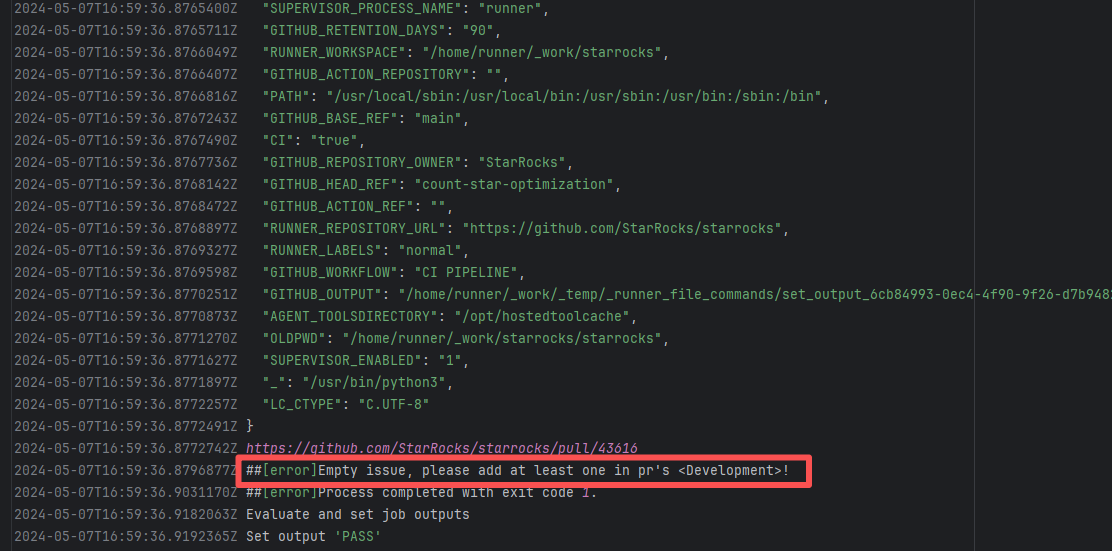

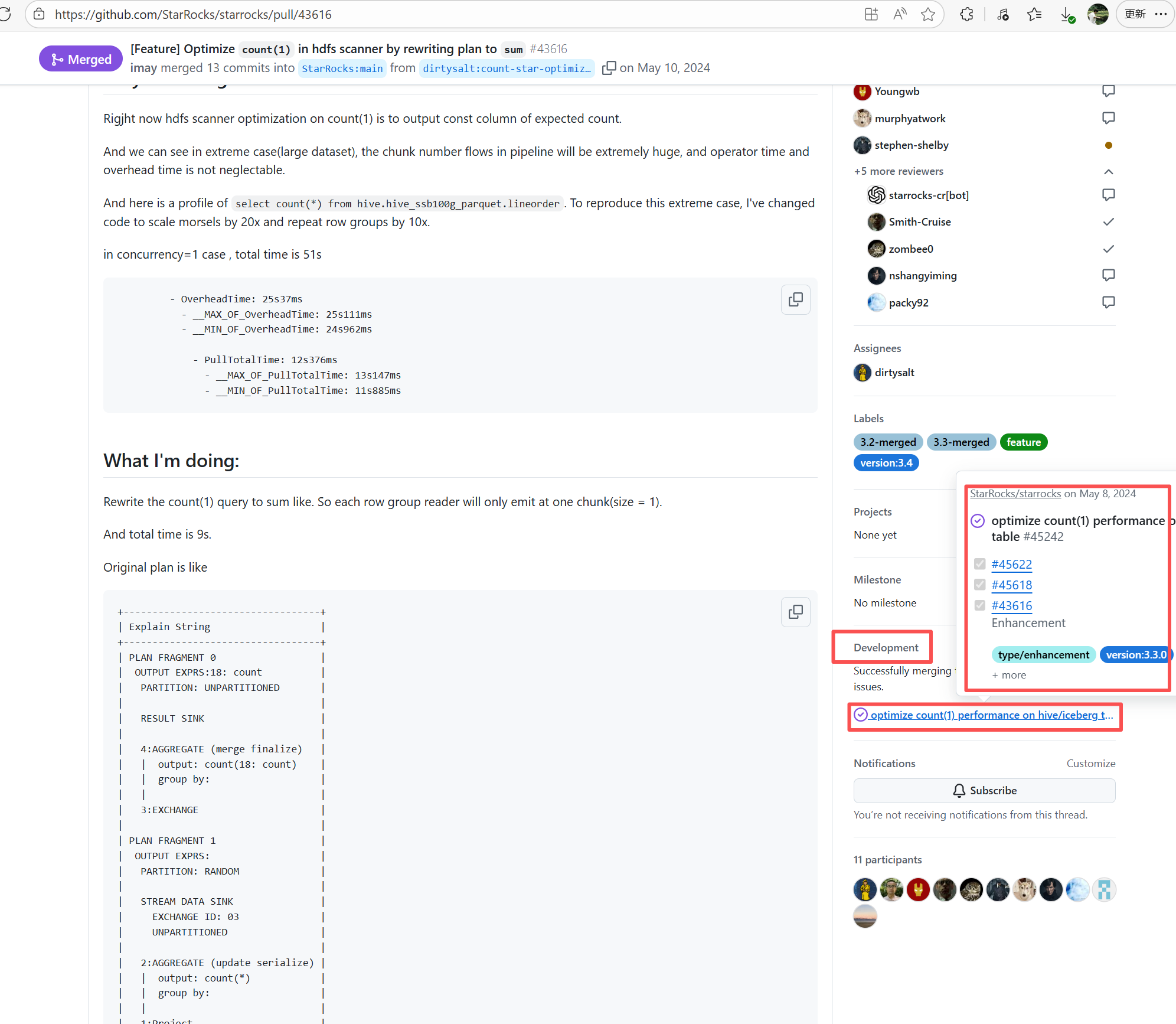

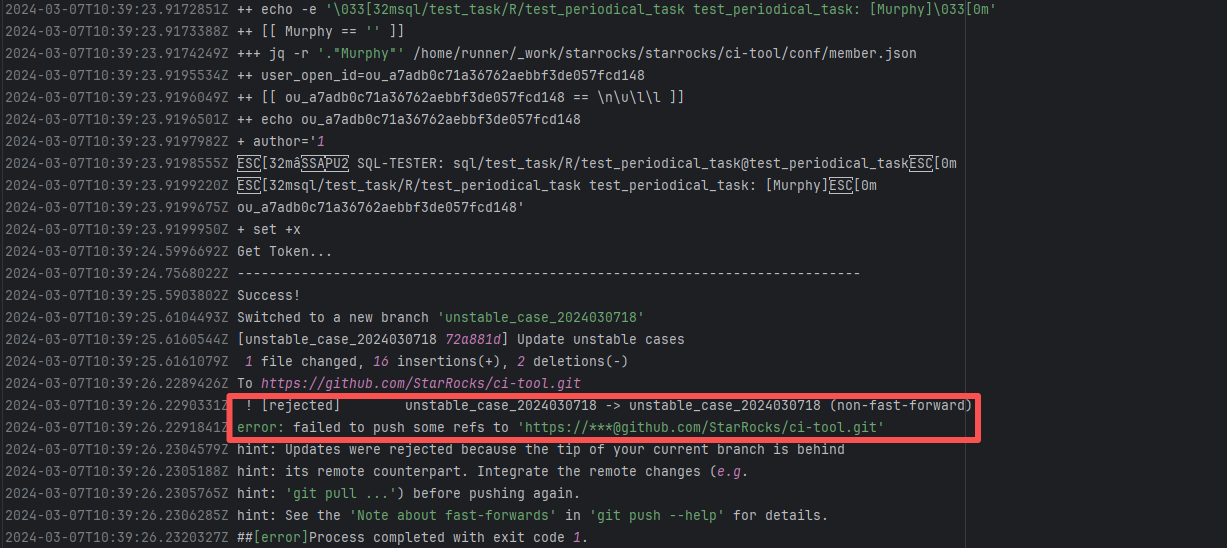

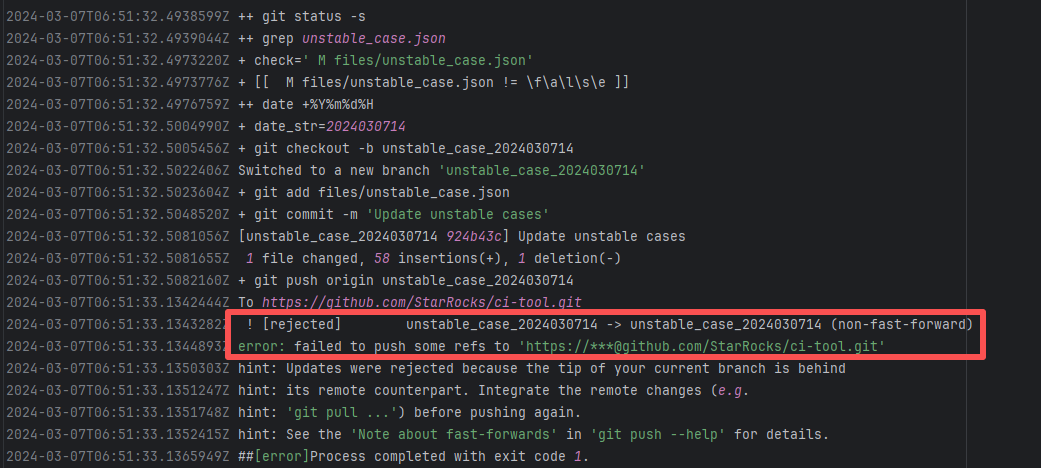

| StarRocks/starrocks |

24692245610 |

Workflow Policy Violation |

Error: The current main repository configuration requires at least one issue to be specified in the PR, but the current PR does not specify any issue.

Root Cause: Select and specify an issue under PR-<Development> |

Select and specify an issue under PR-<Development> |

|

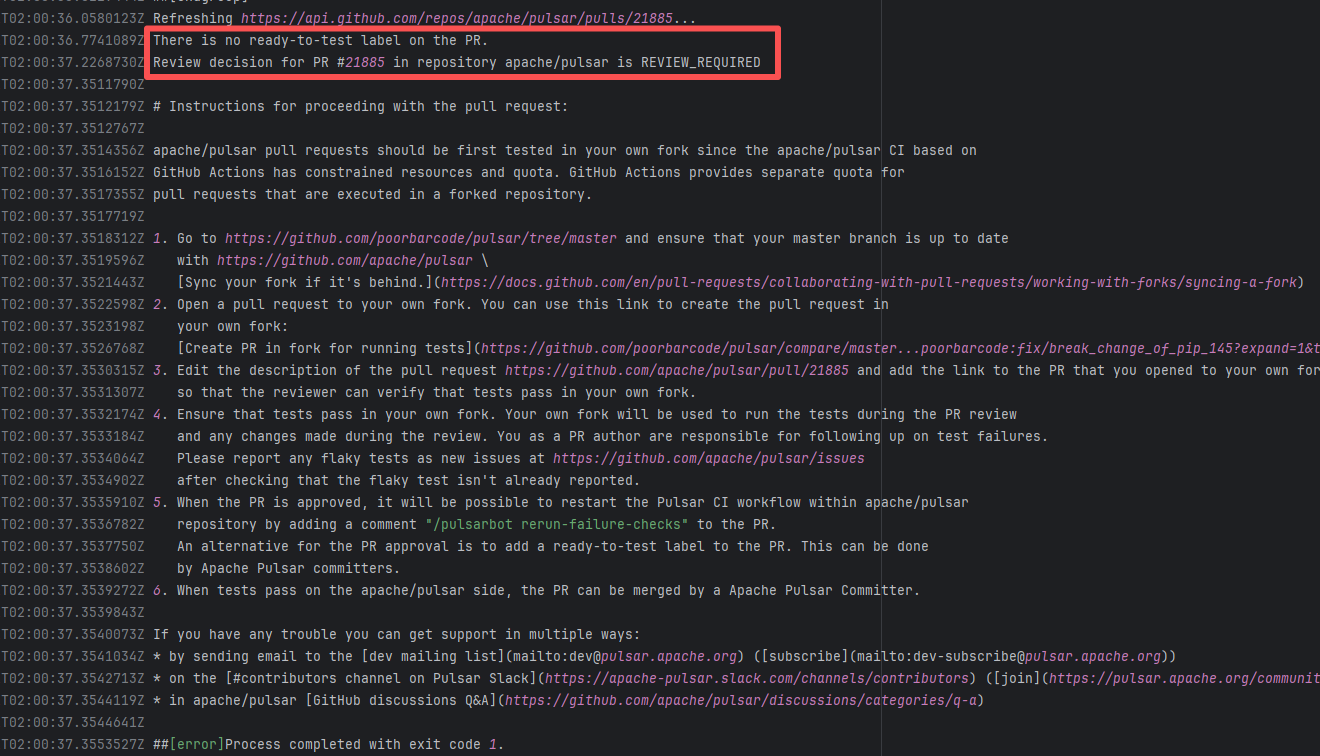

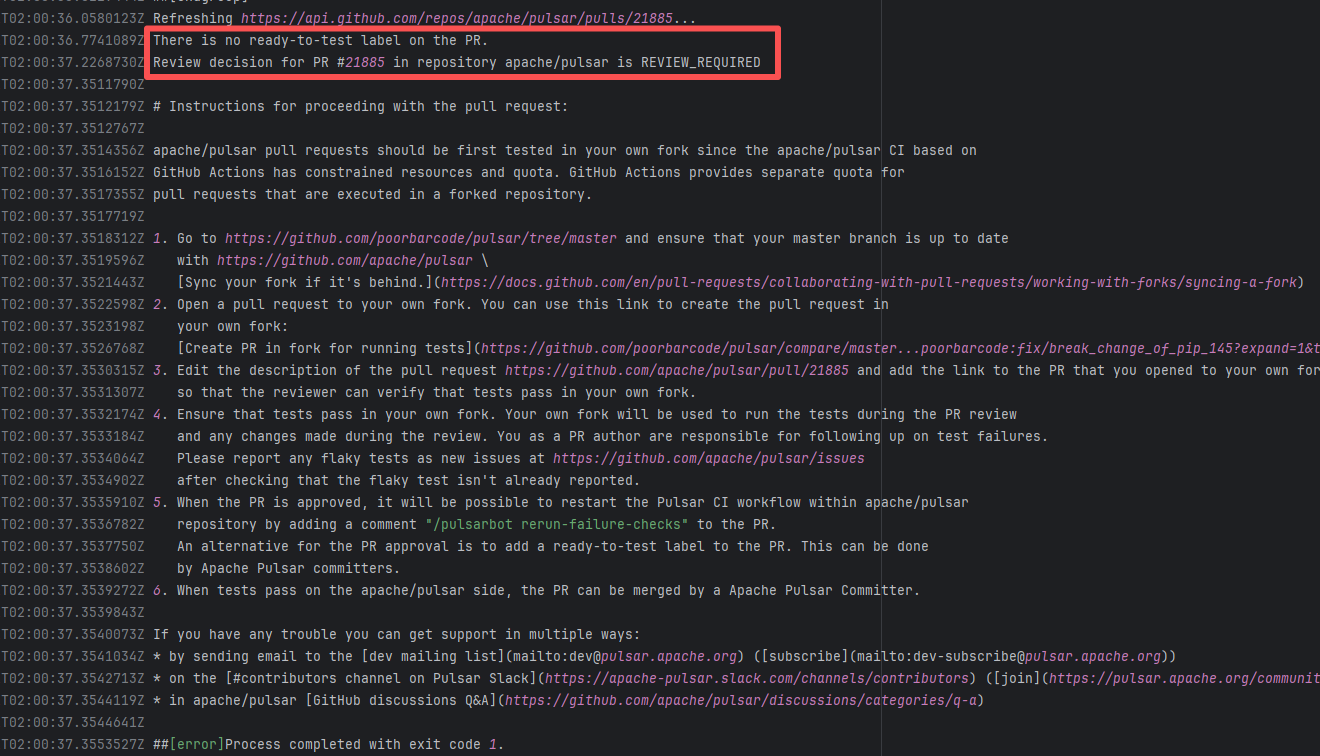

| apache/pulsar |

20409788055 |

Workflow Policy Violation |

Error: Operations on the PR #21885 in the Apache Pulsar repository are currently restricted. The core reason is that the PR is missing the "ready-to-test" label and is in the "REVIEW_REQUIRED" state, so subsequent operations are prohibited.

Root Cause: CI based on GitHub Actions in the main repository has resource and quota limits. The official mandatory requirement is: all PRs must first complete test verification in the contributor's personal Fork repository. Only after confirming there are no problems can they be submitted to the main repository, to avoid occupying the limited CI quota of the main repository.

After a PR is submitted, it is necessary to contact the repository administrator to add the "ready-to-test" label to the PR. After the label is added, the main repository CI will allow the test process to execute; the repository administrator will review the code, modify the code according to the review comments (if any), and push it to the corresponding branch of the Fork repository (the PR will automatically synchronize the modifications). After the review is passed, the PR state will change from "REVIEW_REQUIRED" to "APPROVED", and then the subsequent merging process can be entered. |

Contact the relevant personnel to conduct testing and review according to the main repository requirements, and rerun after completion |

|

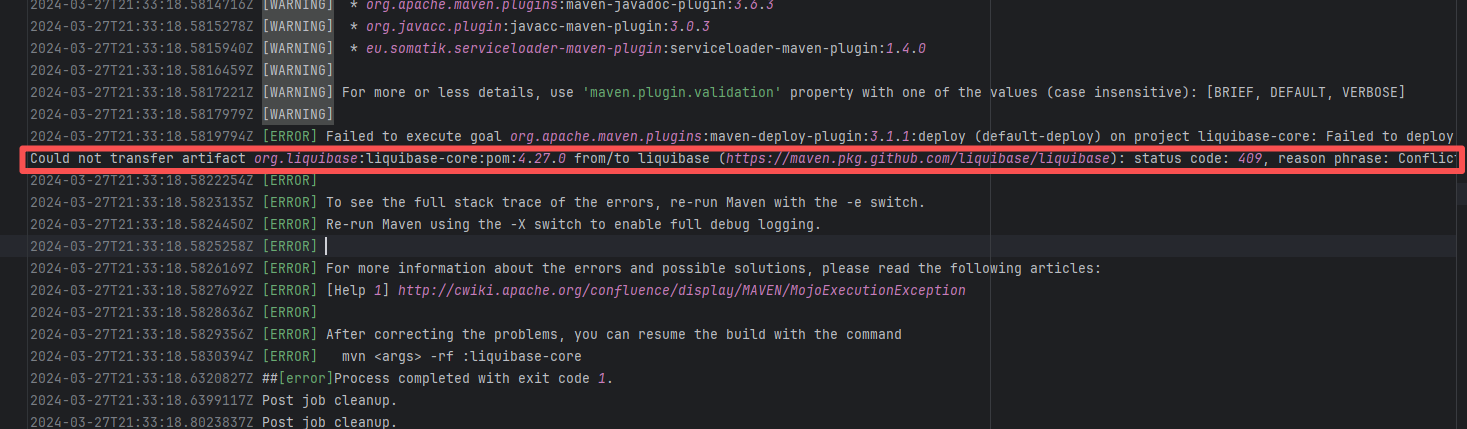

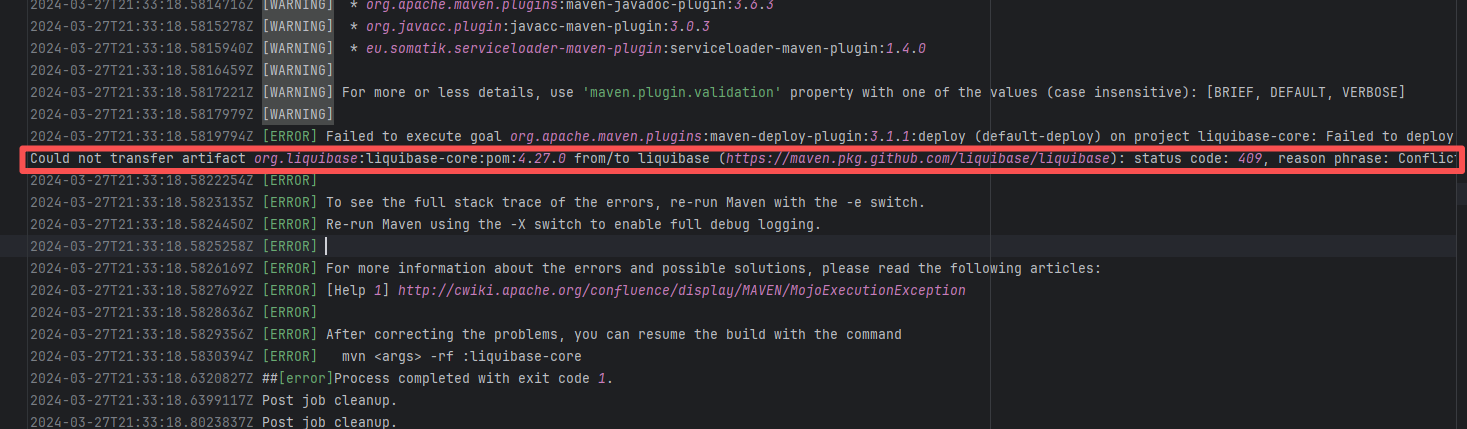

| liquibase/liquibase |

23174187019 |

Artifact Conflict |

Error: The error log indicates a failure when publishing a Maven artifact to the GitHub Packages repository. The core reason is receiving a 409 Conflict response, indicating that the artifact to be uploaded conflicts with an artifact that already exists in the repository.

Root Cause: Maven repositories like GitHub Packages typically do not allow repeatedly deploying an artifact with the same groupId:artifactId:version (i.e., overwriting an existing version is not allowed). When the current artifact was published, it collided with an artifact that had already been deployed to the repository. The rerun succeeded after deletion. |

Delete previously uploaded artifacts |

|

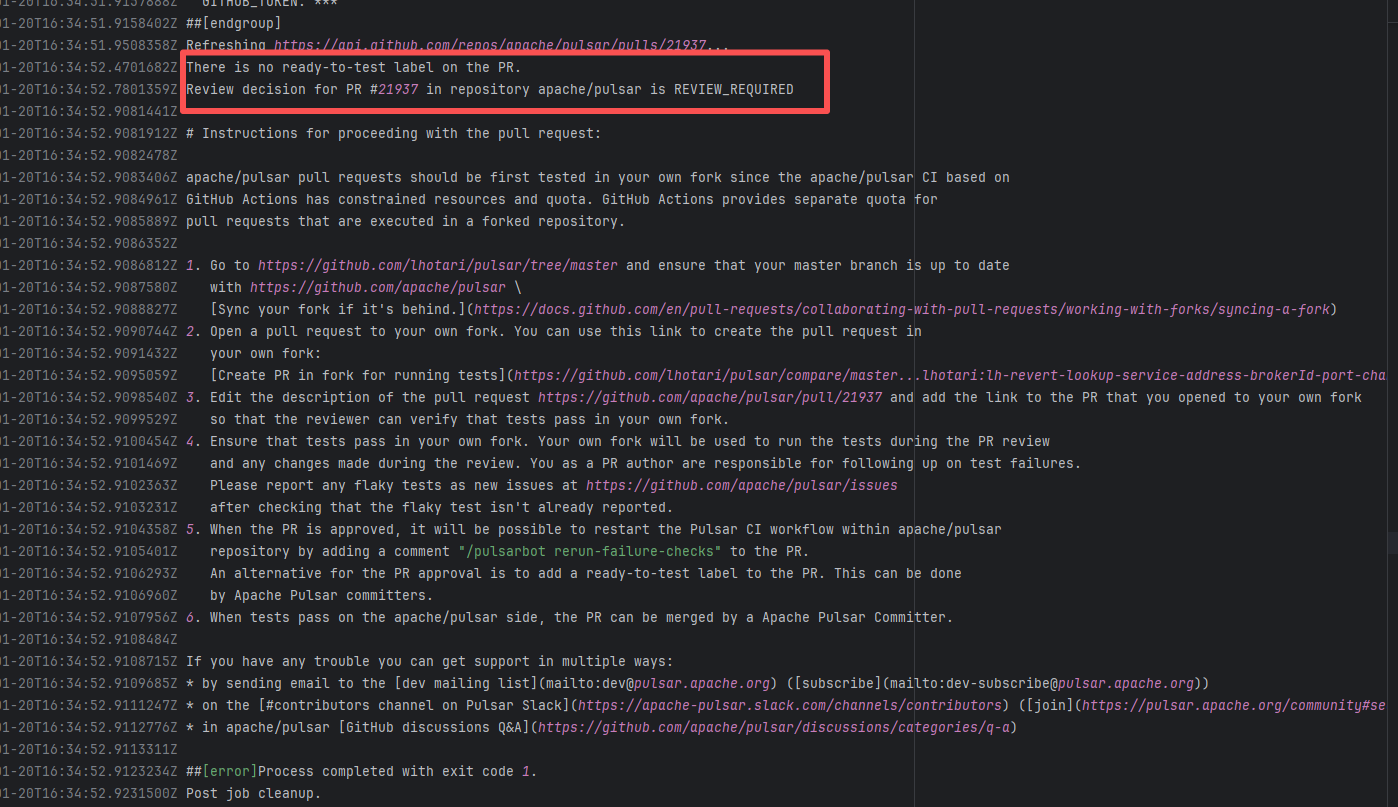

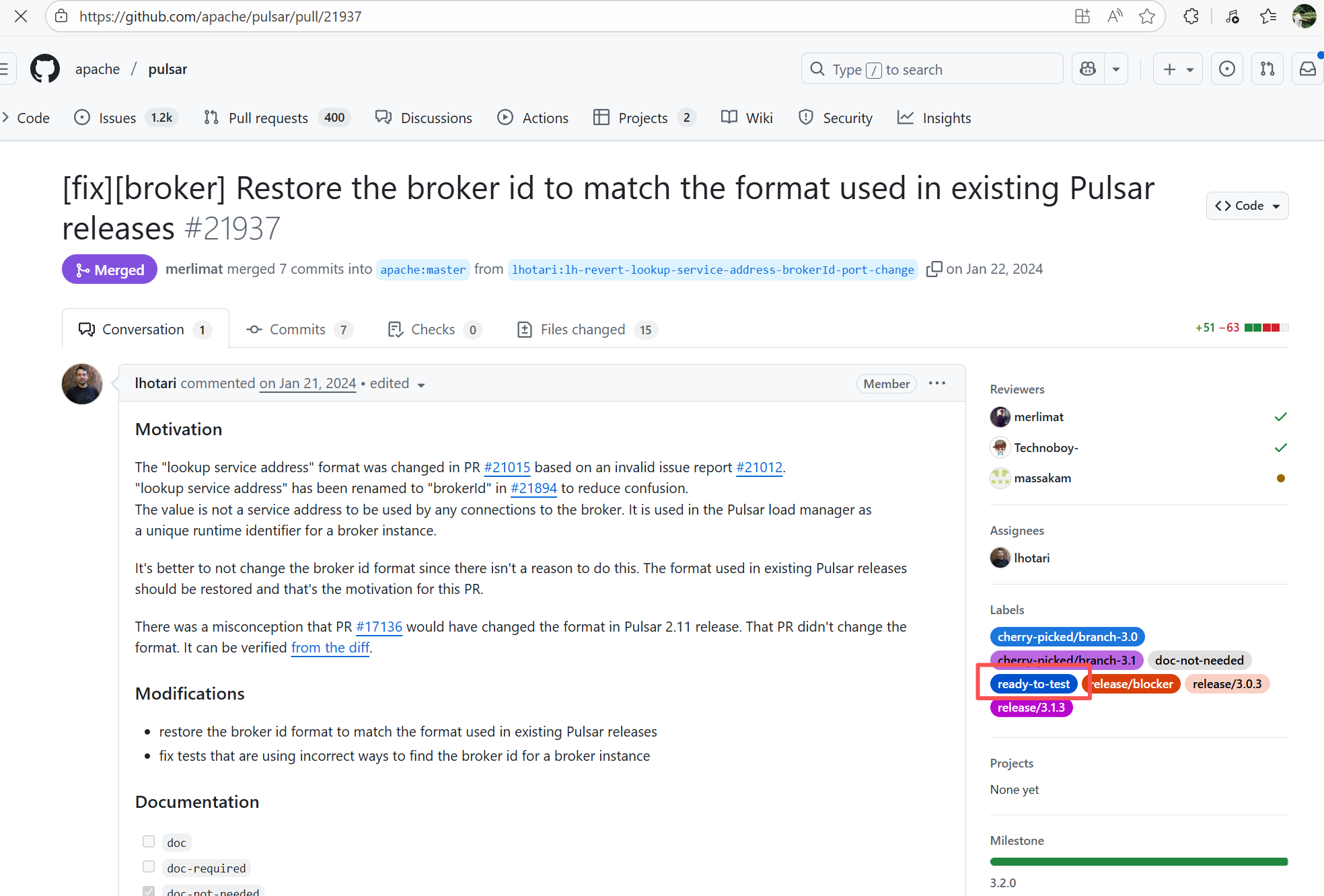

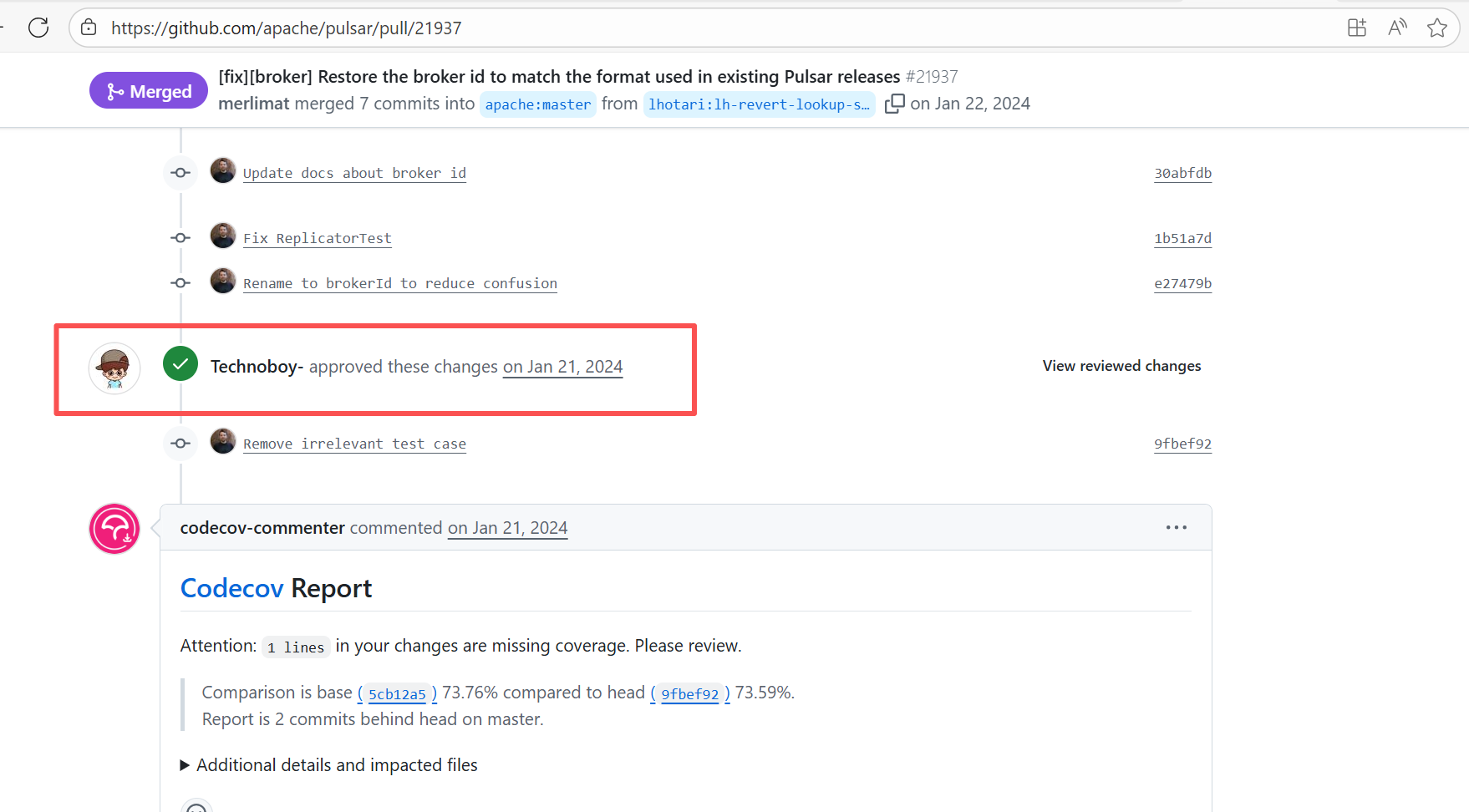

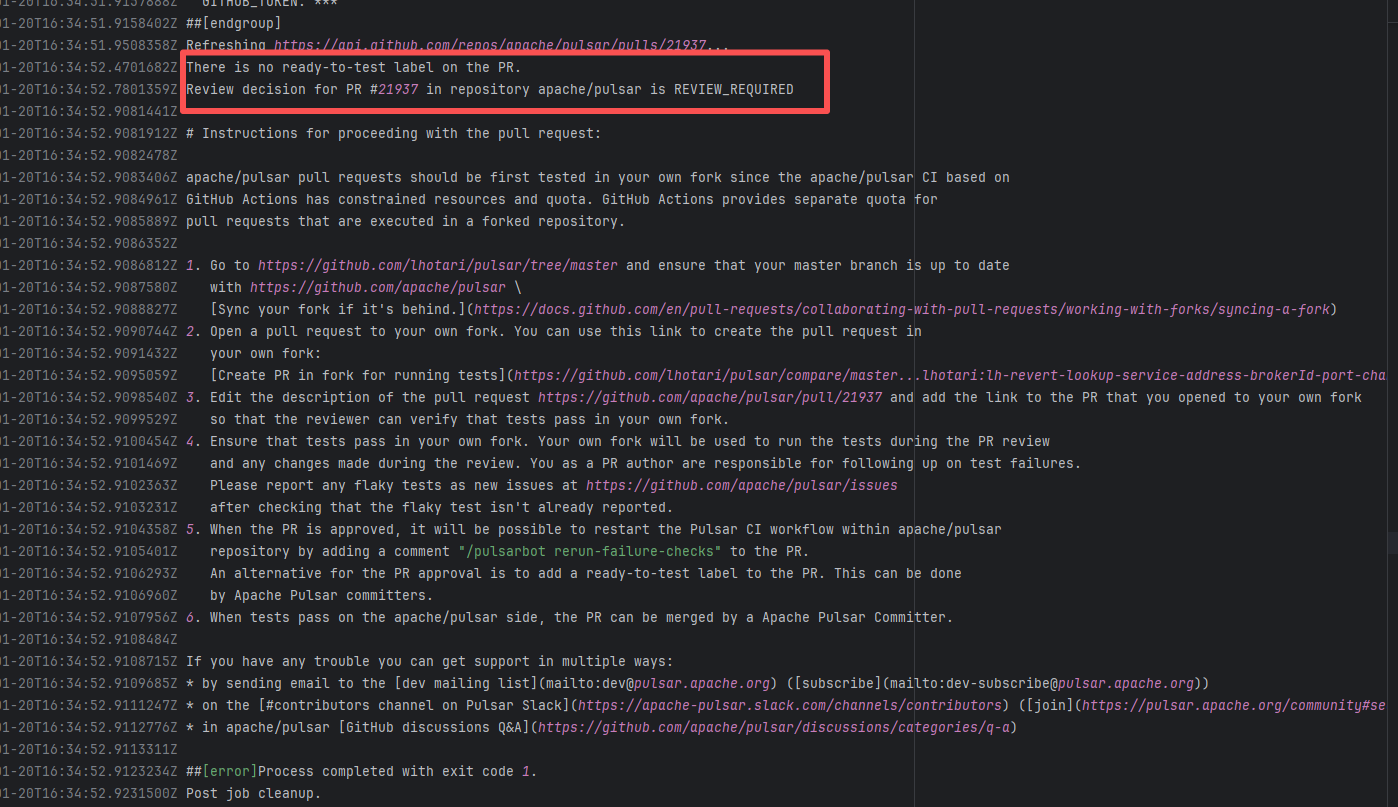

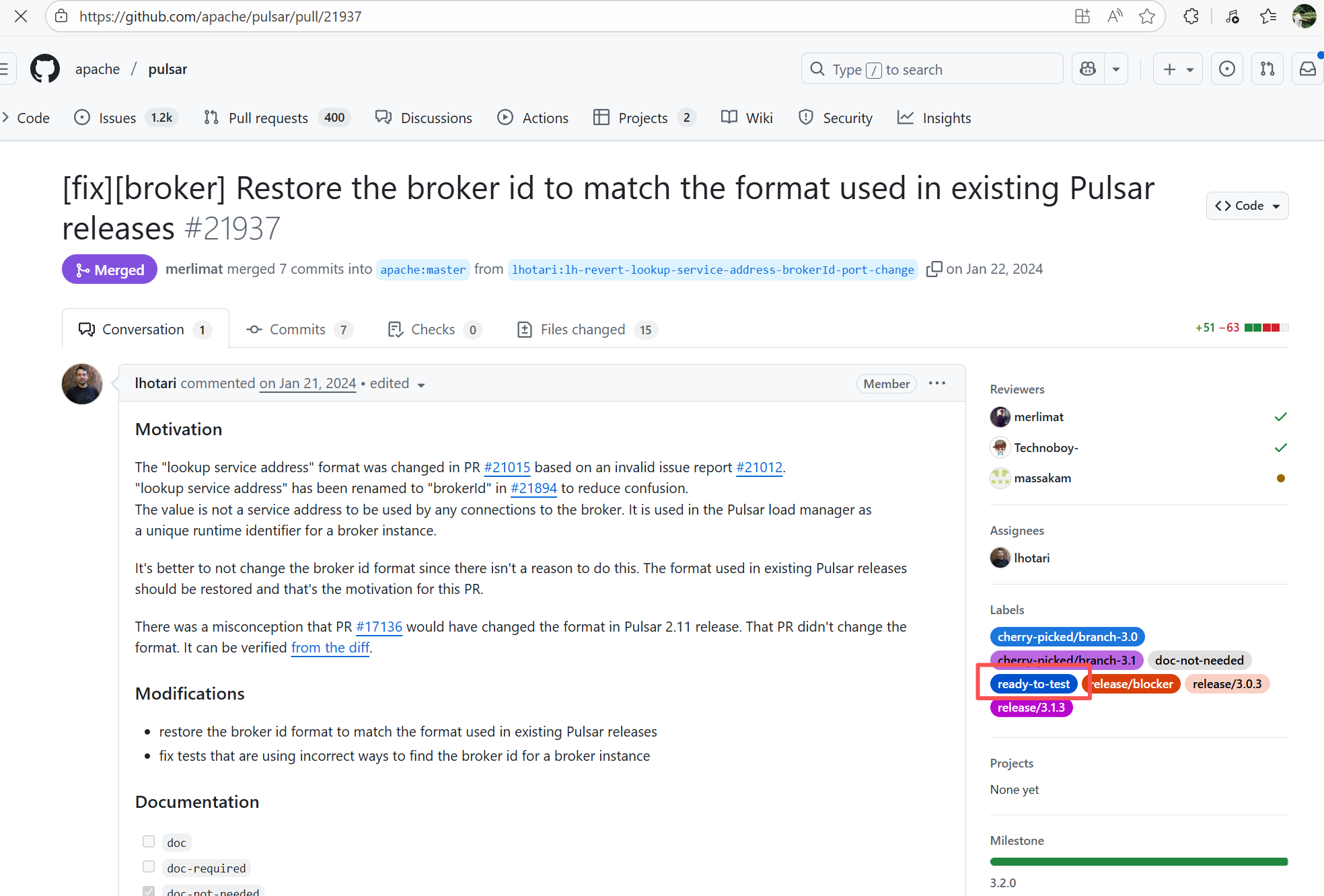

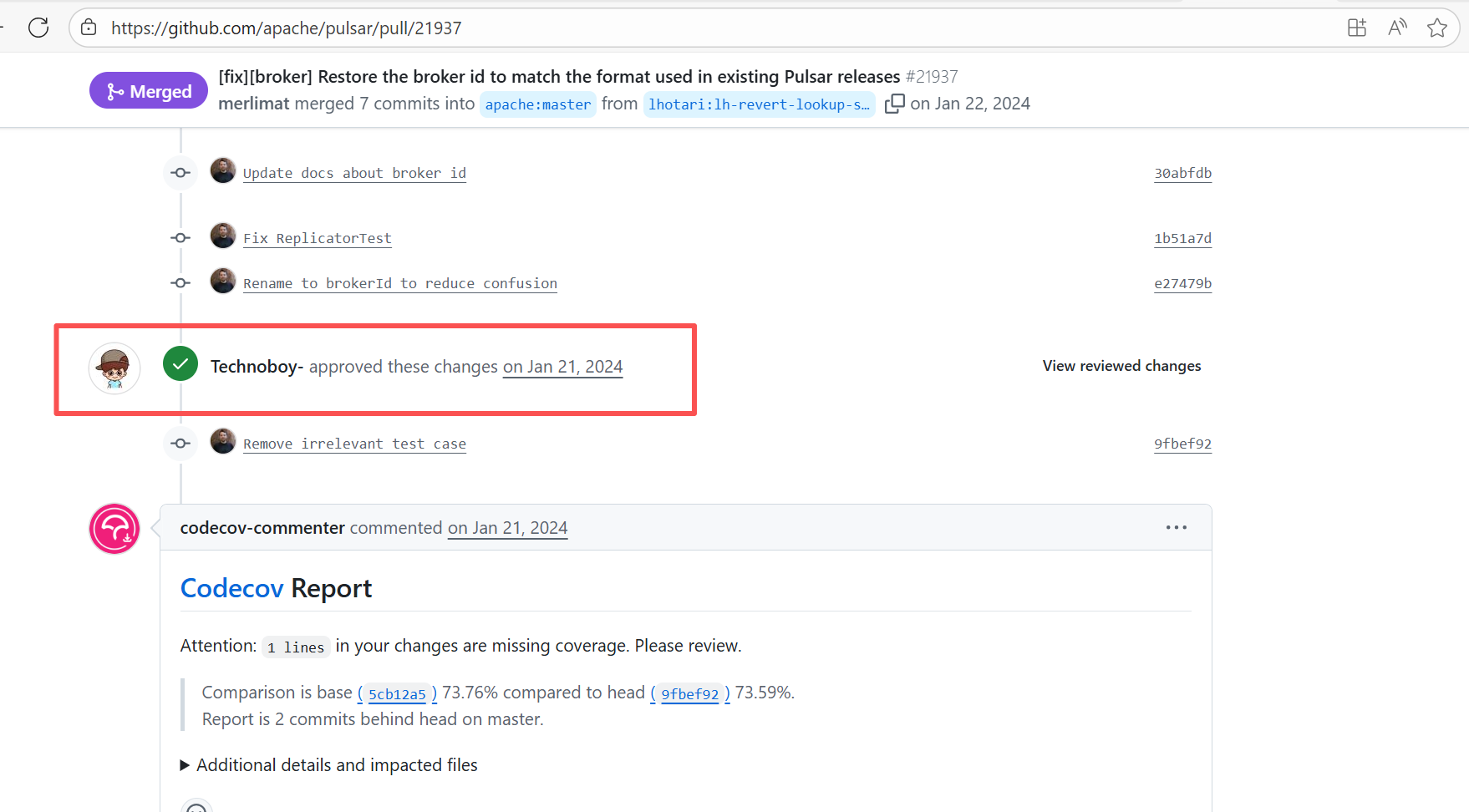

| apache/pulsar |

20688274346 |

Workflow Policy Violation |

Error: Operations on the PR #21937 in the Apache Pulsar repository are currently restricted. The core reason is that the PR is missing the "ready-to-test" label and is in the "REVIEW_REQUIRED" state, so subsequent operations are prohibited.

Root Cause: CI based on GitHub Actions in the main repository has resource and quota limits. The official mandatory requirement is: all PRs must first complete test verification in the contributor's personal Fork repository. Only after confirming there are no problems can they be submitted to the main repository, to avoid occupying the limited CI quota of the main repository.

After a PR is submitted, it is necessary to contact the repository administrator to add the "ready-to-test" label to the PR. After the label is added, the main repository CI will allow the test process to execute; the repository administrator will review the code, modify the code according to the review comments (if any), and push it to the corresponding branch of the Fork repository (the PR will automatically synchronize the modifications). After the review is passed, the PR state will change from "REVIEW_REQUIRED" to "APPROVED", and then the subsequent merging process can be entered. |

Contact the relevant personnel to conduct testing and review according to the main repository requirements, and rerun after completion |

|

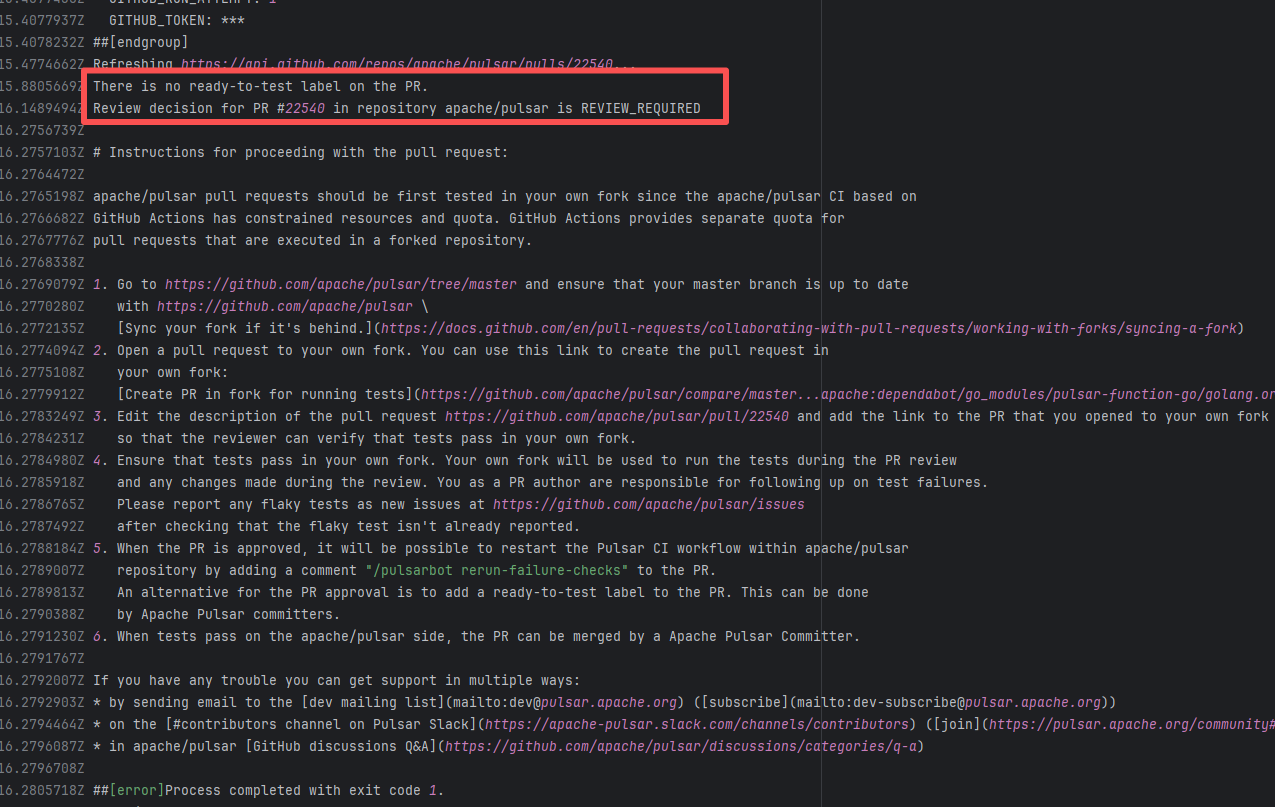

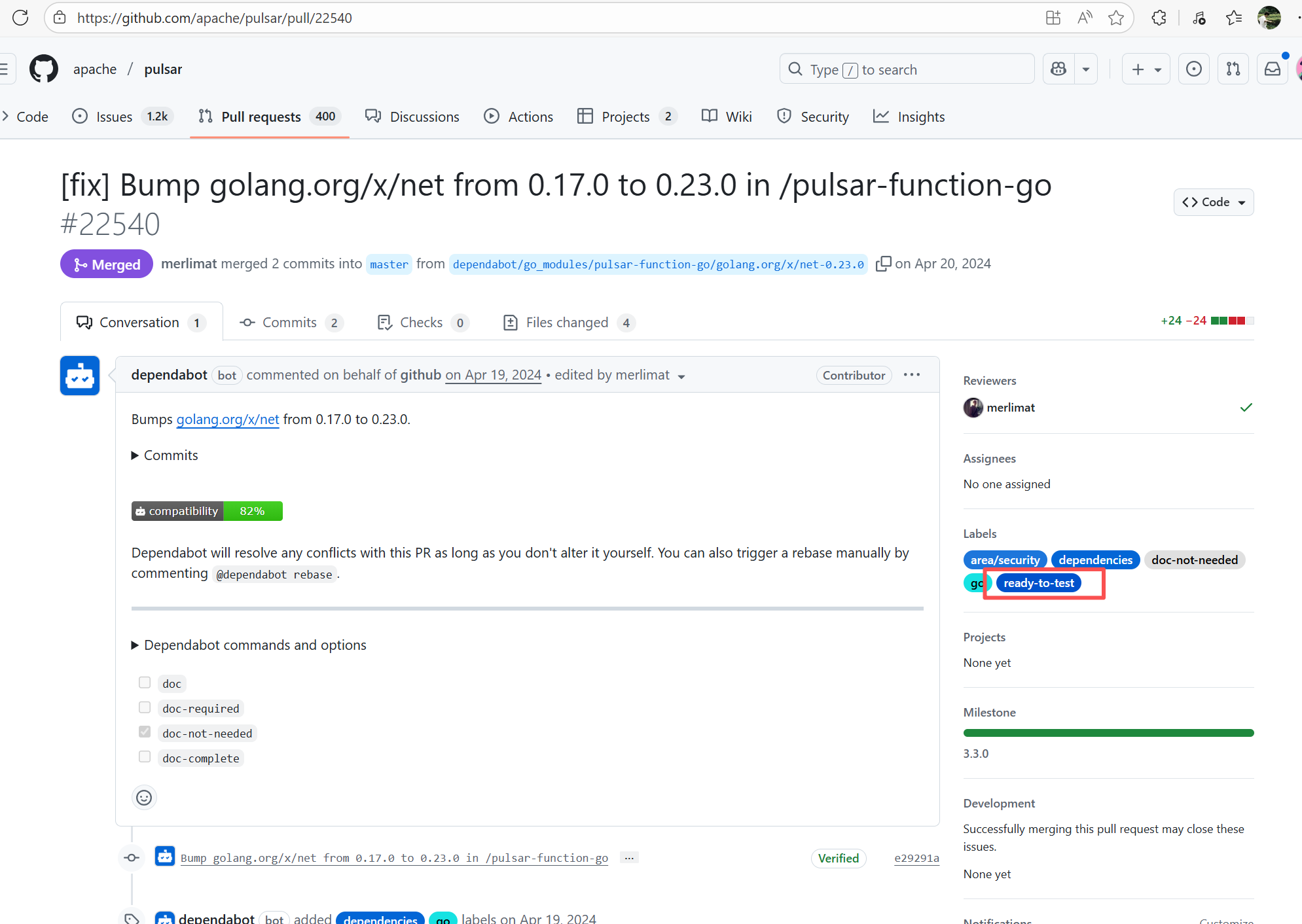

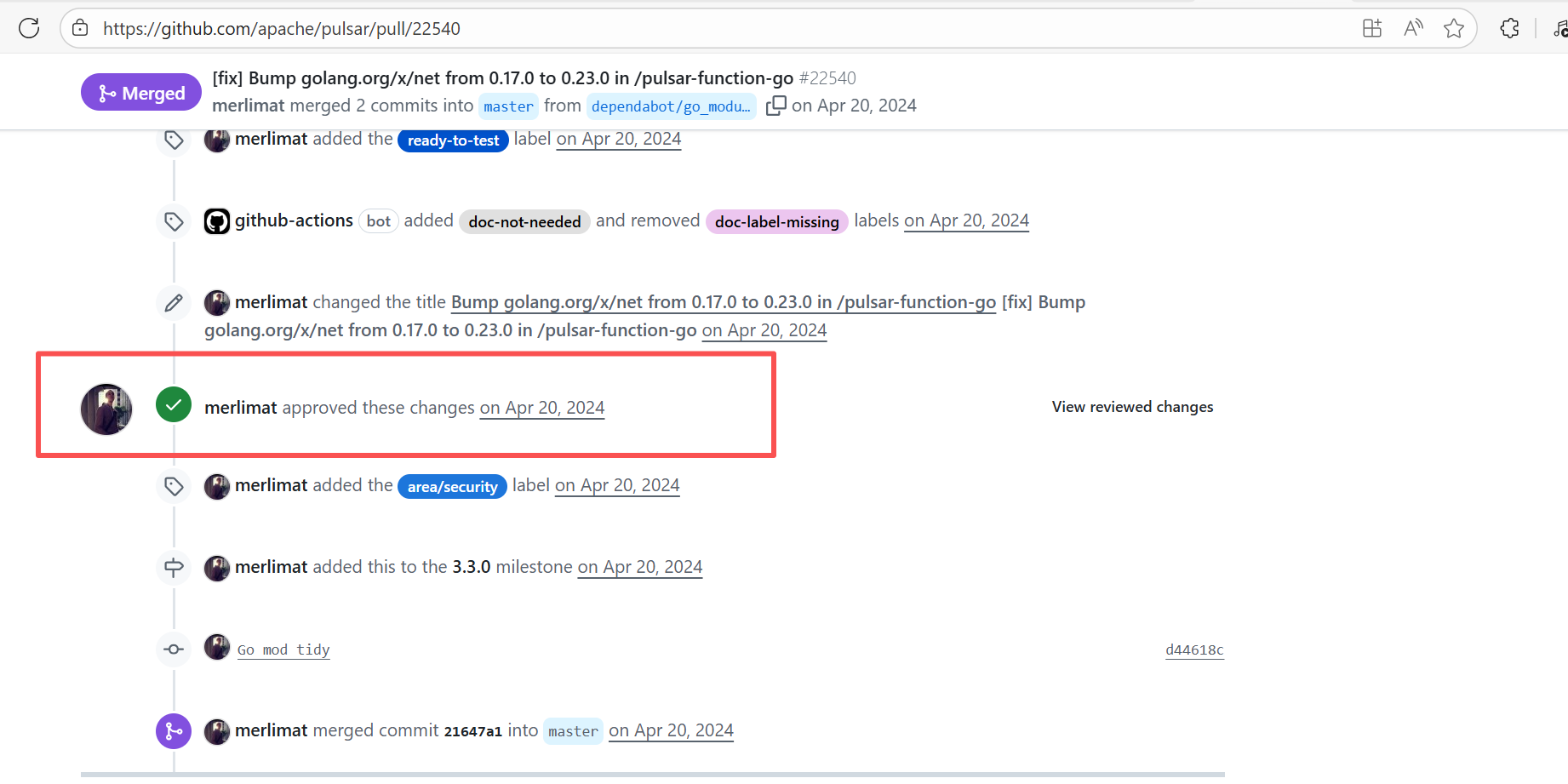

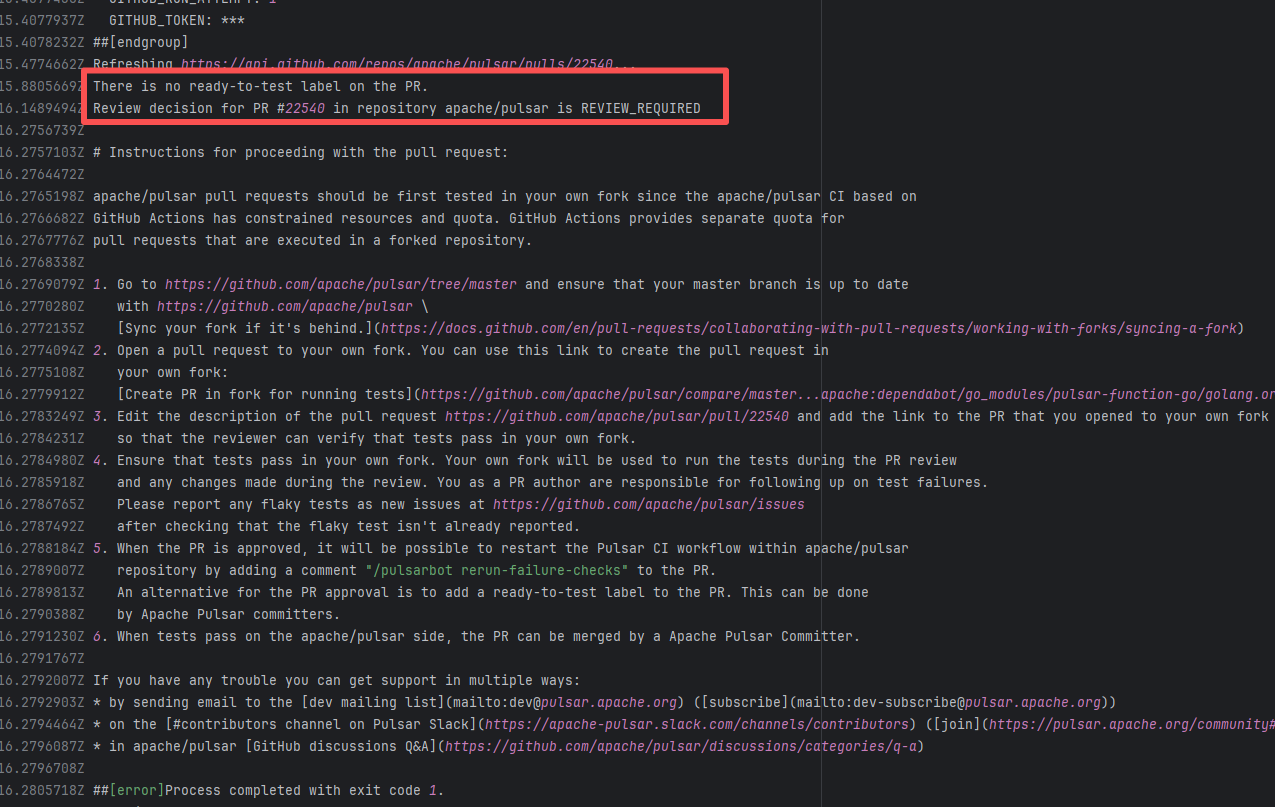

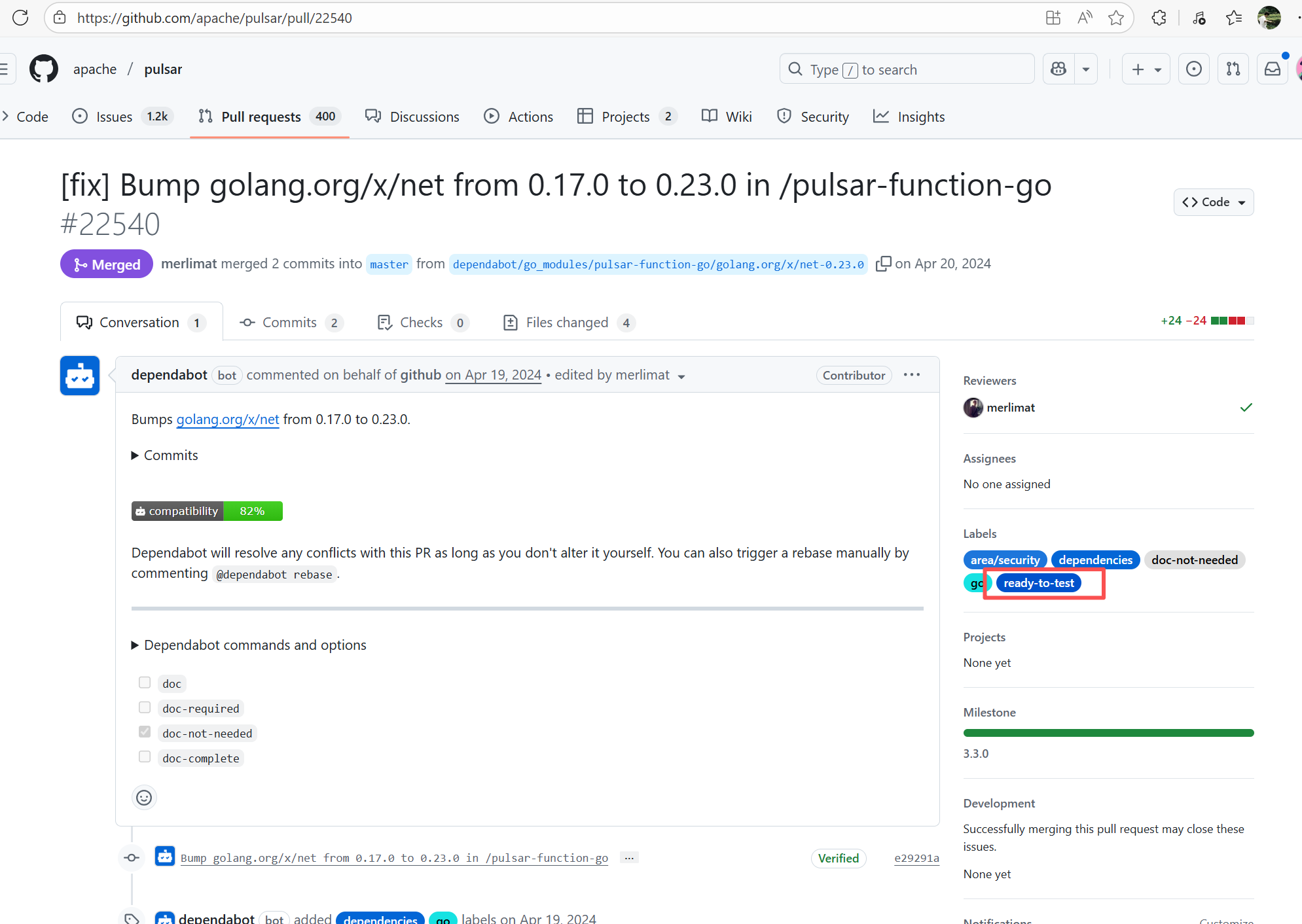

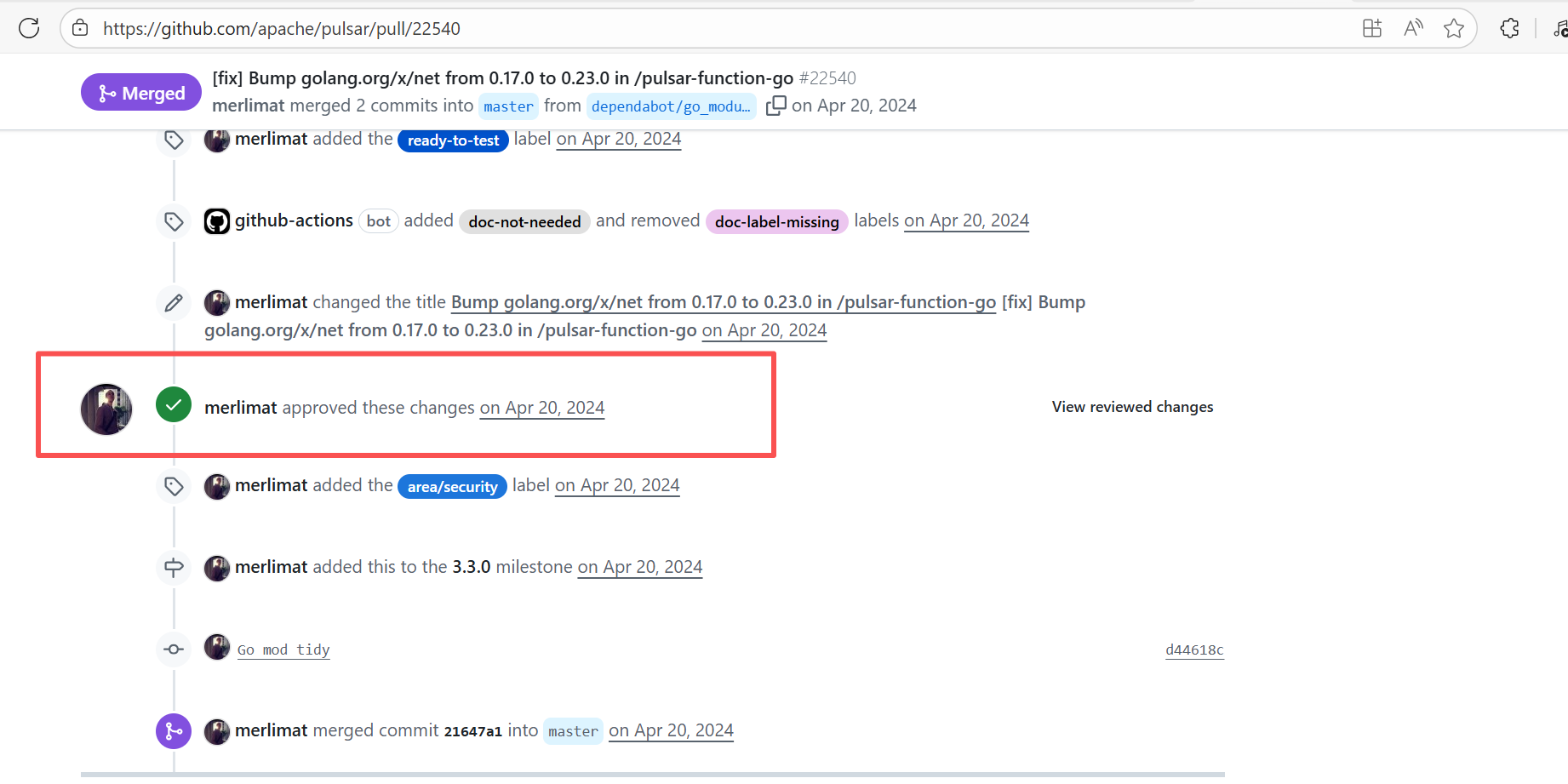

| apache/pulsar |

24023618188 |

Workflow Policy Violation |

Error: Operations on the PR #22540 in the Apache Pulsar repository are currently restricted. The core reason is that the PR is missing the "ready-to-test" label and is in the "REVIEW_REQUIRED" state, so subsequent operations are prohibited.

Root Cause: CI based on GitHub Actions in the main repository has resource and quota limits. The official mandatory requirement is: all PRs must first complete test verification in the contributor's personal Fork repository. Only after confirming there are no problems can they be submitted to the main repository, to avoid occupying the limited CI quota of the main repository.

After a PR is submitted, it is necessary to contact the repository administrator to add the "ready-to-test" label to the PR. After the label is added, the main repository CI will allow the test process to execute; the repository administrator will review the code, modify the code according to the review comments (if any), and push it to the corresponding branch of the Fork repository (the PR will automatically synchronize the modifications). After the review is passed, the PR state will change from "REVIEW_REQUIRED" to "APPROVED", and then the subsequent merging process can be entered. |

Contact the relevant personnel to conduct testing and review according to the main repository requirements, and rerun after completion |

|

| apache/pulsar |

22111131739 |

Workflow Policy Violation |

Error: Operations on the PR #22161 in the Apache Pulsar repository are currently restricted. The core reason is that the PR is missing the "ready-to-test" label and is in the "REVIEW_REQUIRED" state, so subsequent operations are prohibited.

Root Cause: CI based on GitHub Actions in the main repository has resource and quota limits. The official mandatory requirement is: all PRs must first complete test verification in the contributor's personal Fork repository. Only after confirming there are no problems can they be submitted to the main repository, to avoid occupying the limited CI quota of the main repository.

After a PR is submitted, it is necessary to contact the repository administrator to add the "ready-to-test" label to the PR. After the label is added, the main repository CI will allow the test process to execute; the repository administrator will review the code, modify the code according to the review comments (if any), and push it to the corresponding branch of the Fork repository (the PR will automatically synchronize the modifications). After the review is passed, the PR state will change from "REVIEW_REQUIRED" to "APPROVED", and then the subsequent merging process can be entered. |

Contact the relevant personnel to conduct testing and review according to the main repository requirements, and rerun after completion |

|

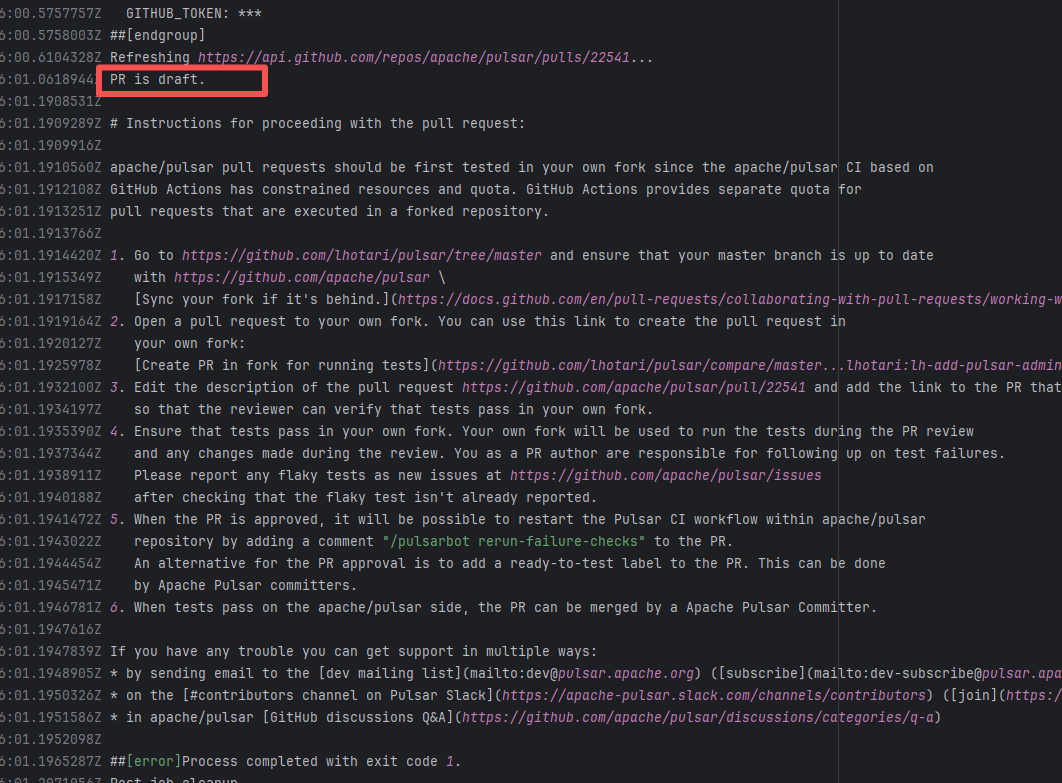

| apache/pulsar |

24089152357 |

Workflow Policy Violation |

Error: "Draft PRs" in GitHub are usually used to mark "work in progress". The Apache Pulsar project places additional restrictions on the use of main repository CI resources for such PRs, mandating verification in the Fork repository first to avoid wasting resources.

Root Cause: Rerun succeeded after converting the PR from "Draft state" to "Formal PR" once it was ready. |

Convert the PR from "Draft state" to "Formal PR" once it is ready, and rerun successfully. |

|

| apache/pulsar |

19741444431 |

Workflow Policy Violation |

Error: Operations on the PR #22722 in the Apache Pulsar repository are currently restricted. The core reason is that the PR is missing the "ready-to-test" label and is in the "REVIEW_REQUIRED" state, so subsequent operations are prohibited.

Root Cause: CI based on GitHub Actions in the main repository has resource and quota limits. The official mandatory requirement is: all PRs must first complete test verification in the contributor's personal Fork repository. Only after confirming there are no problems can they be submitted to the main repository, to avoid occupying the limited CI quota of the main repository.

After a PR is submitted, it is necessary to contact the repository administrator to add the "ready-to-test" label to the PR. After the label is added, the main repository CI will allow the test process to execute; the repository administrator will review the code, modify the code according to the review comments (if any), and push it to the corresponding branch of the Fork repository (the PR will automatically synchronize the modifications). After the review is passed, the PR state will change from "REVIEW_REQUIRED" to "APPROVED", and then the subsequent merging process can be entered. |

Contact the relevant personnel to conduct testing and review according to the main repository requirements, and rerun after completion |

|

| metasfresh/metasfresh |

27350084866 |

Artifact Conflict |

Error: The error log indicates a failure when publishing a Maven artifact to the GitHub Packages repository. The core reason is receiving a 409 Conflict response, indicating that the artifact to be uploaded conflicts with an artifact that already exists in the repository.

Root Cause: Maven repositories like GitHub Packages typically do not allow repeatedly deploying an artifact with the same groupId:artifactId:version (i.e., overwriting an existing version is not allowed). When the current artifact was published, it collided with an artifact that had already been deployed to the repository. The rerun succeeded after deletion. |

Delete previously uploaded artifacts |

|

| apache/pulsar |

24234003846 |

Workflow Policy Violation |

Error: Operations on the PR #22540 in the Apache Pulsar repository are currently restricted. The core reason is that the PR is missing the "ready-to-test" label and is in the "REVIEW_REQUIRED" state, so subsequent operations are prohibited.

Root Cause: CI based on GitHub Actions in the main repository has resource and quota limits. The official mandatory requirement is: all PRs must first complete test verification in the contributor's personal Fork repository. Only after confirming there are no problems can they be submitted to the main repository, to avoid occupying the limited CI quota of the main repository.

After a PR is submitted, it is necessary to contact the repository administrator to add the "ready-to-test" label to the PR. After the label is added, the main repository CI will allow the test process to execute; the repository administrator will review the code, modify the code according to the review comments (if any), and push it to the corresponding branch of the Fork repository (the PR will automatically synchronize the modifications). After the review is passed, the PR state will change from "REVIEW_REQUIRED" to "APPROVED", and then the subsequent merging process can be entered. |

Contact the relevant personnel to conduct testing and review according to the main repository requirements, and rerun after completion |

|

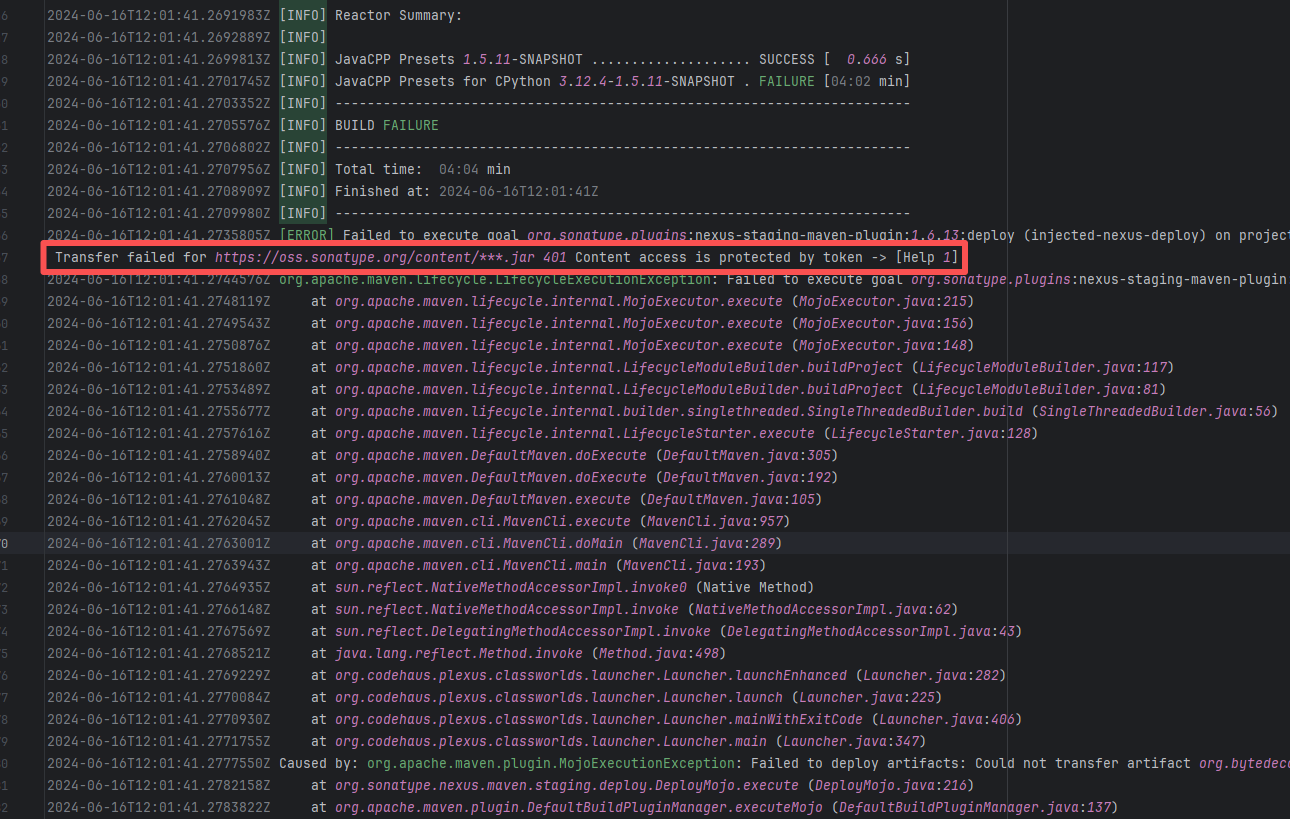

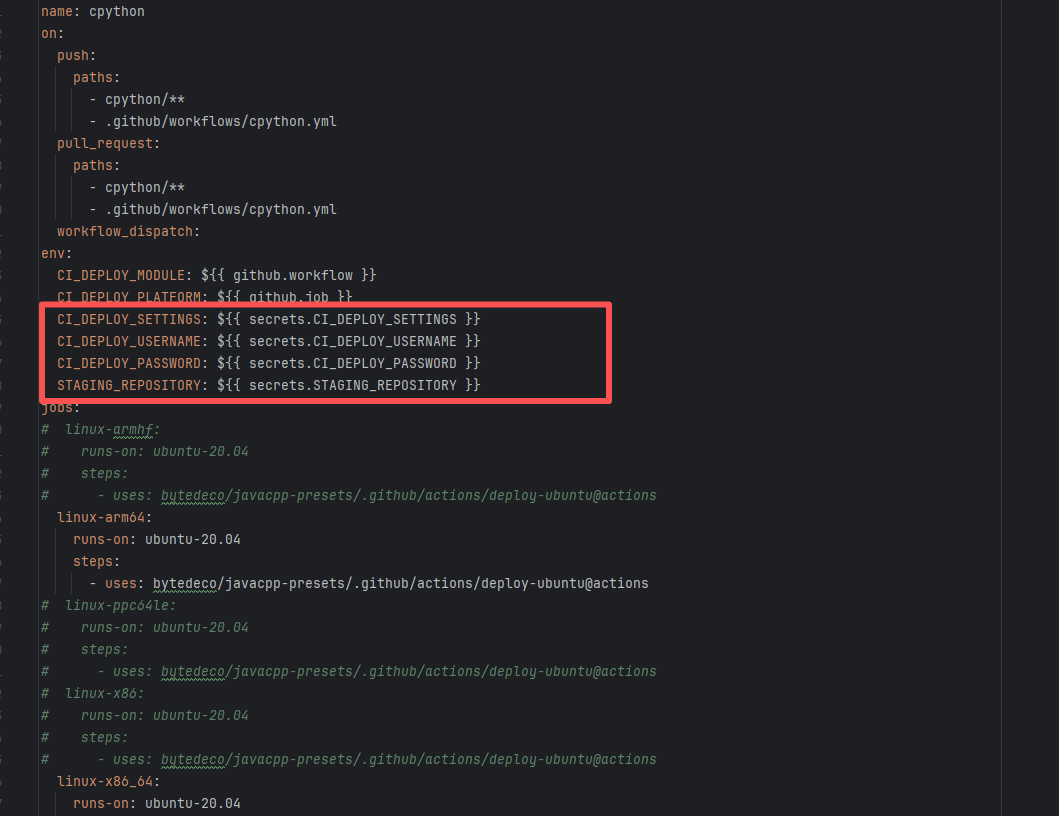

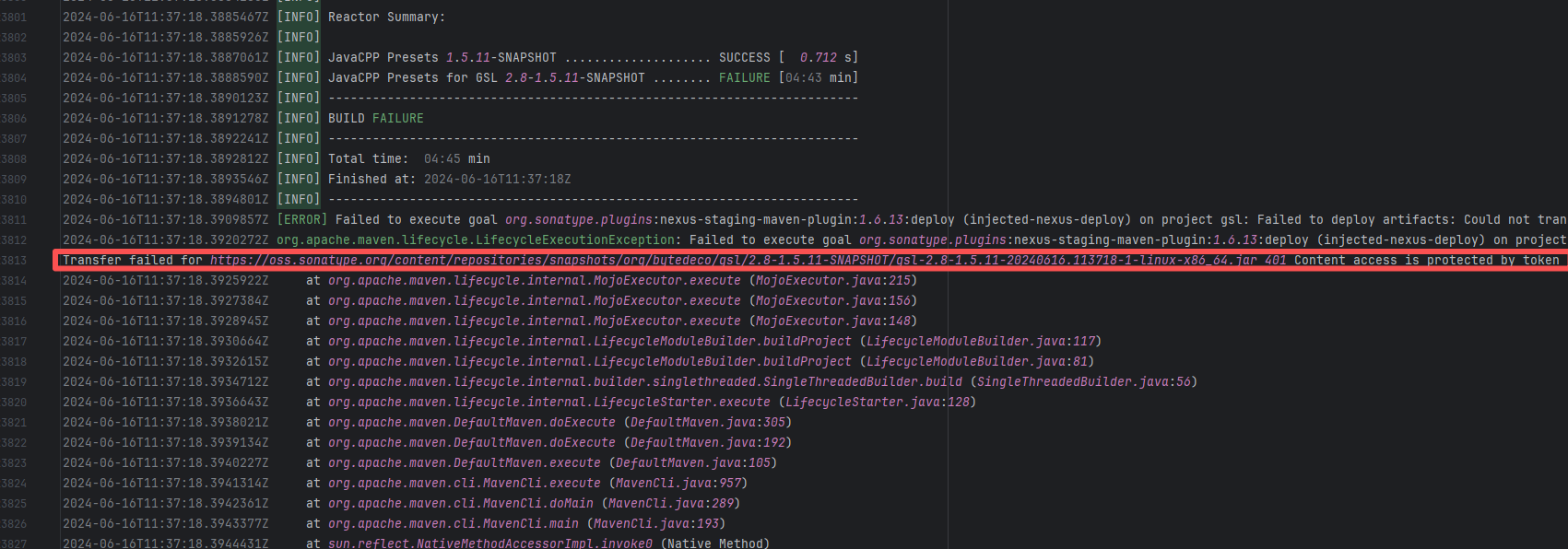

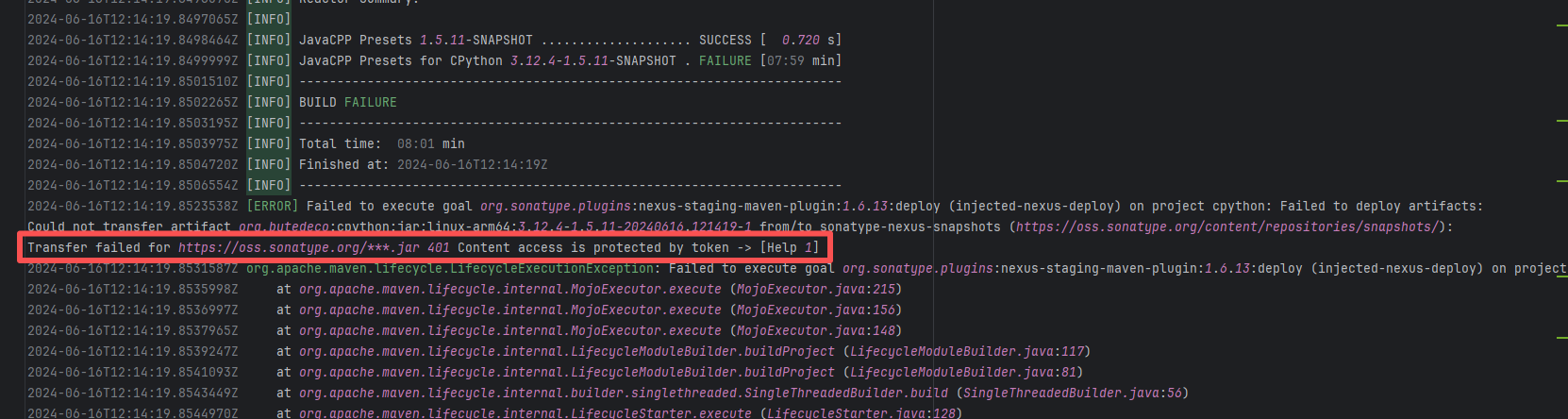

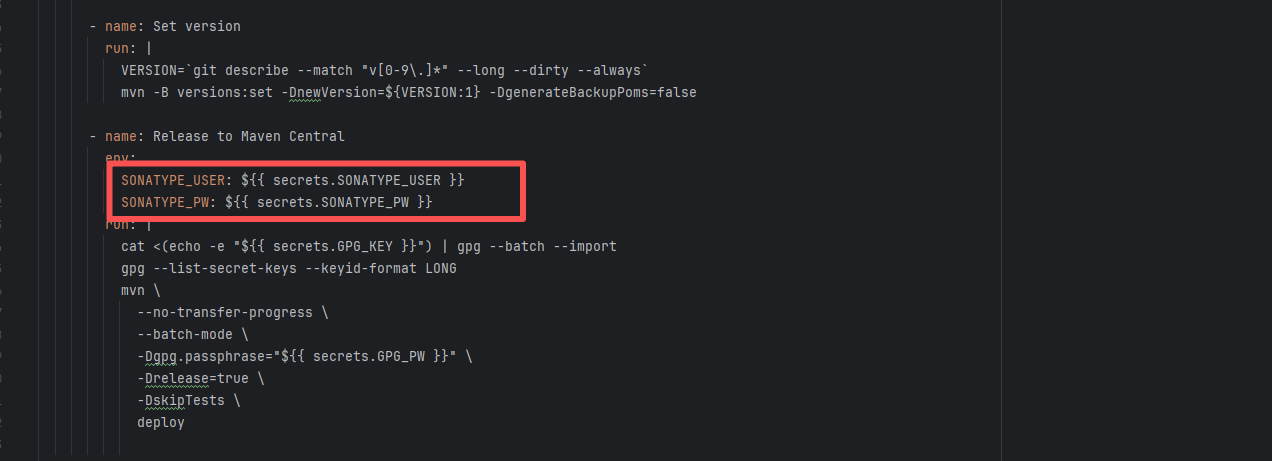

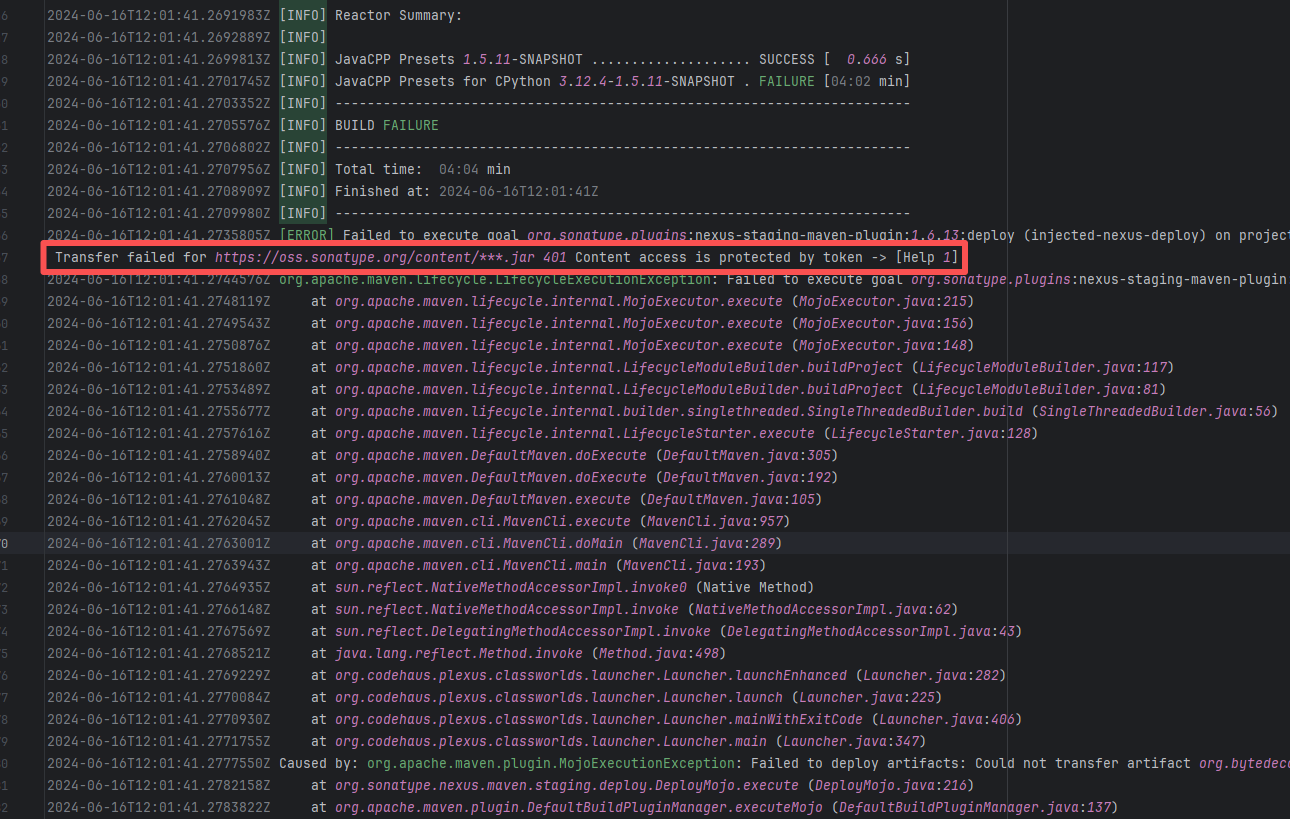

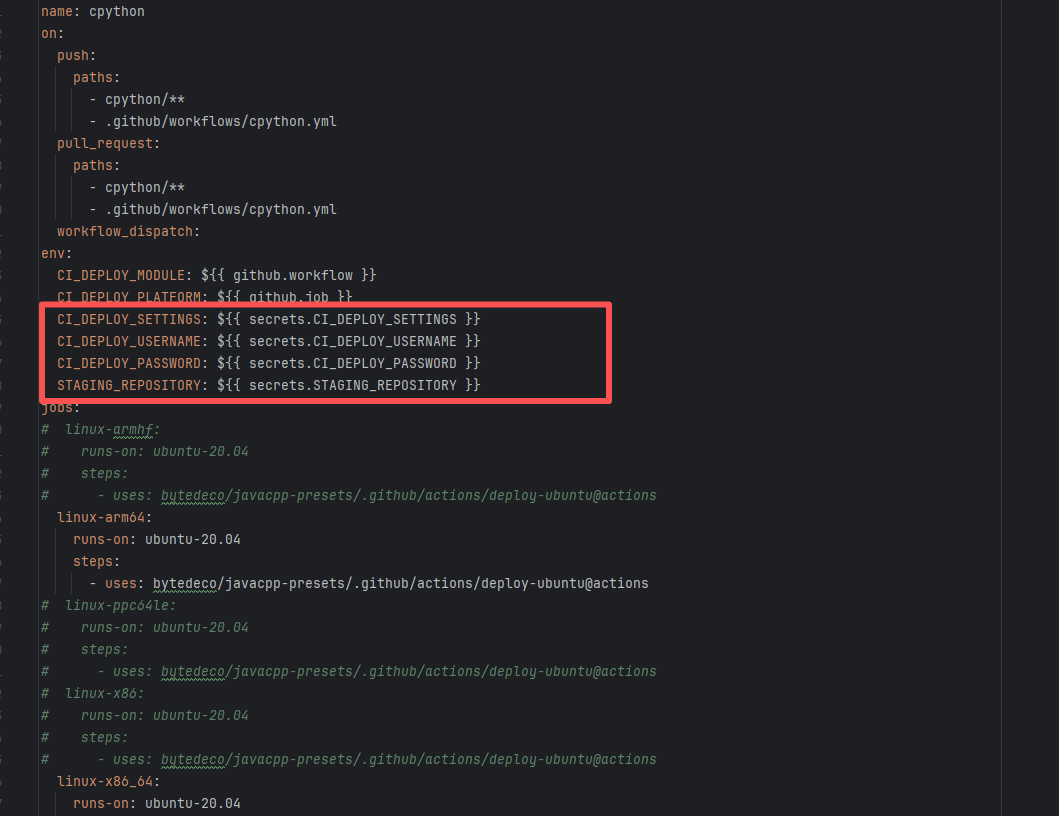

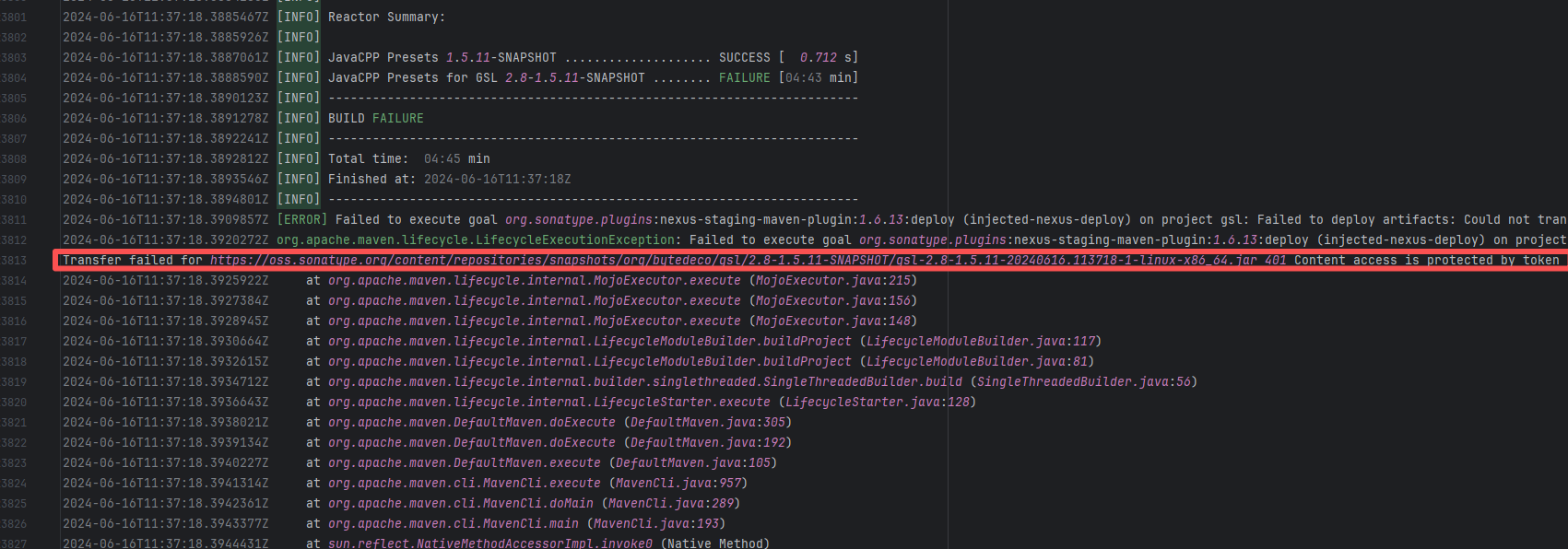

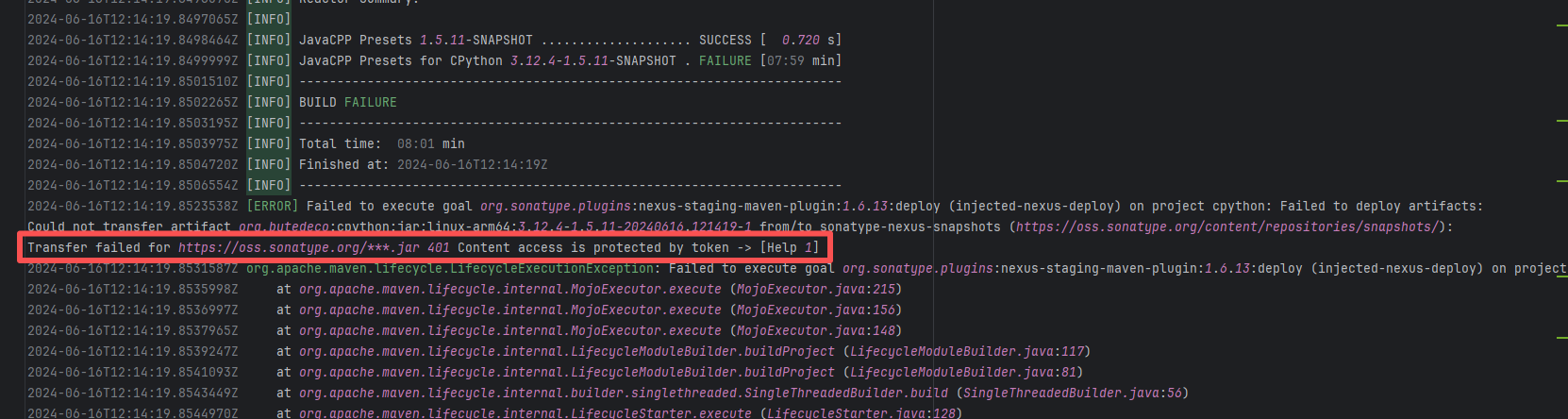

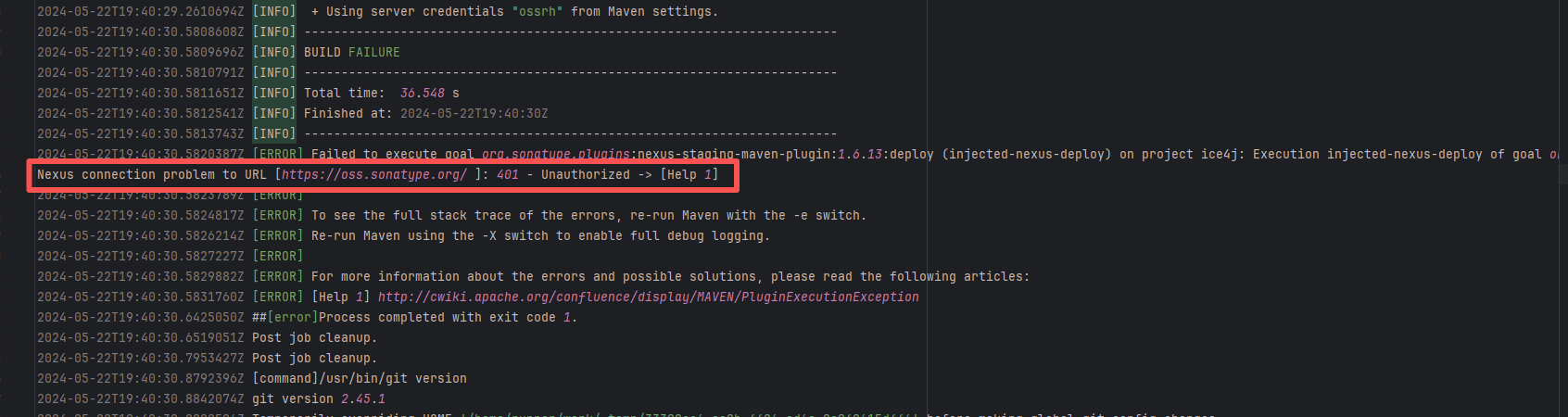

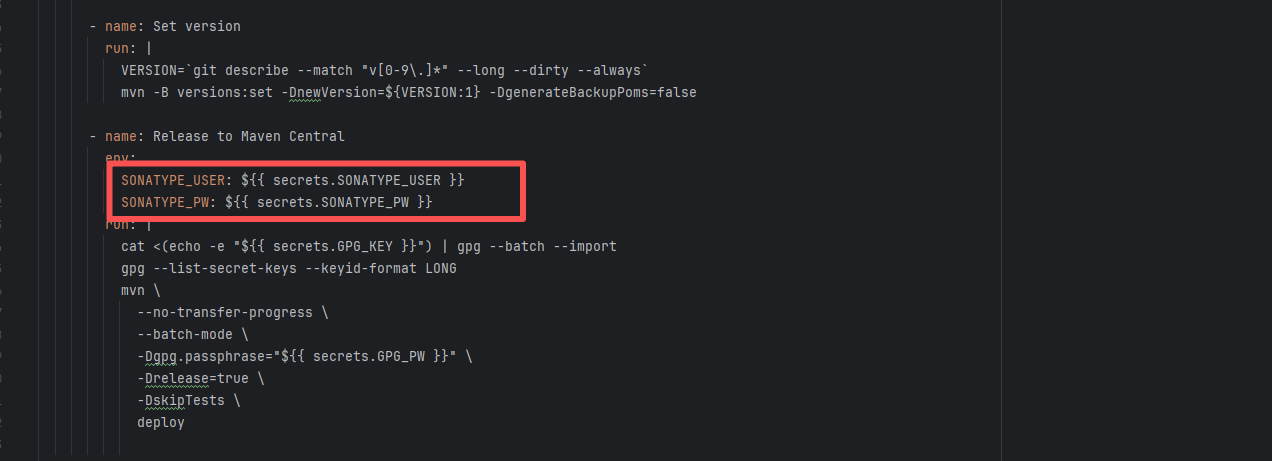

| bytedeco/javacpp-presets |

26281262349 |

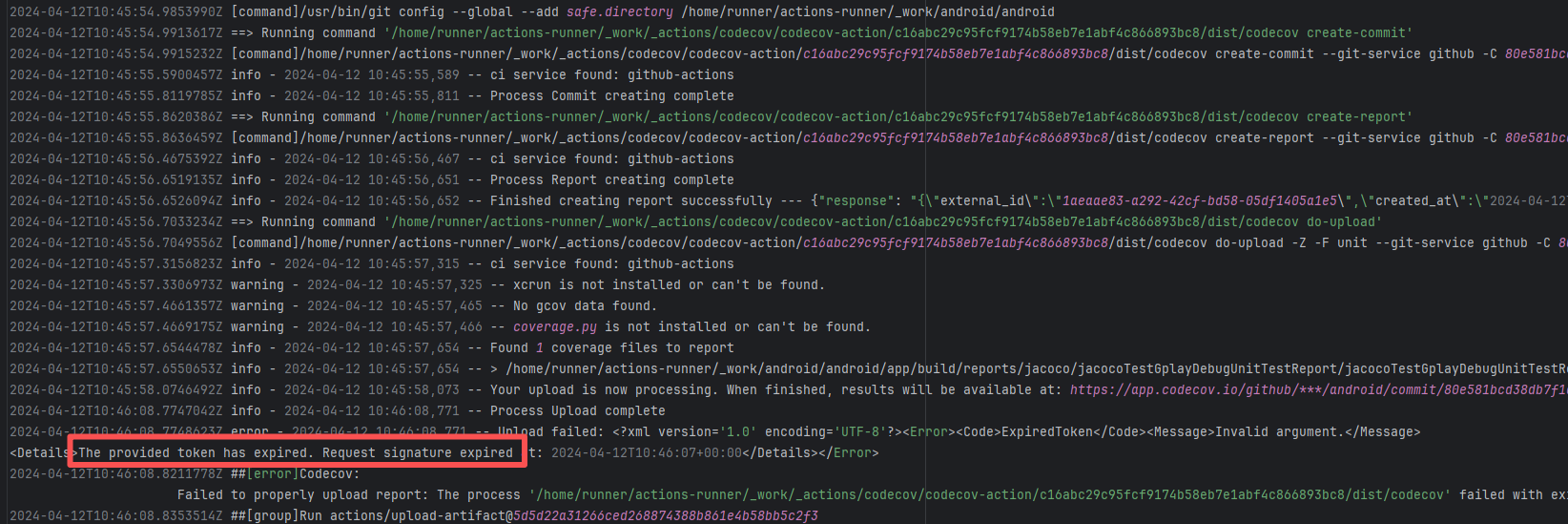

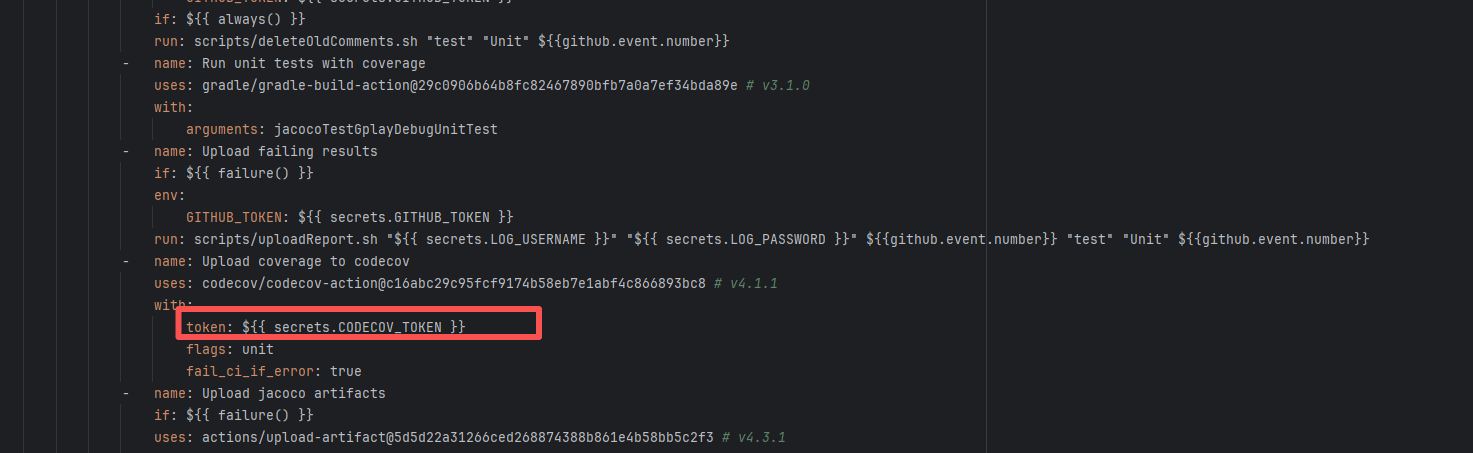

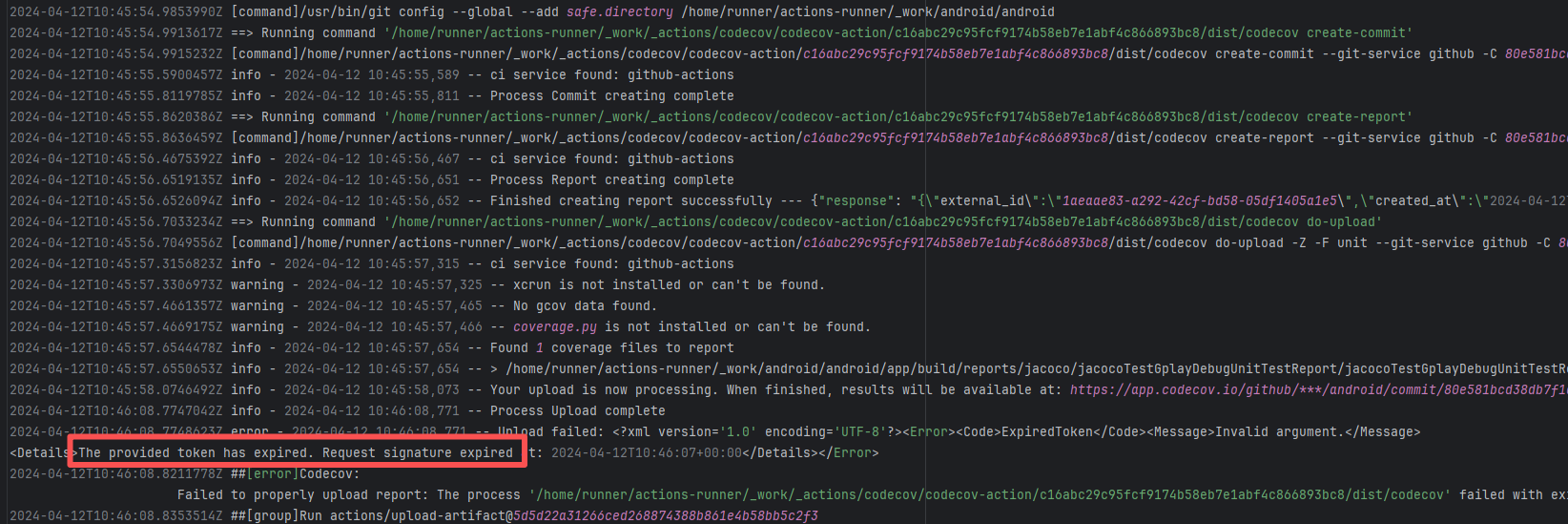

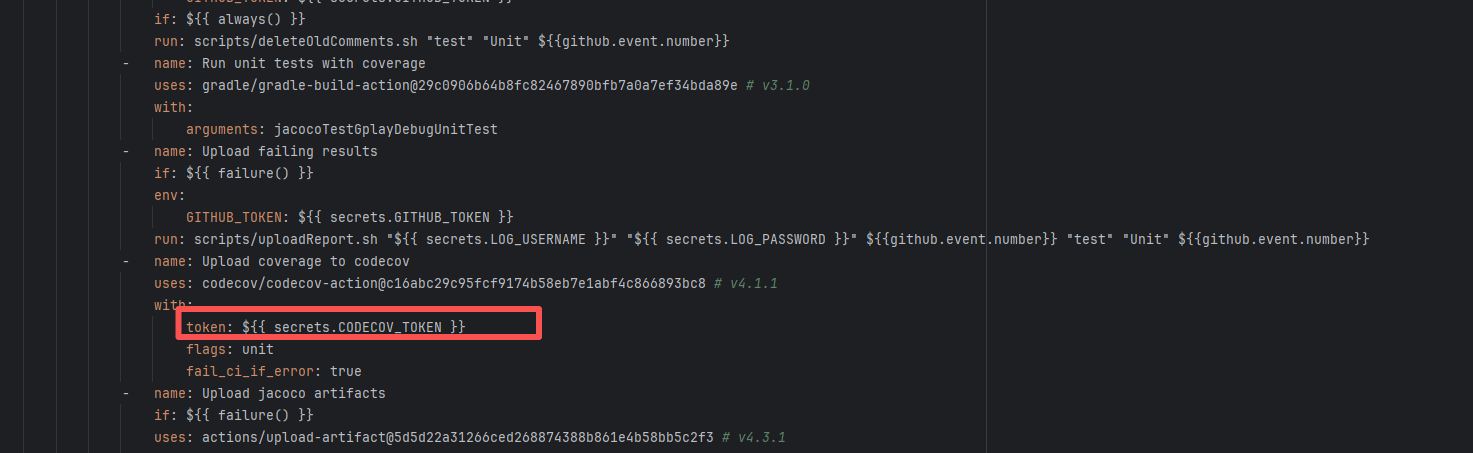

Authentication Failure |

Error: Failed to deploy the artifact to the Sonatype OSS snapshot repository using the nexus-staging-maven-plugin. The core reason is a 401 Unauthorized error, meaning the deployment request lacks a valid authentication token.

Root Cause: Rerun succeeded after replacing the token. |

Modify the deployment token in the secrets within the repository settings interface. |

|

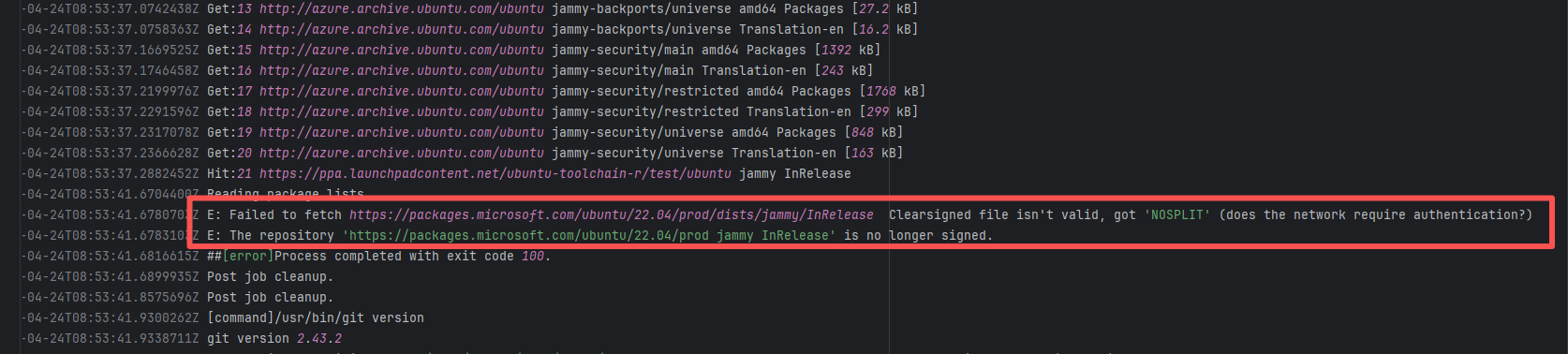

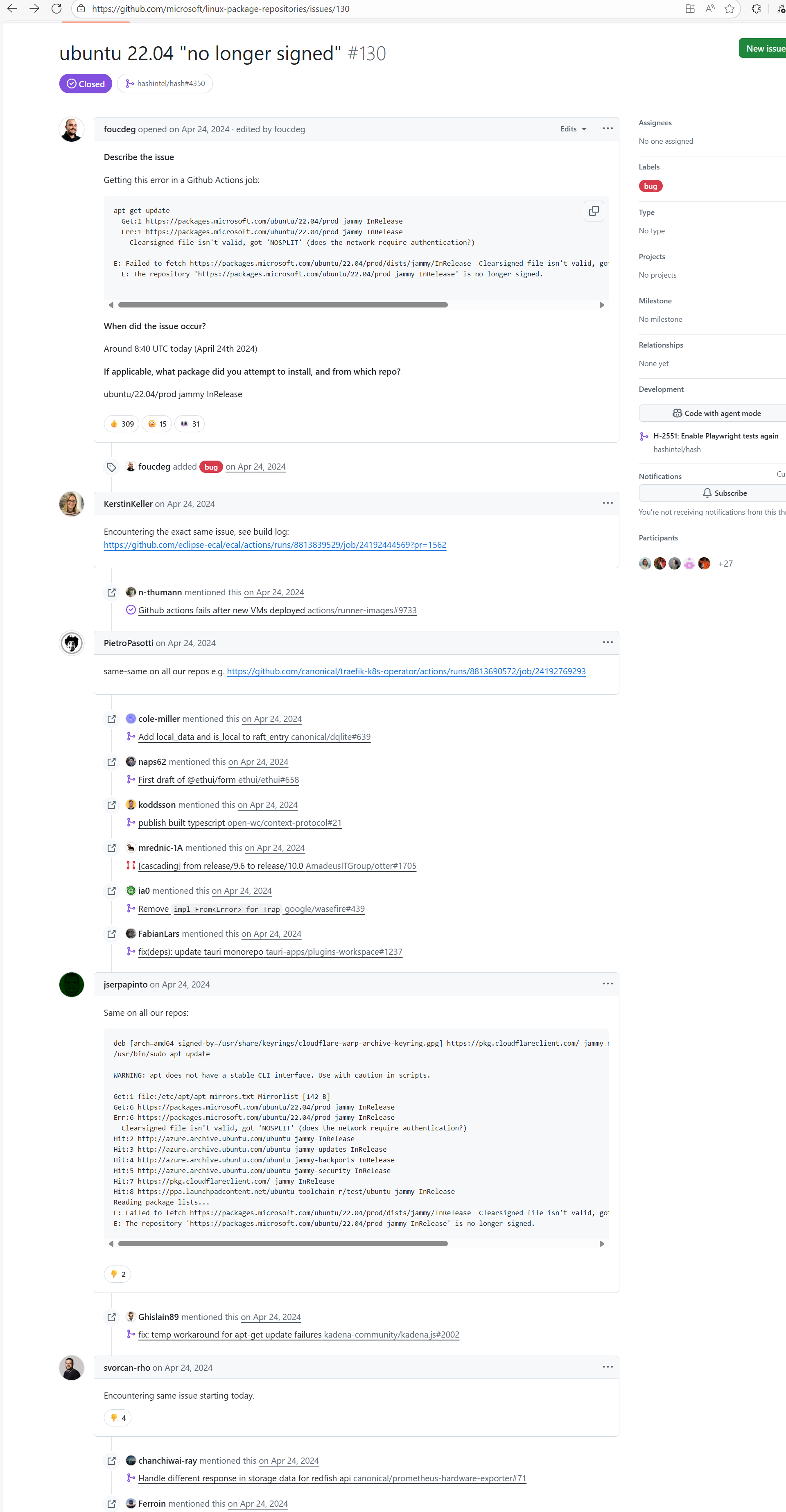

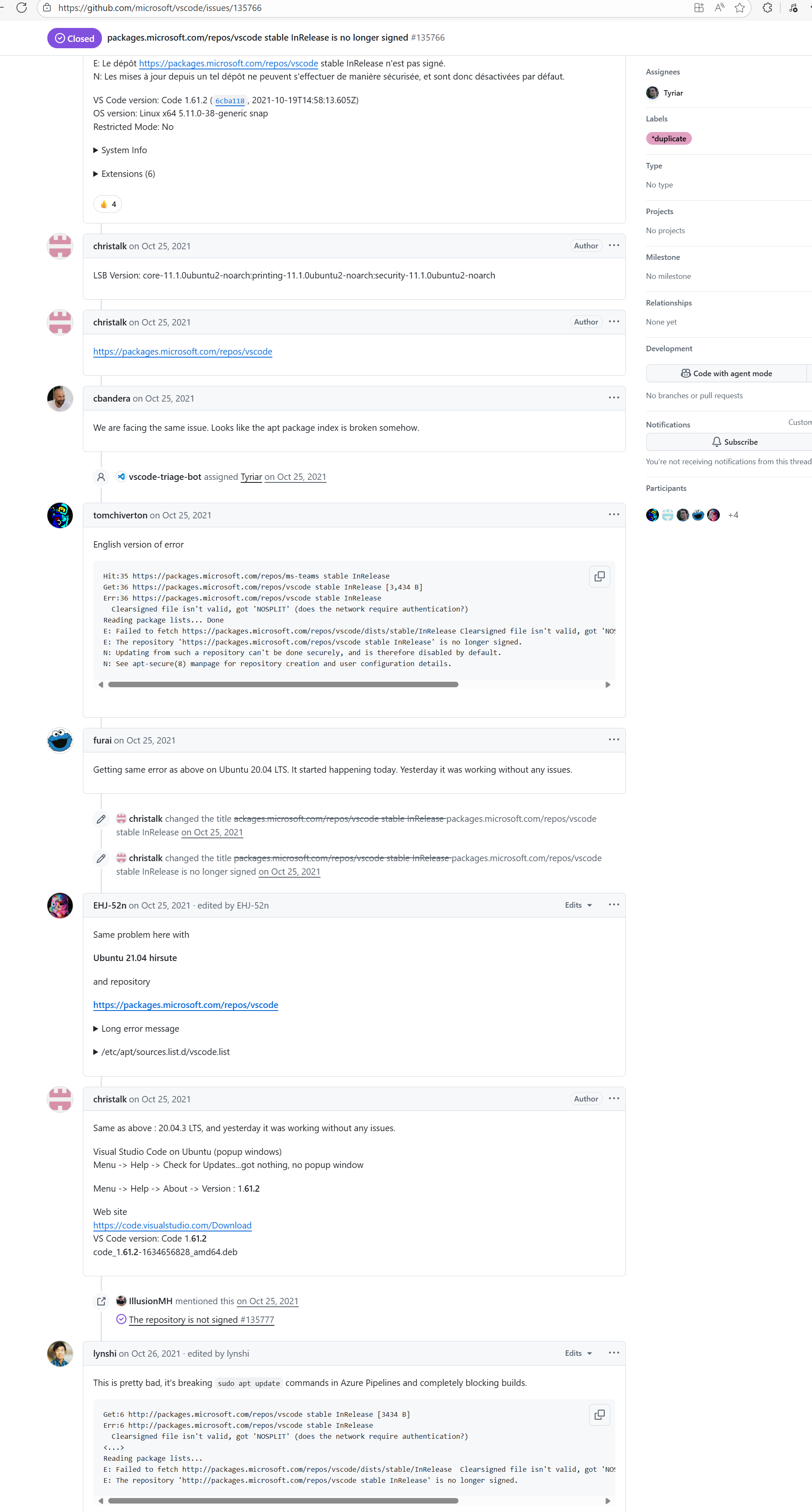

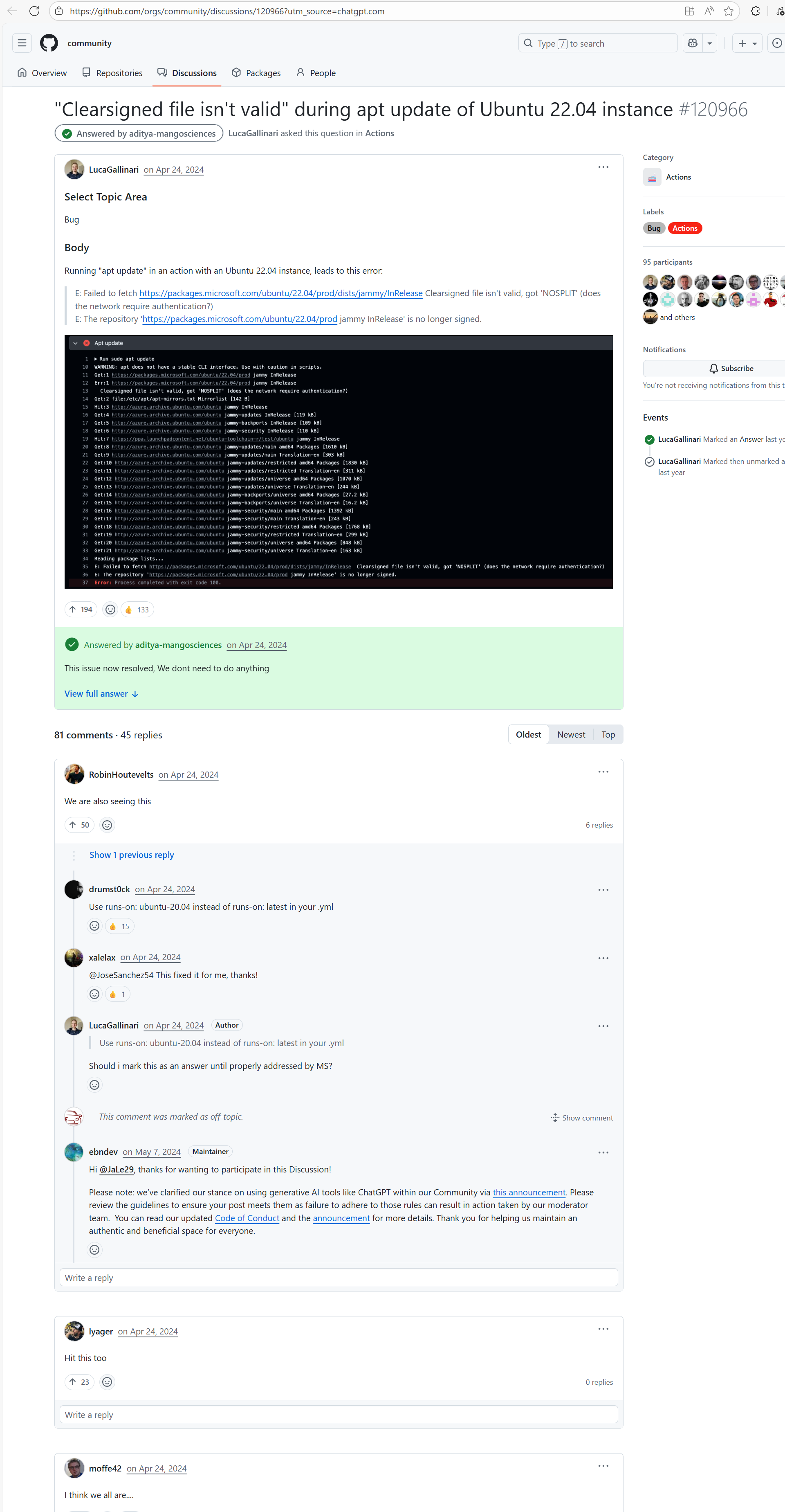

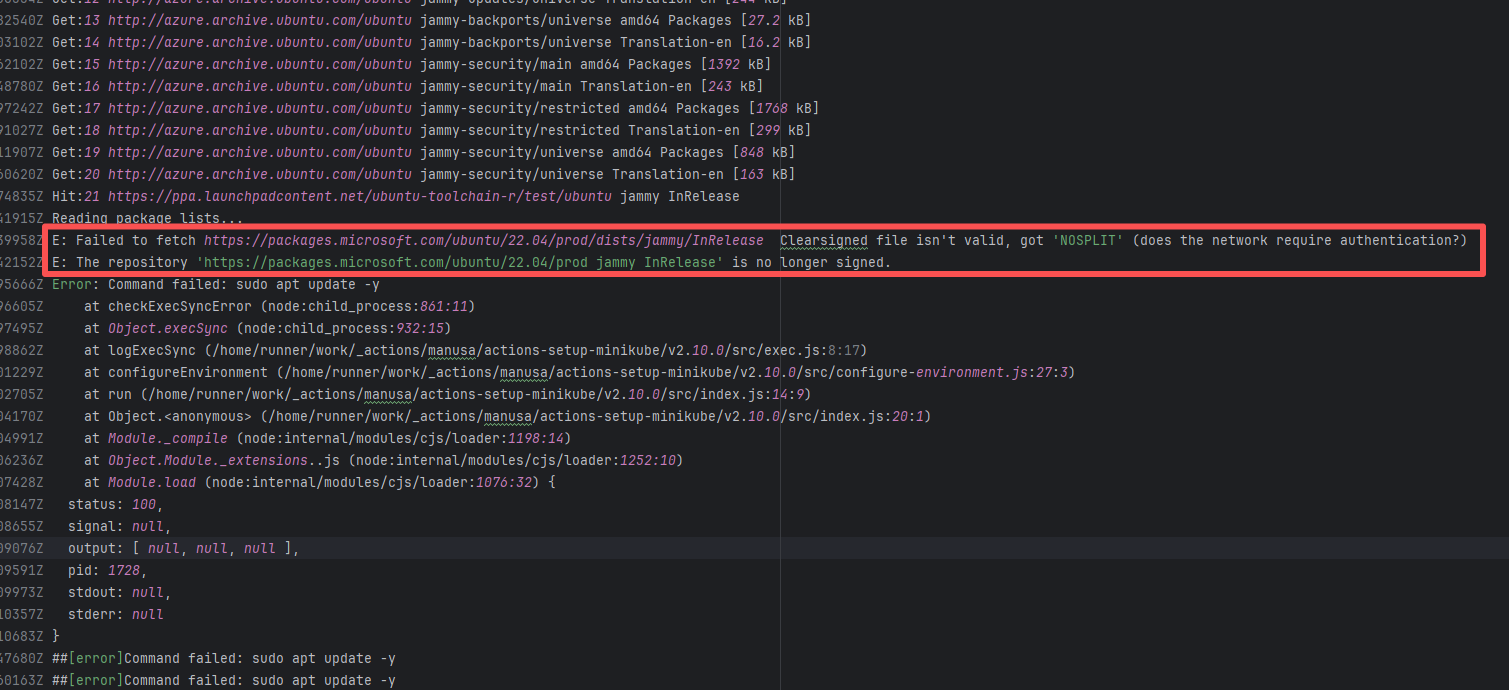

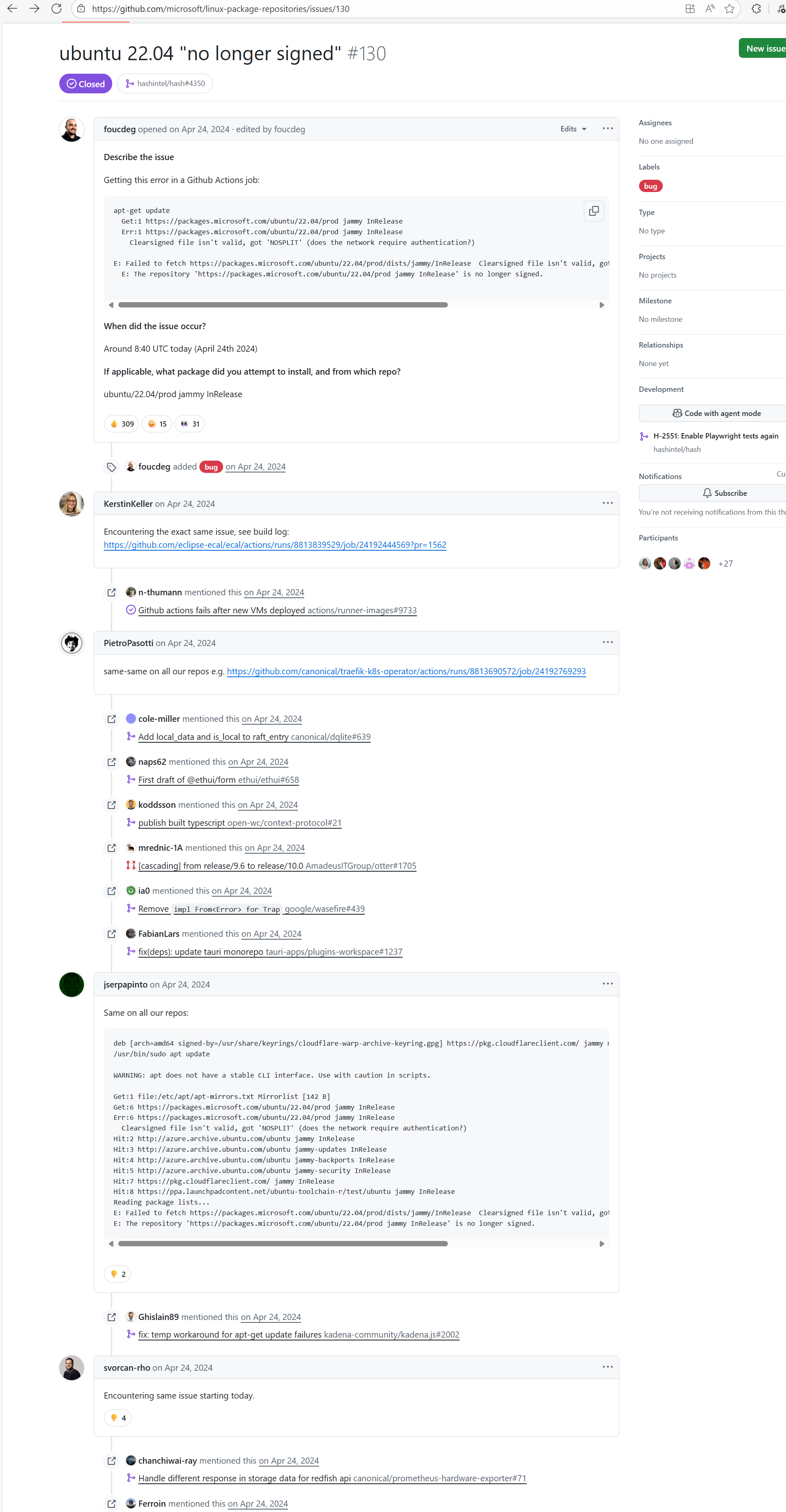

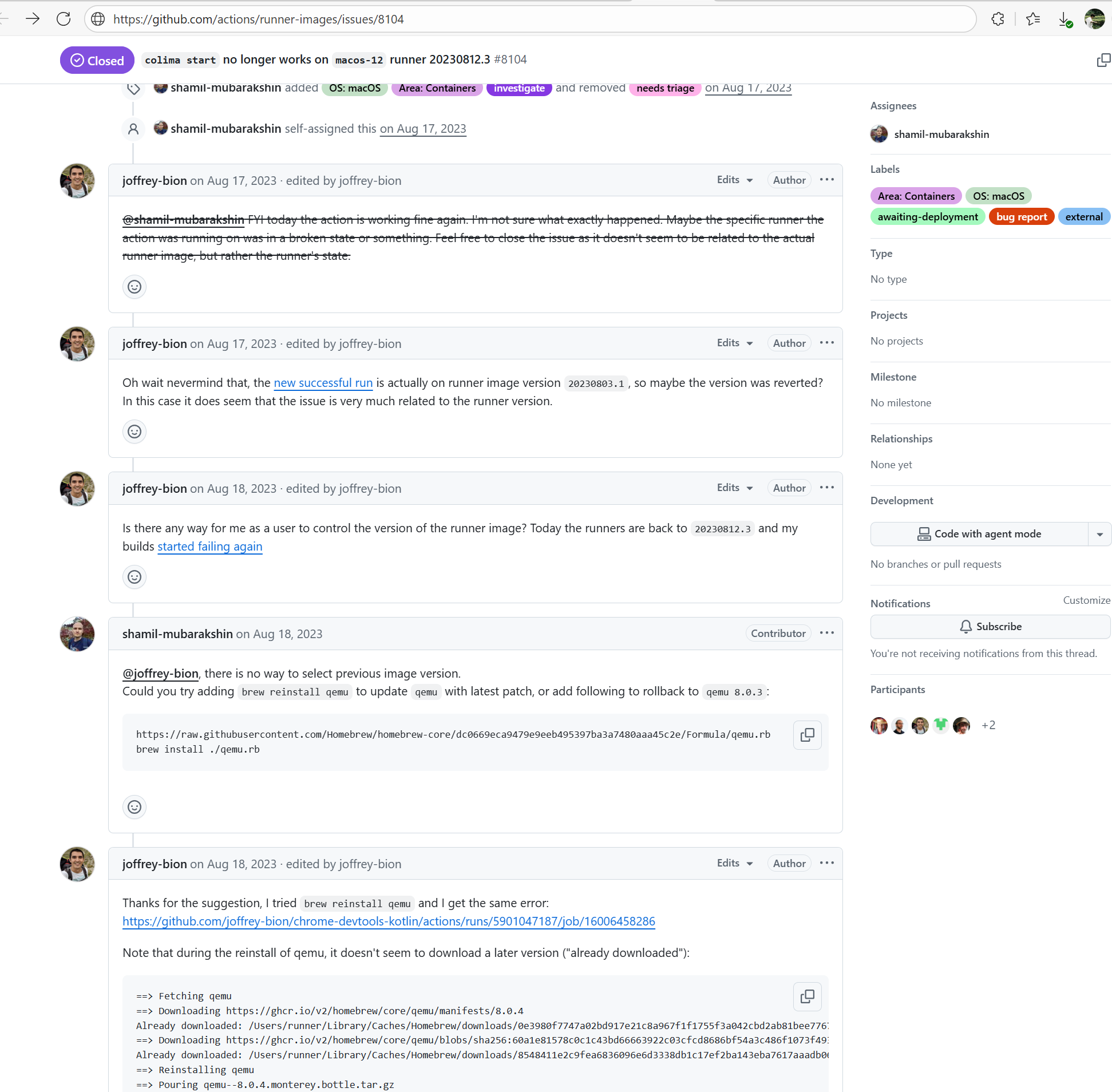

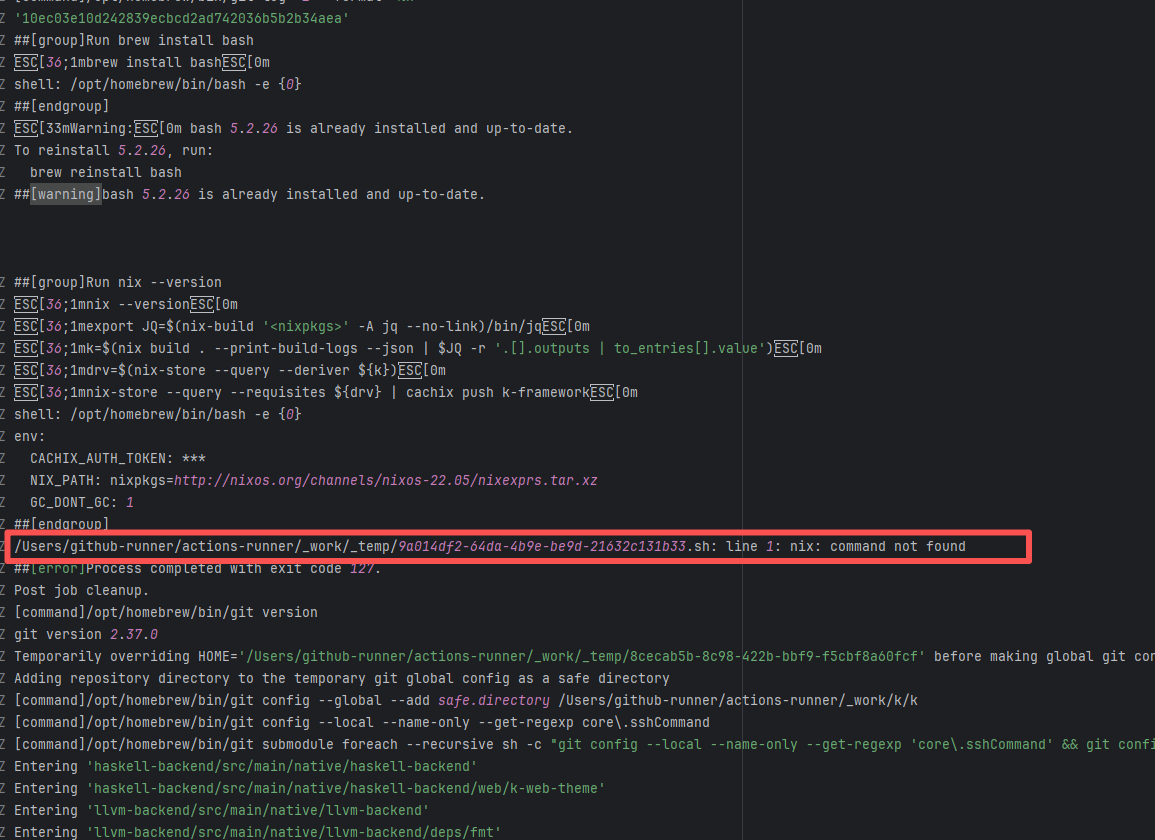

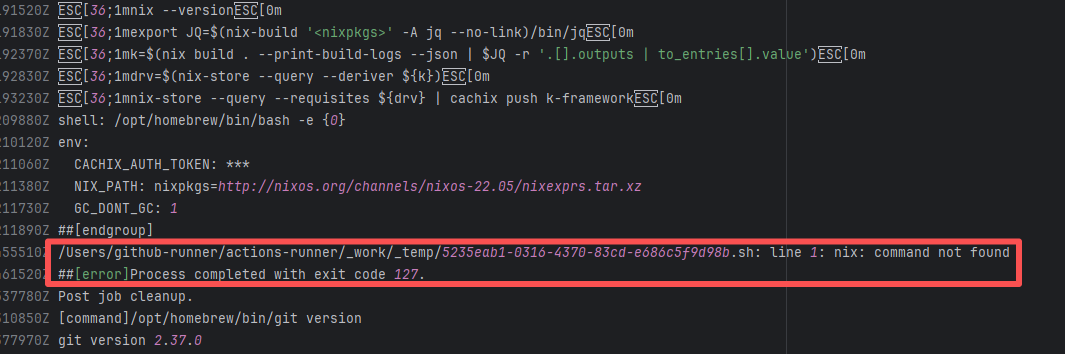

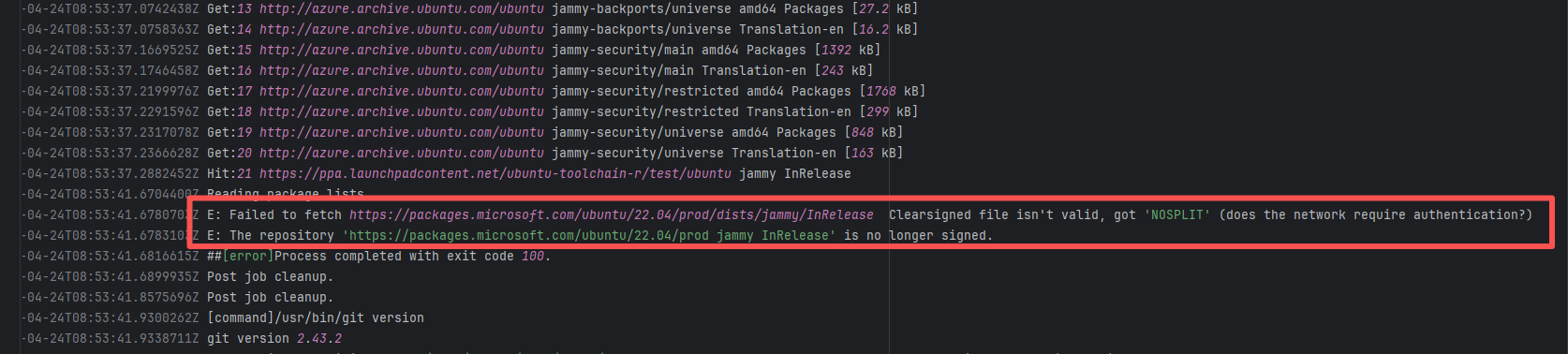

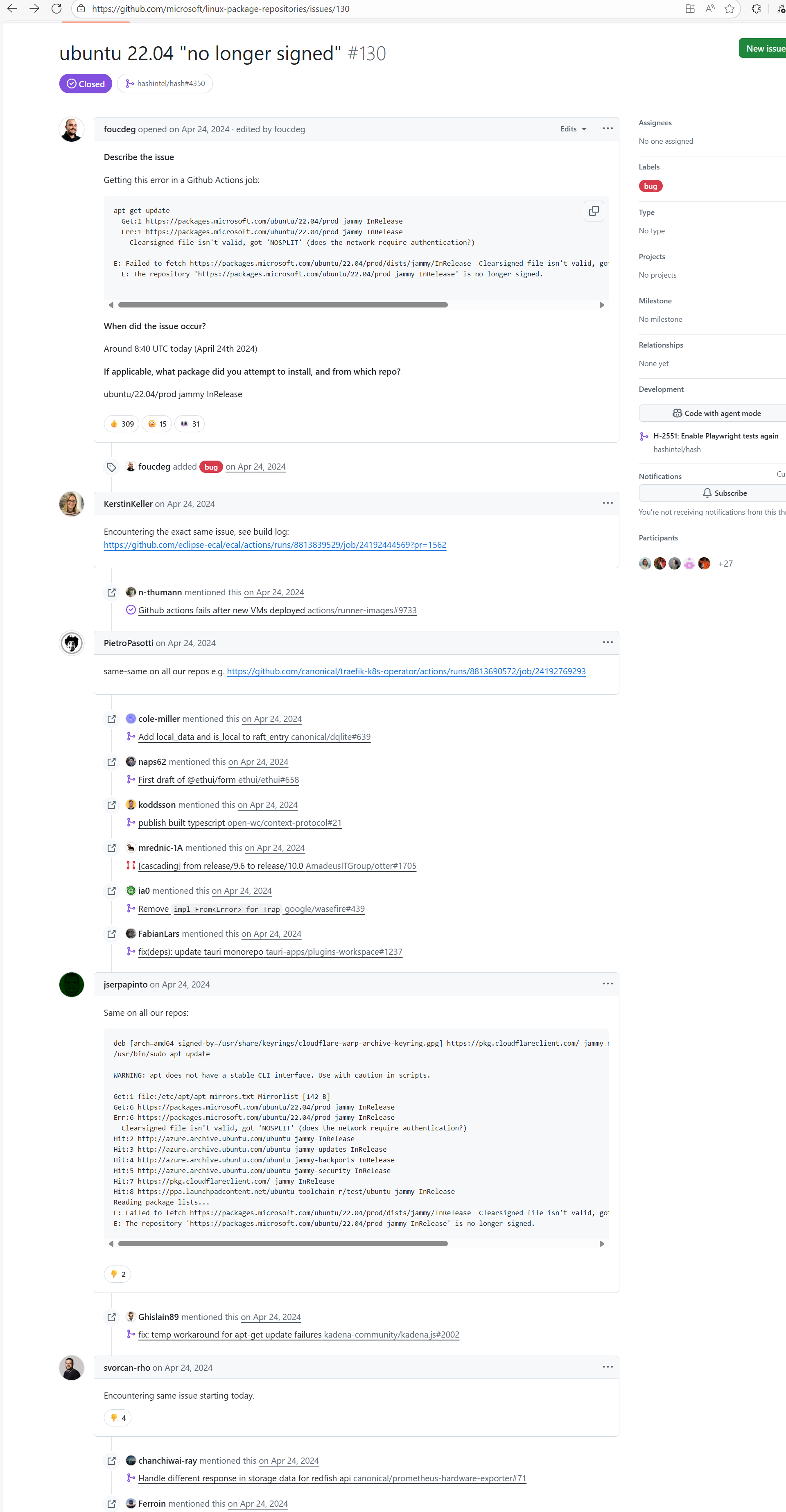

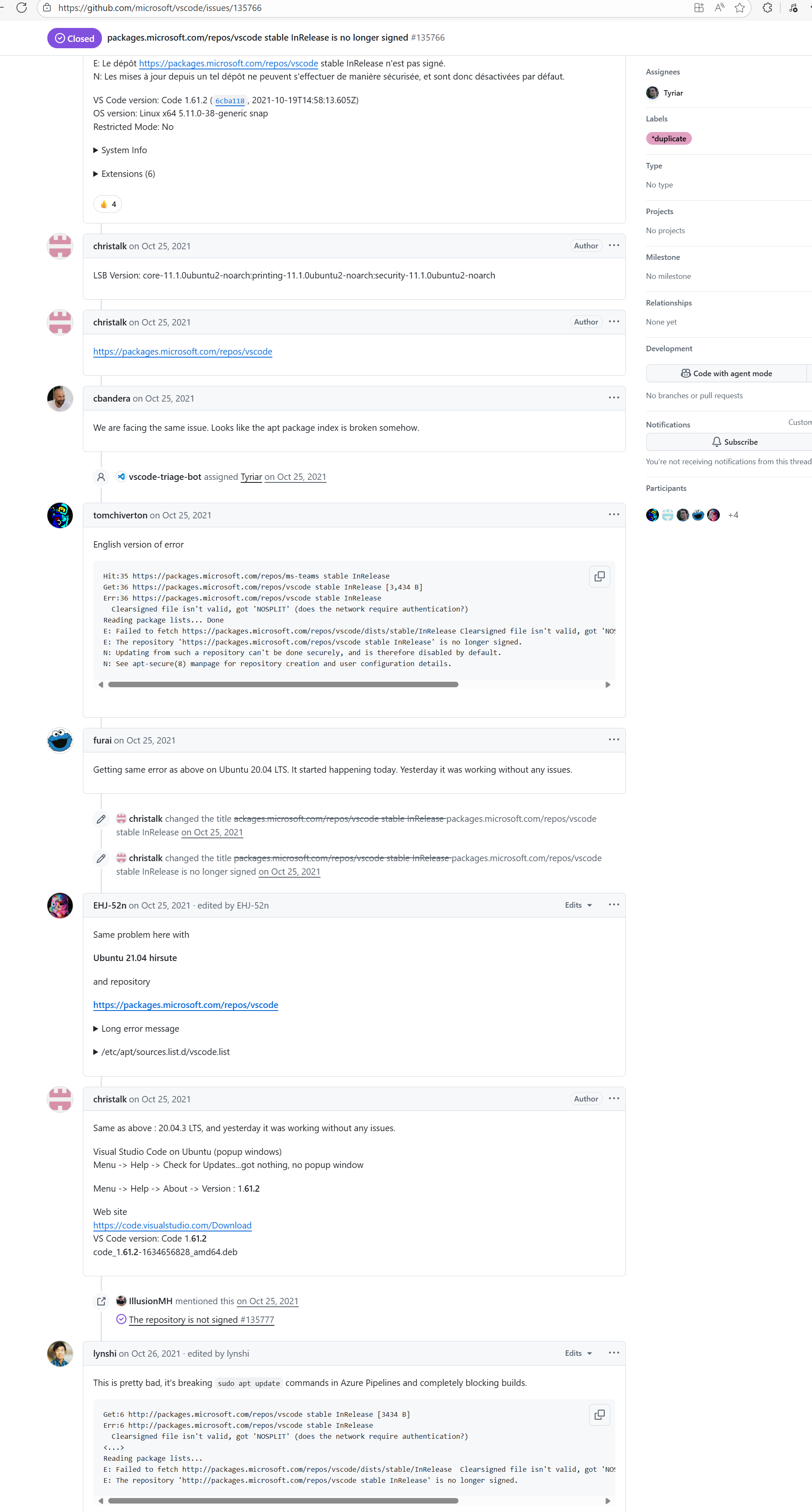

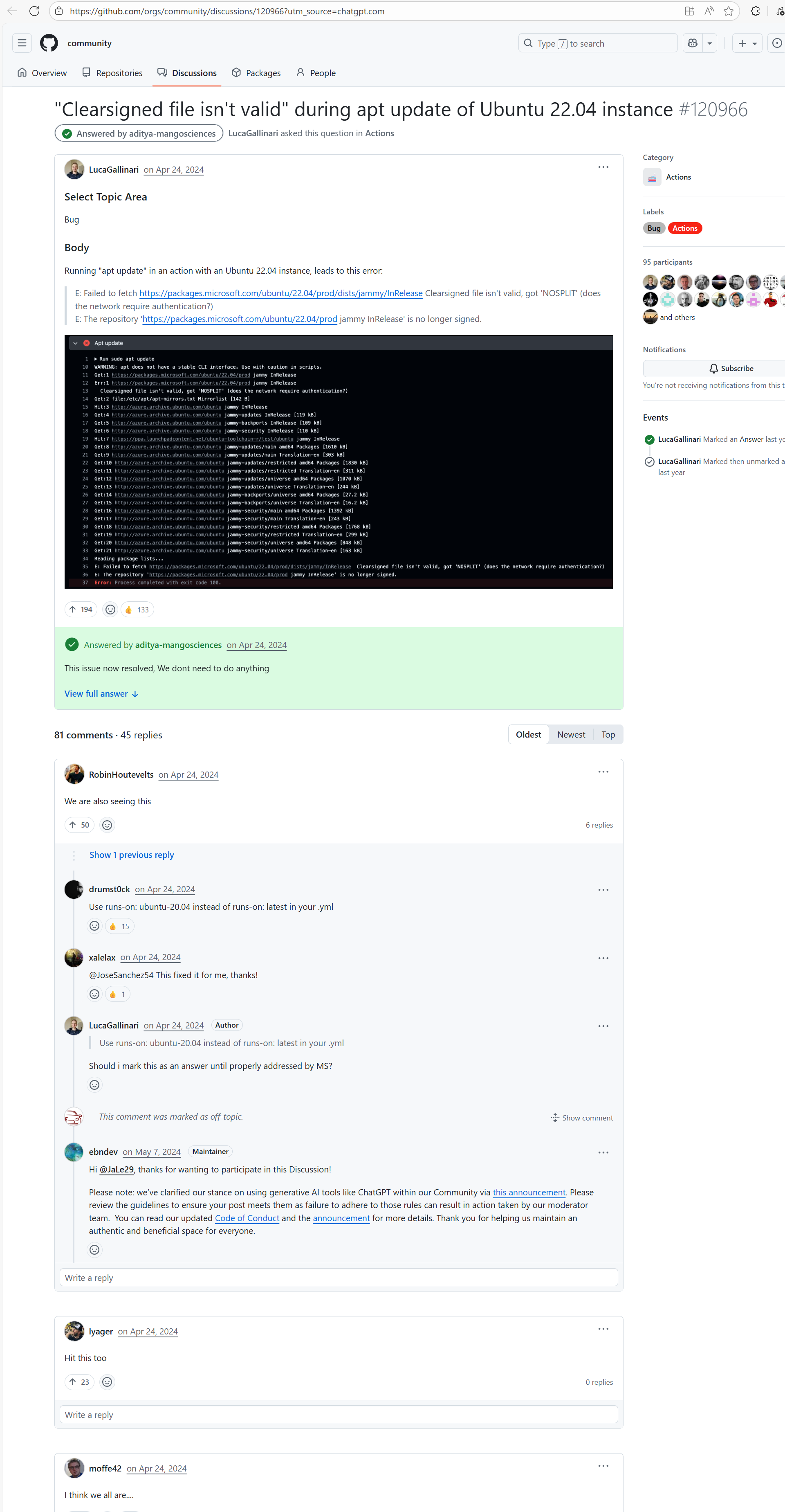

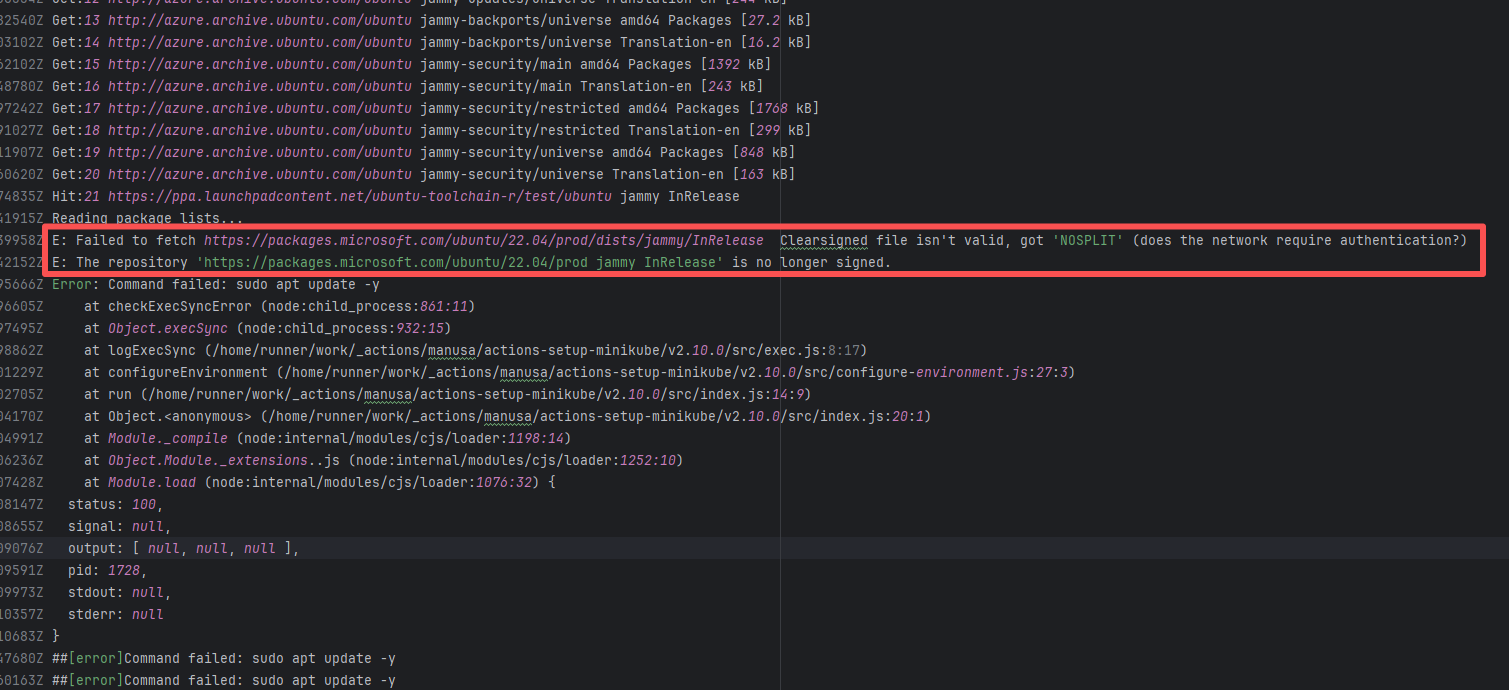

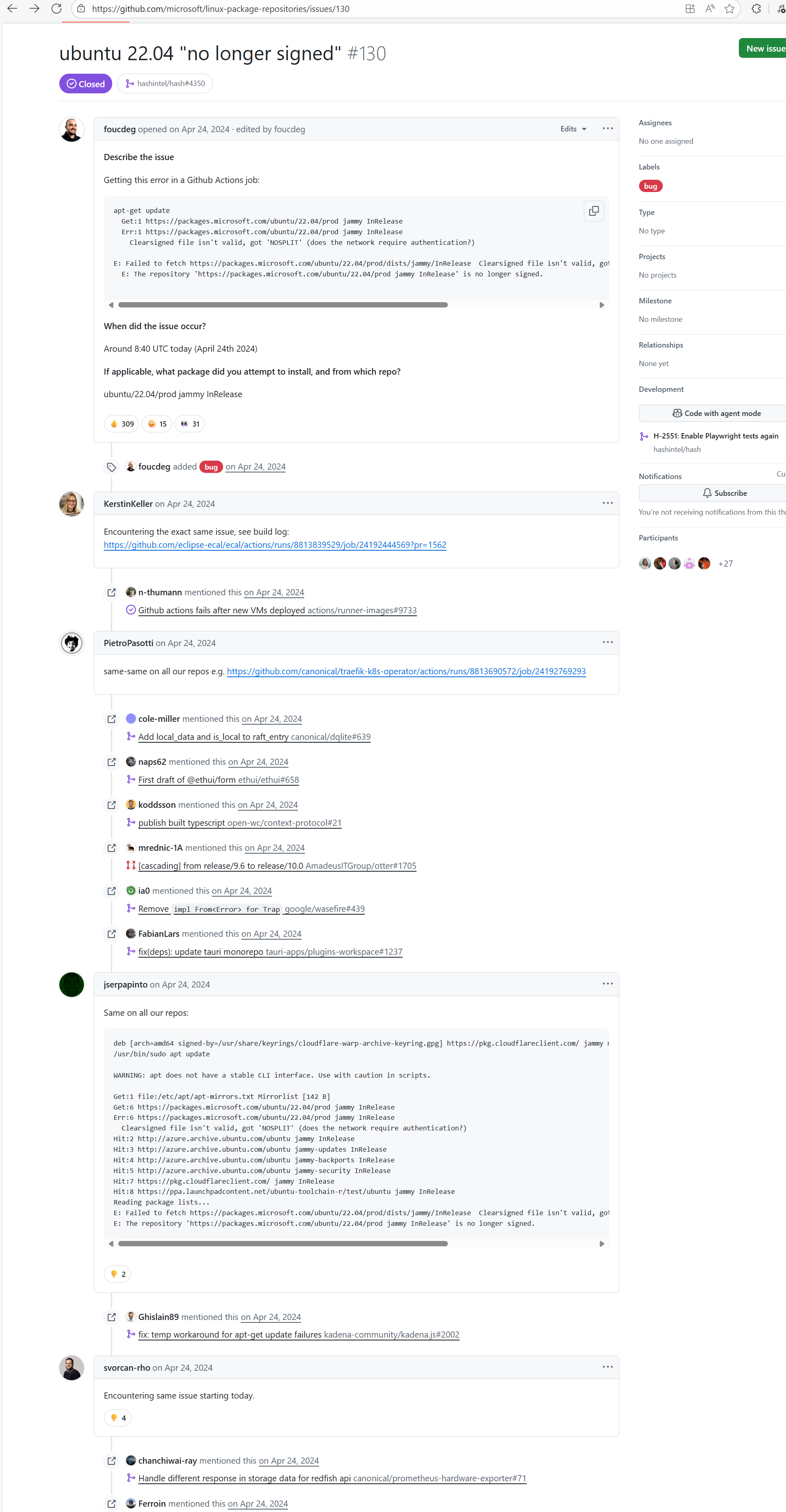

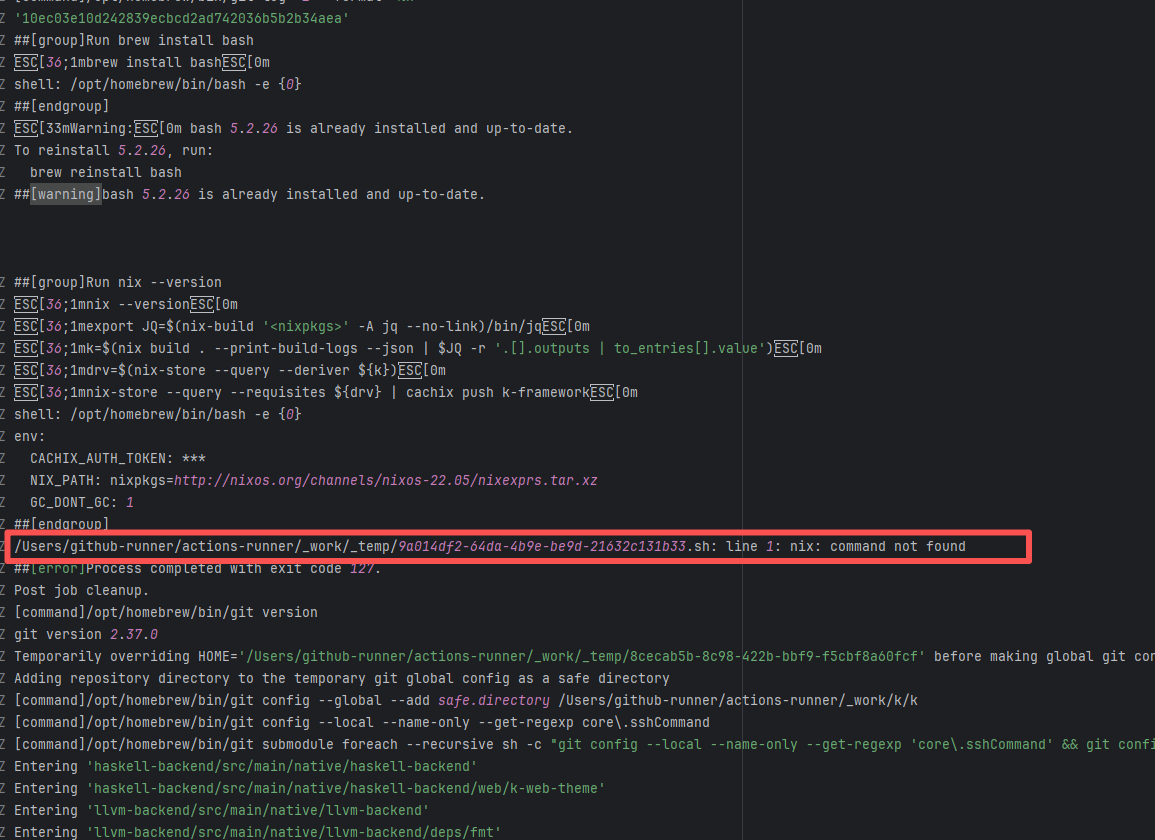

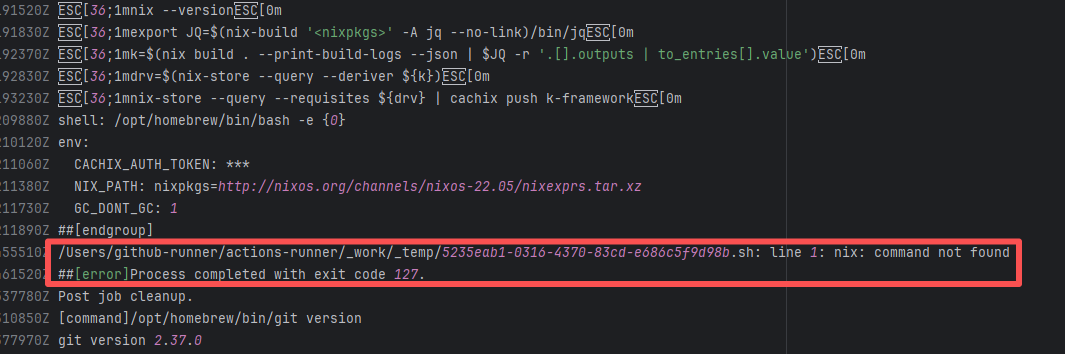

| runtimeverification/k |

24192350307 |

Tool Intermittent Failure |

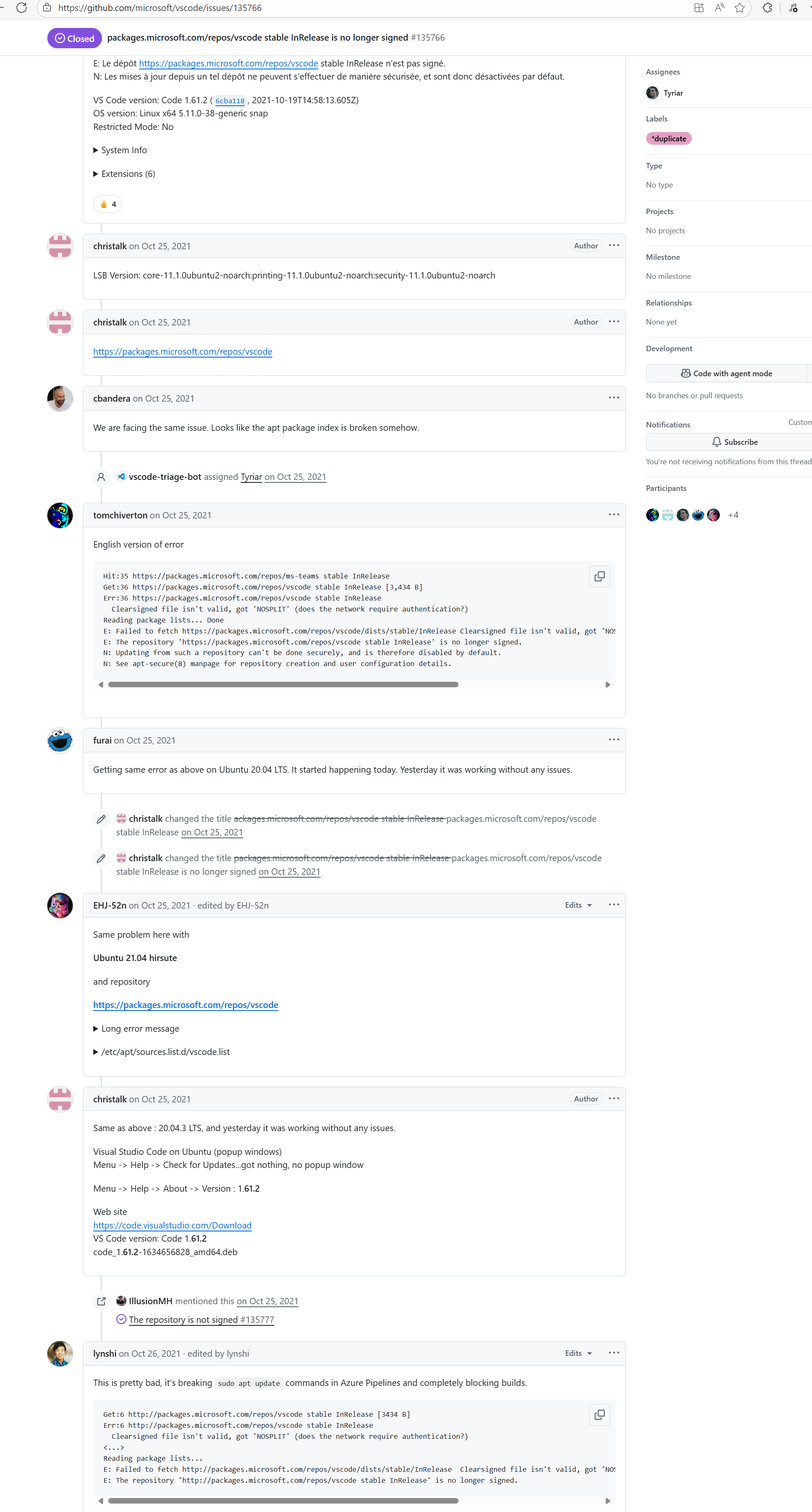

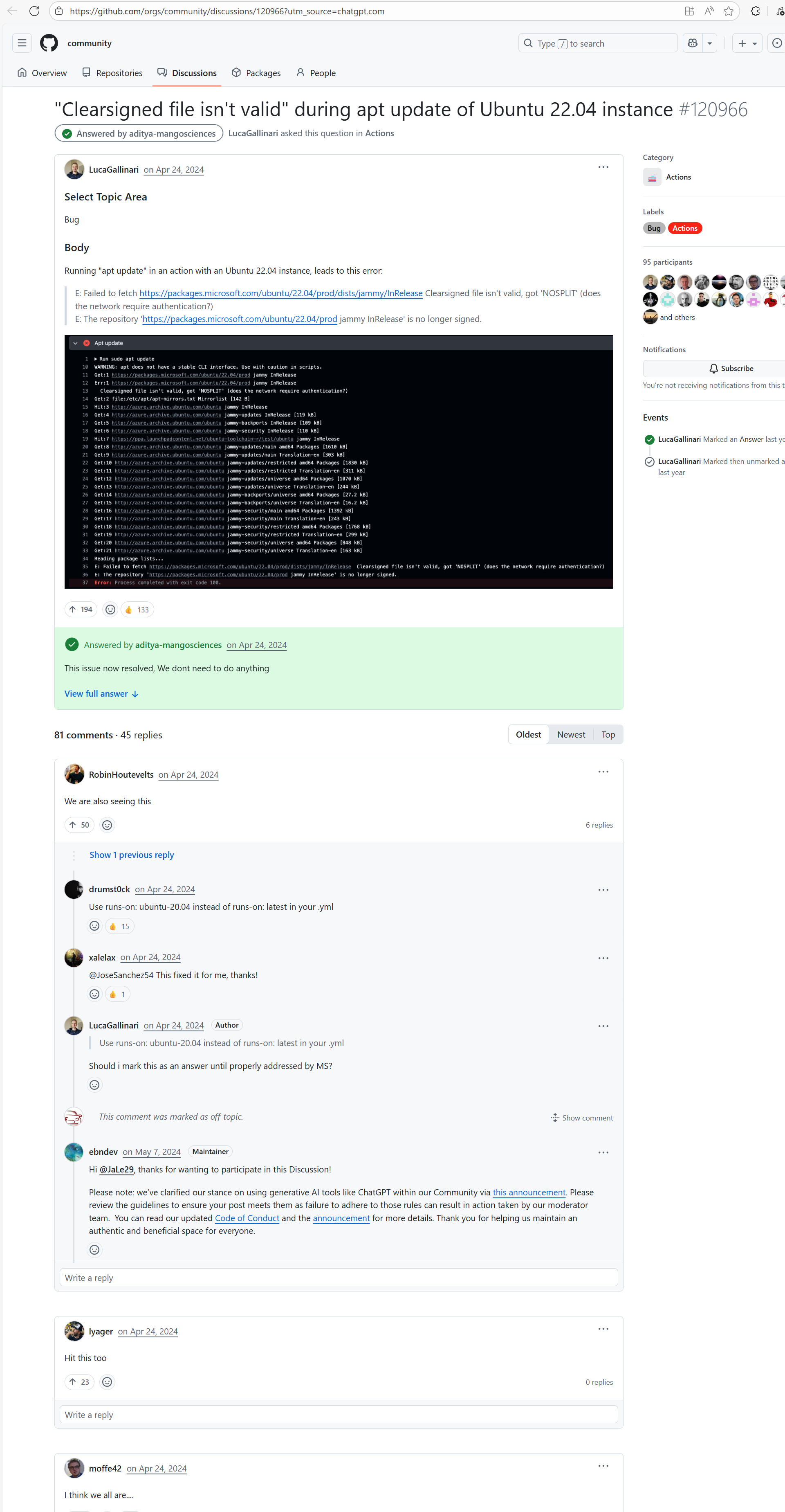

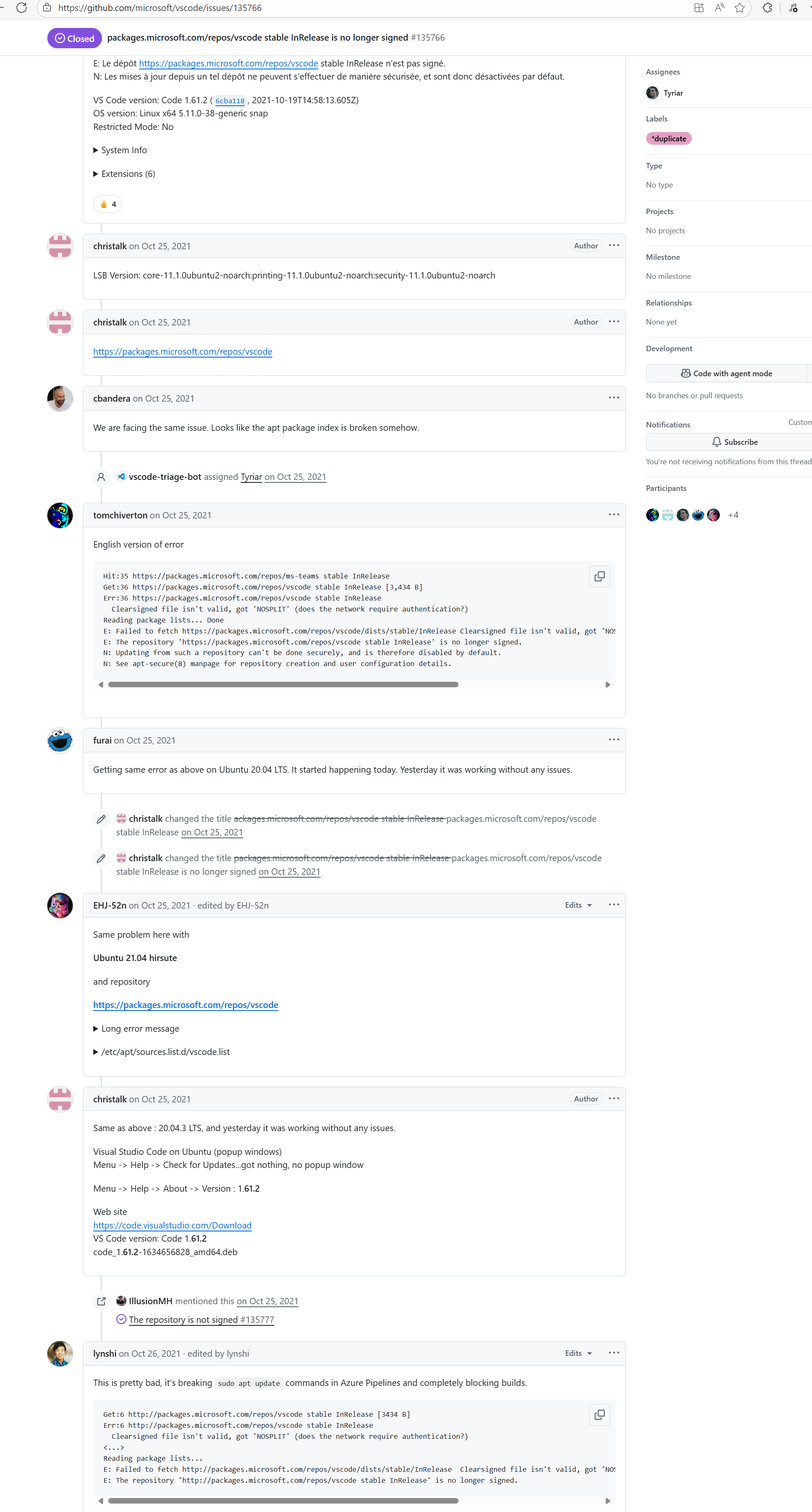

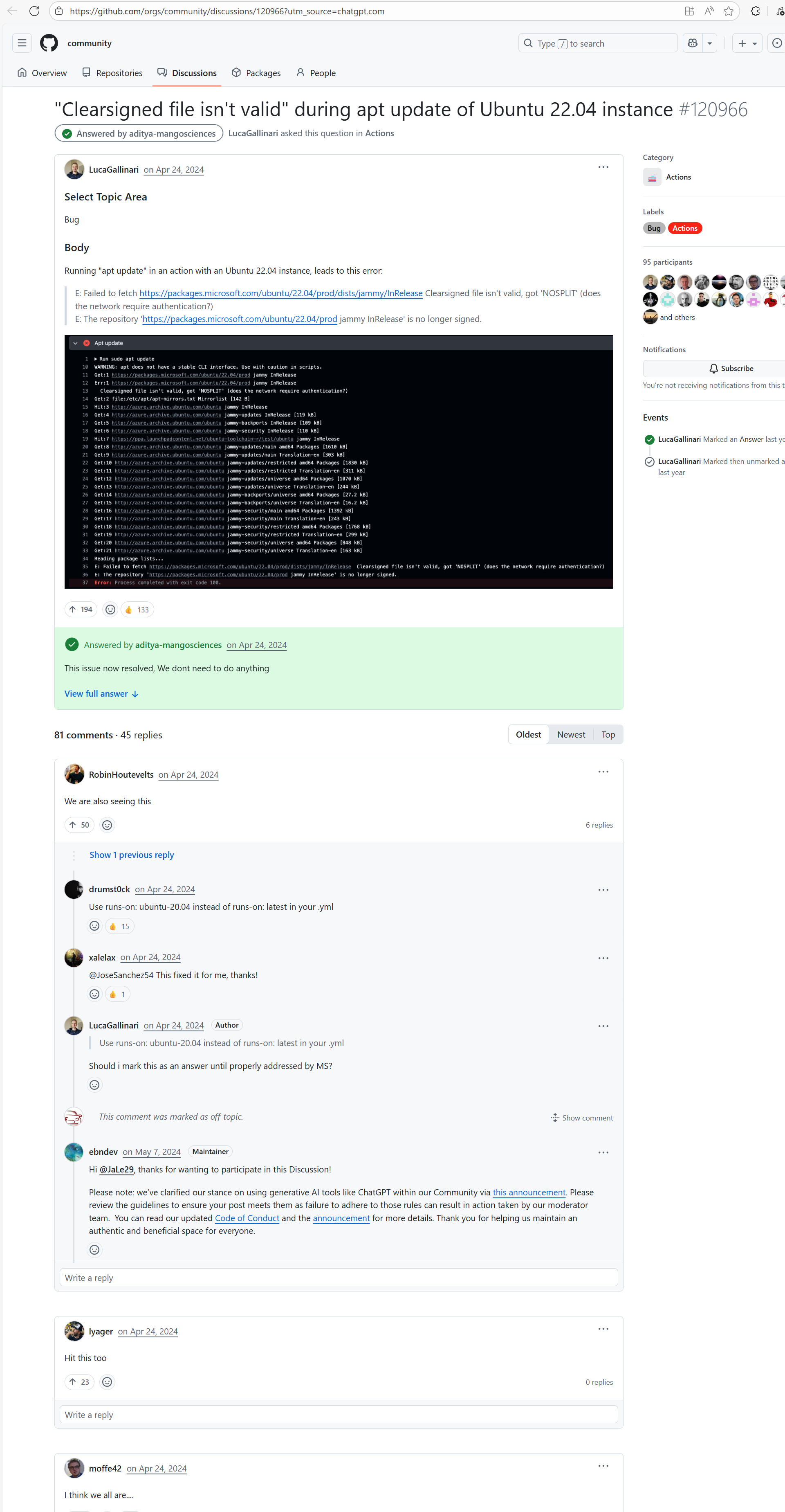

Error: According to the logs, the current network (such as a company intranet or proxy server) may require authentication to access external resources, causing the signature file from the Microsoft repository to not download correctly or to be tampered with, which leads to verification failure.

Root Cause: Succeeded after multiple reruns without modifying network authentication. |

A web search reveals this is a bug with the Microsoft mirror. This error has been reported multiple times during various periods (e.g., October 25, 2021; August 24, 2024 - peak), pointing to a short-term failure or synchronization issue with the Microsoft repository / CDN, but there is no specific mitigation strategy. |

|

| bytedeco/javacpp-presets |

26281262769 |

Authentication Failure |

Error: Failed to deploy the artifact to the Sonatype OSS snapshot repository using the nexus-staging-maven-plugin. The core reason is a 401 Unauthorized error, meaning the deployment request lacks a valid authentication token.

Root Cause: Rerun succeeded after replacing the token. |

Modify the deployment token in the secrets within the repository settings interface. |

|

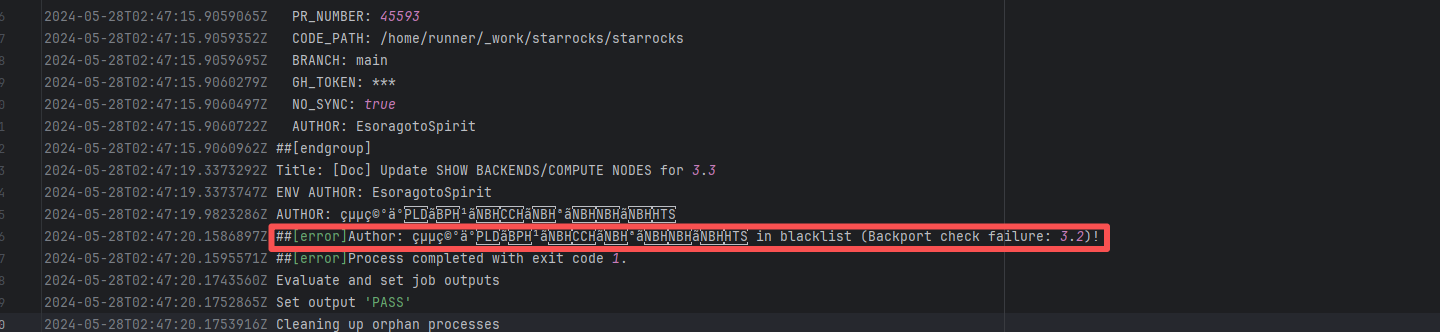

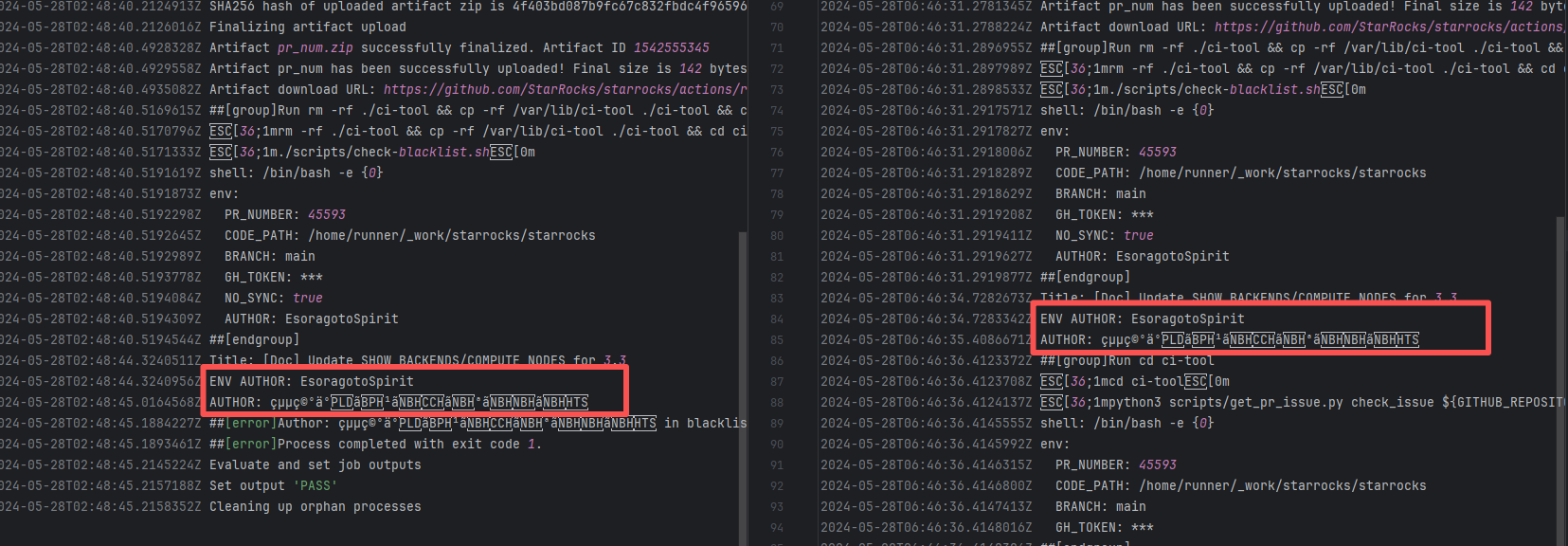

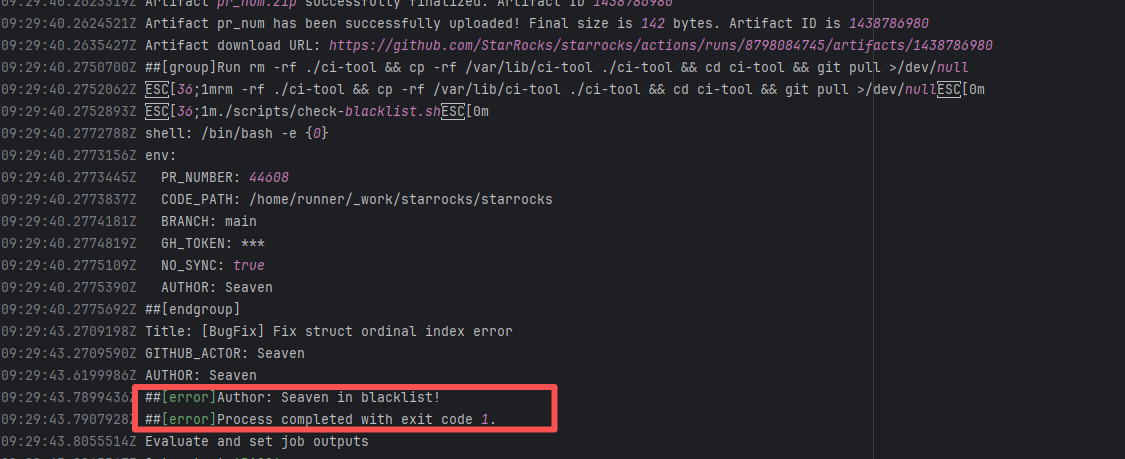

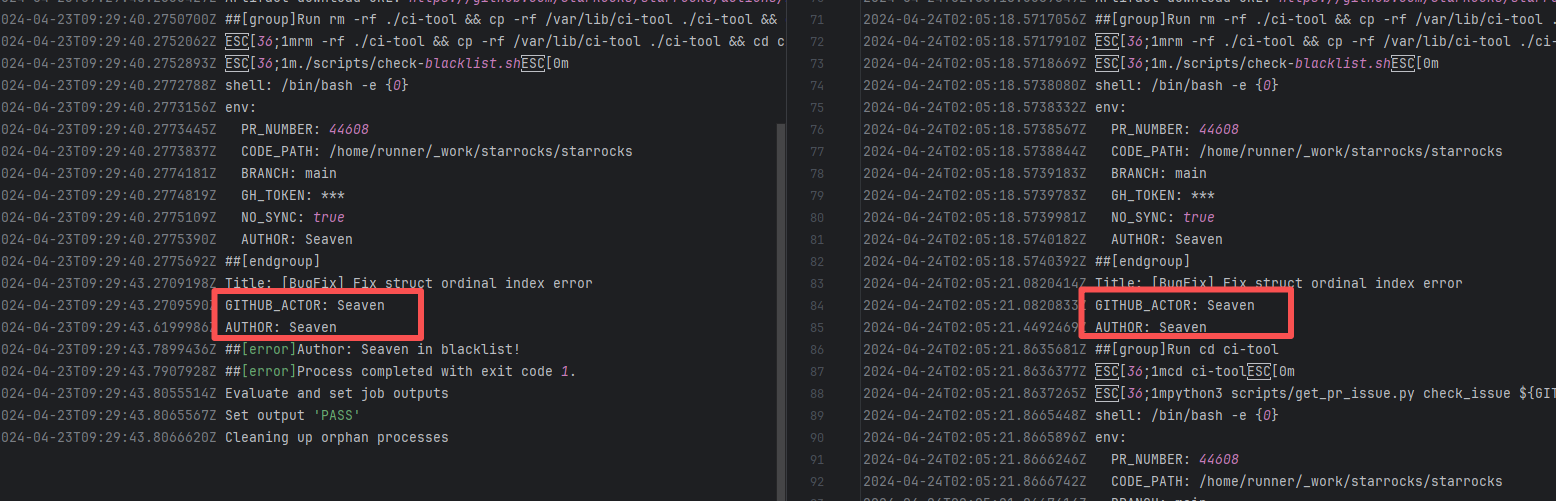

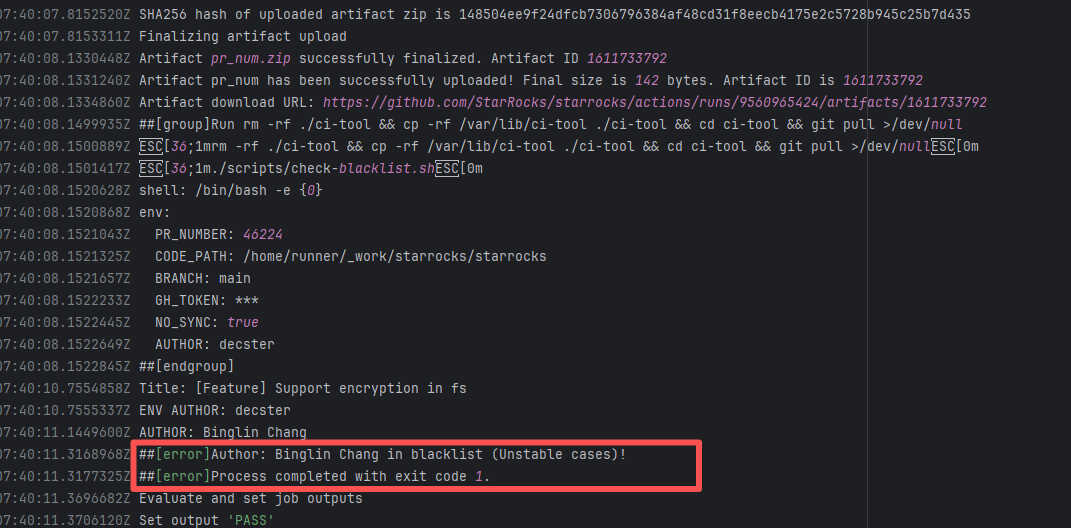

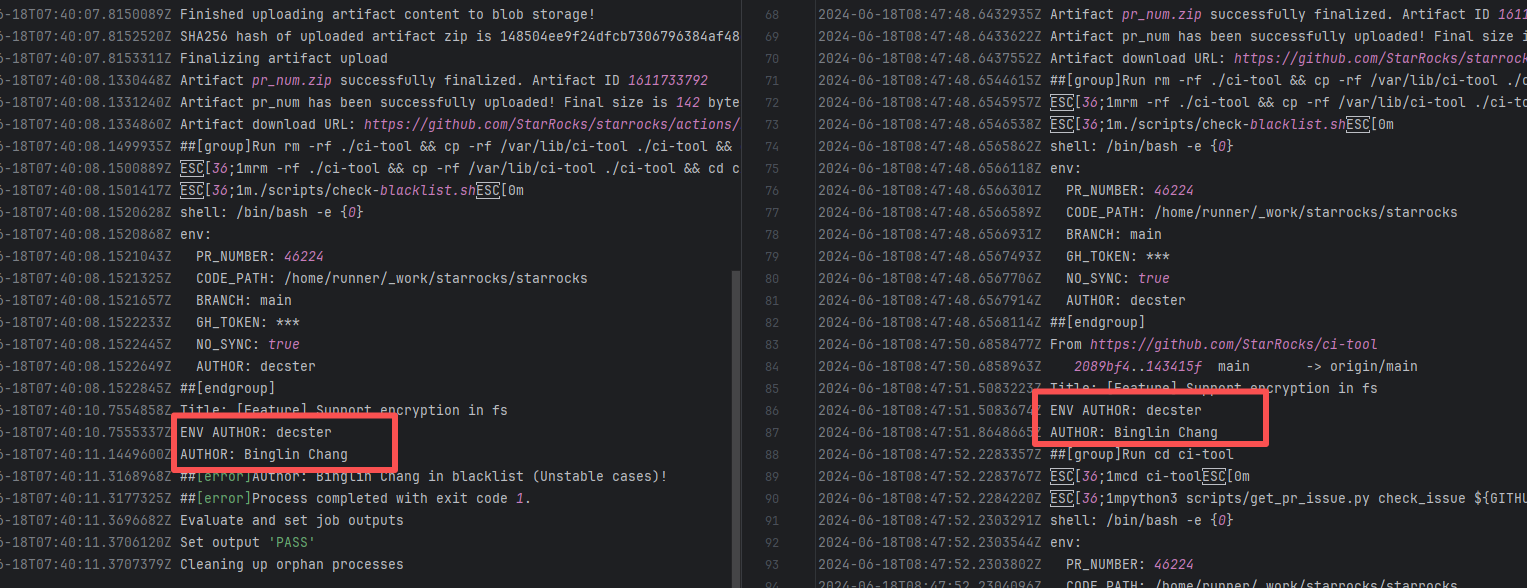

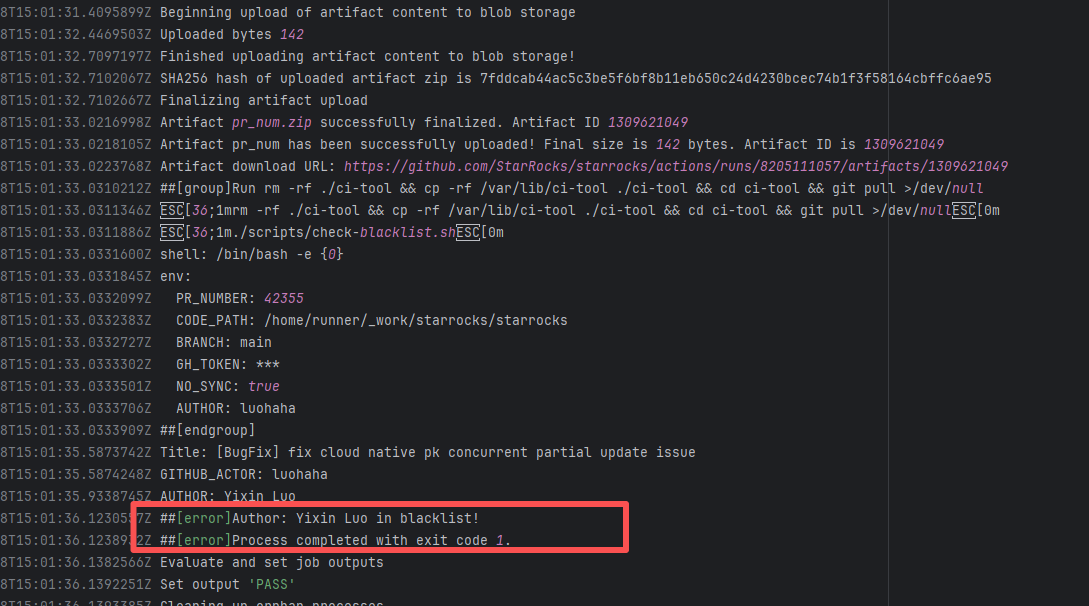

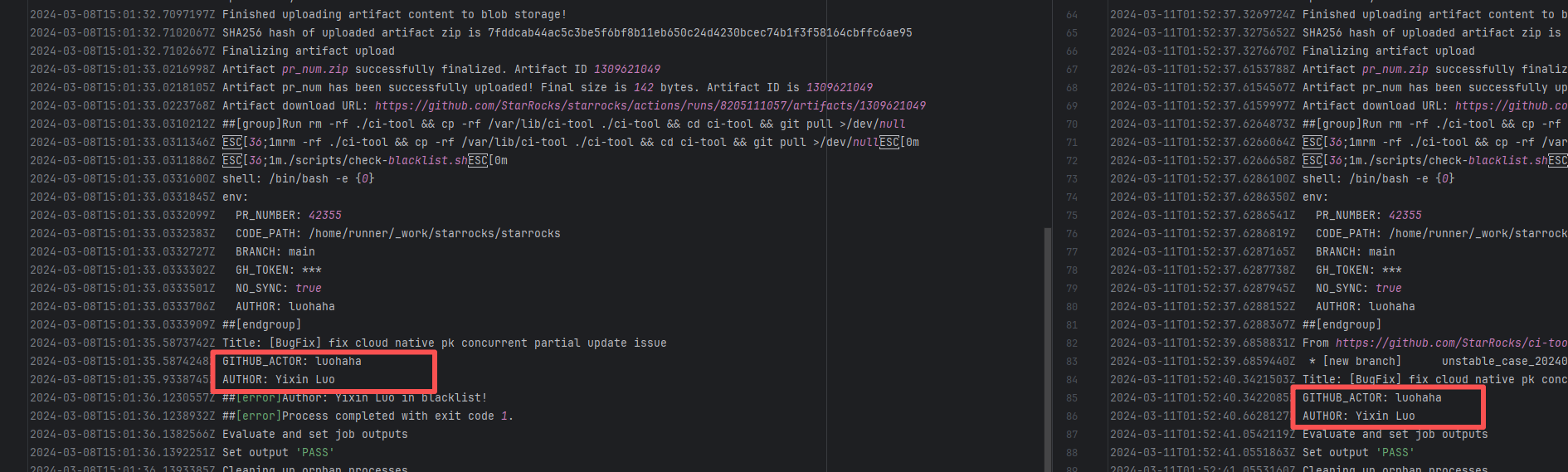

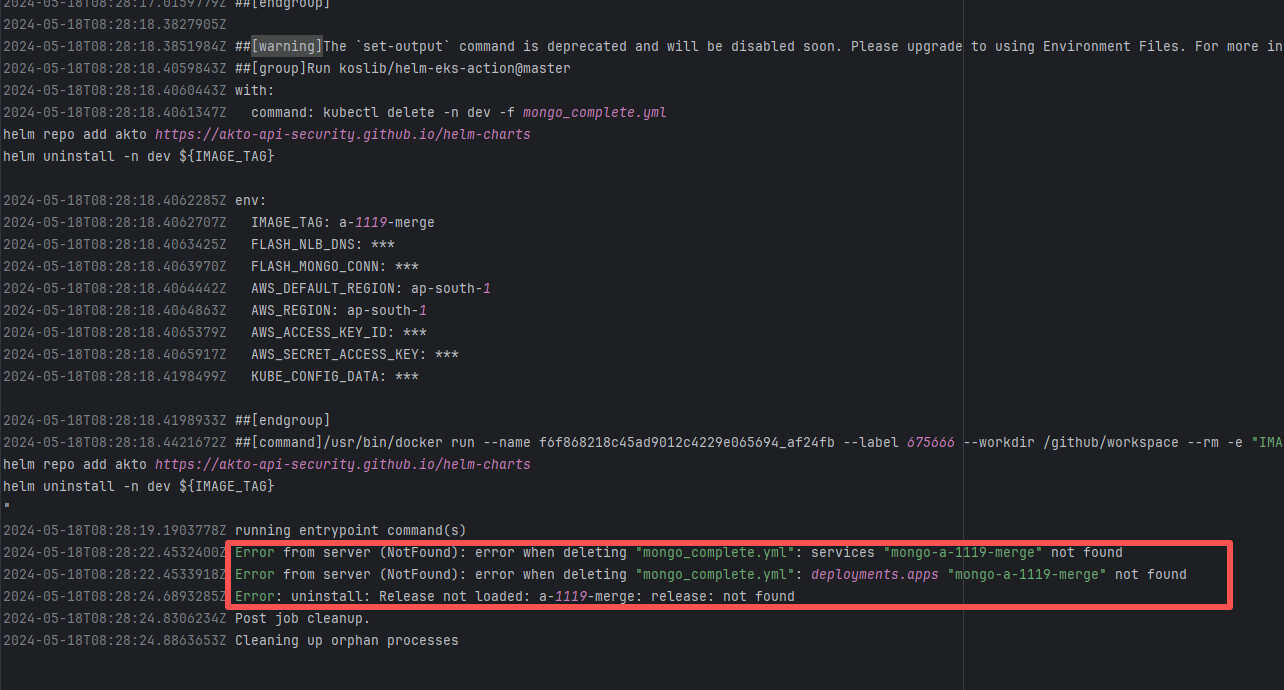

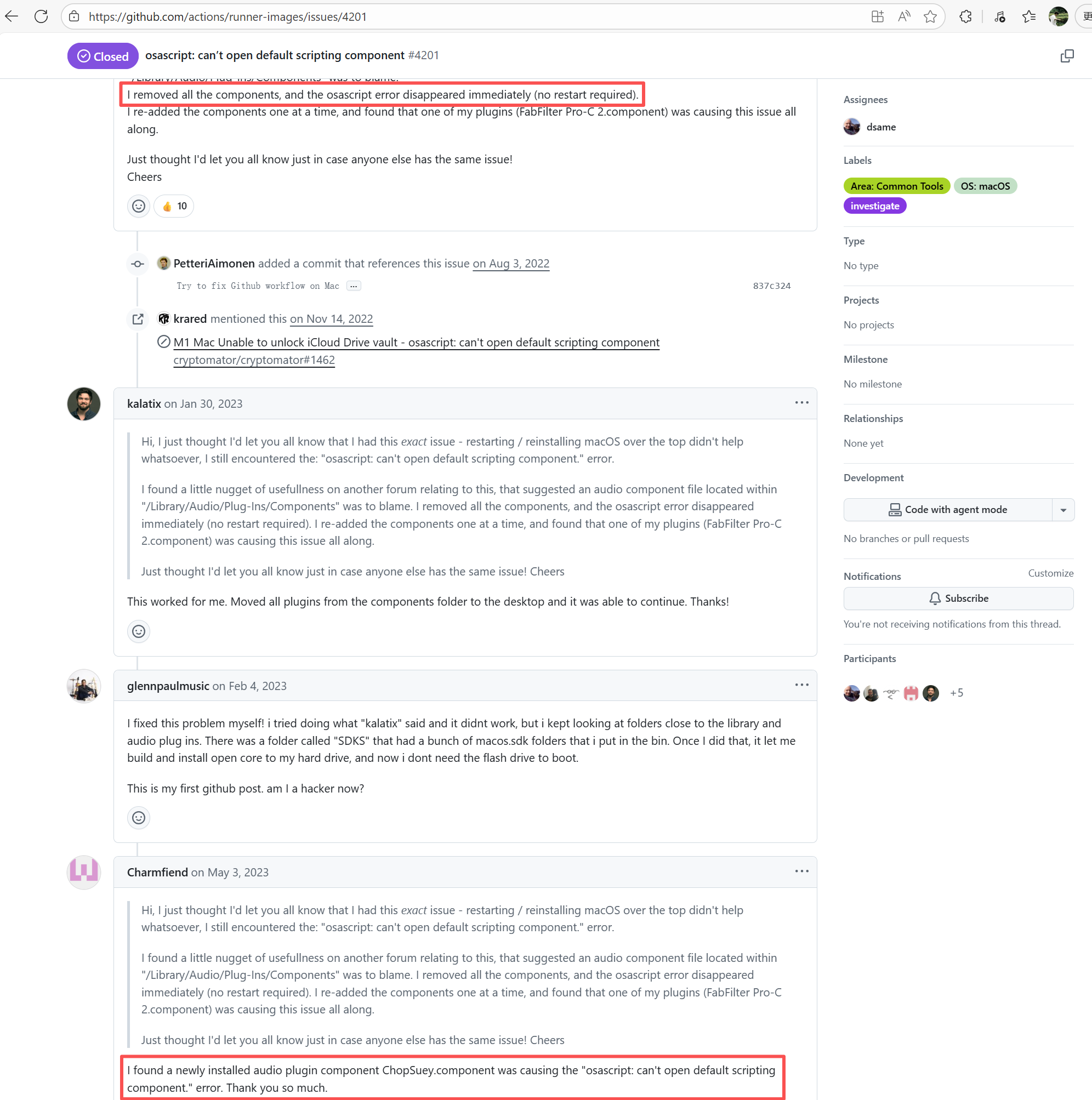

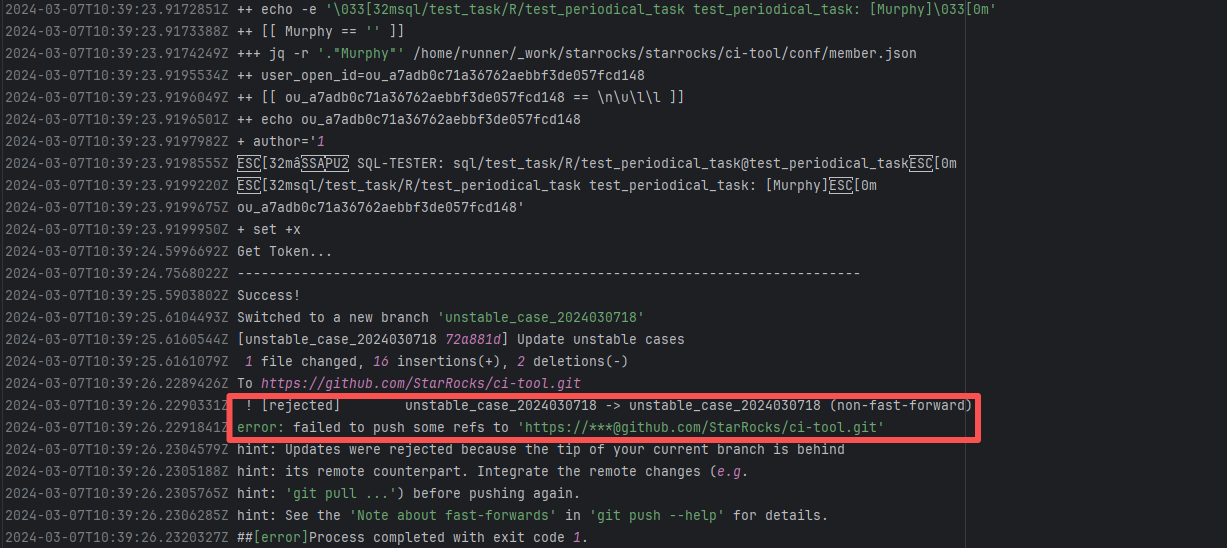

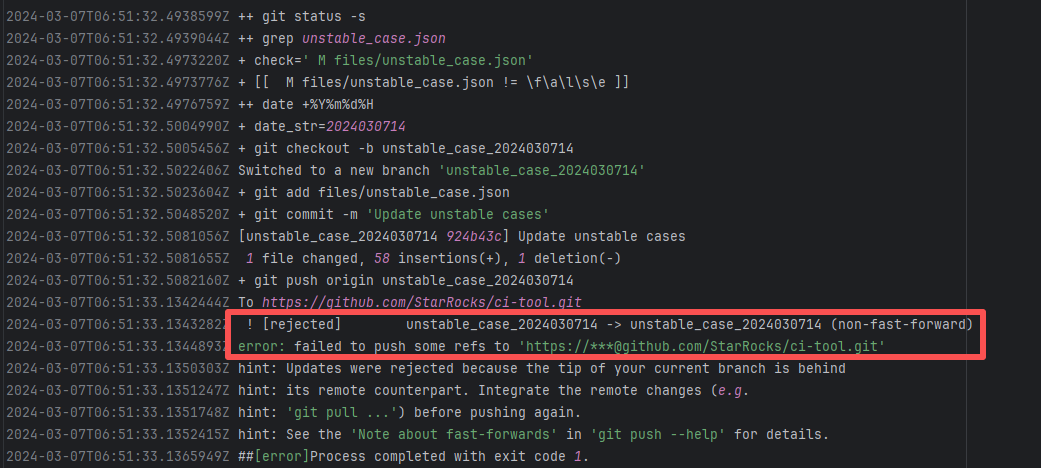

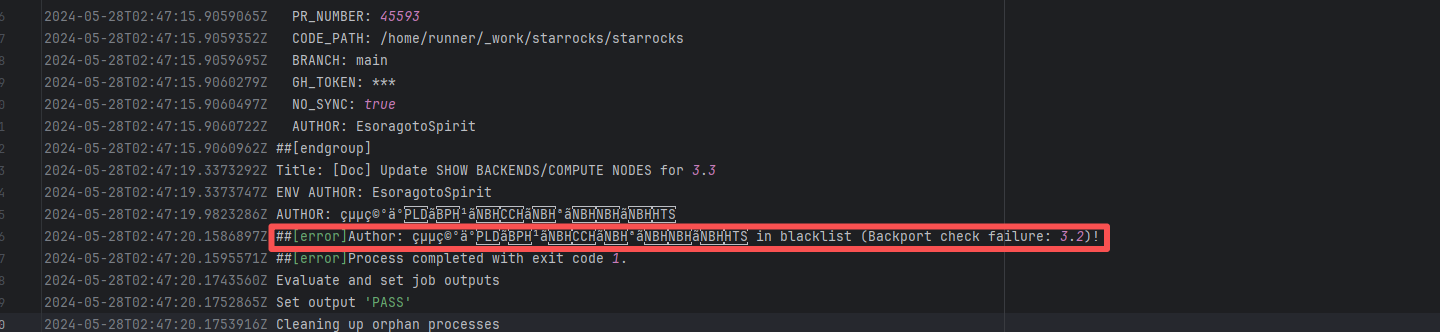

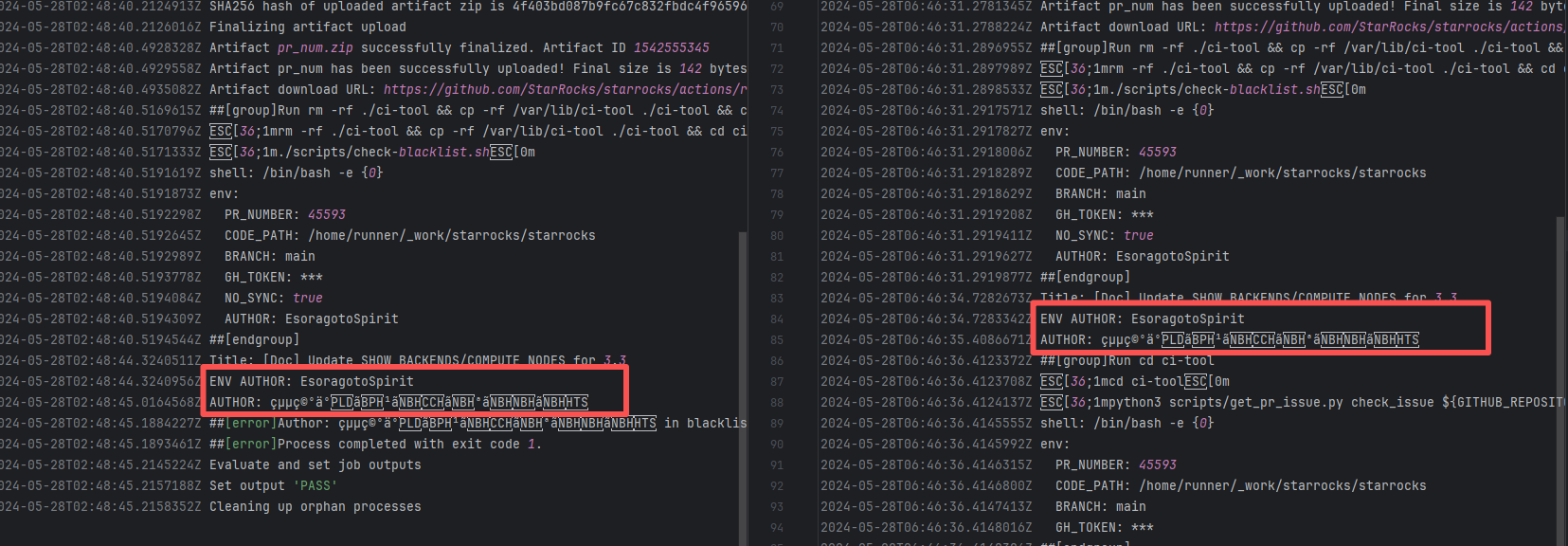

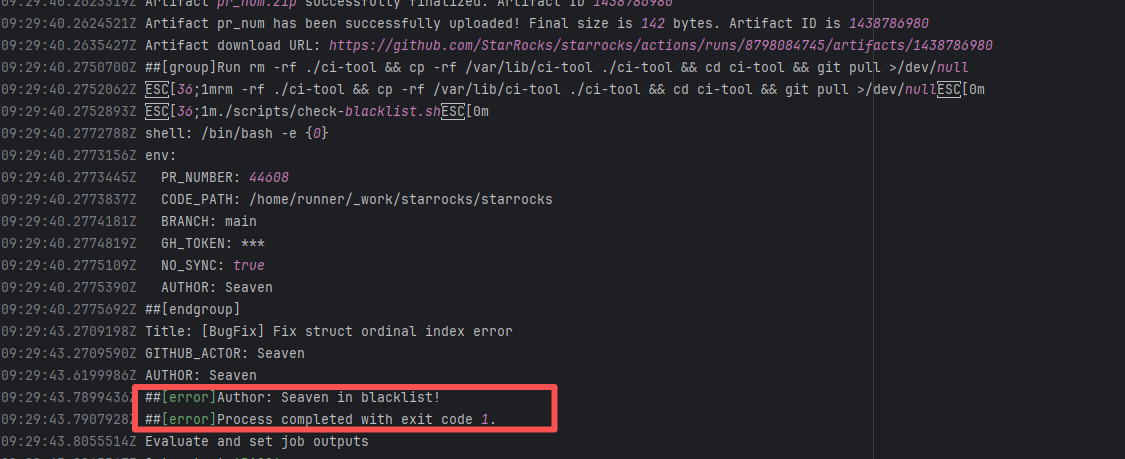

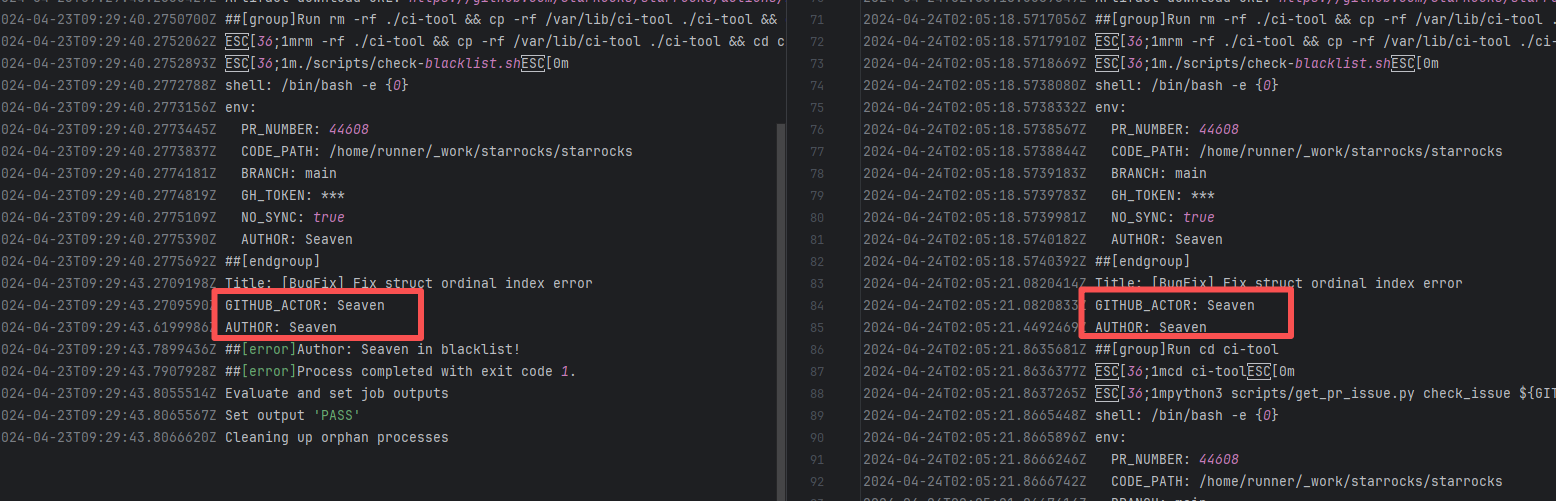

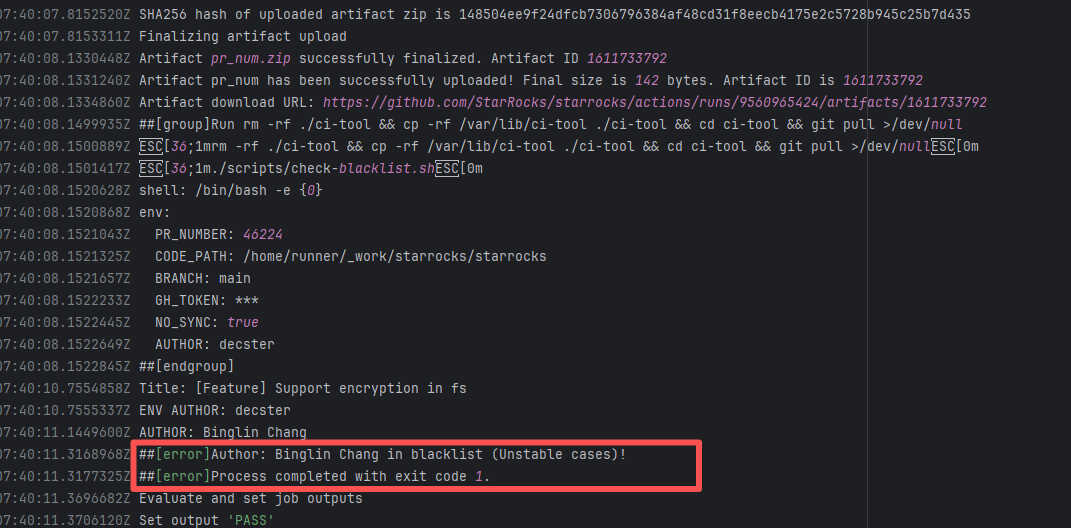

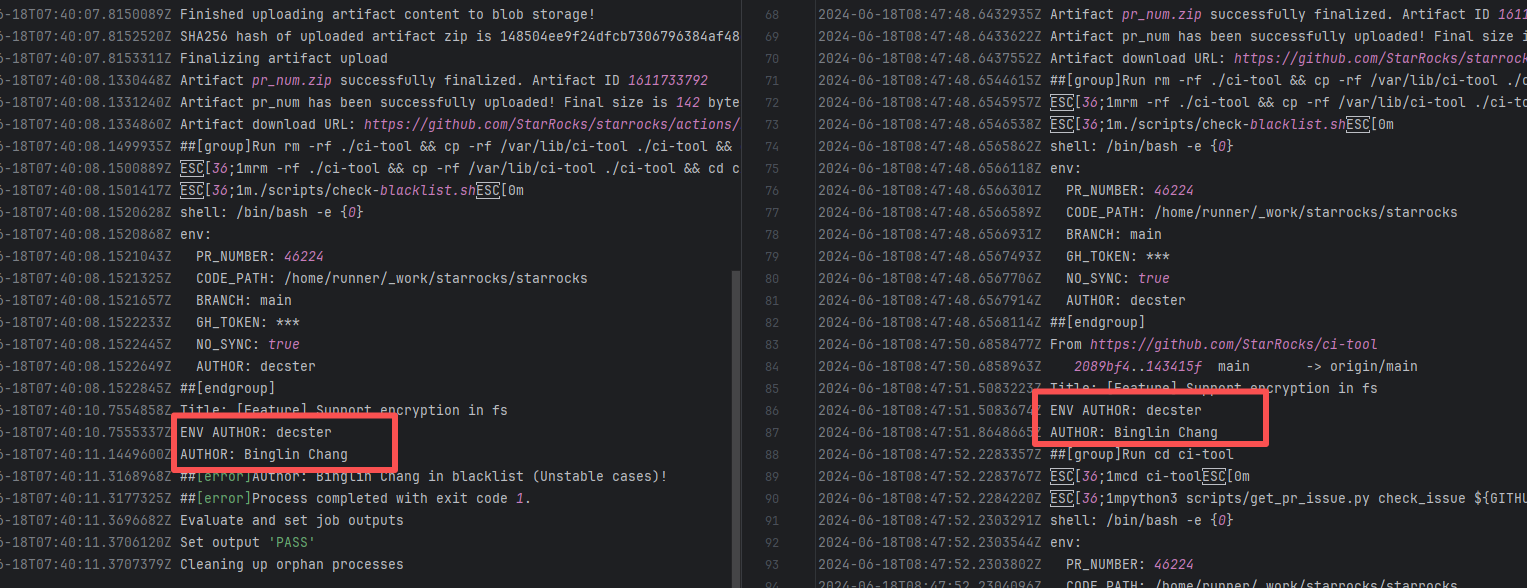

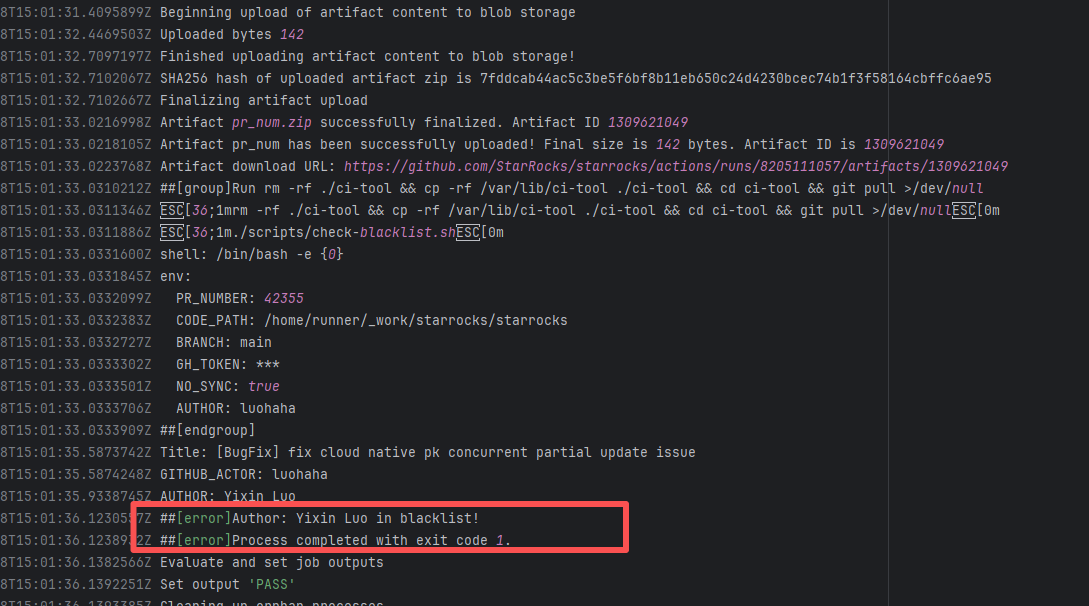

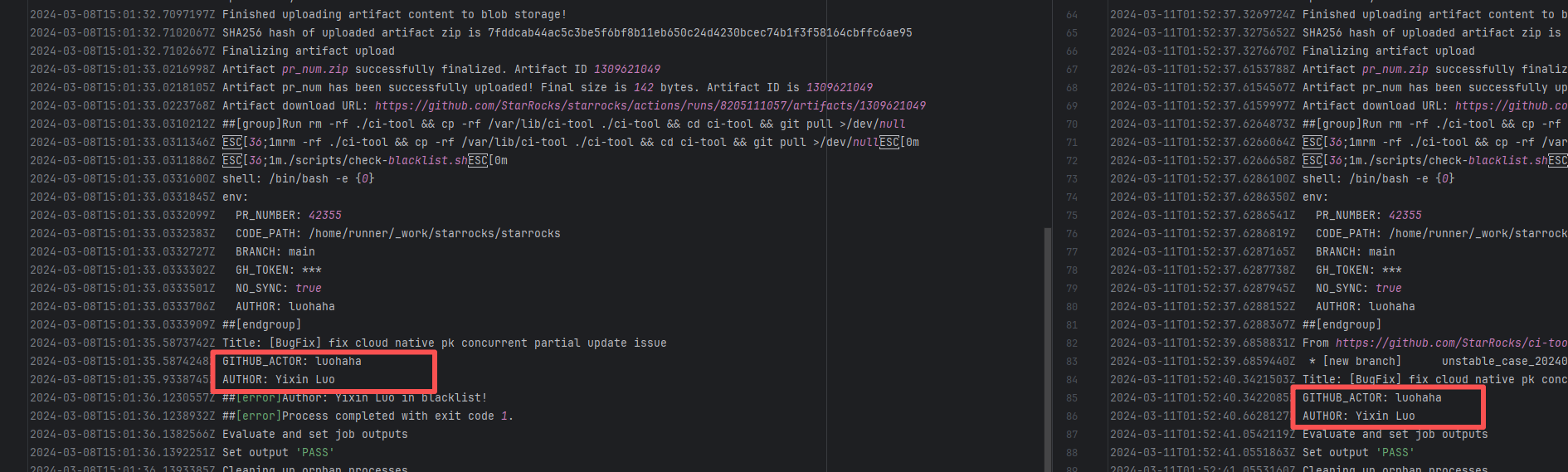

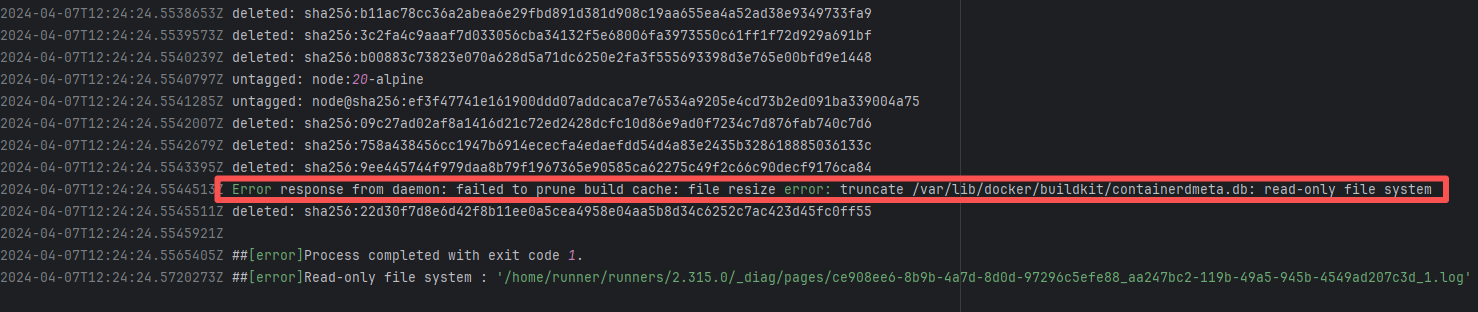

| StarRocks/starrocks |

25480164939 |

Authentication Failure |

Error: The log error message indicates that the user is on a blacklist and the request was rejected. `Author ... in blacklist` indicates that the repository prevented commits from unauthorized users (or bots) from being merged or backported.

Root Cause: The current runner is a self-hosted server, and the blacklist is stored at `/var/lib/ci-tool/scripts/check-blacklist.sh`. The rerun was successful after the developer modified the blacklist. |

Remove the user from the blacklist / add them to the whitelist |

|

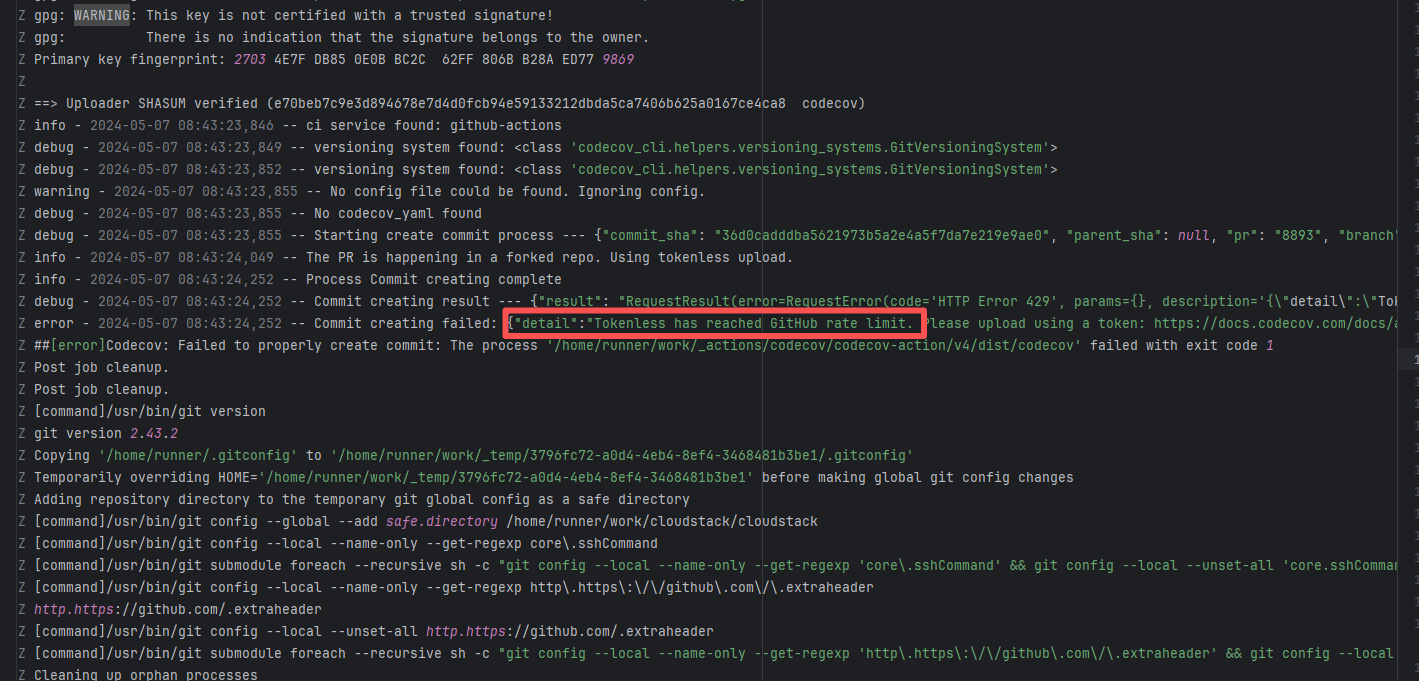

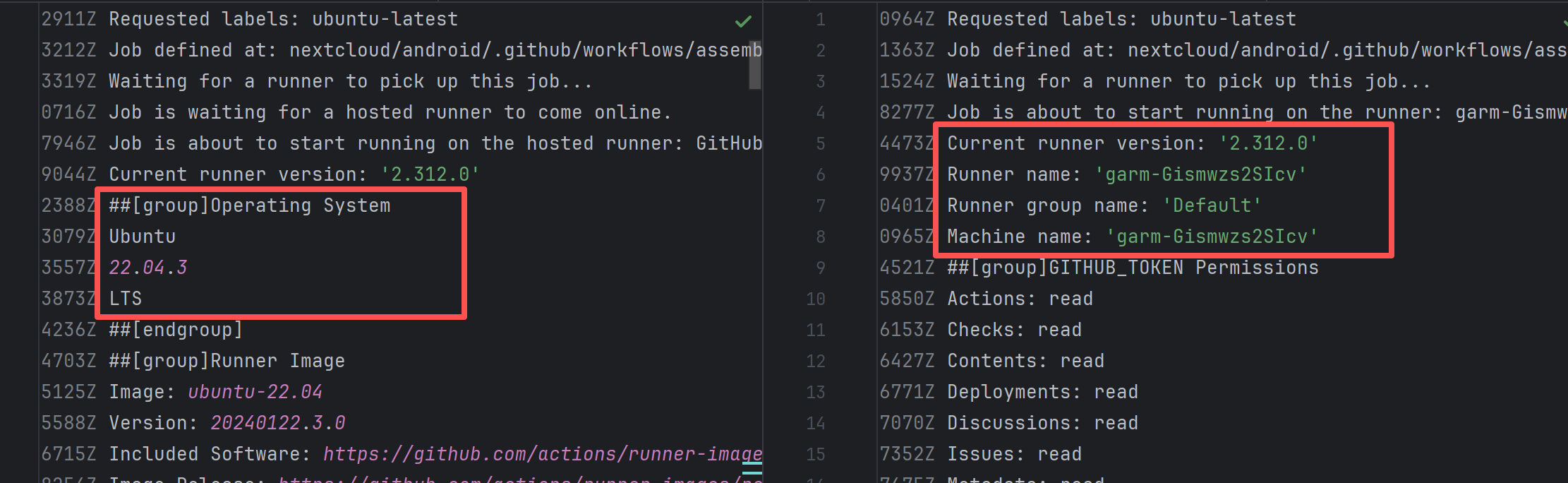

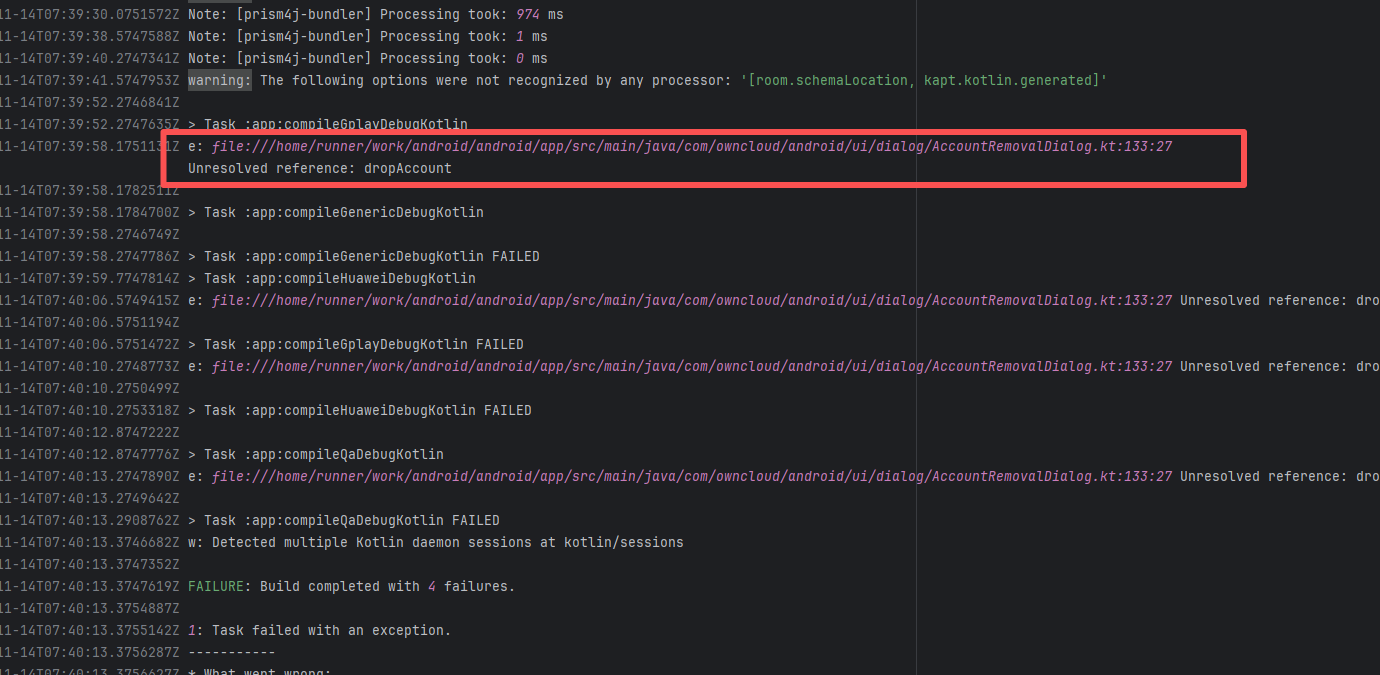

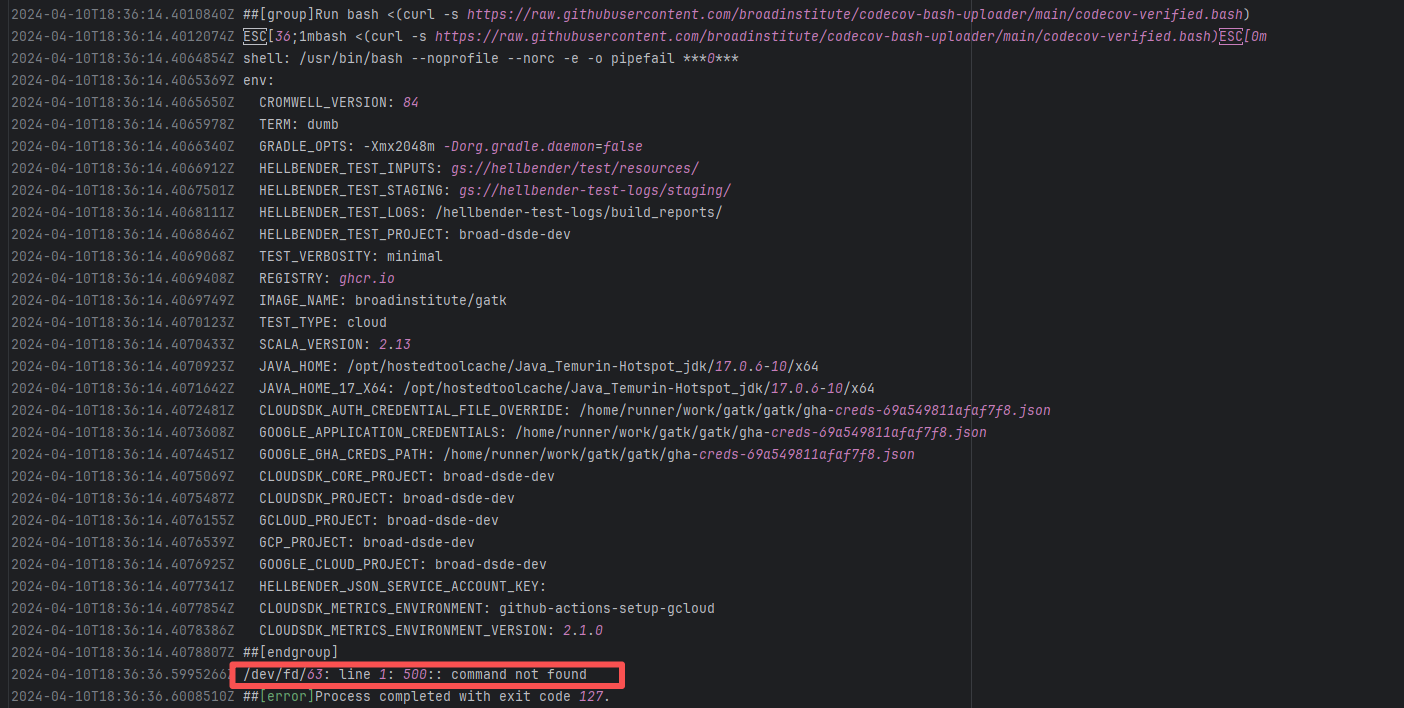

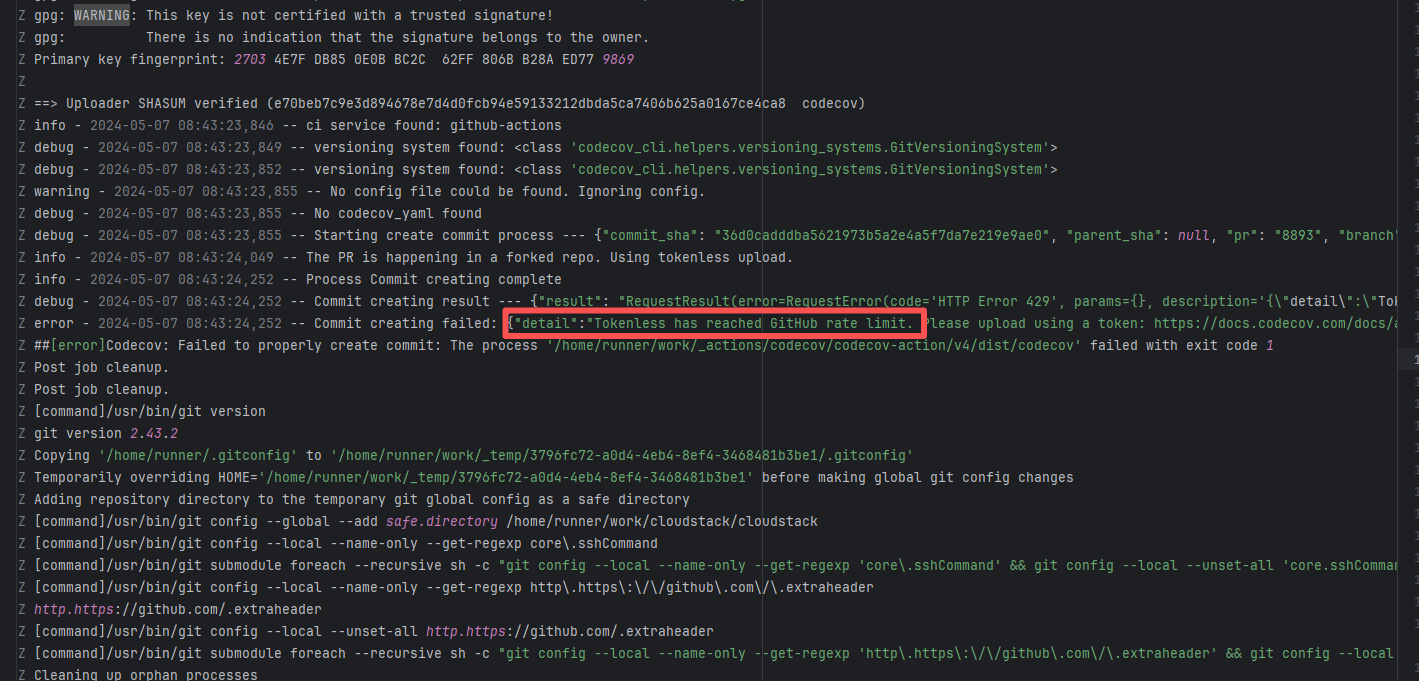

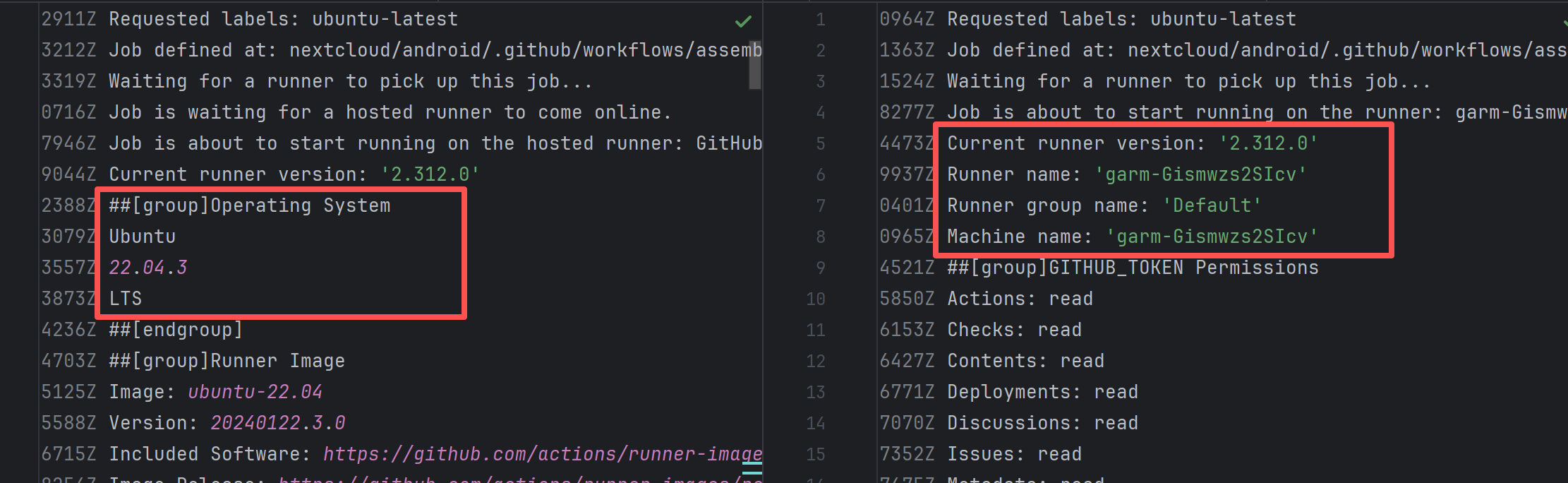

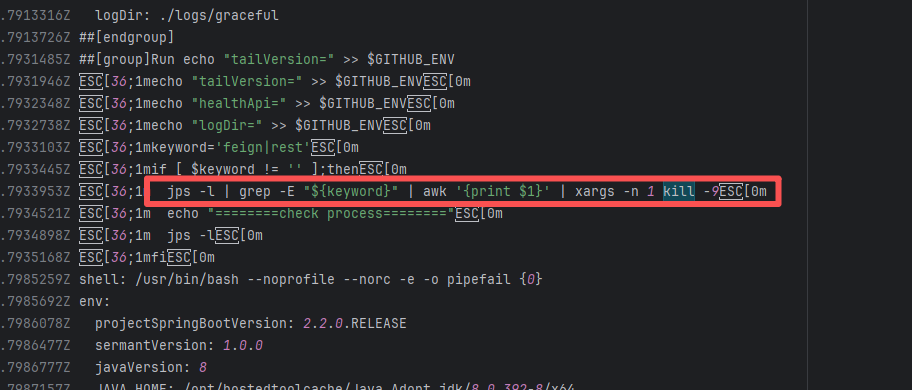

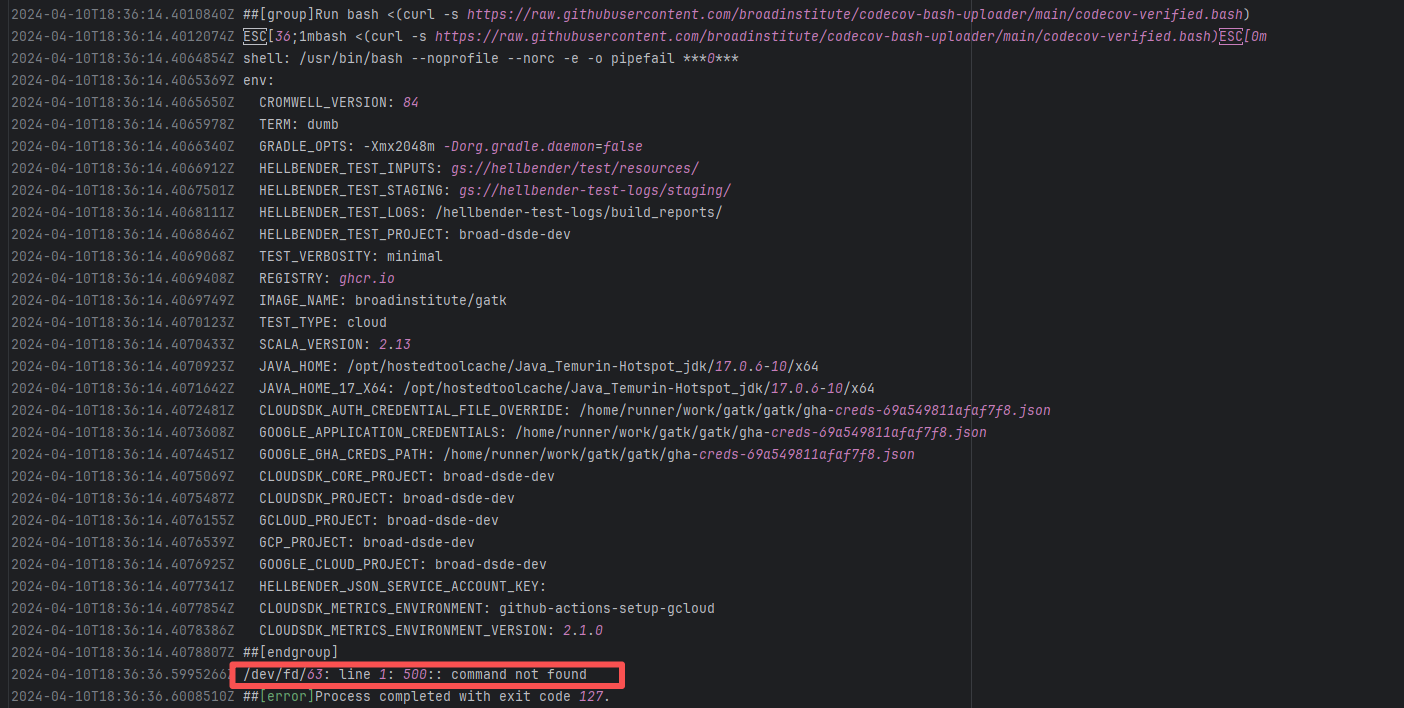

| nextcloud/android |

23749365892 |

Authentication Failure |

Root Cause: The CI script used a tokenless anonymous mode when uploading Codecov code coverage reports. Since anonymous mode is highly susceptible to triggering API rate limits from third-party service providers (HTTP 429 Too Many Requests), the coverage report upload was interrupted. |

Short-term Fix: Retry the job after the anonymous API limit window period passes.

Long-term Defense: Generate an exclusive Token on the Codecov platform and inject it into the GitHub Secrets workflow (CODECOV_TOKEN) to completely avoid the strict rate limits associated with anonymous uploads. |

|

| bytedeco/javacpp-presets |

26281262053 |

Authentication Failure |

Error: Failed to deploy the artifact to the Sonatype OSS snapshot repository using the nexus-staging-maven-plugin. The core reason is a 401 Unauthorized error, meaning the deployment request lacks a valid authentication token.

Root Cause: Rerun succeeded after replacing the token. |

Modify the deployment token in the secrets within the repository settings interface. |

|

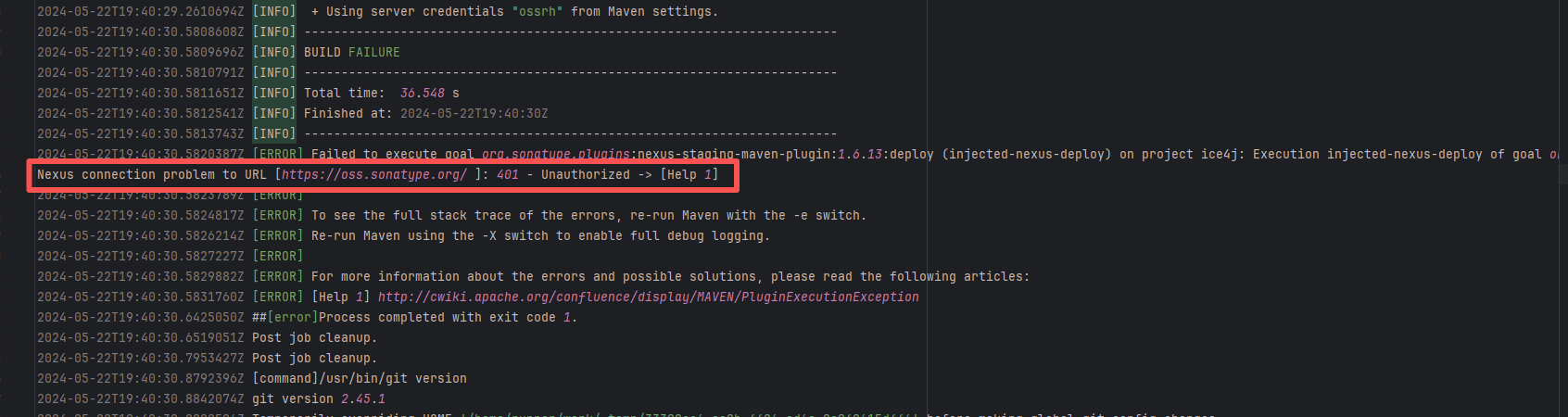

| jitsi/ice4j |

25297741539 |

Authentication Failure |

Error: Failed to deploy the artifact to the Sonatype OSS snapshot repository using the nexus-staging-maven-plugin. The core reason is a 401 Unauthorized error, meaning the deployment request lacks a valid authentication token.

Root Cause: Rerun succeeded after replacing the token. |

Modify the deployment token in the secrets within the repository settings interface. |

|

| StarRocks/starrocks |

24144340058 |

Authentication Failure |

Error: The log error message indicates that the user is on a blacklist and the request was rejected. `Author ... in blacklist` indicates that the repository prevented commits from unauthorized users (or bots) from being merged or backported.

Root Cause: The current runner is a self-hosted server, and the blacklist is stored at `/var/lib/ci-tool/scripts/check-blacklist.sh`. The rerun was successful after the developer modified the blacklist. |

Remove the user from the blacklist / add them to the whitelist |

|

| StarRocks/starrocks |

26354139772 |

Authentication Failure |

Error: The log error message indicates that the user is on a blacklist and the request was rejected. `Author ... in blacklist` indicates that the repository prevented commits from unauthorized users (or bots) from being merged or backported.

Root Cause: The current runner is a self-hosted server, and the blacklist is stored at `/var/lib/ci-tool/scripts/check-blacklist.sh`. The rerun was successful after the developer modified the blacklist. |

Remove the user from the blacklist / add them to the whitelist |

|

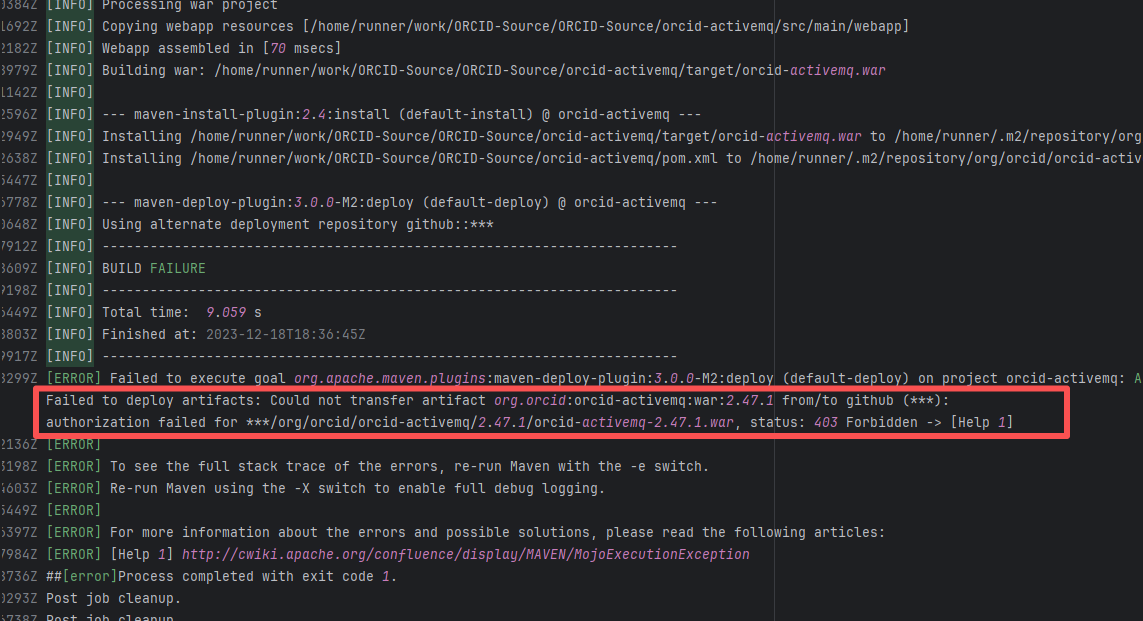

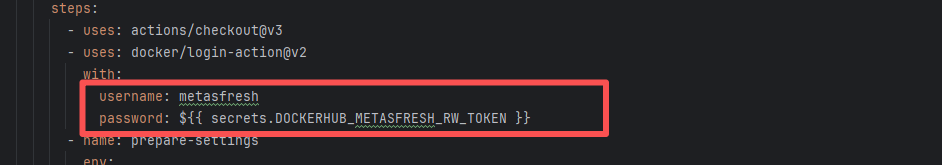

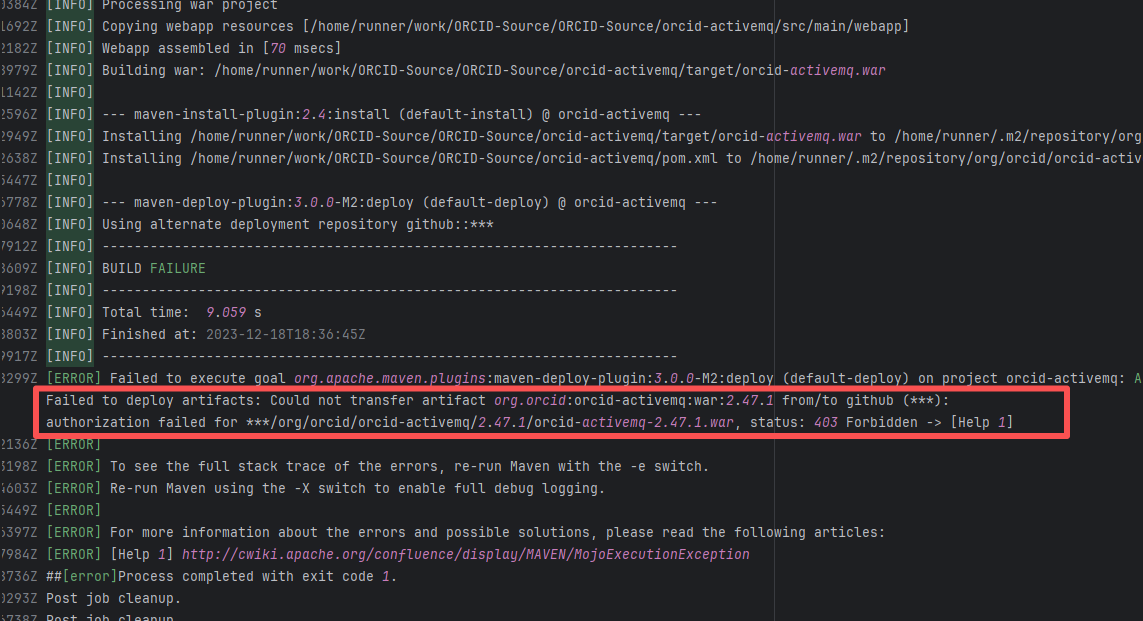

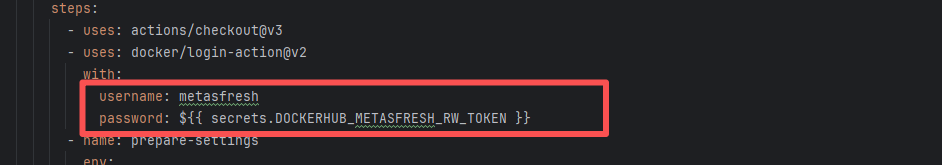

| line/armeria |

21043962217 |

Authentication Failure |

Error: The error log shows that a 403 Forbidden error occurred when deploying the artifact during the Maven build process, indicating that the upload of orcid-activemq-2.47.1.war to the target GitHub repository failed due to permission issues.

Root Cause: The 403 Forbidden error indicates that permission was denied, possibly due to incorrect configuration of the GitHub token or username/password, or insufficient permissions of the account to execute the operation. Rerun was successful after modifying the github token. |

Modify the token in the secrets within the repository settings interface. |

|

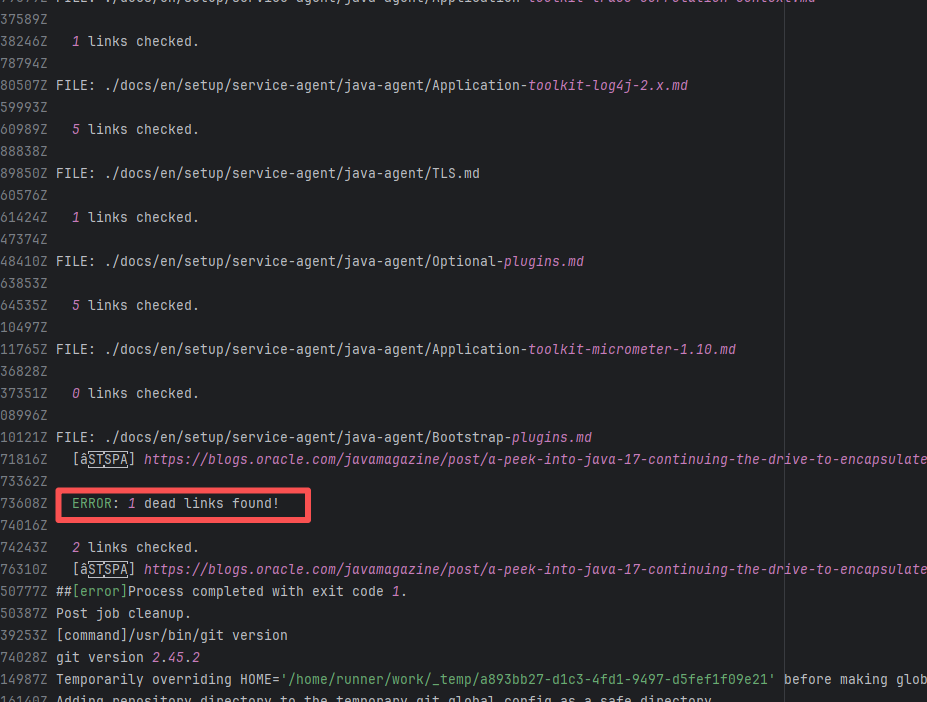

| operator-framework/java-operator-sdk |

24197580009 |

Tool Intermittent Failure |

Error: According to the logs, the current network (such as a company intranet or proxy server) may require authentication to access external resources, causing the signature file from the Microsoft repository to not download correctly or to be tampered with, which leads to verification failure.

Root Cause: Succeeded after multiple reruns without modifying network authentication. |

A web search reveals this is a bug with the Microsoft mirror. This error has been reported multiple times during various periods (e.g., October 25, 2021; August 24, 2024 - peak), pointing to a short-term failure or synchronization issue with the Microsoft repository / CDN, but there is no specific mitigation strategy. |

|

| StarRocks/starrocks |

22441251870 |

Authentication Failure |

Error: The log error message indicates that the user is on a blacklist and the request was rejected. `Author ... in blacklist` indicates that the repository prevented commits from unauthorized users (or bots) from being merged or backported.

Root Cause: The current runner is a self-hosted server, and the blacklist is stored at `/var/lib/ci-tool/scripts/check-blacklist.sh`. The rerun was successful after the developer modified the blacklist. |

Remove the user from the blacklist / add them to the whitelist |

|

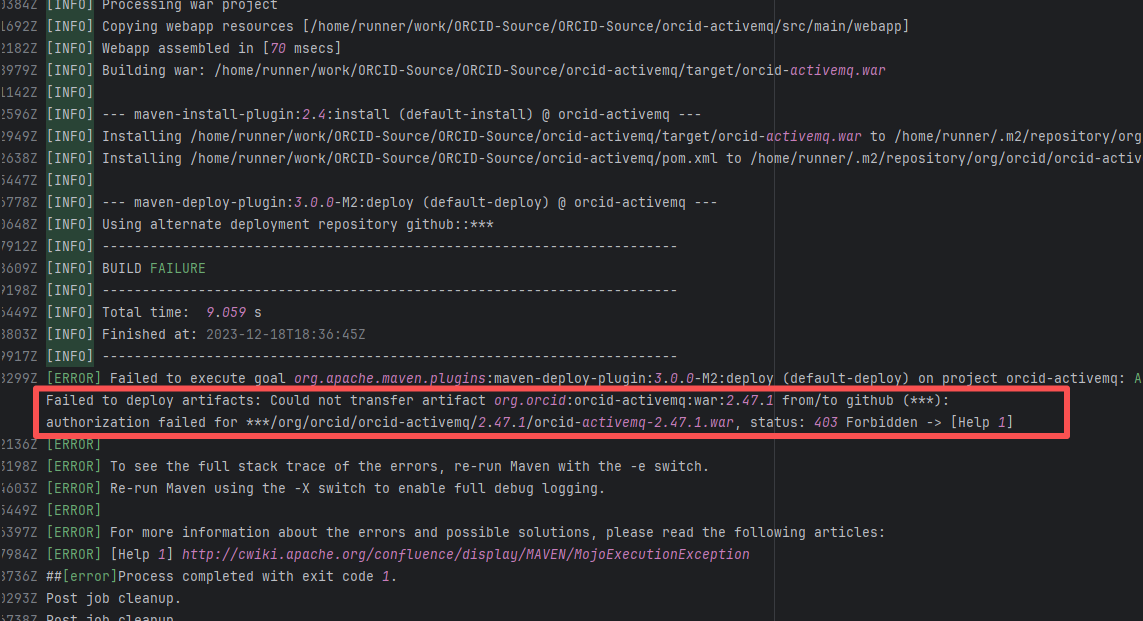

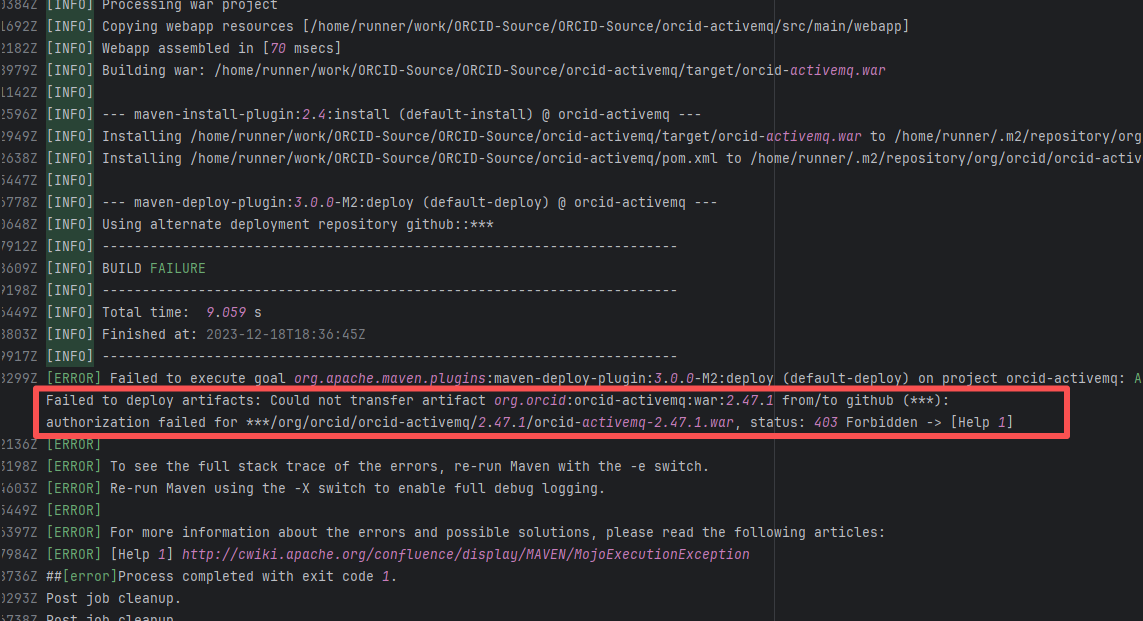

| orcid/orcid-source |

19756626642 |

Authentication Failure |

Error: The error log shows that a 403 Forbidden error occurred when deploying the artifact during the Maven build process, indicating that the upload of orcid-activemq-2.47.1.war to the target GitHub repository failed due to permission issues.

Root Cause: The 403 Forbidden error indicates that permission was denied, possibly due to incorrect configuration of the GitHub token or username/password, or insufficient permissions of the account to execute the operation. Rerun was successful after modifying the github token. |

Modify the token in the secrets within the repository settings interface. |

|

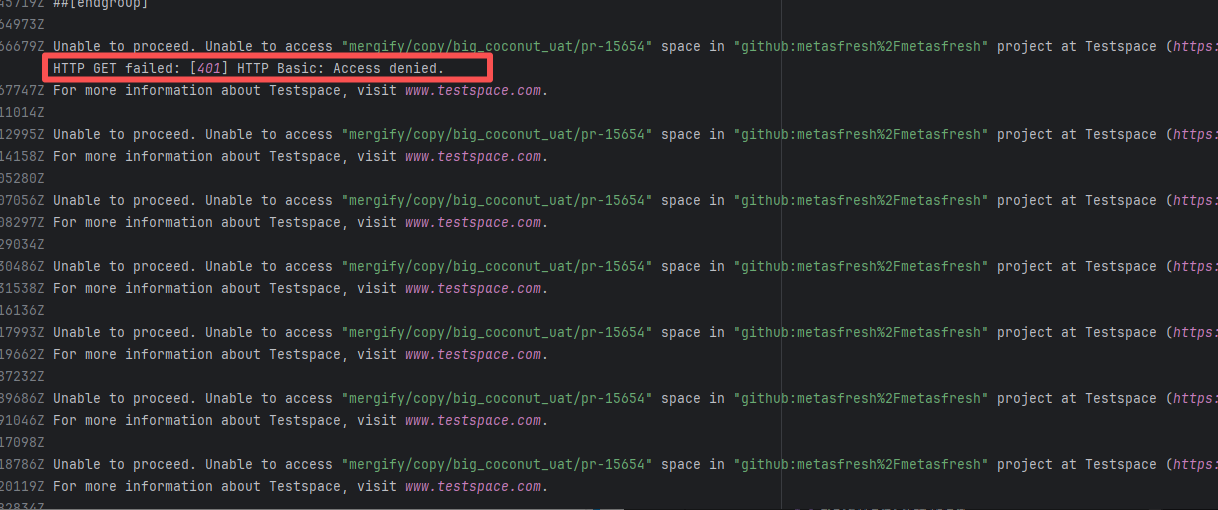

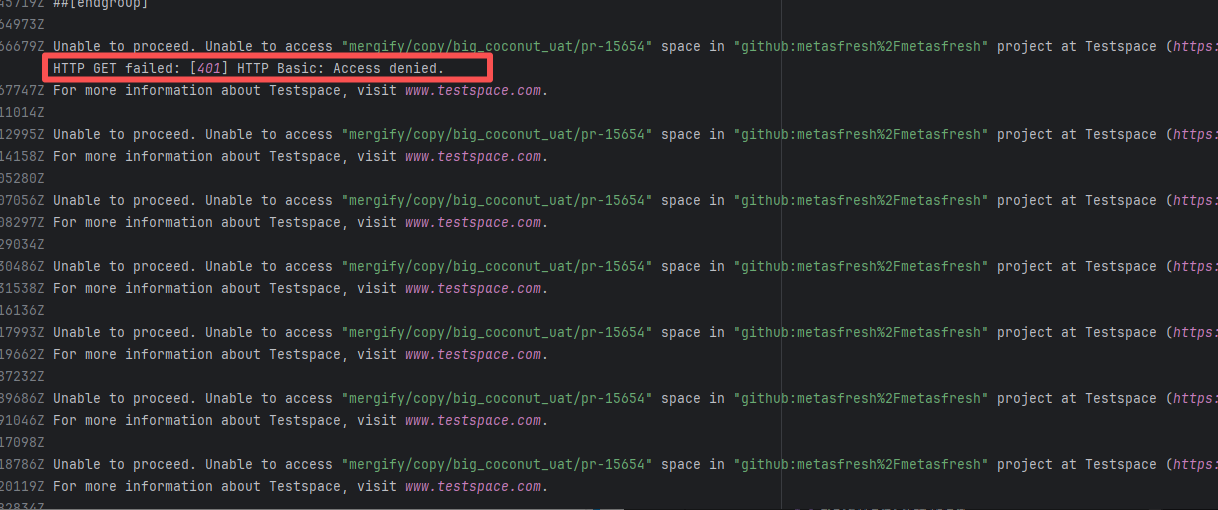

| metasfresh/metasfresh |

17558157801 |

Authentication Failure |

Error: The log error shows that when trying to access the mergify/copy/big_coconut_uat/pr-15654 project on https://metasfresh.testspace.com, the HTTP GET request was rejected (401 Unauthorized) because the credentials provided were invalid or missing.

Root Cause: The 403 Forbidden error indicates that permission was denied, possibly due to incorrect configuration of the token or username/password, or insufficient permissions of the account to execute the operation. Rerun was successful after modifying the token. |

Modify the token in the secrets within the repository settings interface. |

|

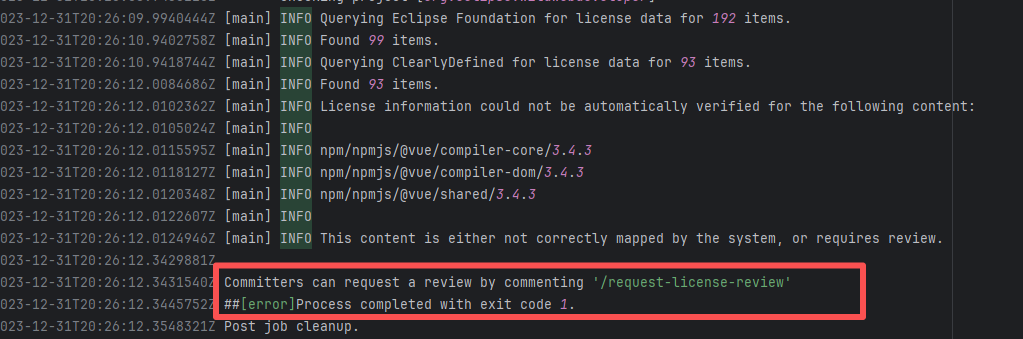

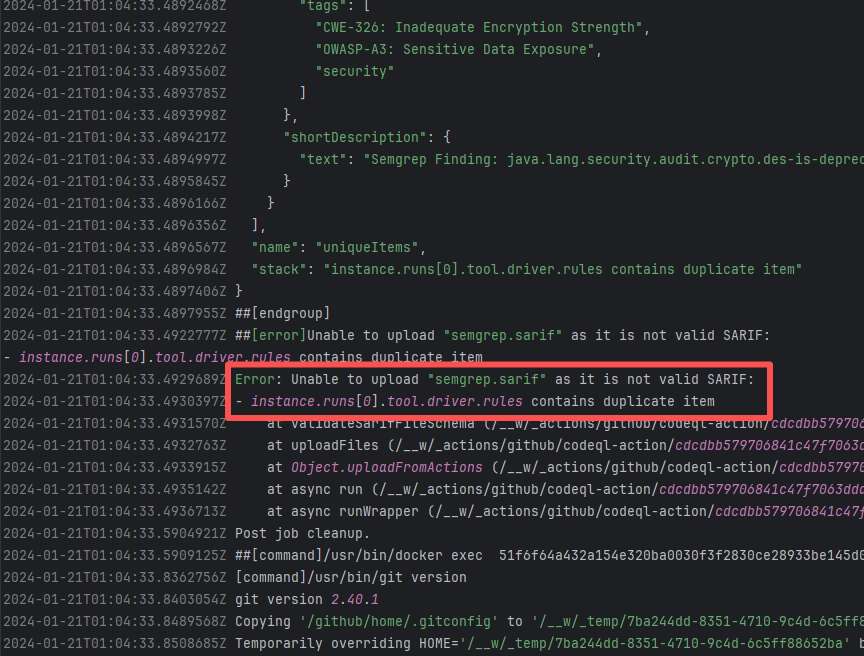

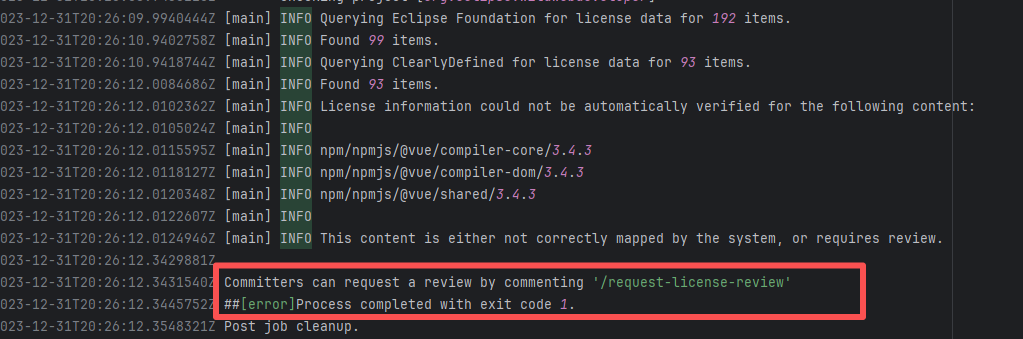

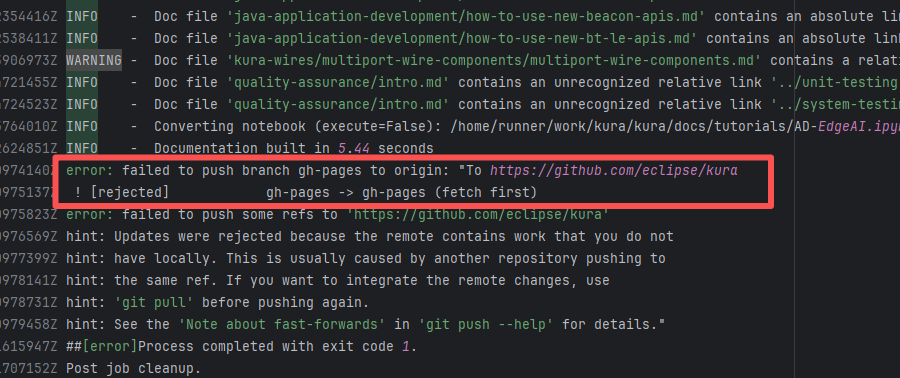

| eclipse-wildwebdeveloper/wildwebdeveloper |

20061016237 |

Workflow Policy Violation |

Error: The current commit involves new dependencies, code files, or license changes, which requires triggering a license review process through specific commands, but this step has not been completed.

Root Cause: The project Committer types the command `/request-license-review` in the PR or commit comments to trigger the automated review process. Once the review passes, it is fine. |

Type the review command according to the main repository requirements for review, and rerun after completion. |

|

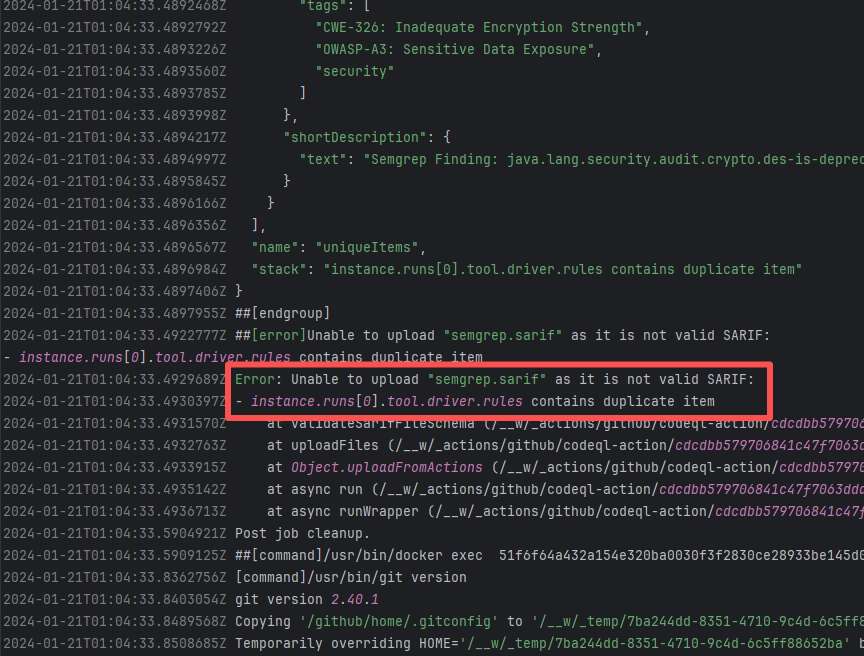

| API Service Unavailable |

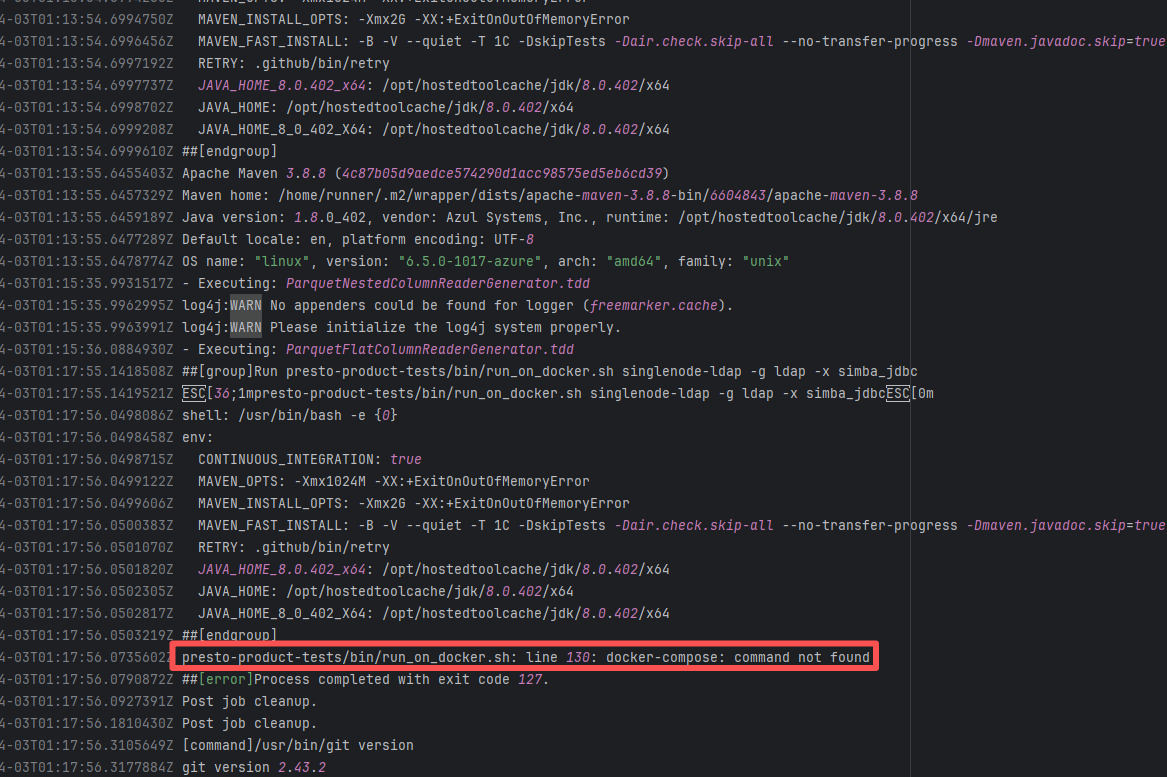

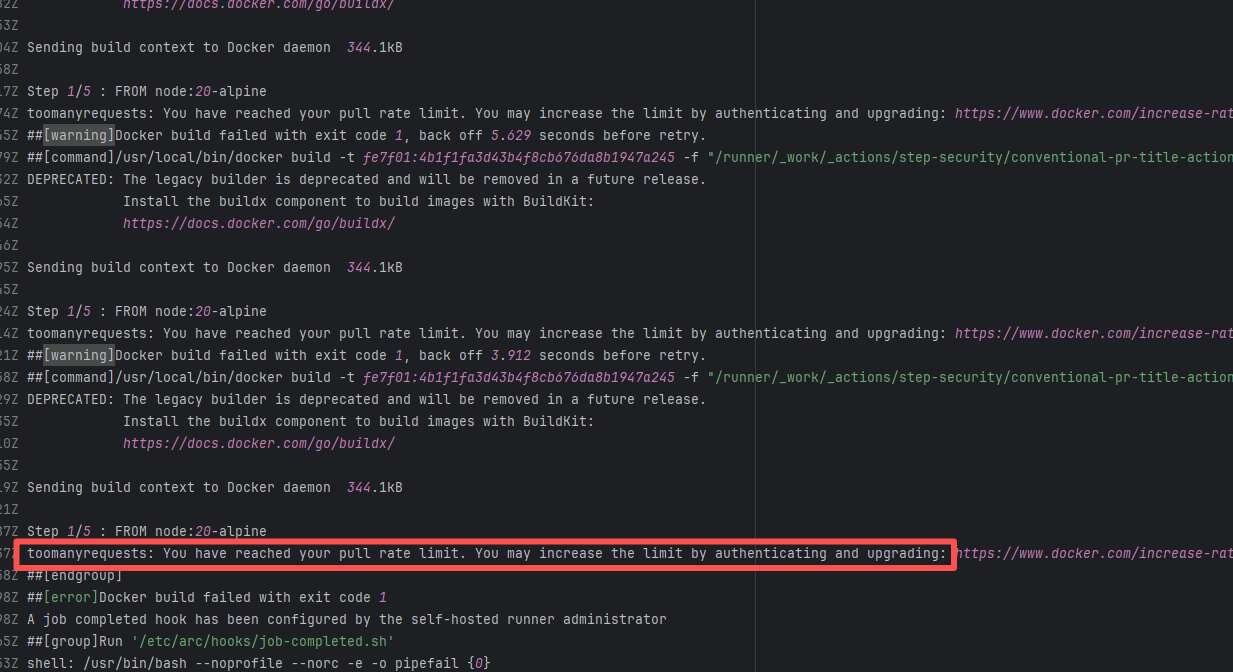

prestodb/presto |

26090585172 |

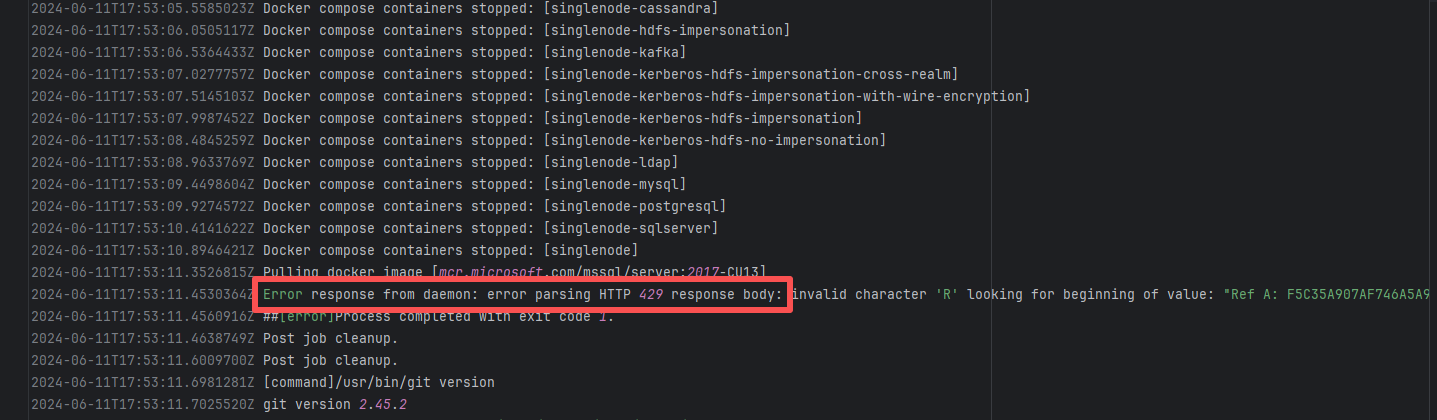

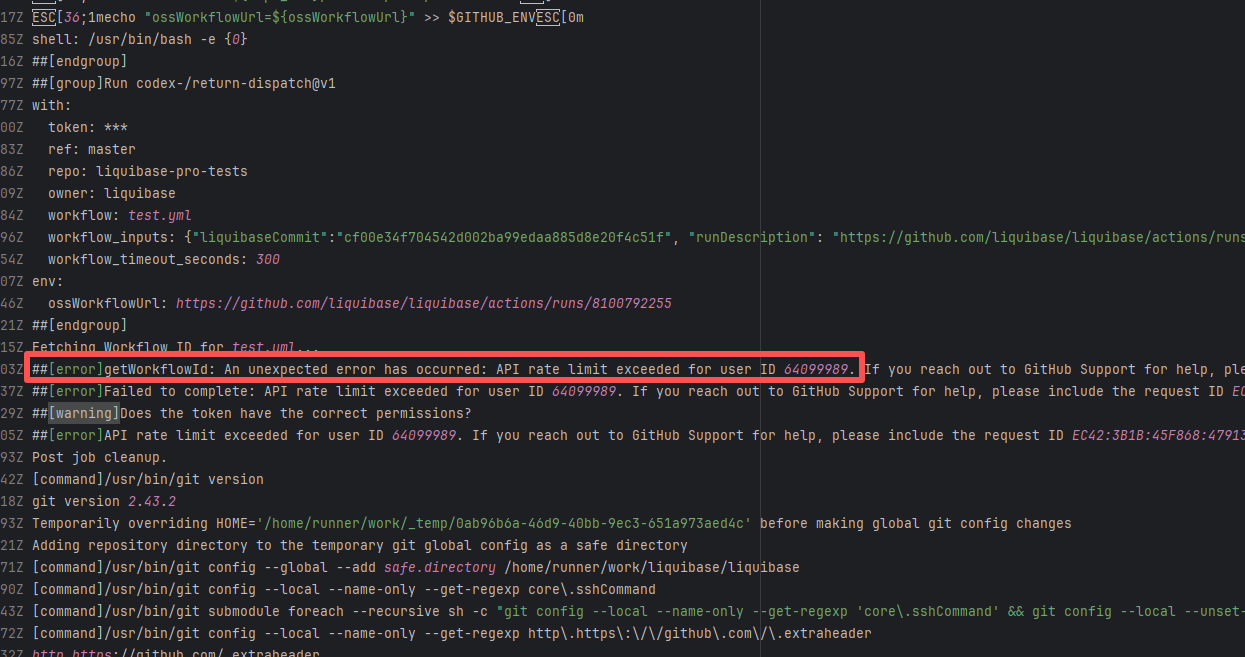

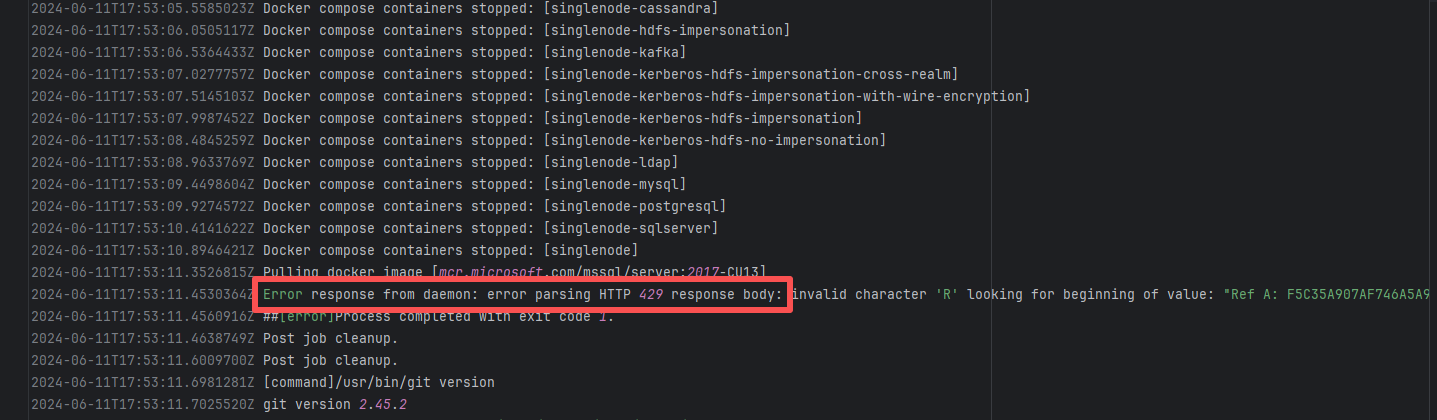

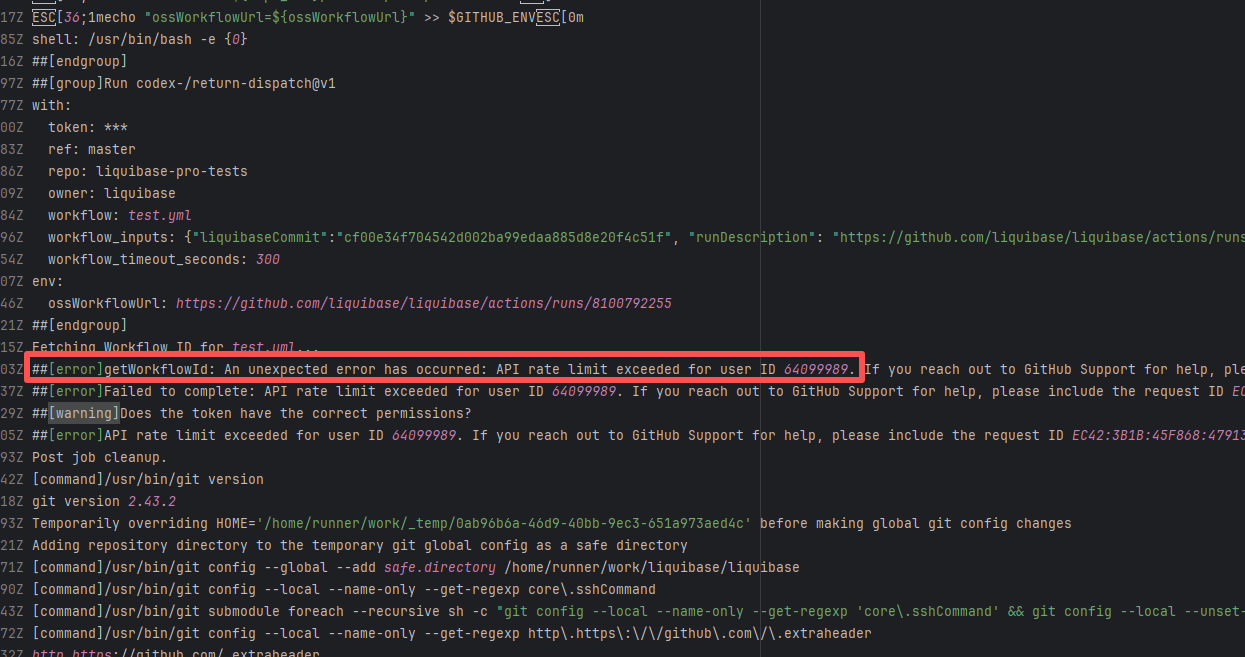

API Rate Limit |

Error: Docker sent an HTTP 429 (Too Many Requests) response to a server, but the response format was unexpected, causing parsing to fail. HTTP 429 status code means "too many requests", the server temporarily refuses to process new requests because the number of requests sent by the client exceeds the limit

Root Cause: Wait for API quota to recover and re-execute successfully |

Wait for API quota to recover and re-execute successfully |

|

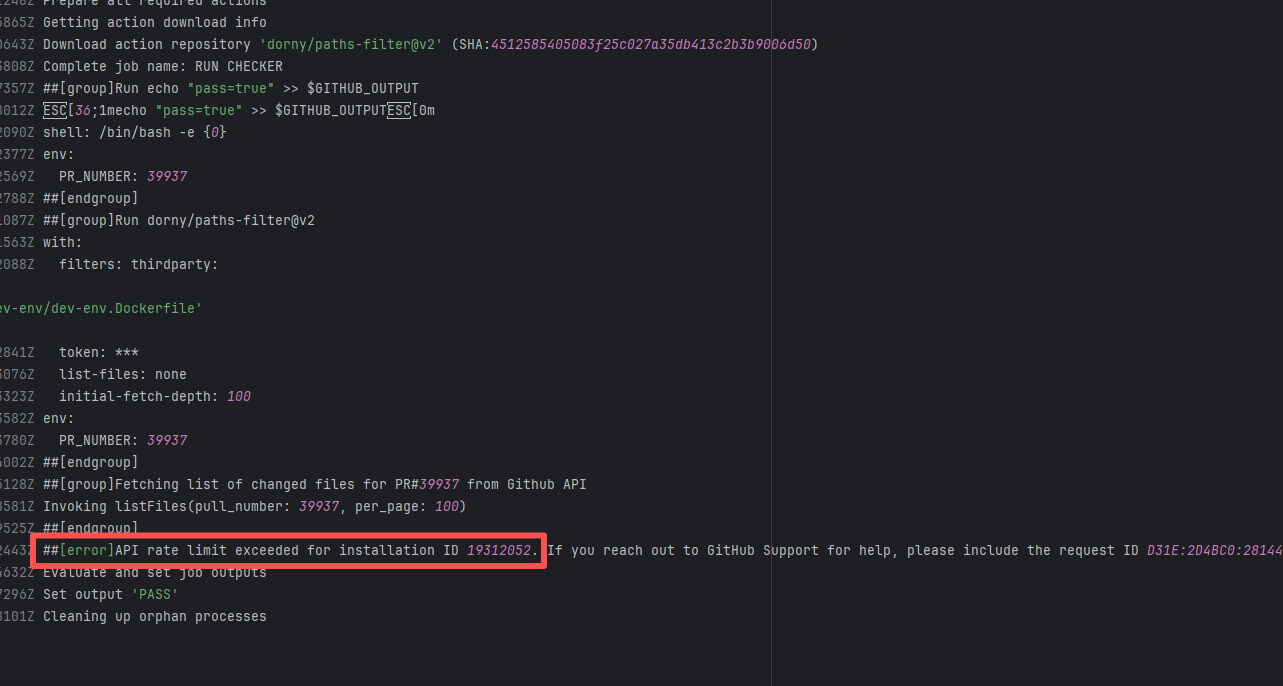

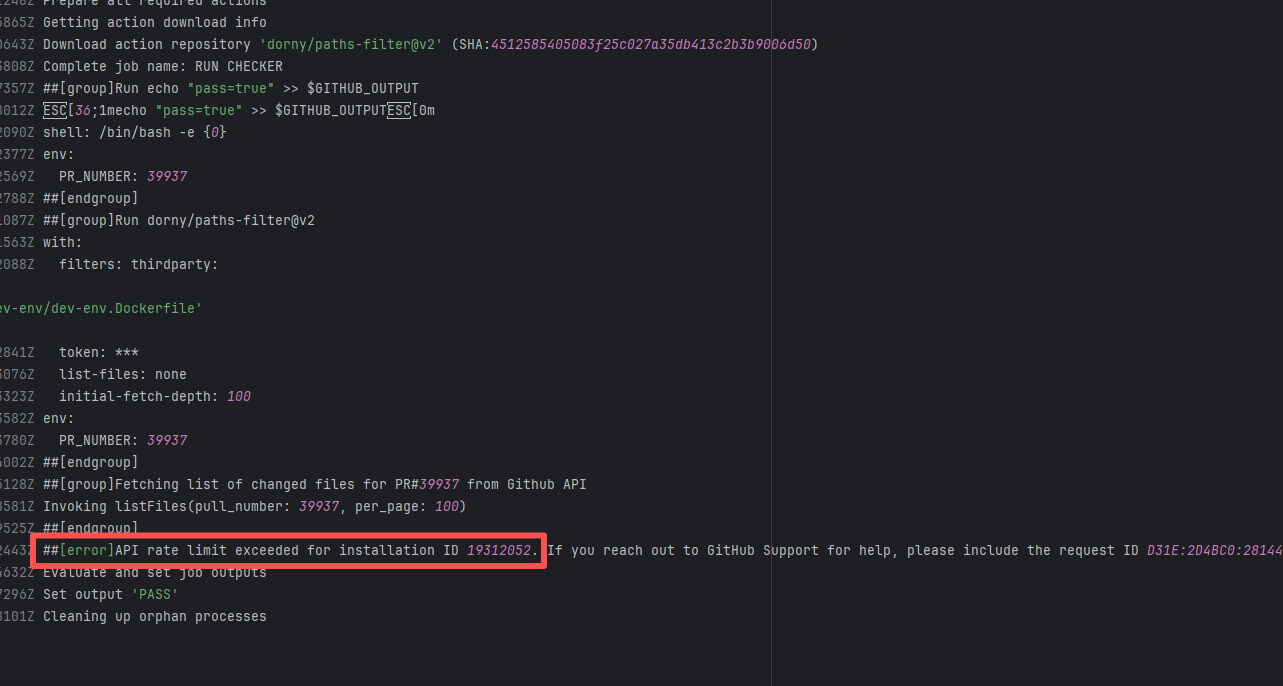

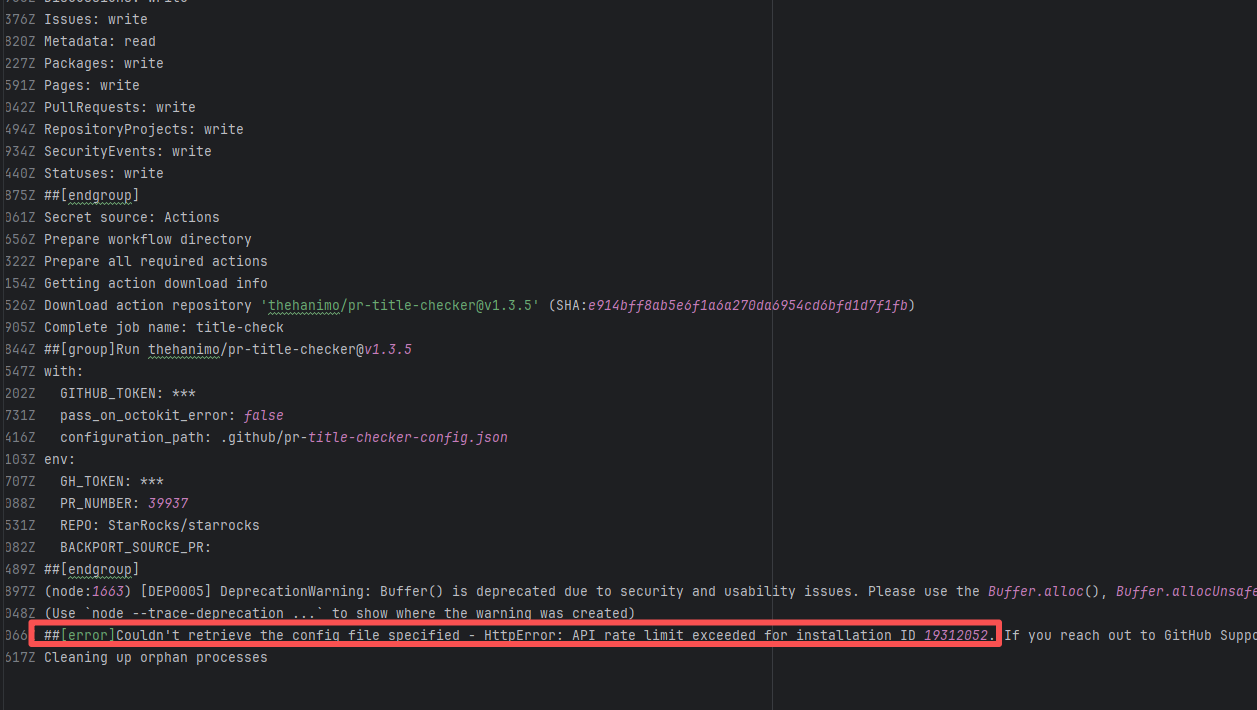

| StarRocks/starrocks |

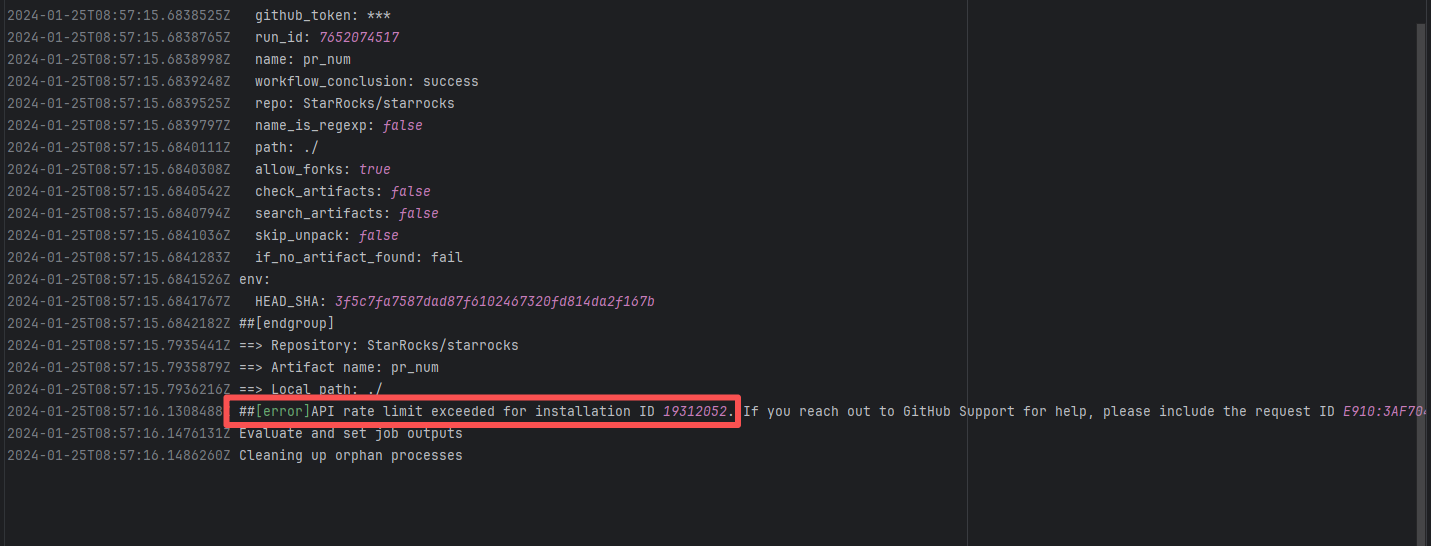

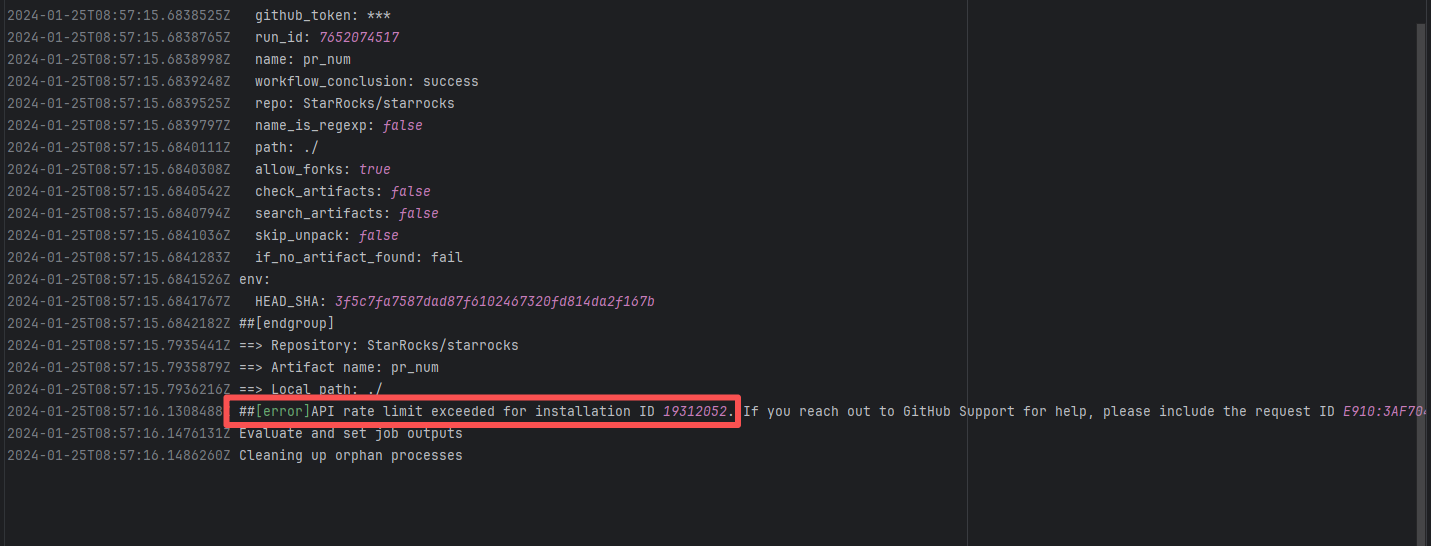

20851141718 |

API Rate Limit |

Error: Triggered API rate limit exceeded error.

Root Cause: The installation ID of the current GitHub App or Actions exceeded GitHub's official rate limit quota within a short period, causing subsequent requests to be intercepted and rejected by the server. |

Short-term Fix: Wait 5-10 minutes for API quota to automatically recover, then Rerun.

Long-term Defense:

1. Optimize CI processes to reduce unnecessary GitHub API calls;

2. Configure exclusive Personal Access Tokens (PAT) with higher quotas for frequently-called Actions;

3. Introduce local caching strategies (e.g., cache dependencies) to reduce cross-network fetch frequency. |

|

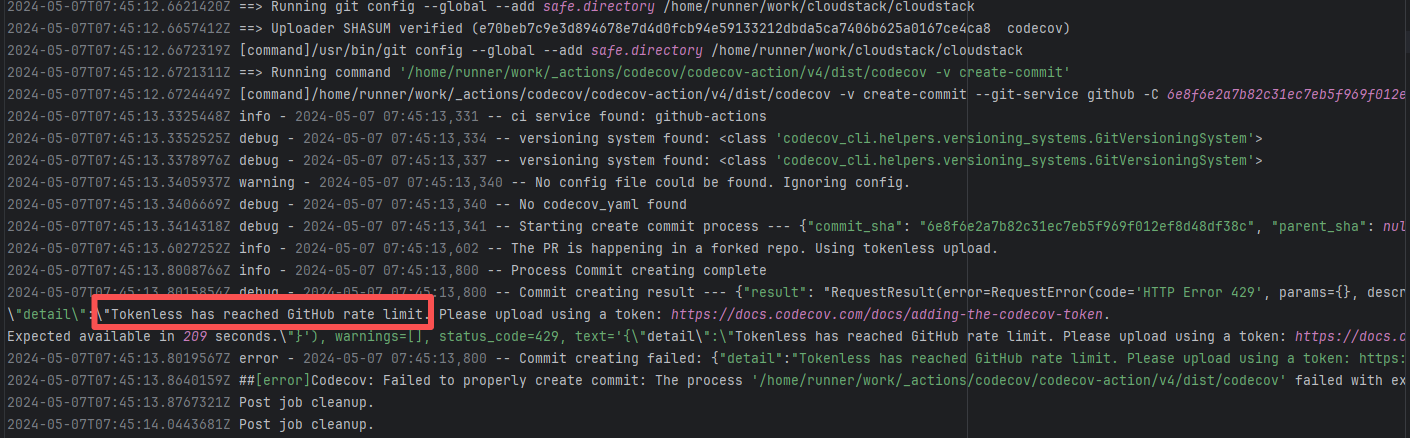

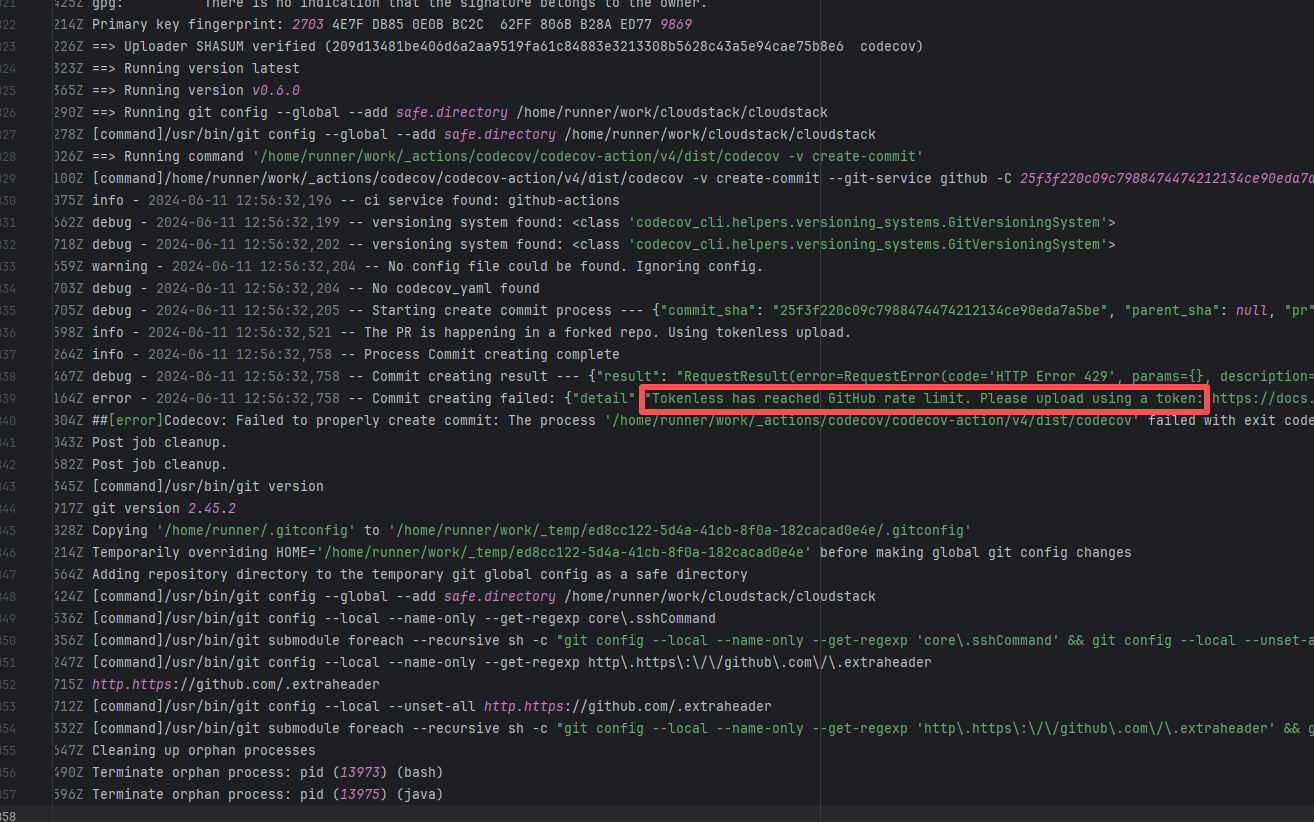

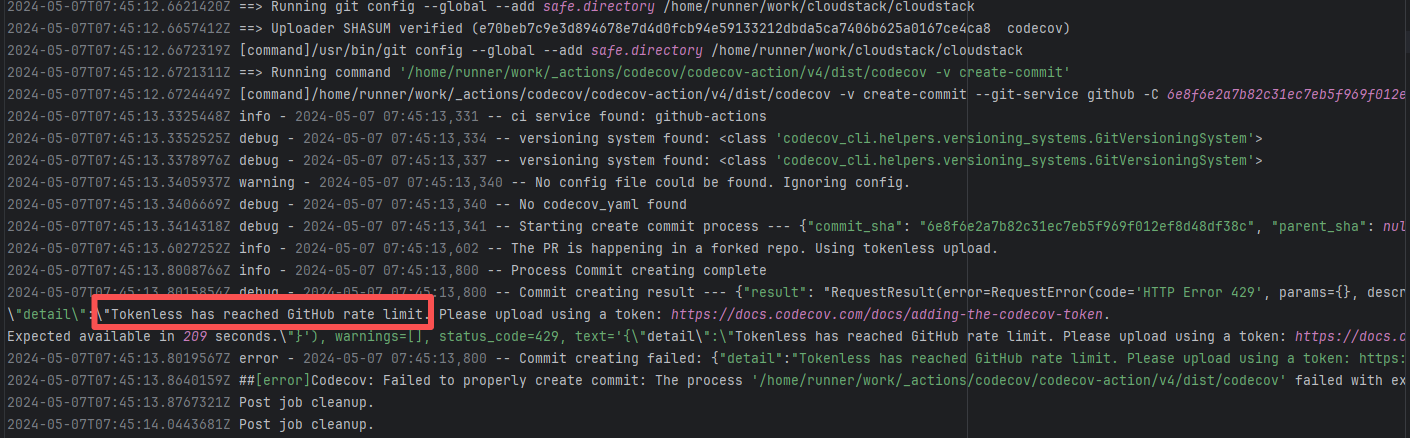

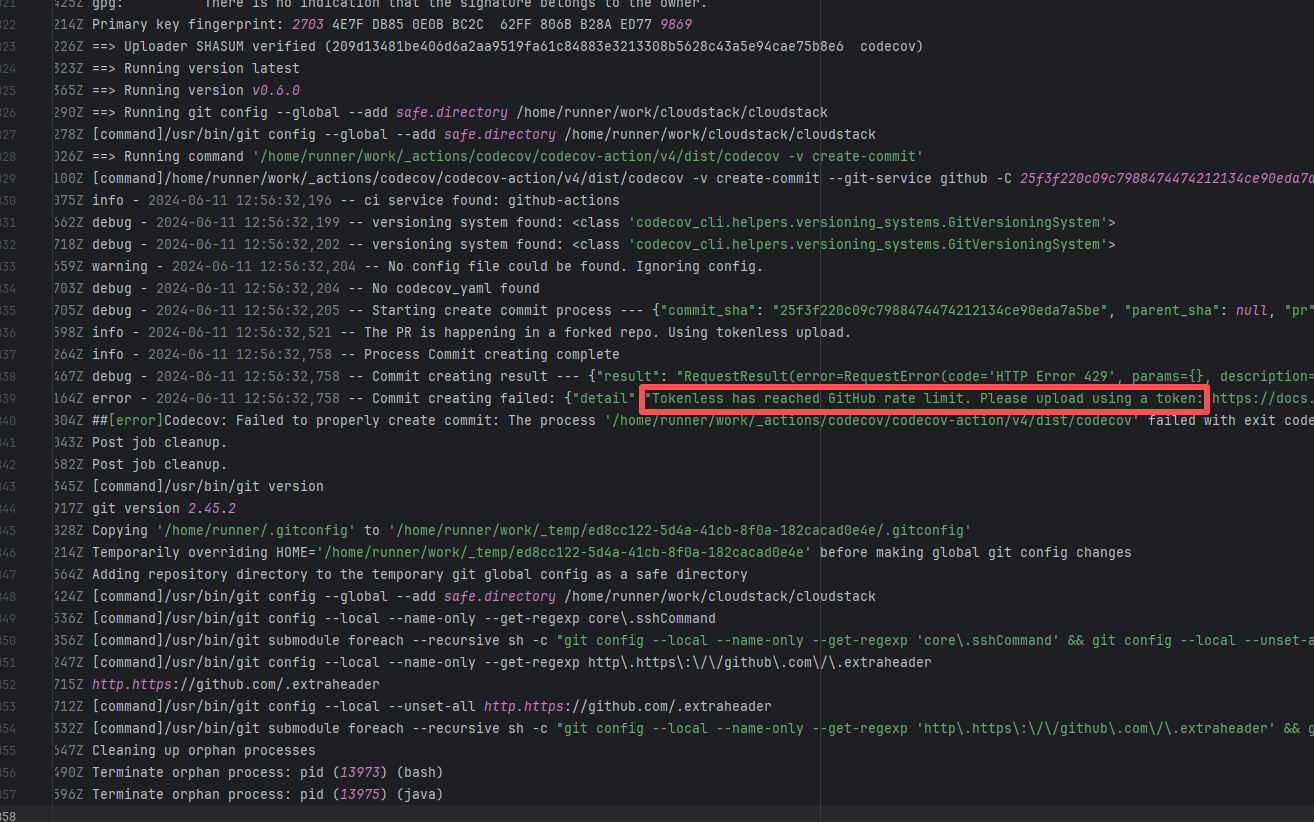

| apache/cloudstack |

24666080255 |

API Rate Limit |

Root Cause: The CI script used a tokenless anonymous mode when uploading Codecov code coverage reports. Since anonymous mode is highly susceptible to triggering API rate limits from third-party service providers (HTTP 429 Too Many Requests), the coverage report upload was interrupted. |

Short-term Fix: Retry the job after the anonymous API limit window period passes.

Long-term Defense: Generate an exclusive Token on the Codecov platform and inject it into the GitHub Secrets workflow (CODECOV_TOKEN) to completely avoid the strict rate limits associated with anonymous uploads. |

|

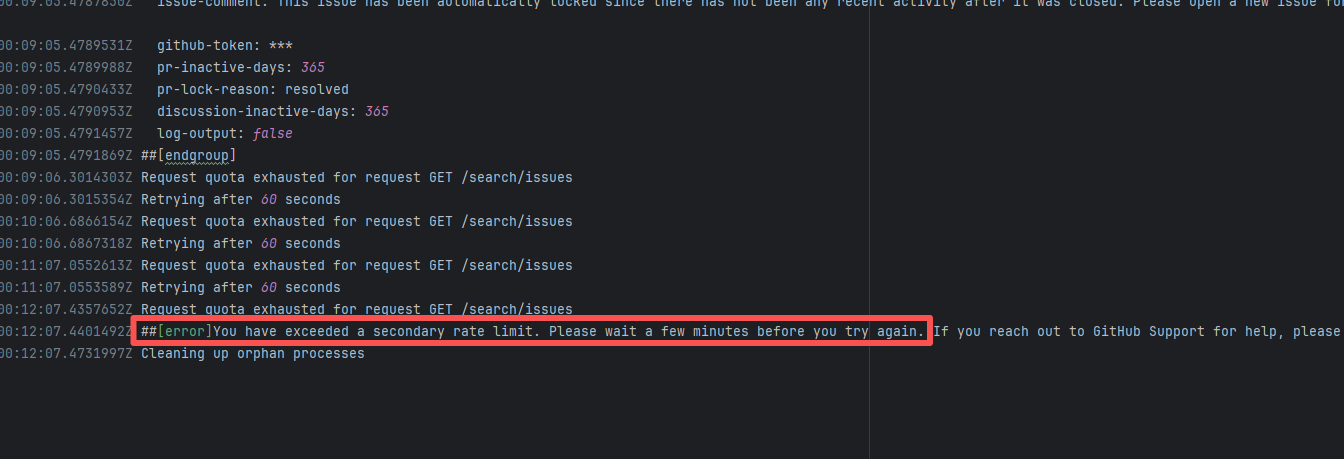

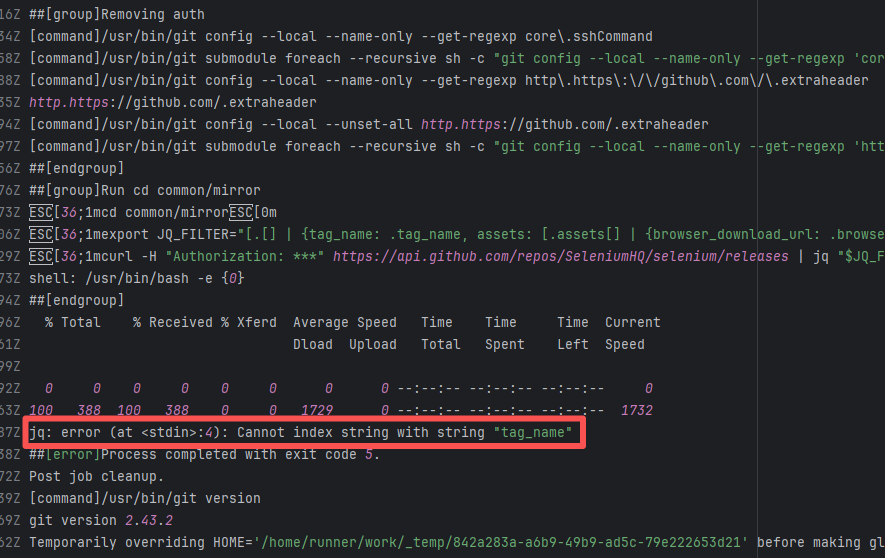

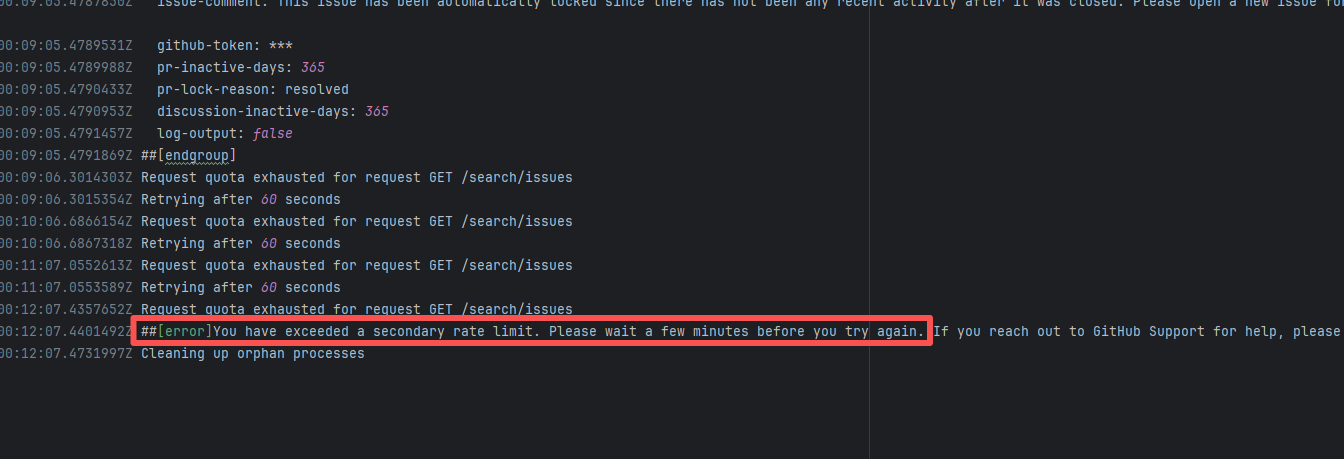

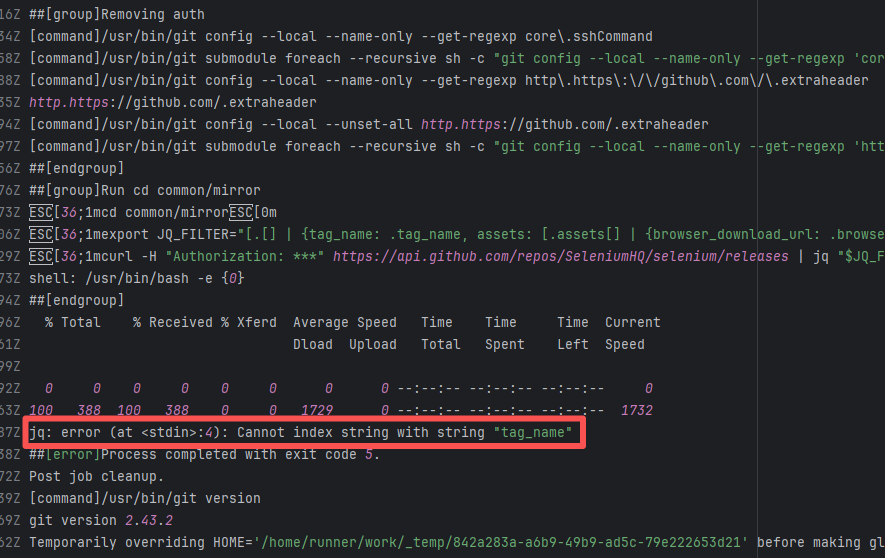

| seleniumhq/selenium |

22368973870 |

API Rate Limit |

Error: `You have exceeded a secondary rate limit` indicates that access to GitHub has reached its limit. GitHub API secondary rate limit triggered (secondary limits are short-term high-frequency request limits set by GitHub to prevent abuse), returning error 429 Too Many Requests

Root Cause: Wait a few minutes and re-execute successfully |

- Pause requests and try again in 5-10 minutes, usually recovers automatically

- If urgent handling is needed, record the `request ID` in the error (e.g., `DACE:3DA8CE:1C705:31493:66043904`) and contact GitHub Support to explain the situation |

|

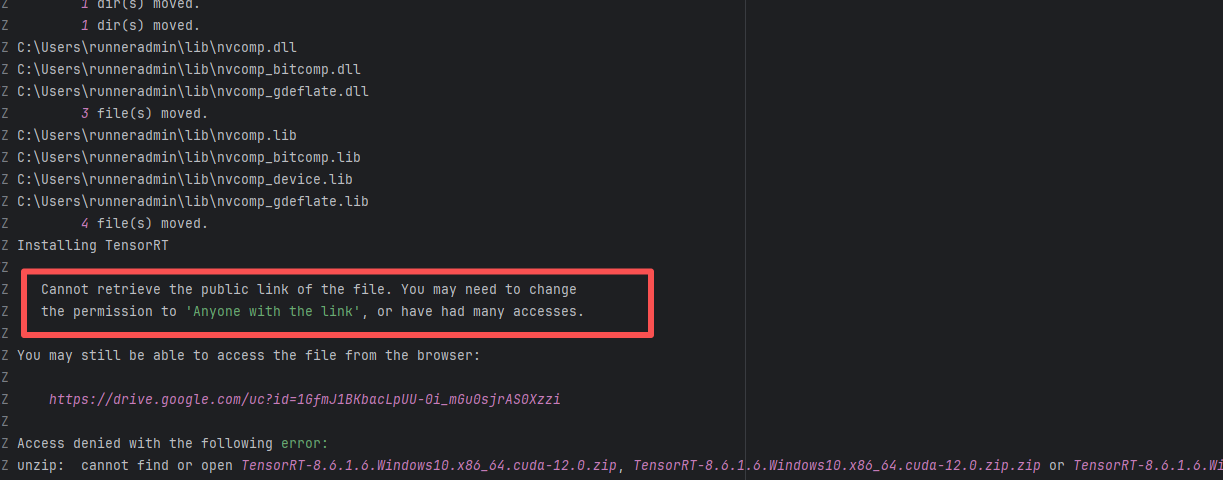

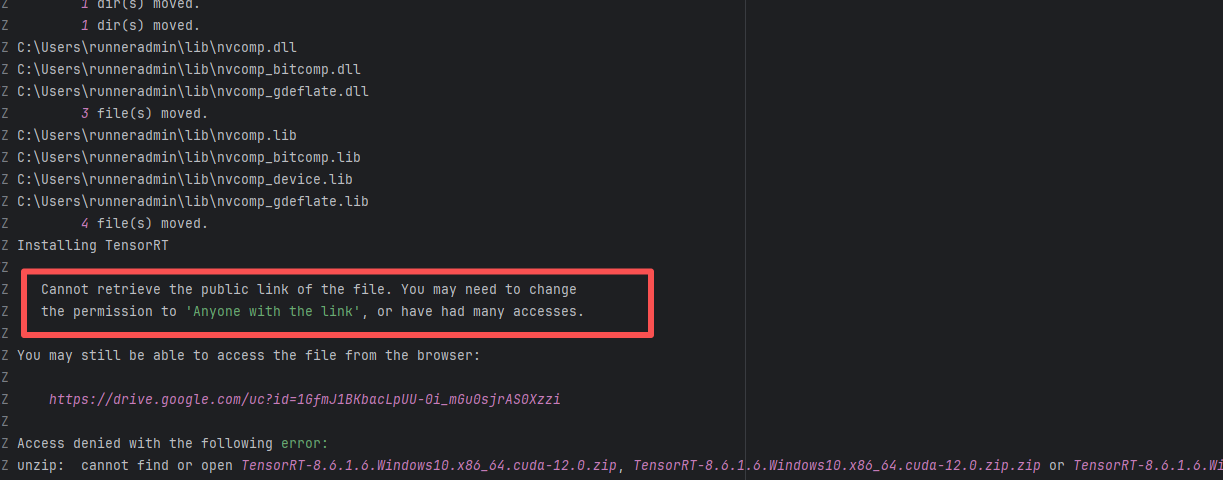

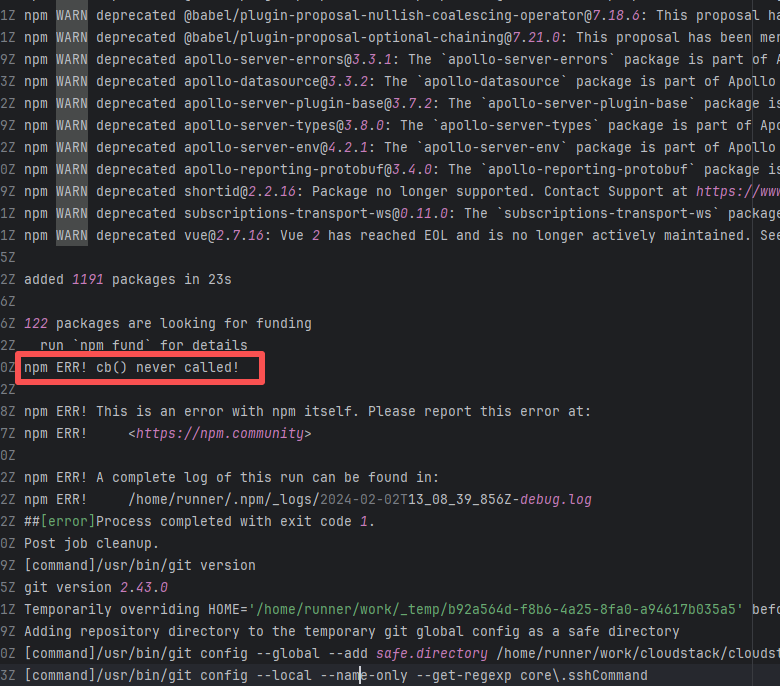

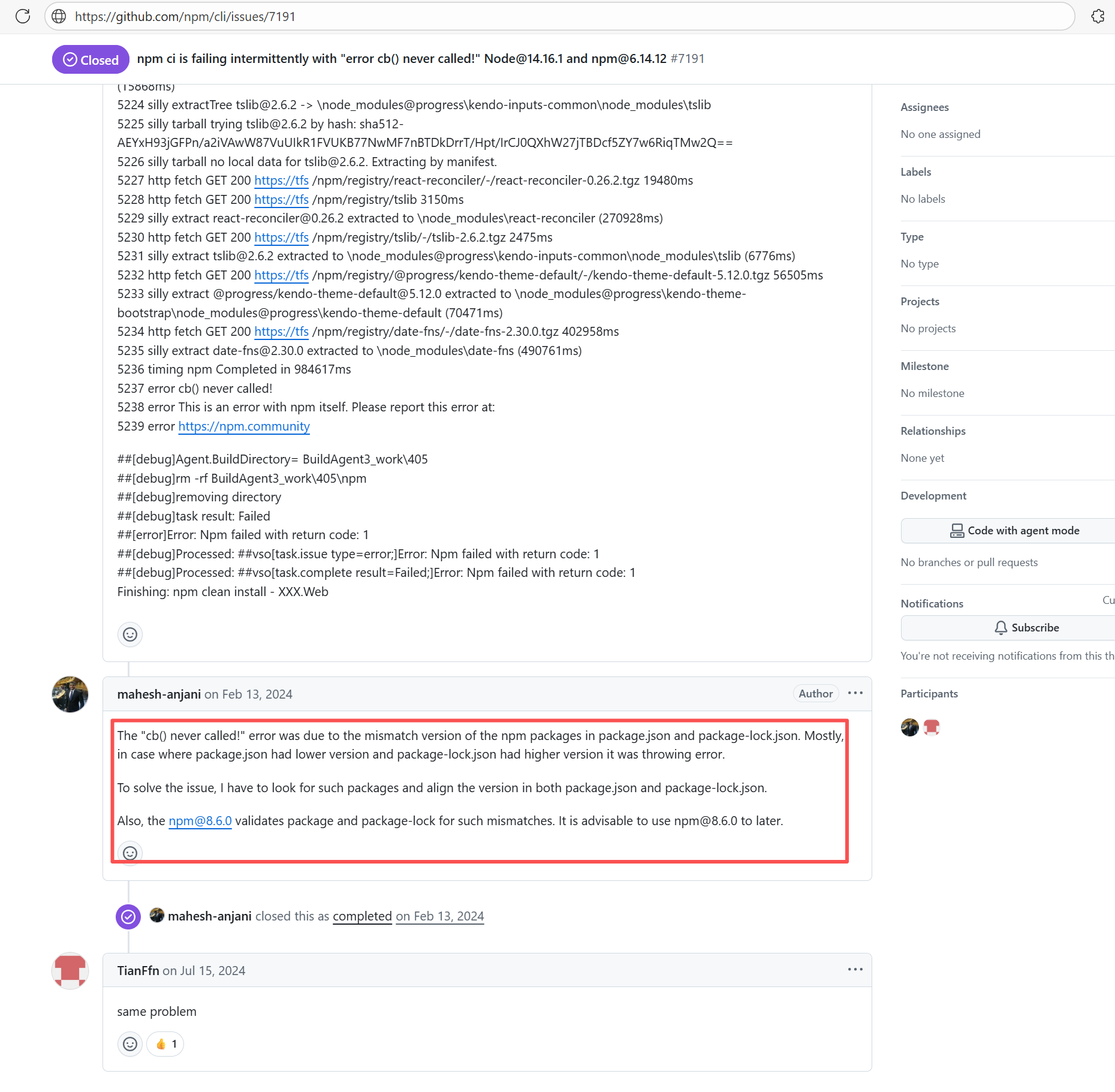

| bytedeco/javacpp-presets |

20414451893 |

API Rate Limit |

Error: Logs indicate the core reason is improper file permission settings or excessive access frequency, resulting in the inability to generate or obtain a valid public link.

Root Cause: Analysis of rerun logs found that all 23 reruns at different intervals over the past 3 days failed. Therefore, this error is not resource access denial caused by high access frequency, but rather that the file's current permissions may not be set to "Anyone with the link". After modifying the resource permissions, the rerun was successful. |

Root Cause Fix: Manually modify the security policy of the target cloud resource to grant public read access, then the job returns to normal. |

|

| apache/cloudstack |

24668038497 |

API Rate Limit |

Root Cause: The CI script used a tokenless anonymous mode when uploading Codecov code coverage reports. Since anonymous mode is highly susceptible to triggering API rate limits from third-party service providers (HTTP 429 Too Many Requests), the coverage report upload was interrupted. |

Short-term Fix: Retry the job after the anonymous API limit window period passes.

Long-term Defense: Generate an exclusive Token on the Codecov platform and inject it into the GitHub Secrets workflow (CODECOV_TOKEN) to completely avoid the strict rate limits associated with anonymous uploads. |

|

| apache/cloudstack |

26074021724 |

API Rate Limit |

Root Cause: The CI script used a tokenless anonymous mode when uploading Codecov code coverage reports. Since anonymous mode is highly susceptible to triggering API rate limits from third-party service providers (HTTP 429 Too Many Requests), the coverage report upload was interrupted. |

Short-term Fix: Retry the job after the anonymous API limit window period passes.

Long-term Defense: Generate an exclusive Token on the Codecov platform and inject it into the GitHub Secrets workflow (CODECOV_TOKEN) to completely avoid the strict rate limits associated with anonymous uploads. |

|

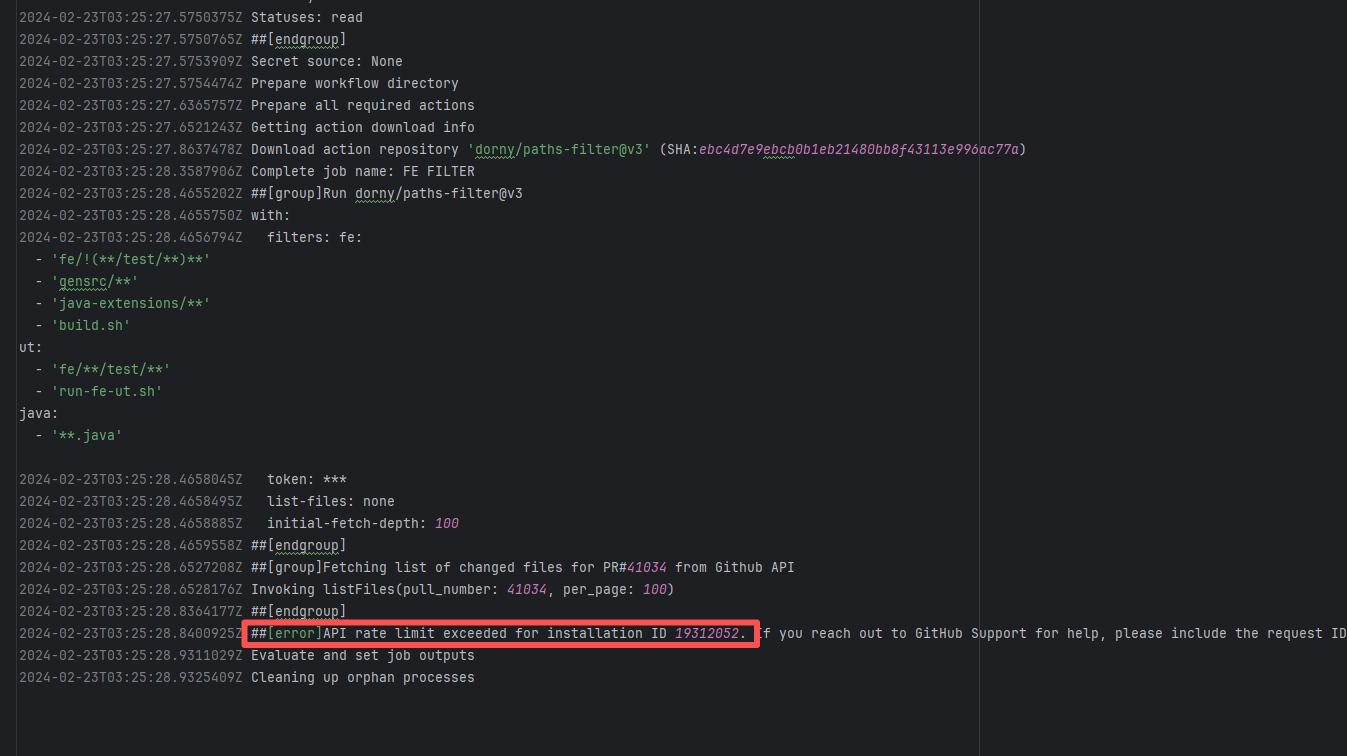

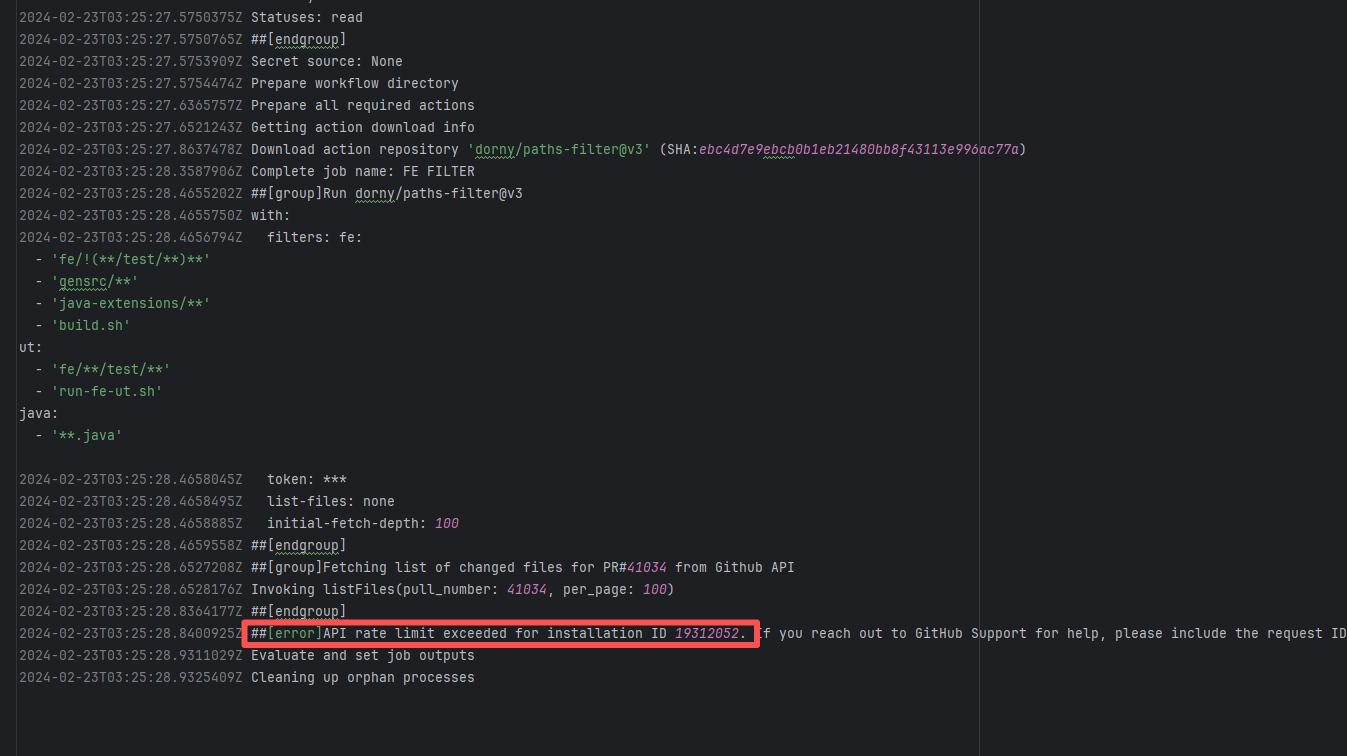

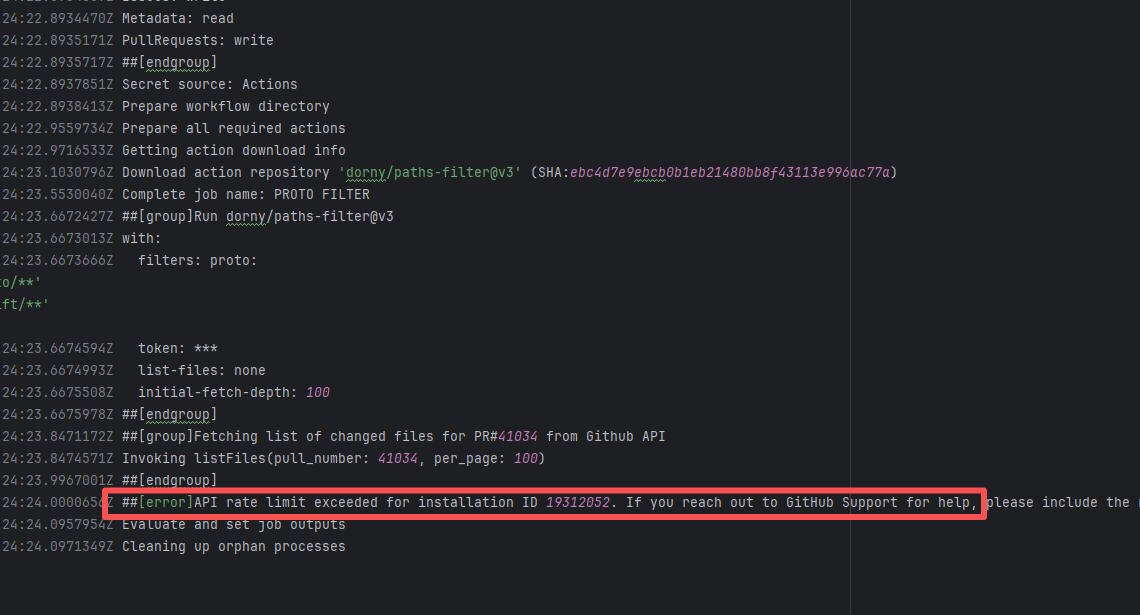

| StarRocks/starrocks |

20844377334 |

API Rate Limit |

Error: Triggered API rate limit exceeded error.

Root Cause: The installation ID of the current GitHub App or Actions exceeded GitHub's official rate limit quota within a short period, causing subsequent requests to be intercepted and rejected by the server. |

Short-term Fix: Wait 5-10 minutes for API quota to automatically recover, then Rerun.

Long-term Defense:

1. Optimize CI processes to reduce unnecessary GitHub API calls;

2. Configure exclusive Personal Access Tokens (PAT) with higher quotas for frequently-called Actions;

3. Introduce local caching strategies (e.g., cache dependencies) to reduce cross-network fetch frequency. |

|

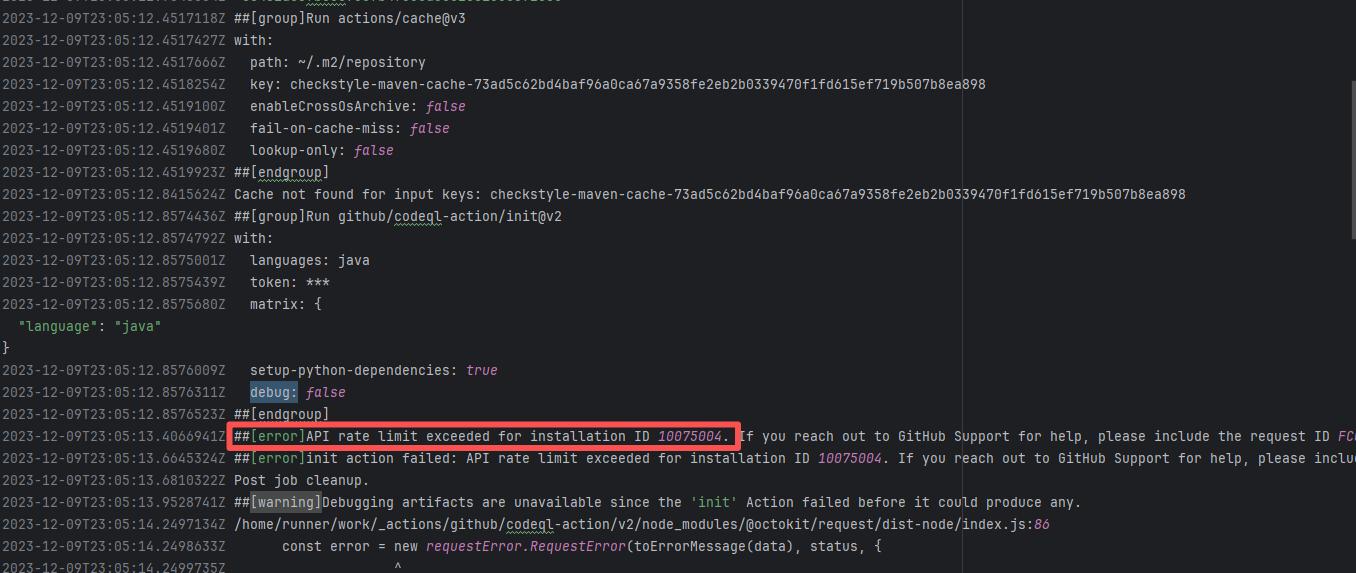

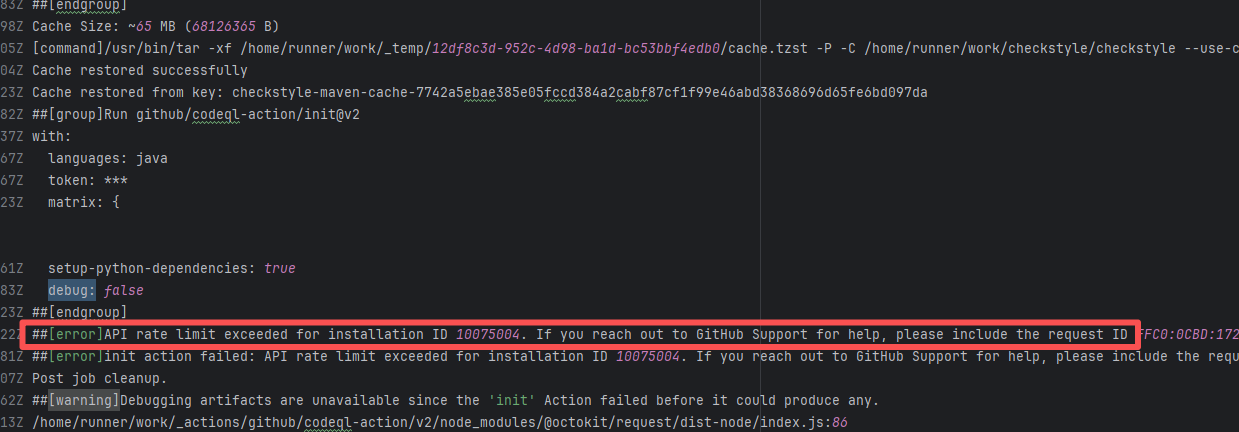

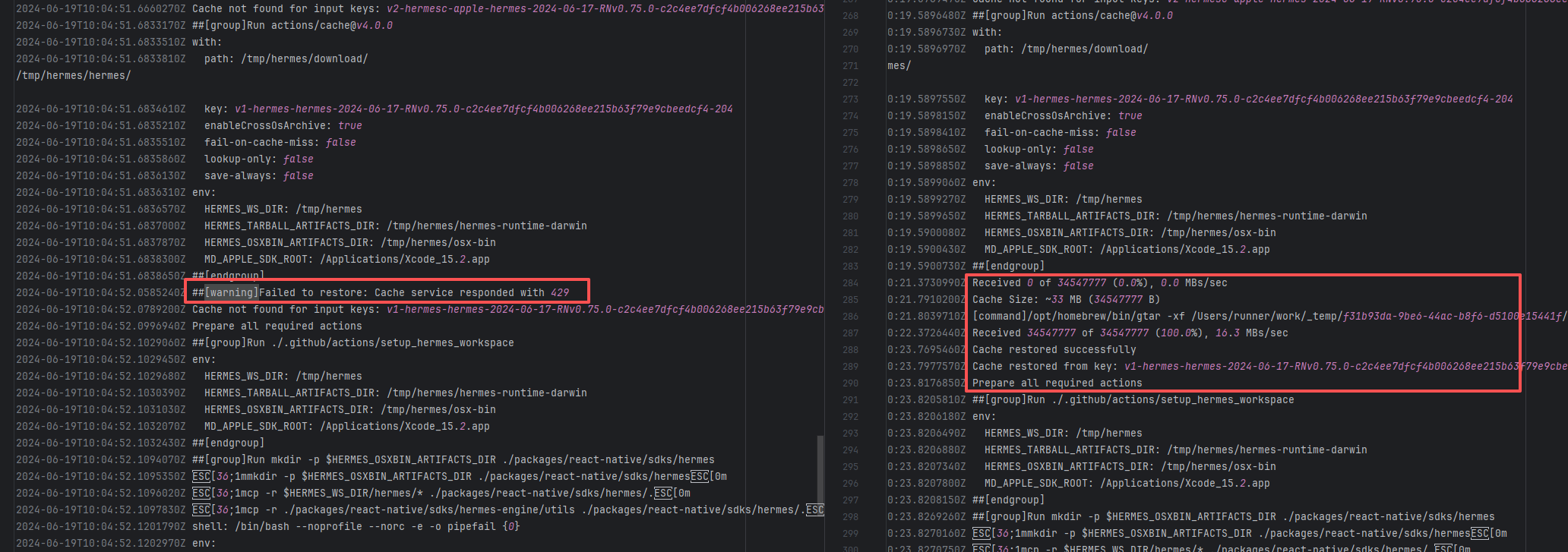

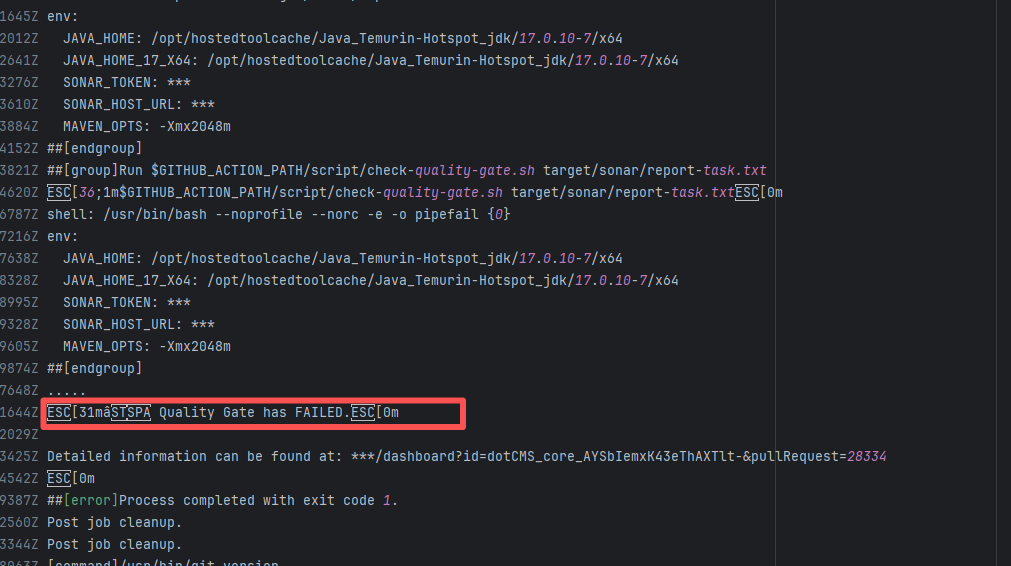

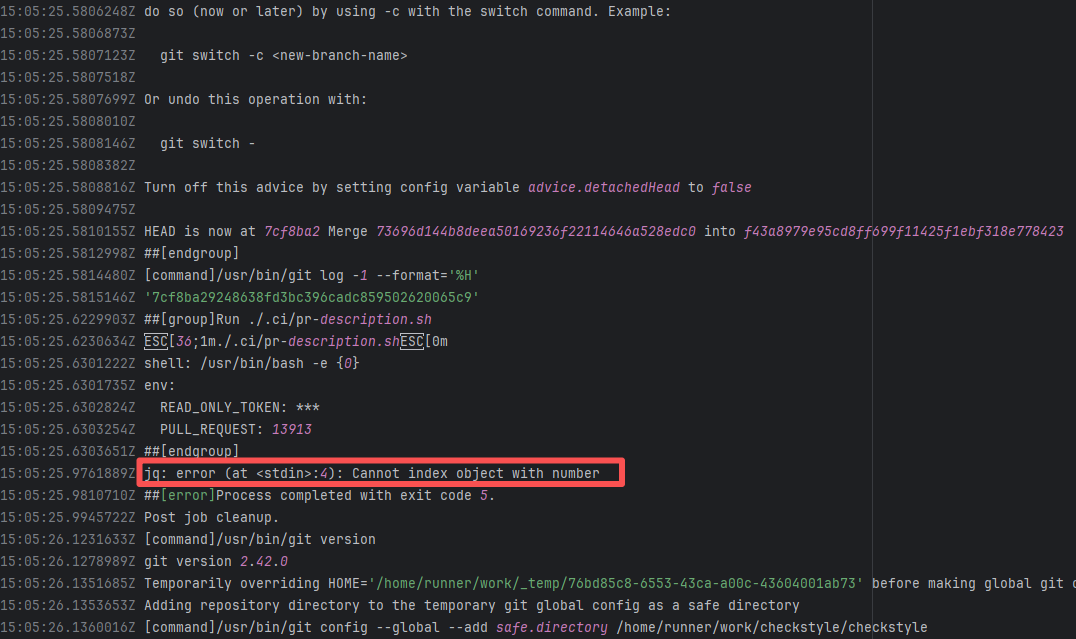

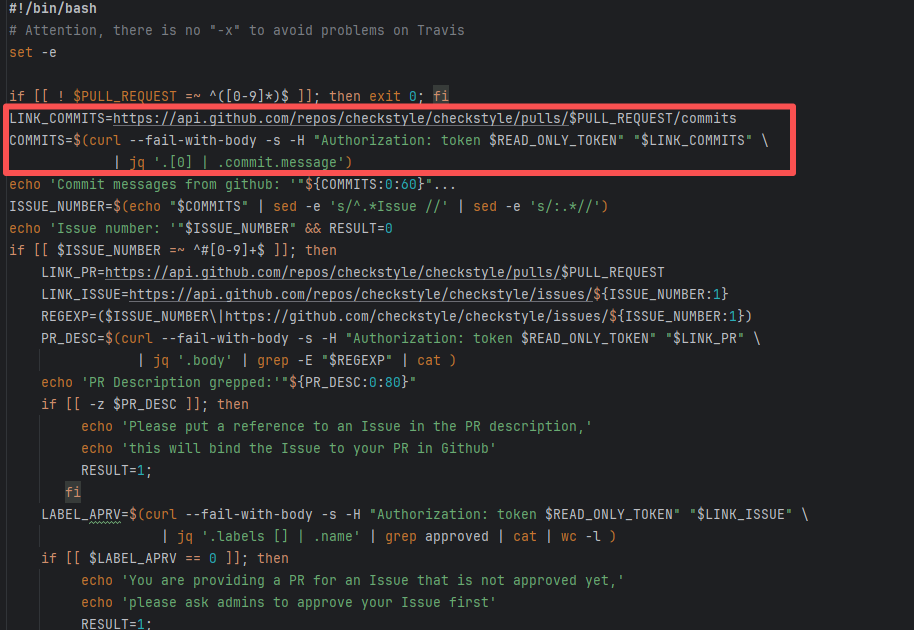

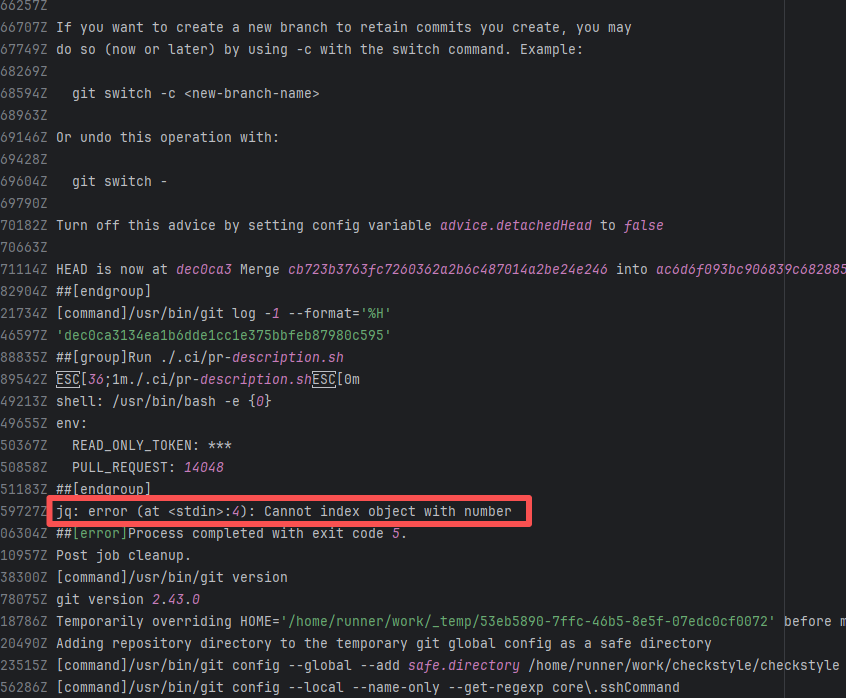

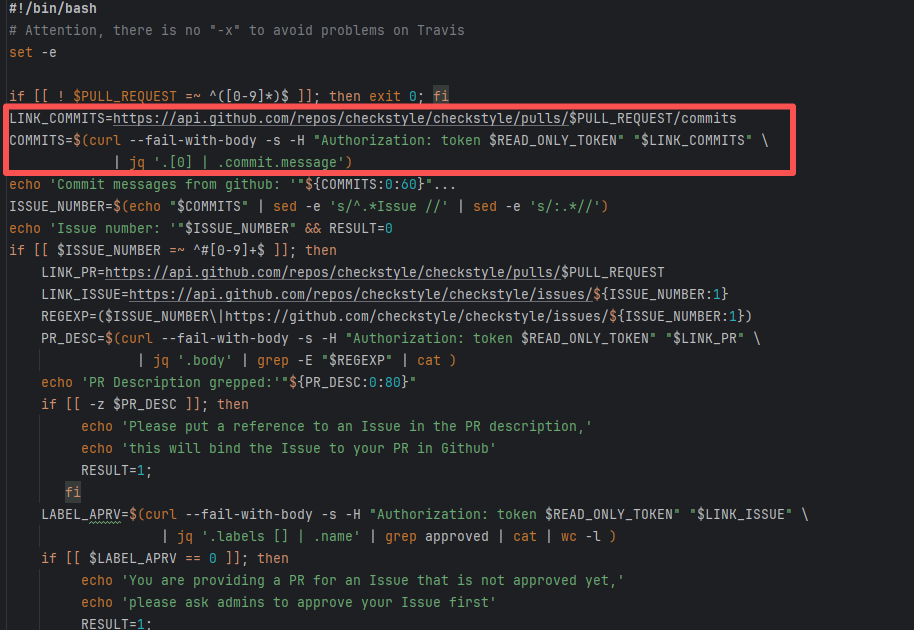

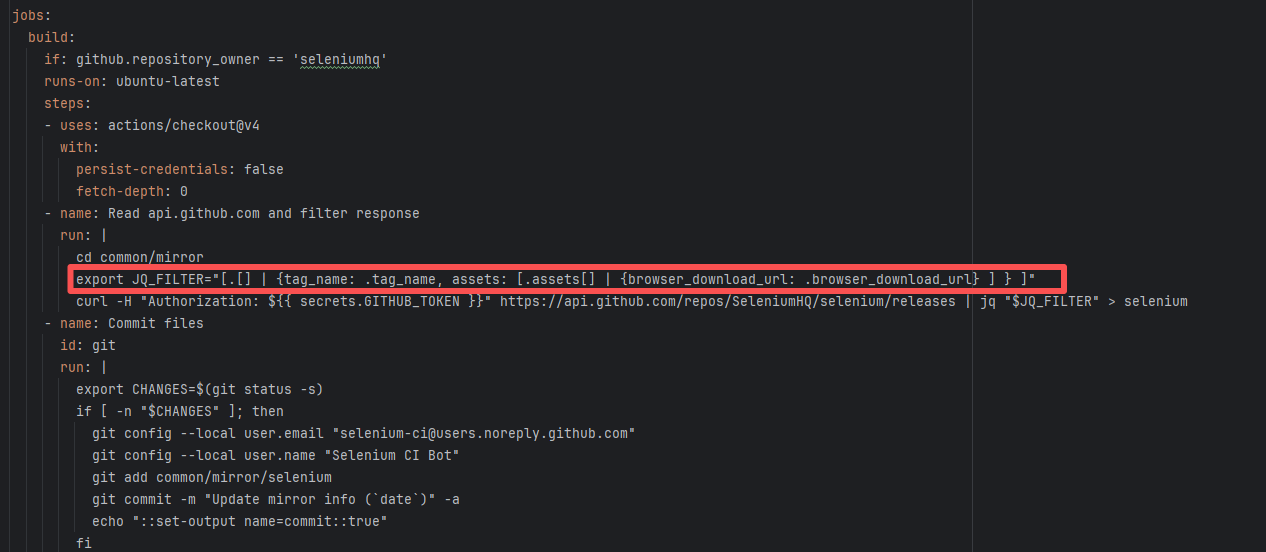

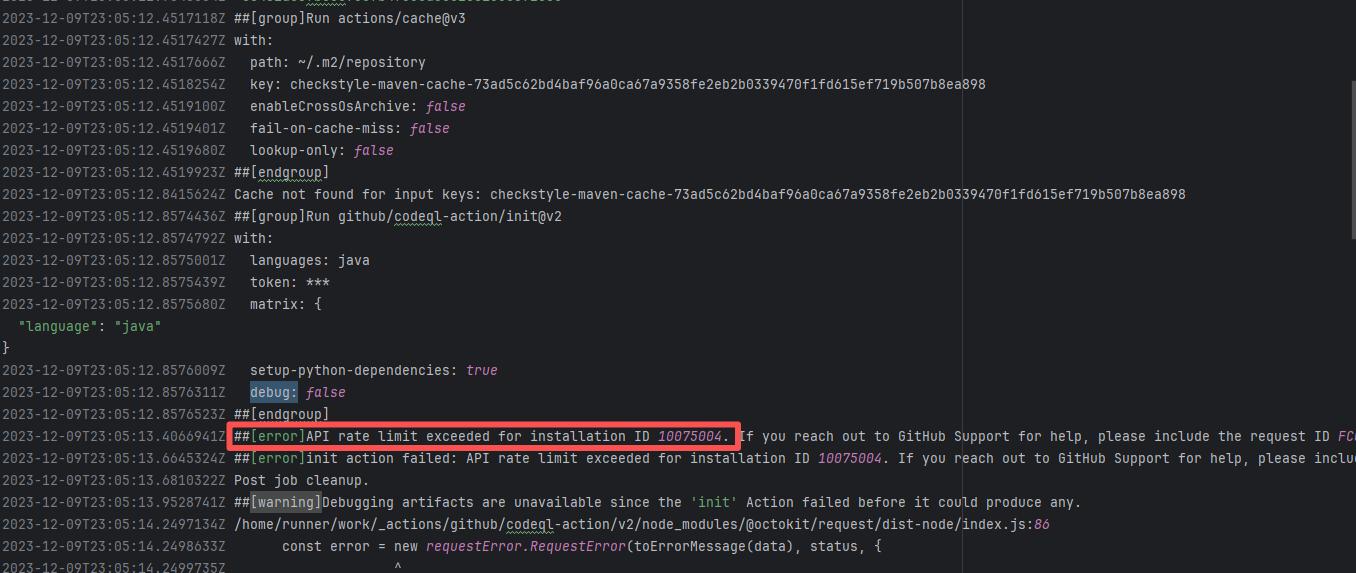

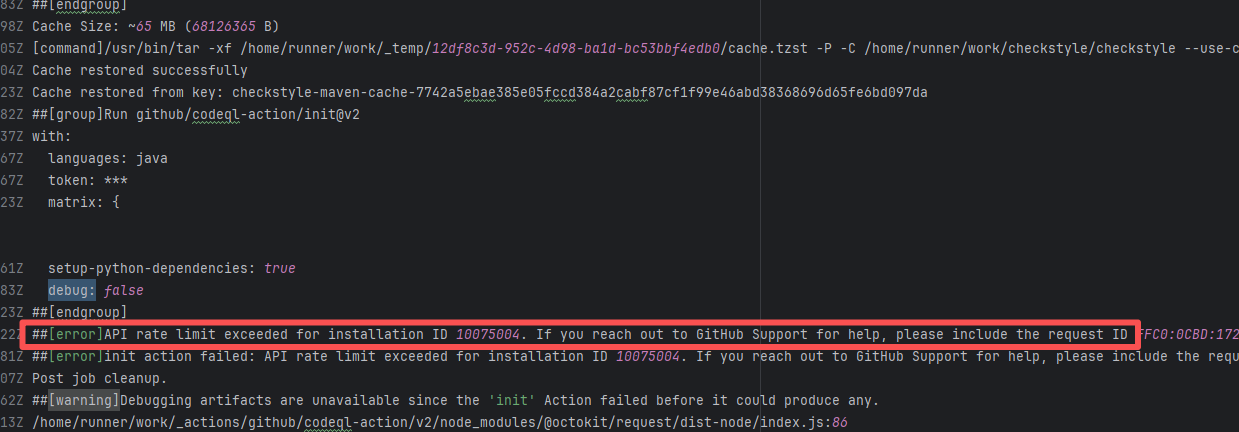

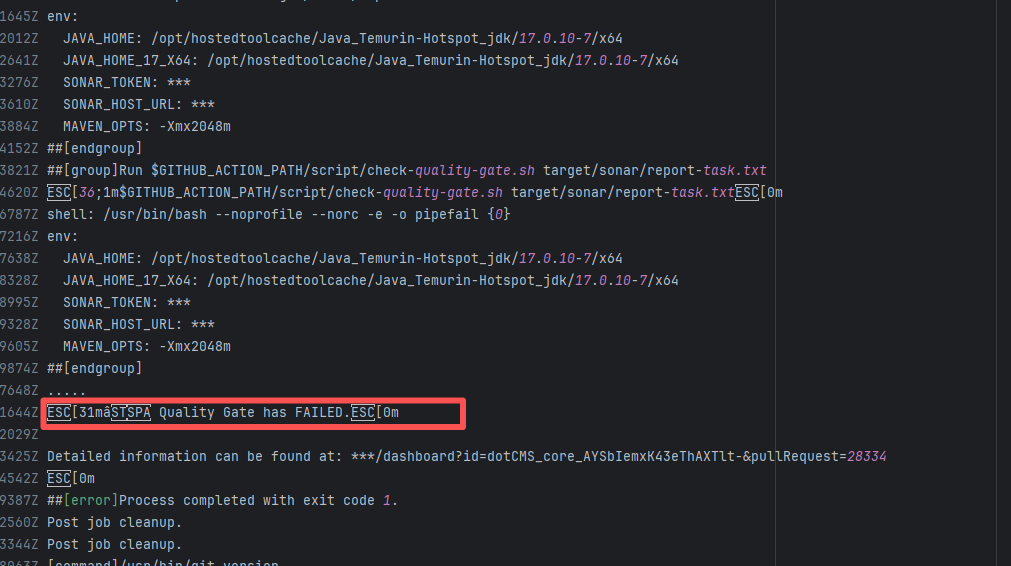

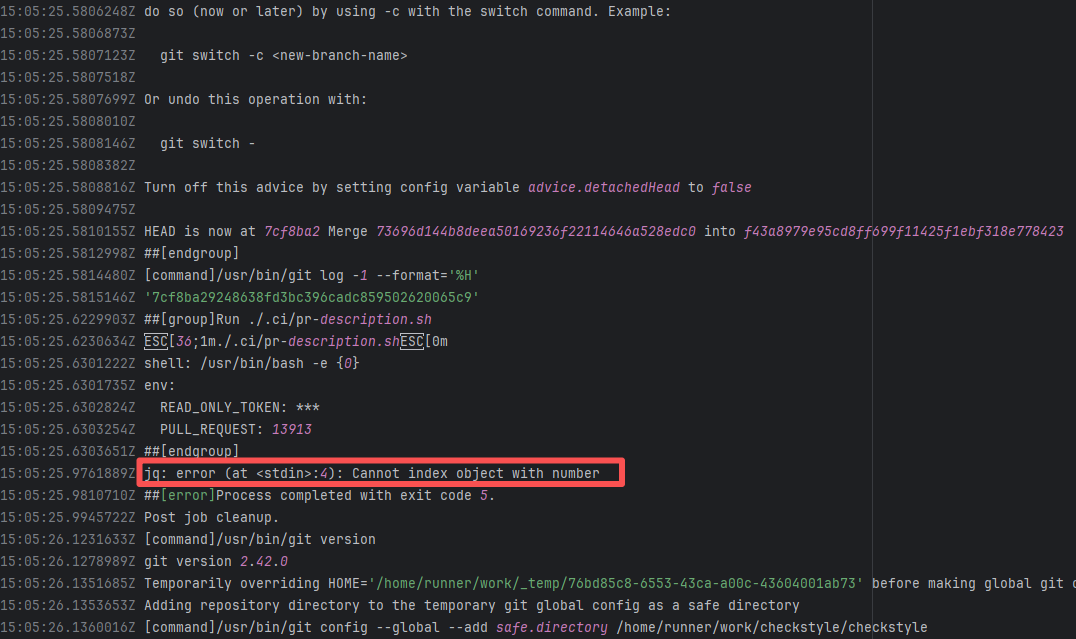

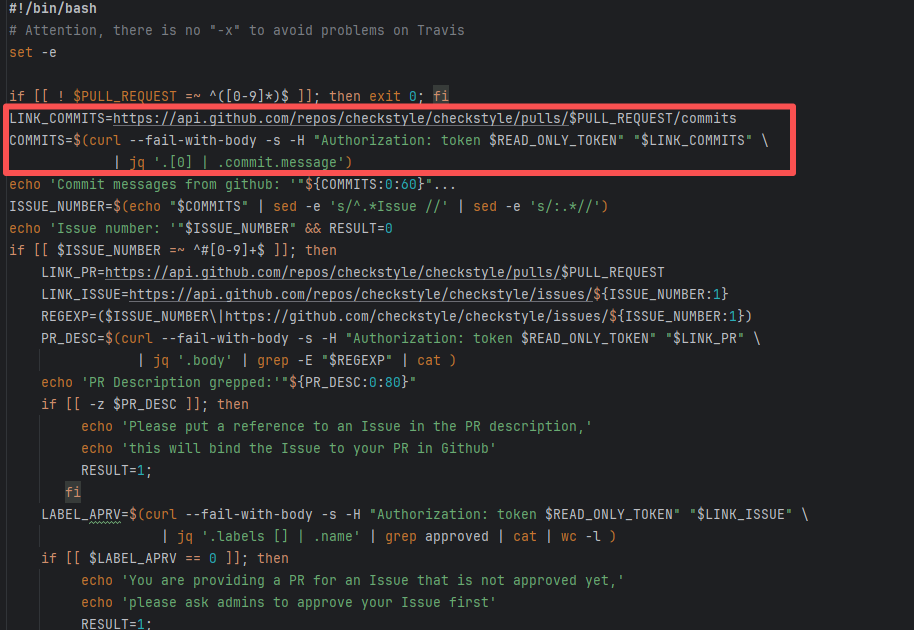

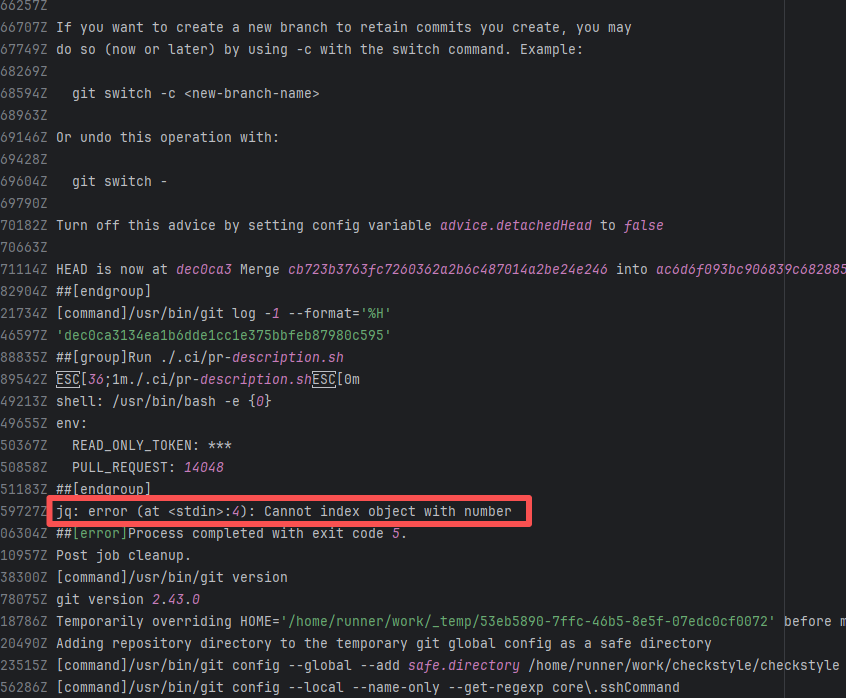

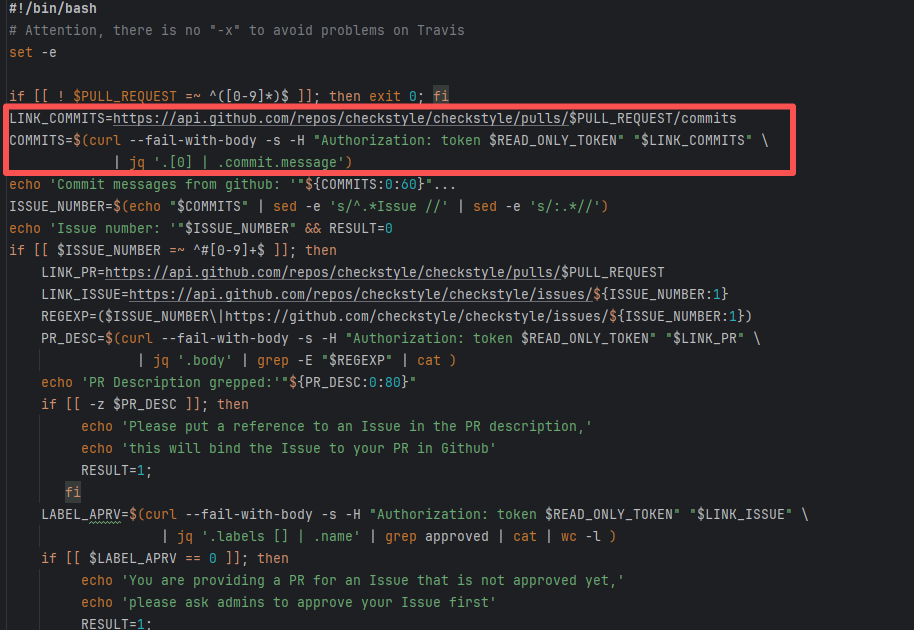

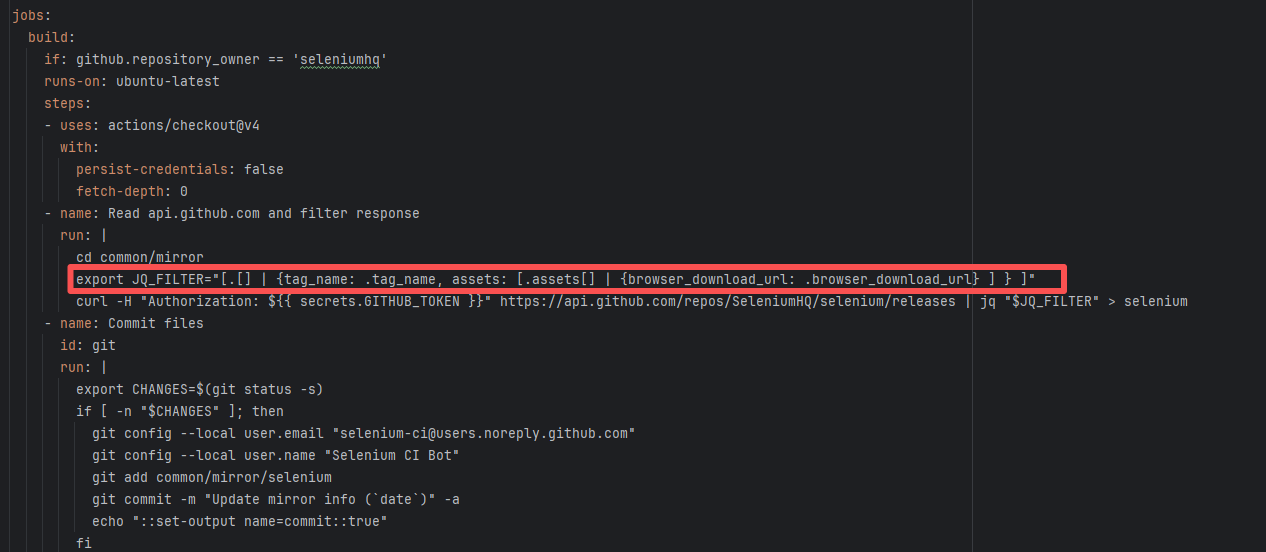

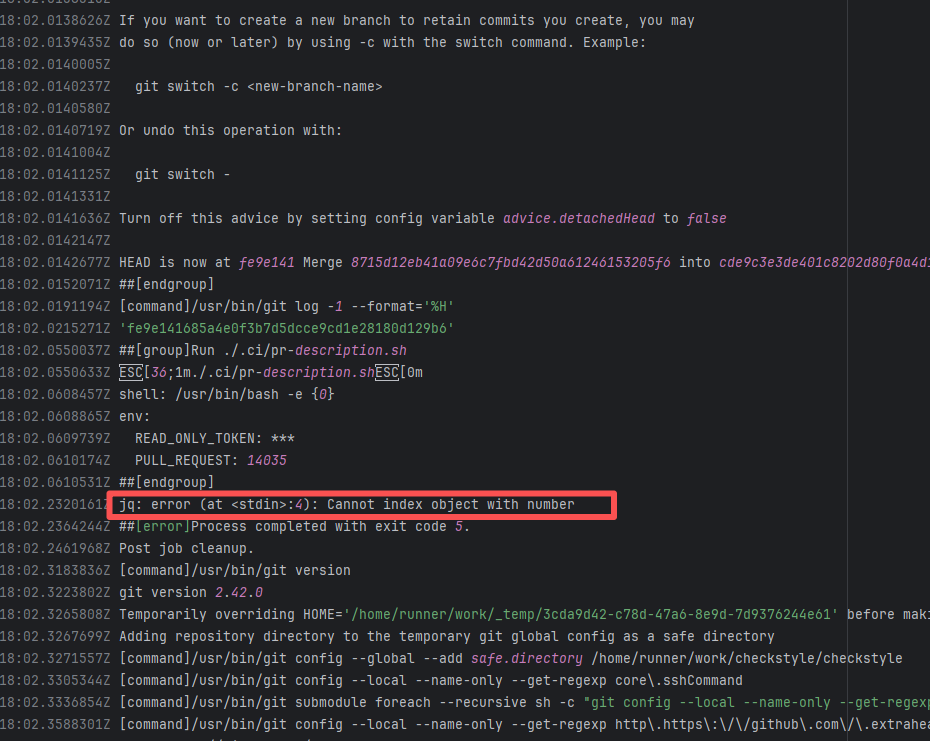

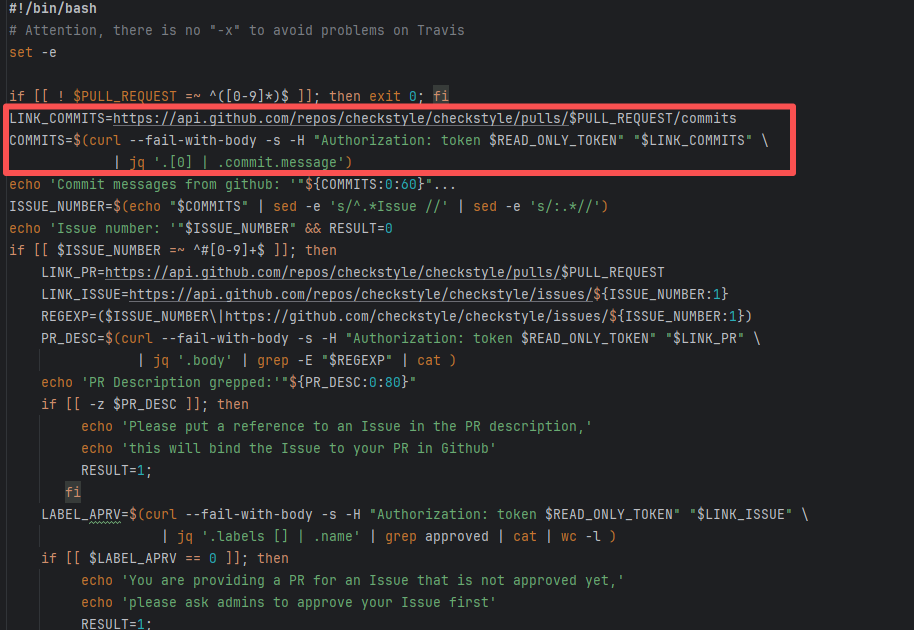

| checkstyle/checkstyle |

19481006838 |

API Rate Limit |

Error: Triggered API rate limit exceeded error.

Root Cause: The installation ID of the current GitHub App or Actions exceeded GitHub's official rate limit quota within a short period, causing subsequent requests to be intercepted and rejected by the server. |

Short-term Fix: Wait 5-10 minutes for API quota to automatically recover, then Rerun.

Long-term Defense:

1. Optimize CI processes to reduce unnecessary GitHub API calls;

2. Configure exclusive Personal Access Tokens (PAT) with higher quotas for frequently-called Actions;

3. Introduce local caching strategies (e.g., cache dependencies) to reduce cross-network fetch frequency. |

|

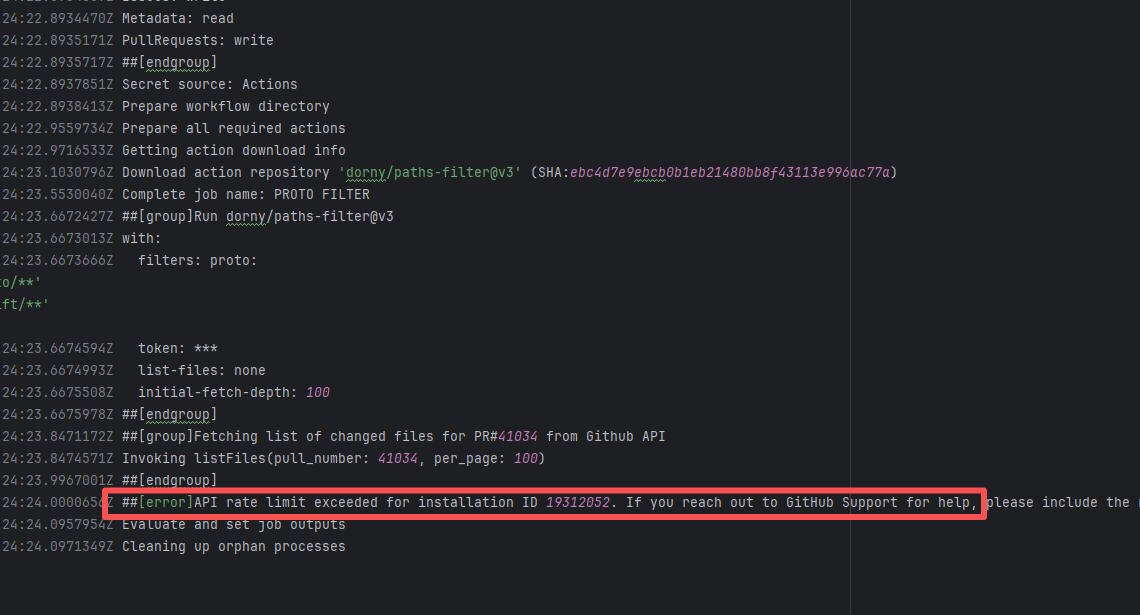

| StarRocks/starrocks |

21892706317 |

API Rate Limit |

Error: Triggered API rate limit exceeded error.

Root Cause: The installation ID of the current GitHub App or Actions exceeded GitHub's official rate limit quota within a short period, causing subsequent requests to be intercepted and rejected by the server. |

Short-term Fix: Wait 5-10 minutes for API quota to automatically recover, then Rerun.

Long-term Defense:

1. Optimize CI processes to reduce unnecessary GitHub API calls;

2. Configure exclusive Personal Access Tokens (PAT) with higher quotas for frequently-called Actions;

3. Introduce local caching strategies (e.g., cache dependencies) to reduce cross-network fetch frequency. |

|

| StarRocks/starrocks |

21892686784 |

API Rate Limit |

Error: Triggered API rate limit exceeded error.

Root Cause: The installation ID of the current GitHub App or Actions exceeded GitHub's official rate limit quota within a short period, causing subsequent requests to be intercepted and rejected by the server. |

Short-term Fix: Wait 5-10 minutes for API quota to automatically recover, then Rerun.

Long-term Defense:

1. Optimize CI processes to reduce unnecessary GitHub API calls;

2. Configure exclusive Personal Access Tokens (PAT) with higher quotas for frequently-called Actions;

3. Introduce local caching strategies (e.g., cache dependencies) to reduce cross-network fetch frequency. |

|

| StarRocks/starrocks |

20844369672 |

API Rate Limit |

Error: Triggered API rate limit exceeded error.

Root Cause: The installation ID of the current GitHub App or Actions exceeded GitHub's official rate limit quota within a short period, causing subsequent requests to be intercepted and rejected by the server. |

Short-term Fix: Wait 5-10 minutes for API quota to automatically recover, then Rerun.

Long-term Defense:

1. Optimize CI processes to reduce unnecessary GitHub API calls;

2. Configure exclusive Personal Access Tokens (PAT) with higher quotas for frequently-called Actions;

3. Introduce local caching strategies (e.g., cache dependencies) to reduce cross-network fetch frequency. |

|

| liquibase/liquibase |

22140404789 |

API Rate Limit |

Error: Triggered API rate limit exceeded error.

Root Cause: The installation ID of the current GitHub App or Actions exceeded GitHub's official rate limit quota within a short period, causing subsequent requests to be intercepted and rejected by the server. |

Short-term Fix: Wait 5-10 minutes for API quota to automatically recover, then Rerun.

Long-term Defense:

1. Optimize CI processes to reduce unnecessary GitHub API calls;

2. Configure exclusive Personal Access Tokens (PAT) with higher quotas for frequently-called Actions;

3. Introduce local caching strategies (e.g., cache dependencies) to reduce cross-network fetch frequency. |

|

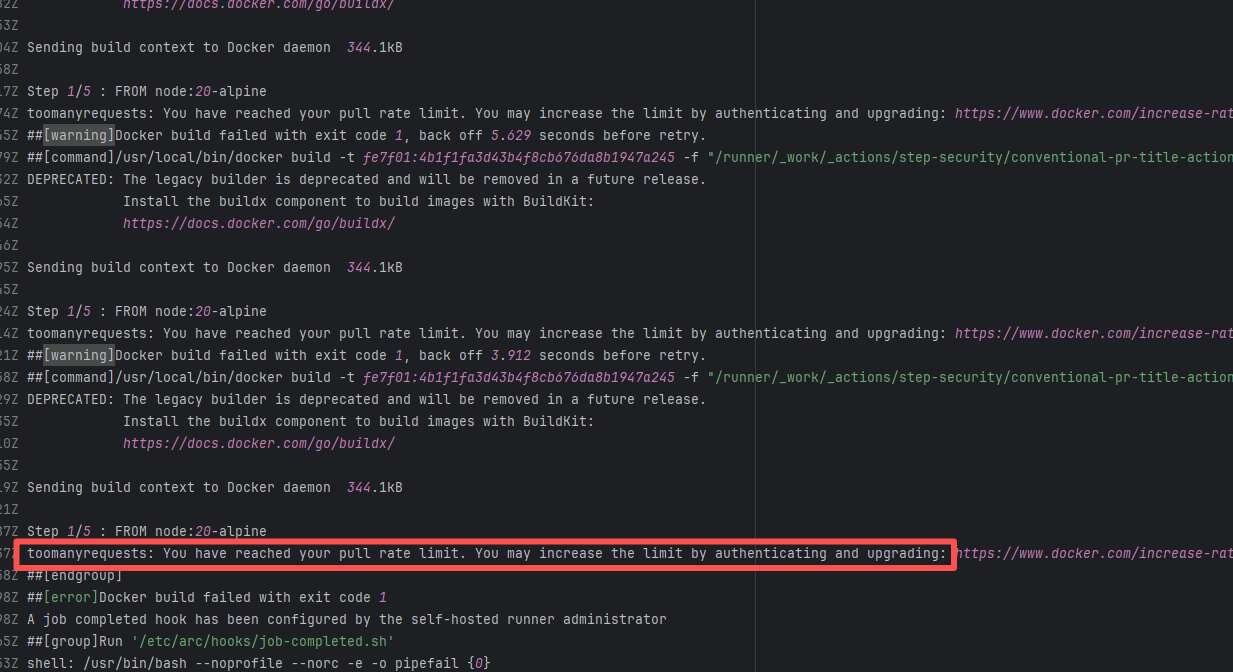

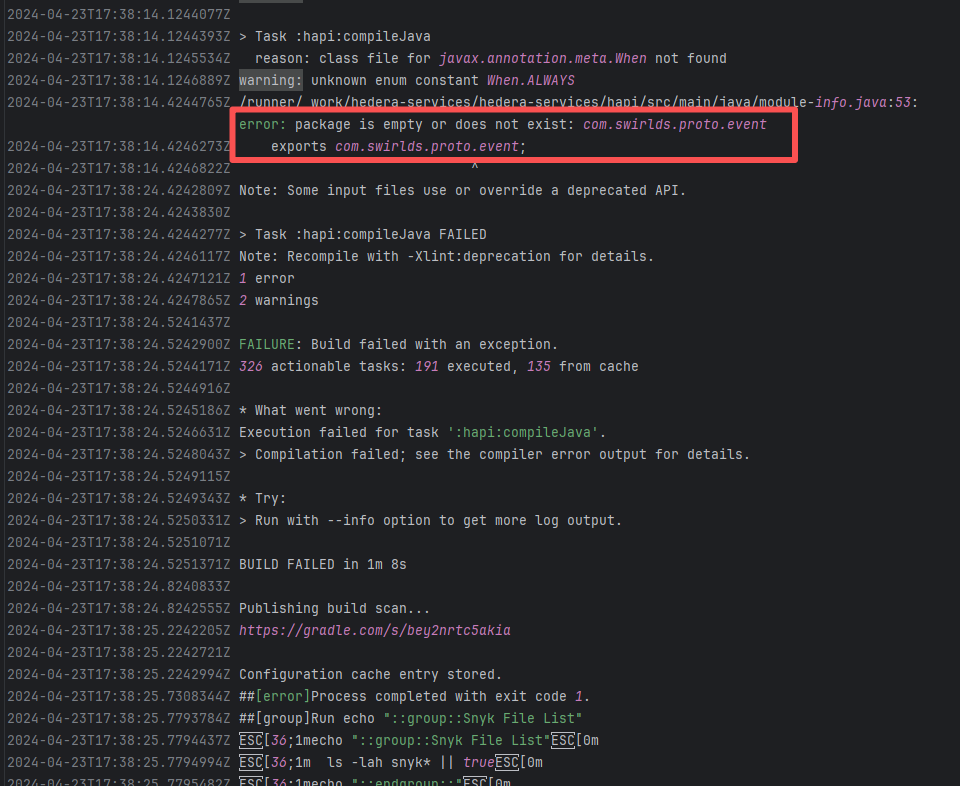

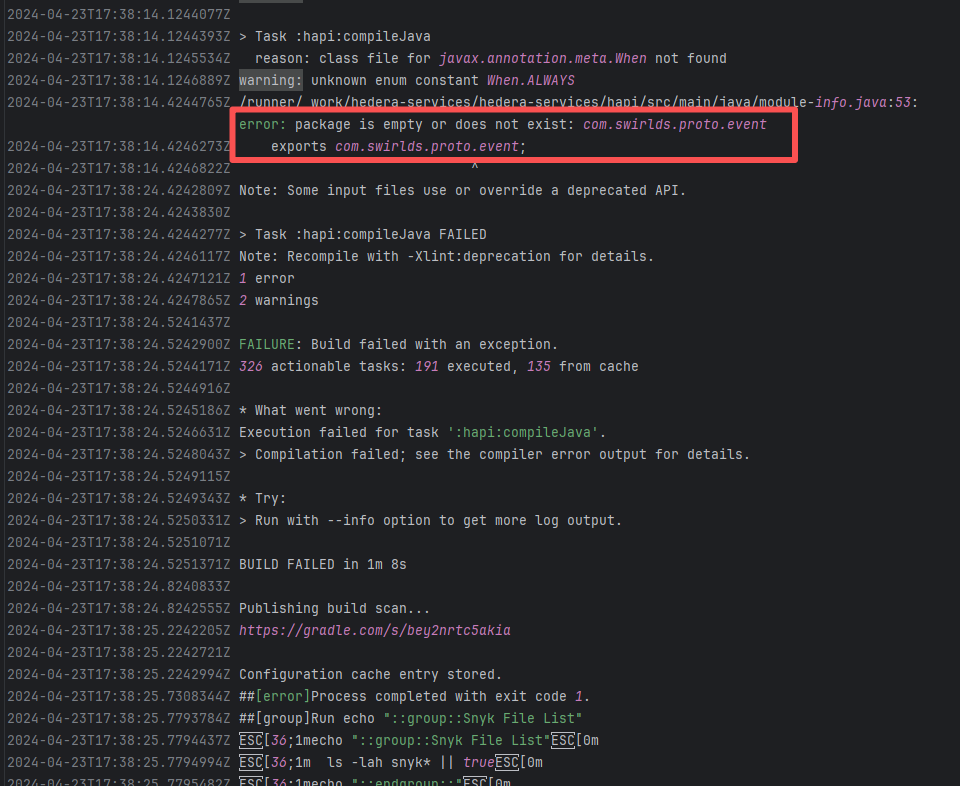

| hashgraph/hedera-services |

27622217486 |

API Rate Limit |

Error: The error message indicates that the Docker Hub pull rate limit has been reached. Docker Hub has different pull rate limits for anonymous and authenticated users; unauthenticated users can only pull a limited number of images within a short period.

Root Cause: Succeeded after waiting for the API quota to recover and re-executing. |

Succeeded after waiting for API quota to recover and re-executing |

|

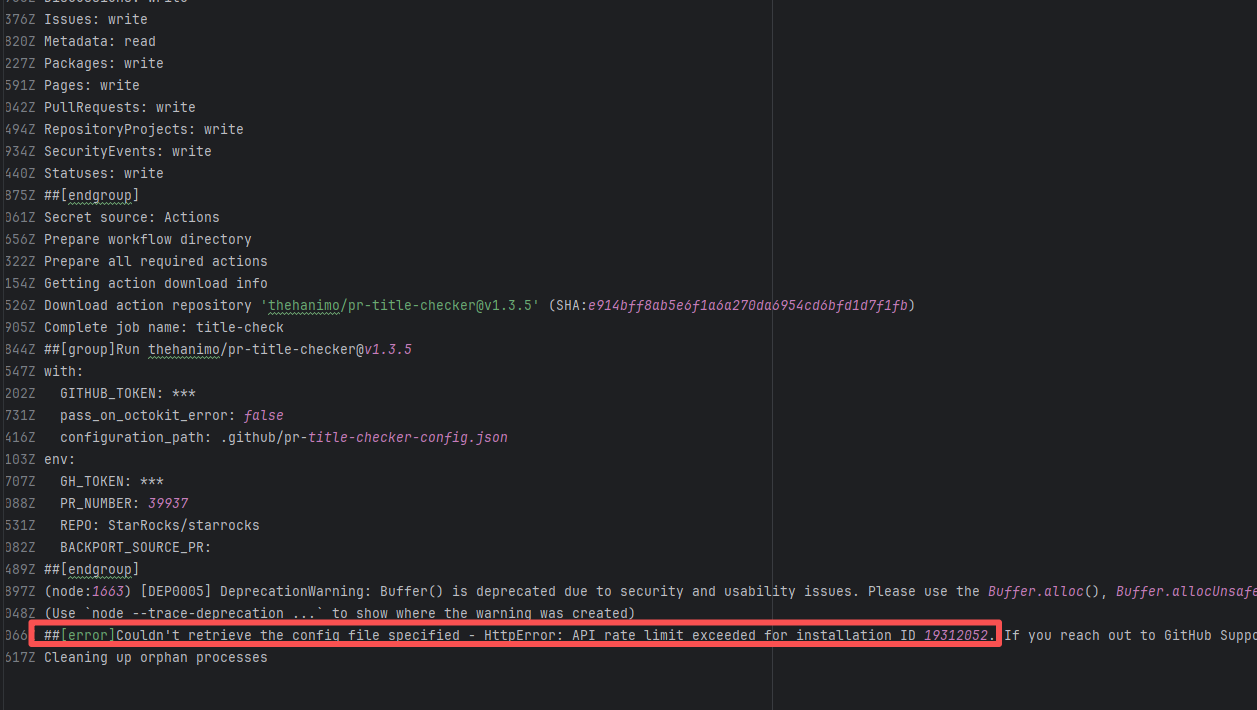

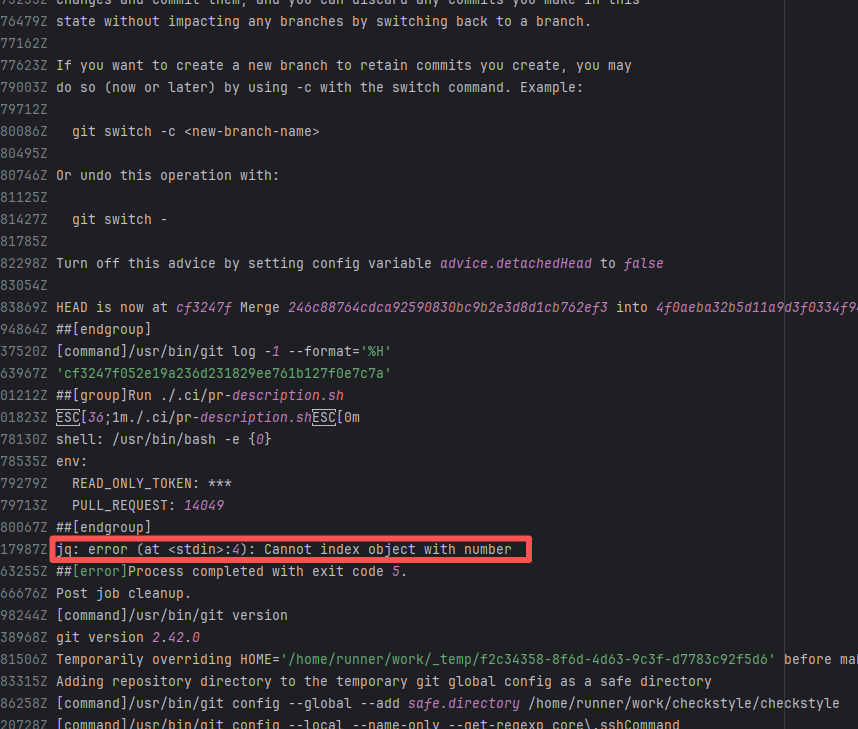

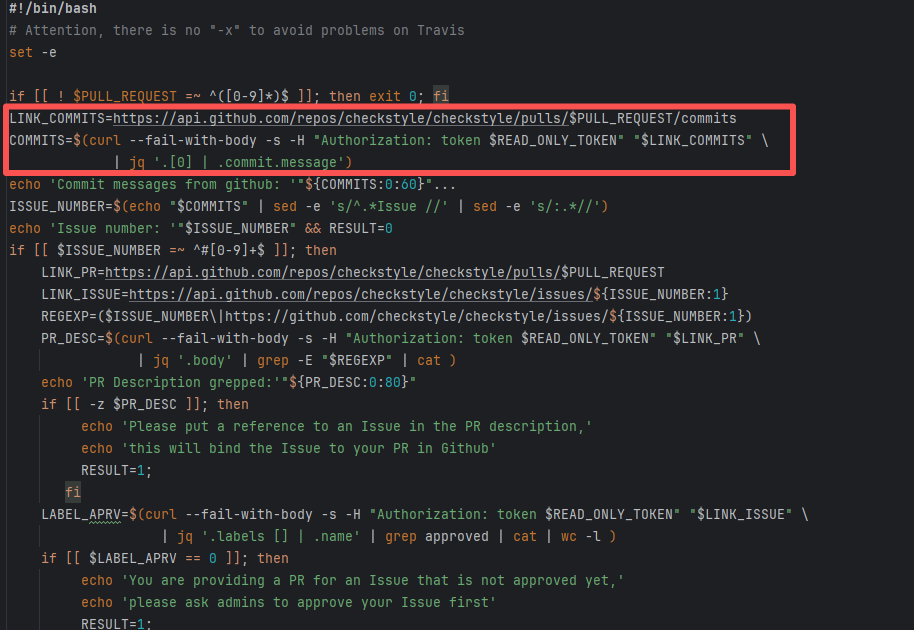

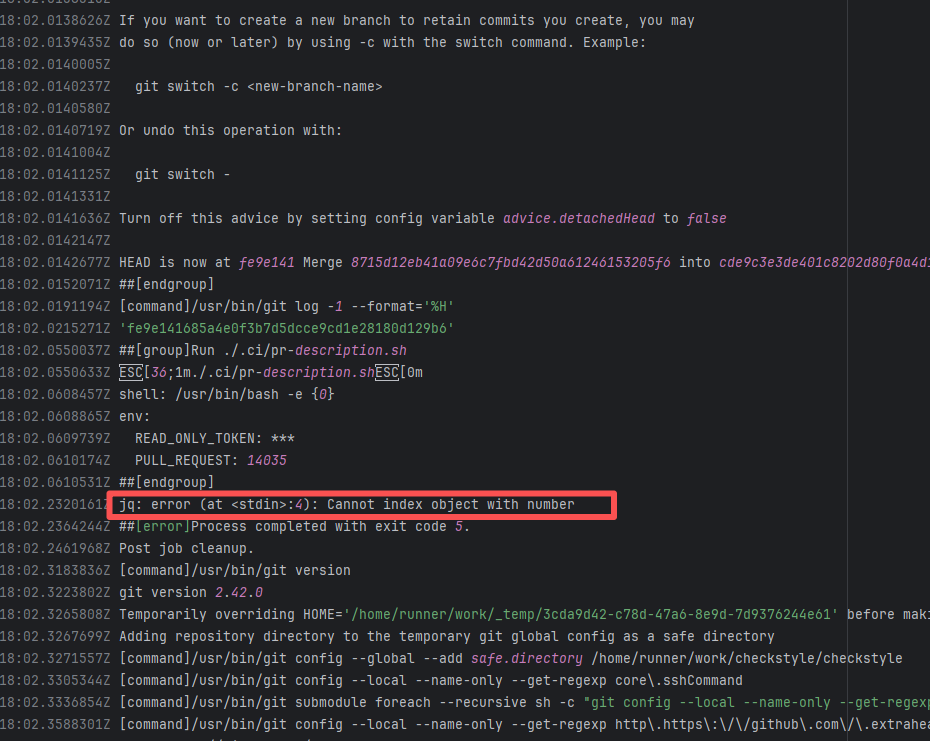

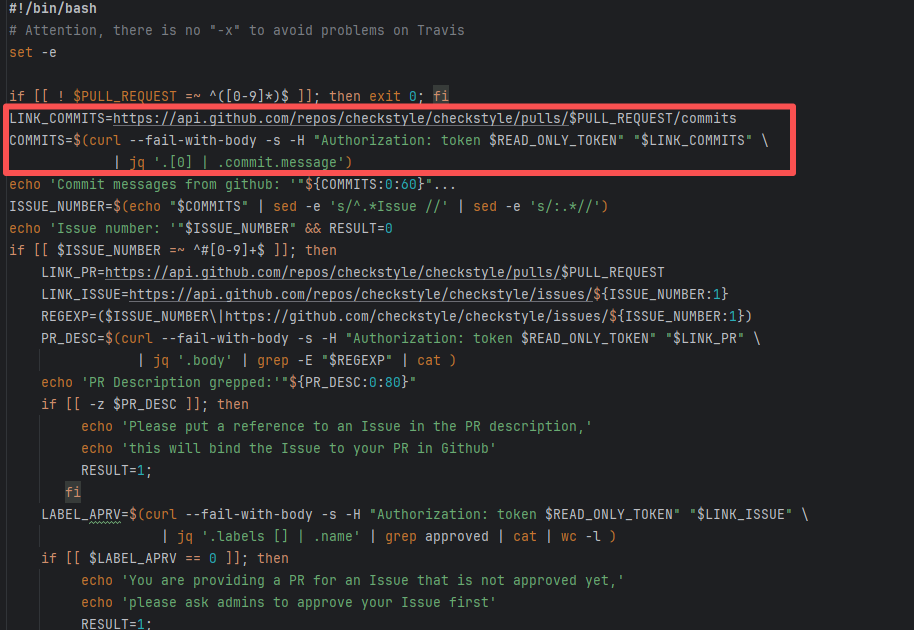

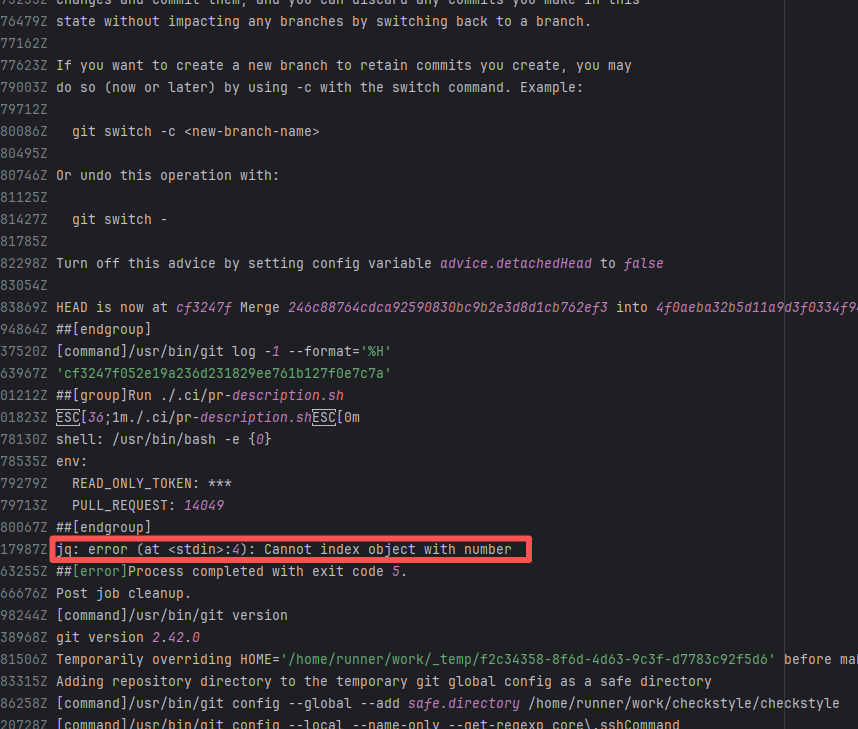

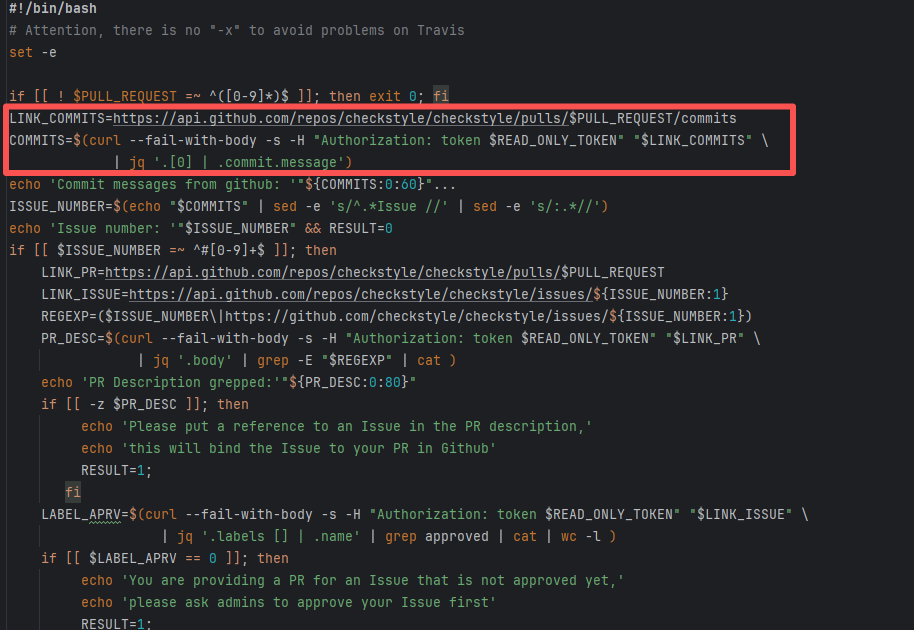

| checkstyle/checkstyle |

19490772351 |

API Rate Limit |

Error: Triggered API rate limit exceeded error.

Root Cause: The installation ID of the current GitHub App or Actions exceeded GitHub's official rate limit quota within a short period, causing subsequent requests to be intercepted and rejected by the server. |

Short-term Fix: Wait 5-10 minutes for API quota to automatically recover, then Rerun.

Long-term Defense:

1. Optimize CI processes to reduce unnecessary GitHub API calls;

2. Configure exclusive Personal Access Tokens (PAT) with higher quotas for frequently-called Actions;

3. Introduce local caching strategies (e.g., cache dependencies) to reduce cross-network fetch frequency. |

|

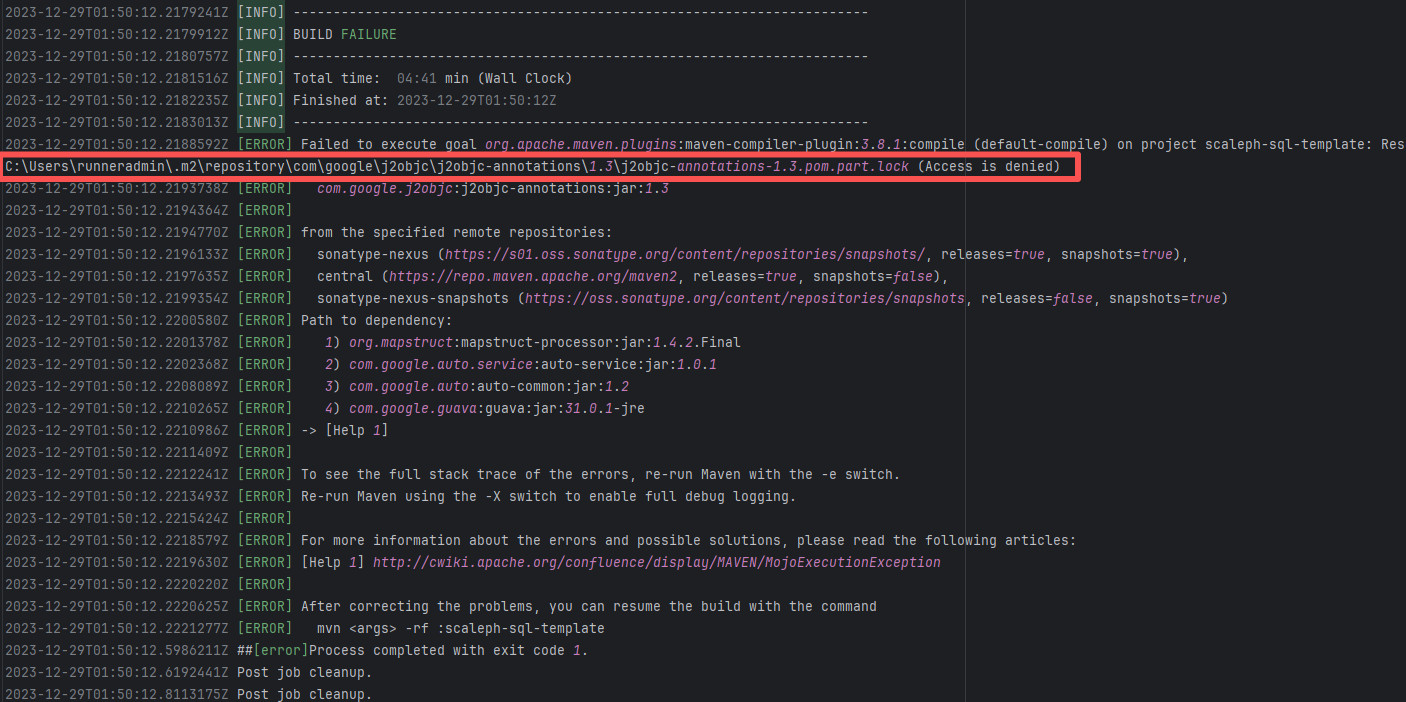

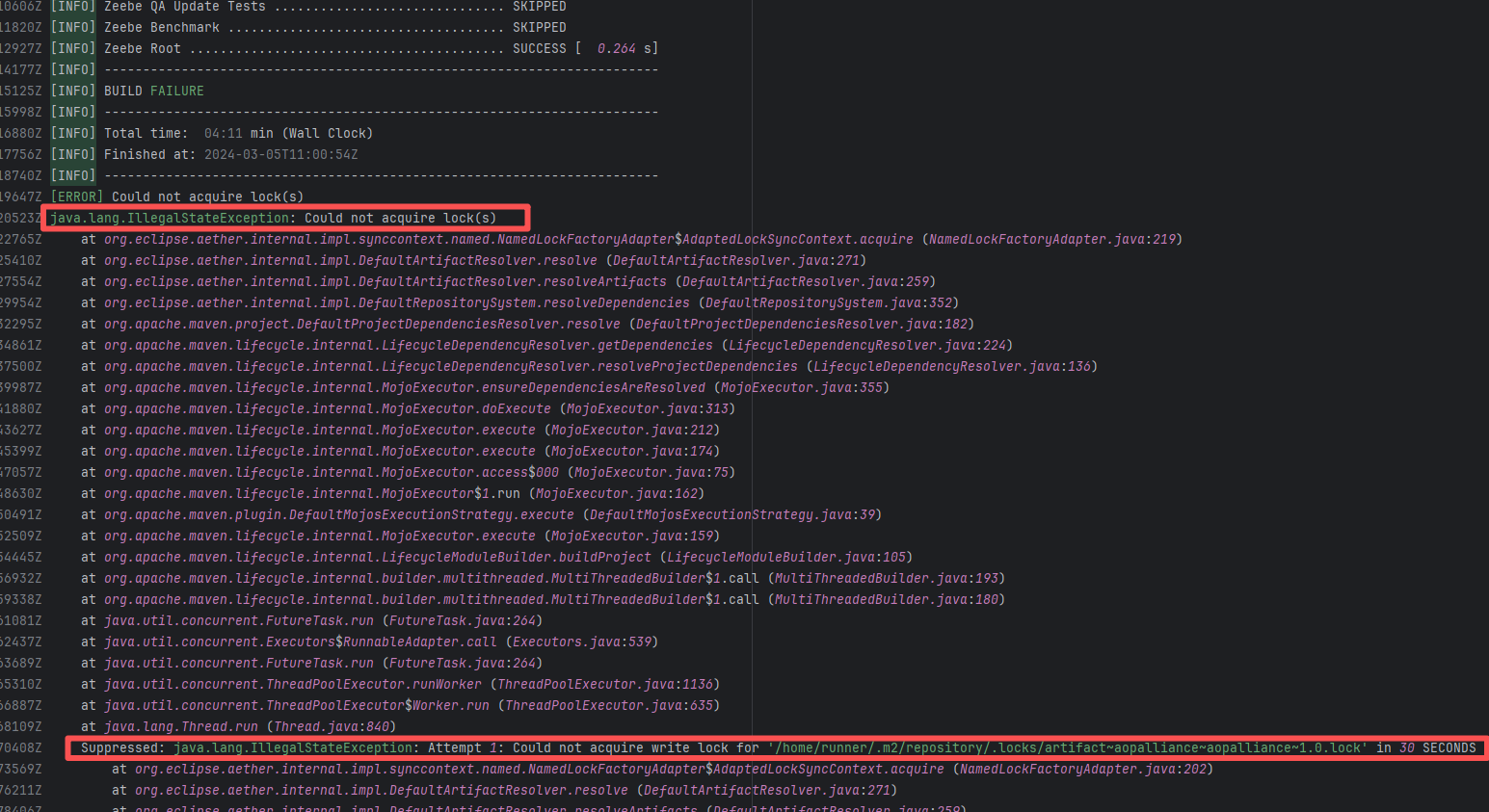

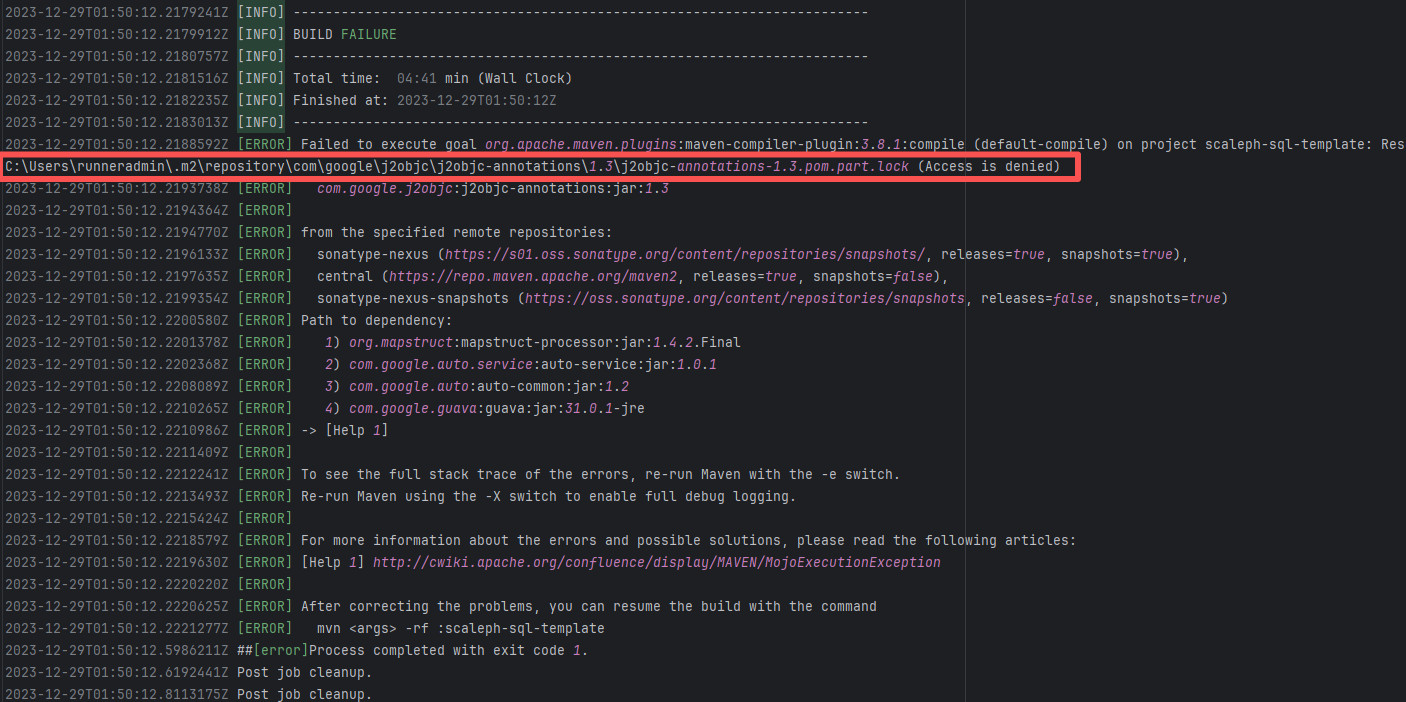

| Concurrency Issue |

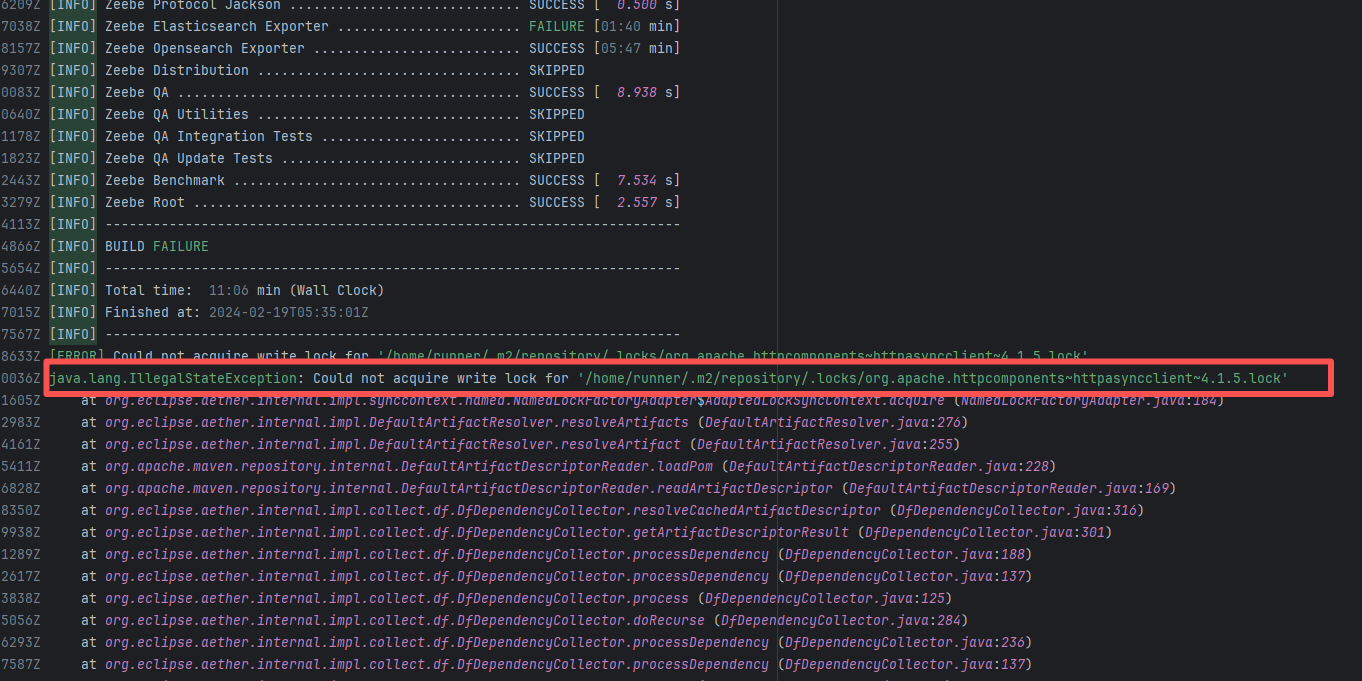

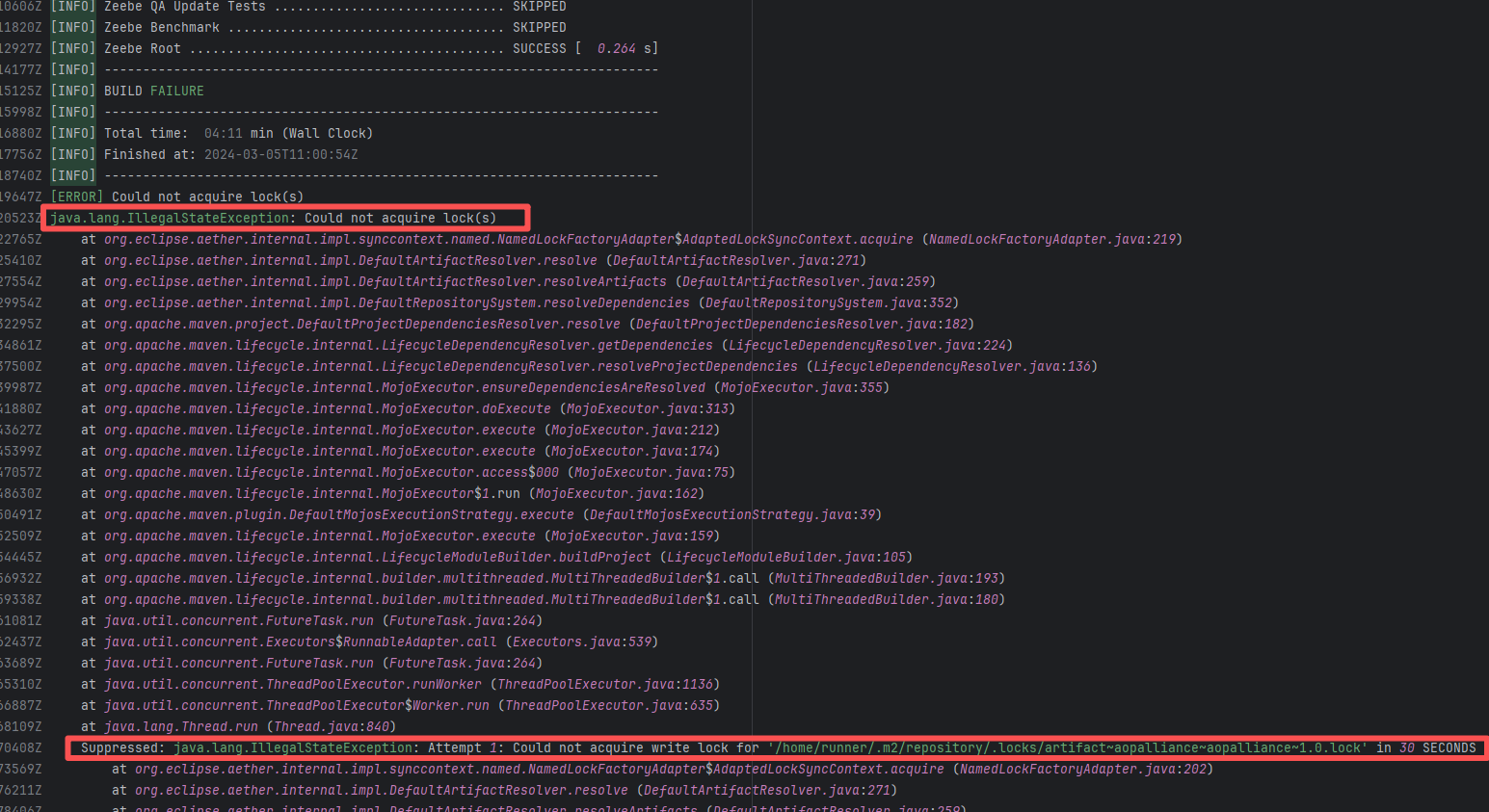

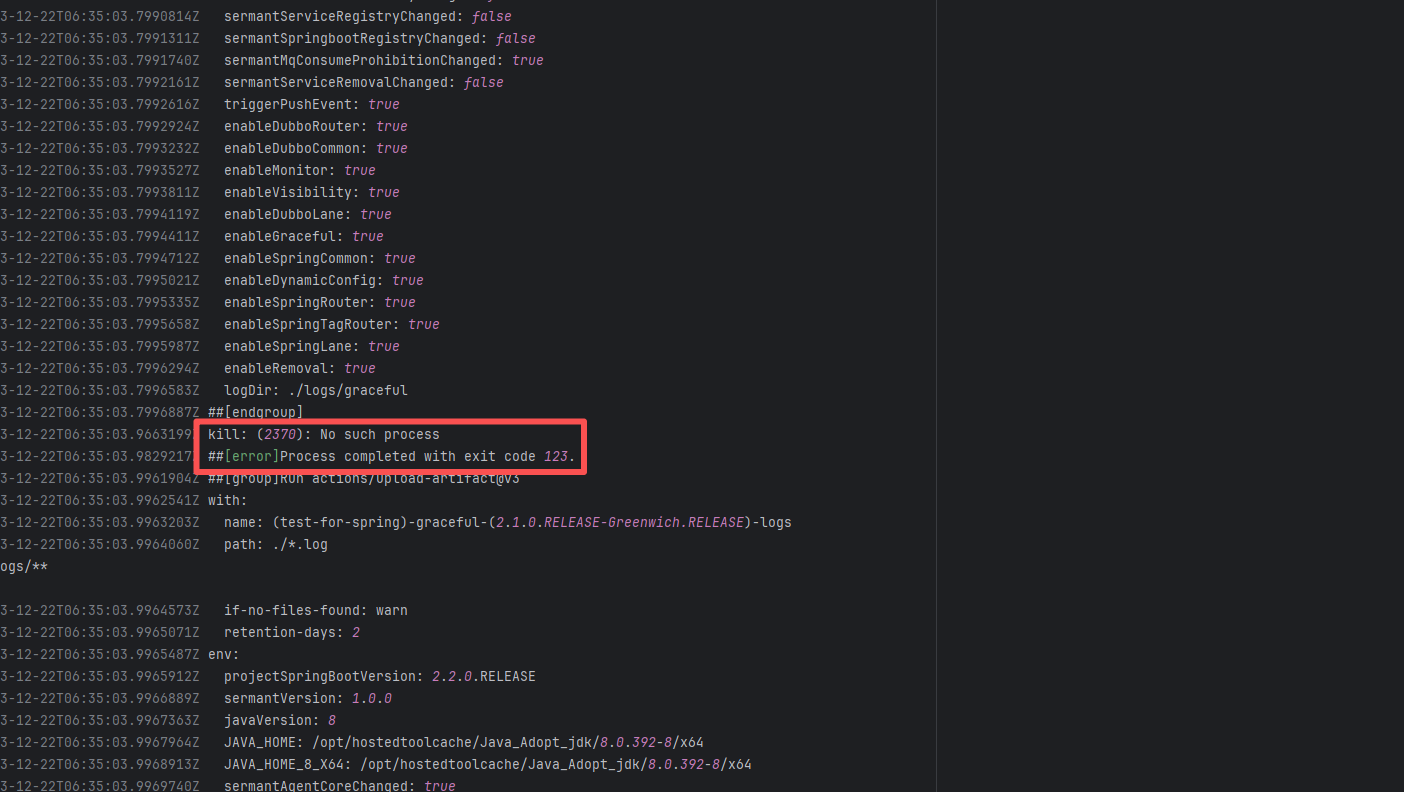

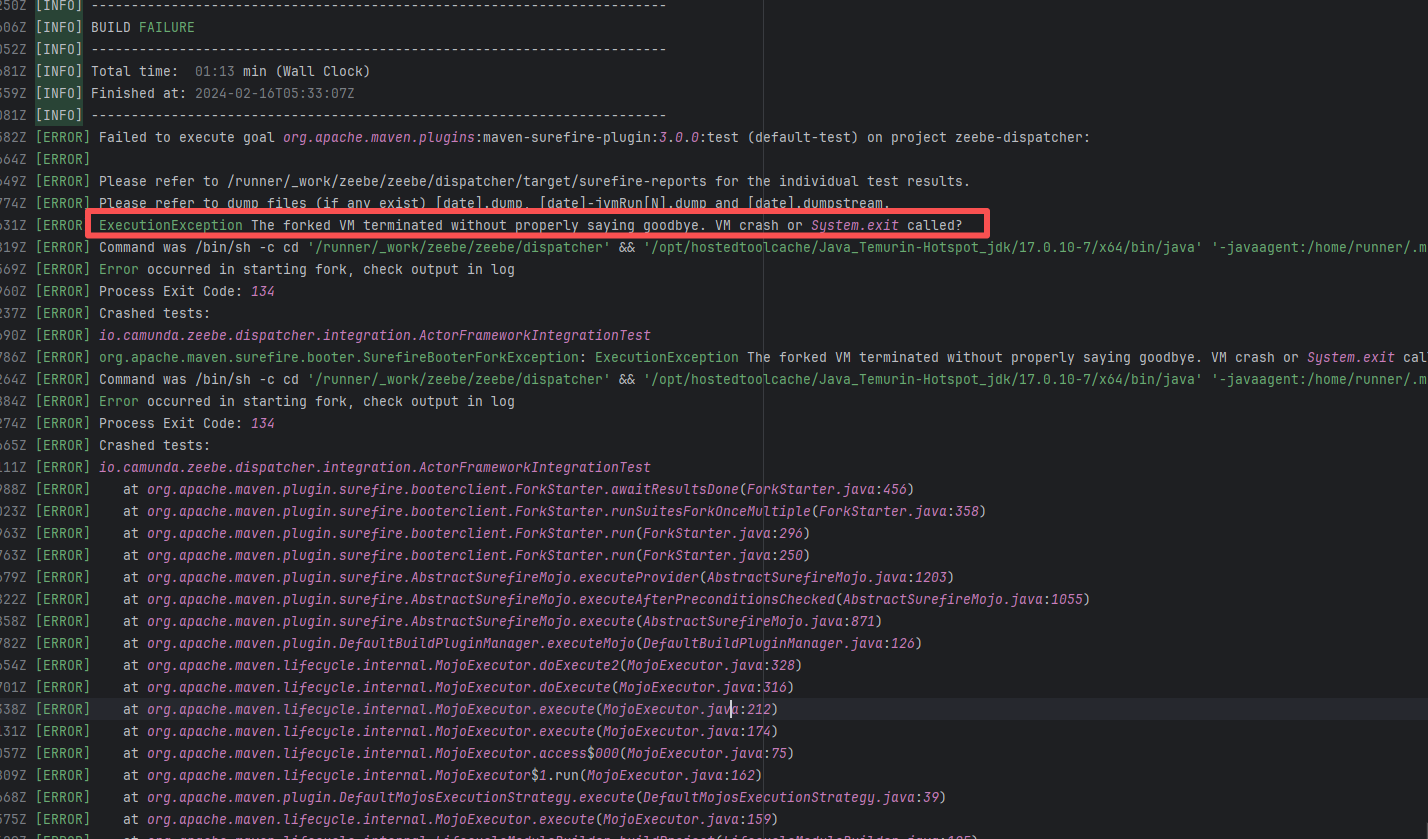

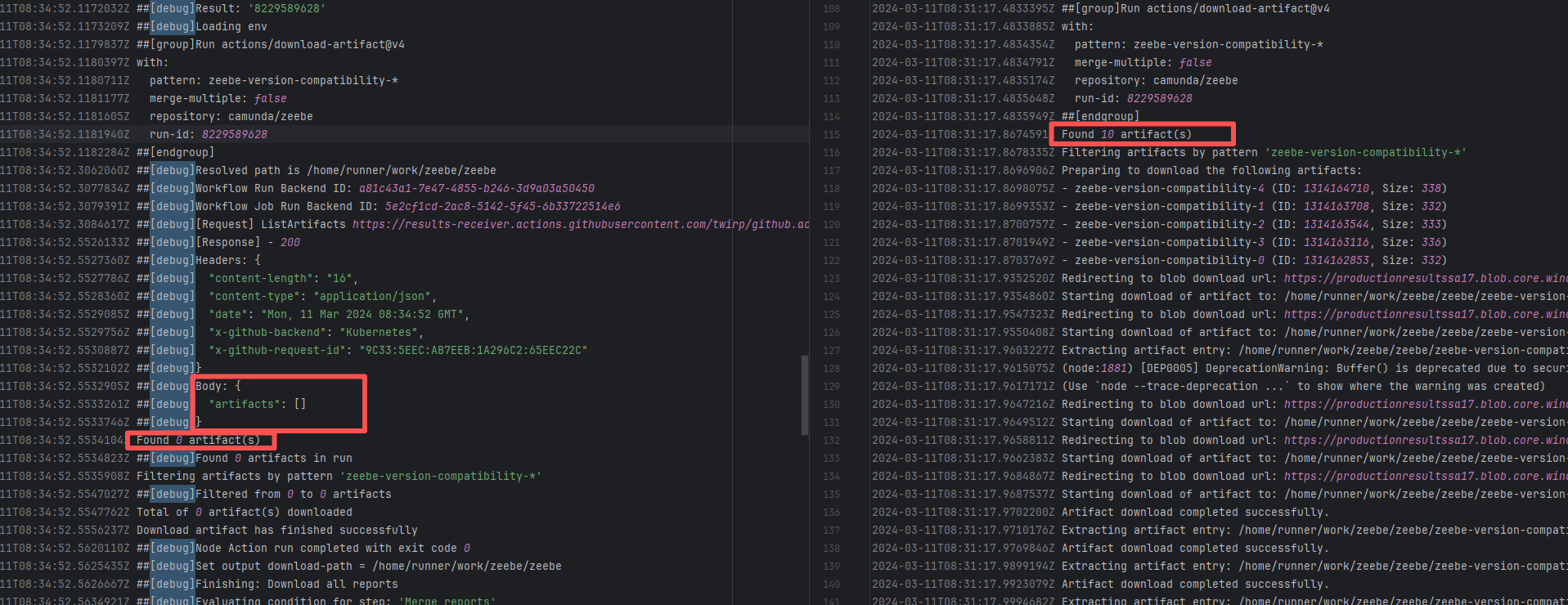

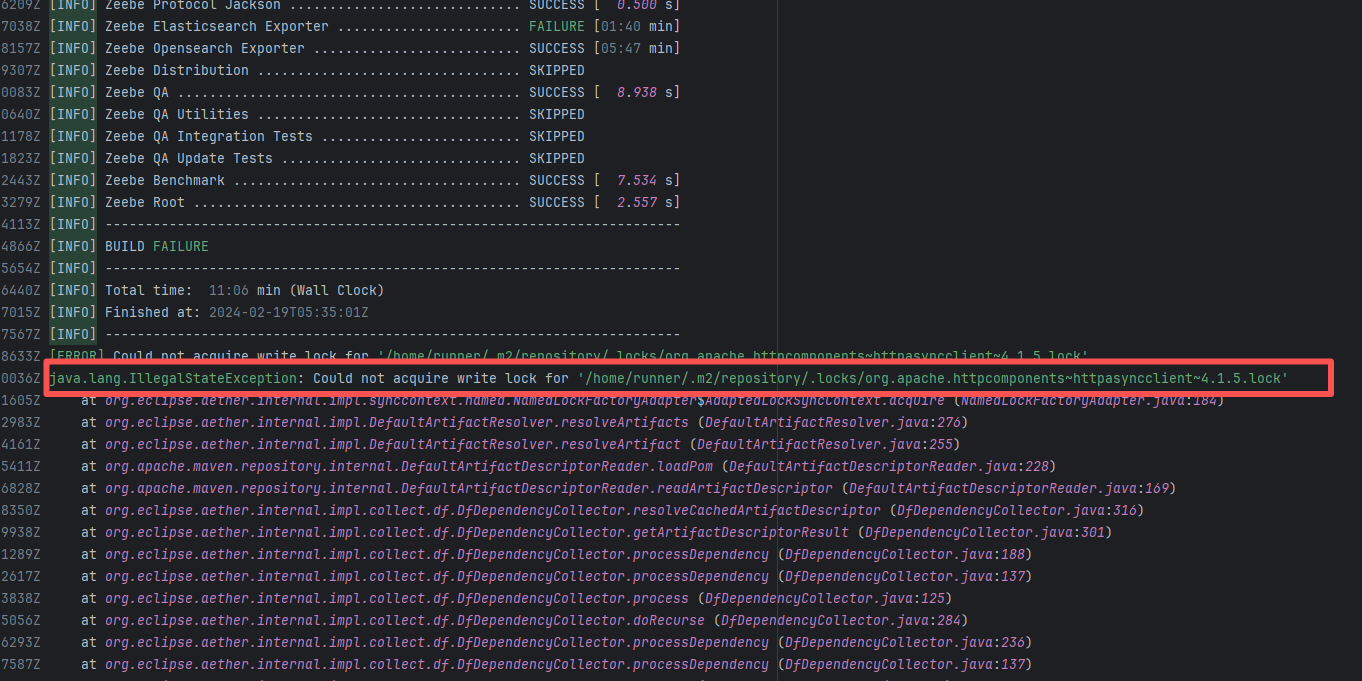

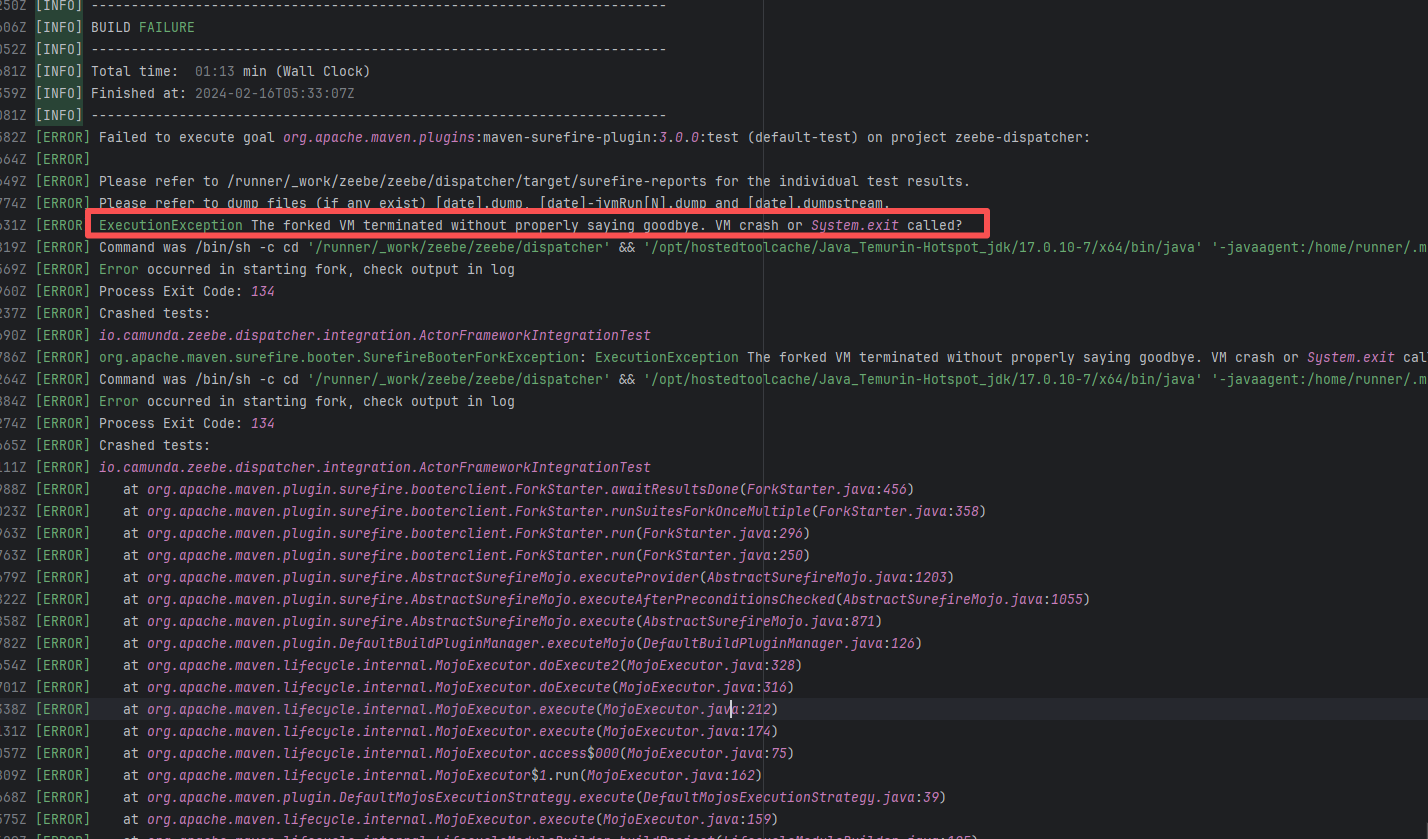

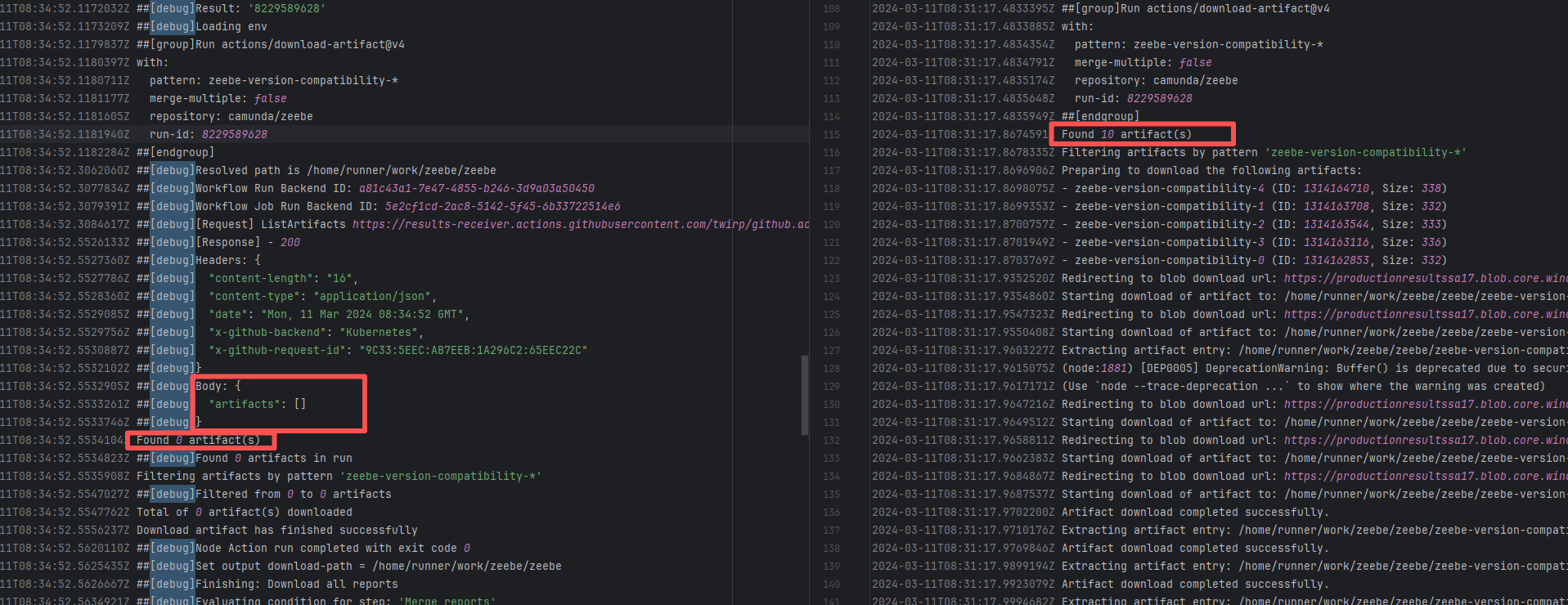

camunda/zeebe |

21713258717 |

Lock Contention |

Error: This error occurs when Maven cannot acquire write permissions for the dependency lock file during the build process. The core reason is that the lock file `/home/runner/.m2/repository/.locks/org.apache.httpcomponents~httpasyncclient~4.1.5.lock` is occupied or inaccessible, preventing Maven from downloading or updating dependencies normally.

Root Cause: Analysis of the error log revealed that the cache fetched both times was identical. Therefore, the error was caused by a lock file conflict due to concurrent execution order. Succeeded after rerun. |

Short-term Fix: Succeeded after Rerun the job.

Long-term Defense: Continuous monitoring; if frequent, investigate root cause in depth. |

|

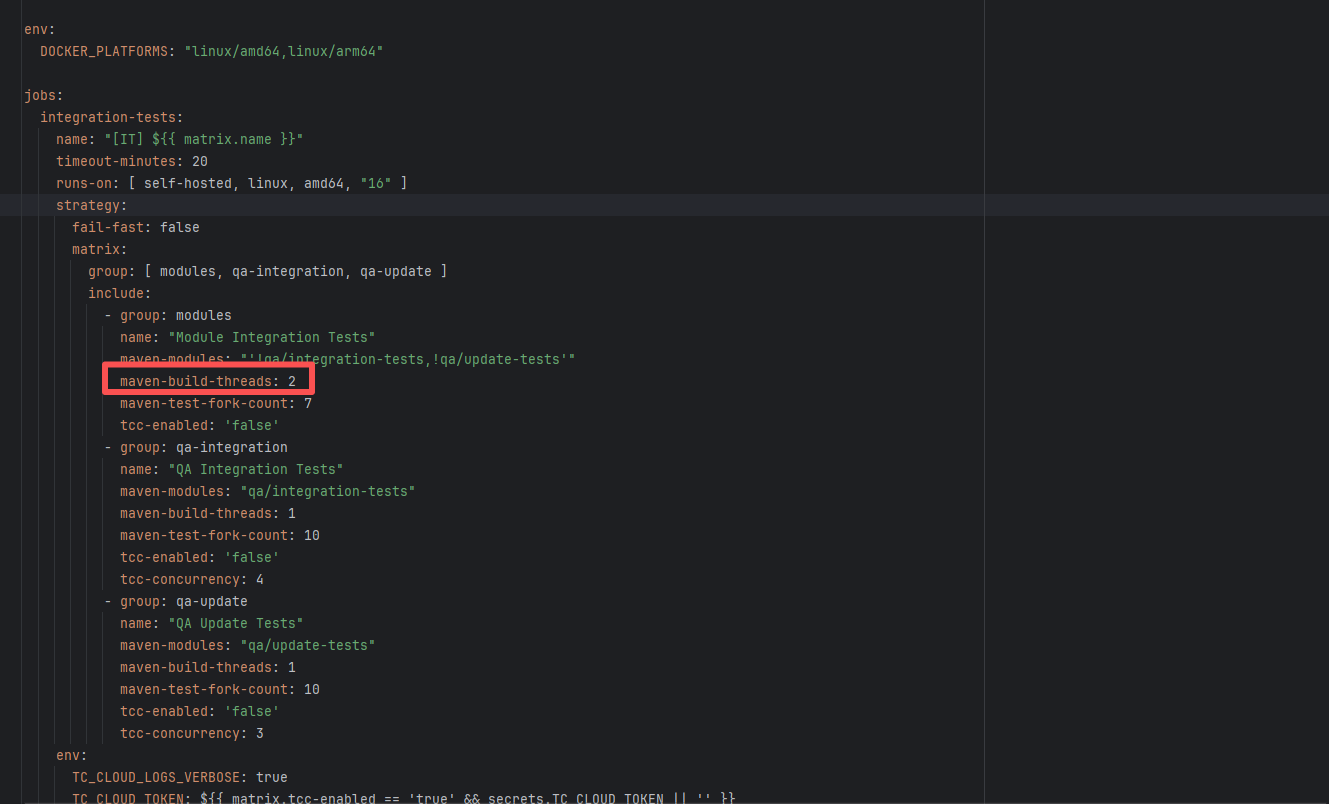

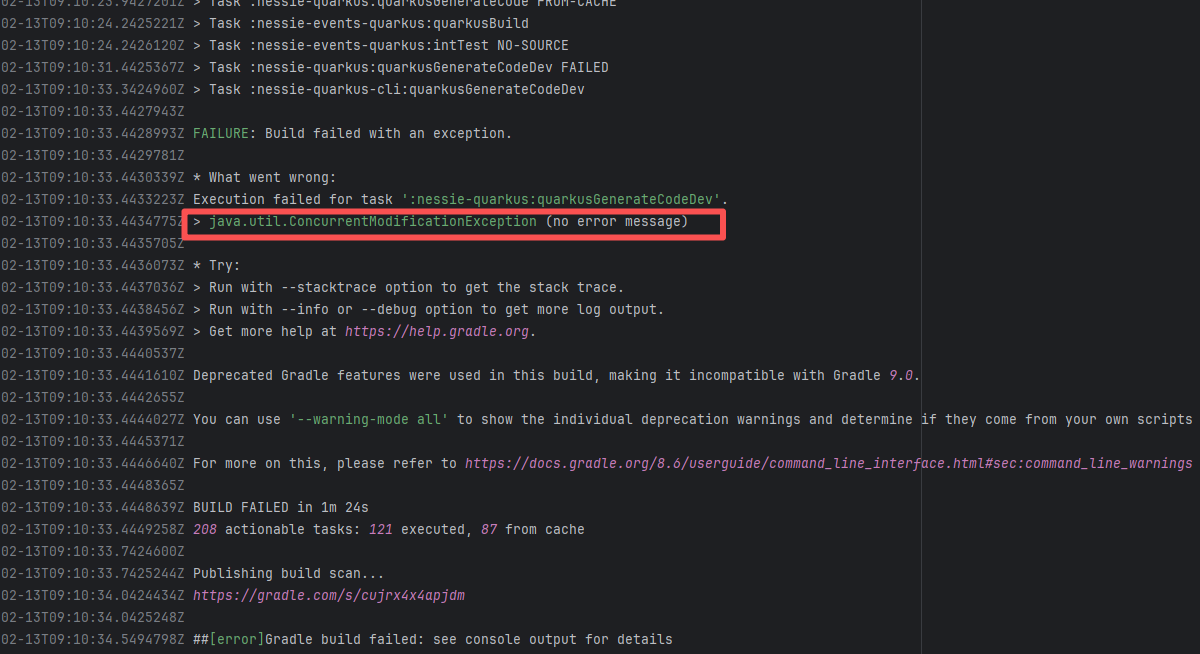

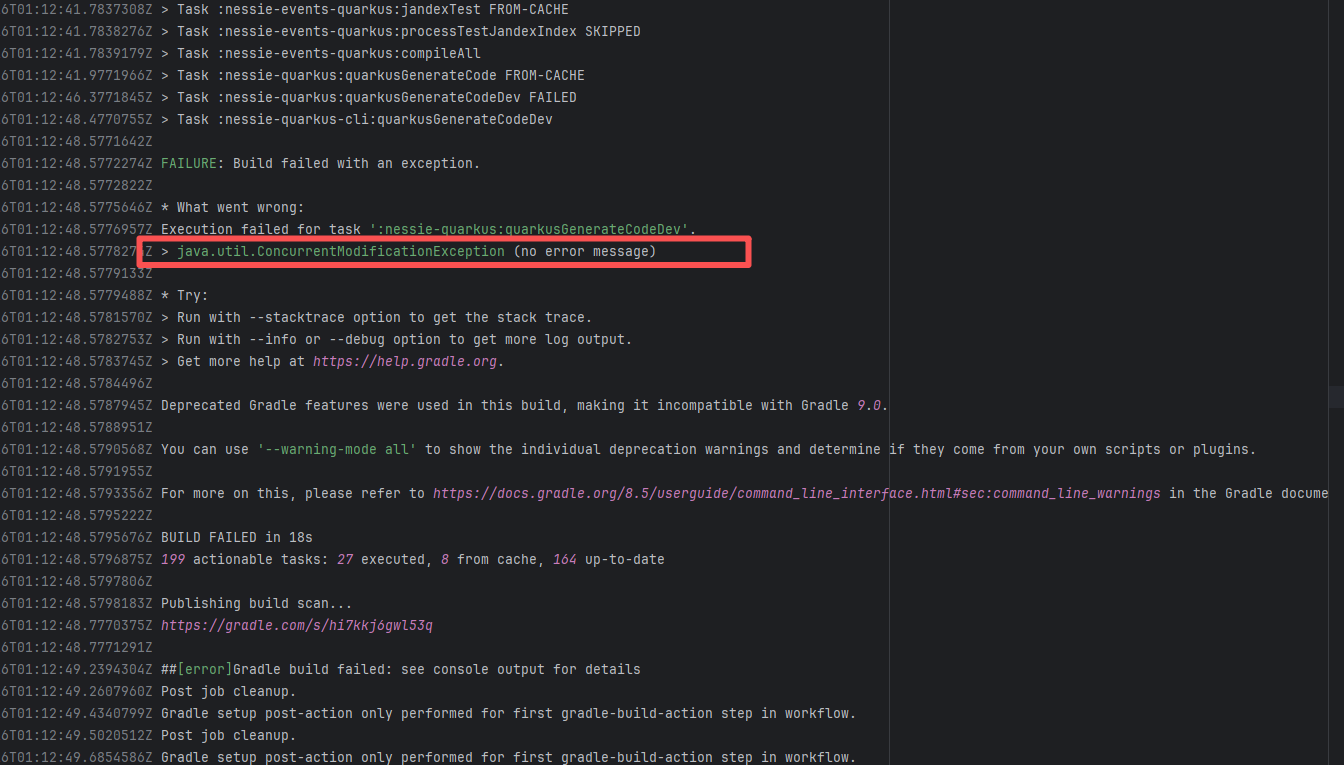

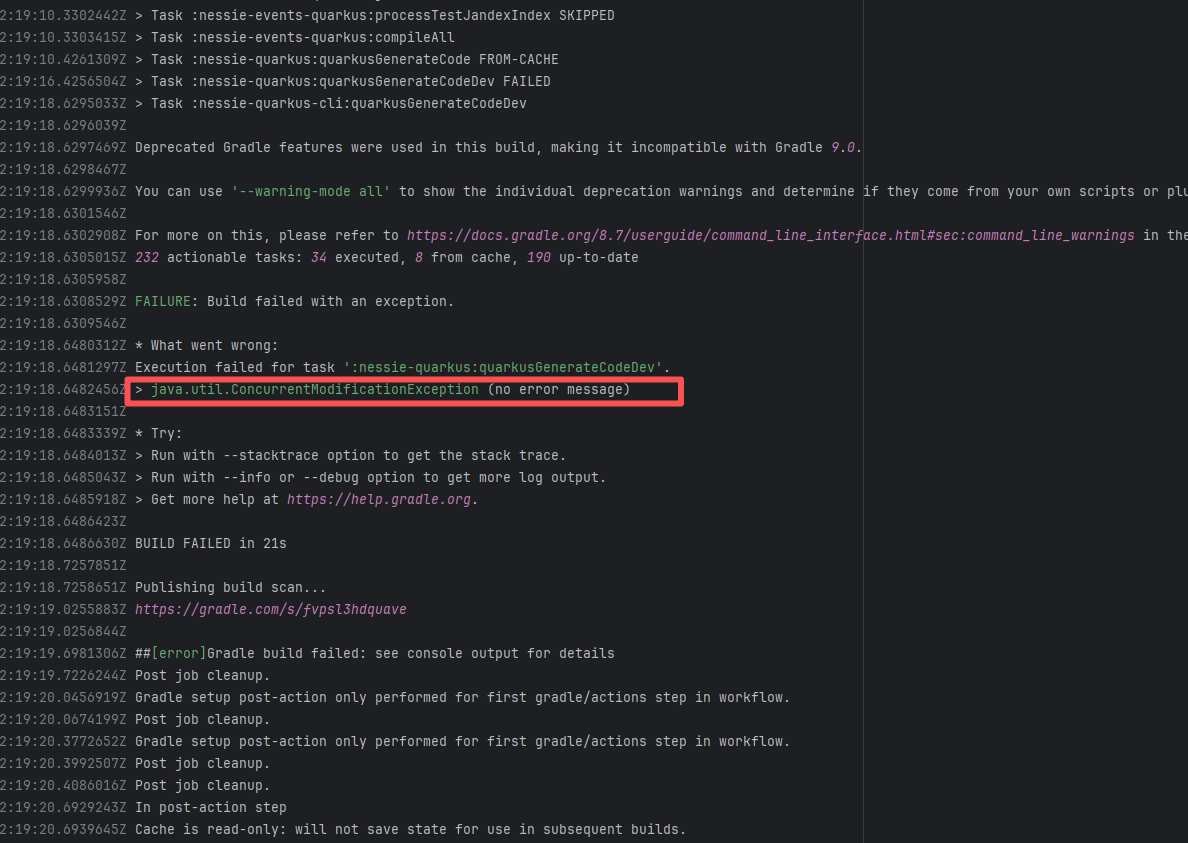

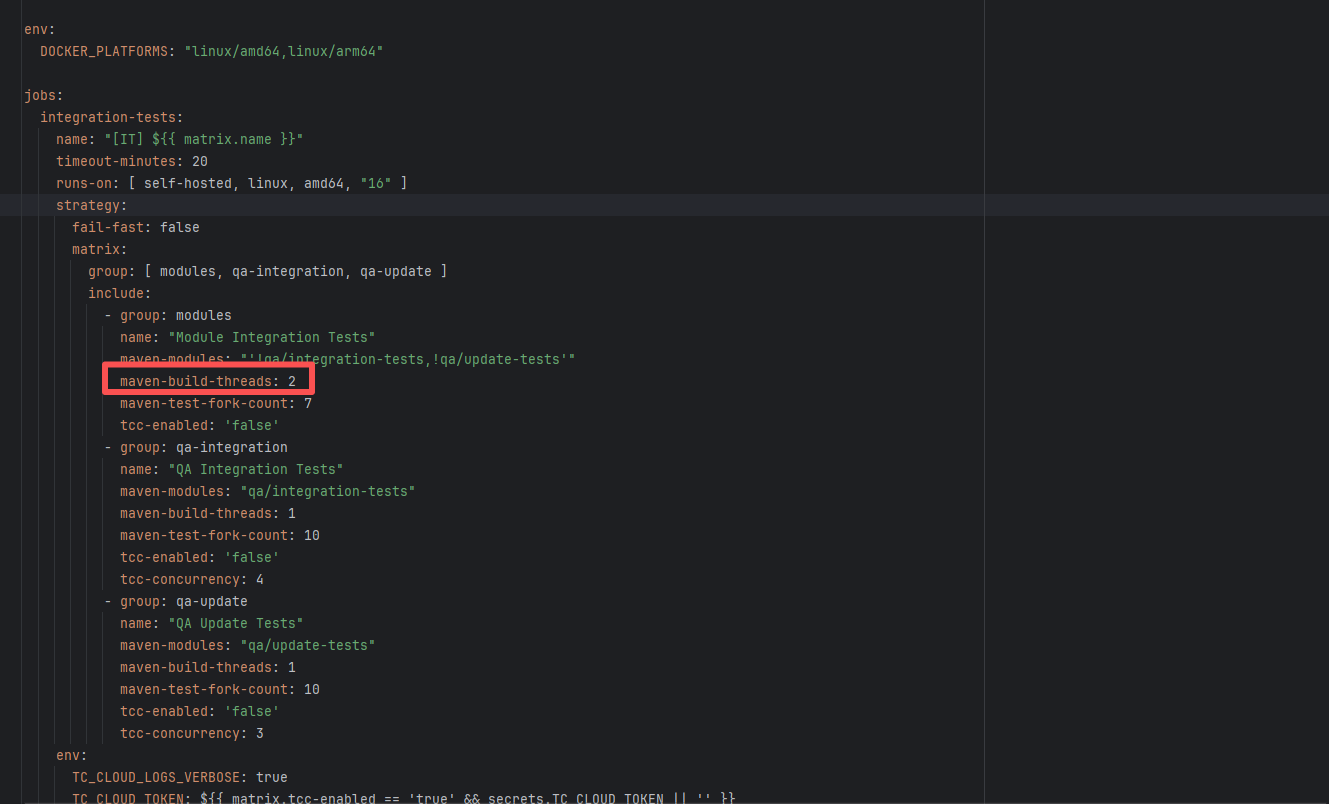

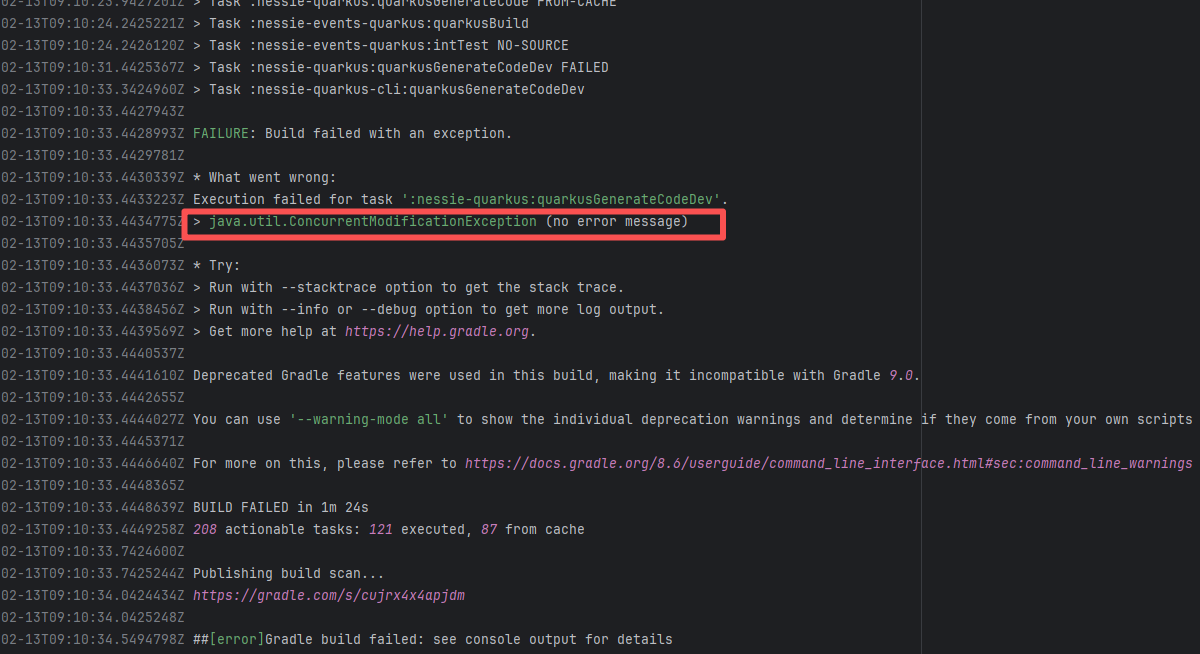

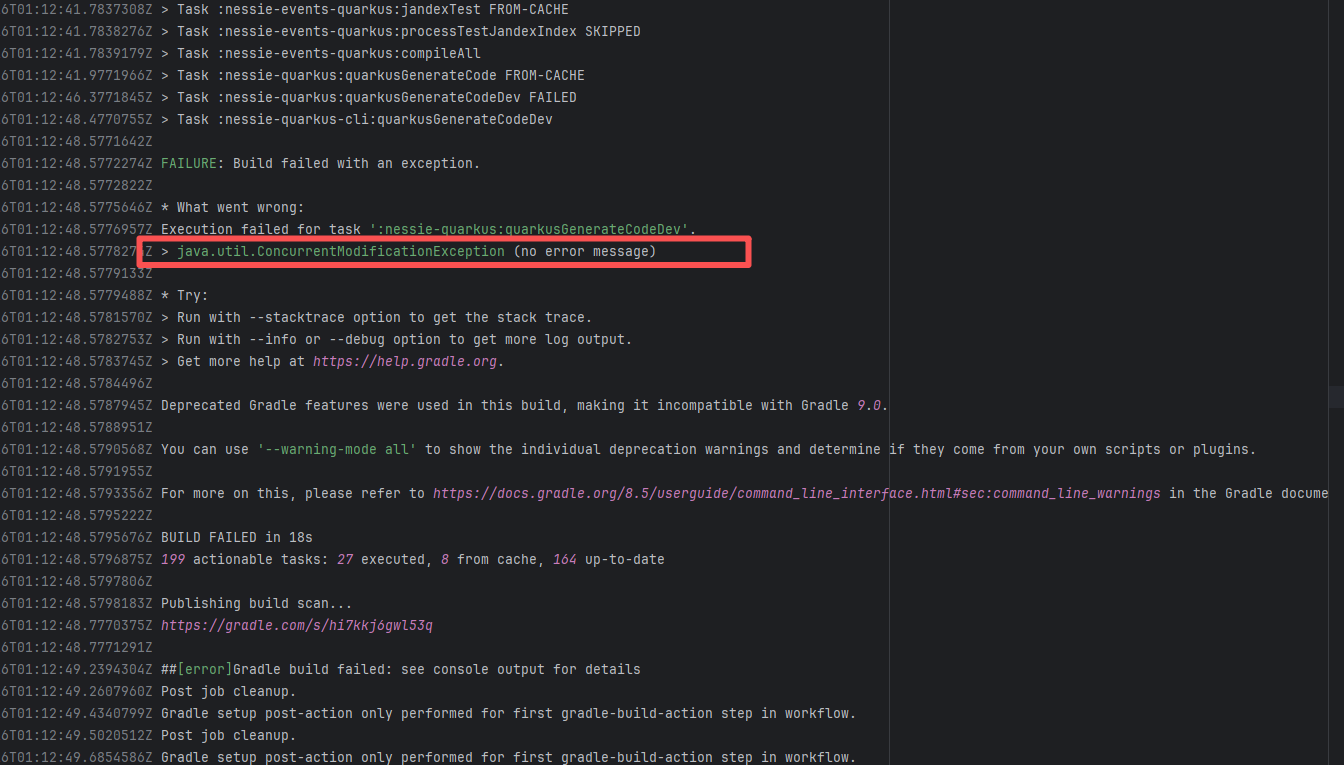

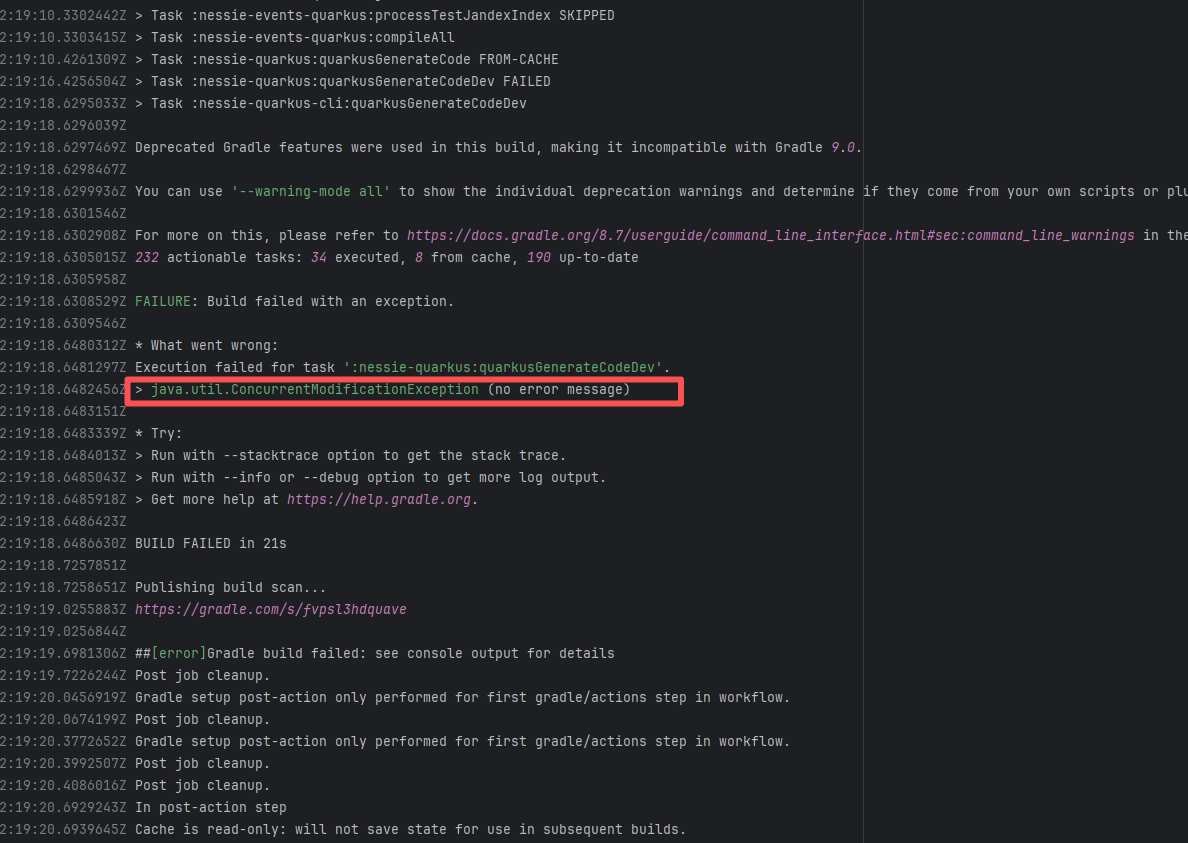

| projectnessie/nessie |

21512445344 |

Concurrent Collection Modification |

Error: `java.util.ConcurrentModificationException` is a concurrent modification exception that is thrown when multiple threads operate on the same collection (such as List, Map, etc.) simultaneously, and at least one thread modifies the collection's structure.

Root Cause: Checking the rerun history revealed that the rerun was successful. Therefore, this error was a lock file conflict caused by concurrent execution order. Succeeded after rerun. |

Short-term Fix: Succeeded after Rerun the job.

Long-term Defense: Continuous monitoring; if frequent, investigate root cause in depth. |

|

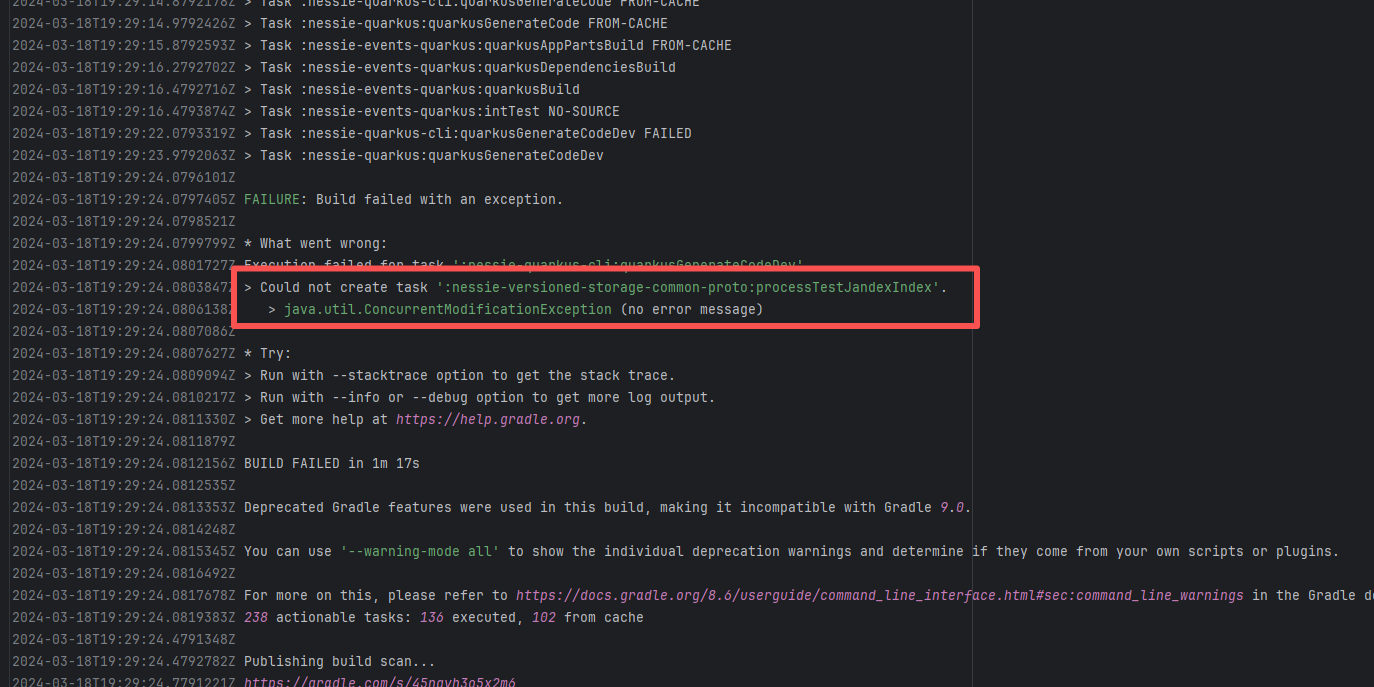

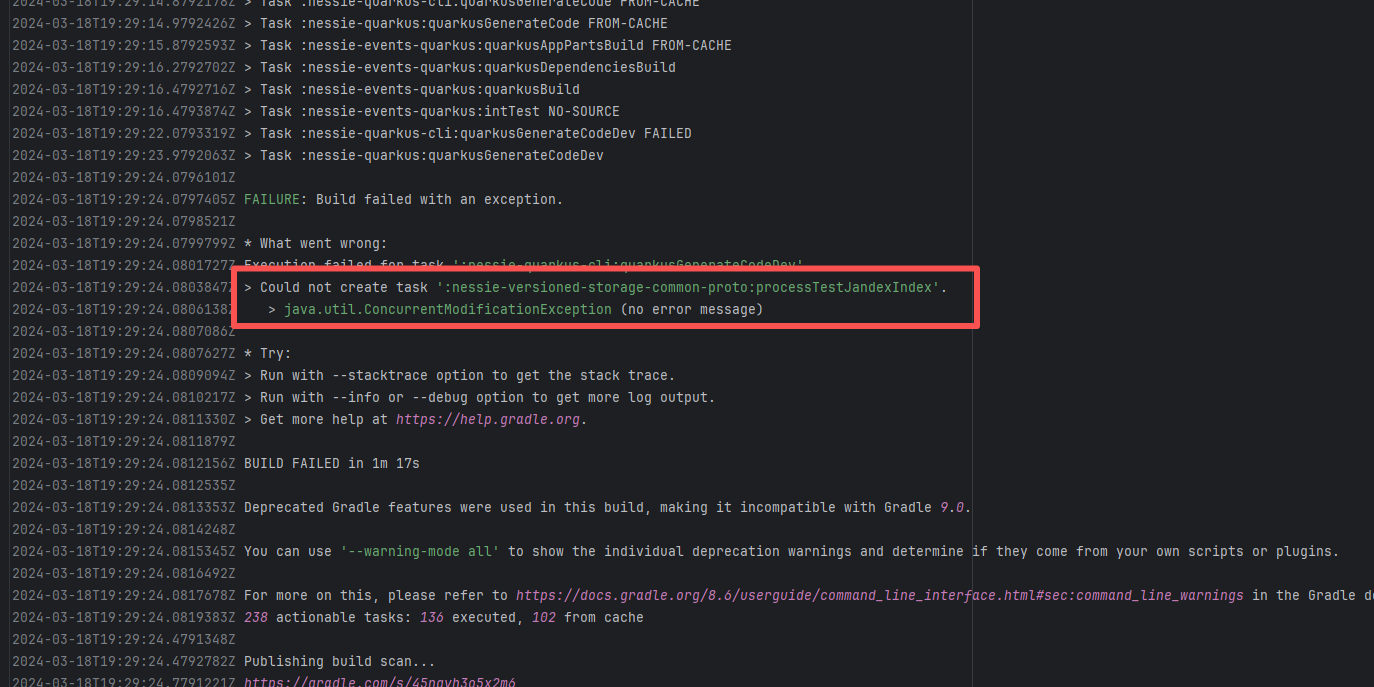

| projectnessie/nessie |

22801785559 |

Concurrent Collection Modification |

Error: `java.util.ConcurrentModificationException` is a concurrent modification exception that is thrown when multiple threads operate on the same collection (such as List, Map, etc.) simultaneously, and at least one thread modifies the collection's structure.

Root Cause: Checking the rerun history revealed that the rerun was successful. Therefore, this error was a lock file conflict caused by concurrent execution order. Succeeded after rerun. |

Short-term Fix: Succeeded after Rerun the job.

Long-term Defense: Continuous monitoring; if frequent, investigate root cause in depth. |

|

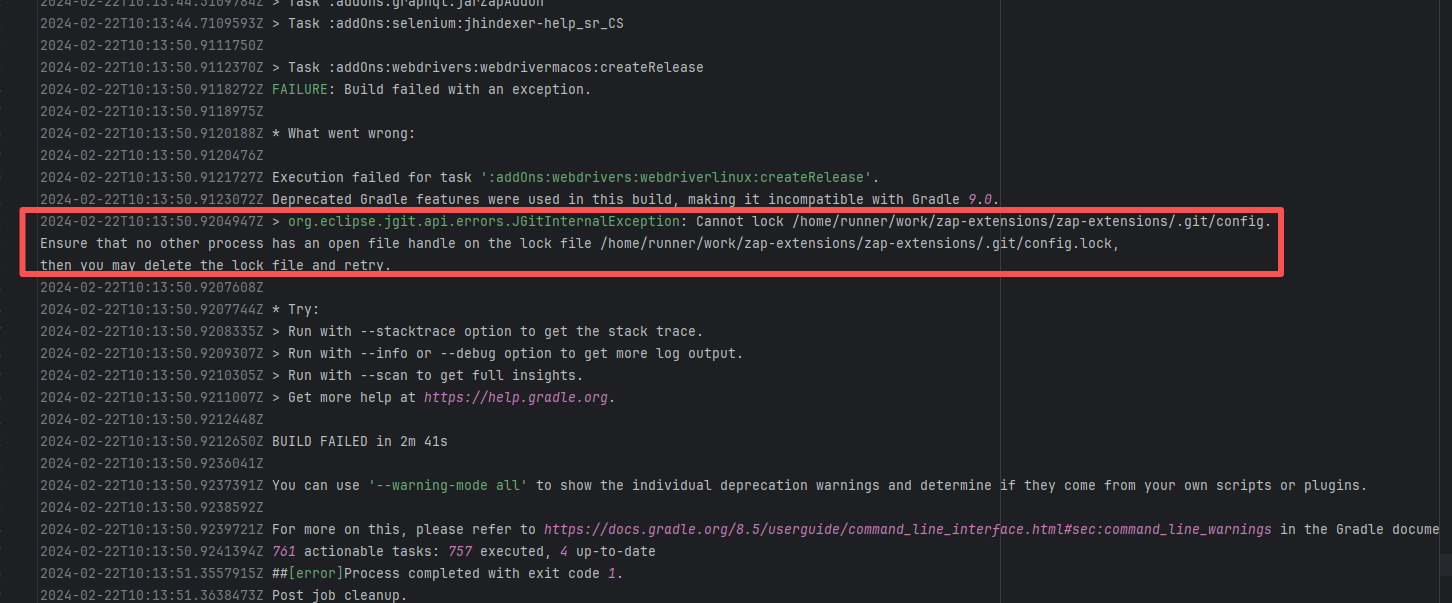

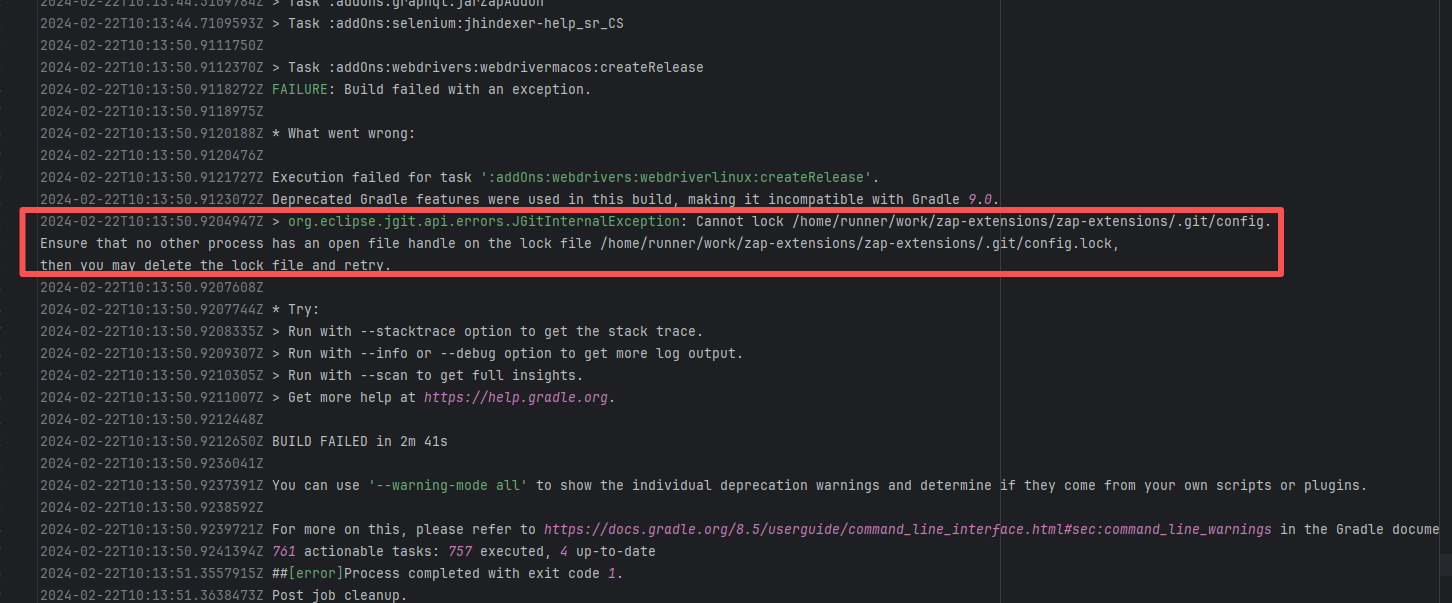

| zaproxy/zap-extensions |

21856627368 |

Lock Contention |

Error: This error is a file lock conflict during a Git operation. Specifically, when JGit (a Java-implemented Git library) attempted to access the repository's `.git/config` file, it found that the corresponding lock file `.git/config.lock` was already occupied by another process, resulting in a failure to acquire the lock.

Root Cause: Checking the rerun history revealed that the rerun was successful. Therefore, this error was a lock file conflict caused by concurrent execution order. Succeeded after rerun. |

Short-term Fix: Succeeded after Rerun the job.

Long-term Defense: Continuous monitoring; if frequent, investigate root cause in depth. |

|

| projectnessie/nessie |

19698692763 |

Concurrent Collection Modification |

Error: `java.util.ConcurrentModificationException` is a concurrent modification exception that is thrown when multiple threads operate on the same collection (such as List, Map, etc.) simultaneously, and at least one thread modifies the collection's structure.

Root Cause: Checking the rerun history revealed that the rerun was successful. Therefore, this error was a lock file conflict caused by concurrent execution order. Succeeded after rerun. |

Short-term Fix: Succeeded after Rerun the job.

Long-term Defense: Continuous monitoring; if frequent, investigate root cause in depth. |

|

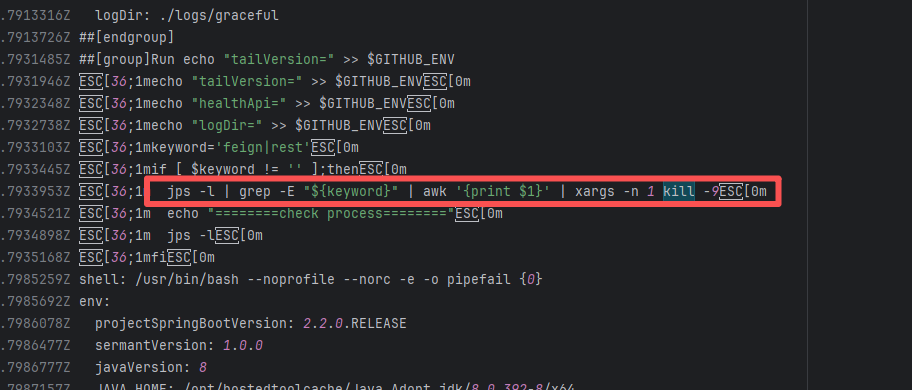

| spring-cloud/spring-cloud-kubernetes |

19962377093 |

Lock Contention |

Error: A timeout error occurred while fetching resources.

Root Cause: Transient connection timeout caused by network fluctuations, not a service provider server error. |

Short-term Fix: Rerun the job; it will automatically succeed after network conditions recover.

Long-term Defense:

1. Configure domestic mirror sources to replace official sources;

2. Add a retry mechanism (e.g., retry plugin);

3. Use CDN to accelerate dependency downloads. |

|

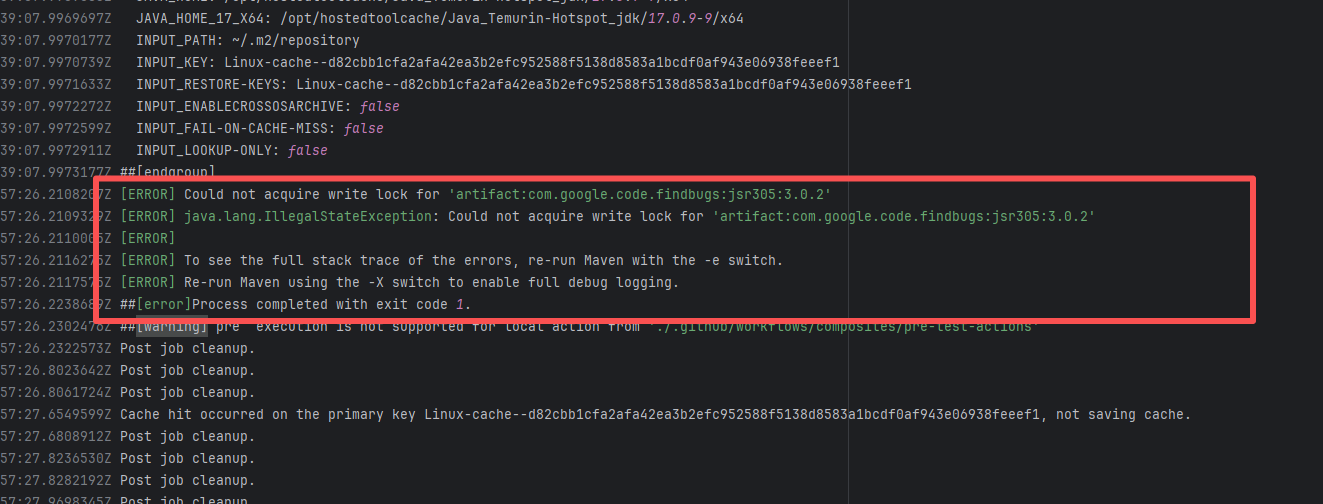

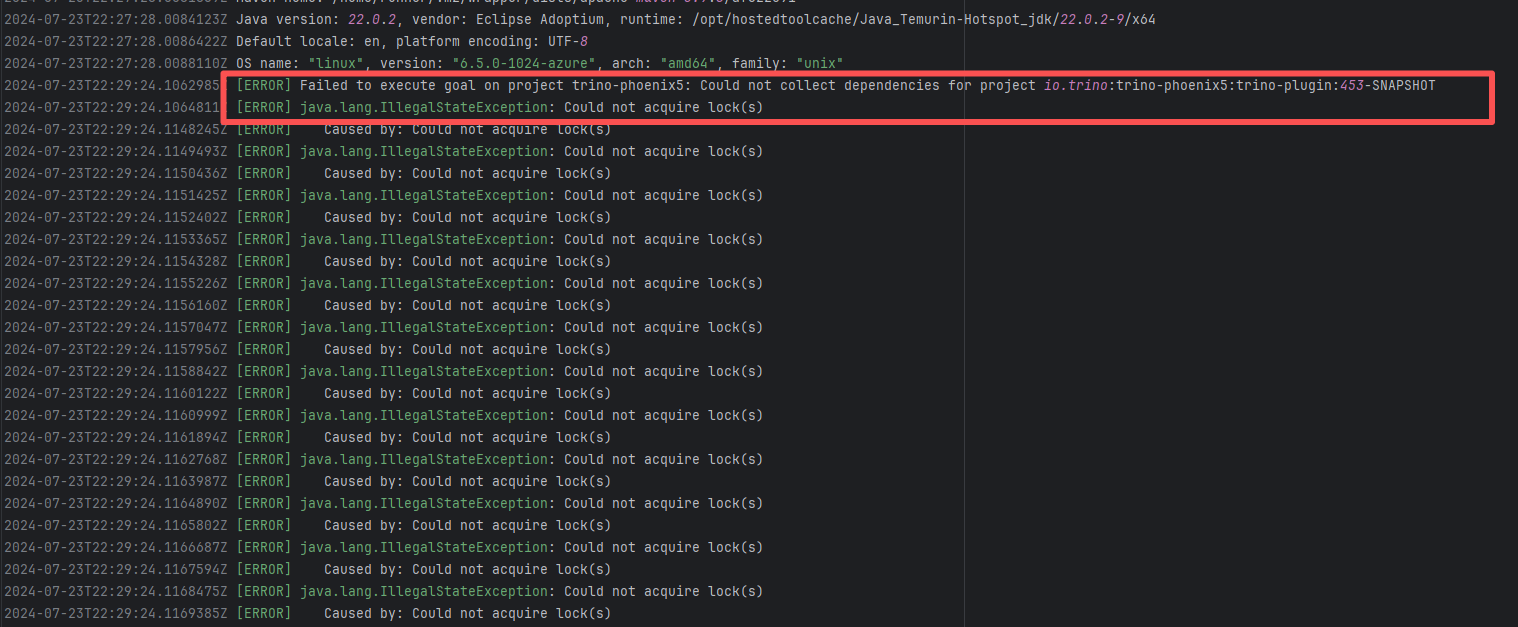

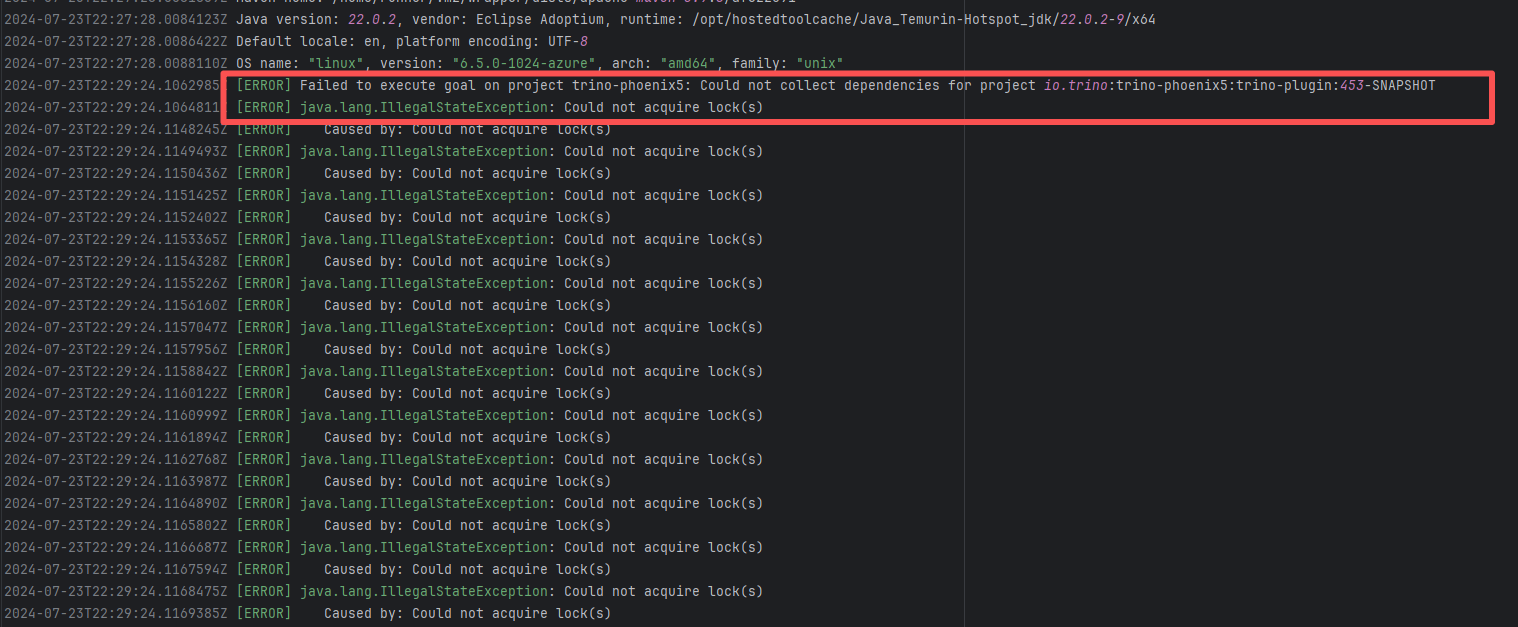

| trinodb/trino |

27830367445 |

Lock Contention |

Error: A timeout error occurred while fetching resources.

Root Cause: Transient connection timeout caused by network fluctuations, not a service provider server error. |

Short-term Fix: Rerun the job; it will automatically succeed after network conditions recover.

Long-term Defense:

1. Configure domestic mirror sources to replace official sources;

2. Add a retry mechanism (e.g., retry plugin);

3. Use CDN to accelerate dependency downloads. |

|

| camunda/zeebe |

22289680309 |

Lock Contention |

Error: This error occurs when Maven cannot acquire write permissions for the dependency lock file during the build process. The core reason is that the lock file `/home/runner/.m2/repository/.locks/org.apache.httpcomponents~httpasyncclient~4.1.5.lock` is occupied or inaccessible, preventing Maven from downloading or updating dependencies normally.

Root Cause: Analysis of the error log revealed that the cache fetched both times was identical. Therefore, the error was caused by a lock file conflict due to concurrent execution order. Succeeded after rerun. |

Short-term Fix: Succeeded after Rerun the job.

Long-term Defense: Continuous monitoring; if frequent, investigate root cause in depth. |

|

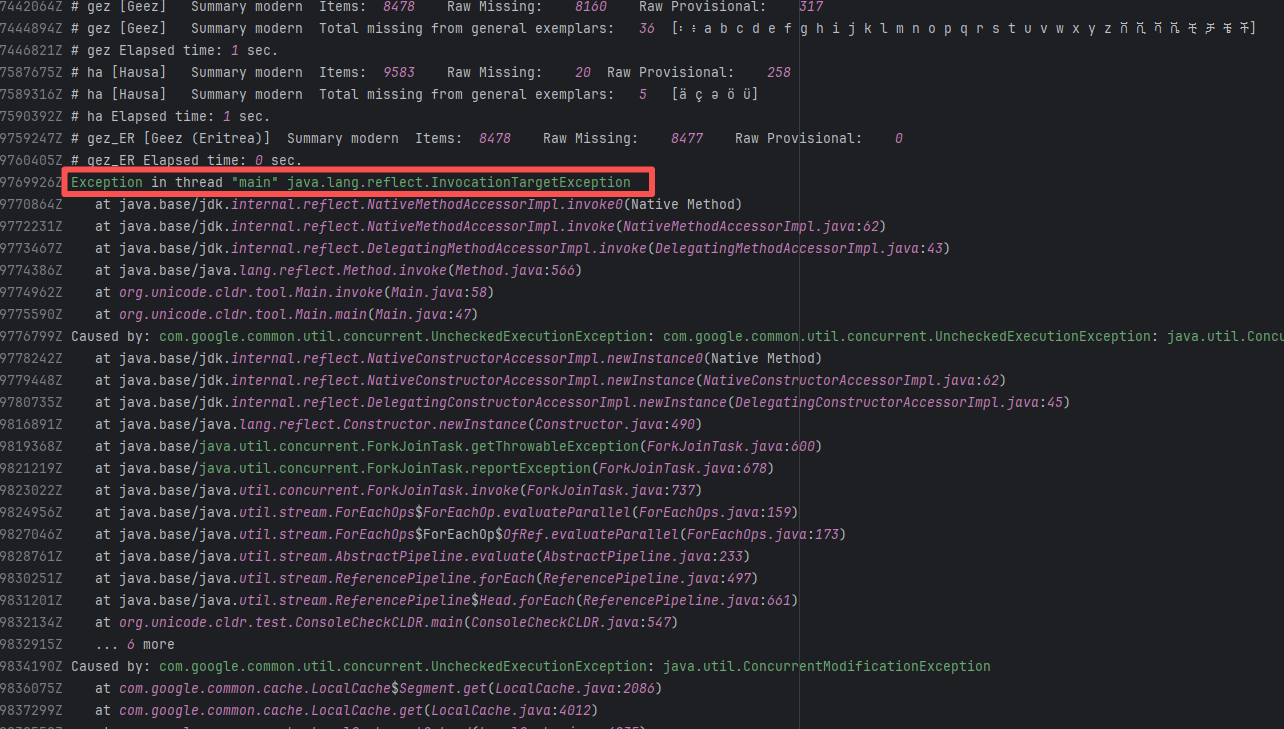

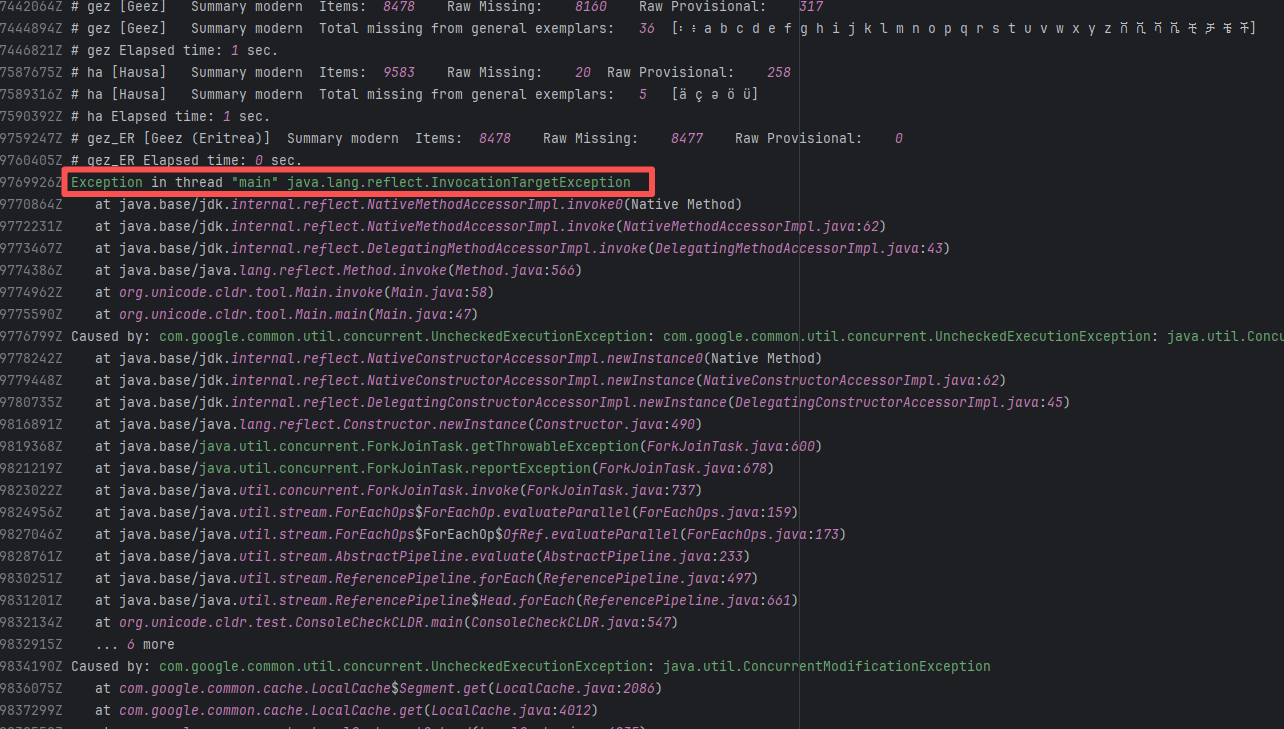

| unicode-org/cldr |

21036909232 |

Concurrent Collection Modification |

Error: `java.util.ConcurrentModificationException` is a concurrent modification exception that is thrown when multiple threads operate on the same collection (such as List, Map, etc.) simultaneously, and at least one thread modifies the collection's structure.

Root Cause: Checking the rerun history revealed that the rerun was successful. Therefore, this error was a lock file conflict caused by concurrent execution order. Succeeded after rerun. |

Short-term Fix: Succeeded after Rerun the job.

Long-term Defense: Continuous monitoring; if frequent, investigate root cause in depth. |

|

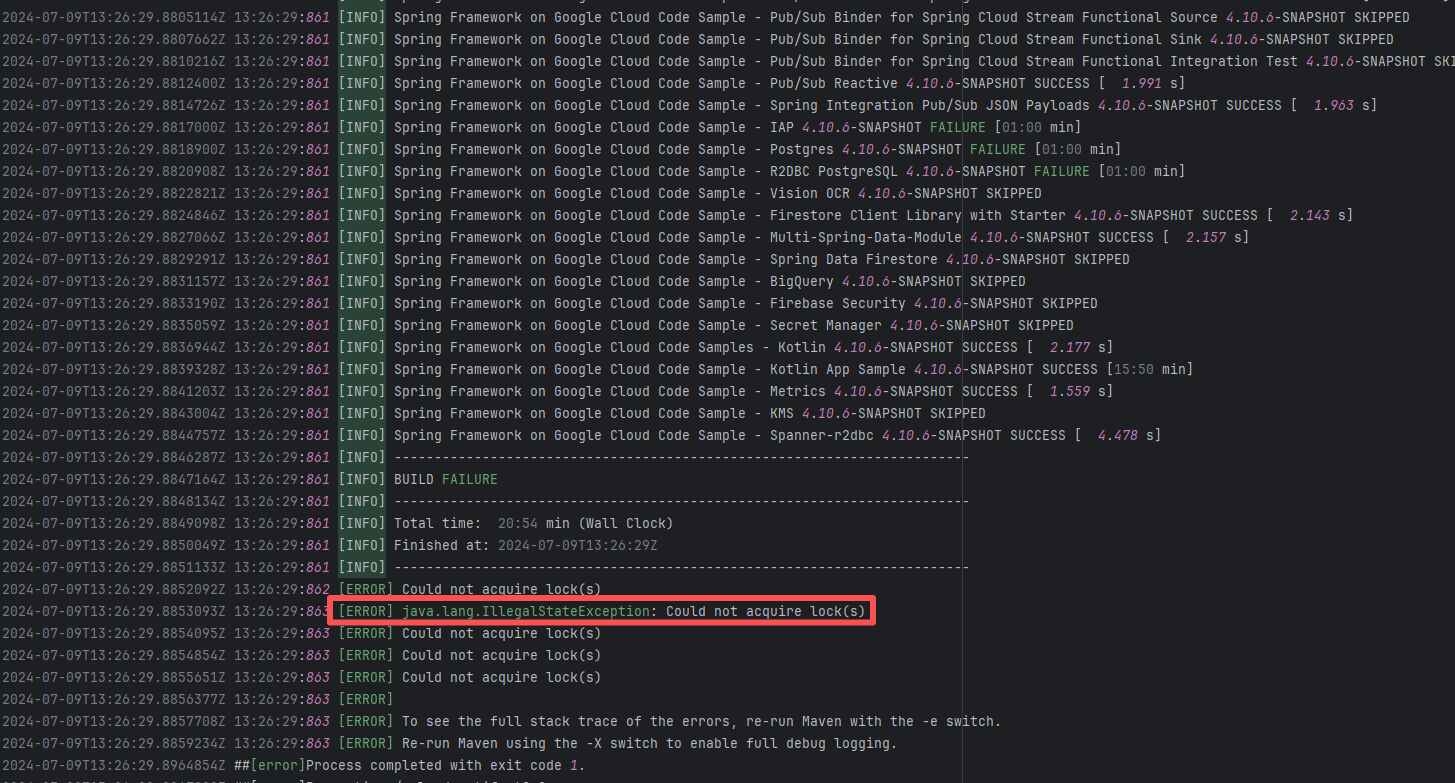

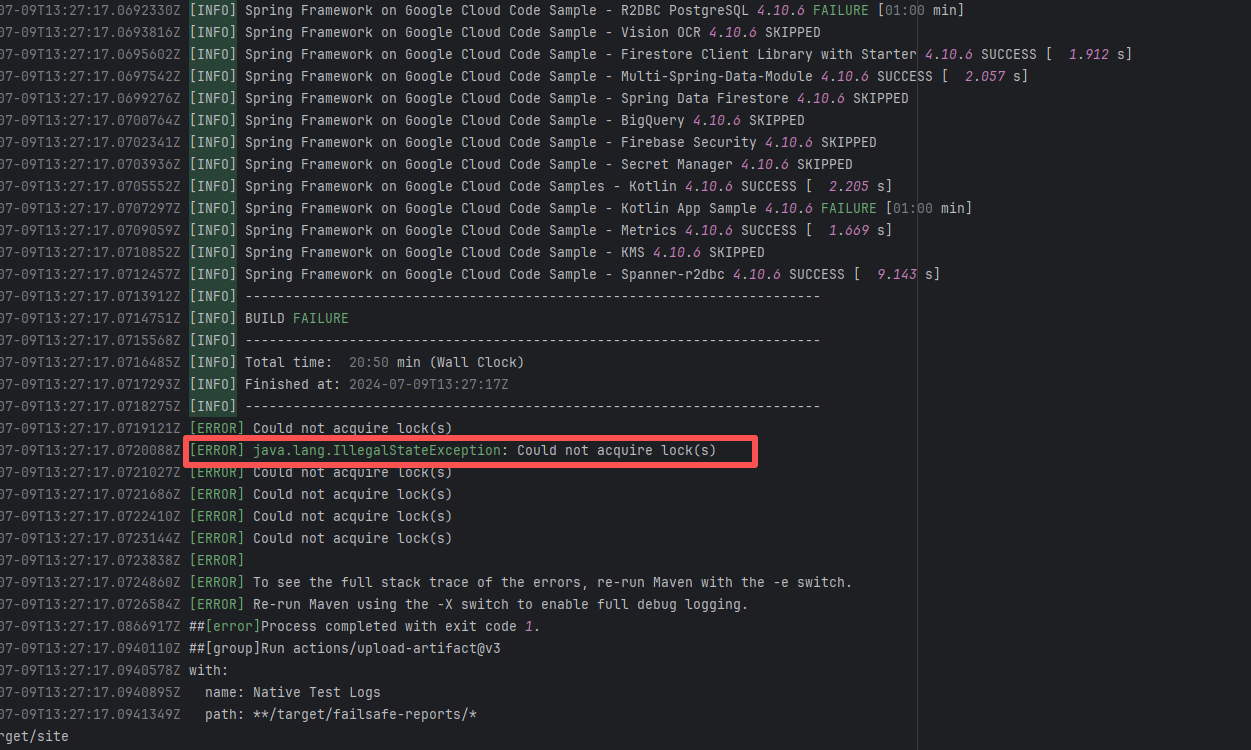

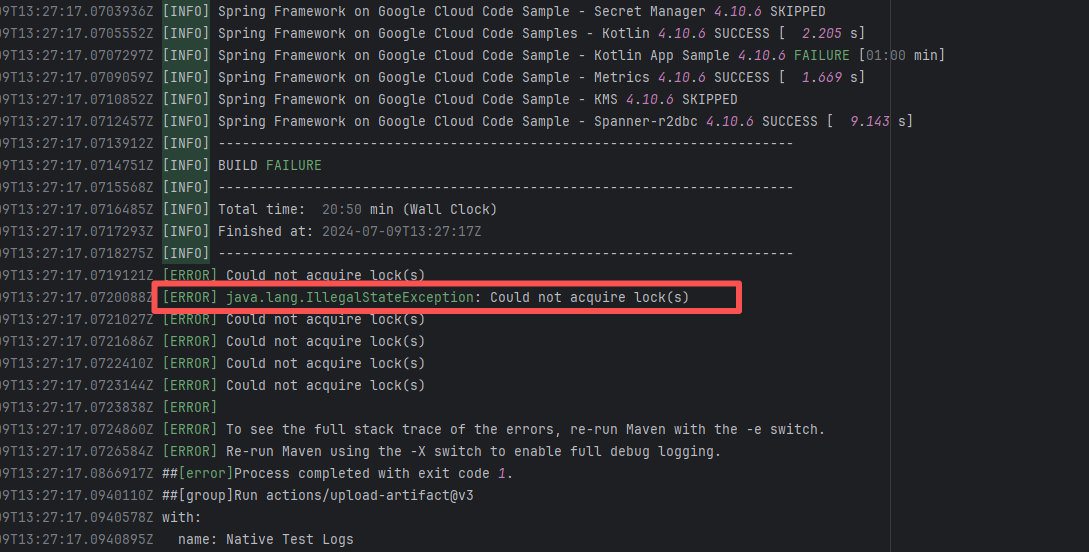

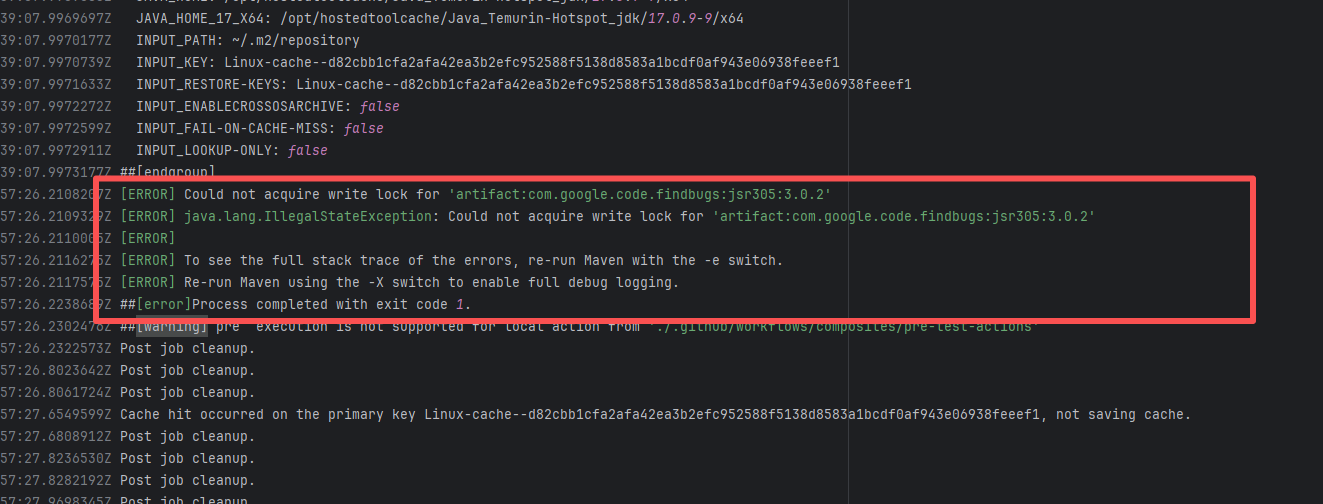

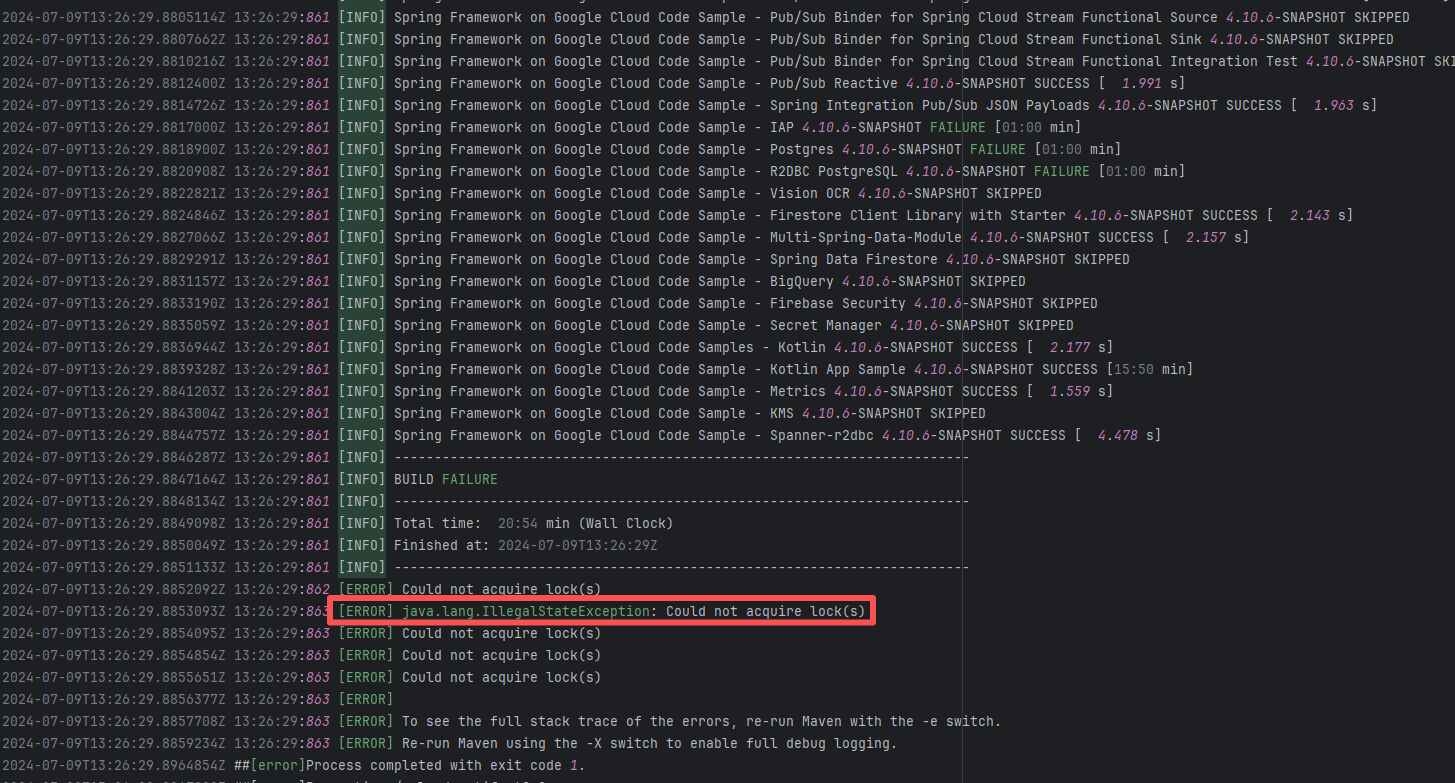

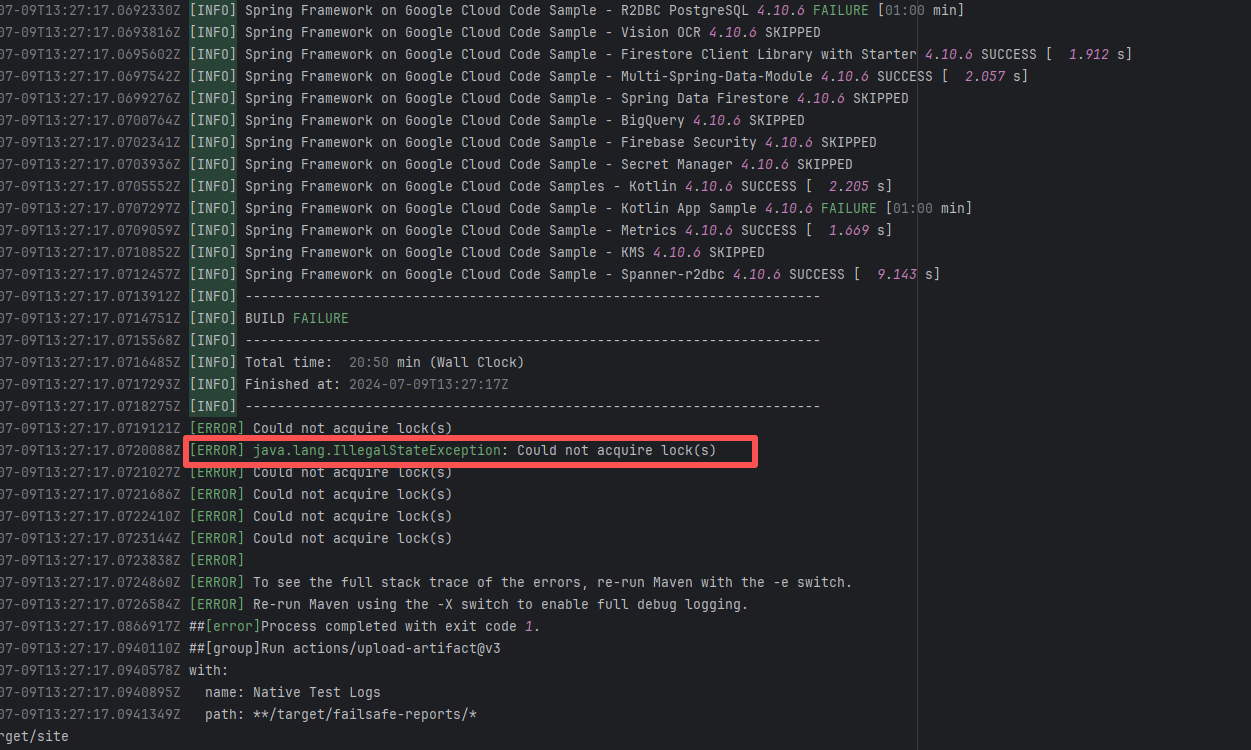

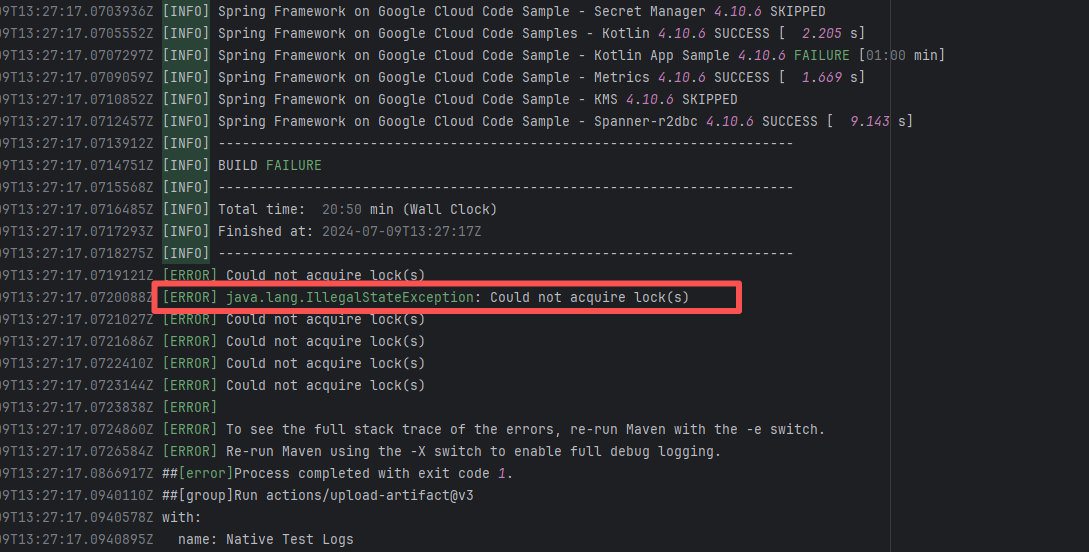

| googlecloudplatform/spring-cloud-gcp |

27217079283 |

Lock Contention |

Error: The log error shows that Maven encountered a `java.lang.IllegalStateException: Could not acquire lock(s)` error during the build process, causing the build to fail. The specific problem occurred during dependency resolution for multiple modules, where Maven could not acquire the required lock files, thus preventing it from continuing execution.

Root Cause: Checking the rerun history revealed that the rerun was successful. Therefore, this error was a lock file conflict caused by concurrent execution order. Succeeded after rerun. |

Short-term Fix: Succeeded after Rerun the job.

Long-term Defense: Continuous monitoring; if frequent, investigate root cause in depth. |

|

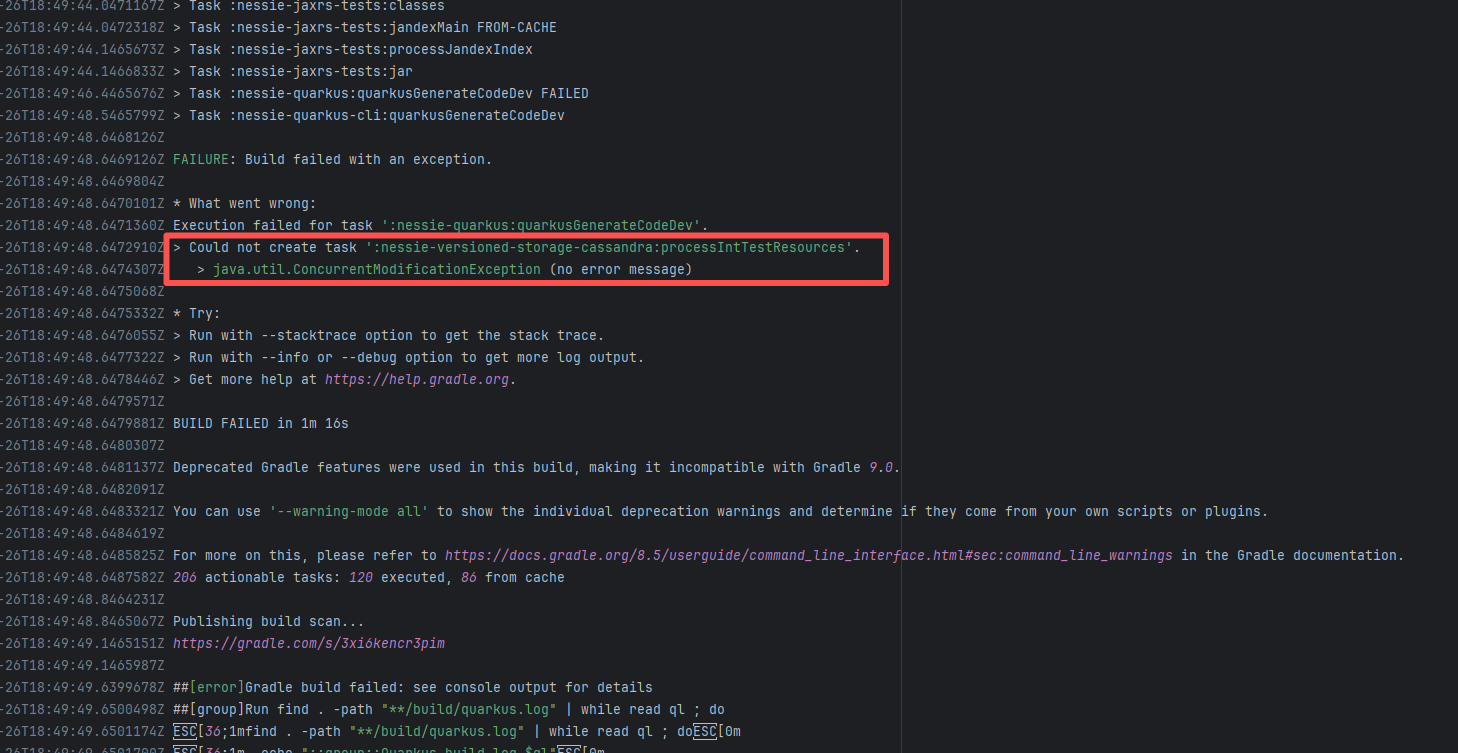

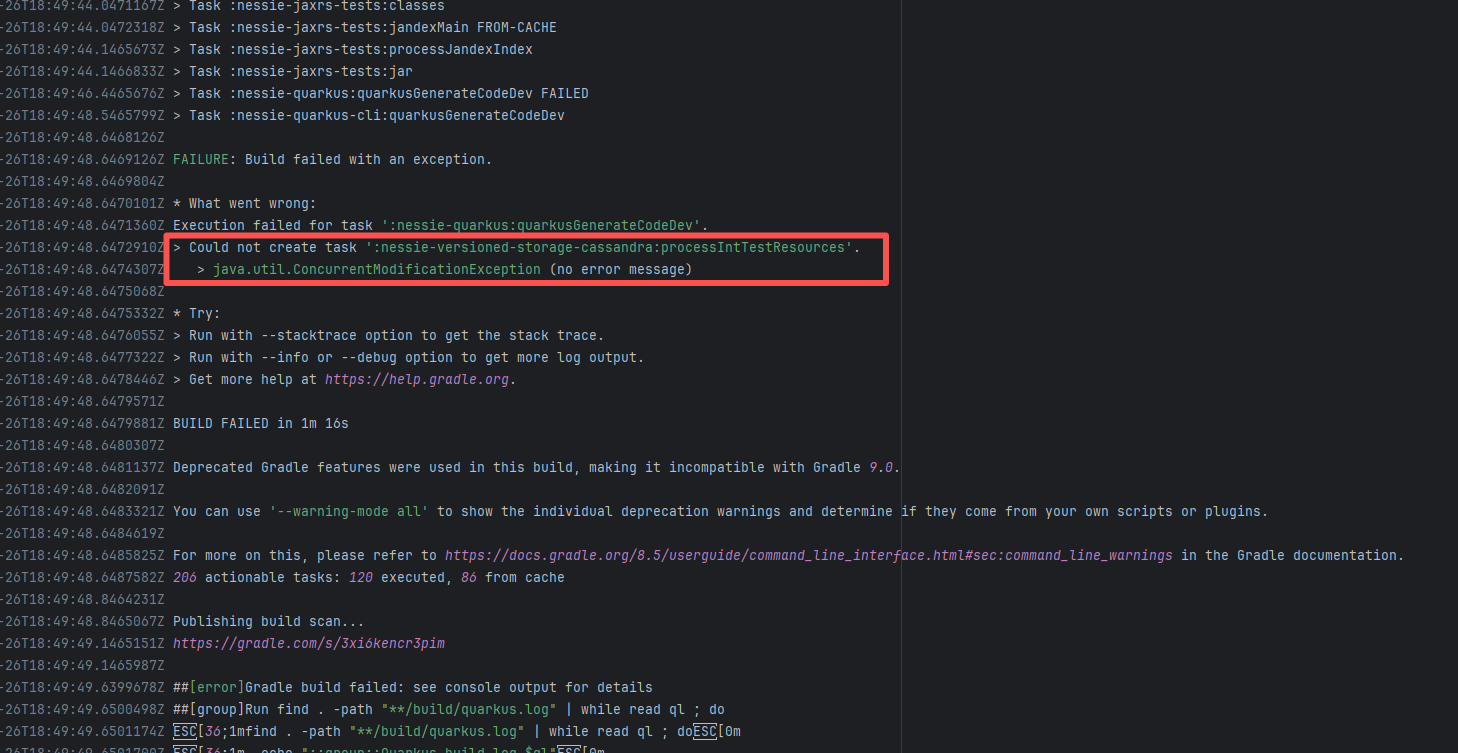

| projectnessie/nessie |

19965217183 |

Concurrent Collection Modification |

Error: `java.util.ConcurrentModificationException` is a concurrent modification exception that is thrown when multiple threads operate on the same collection (such as List, Map, etc.) simultaneously, and at least one thread modifies the collection's structure.

Root Cause: Checking the rerun history revealed that the rerun was successful. Therefore, this error was a lock file conflict caused by concurrent execution order. Succeeded after rerun. |

Short-term Fix: Succeeded after Rerun the job.

Long-term Defense: Continuous monitoring; if frequent, investigate root cause in depth. |

|

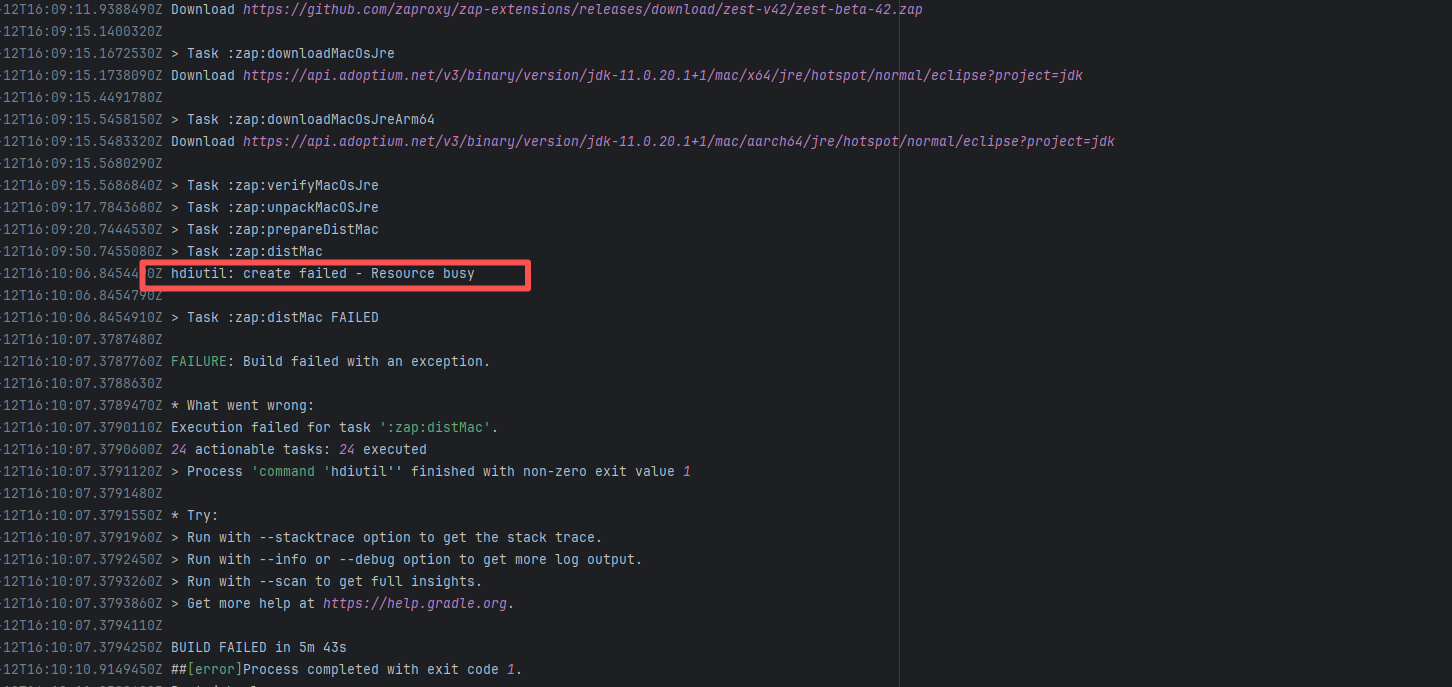

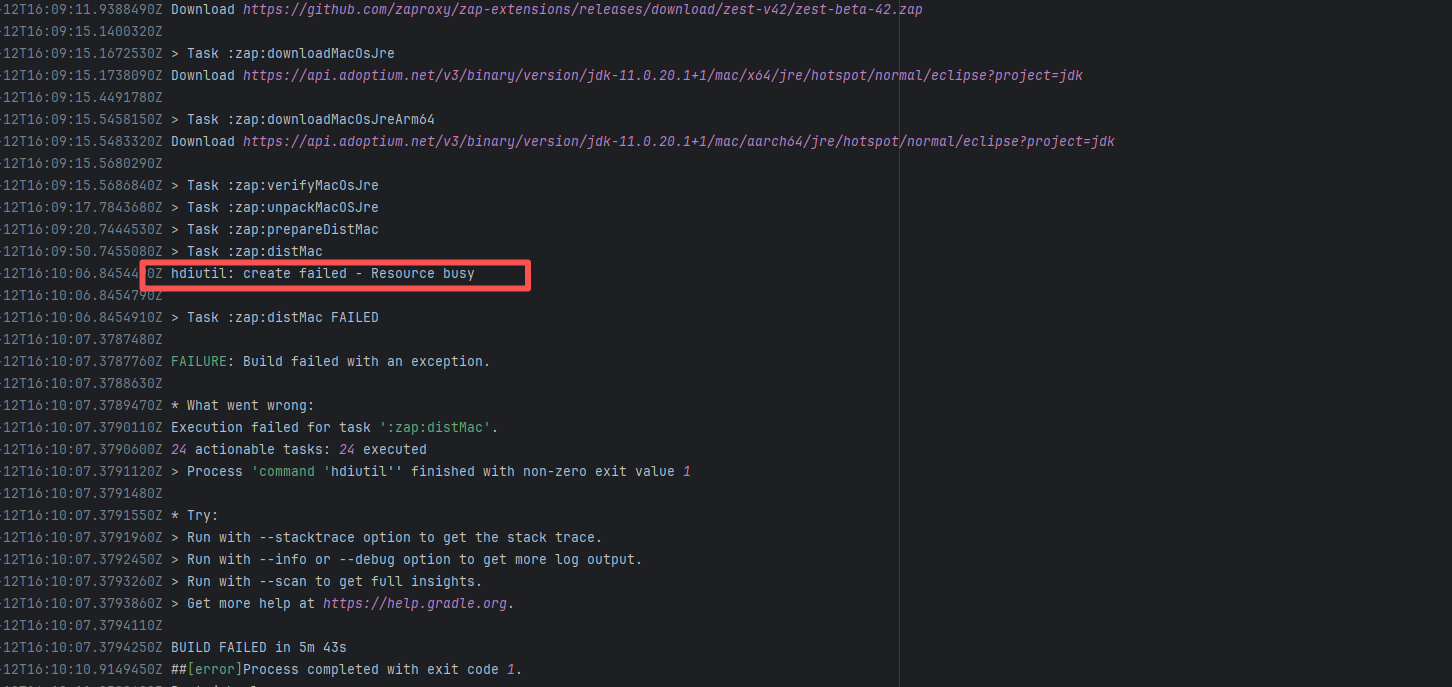

| zaproxy/zaproxy |

17648710792 |

Lock Contention |

Error: The log error shows that `hdiutil` failed when attempting to create a disk image due to 'resource busy': This error usually means that `hdiutil` cannot access a file or device because it is being used by another process. It could be a disk image, file, or other resource.

Root Cause: Checking the rerun history revealed that the rerun was successful. Therefore, this error was a lock file conflict caused by concurrent execution order. Succeeded after rerun. |

Short-term Fix: Succeeded after Rerun the job.

Long-term Defense: Continuous monitoring; if frequent, investigate root cause in depth. |

|

| projectnessie/nessie |

23287539479 |

Concurrent Collection Modification |

Error: `java.util.ConcurrentModificationException` is a concurrent modification exception that is thrown when multiple threads operate on the same collection (such as List, Map, etc.) simultaneously, and at least one thread modifies the collection's structure.

Root Cause: Checking the rerun history revealed that the rerun was successful. Therefore, this error was a lock file conflict caused by concurrent execution order. Succeeded after rerun. |

Short-term Fix: Succeeded after Rerun the job.

Long-term Defense: Continuous monitoring; if frequent, investigate root cause in depth. |

|

| googlecloudplatform/spring-cloud-gcp |

27217135285 |

Lock Contention |

Error: The log error shows that Maven encountered a `java.lang.IllegalStateException: Could not acquire lock(s)` error during the build process, causing the build to fail. The specific problem occurred during dependency resolution for multiple modules, where Maven could not acquire the required lock files, thus preventing it from continuing execution.

Root Cause: Checking the rerun history revealed that the rerun was successful. Therefore, this error was a lock file conflict caused by concurrent execution order. Succeeded after rerun. |

Short-term Fix: Succeeded after Rerun the job.

Long-term Defense: Continuous monitoring; if frequent, investigate root cause in depth. |

|

| camunda/zeebe |

21713167366 |

Lock Contention |

Error: The log error shows that Maven encountered a `java.lang.IllegalStateException: Could not acquire lock(s)` error during the build process, causing the build to fail. The specific problem occurred during dependency resolution for multiple modules, where Maven could not acquire the required lock files, thus preventing it from continuing execution.

Root Cause: Checking the rerun history revealed that the rerun was successful. Therefore, this error was a lock file conflict caused by concurrent execution order. Succeeded after rerun. |

Short-term Fix: Succeeded after Rerun the job.

Long-term Defense: Continuous monitoring; if frequent, investigate root cause in depth. |

|

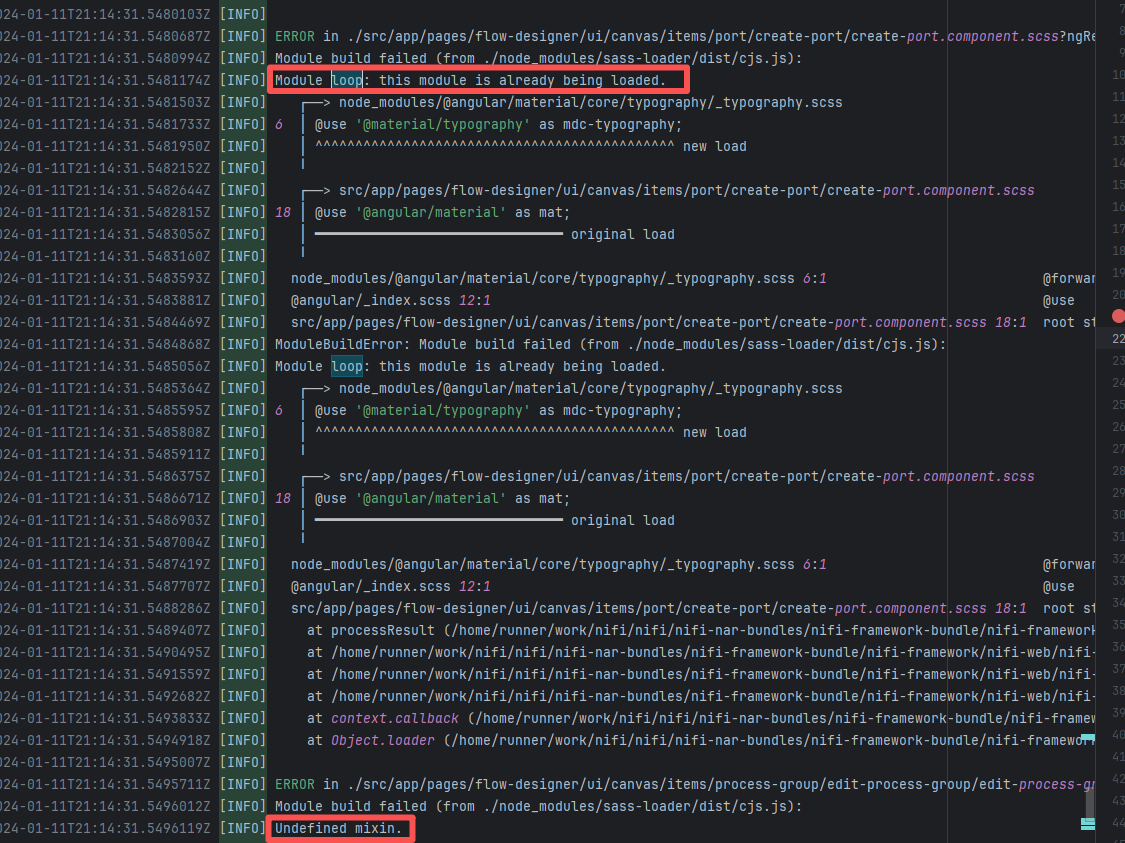

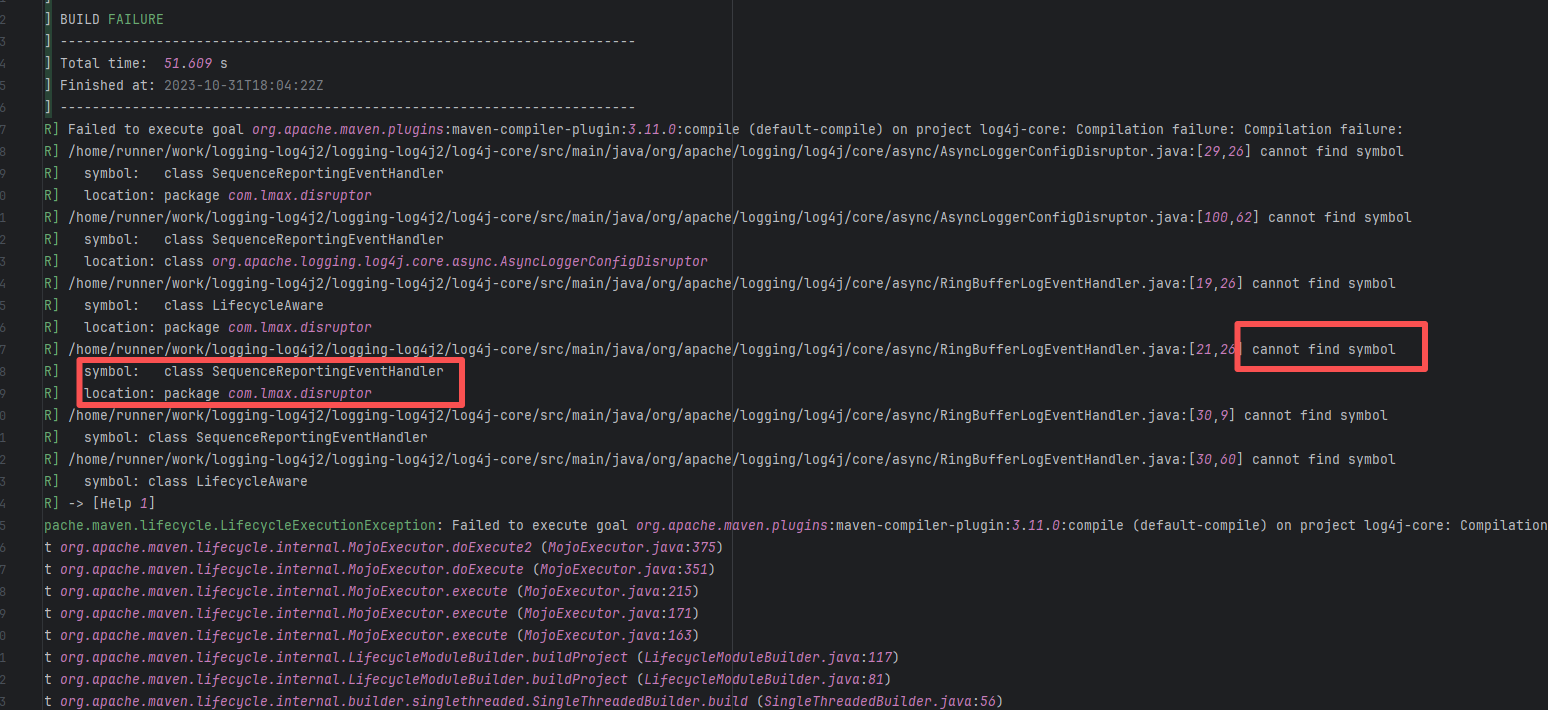

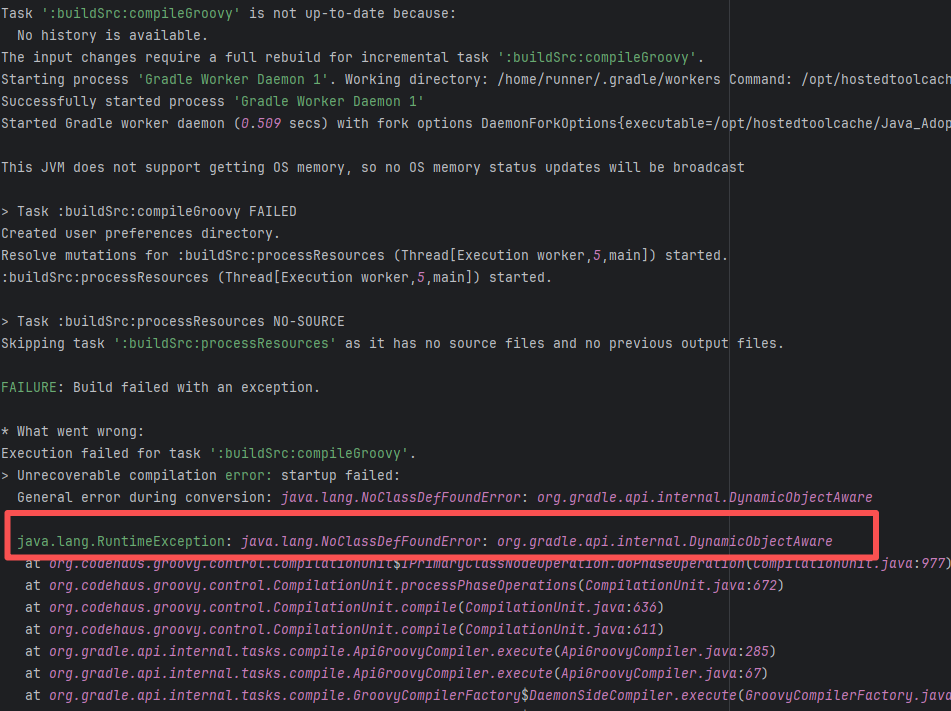

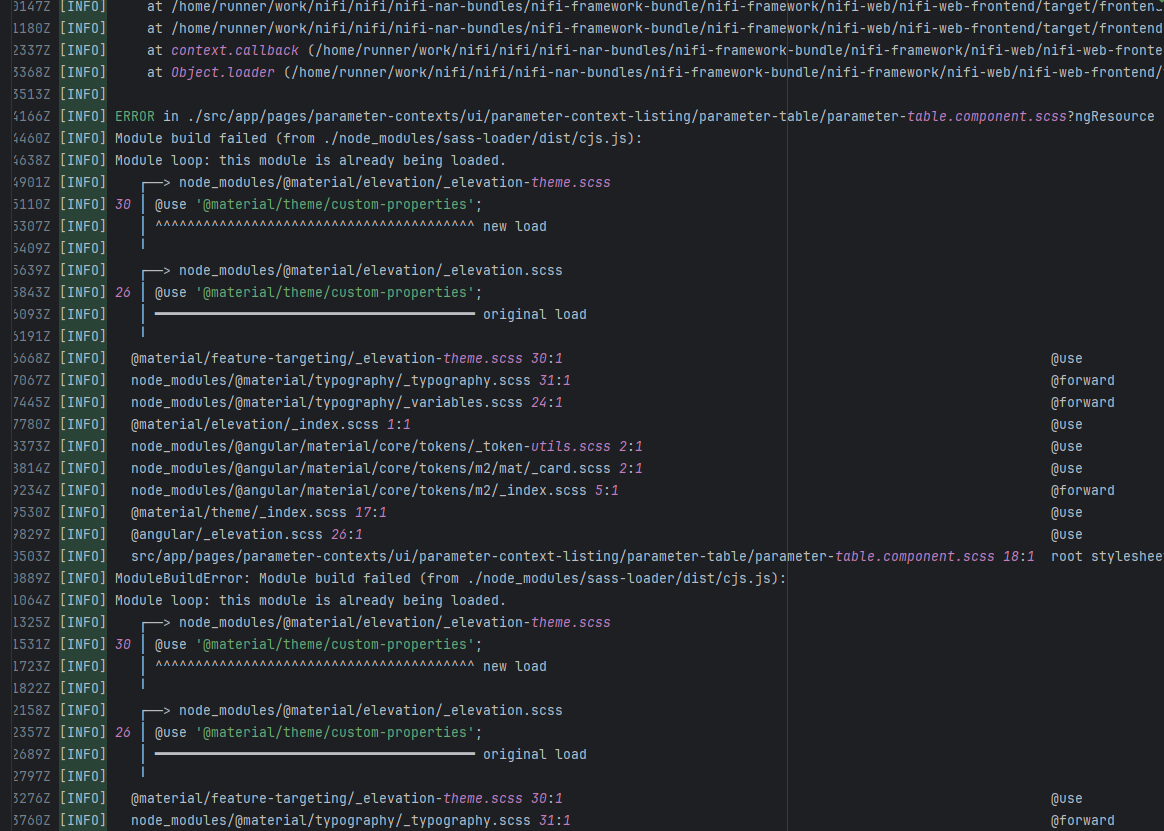

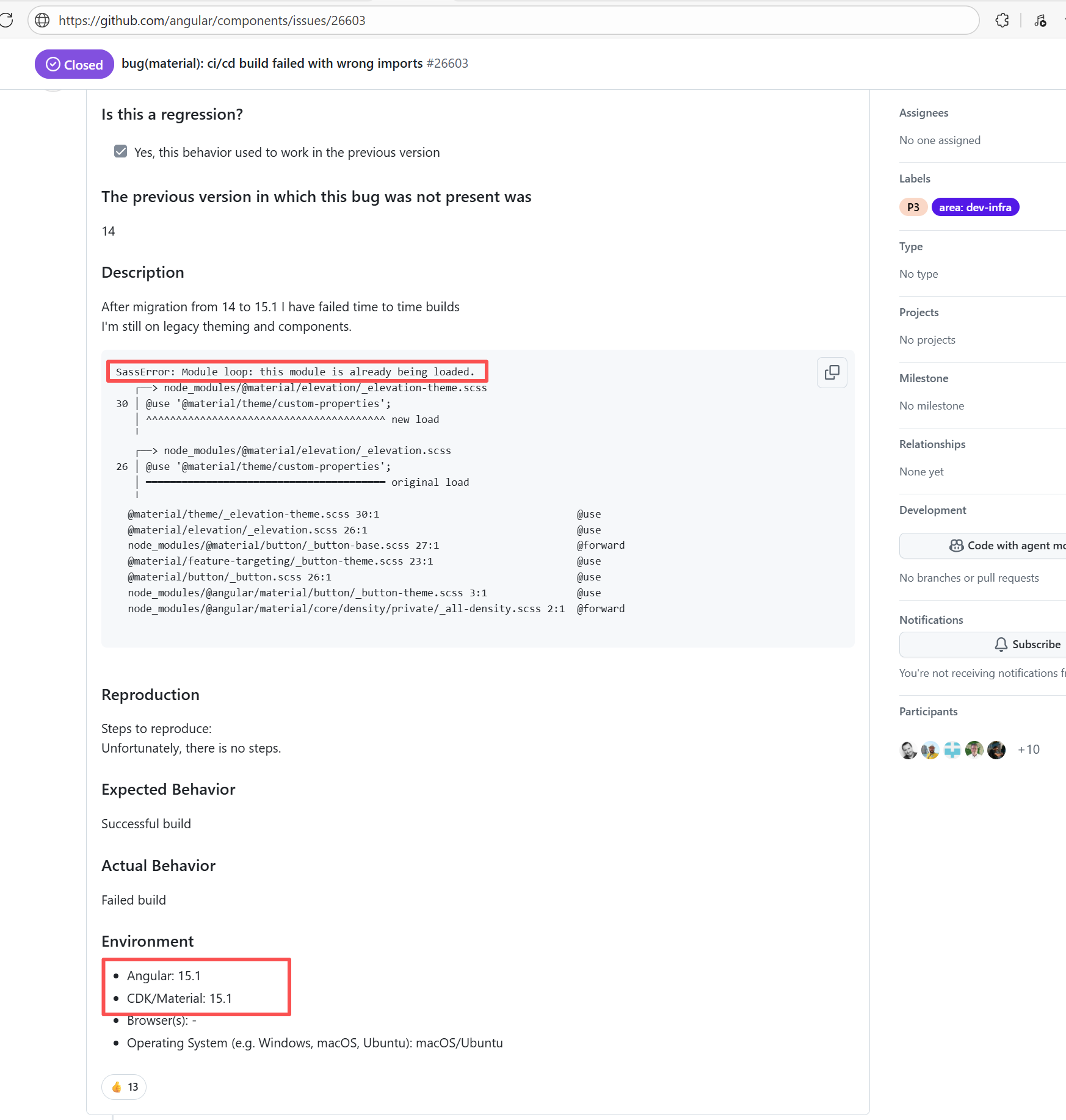

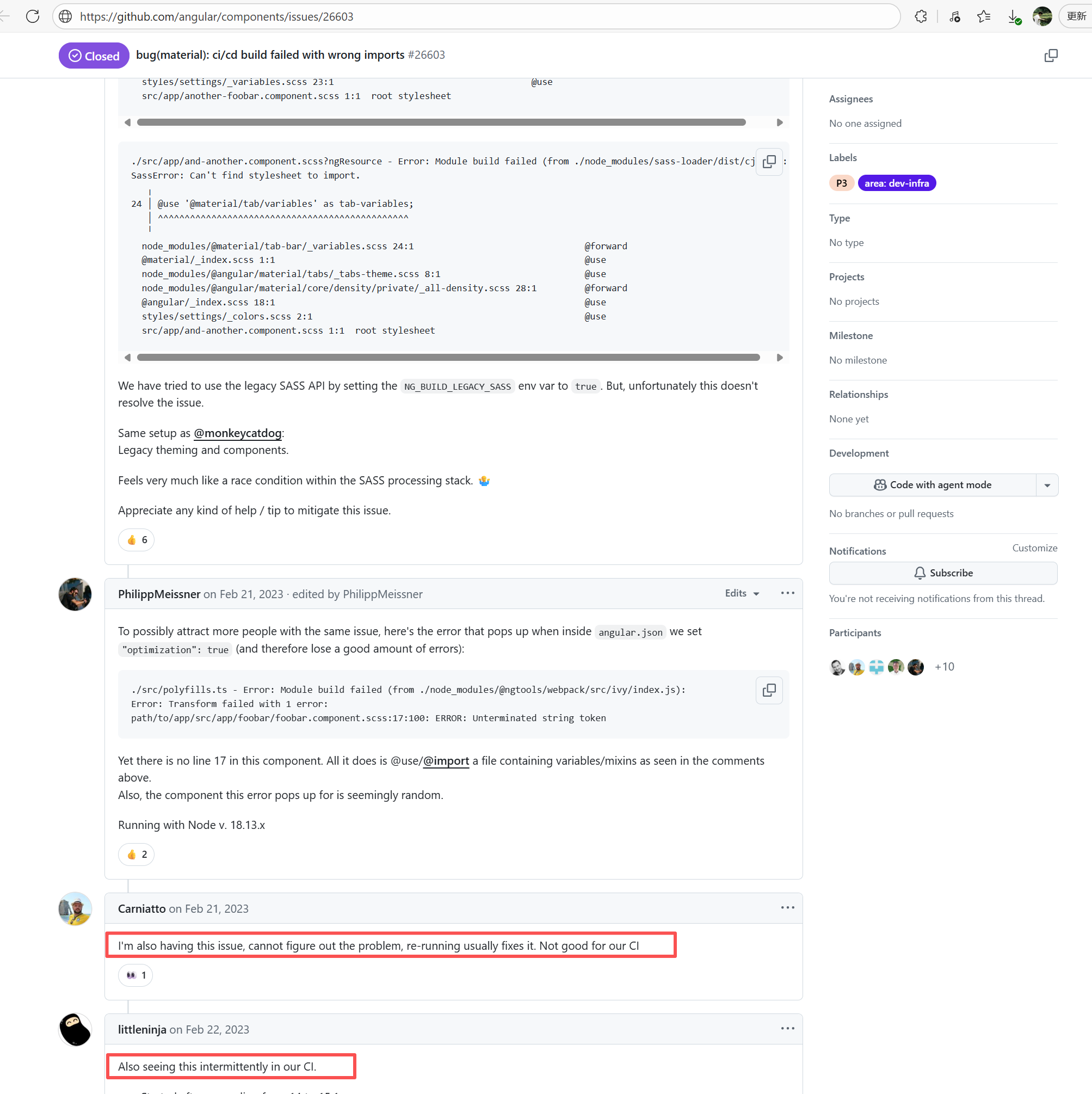

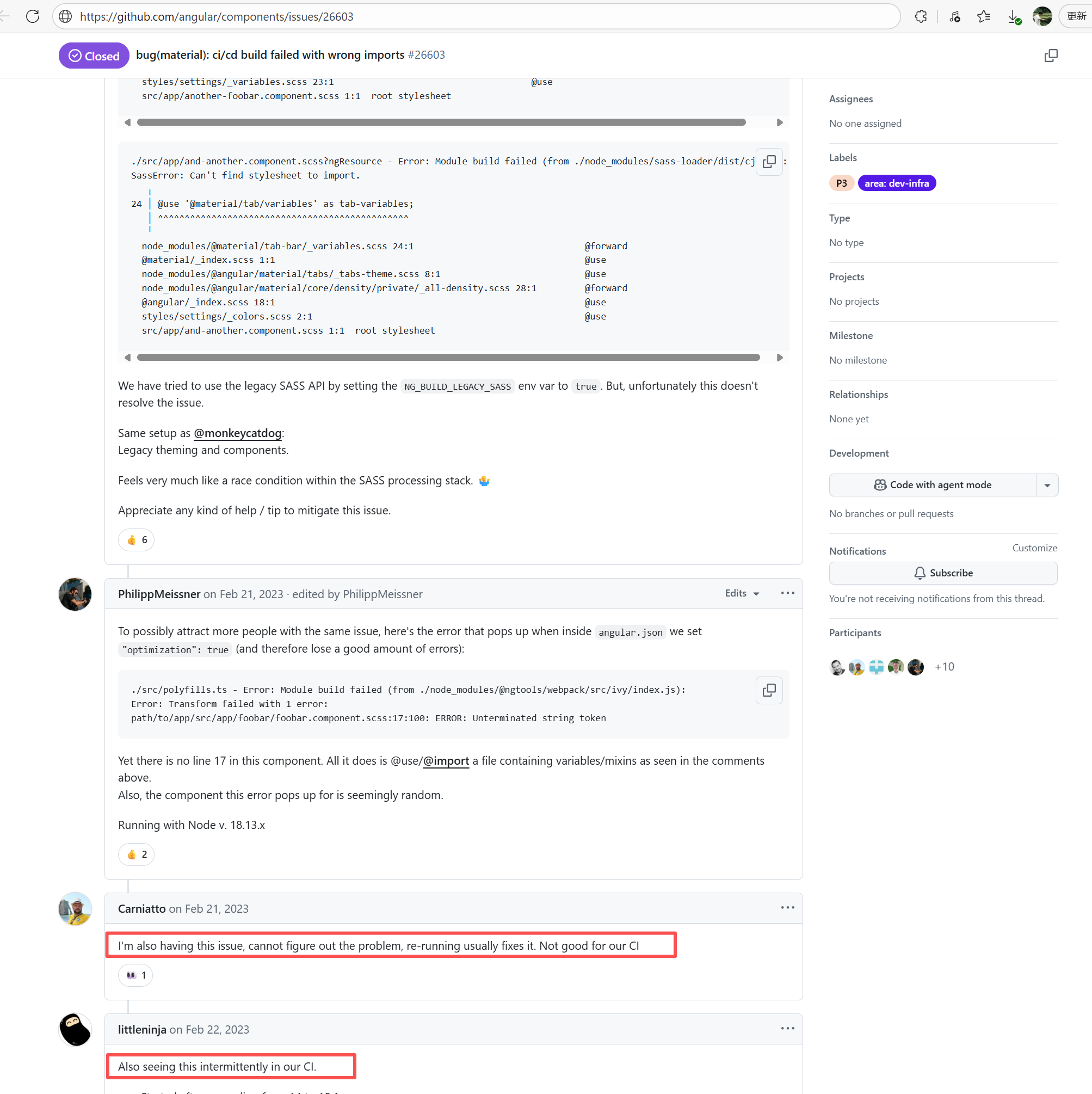

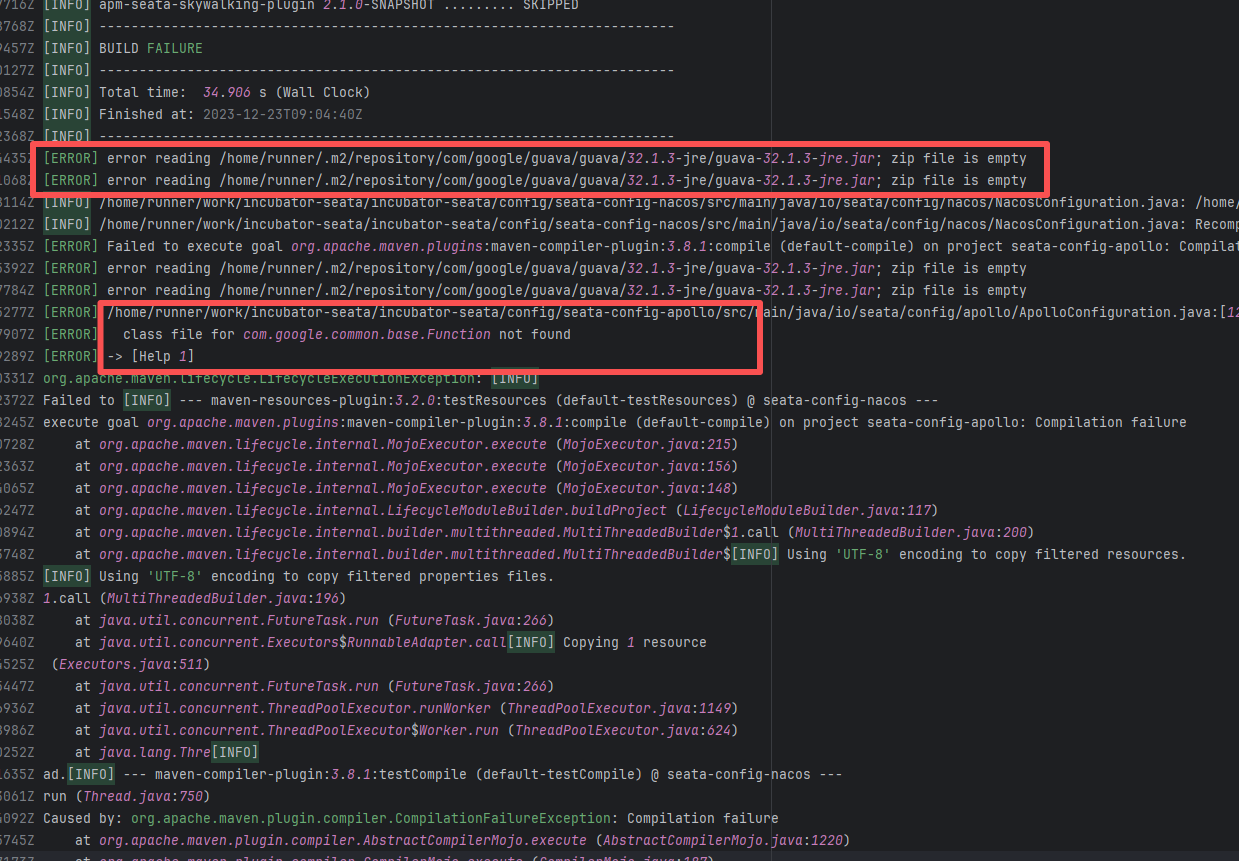

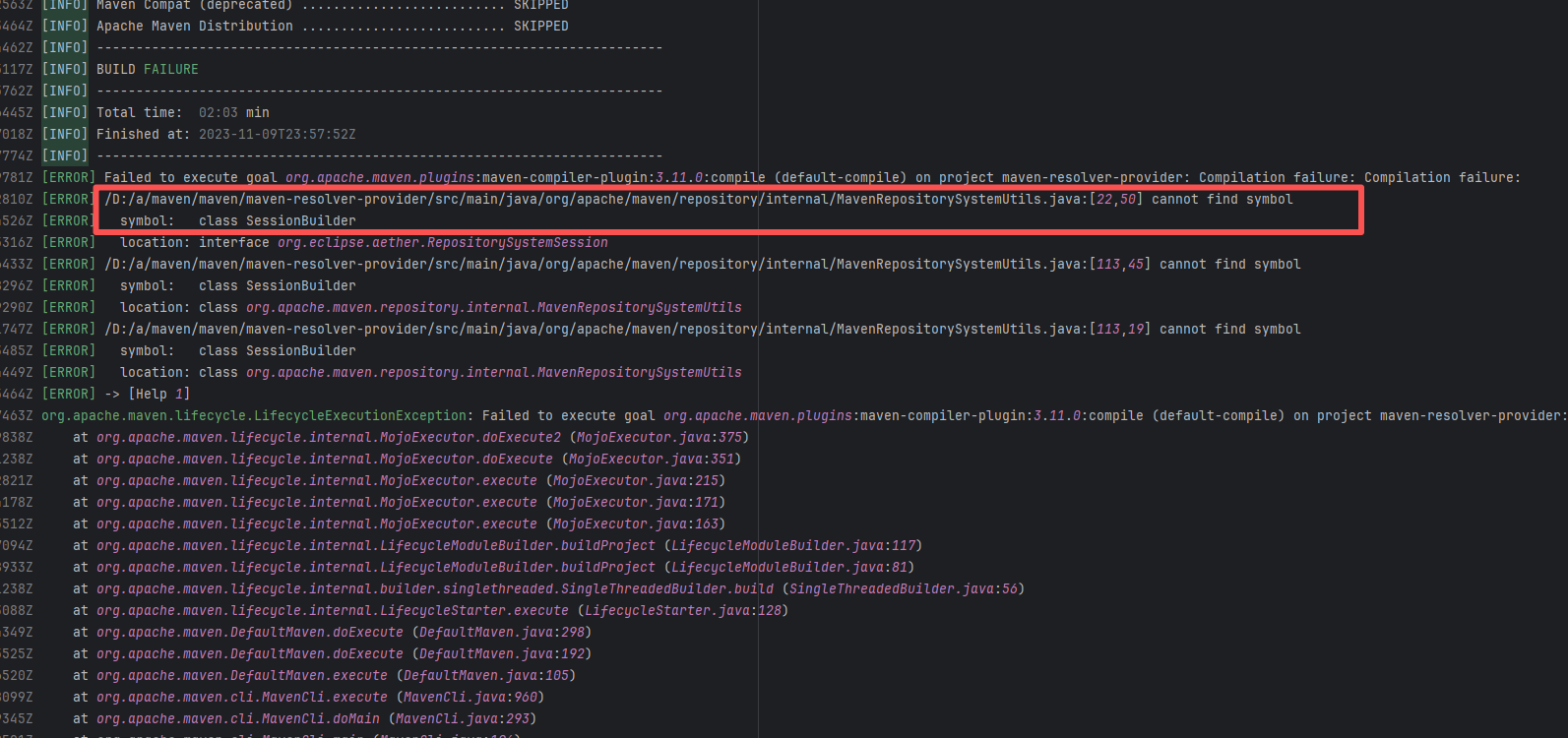

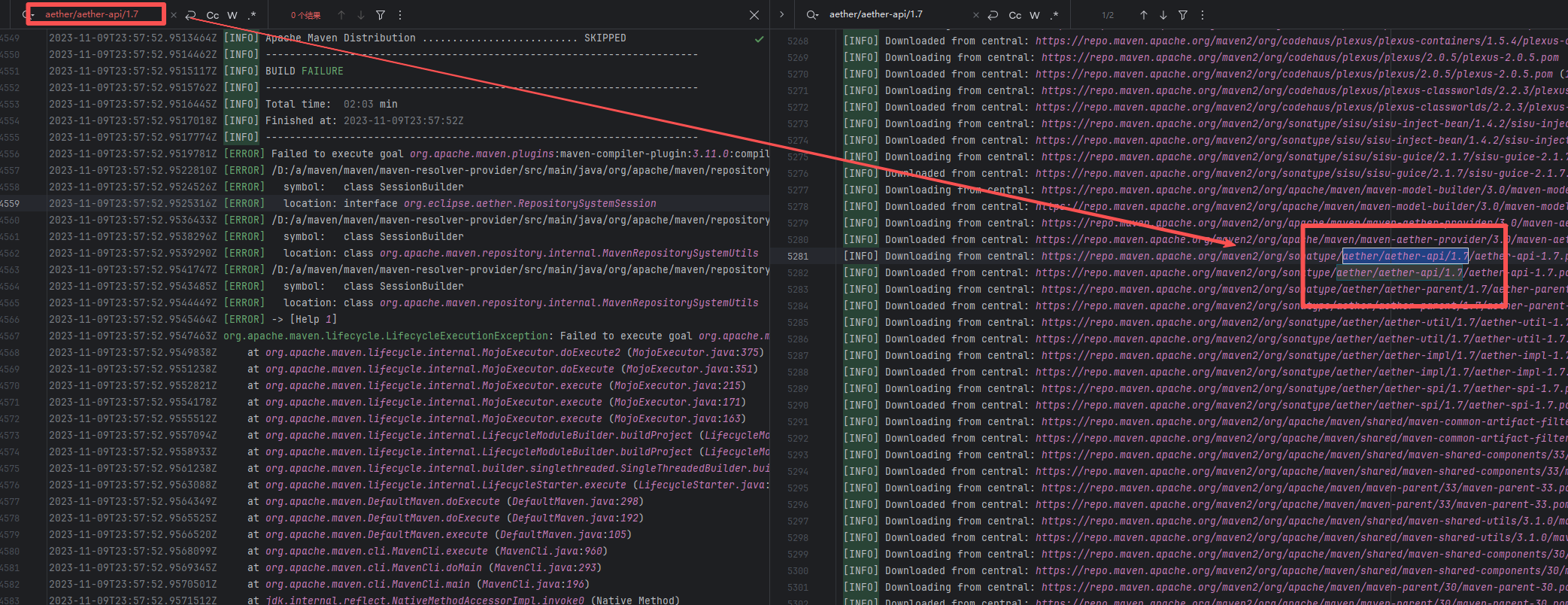

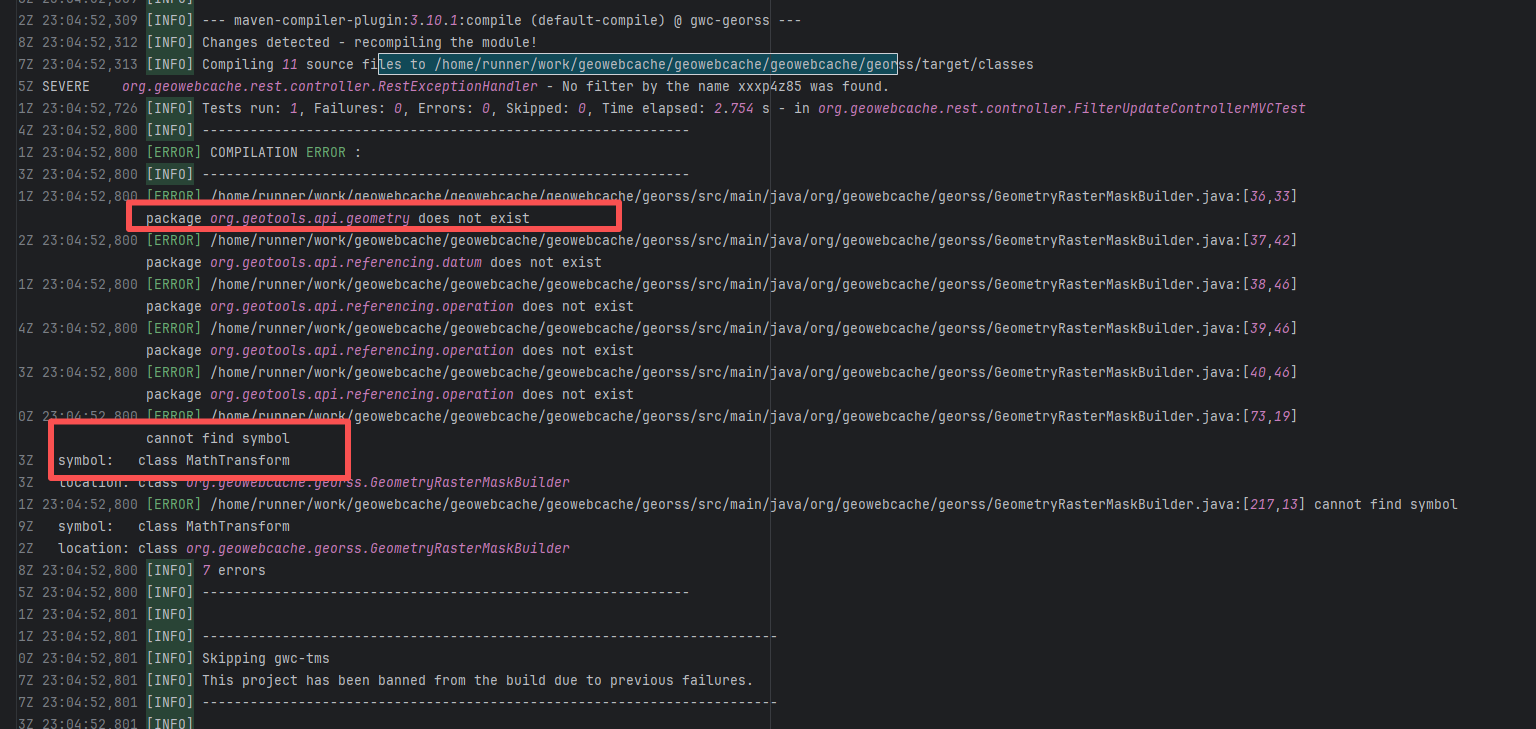

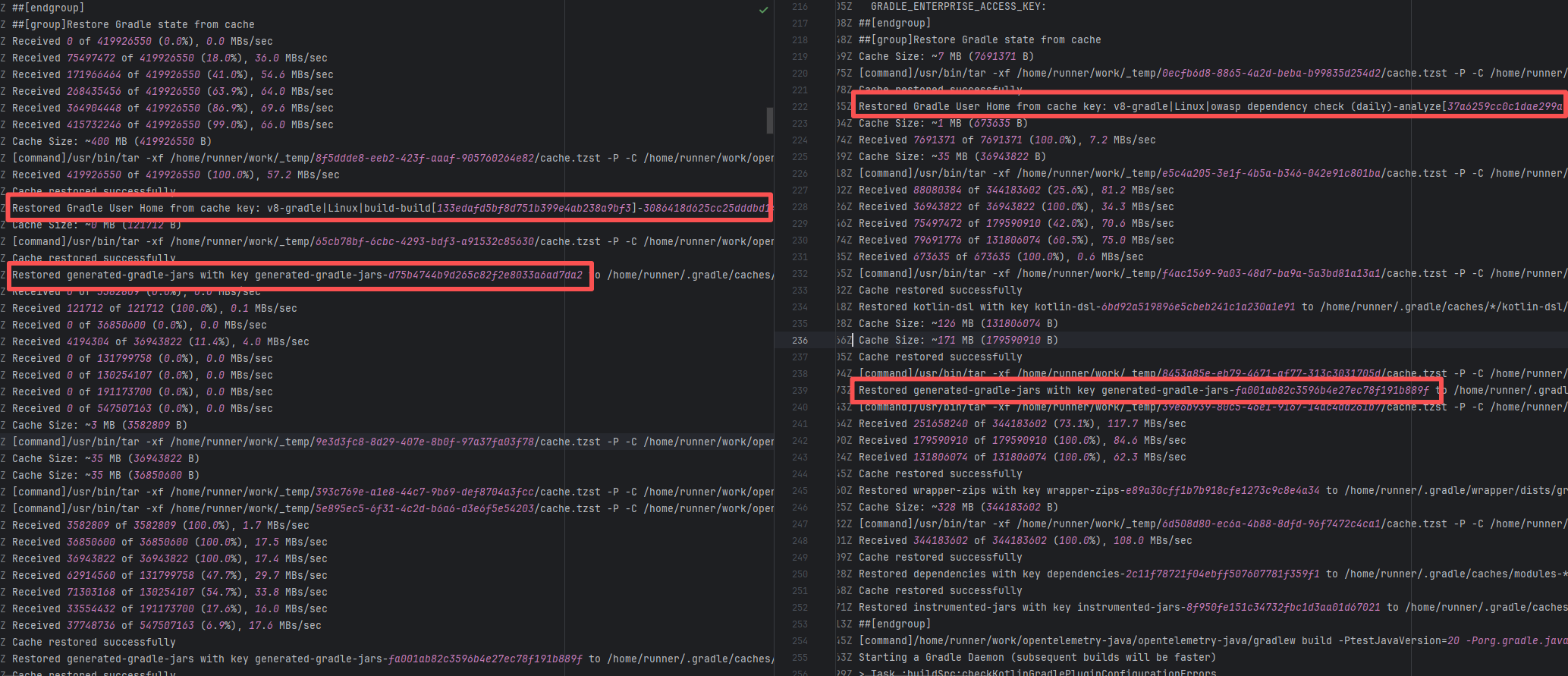

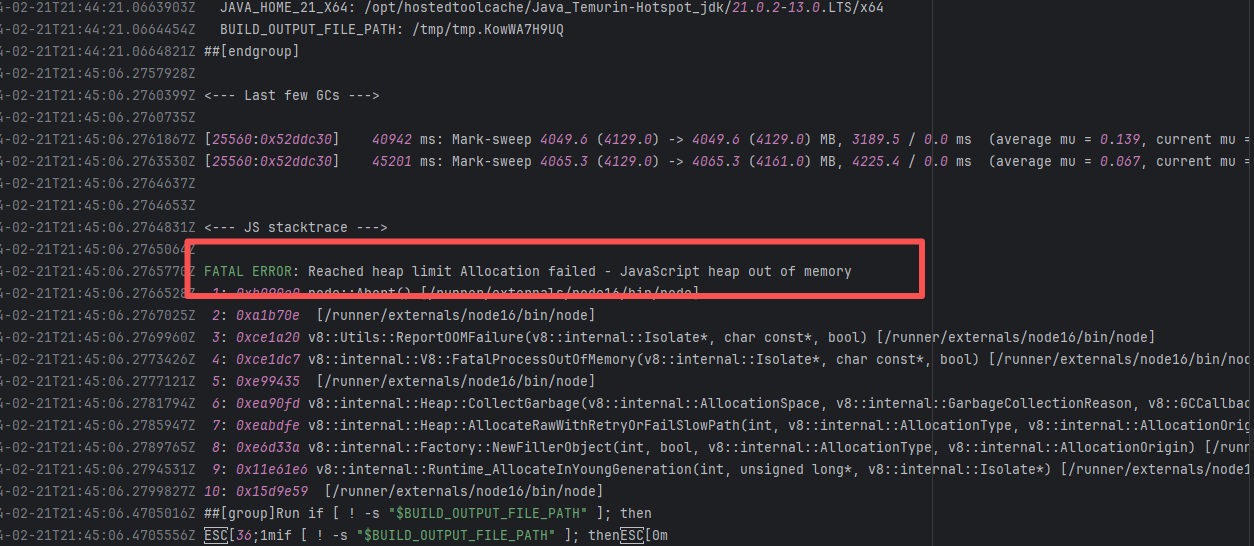

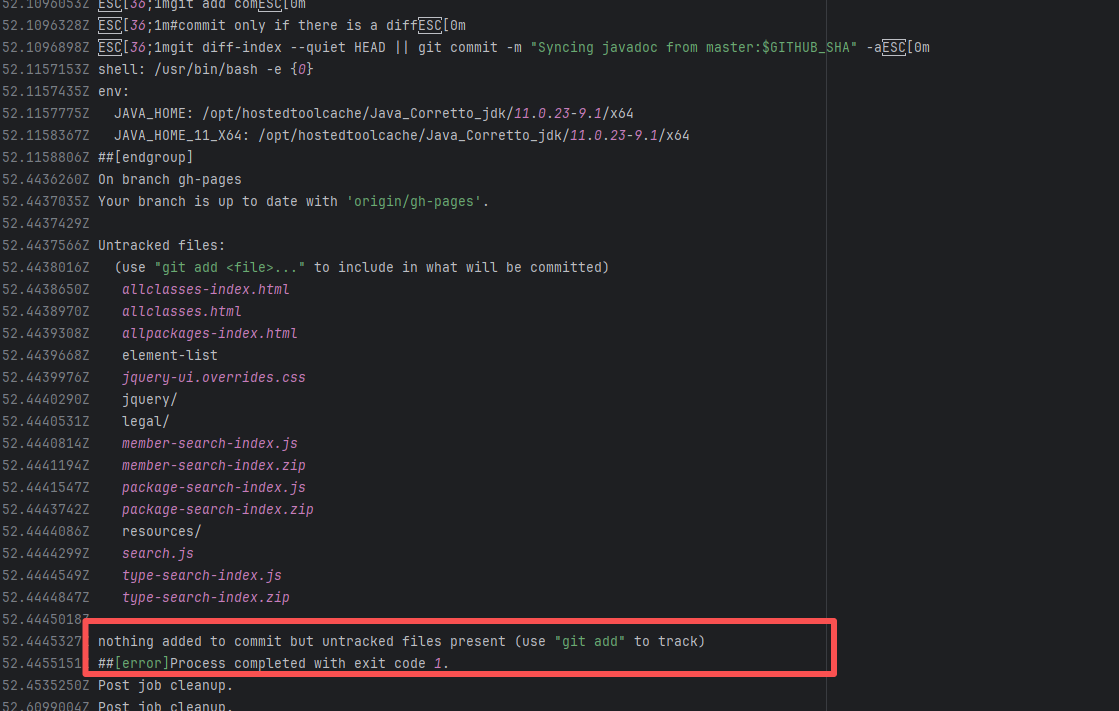

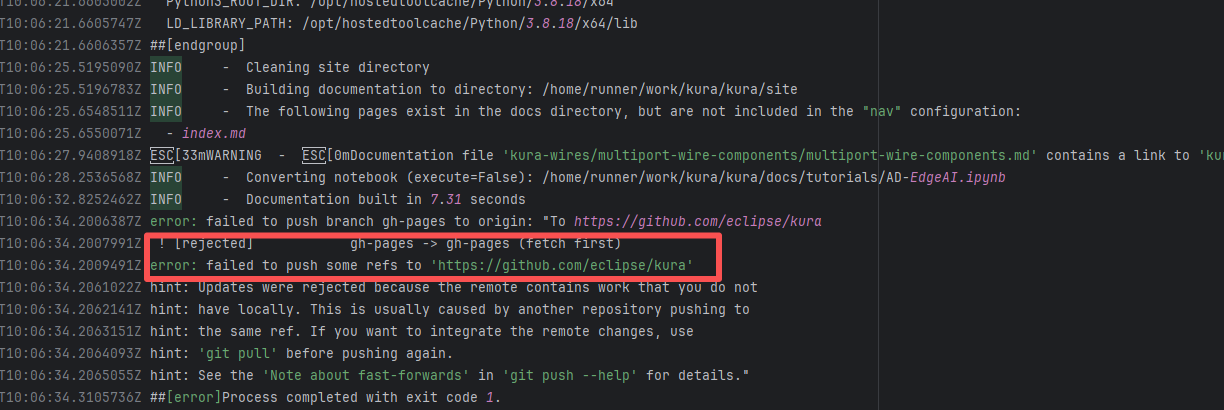

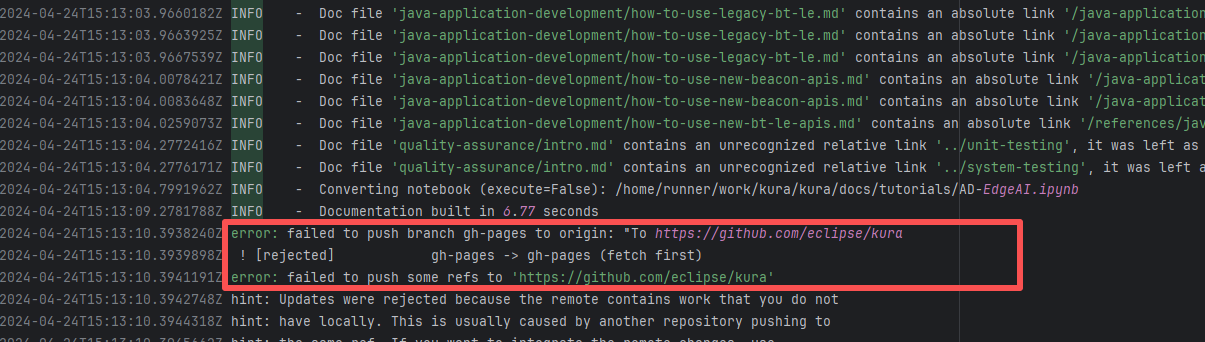

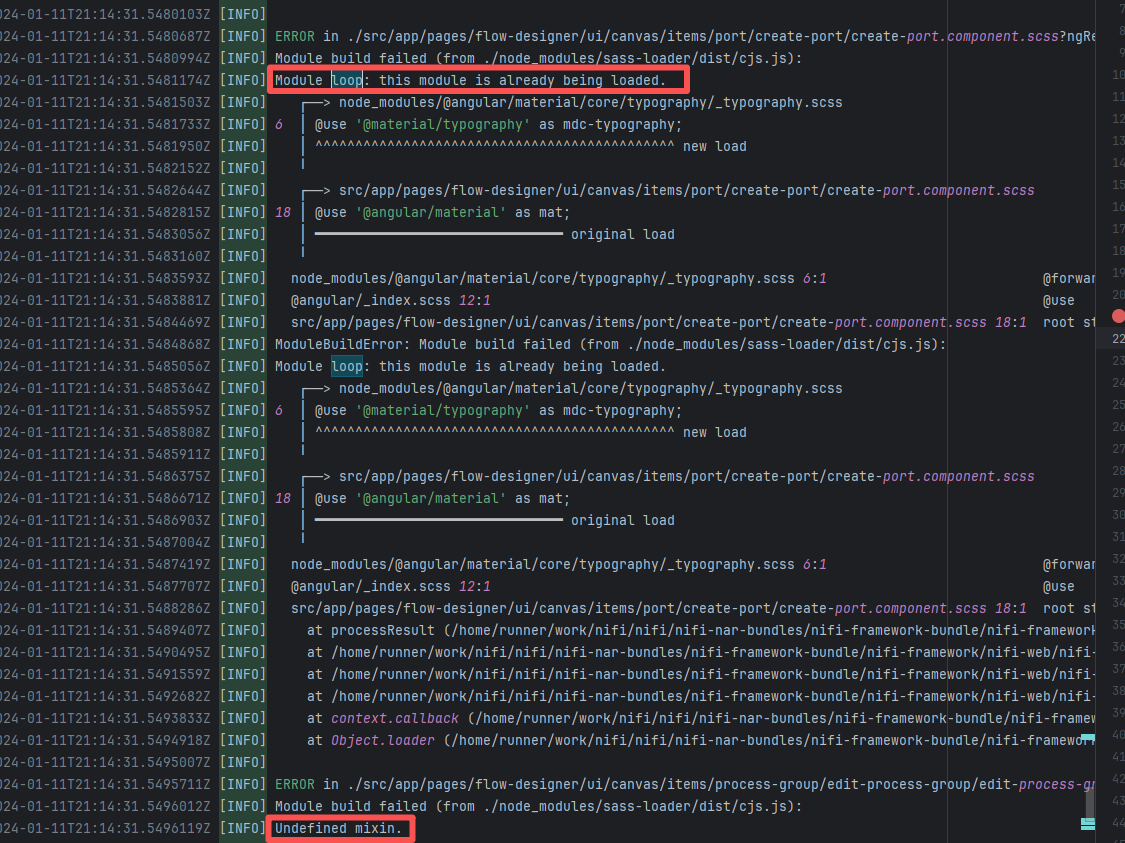

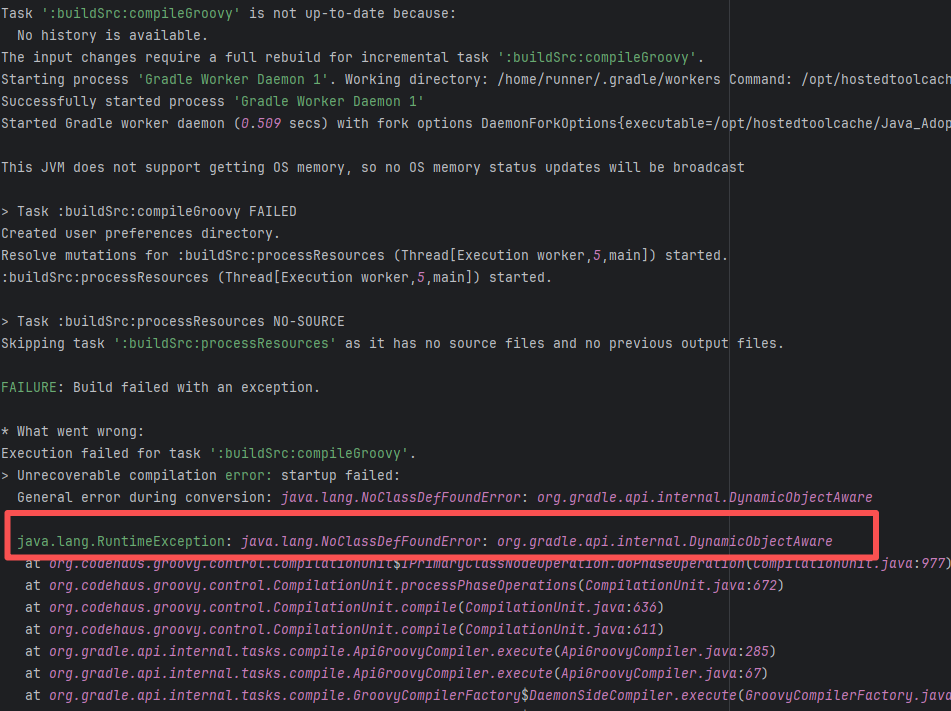

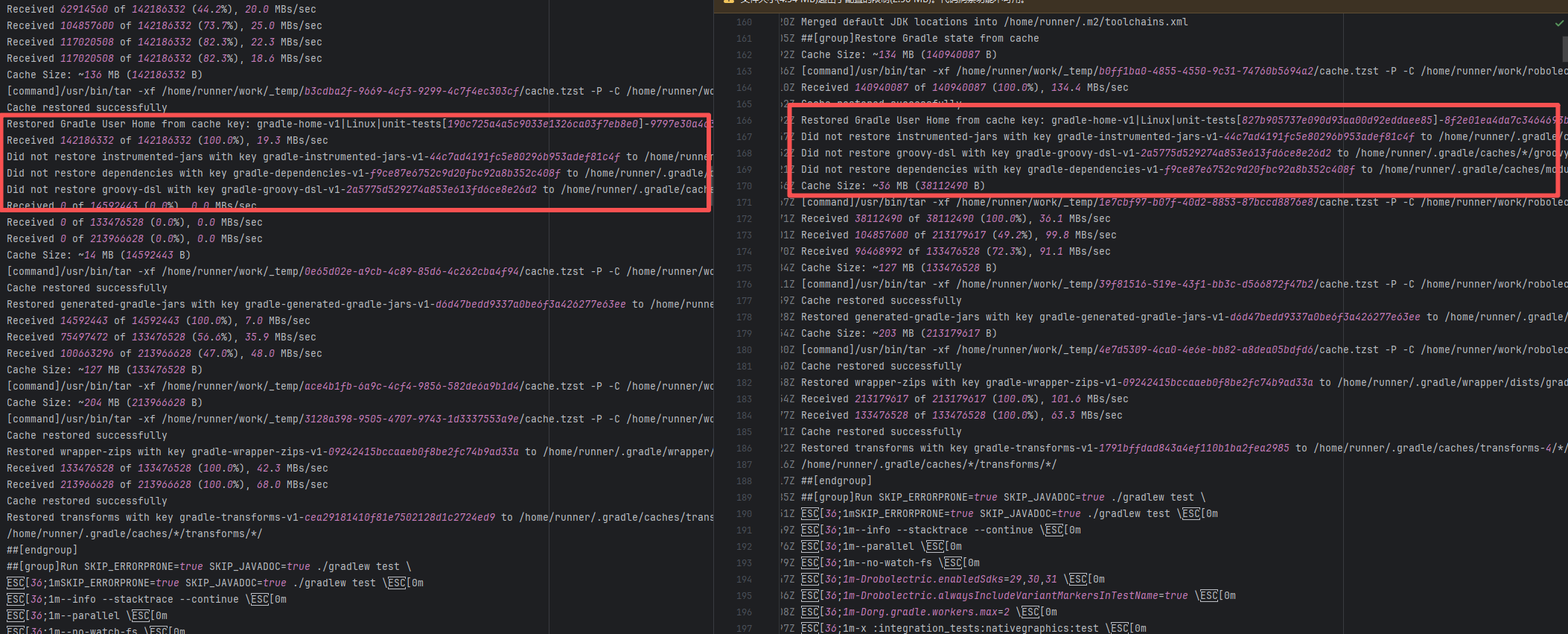

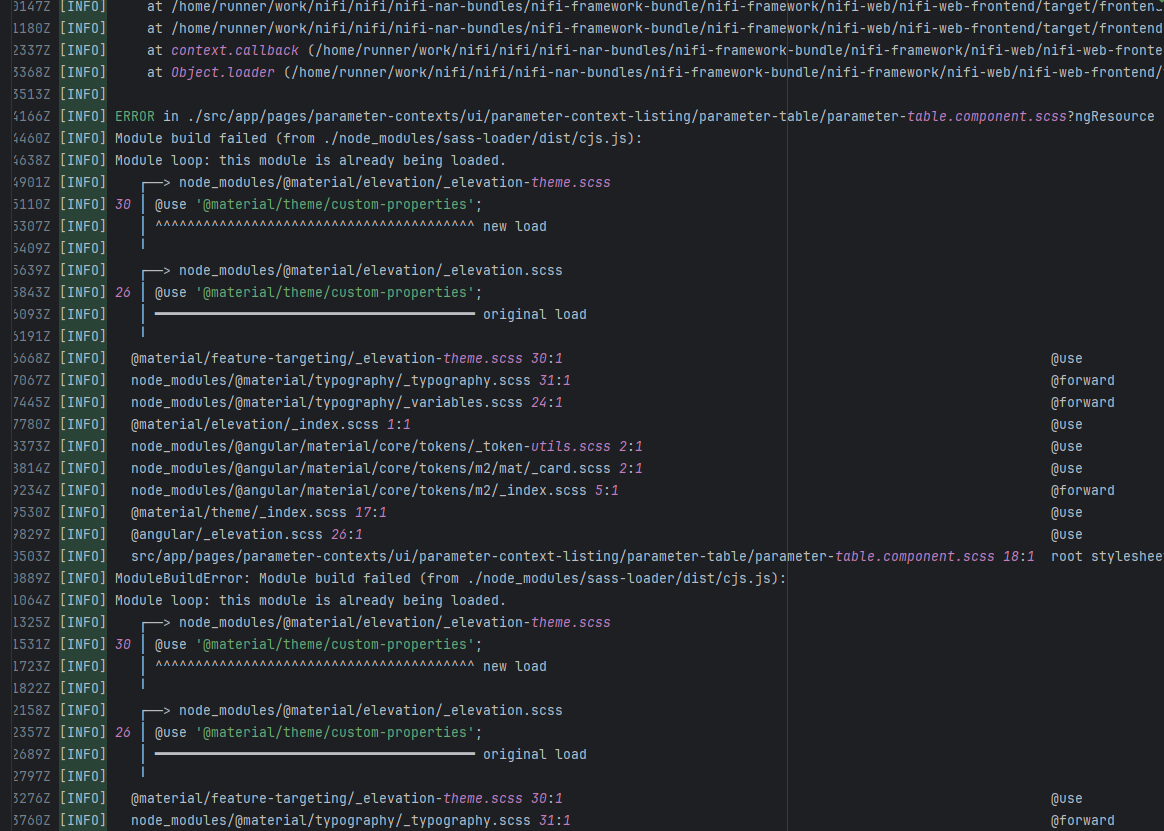

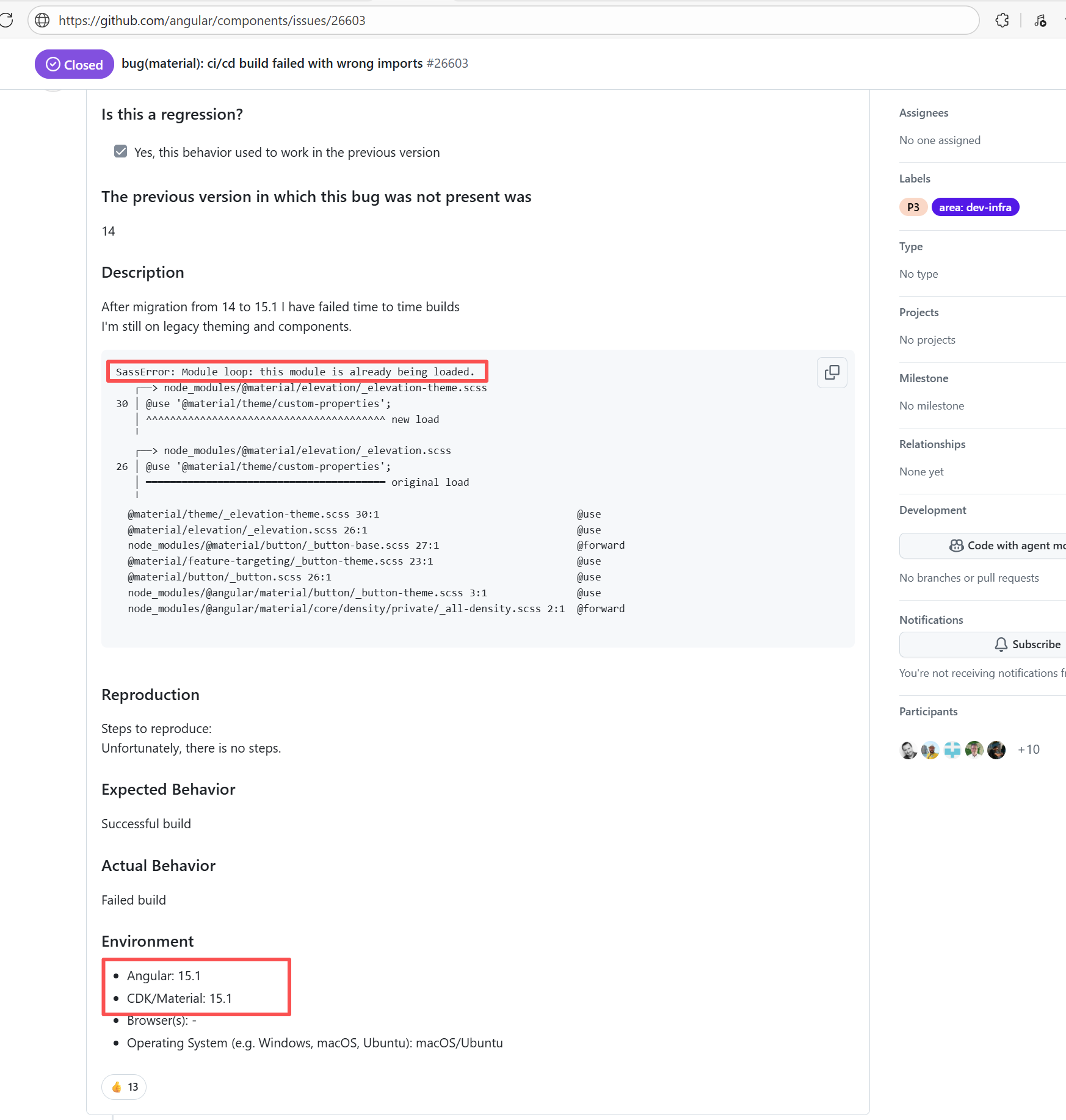

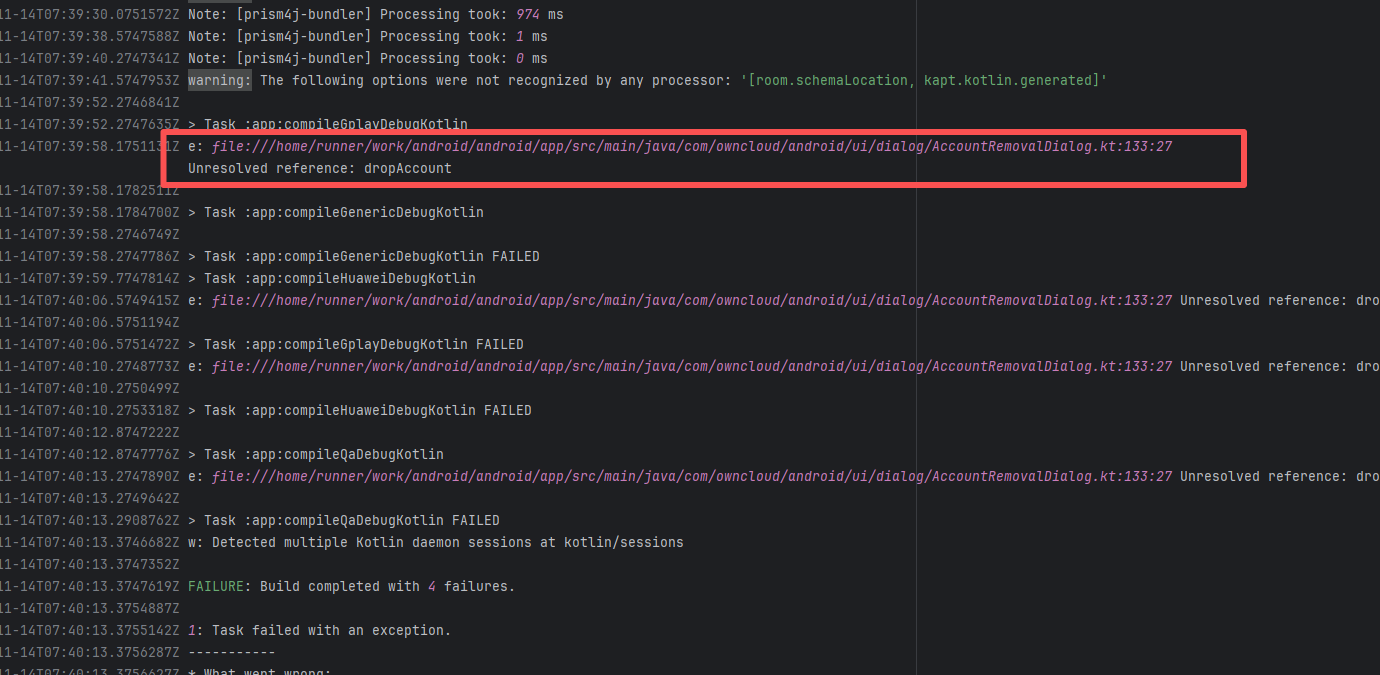

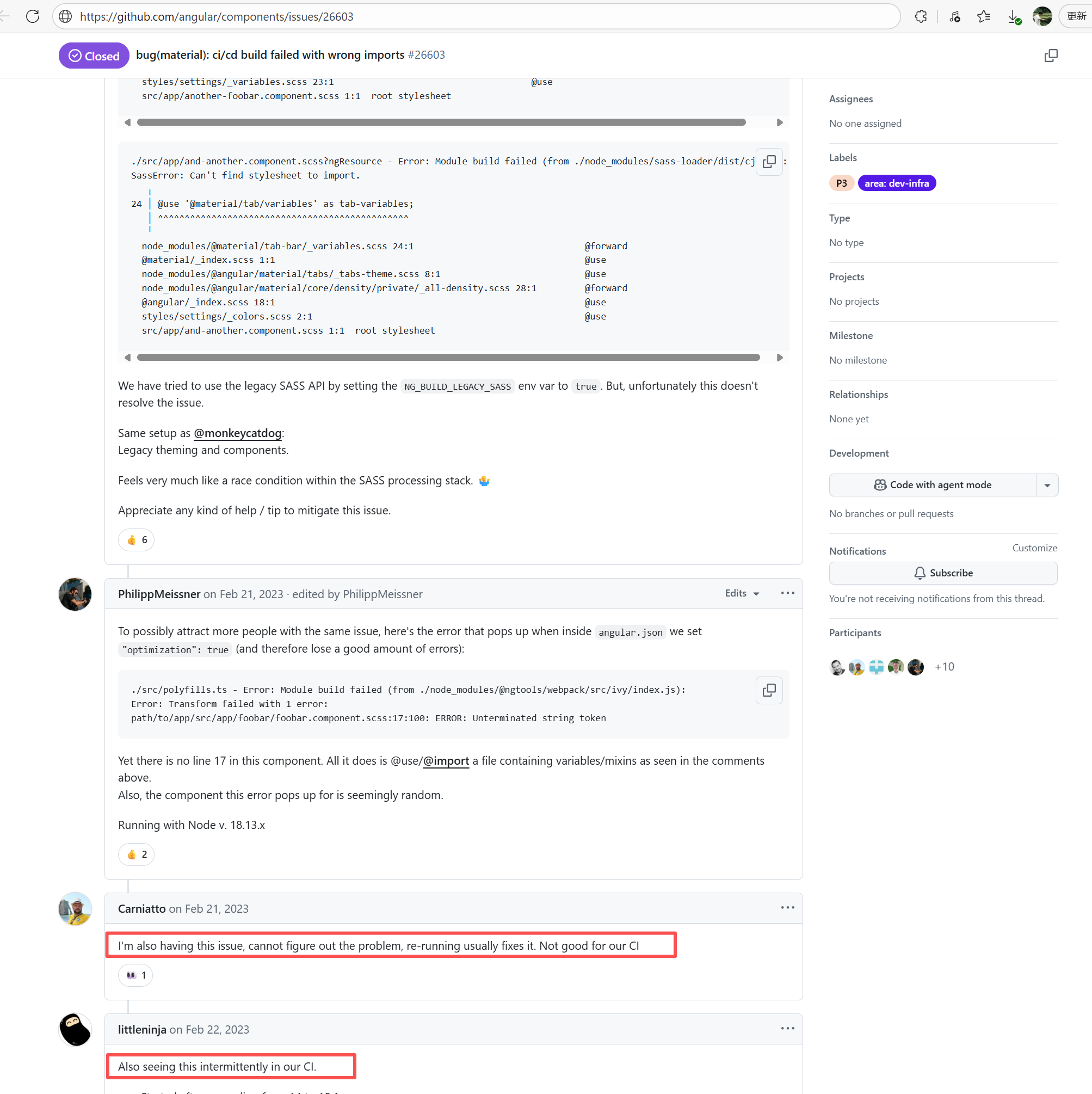

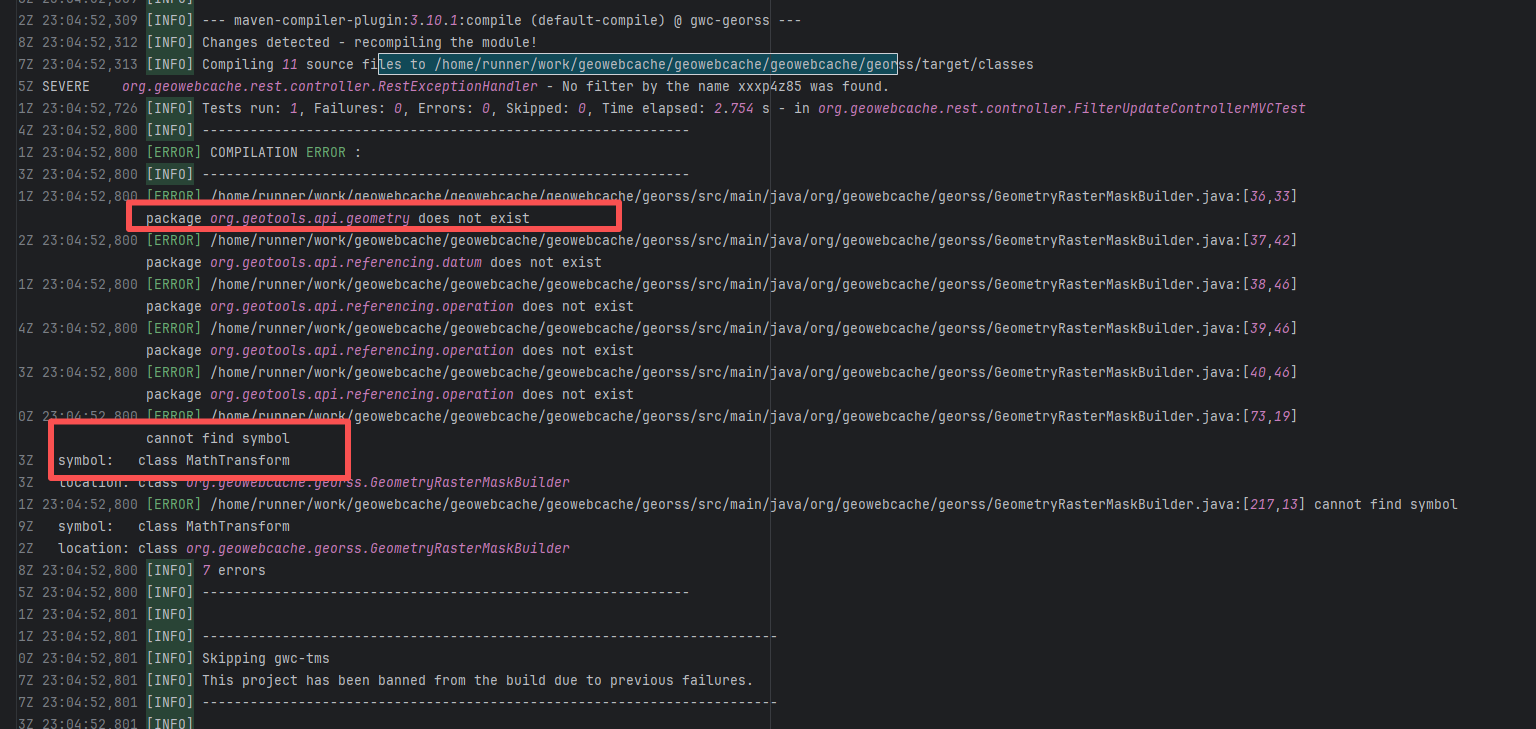

| Compilation Error |

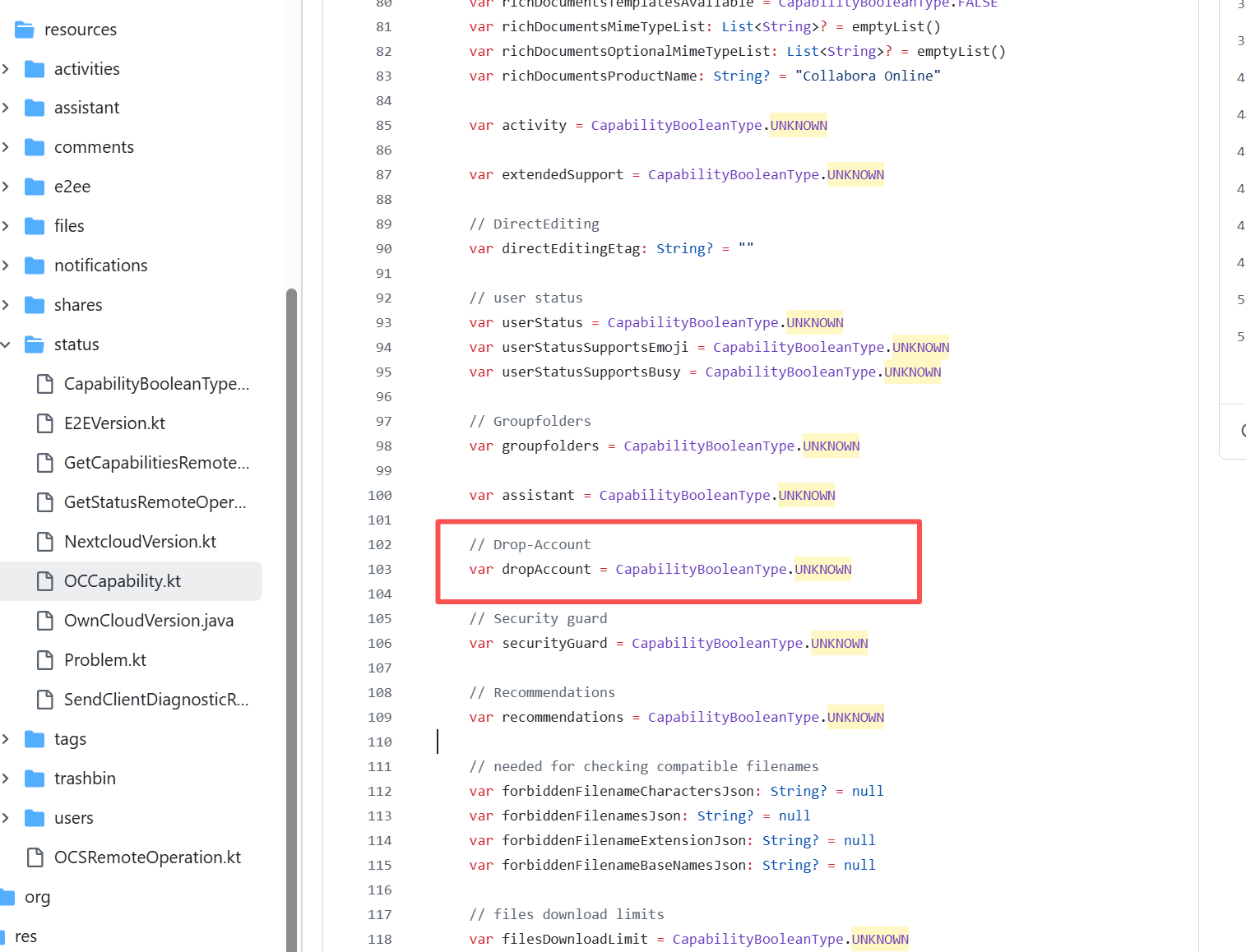

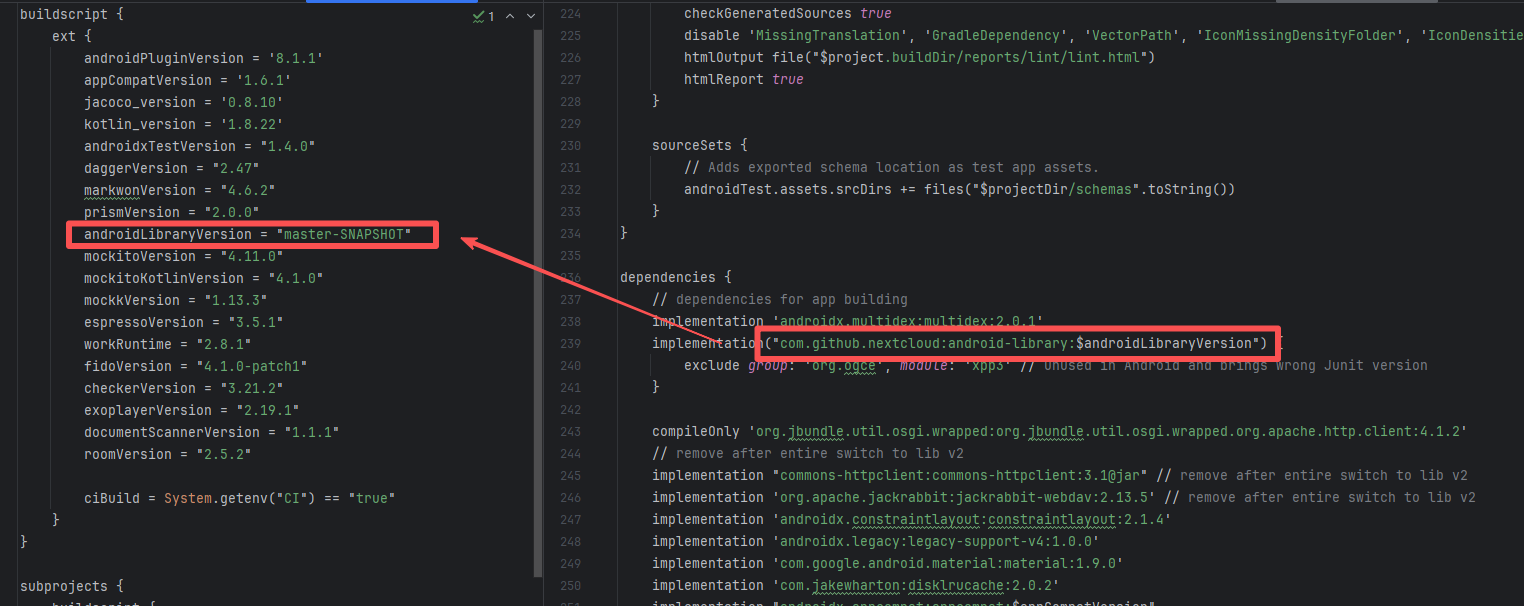

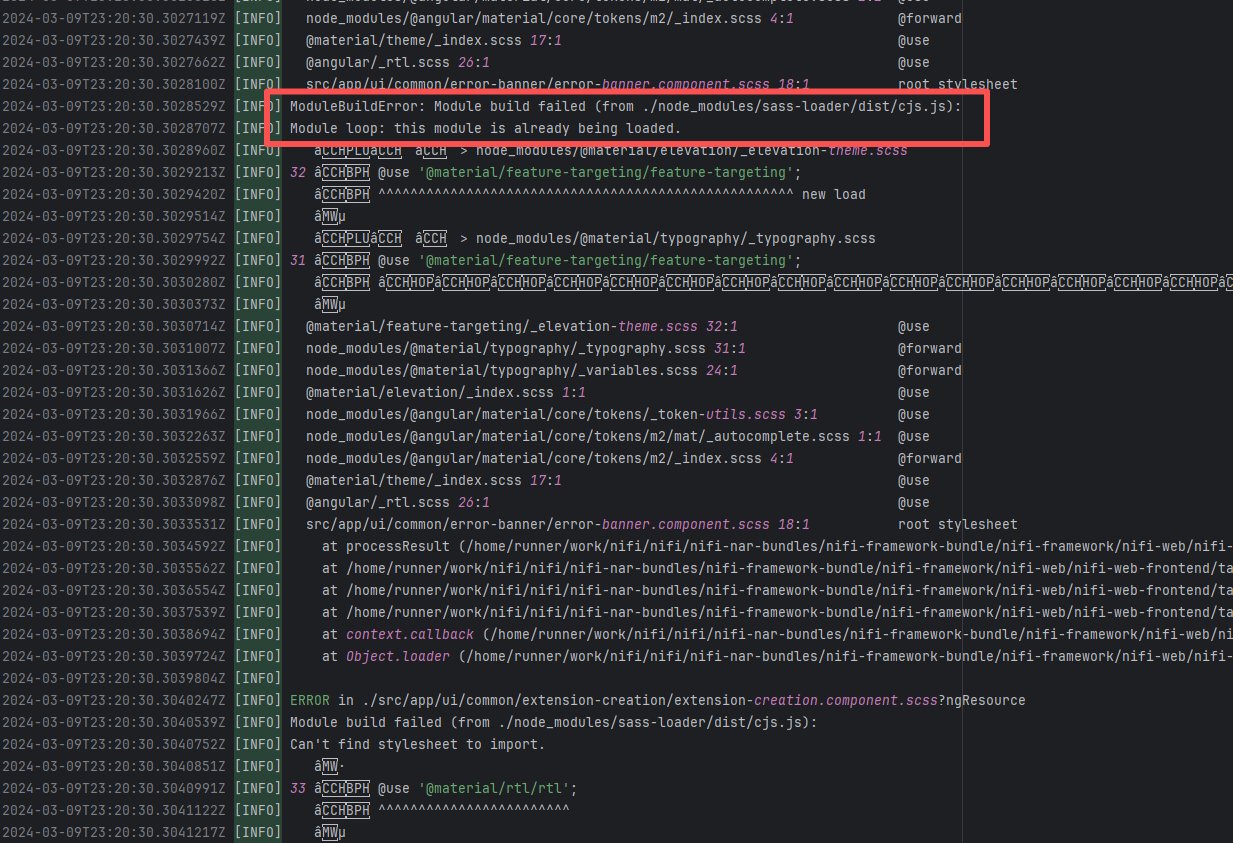

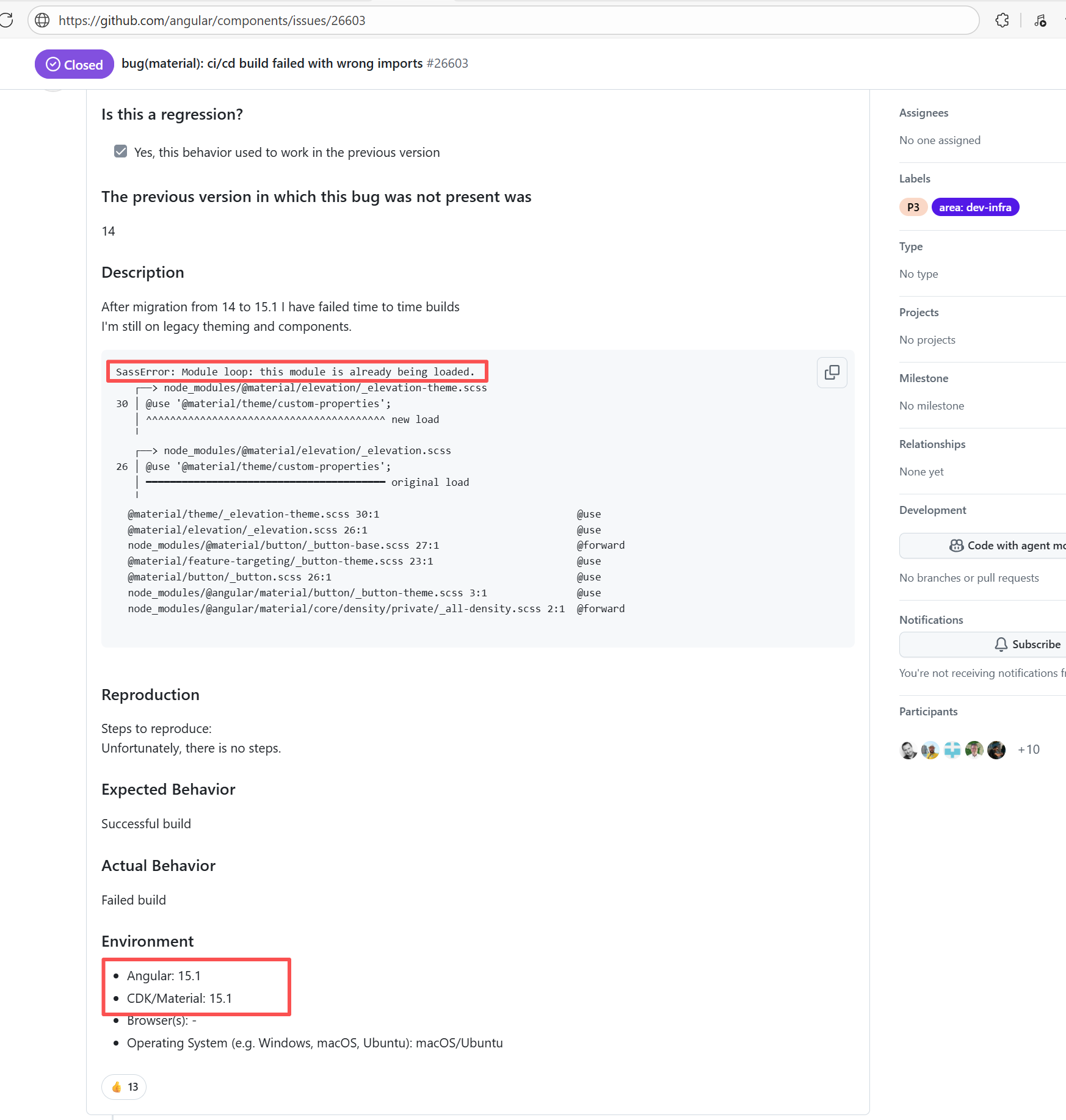

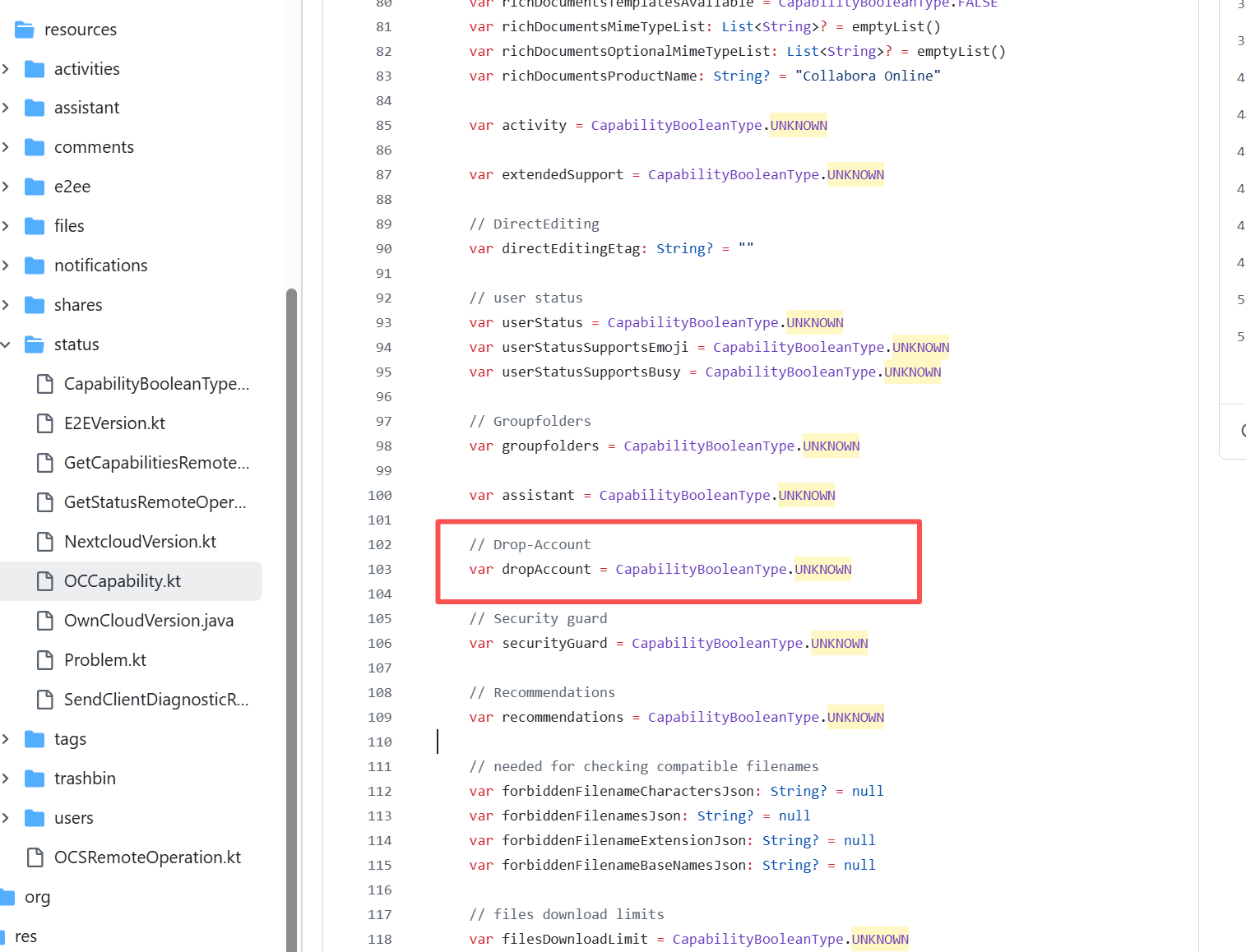

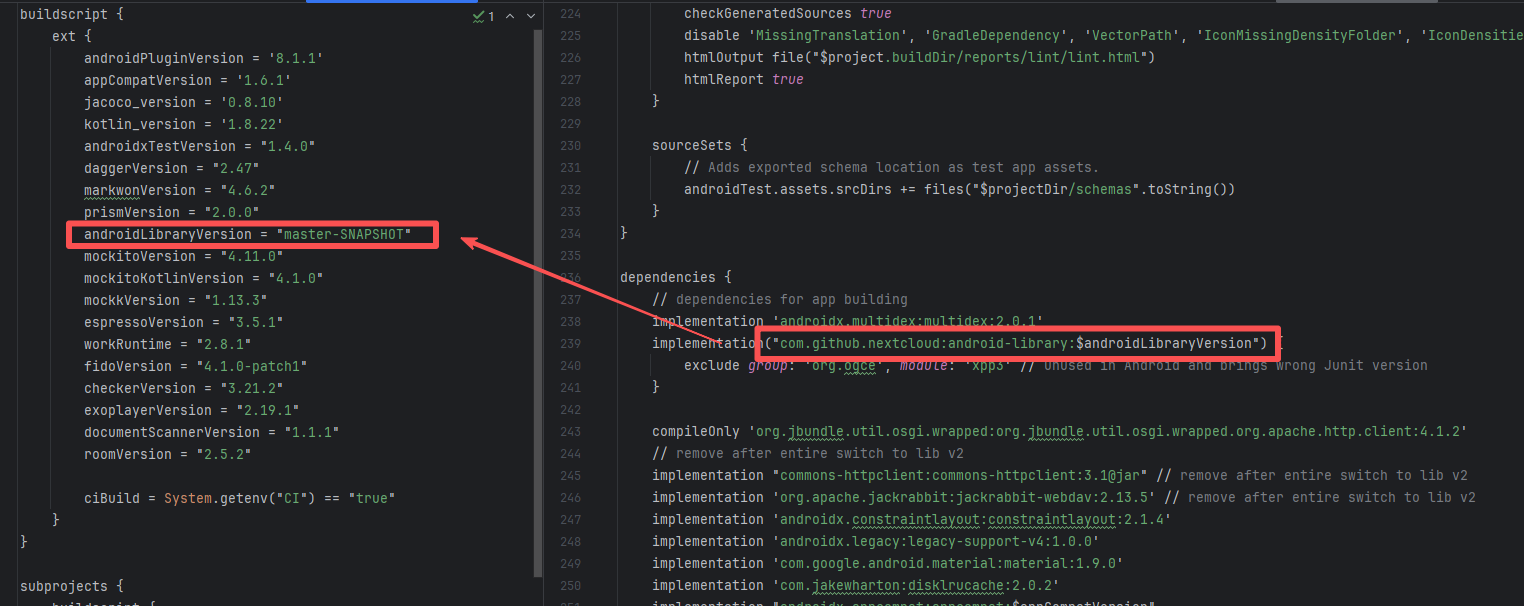

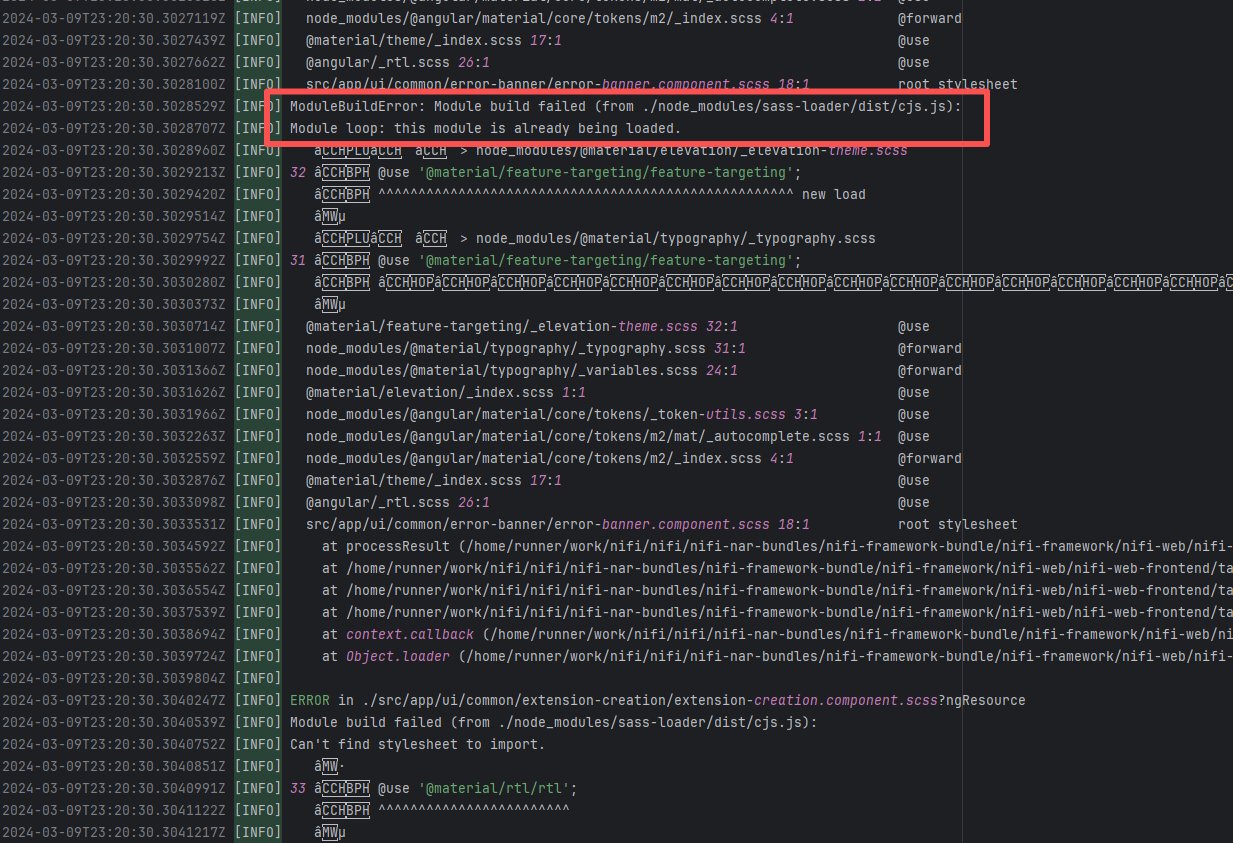

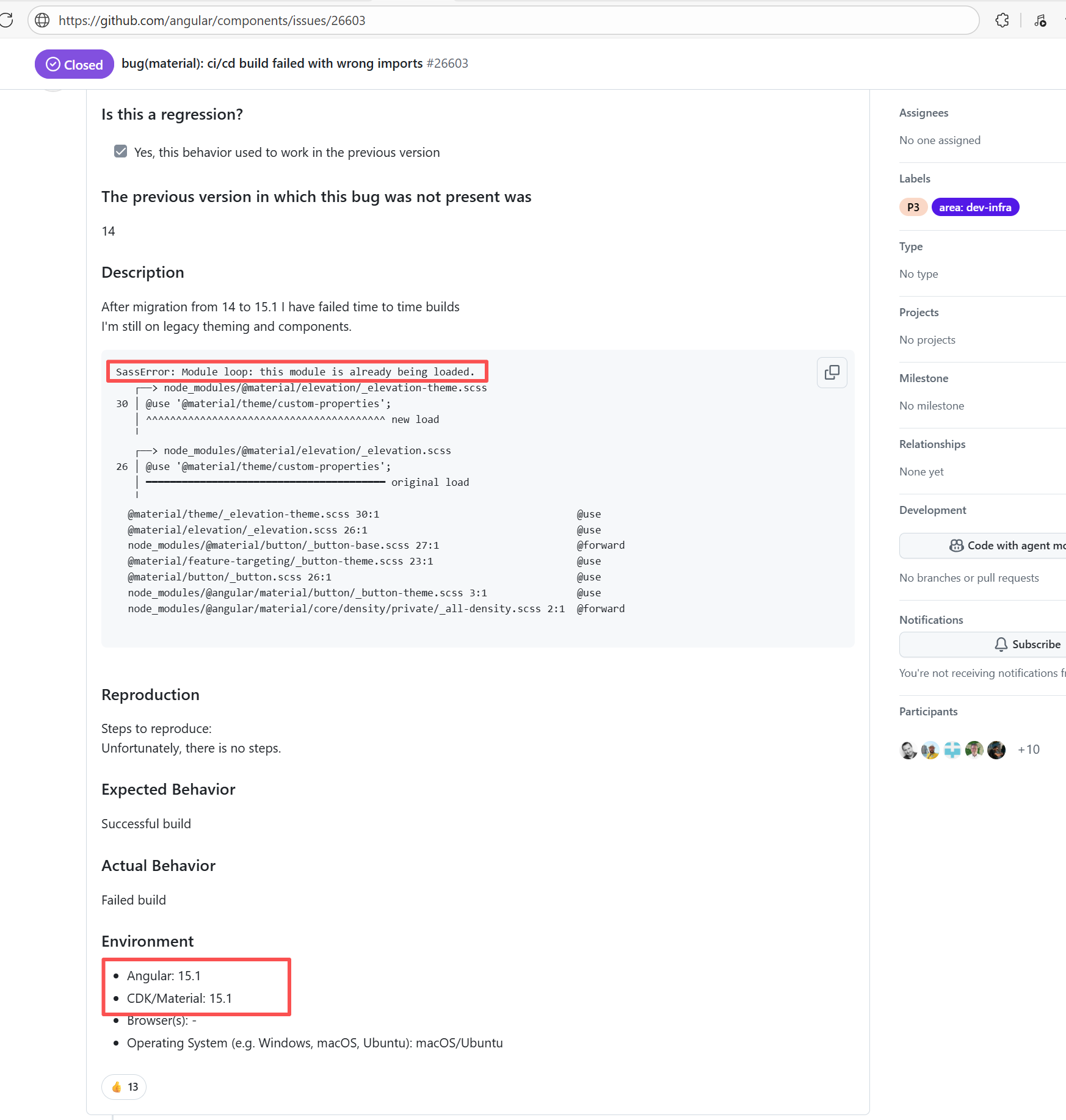

apache/nifi |

20402383310 |

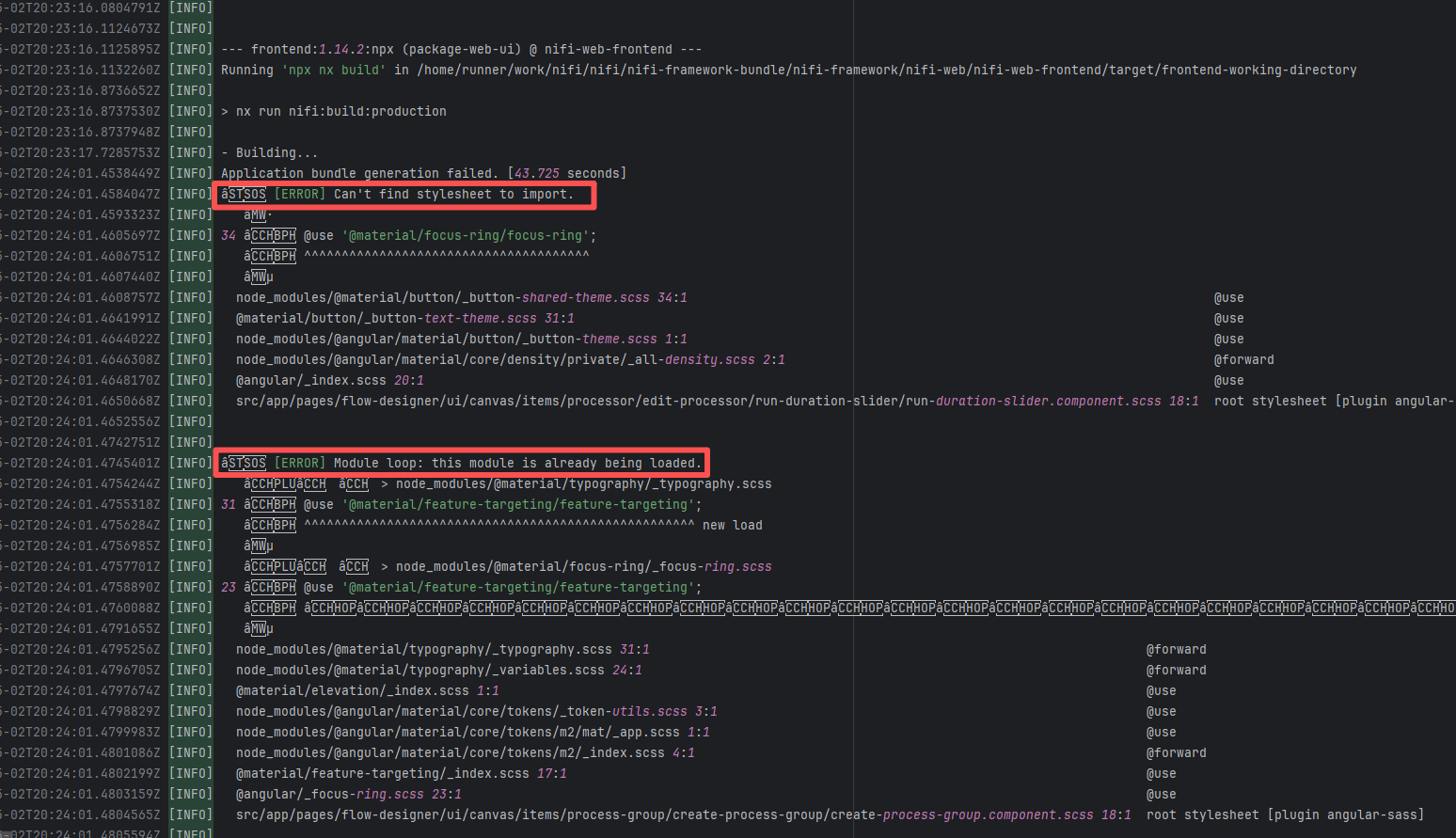

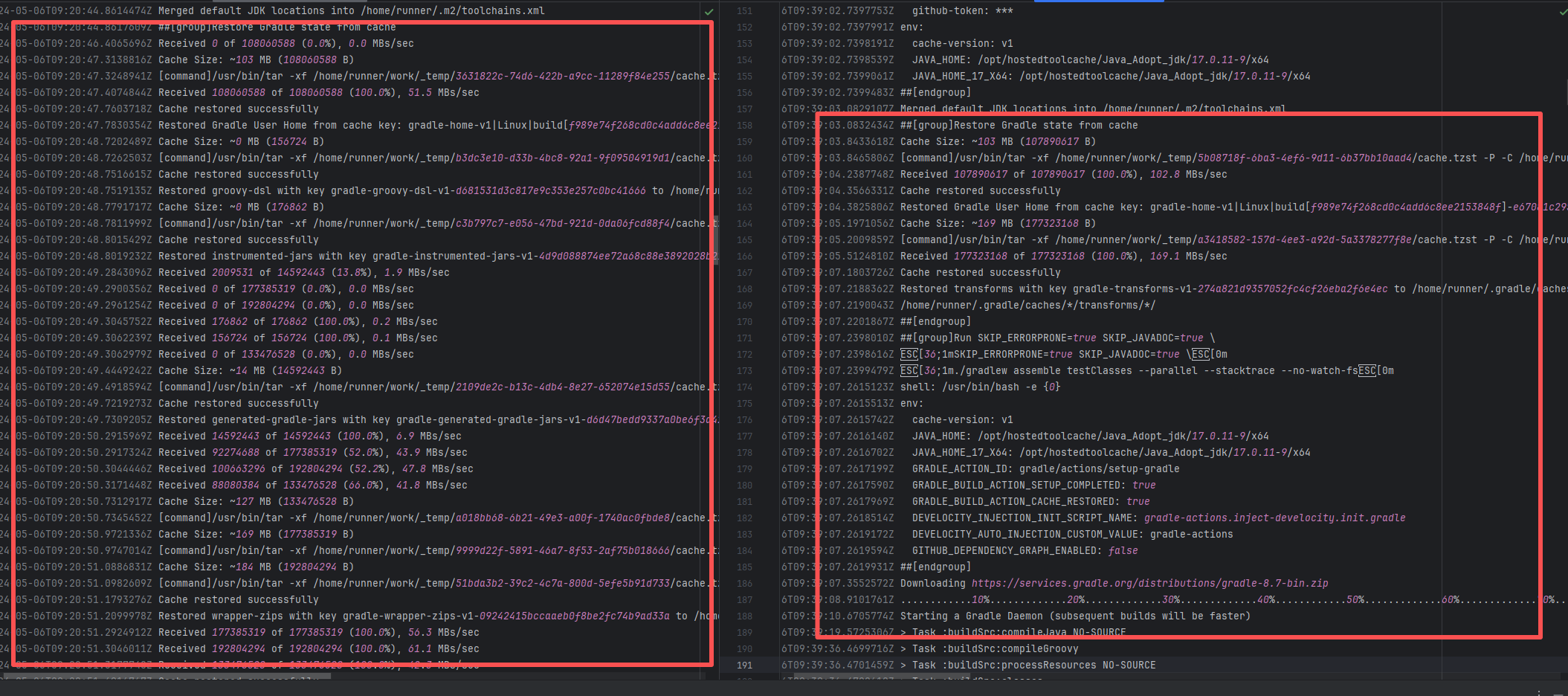

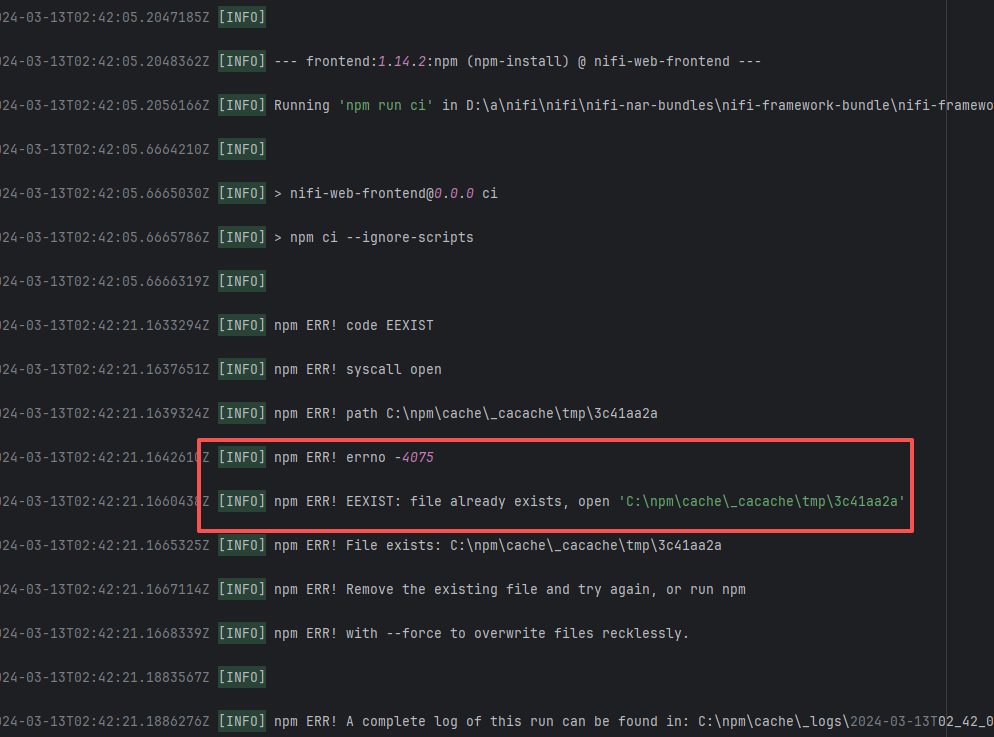

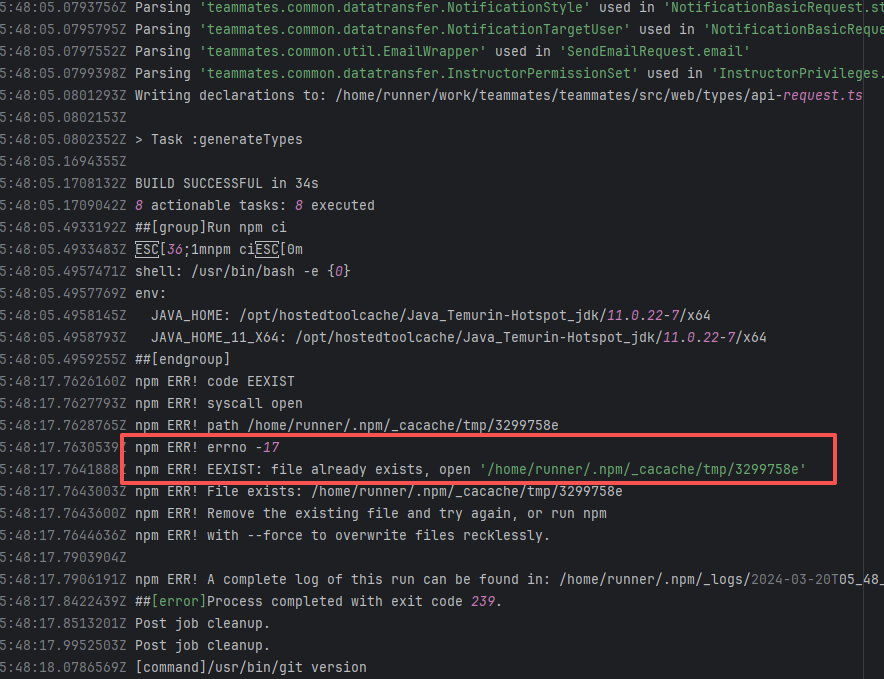

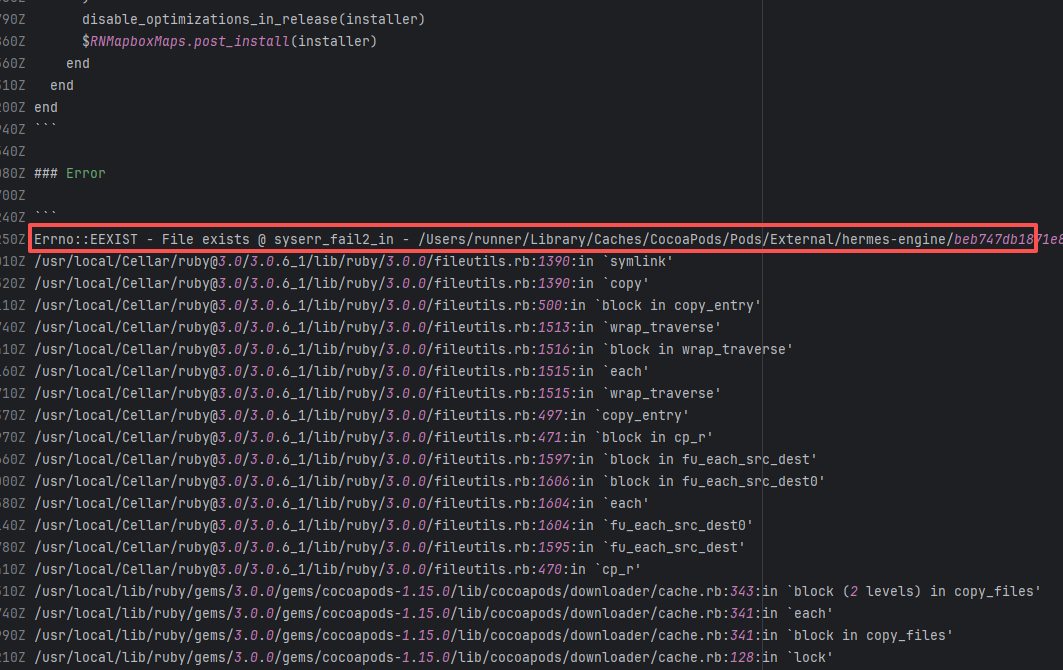

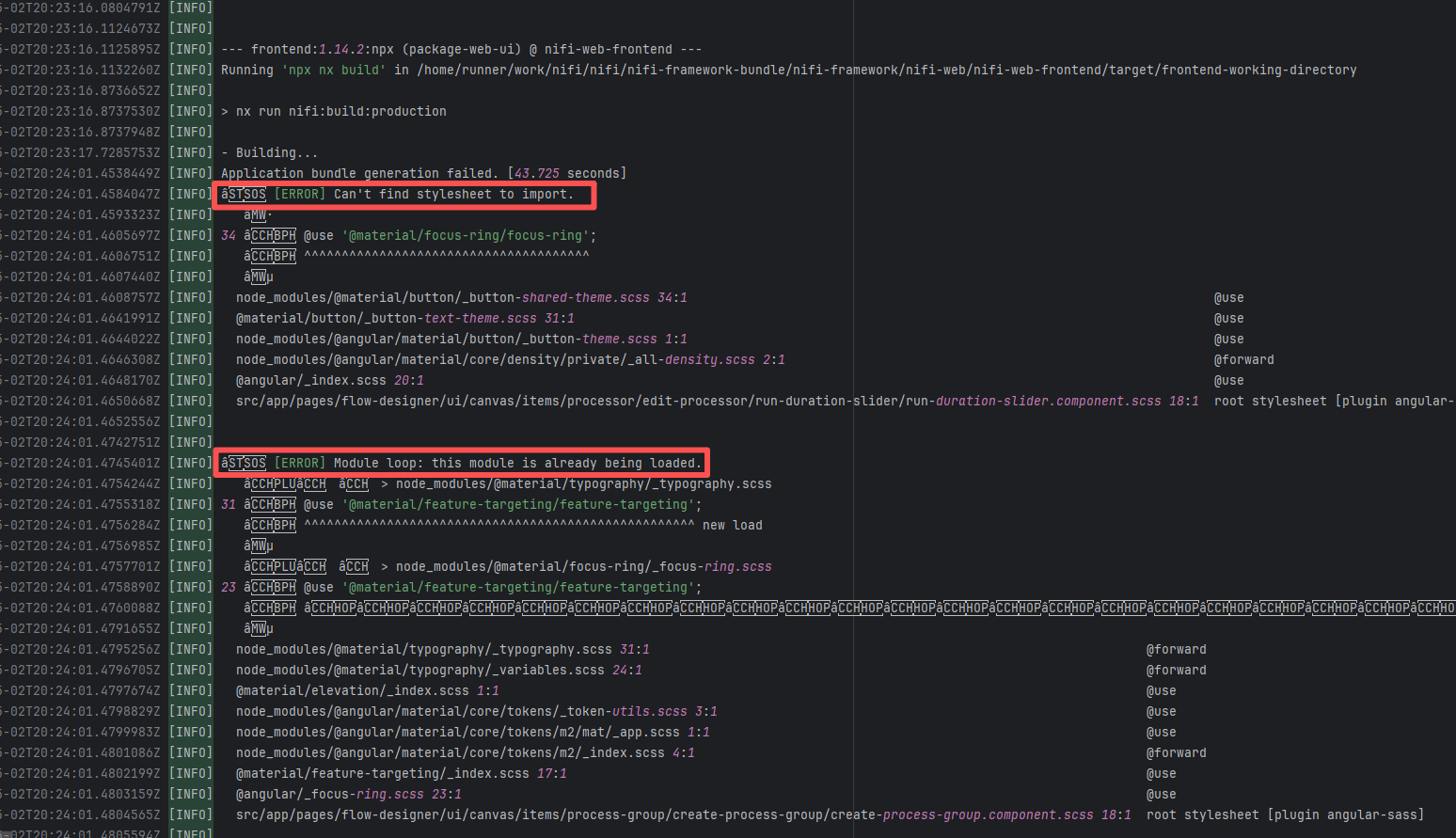

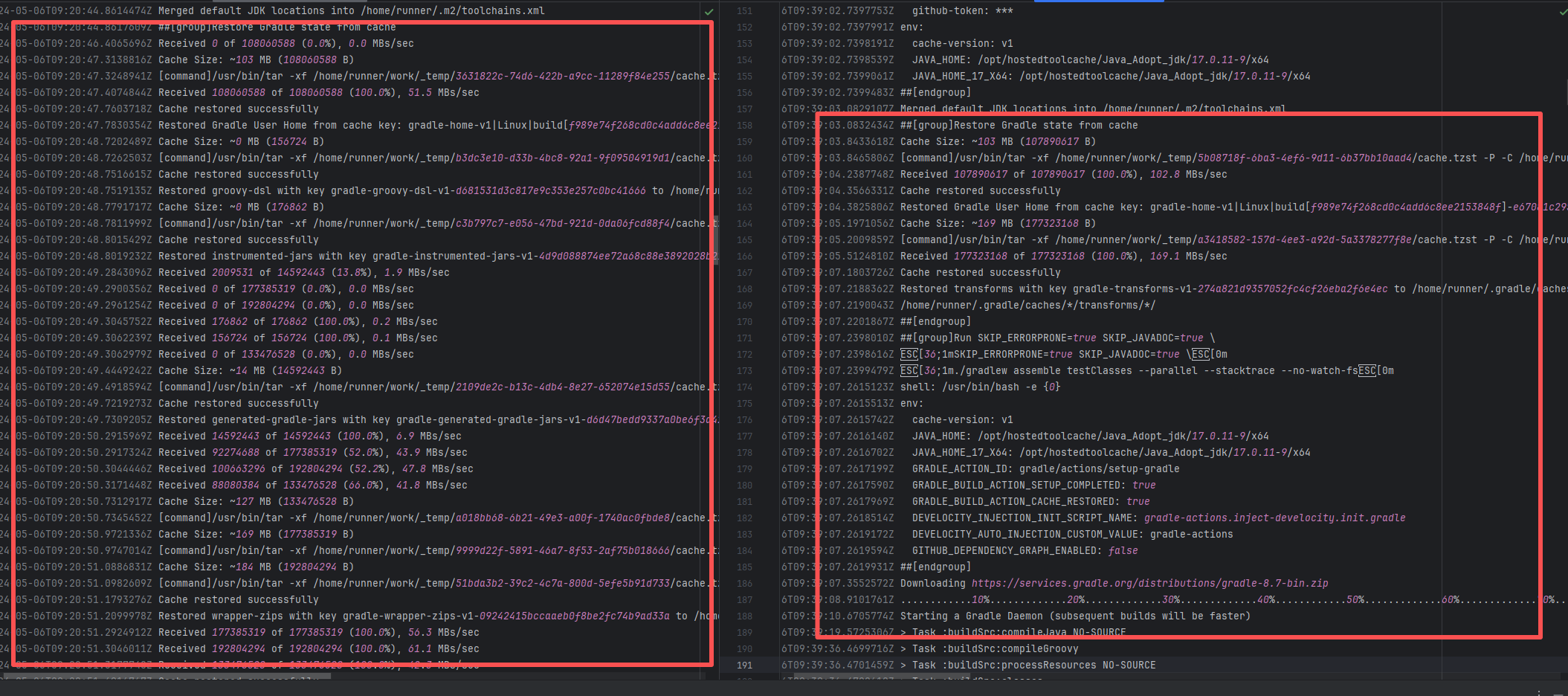

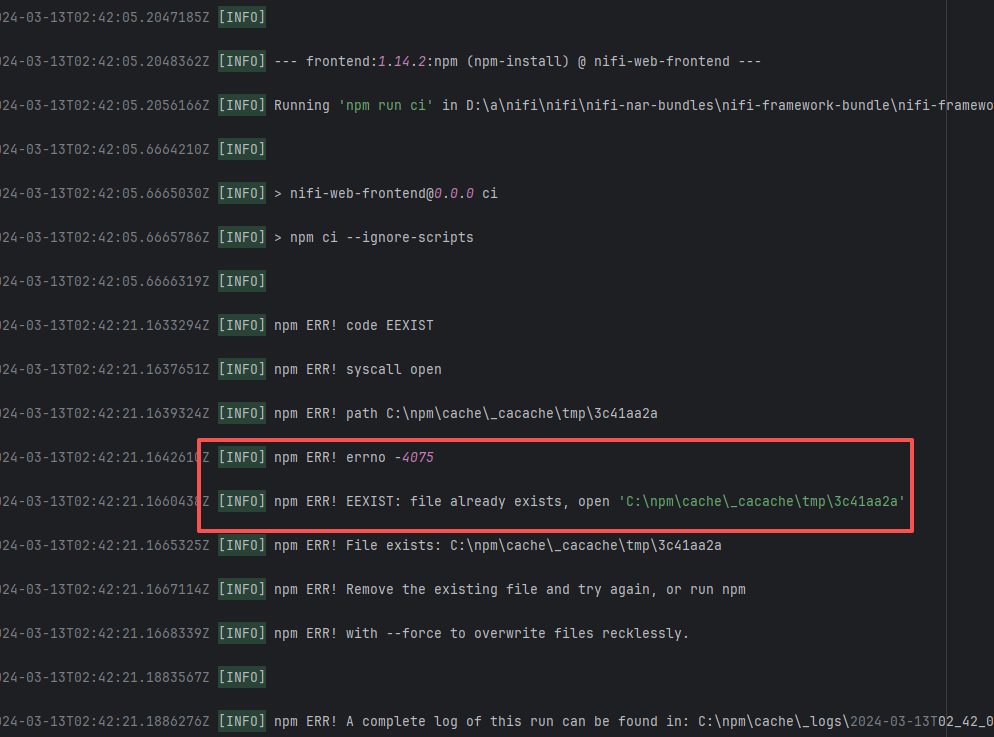

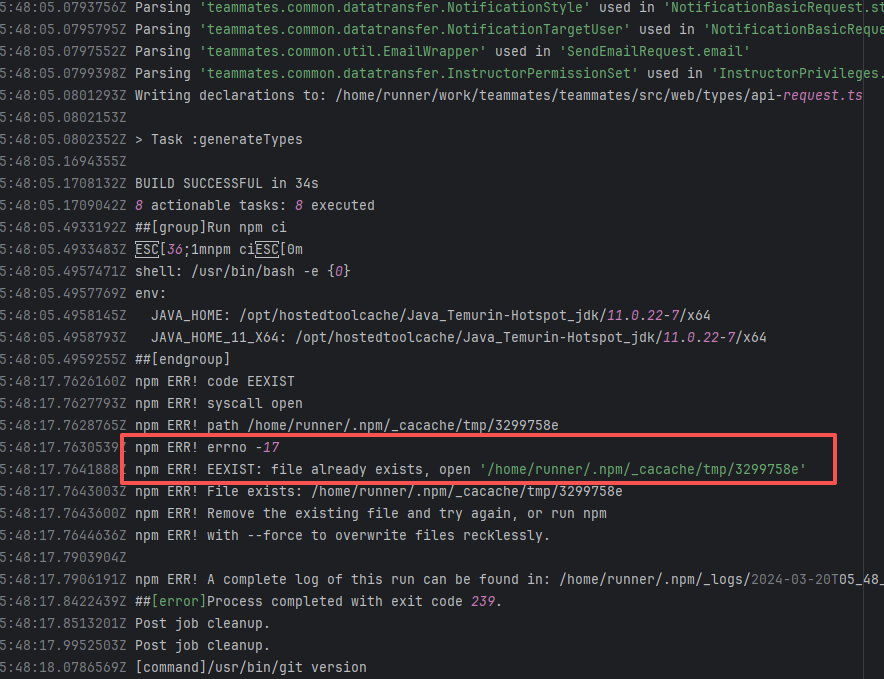

Dirty Cache |

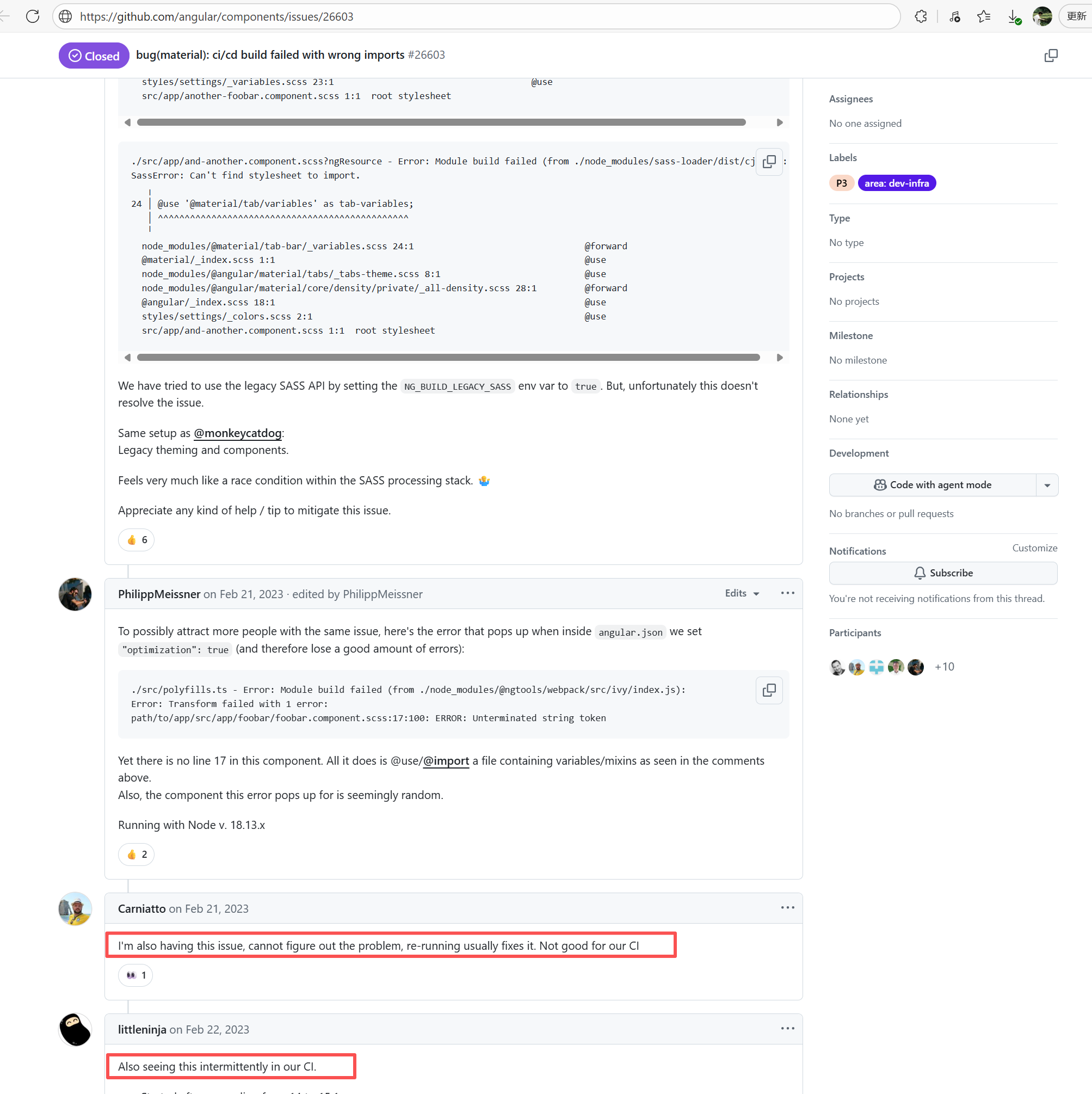

Error: This error is a typical "non-deterministic" compilation error in the front-end build phase. The dependency modules loaded during the Sass compilation process were inconsistent or partially missing, leading to circular references in module loading (possibly because some .scss files were truncated or loaded repeatedly).

Root Cause: The Sass compiler (sass-loader) reads multiple levels of .scss modules and mixin definitions (such as @use '@angular/material' as mat;) during the Angular build process, which relies on a complete module reference tree and consistent file content. A careful analysis of the logs revealed that the failed job had a cache hit while the successful job had a cache miss. Therefore, the Root Cause is that the dependency content (such as node_modules) saved in the GitHub Actions cache was interrupted or partially corrupted during the previous build and packaging, resulting in incomplete or conflicting files after restoration. The rerun was successful. |

Rerun after clearing the cache |

|

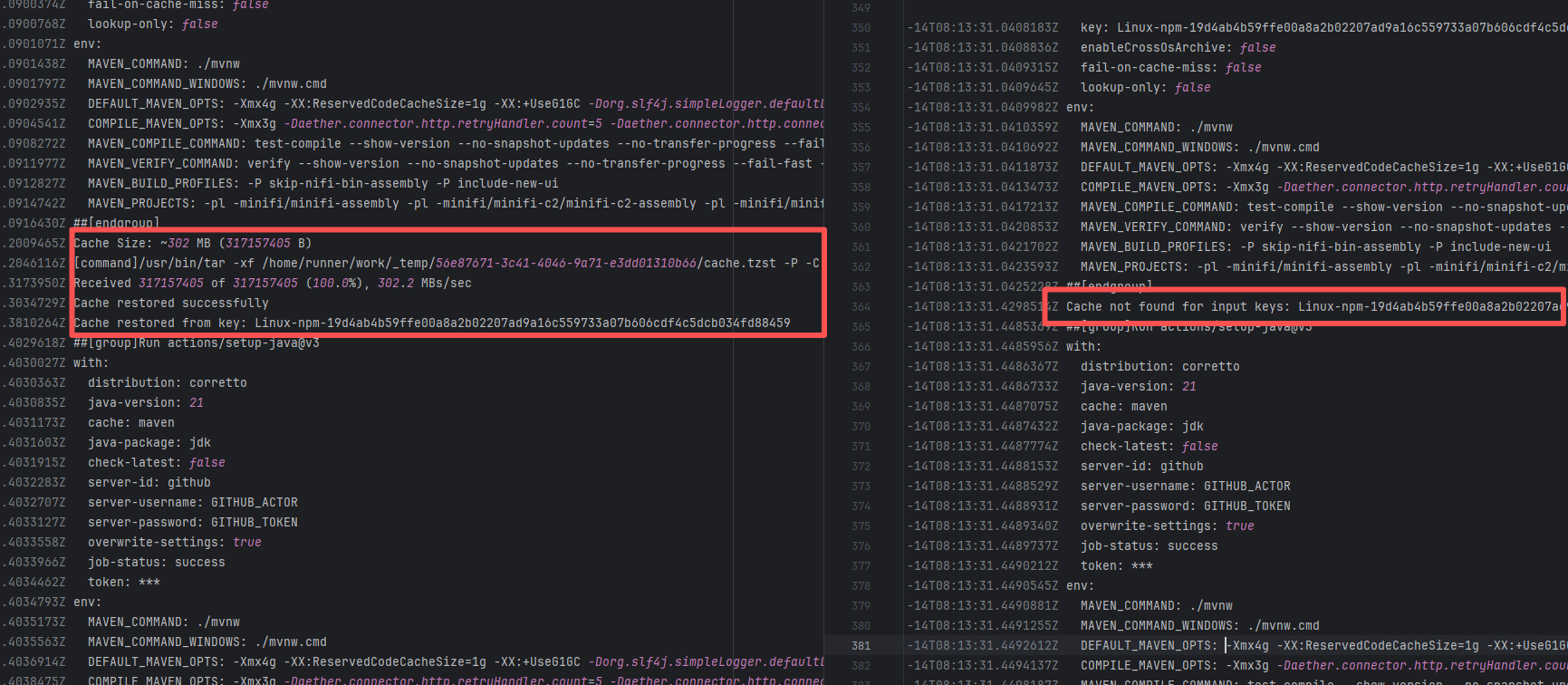

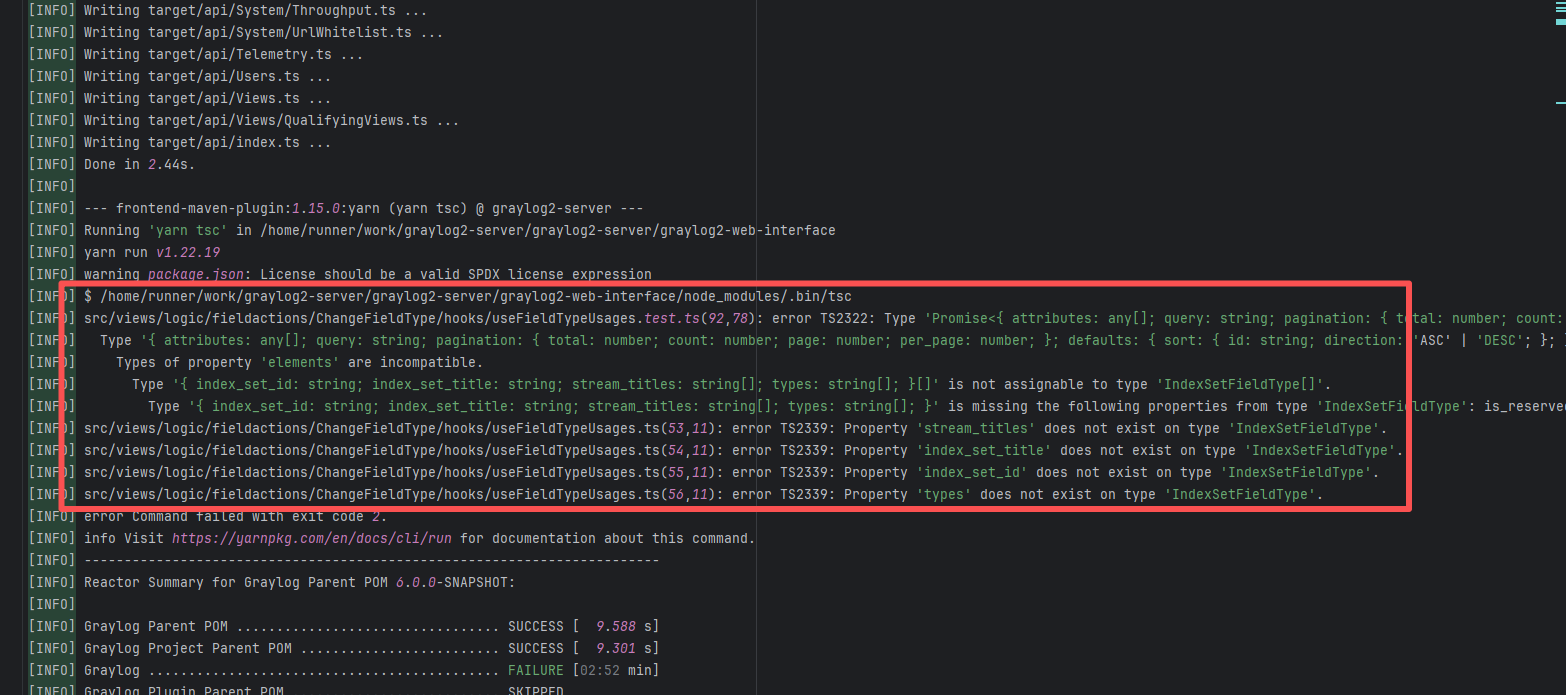

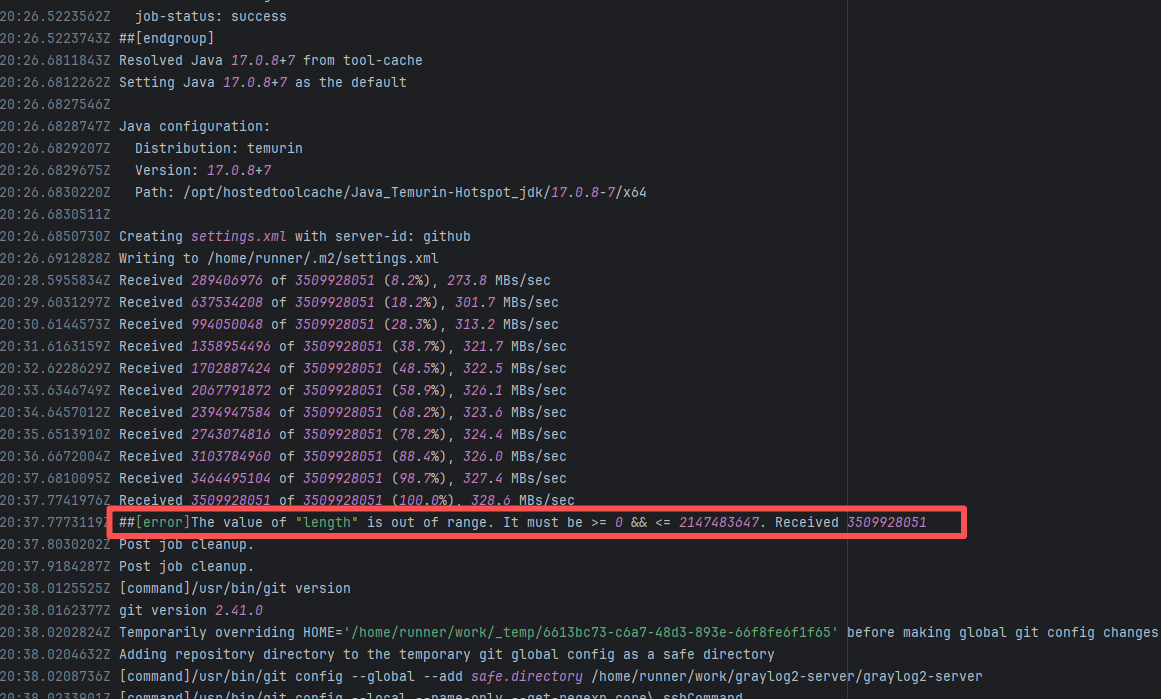

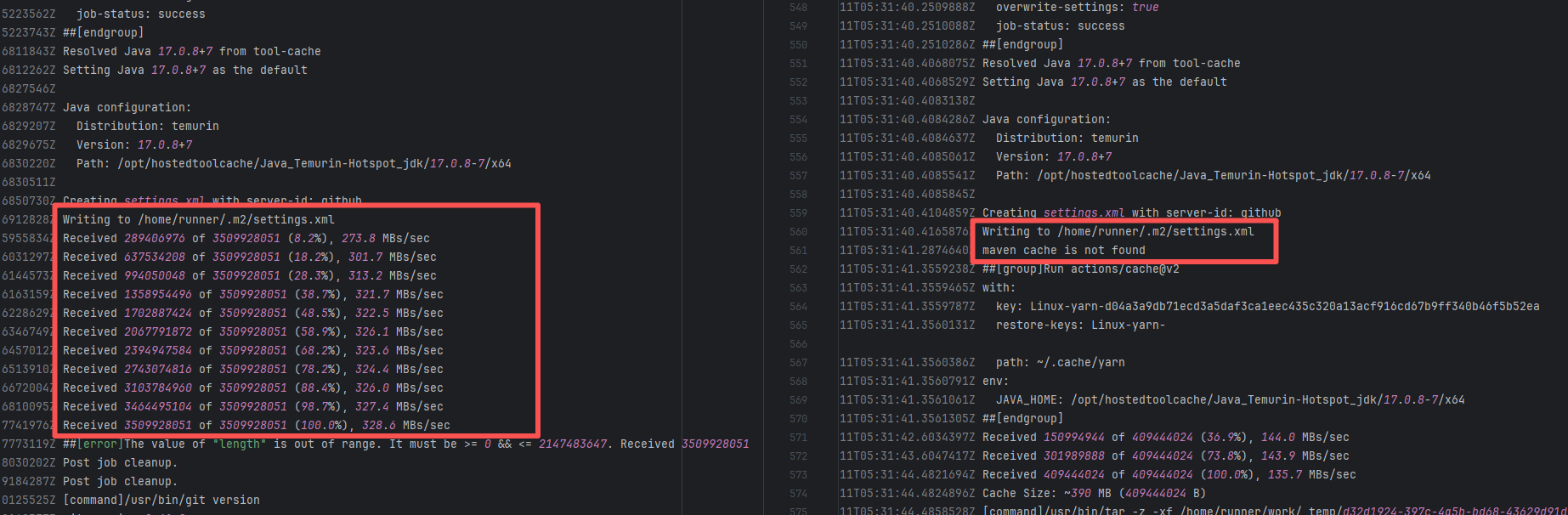

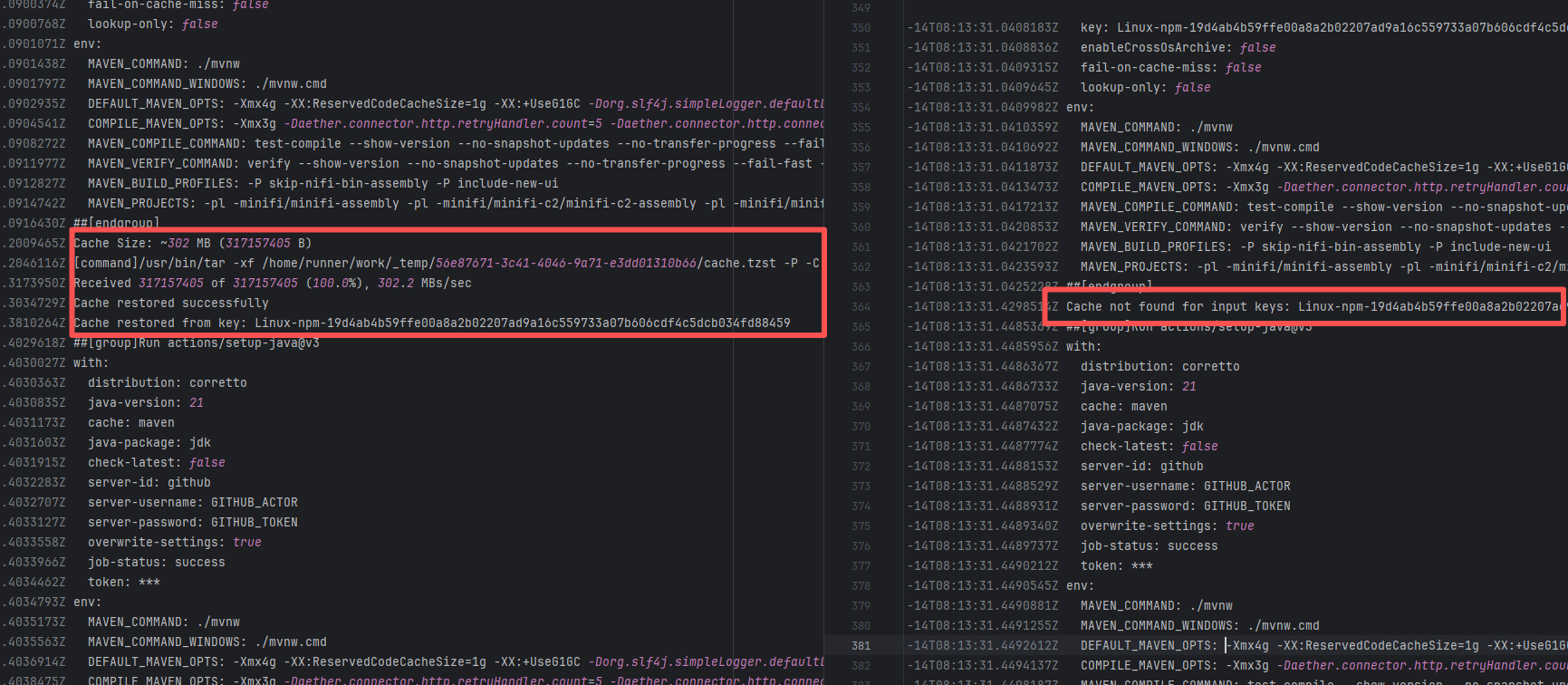

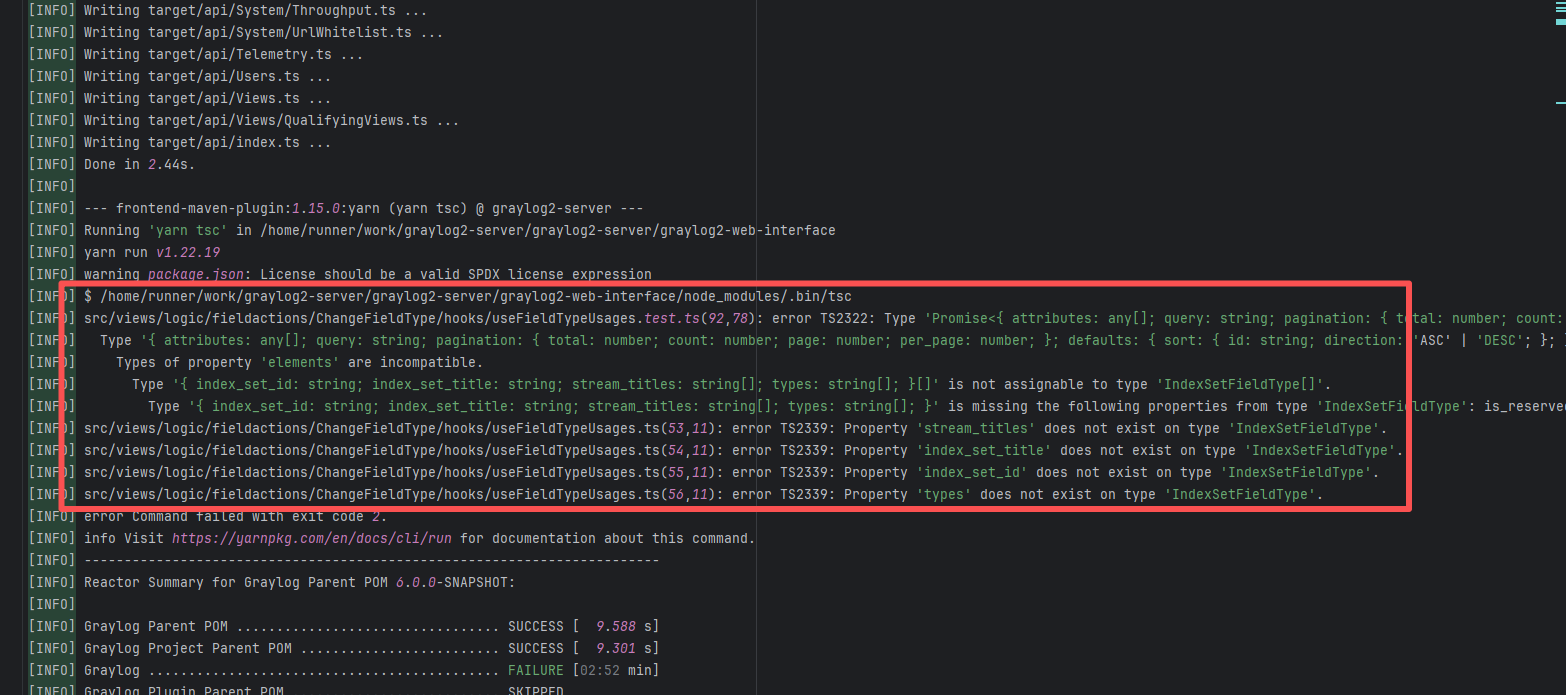

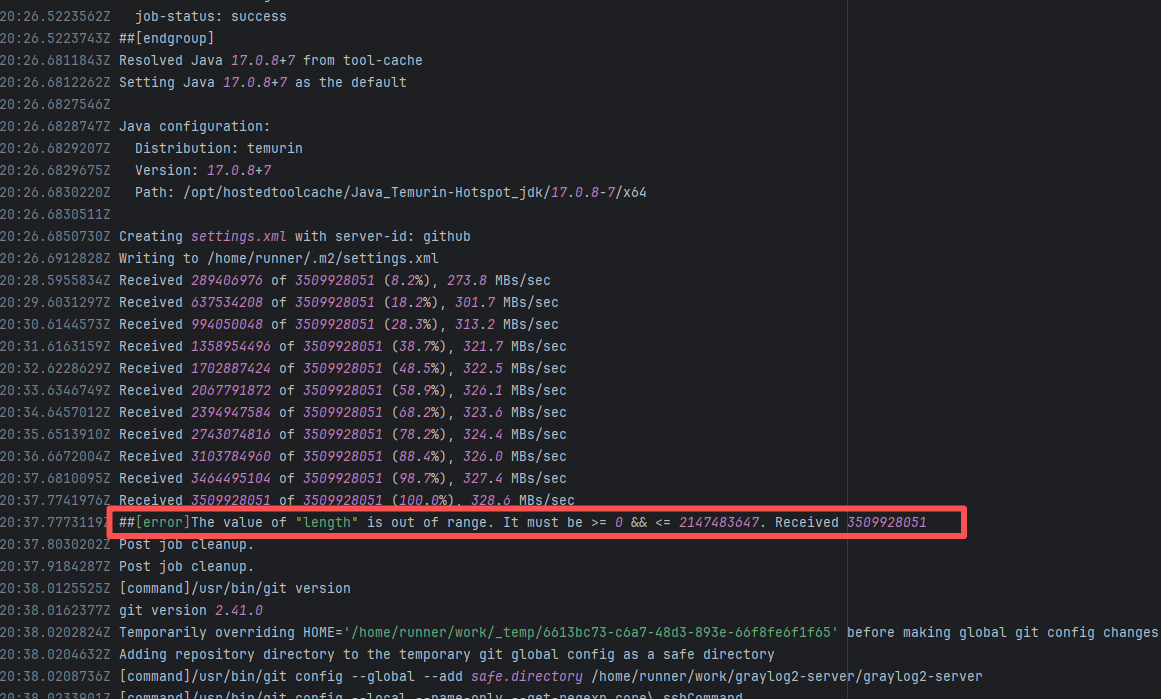

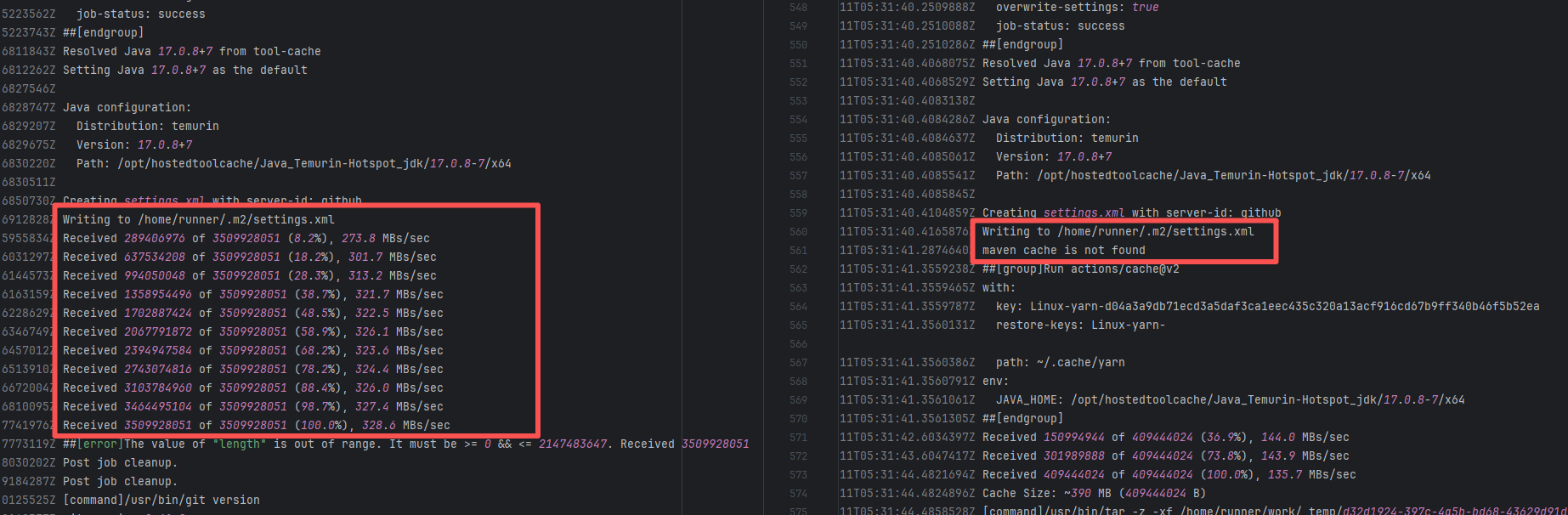

| graylog2/graylog2-server |

19934418670 |

Corrupted Cache |

Error: During type checking, TypeScript found that the returned data structure did not match the expected type. Specifically, the returned object type was inconsistent with the structure of the PageListResponse or IndexSetFieldType types, causing TypeScript to report an error.

Root Cause: A careful analysis of the logs revealed that the failed job had a cache hit while the successful job had a cache miss. Therefore, the Root Cause is that the dependency content (such as node_modules) saved in the GitHub Actions cache was interrupted or partially corrupted during the previous build and packaging, resulting in incomplete or conflicting files after restoration. The rerun was successful. |

Rerun after clearing the cache |

|

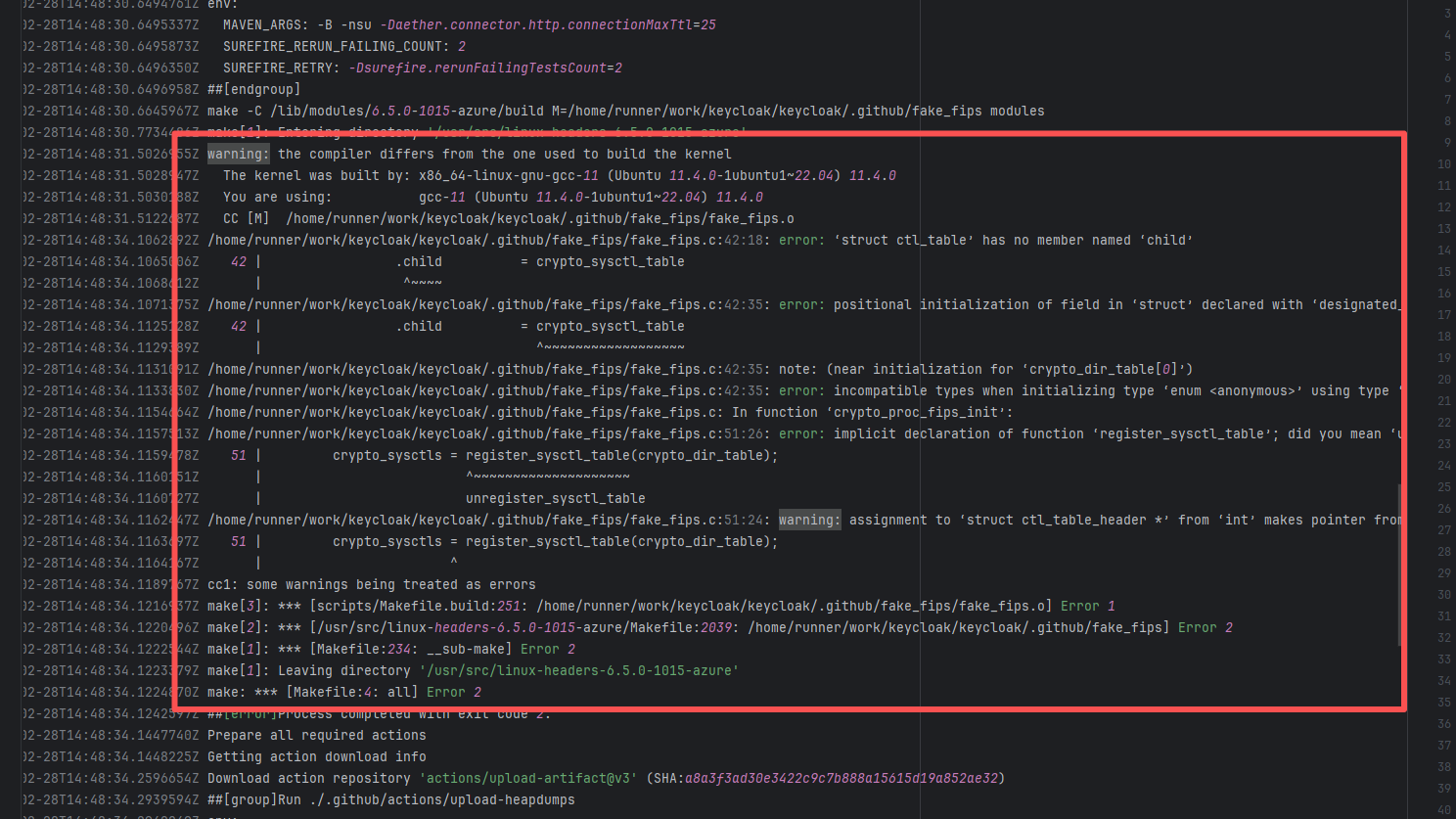

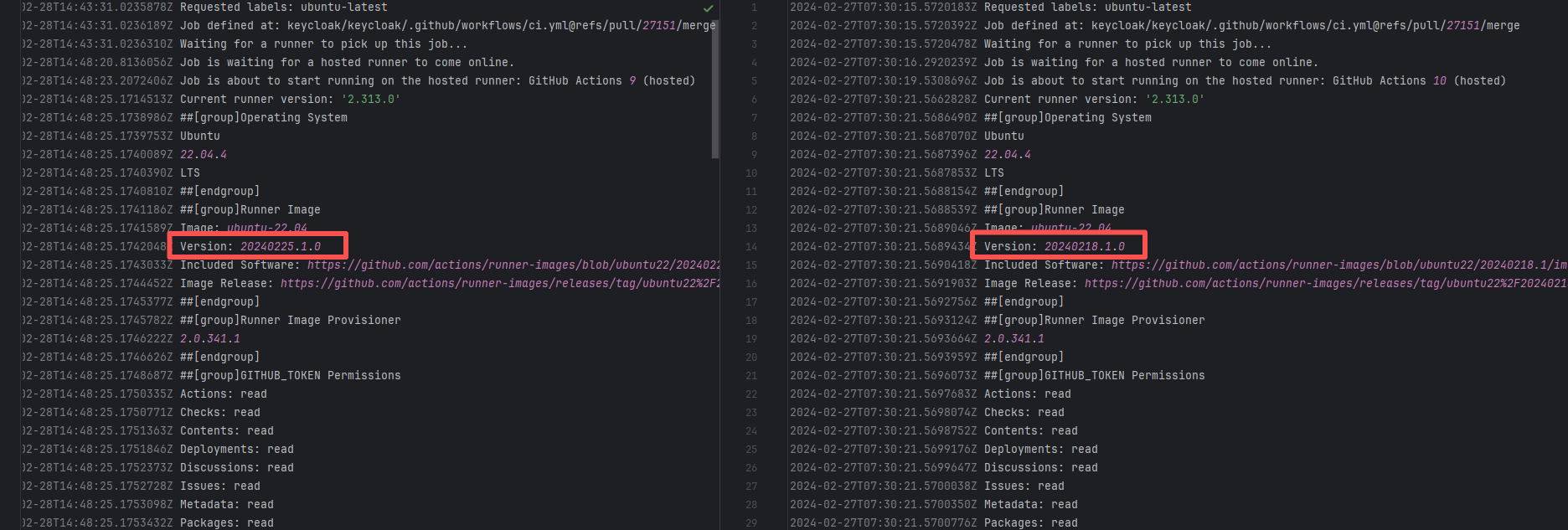

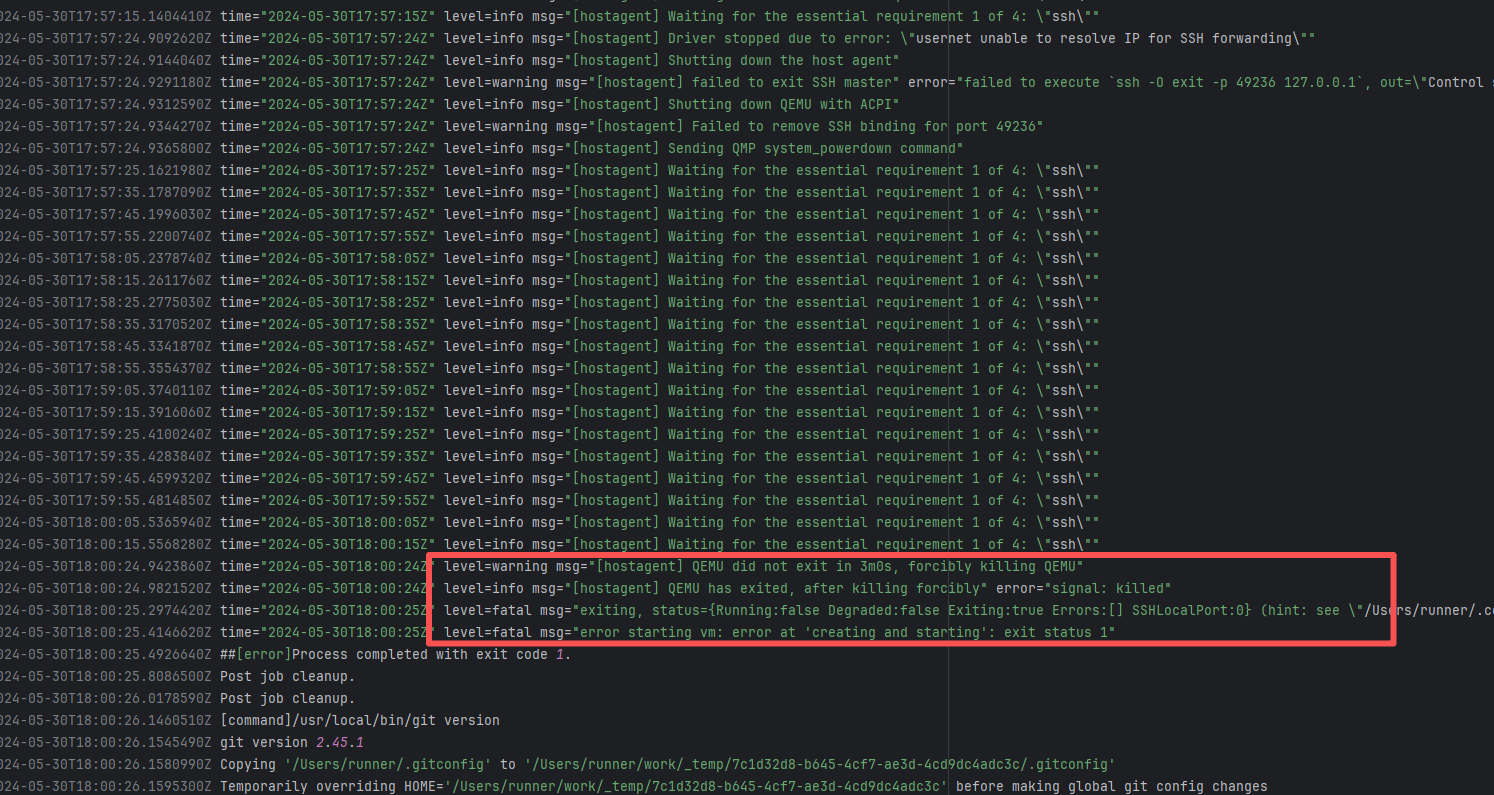

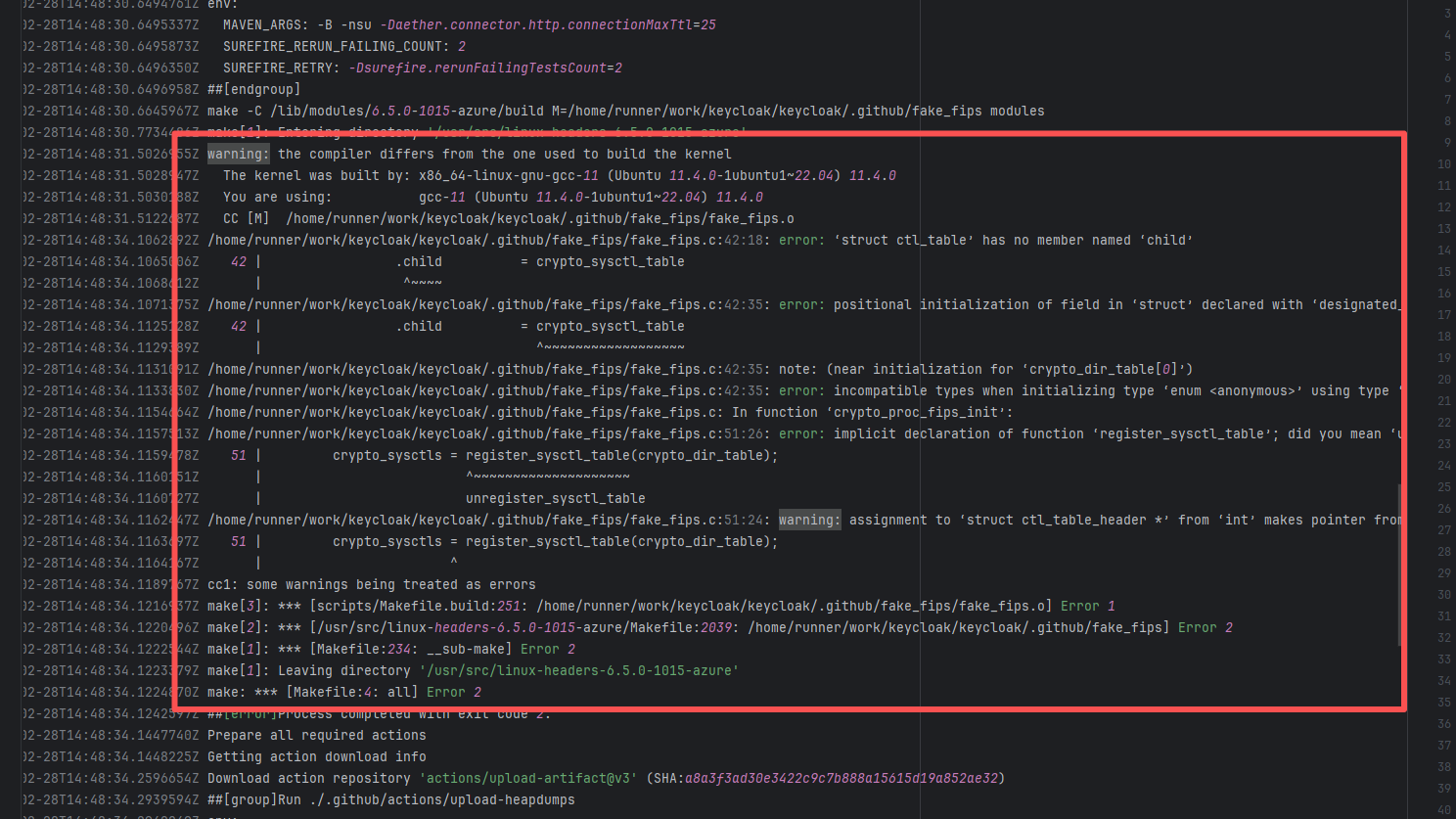

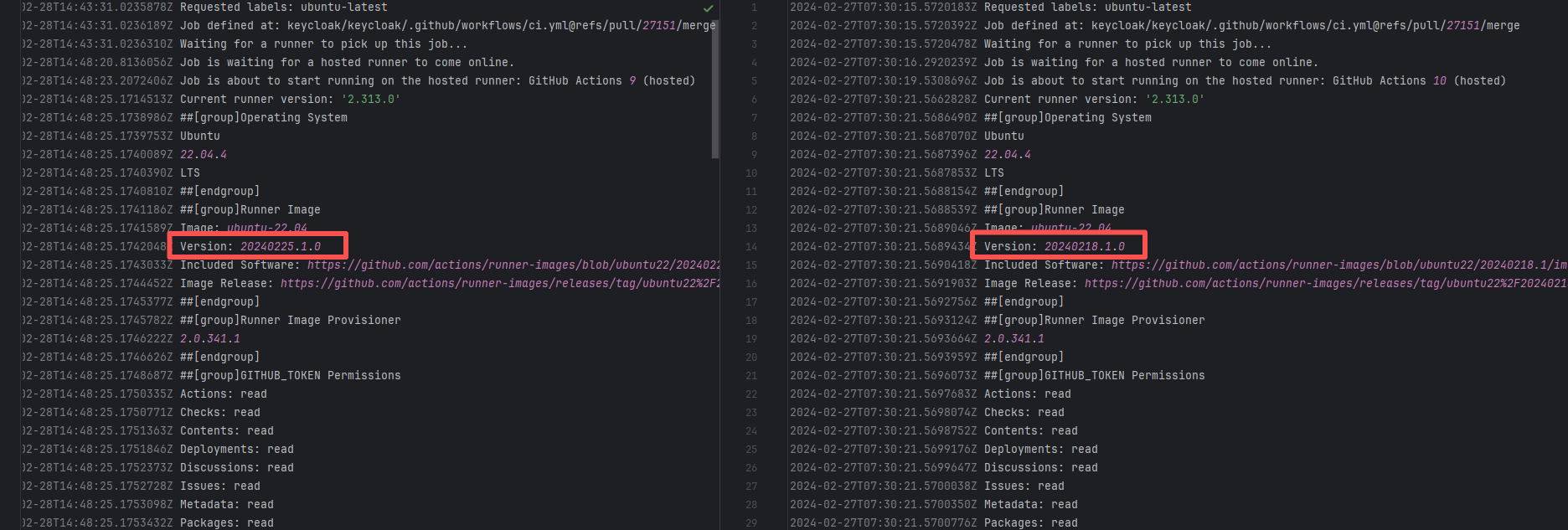

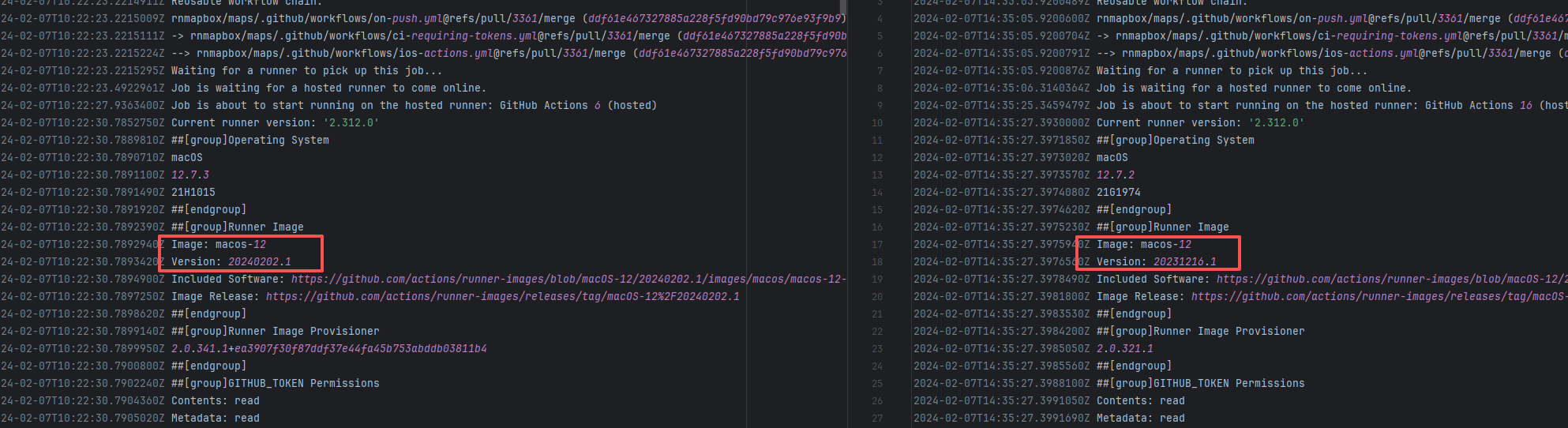

| keycloak/keycloak |

22082527146 |

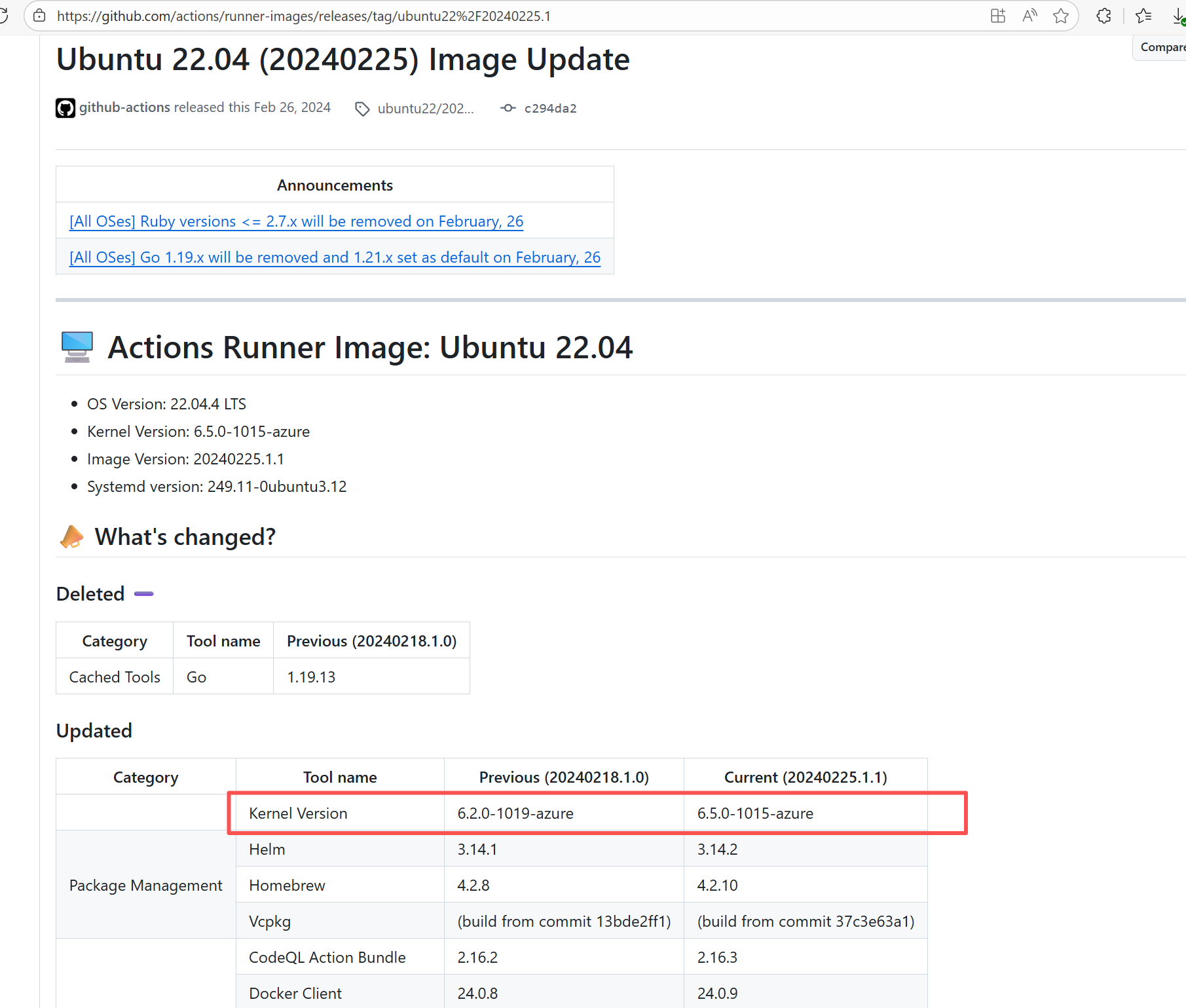

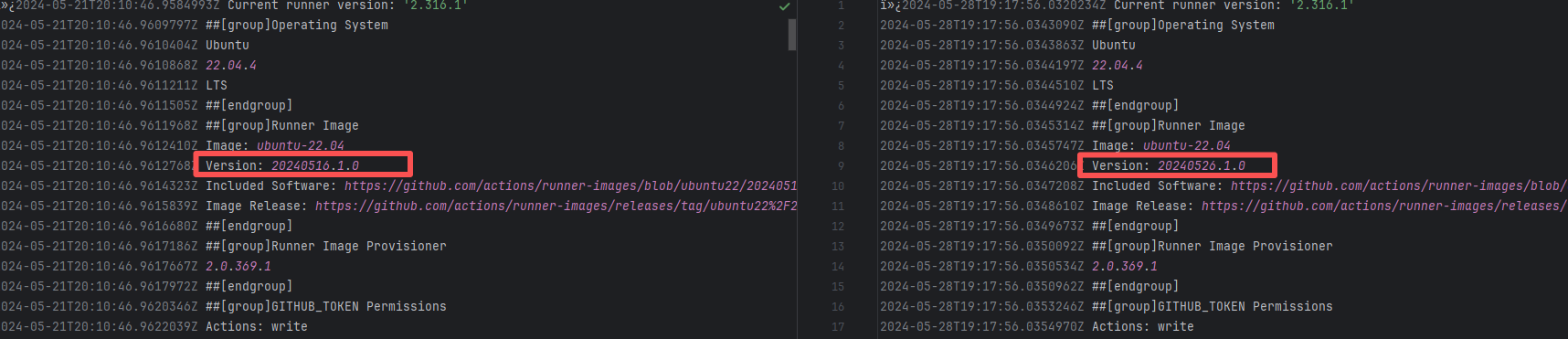

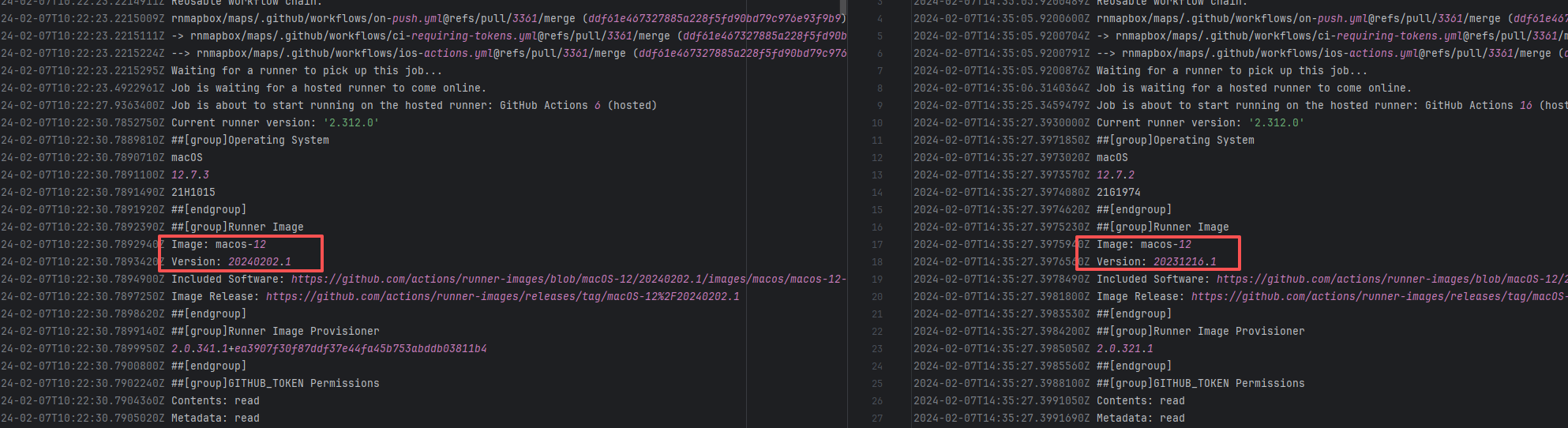

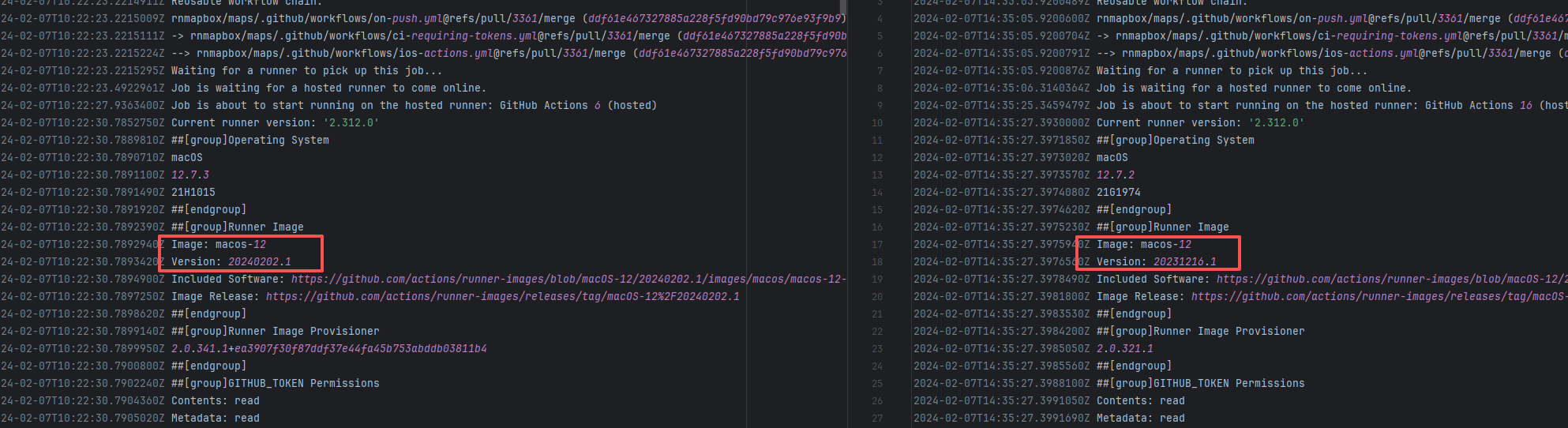

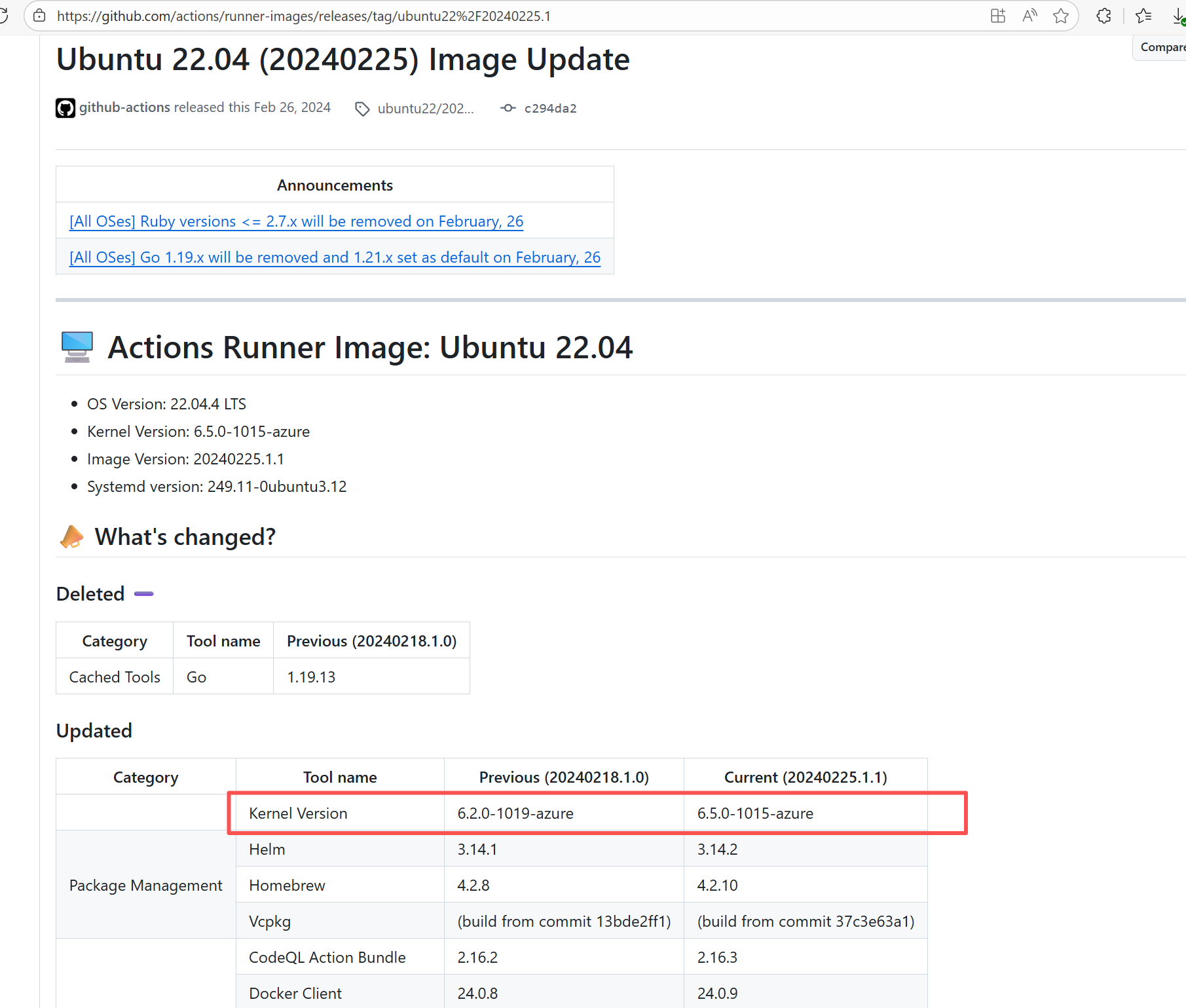

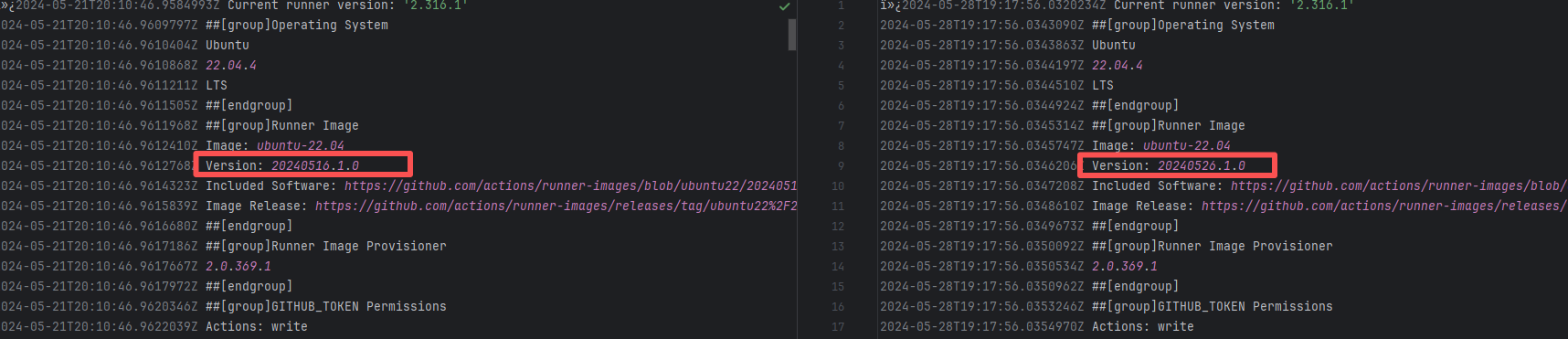

Runner Incompatibility |

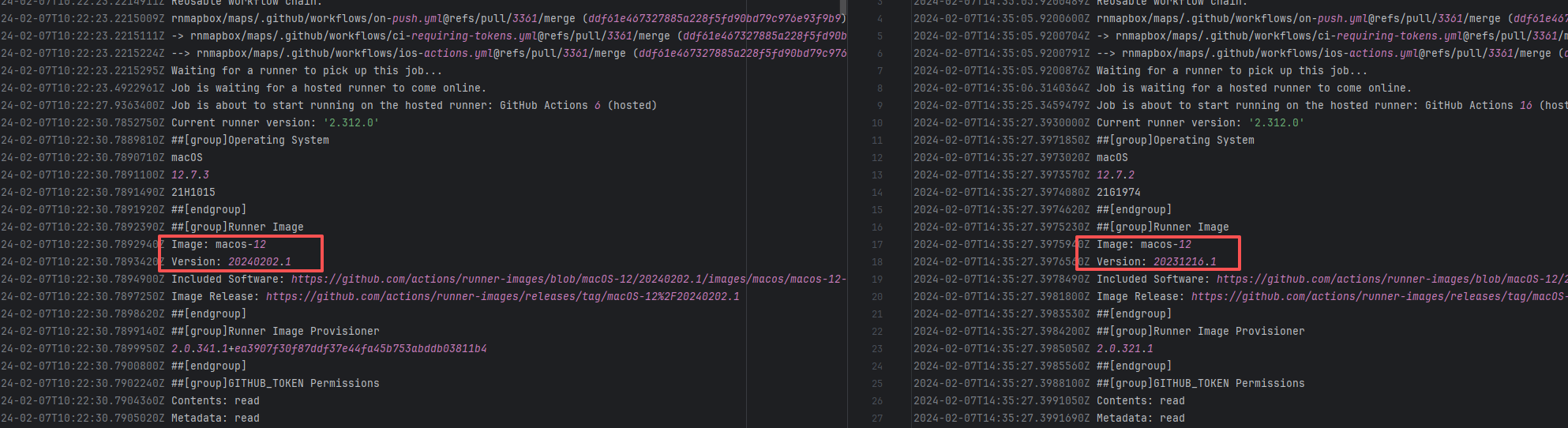

Error: The log shows that the kernel module compilation failed. The core reason is that fake_fips.c used an old sysctl interface (such as the .child field of struct ctl_table and the register_sysctl_table() function) that has been deprecated or removed in Linux kernel 6.5, causing a compilation error on the new kernel.

Root Cause: Since the runner kernel version of GitHub Actions is updated with the image (for example, upgrading from 6.2 to 6.5), the runner image was updated in the rerun after the first successful execution, resulting in all subsequent builds encountering kernel module compilation failure issues. |

- Update code to be compatible with the new kernel API

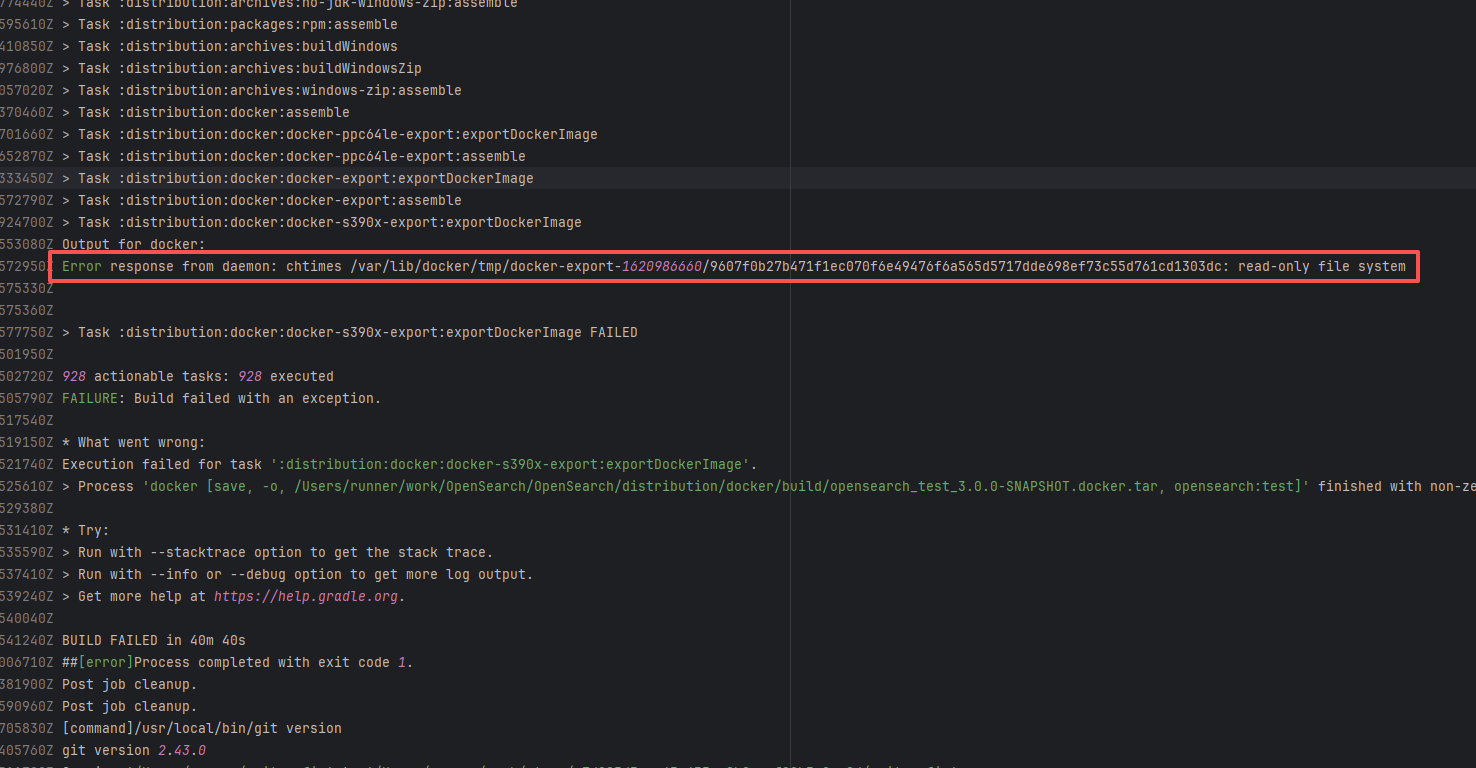

- Skip the build of this module in the test environment |

|

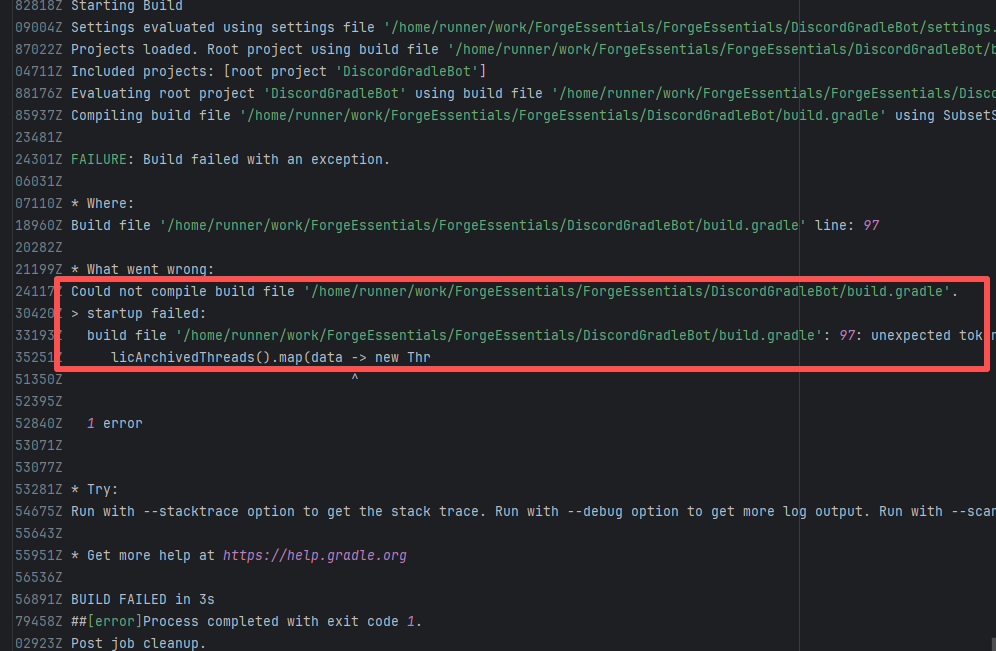

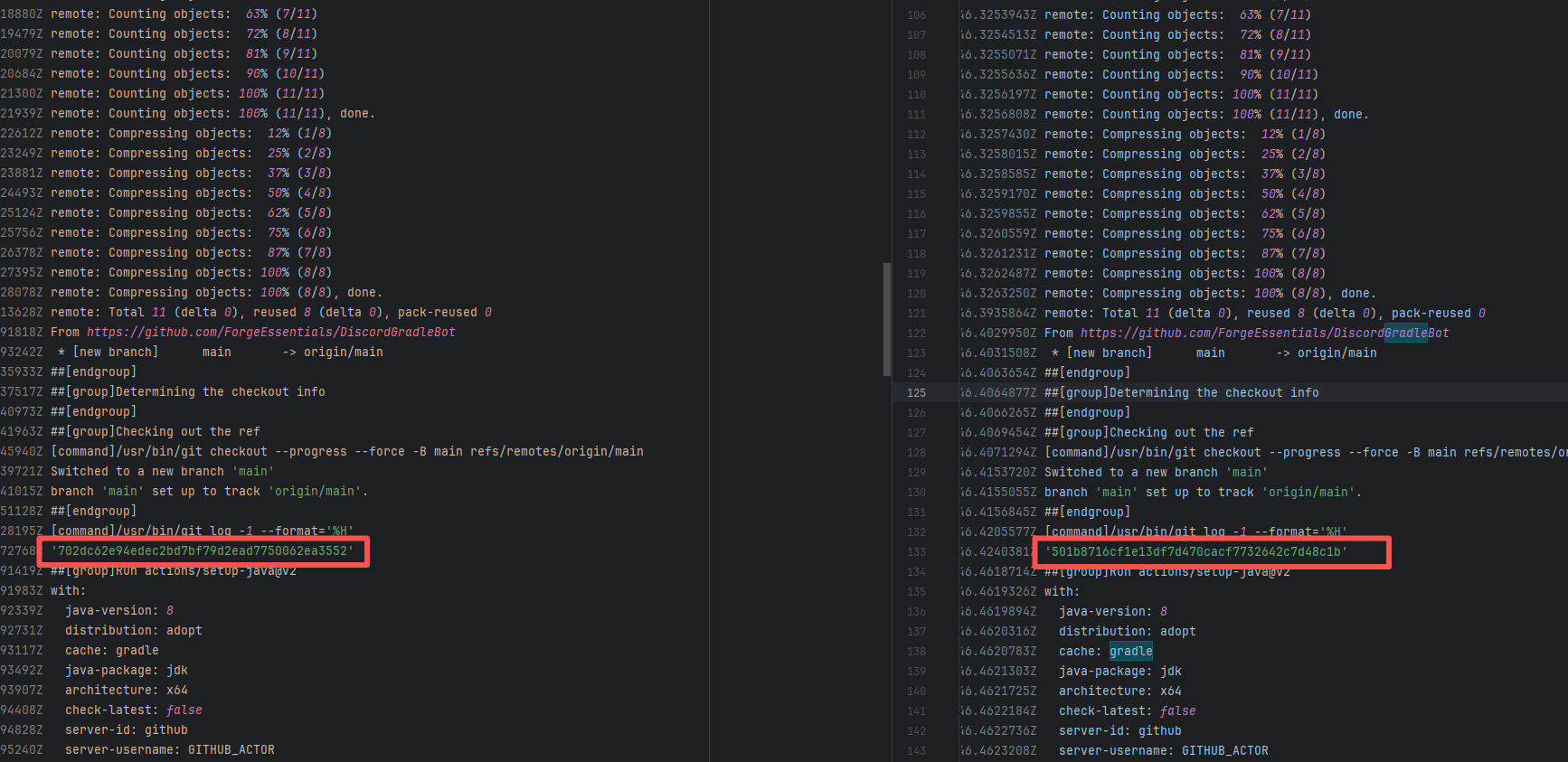

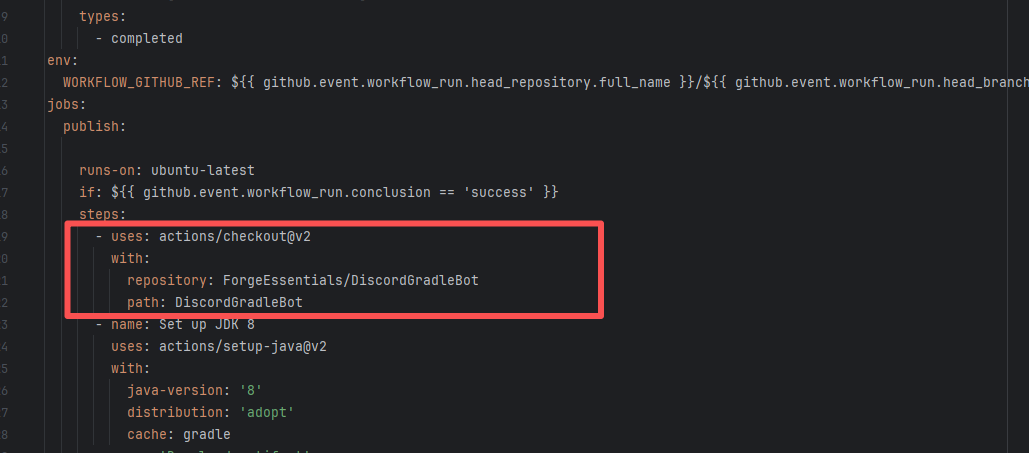

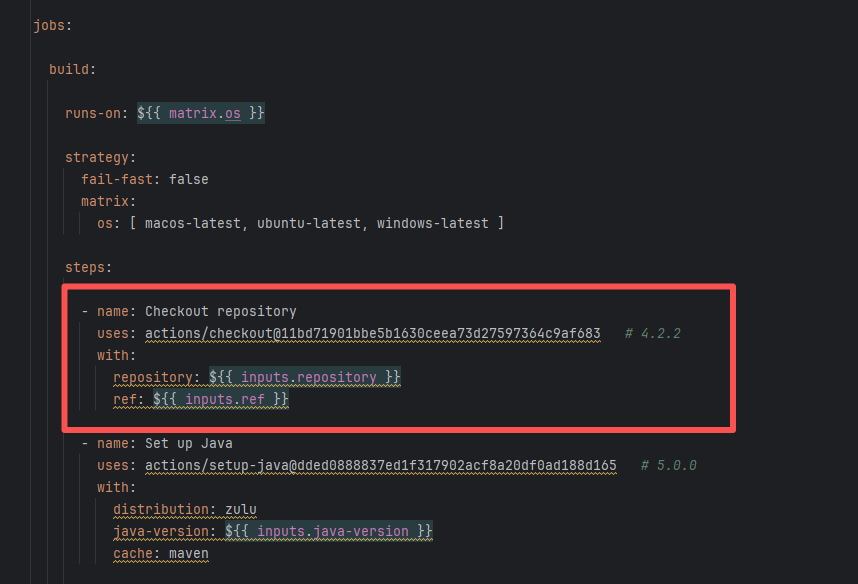

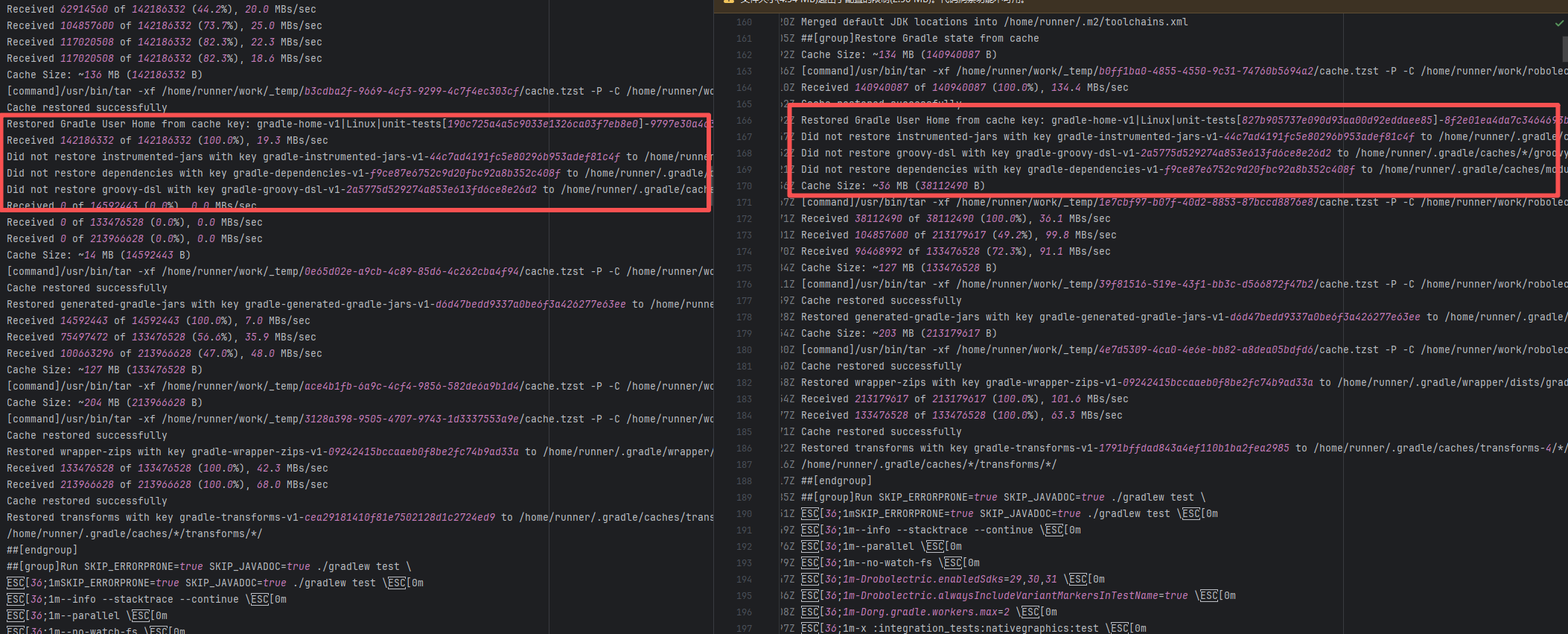

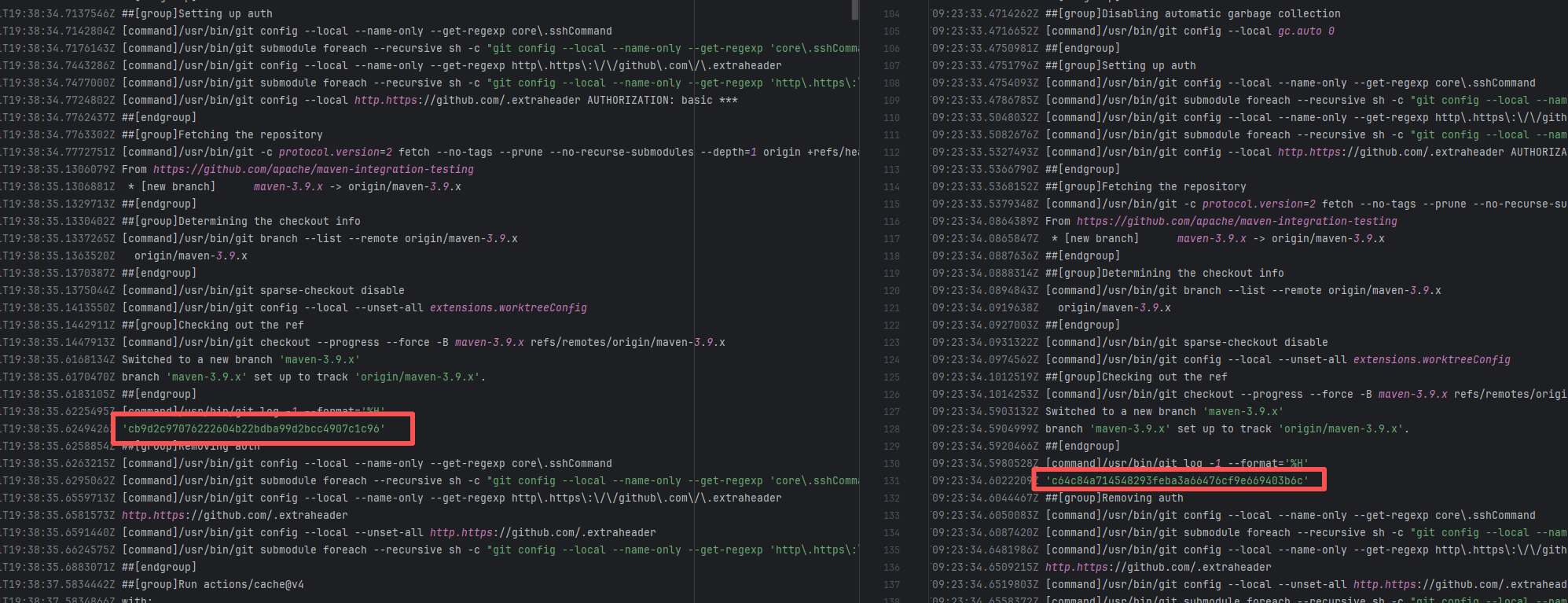

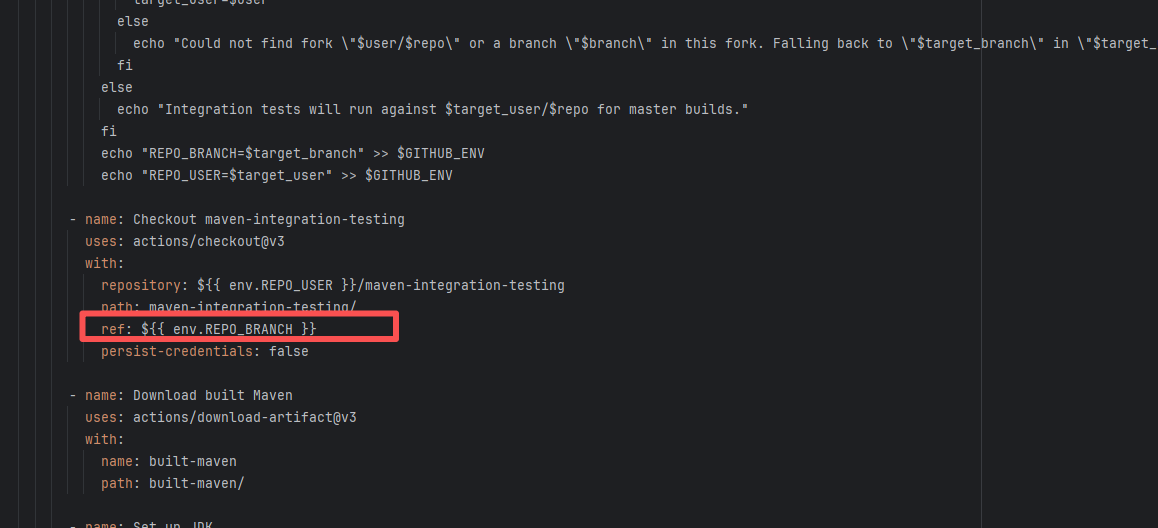

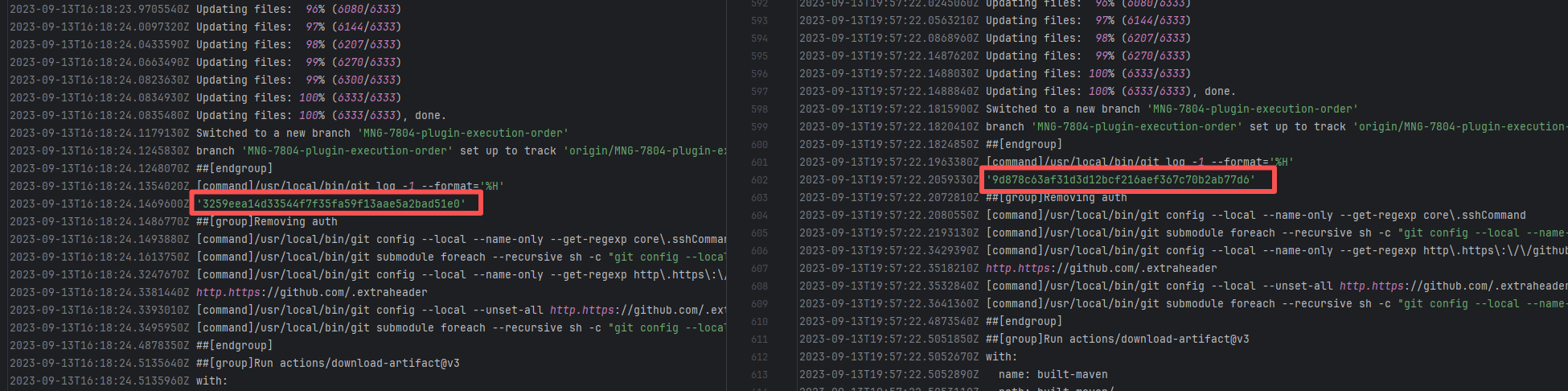

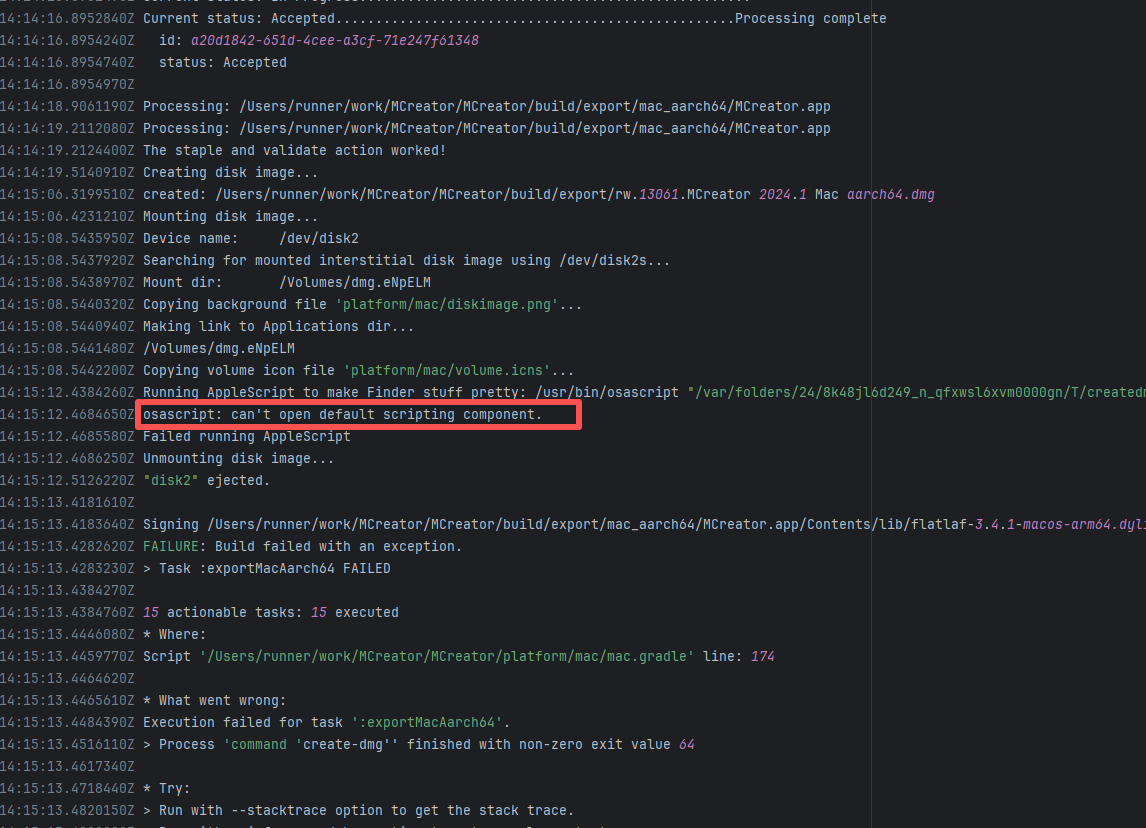

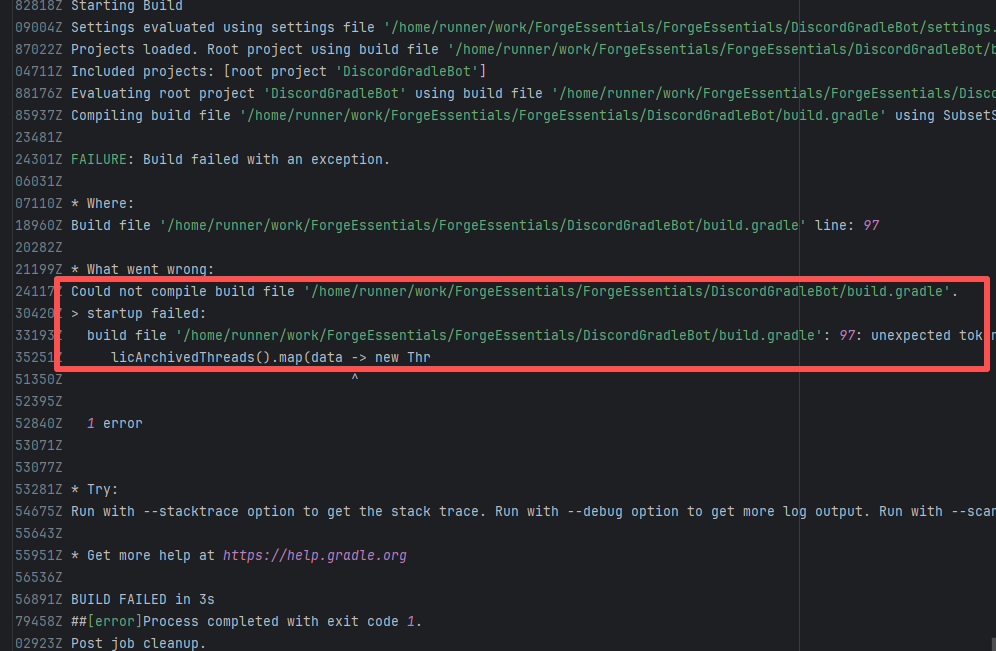

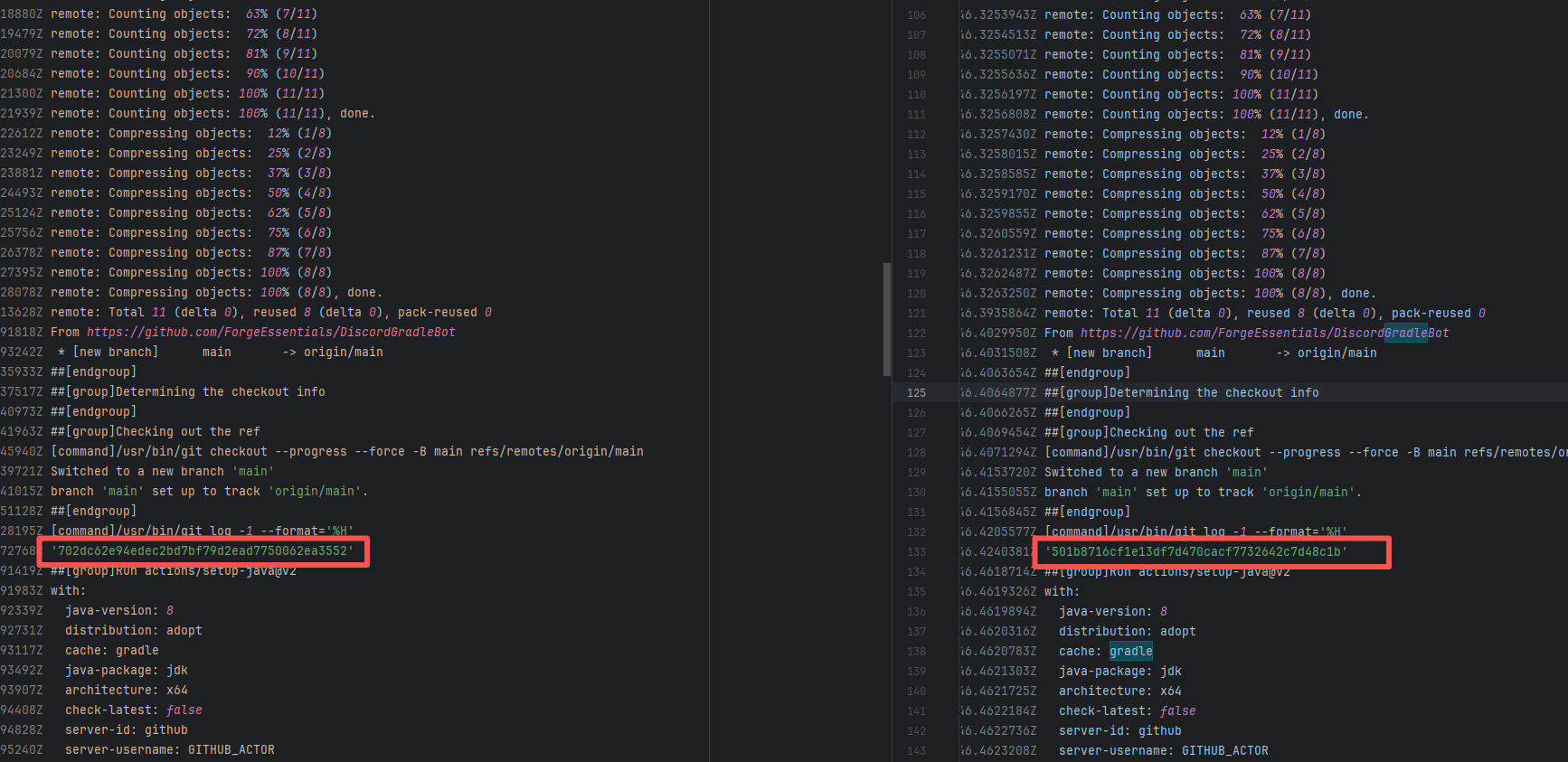

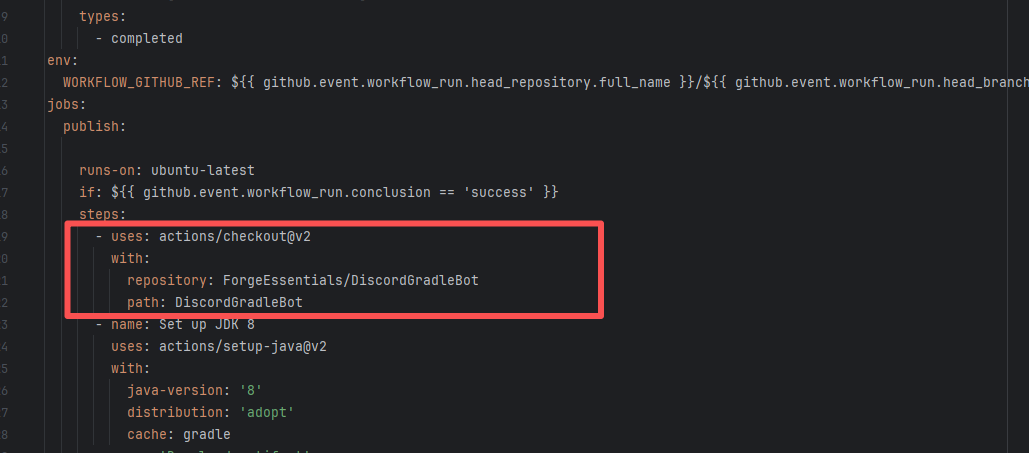

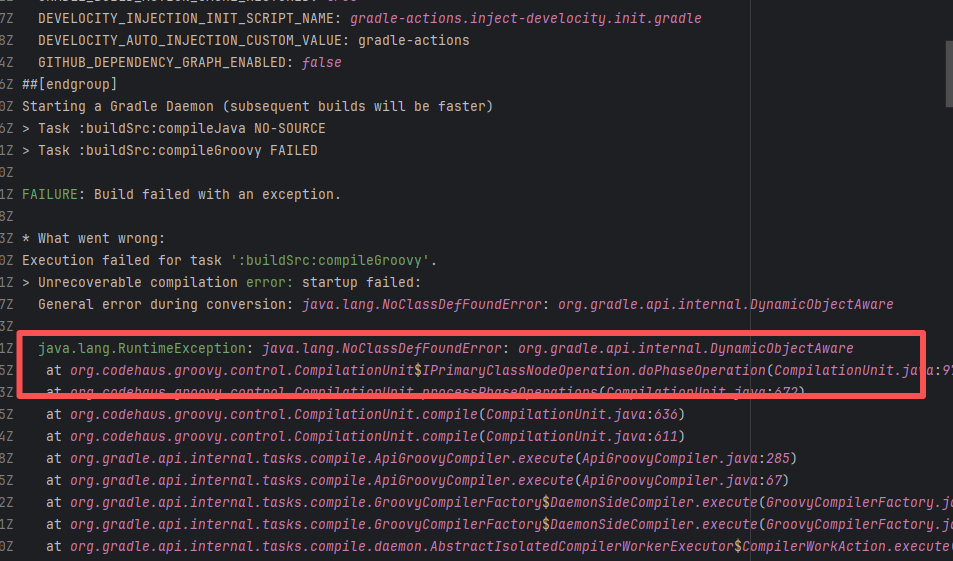

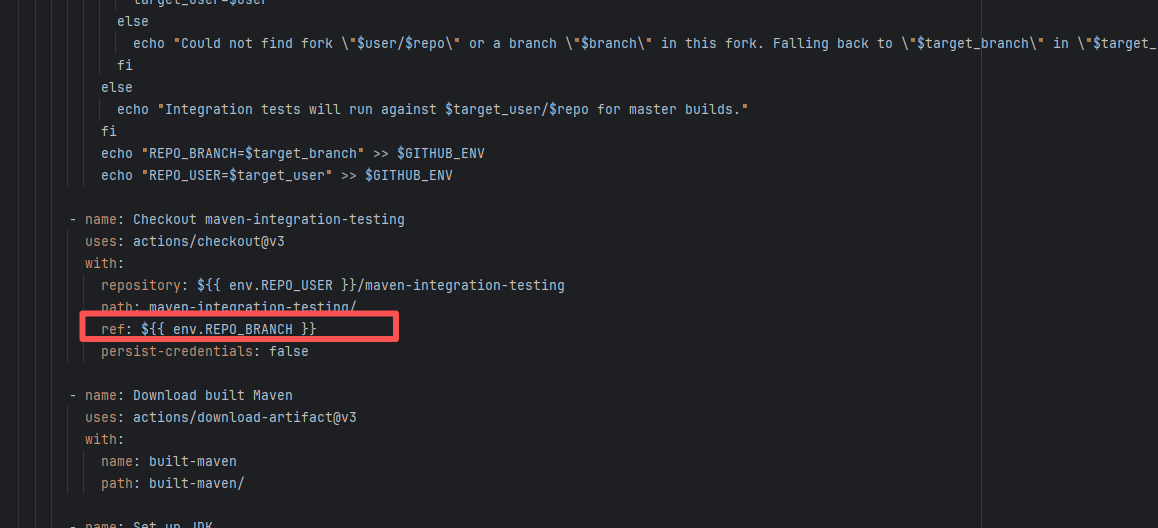

| forgeessentials/forgeessentials |

18250417040 |

Upstream Repository Issue |

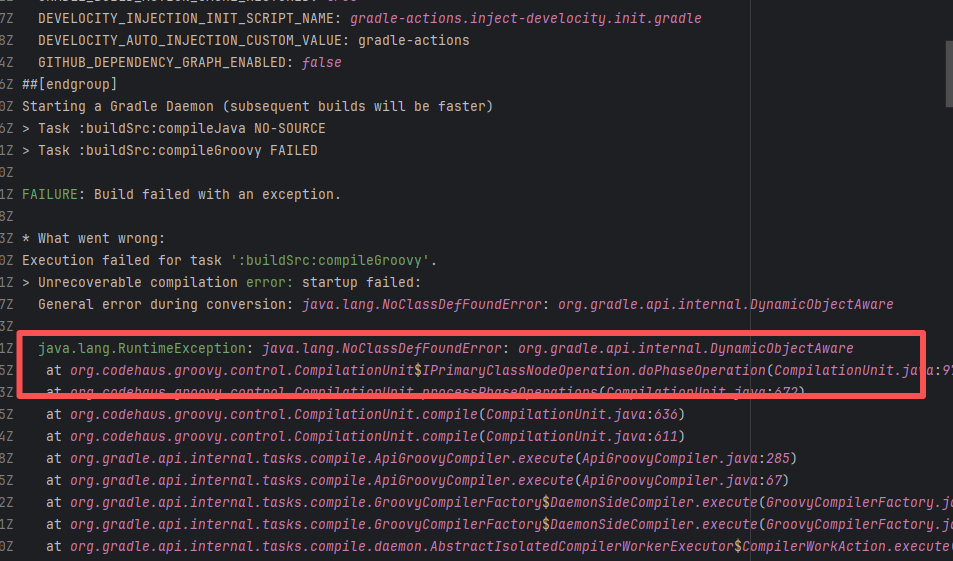

Error: The error message in the log indicates that the lambda expression syntax with the arrow -> in Groovy is not supported. Due to the Gradle version or Groovy version not being new enough, it cannot recognize the Java-style lambda like data -> new Thread(...).

Root Cause: By analyzing the logs, it was found that this flaky issue was not caused by modifying external configurations or data and then rerunning it successfully, but originated from changes in the upstream repository's source code. In the workflow, the checkout step specified the repository parameter, so each pull will fetch the latest commit of the default branch of that repository. This caused the code version executed by the workflow to be inconsistent. Therefore, the current error was actually fixed after the commit had already been modified. |

|

|

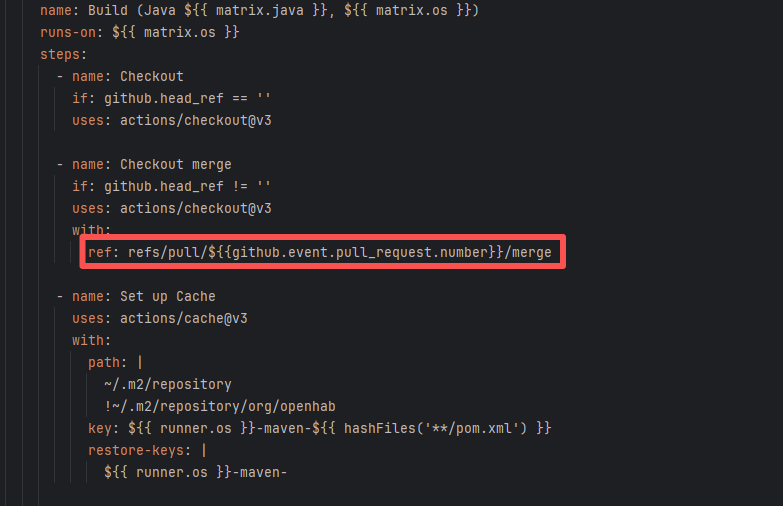

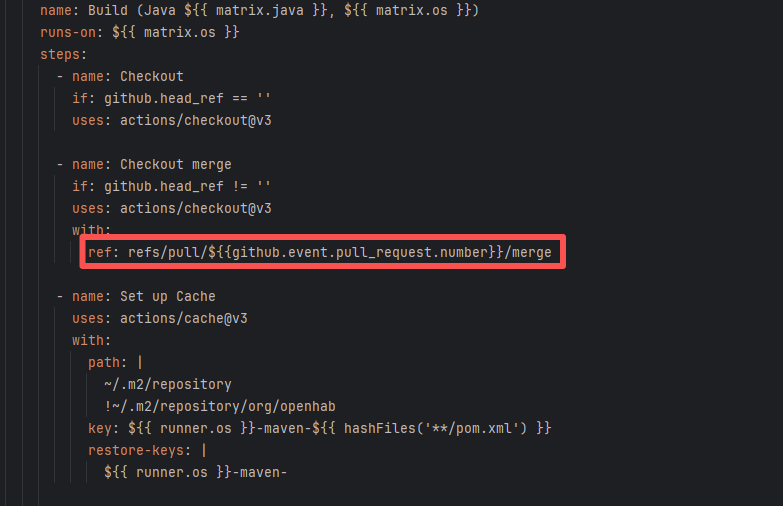

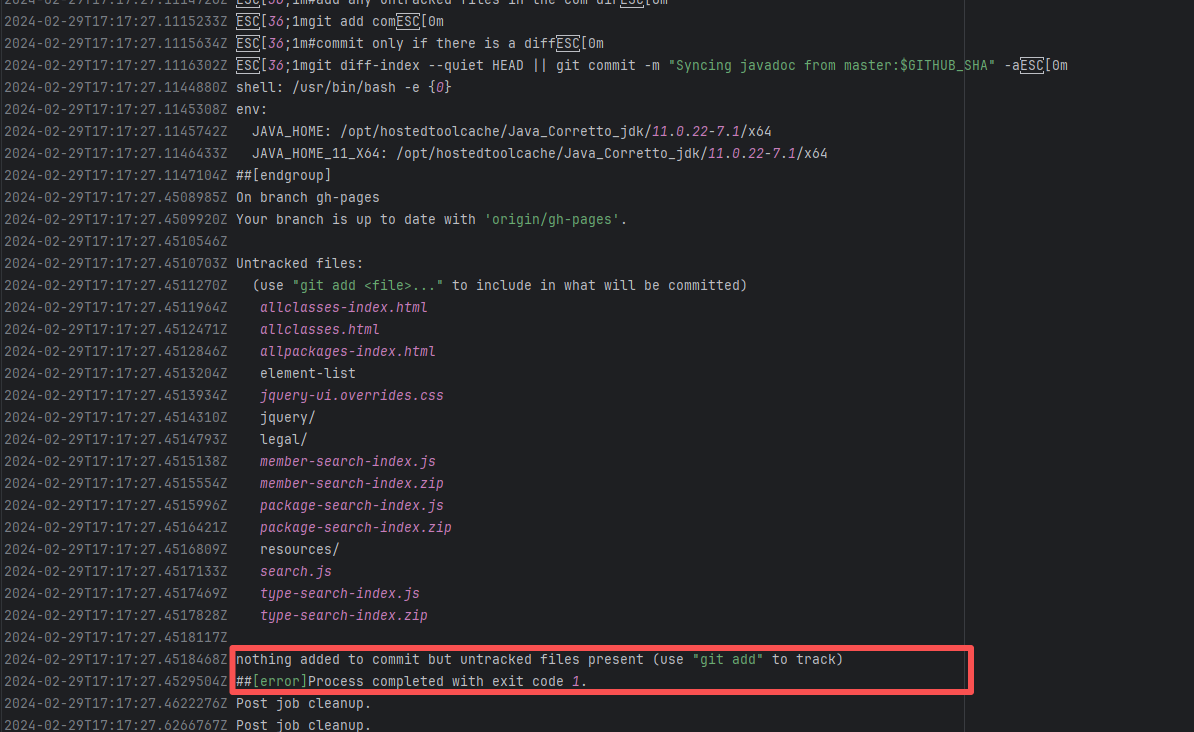

| openhab/openhab-addons |

17719308676 |

Missing Dependency |